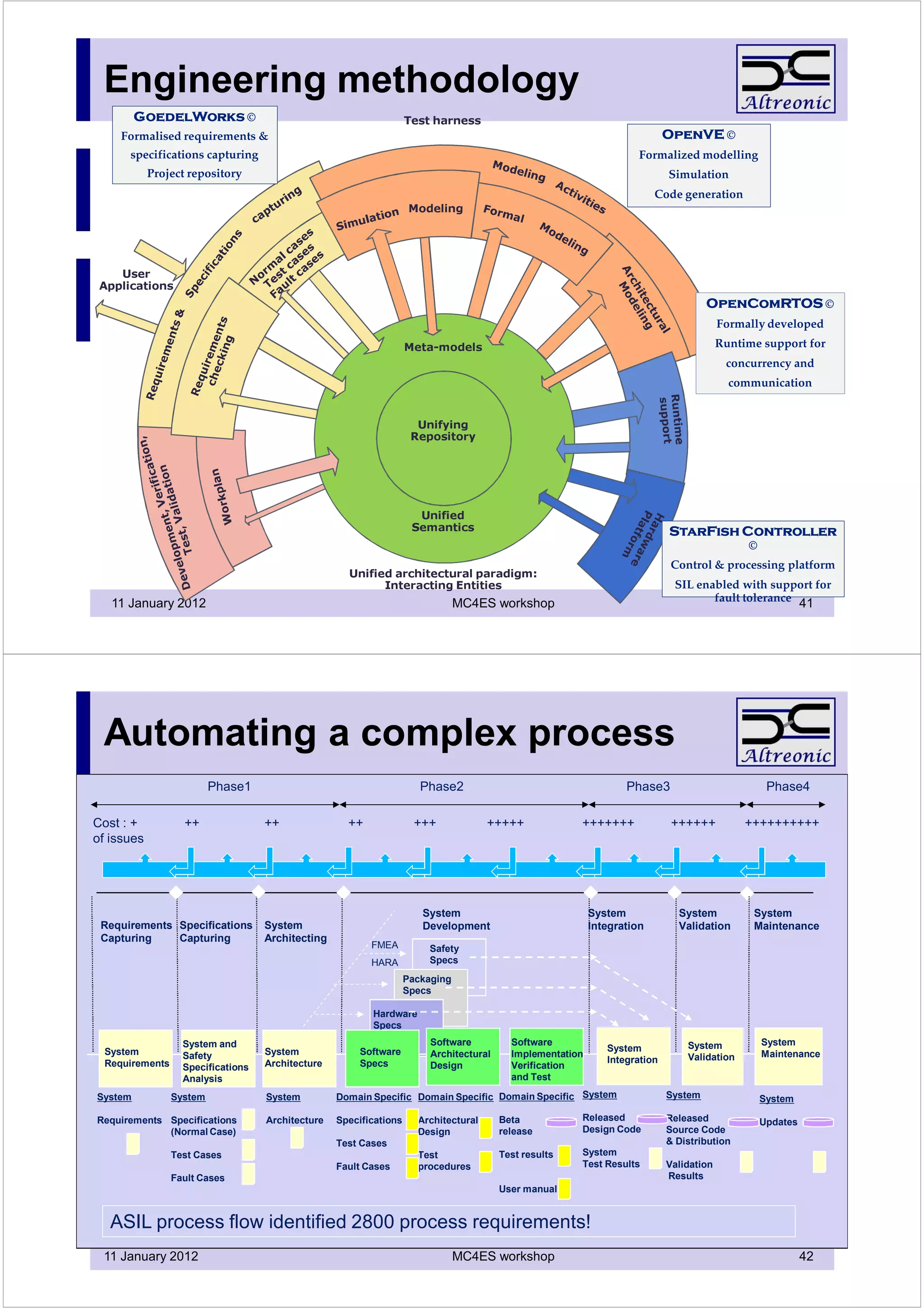

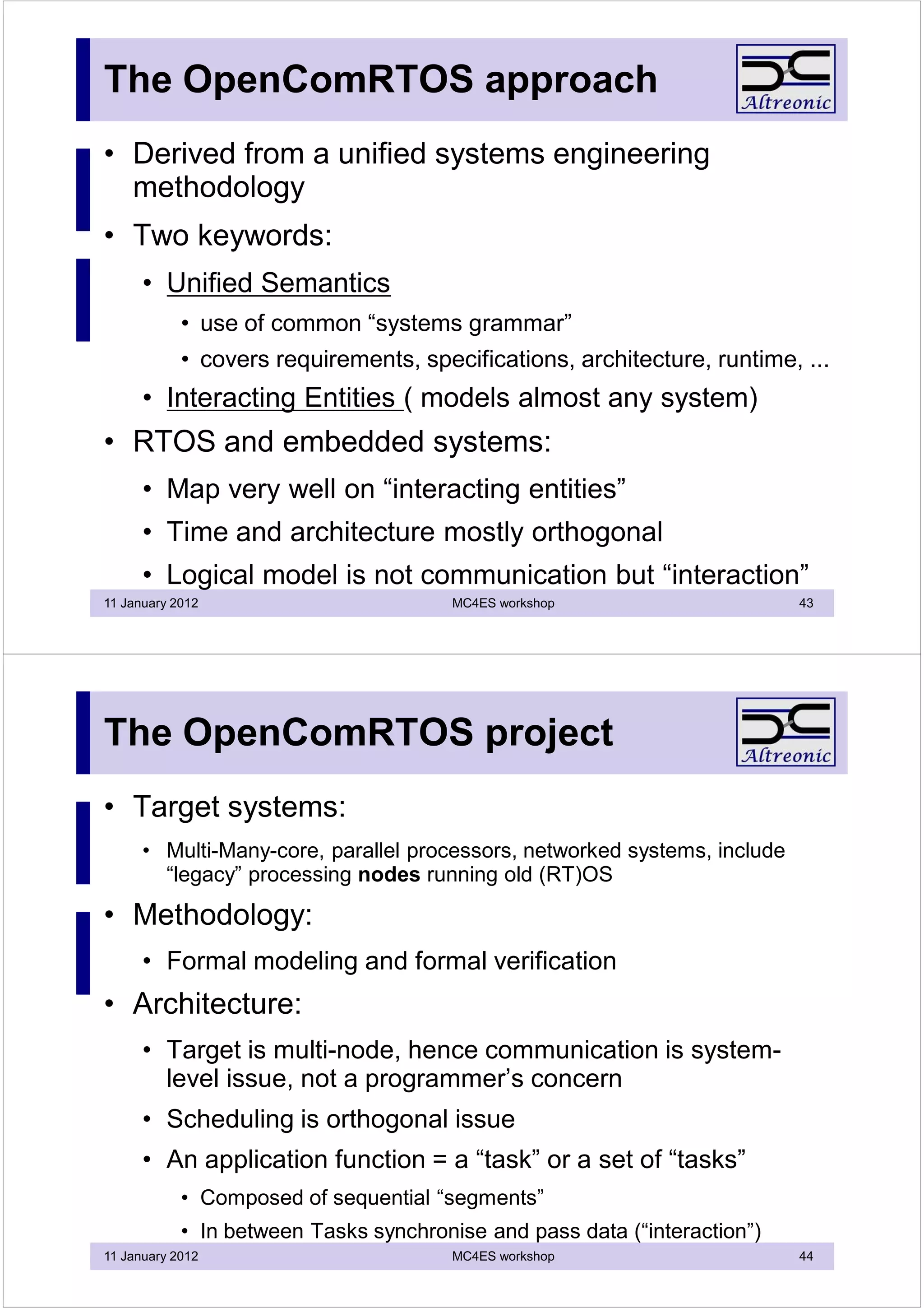

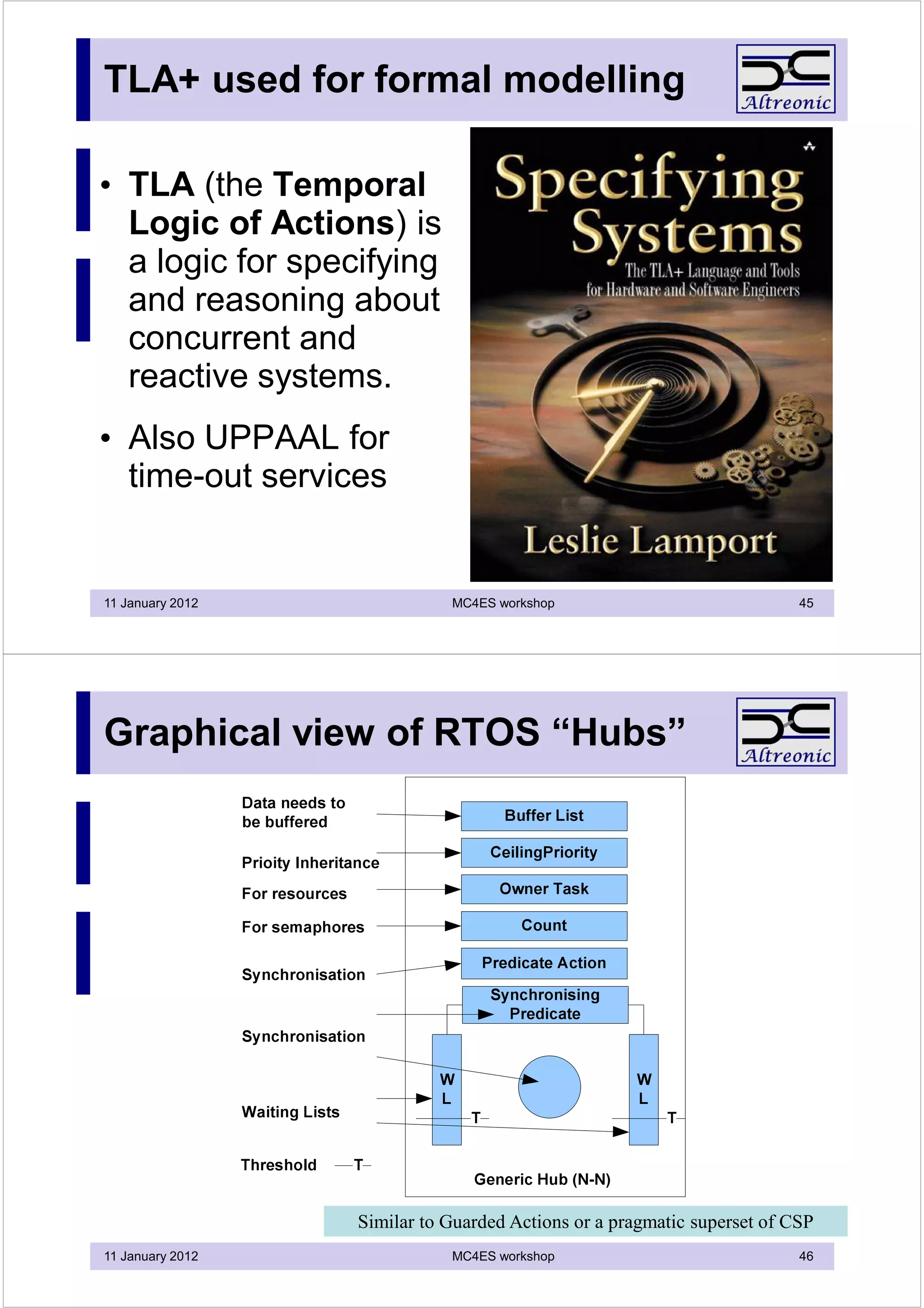

The document discusses the evolution and challenges of concurrent programming, particularly in embedded systems, highlighting the limitations of the von Neumann architecture for parallel processing. It explores multitasking and scheduling techniques essential for real-time behavior, and introduces concepts like virtual single processors and communication models to improve efficiency and software-hardware integration. The conclusion emphasizes the necessity of a unified approach in real-time operating systems to manage complexity and optimize performance in contemporary multi-core and networked environments.

![The von Neumann ALU

vs. an embedded processor

• The sequential programming paradigm is based on the von Neumann architecture

• But this was only meant for a single ALU

• A real processor in an embedded system:

• Inputs data, processes the data (only this step is covered by von Neumann), outputs the result

• In other words:

• at least two communications, often one computation

• => Communication/Computation ratio must be > 1 (in optimal case)

• Standard programming languages (C, Java, …) only cover the computation and sometimes limited runtime multitasking

• We have an imbalance, and have been living with it for decades

• Reason? History:

• Computer scientists use workstations (eye Nyquist Frequency is 50 Hz)

• Only embedded systems must process data in real-time

• Embedded systems were first developed by hardware engineers

11 January 2012 MC4ES workshop 3

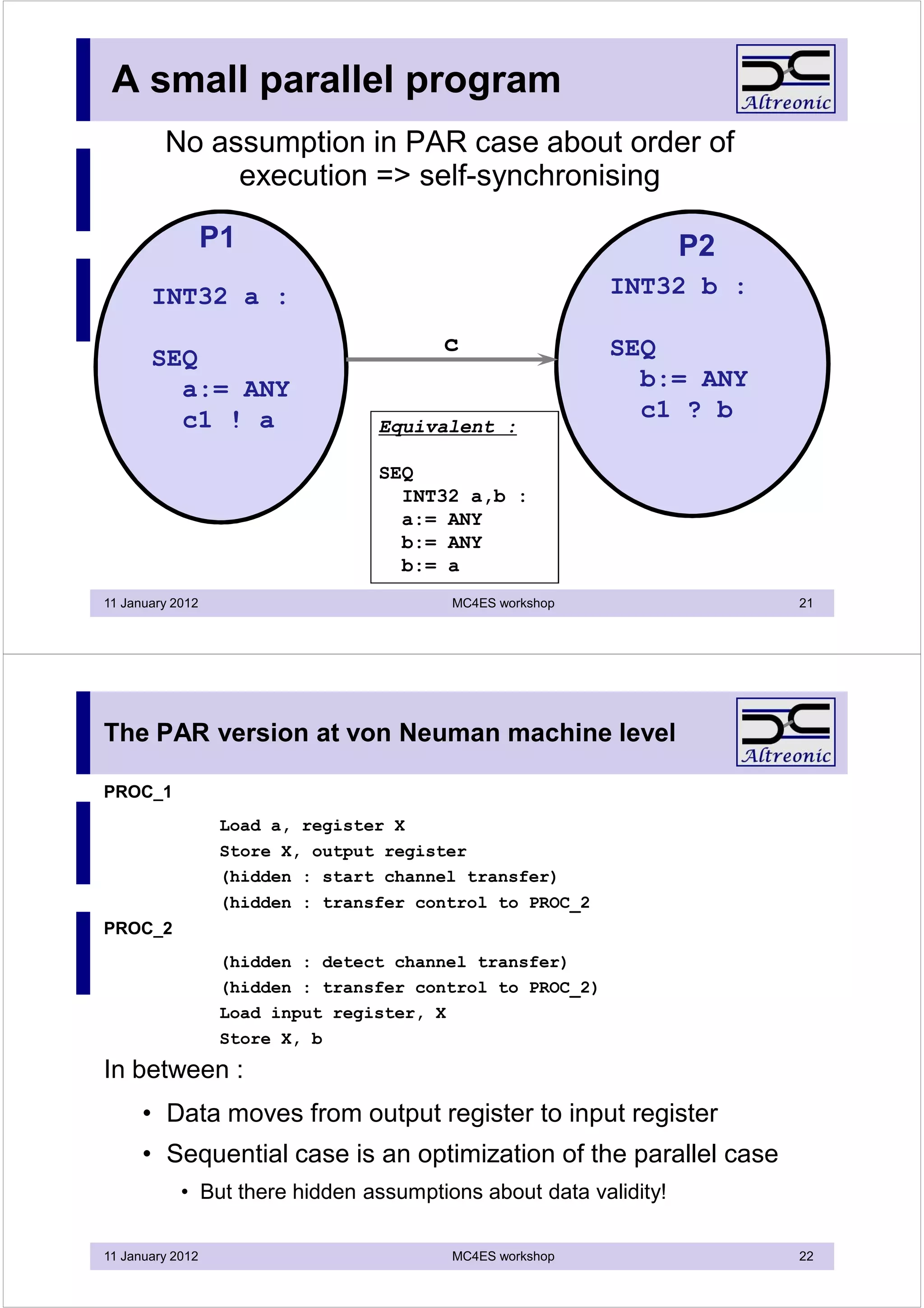

Multi-tasking:

doing more than one thing at a time

• Origin:

• A software solution to a hardware limitation

• von Neumann processors are sequential; the real world is “parallel” by nature, and software merely models it

• Developed out of industrial needs

• How?

• A function is a [callable] sequential stream of instructions

• Uses resources [mainly registers] => defines “context”

• Non-sequential processing =

• switching between ownership of processor(s)

• reducing overhead by using idle time or by avoiding active waiting:

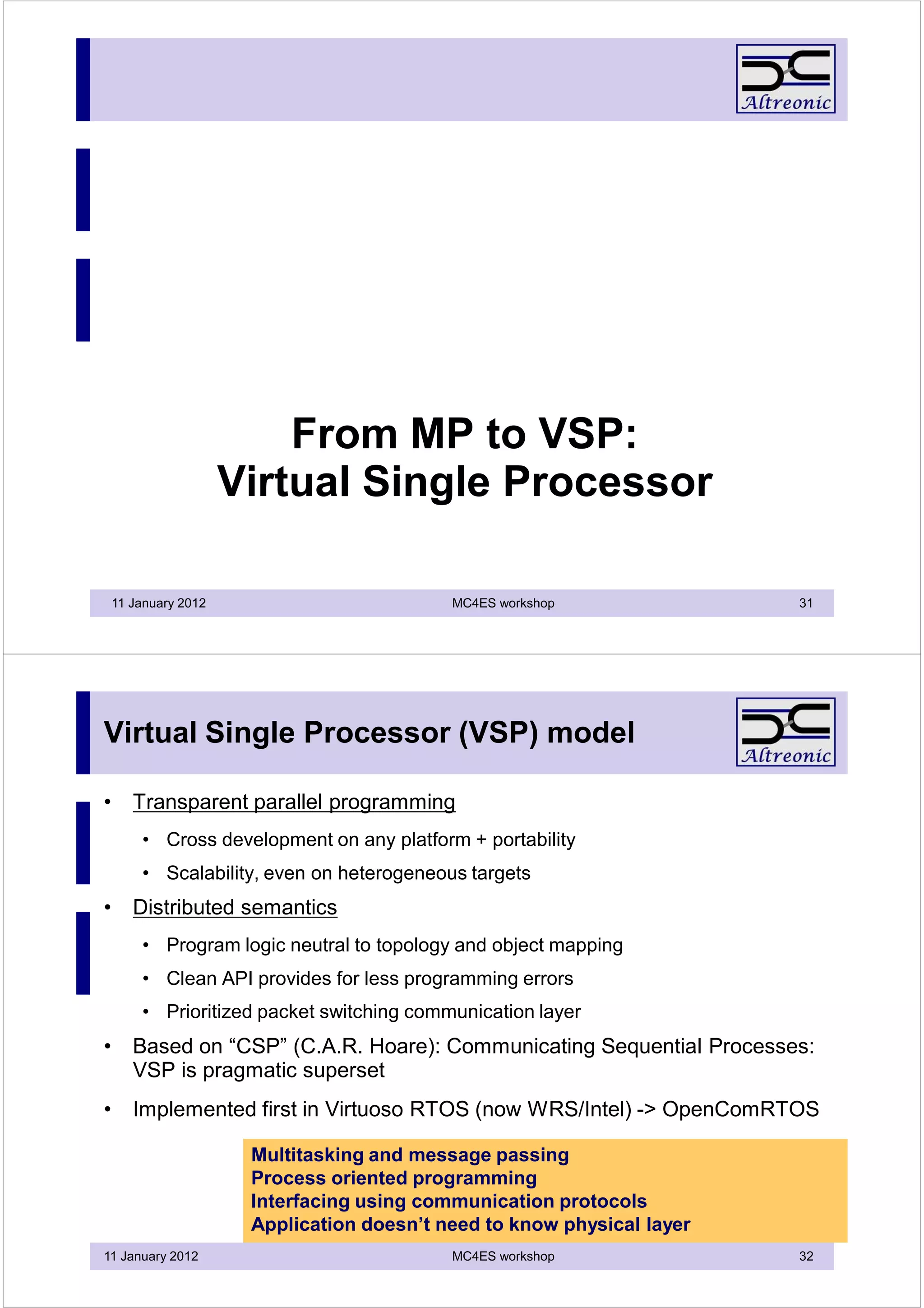

• each function has its own workspace

• a task = function with proper context and workspace

• Scheduling to achieve real-time behavior for each task

• preemption capability : switch asynchronously

](https://image.slidesharecdn.com/session1introductionconcurrentprogramming-130402135019-phpapp02/75/Session-1-introduction-concurrent-programming-2-2048.jpg)
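The slide's definition of a task (a function plus its own context and workspace) and the idea of handing the processor to another task instead of waiting actively can be sketched in portable C. This is a minimal illustration under stated assumptions, not an RTOS implementation: the names `Task`, `schedule_pass`, `producer_step`, `consumer_step`, `data_ready` and `shared_value` are all hypothetical, tasks run to completion in a single step, and no preemption takes place. A real kernel would save and restore processor registers in assembly, whereas here the "context" is reduced to the fields of the struct.

```c
#include <stdbool.h>
#include <stddef.h>

#define WORKSPACE_WORDS 4

typedef struct Task Task;

/* Hypothetical task descriptor: function + private workspace + wait state. */
struct Task {
    void (*step)(Task *self);        /* the function: a sequential instruction stream */
    int workspace[WORKSPACE_WORDS];  /* workspace owned by this task only             */
    const volatile bool *wait_on;    /* event the task waits for, NULL when ready     */
    bool done;                       /* set once the task has finished                */
};

/* Hypothetical event flag and shared data, e.g. filled by an input driver. */
static volatile bool data_ready = false;
static int shared_value = 0;

/* Produces a value in its own workspace, publishes it and raises the event. */
static void producer_step(Task *self) {
    self->workspace[0] = 42;
    shared_value = self->workspace[0];
    data_ready = true;
    self->done = true;
}

/* Runs only after data_ready was raised, so it never spins on the flag. */
static void consumer_step(Task *self) {
    self->workspace[0] = shared_value * 2;
    self->done = true;
}

/* One scheduling pass: gives the processor to every task that can run.
 * A task whose event has not occurred is skipped instead of busy-waiting.
 * Returns the number of tasks that actually ran. */
static size_t schedule_pass(Task *tasks, size_t count) {
    size_t ran = 0;
    for (size_t i = 0; i < count; i++) {
        Task *t = &tasks[i];
        if (t->done) {
            continue;
        }
        if (t->wait_on != NULL && !*t->wait_on) {
            continue;                /* still waiting: switch to the next task */
        }
        t->wait_on = NULL;           /* event seen, the task is ready again */
        t->step(t);
        ran++;
    }
    return ran;
}
```

With a consumer that waits on `data_ready` listed before the producer, the first pass runs only the producer and the second pass runs the consumer. The processor is never spent polling the flag inside a task.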

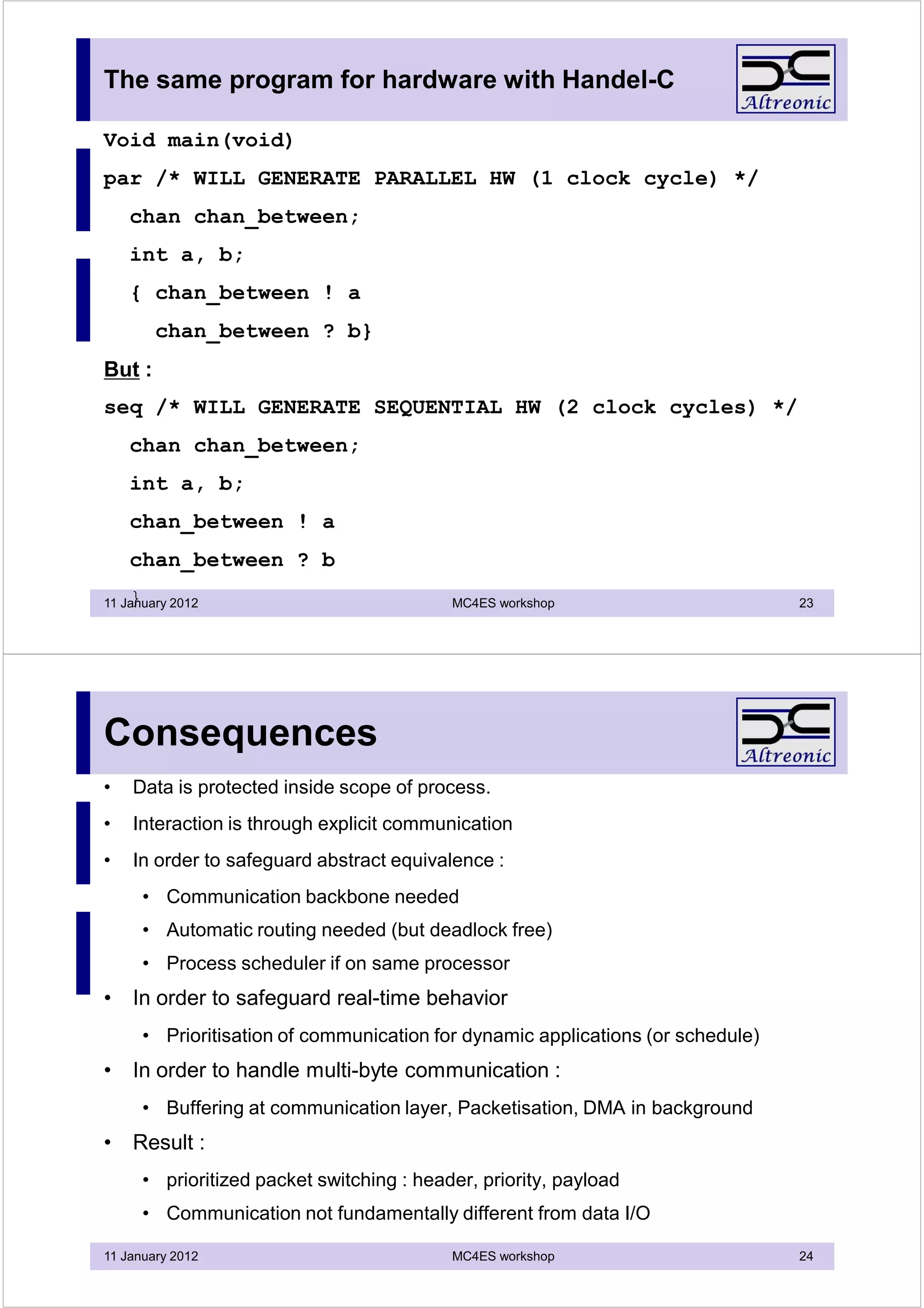

![Classical (RT) scheduling (1)

• Superloop :

• a single endless loop: [test, call function]

• Round-robin, FIFO :

• first in, first served

• cooperative multi-tasking, run till descheduling point, no preemption

• Priority based, pre-emptive

• tasks run when executable in order of priority

• can be descheduled at any moment

• Rate Monotonic Analysis (Liu & Layland): CPU load < 70 %

• simplified model : tasks are independent

• graceful degradation by design

Classical (RT) scheduling (2)

• Earliest deadline first (EDF):

• most dynamic form : deadlines are time based

• but complex to use and implement

• time granularity is an issue (hardware lacking support)

• catastrophic failure mode

• For MP systems:

• No real generic solution for embedded

• IT solutions focus on QoS and soft real-time, with high overhead

• Higher need for error recovery

• Graceful degradation: guarantee QoS even if HW resources fail

• RMA (priority, preemptive) still best approach

](https://image.slidesharecdn.com/session1introductionconcurrentprogramming-130402135019-phpapp02/75/Session-1-introduction-concurrent-programming-4-2048.jpg)
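The "CPU load < 70 %" rule for rate monotonic scheduling and the selection rule of EDF can both be sketched in a few lines of C. The sketch assumes the simplified model named on the slide: independent periodic tasks whose deadline equals their period. The classical sufficient condition is U ≤ n(2^(1/n) − 1), which tends toward ln 2 ≈ 0.693 as the number of tasks grows. To avoid depending on the math library, the code checks the algebraically equivalent form (1 + U/n)^n ≤ 2. All type and function names (`PeriodicTask`, `Job`, `total_utilization`, `rm_schedulable`, `edf_pick`) are hypothetical.

```c
#include <stddef.h>

/* Hypothetical description of an independent periodic task. */
typedef struct {
    double wcet;    /* worst-case execution time per activation       */
    double period;  /* activation period, also taken as the deadline  */
} PeriodicTask;

/* Total CPU load U: the sum of wcet / period over all tasks. */
double total_utilization(const PeriodicTask *tasks, size_t n) {
    double u = 0.0;
    for (size_t i = 0; i < n; i++) {
        u += tasks[i].wcet / tasks[i].period;
    }
    return u;
}

/* Sufficient (not necessary) rate monotonic test.
 * U <= n * (2^(1/n) - 1) is evaluated as (1 + U/n)^n <= 2.
 * Returns 1 when all deadlines are guaranteed, 0 when the test is inconclusive. */
int rm_schedulable(const PeriodicTask *tasks, size_t n) {
    if (n == 0) {
        return 1;
    }
    double base = 1.0 + total_utilization(tasks, n) / (double)n;
    double product = 1.0;
    for (size_t i = 0; i < n; i++) {
        product *= base;
    }
    return product <= 2.0;
}

/* Hypothetical job record for the EDF selection rule. */
typedef struct {
    unsigned long deadline;  /* absolute deadline in timer ticks */
    int ready;               /* non-zero when the job can run     */
} Job;

/* EDF: pick the ready job with the earliest absolute deadline.
 * Returns its index, or -1 when no job is ready. */
int edf_pick(const Job *jobs, size_t n) {
    int best = -1;
    for (size_t i = 0; i < n; i++) {
        if (!jobs[i].ready) {
            continue;
        }
        if (best < 0 || jobs[i].deadline < jobs[(size_t)best].deadline) {
            best = (int)i;
        }
    }
    return best;
}
```

A task set that fails `rm_schedulable` is not necessarily unschedulable, since the bound is only sufficient. The EDF rule depends on comparing time stamps, which is where the timer granularity mentioned on the slide becomes an issue.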

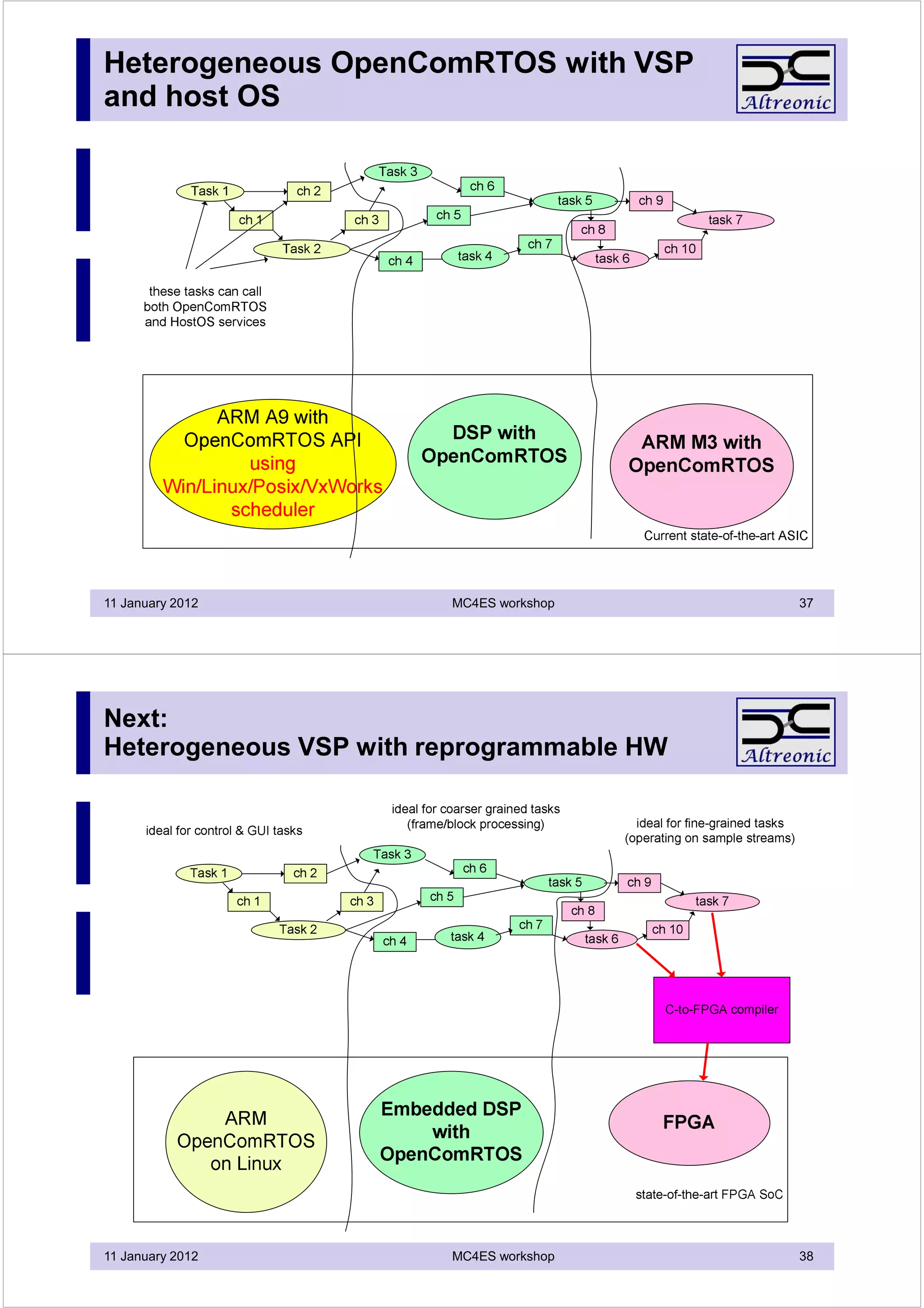

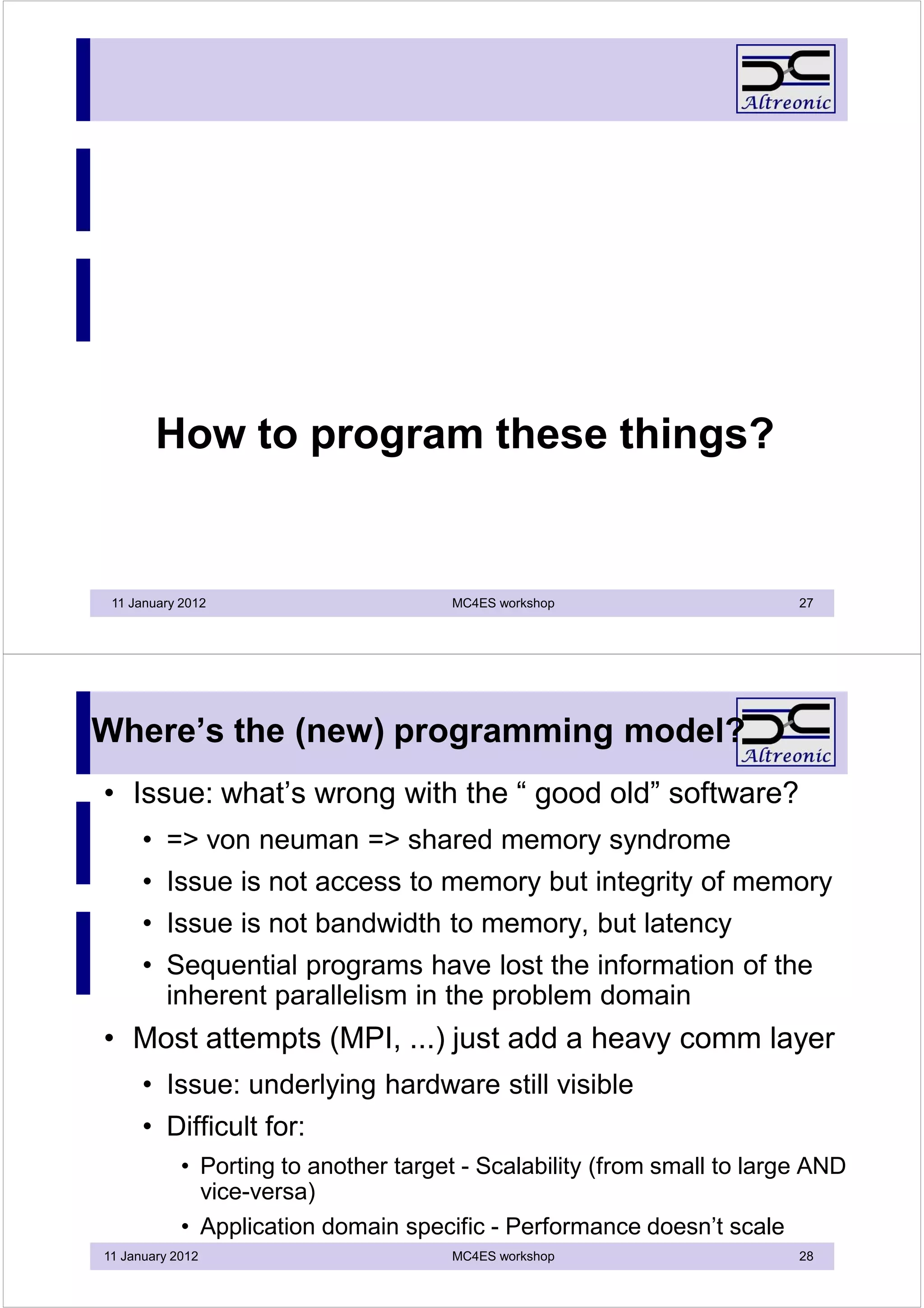

![Beyond concurrent multi-tasking

• Multi-tasking = Process Oriented Programming

• A Task =

• Unit of execution

• Encapsulated functional behavior

• Modular programming

• High Level [Programming] Language :

• common specification :

• for SW: compile to asm

• for HW: compile to VHDL or Verilog

• E.g. program PPC with ANSI C (and RTOS), FPGA with Handel-C

• C-level design is an enabler for SoC “co-design”

• More abstraction gives higher productivity

• But interfaces should be better standardized for better re-use

• Interfaces can be “compiled” for higher volume applications

Multi-tasking API: an orthogonal set

• Events : binary data, one to one, local -> interface to HW

• [counting] semaphore : events with a memory, many to many, distributed

• FIFO queue : simple datacomm between tasks, many to many, distributed

• Mailbox/Port : variable size datacomm between tasks, rendez-vous, one/many to one/many, distributed

• Resources : ownership protection, priority inheritance

• Memory maps/pools

• semantic issues : distributed operation, blocking, non-blocking, time-out, asynchronous operation, group operators

• L1_memcpy_[A][WT] (not just memcpy!)

• L1_SendPacketToPort_[NW][W][T][A]

](https://image.slidesharecdn.com/session1introductionconcurrentprogramming-130402135019-phpapp02/75/Session-1-introduction-concurrent-programming-15-2048.jpg)
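The difference between the non-blocking and time-out semantics listed above can be illustrated with a counting semaphore, described on the slide as "events with a memory". The sketch below is a single-threaded simulation with hypothetical names (`CountingSemaphore`, `sem_signal`, `sem_test_nw`, `sem_test_wt`, `TickHook`, `signal_at_tick_3`). It does not reproduce the `L1_` services named on the slide, whose signatures are not given here. In a real kernel the waiting task would be descheduled until the signal or the time-out arrives; here a tick hook stands in for time passing and for other tasks or interrupts signalling the semaphore.

```c
#include <stddef.h>

typedef enum { RC_OK, RC_FAIL, RC_TIMEOUT } ReturnCode;

/* Counting semaphore: each signal is remembered until it is consumed. */
typedef struct {
    unsigned count;
} CountingSemaphore;

/* Hypothetical hook called once per simulated timer tick. */
typedef void (*TickHook)(CountingSemaphore *s, unsigned tick);

void sem_signal(CountingSemaphore *s) {
    s->count++;
}

/* Non-waiting variant: returns at once, RC_FAIL when nothing is pending. */
ReturnCode sem_test_nw(CountingSemaphore *s) {
    if (s->count > 0) {
        s->count--;
        return RC_OK;
    }
    return RC_FAIL;
}

/* Waiting-with-time-out variant: retries once per simulated tick and
 * gives up with RC_TIMEOUT after timeout_ticks ticks have elapsed. */
ReturnCode sem_test_wt(CountingSemaphore *s, unsigned timeout_ticks,
                       TickHook on_tick) {
    for (unsigned tick = 0; ; tick++) {
        if (sem_test_nw(s) == RC_OK) {
            return RC_OK;
        }
        if (tick == timeout_ticks) {
            return RC_TIMEOUT;
        }
        if (on_tick != NULL) {
            on_tick(s, tick + 1);   /* simulated time advances by one tick */
        }
    }
}

/* Example hook: simulates an interrupt that signals the semaphore at tick 3. */
void signal_at_tick_3(CountingSemaphore *s, unsigned tick) {
    if (tick == 3) {
        sem_signal(s);
    }
}
```

With `signal_at_tick_3` as the hook, a time-out of 5 ticks succeeds and a time-out of 2 ticks expires. A plain waiting variant would correspond to an unbounded time-out, and an asynchronous variant would return immediately and report completion later.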