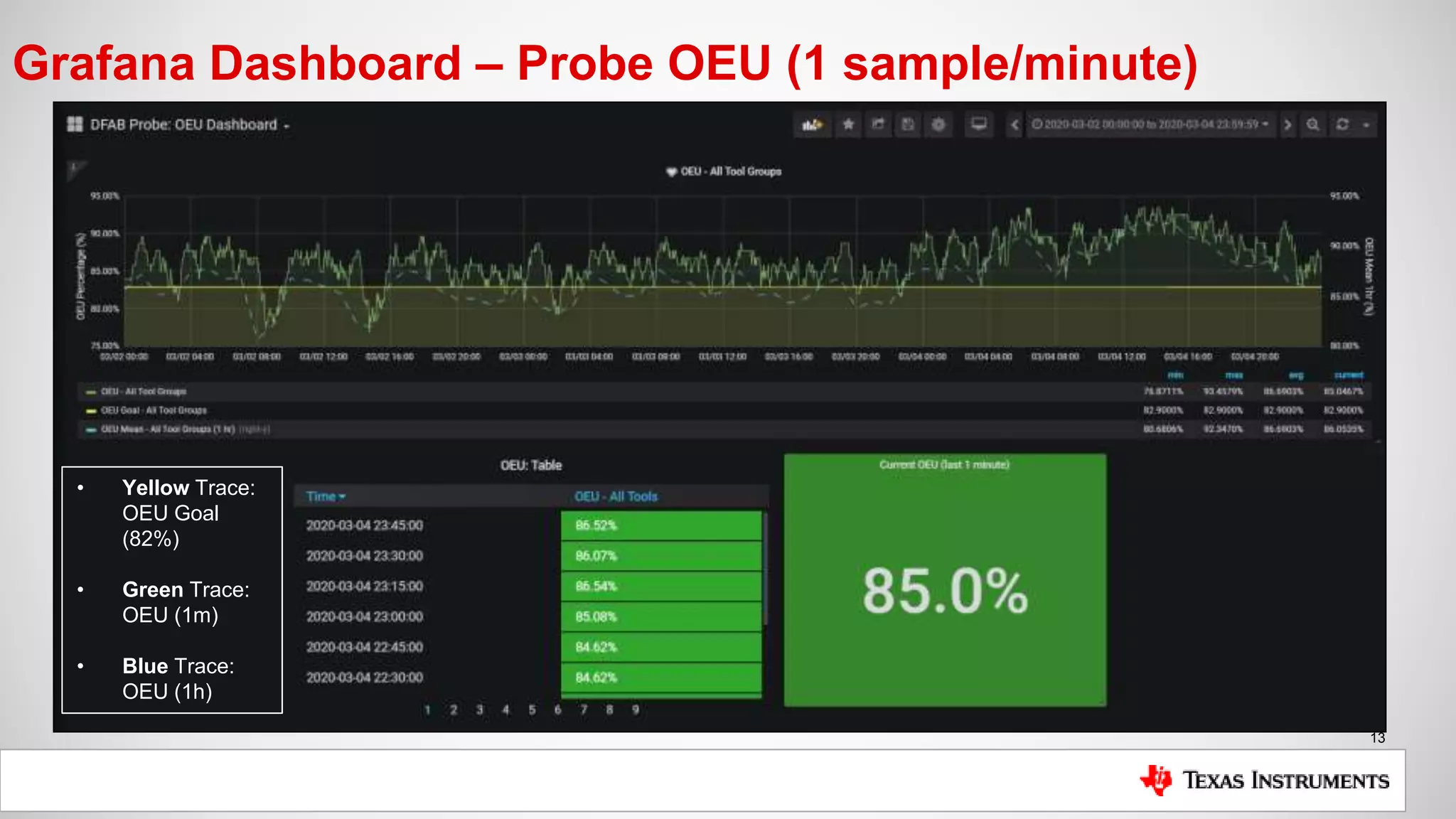

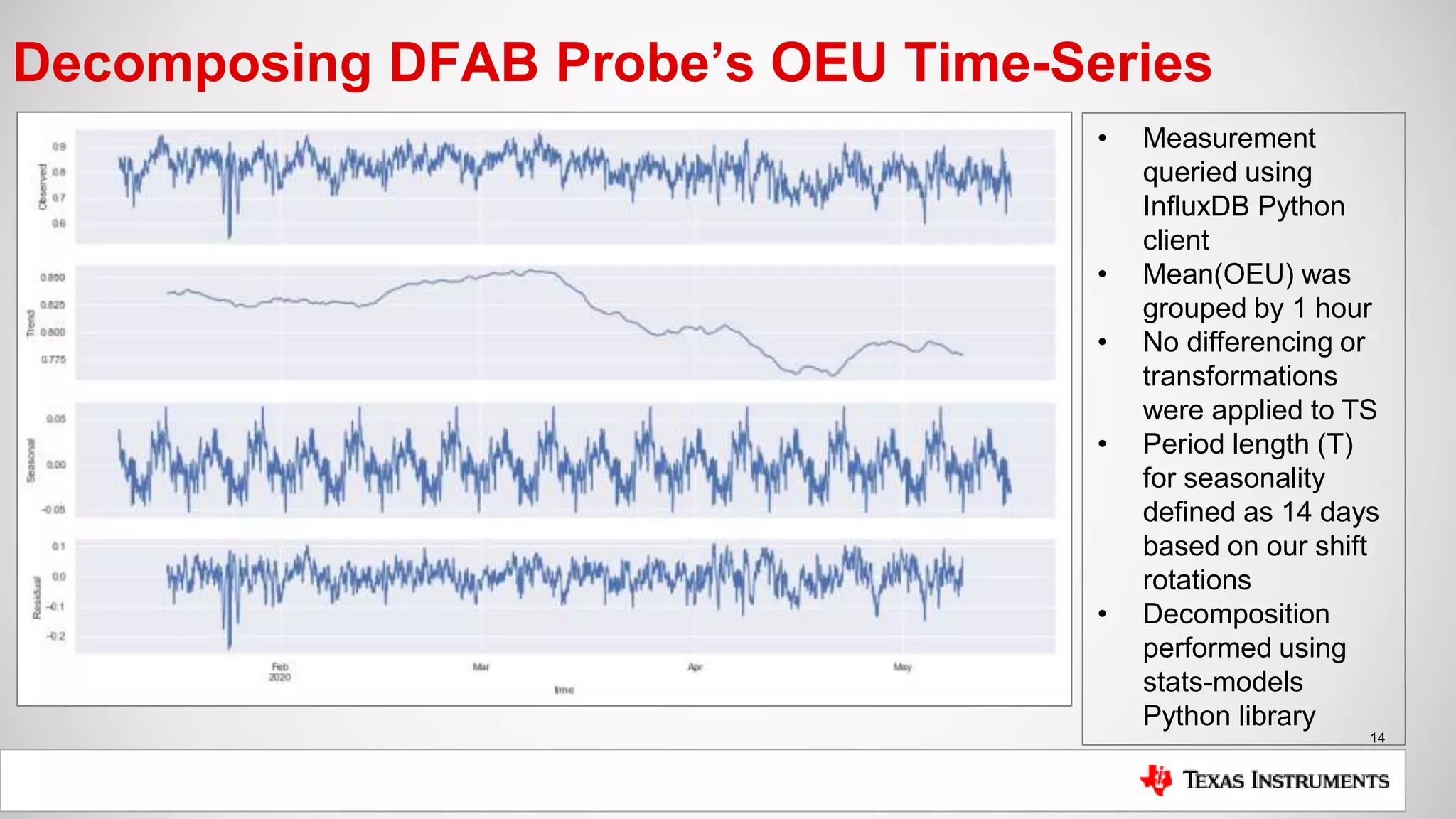

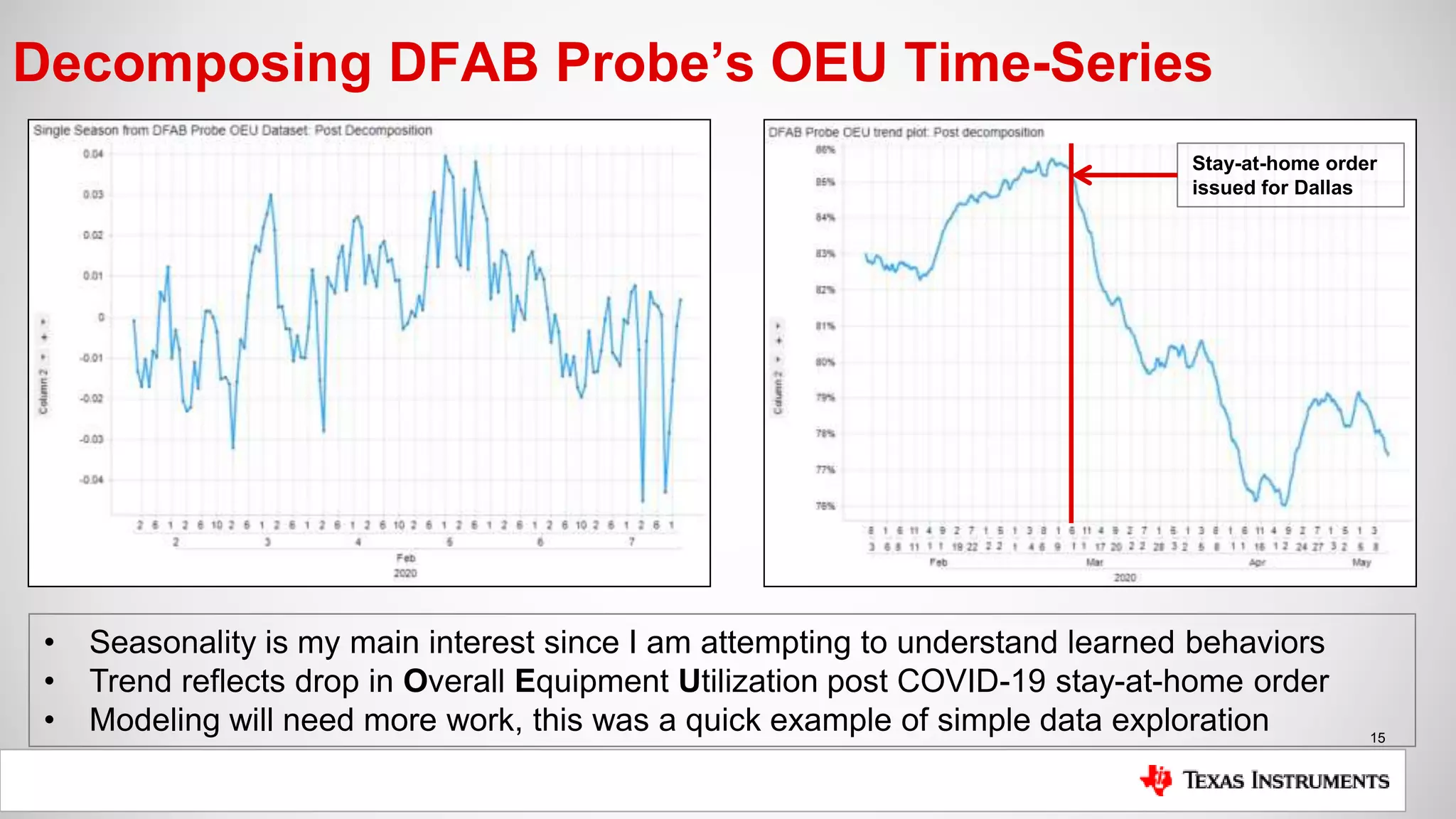

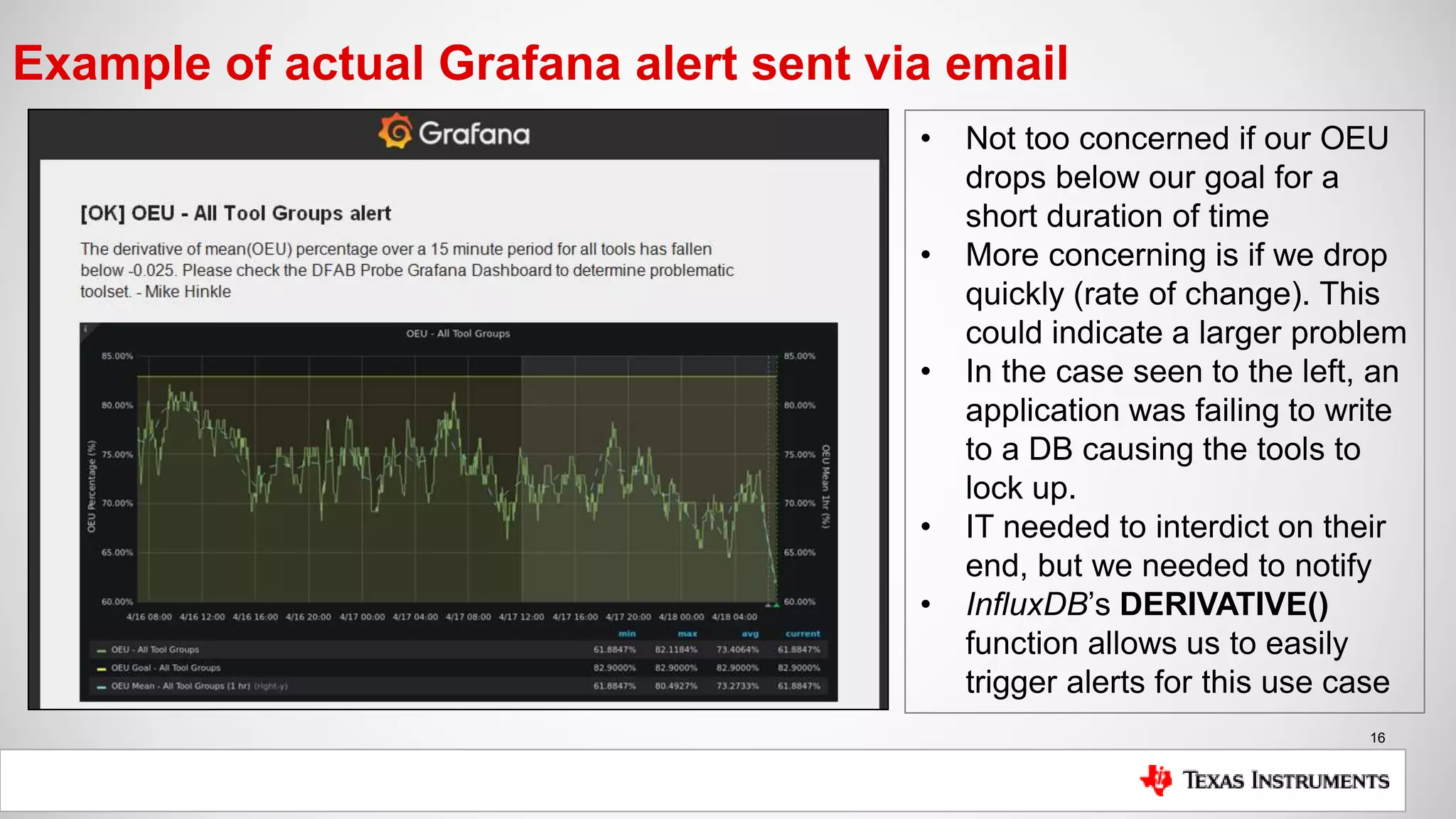

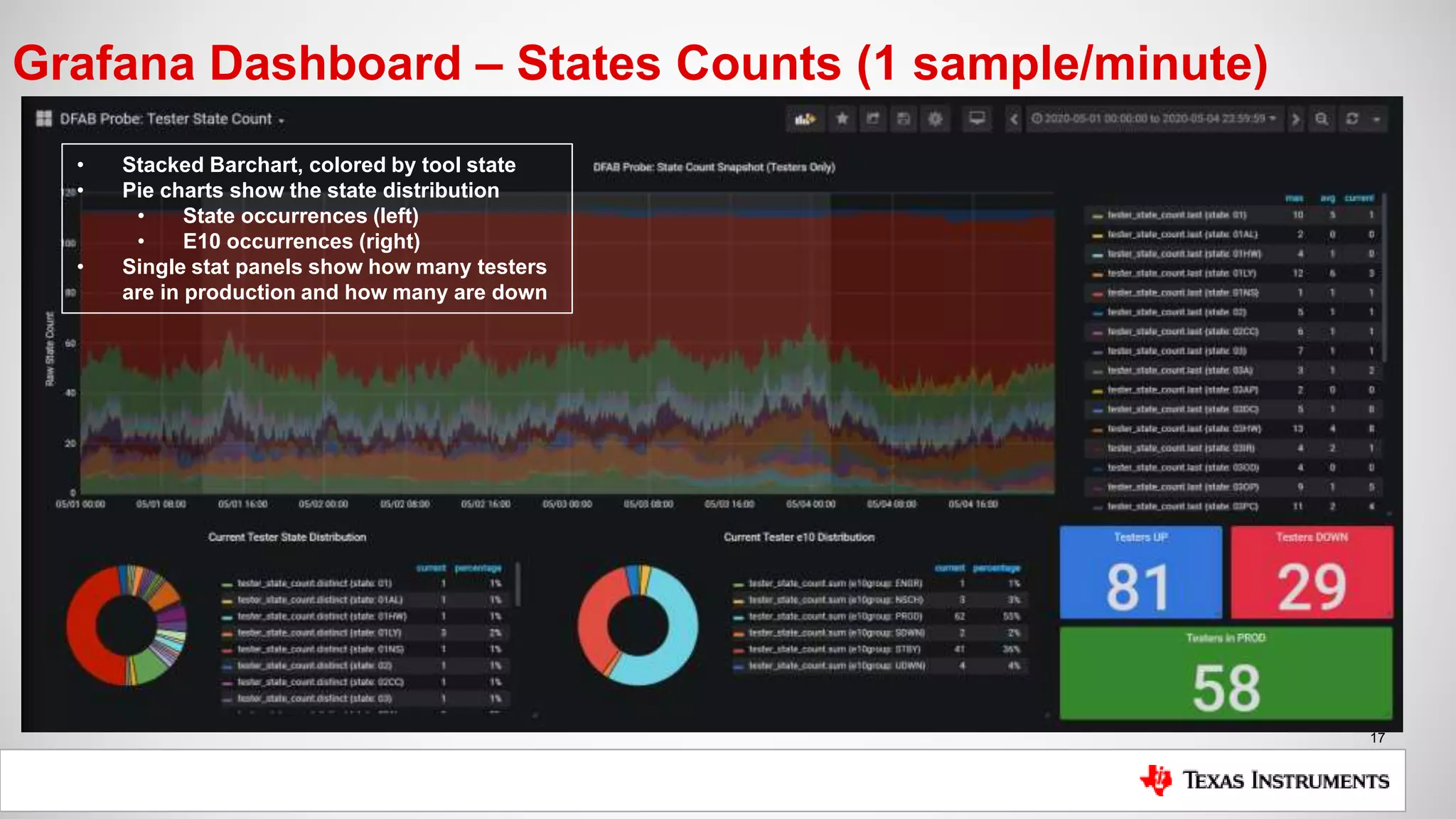

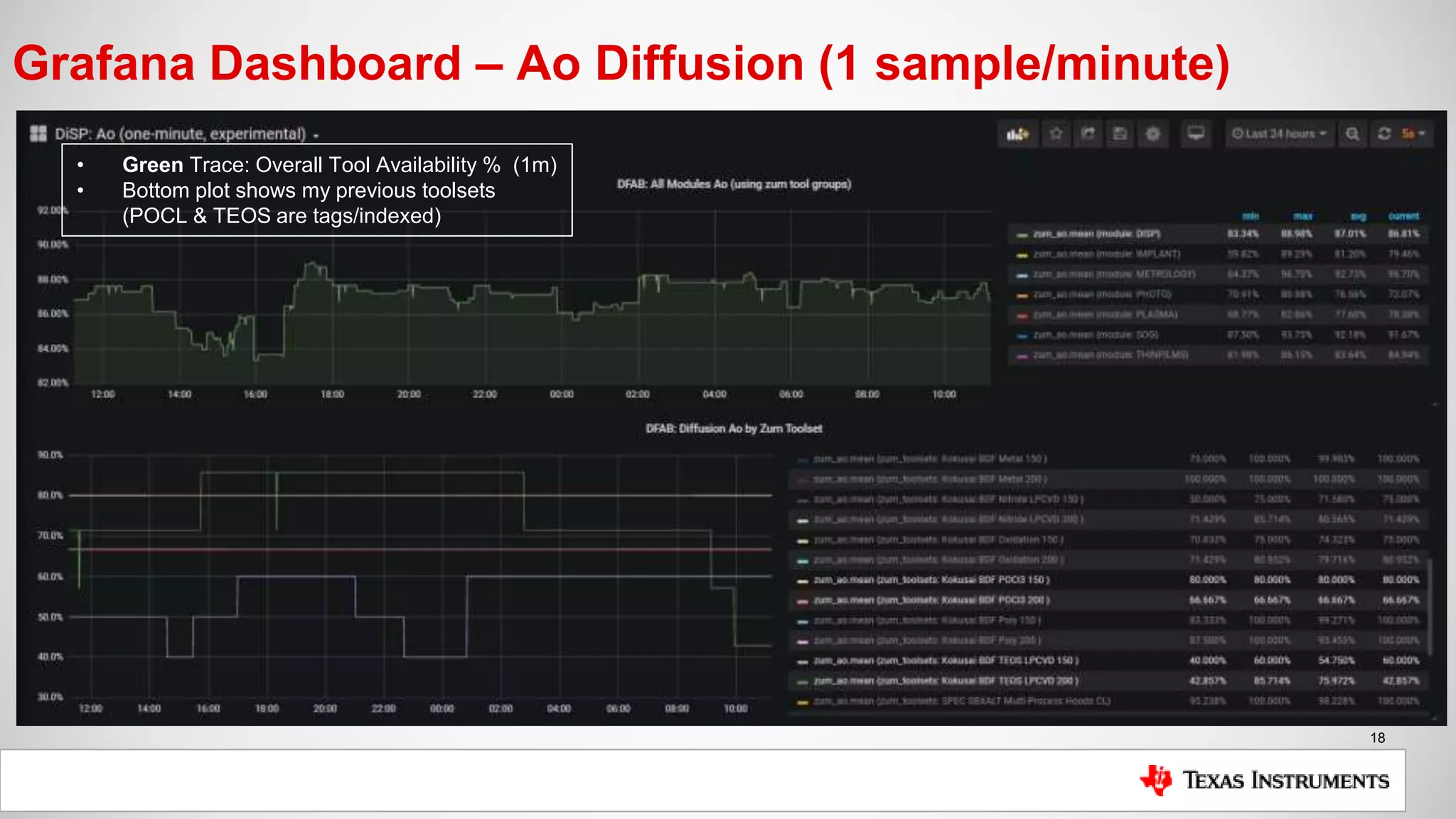

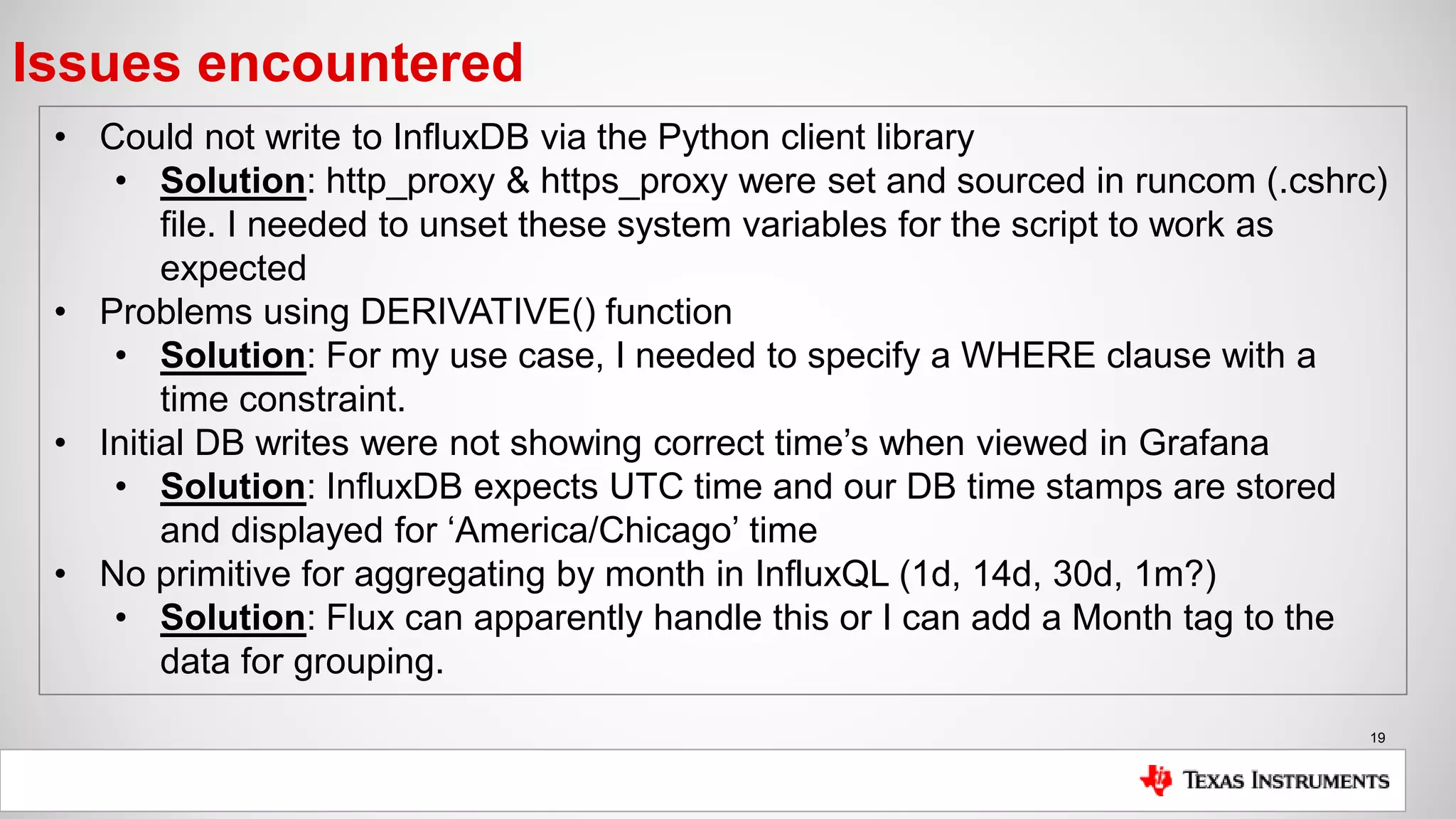

The document is a presentation by Mike Hinkle from Texas Instruments, detailing their use of InfluxDB for managing semiconductor manufacturing data. It covers the importance of accurate data for decision-making, the current challenges faced, and the reasons for choosing InfluxDB including ease of setup and performance. The presentation also discusses examples of data utilization, implemented dashboards, and future plans for data modeling and forecasting.

![11

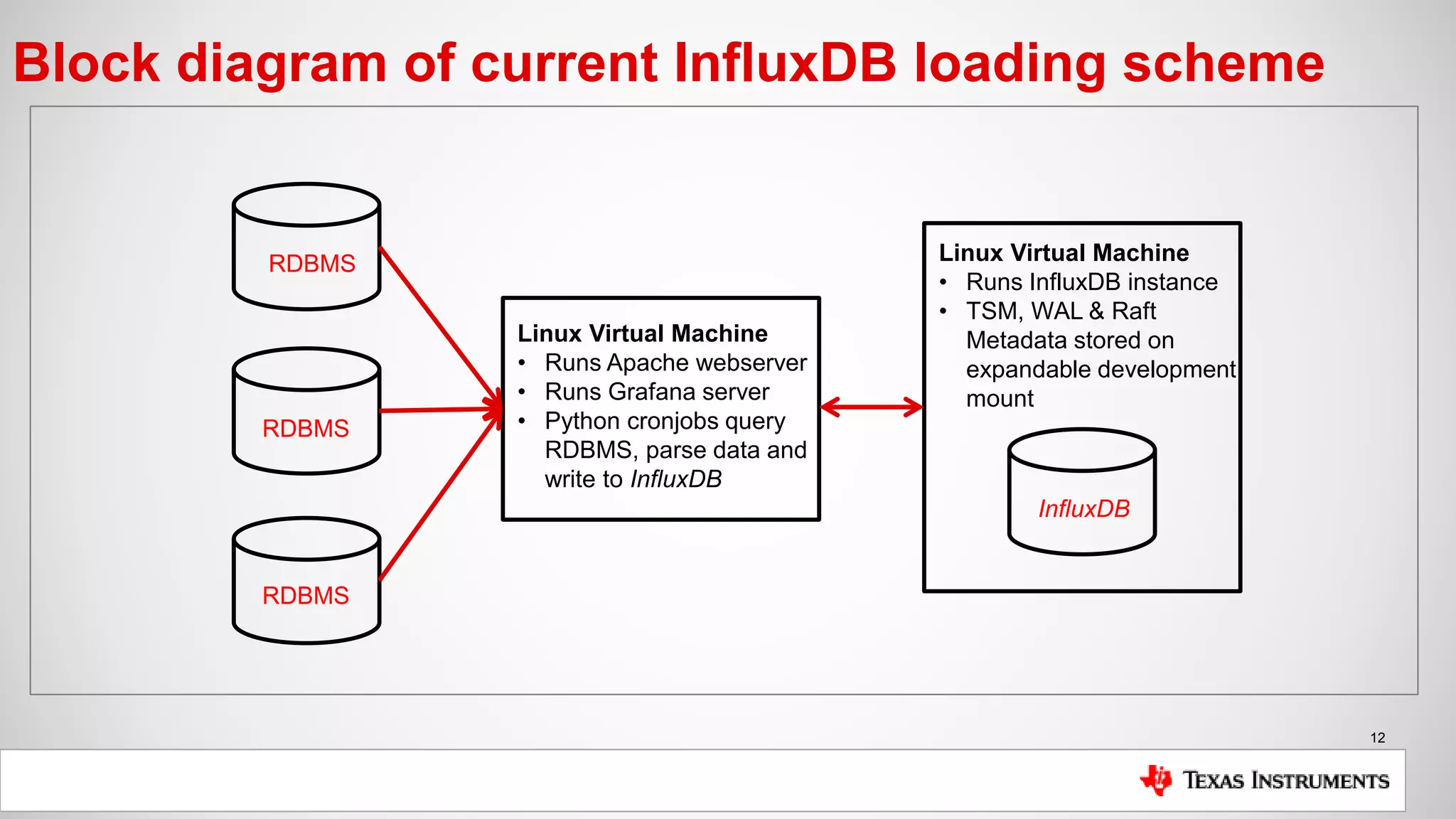

Current installation setup

Setup:

• Single InfluxDB instance (OSS) installed on Linux VM

• TSM, WAL & Raft data all stored on expandable development mount

• Loader scripts executed via cronjob, query RDBMS and load InfluxDB

• Data is filtered, sanitized & calculated if possible during initial query

• Grafana server running on separate Linux VM instance

• Apache web server is reverse proxied to point to Grafana server on port 3000

Metrics being collected:

• OEU (Overall Equipment Utilization: [Time Testing Good Wafers / Total Time])

• Ao (Tool Availability: [Uptime / Total Time])

• Occurrences of States (States define the state of our tools, PM’s, PROD, etc..)

• Cycle Time (how long it takes for a wafer to be tested [device tag])](https://image.slidesharecdn.com/tiinfluxpresentation2020-05-26mhinkle-200605234935/75/How-Texas-Instruments-Uses-InfluxDB-to-Uphold-Product-Standards-and-to-Improve-Efficiencies-11-2048.jpg)
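As a rough illustration of the loader step and the ratio metrics listed above, the following Python sketch computes OEU, Ao and state occurrences from a list of state intervals and formats the result as an InfluxDB line protocol string. It is not the presenter's code. The `IDLE` state, the choice of which states count as uptime or as good test time, the measurement name `tool_metrics` and the `tool` tag are all assumptions made for the example; only `PM` and `PROD` appear on the slide. Querying the RDBMS and sending the line to InfluxDB are left out.

```python
from collections import Counter
from datetime import datetime, timezone

# Assumption: which states count toward each metric. Only "PM" and "PROD"
# are named on the slide; "IDLE" is a hypothetical extra state.
UP_STATES = {"PROD", "IDLE"}
GOOD_TEST_STATES = {"PROD"}


def summarize(intervals):
    """Summarize one tool's time window.

    intervals: list of (state, seconds) tuples, one per state occurrence.
    """
    total = sum(seconds for _, seconds in intervals)
    if total <= 0:
        raise ValueError("window has no recorded time")
    up = sum(sec for state, sec in intervals if state in UP_STATES)
    good = sum(sec for state, sec in intervals if state in GOOD_TEST_STATES)
    return {
        "oeu": good / total,  # Time Testing Good Wafers / Total Time
        "ao": up / total,     # Uptime / Total Time
        "occurrences": Counter(state for state, _ in intervals),
    }


def to_line_protocol(measurement, tags, fields, when):
    """Format one point as line protocol with a nanosecond timestamp.

    Assumes tag keys and values contain no spaces, commas or equals signs
    (no escaping is done) and that every field value is a float.
    """
    tag_part = ",".join(f"{k}={v}" for k, v in sorted(tags.items()))
    field_part = ",".join(f"{k}={float(v)}" for k, v in sorted(fields.items()))
    ts_ns = int(when.timestamp()) * 1_000_000_000
    return f"{measurement},{tag_part} {field_part} {ts_ns}"


if __name__ == "__main__":
    # Hypothetical 8-hour window for one tool.
    window = [("PROD", 3 * 3600), ("PM", 3600), ("PROD", 3 * 3600), ("IDLE", 3600)]
    summary = summarize(window)
    line = to_line_protocol(
        "tool_metrics",
        {"tool": "T1"},
        {"oeu": summary["oeu"], "ao": summary["ao"]},
        datetime(2020, 5, 26, tzinfo=timezone.utc),
    )
    print(summary["occurrences"])
    print(line)
```

In a setup like the one described, a cron-driven script could build such lines from the RDBMS query results and hand them to InfluxDB in batches. Doing the ratio calculation before loading matches the slide's note that data is calculated during the initial query where possible.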