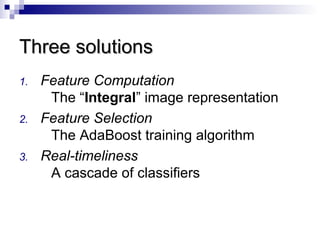

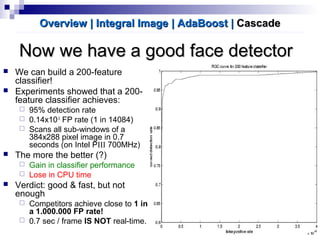

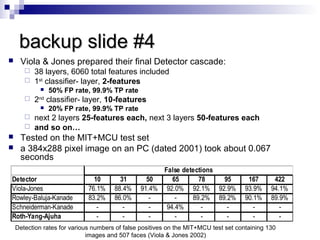

"Robust Real-Time Face Detection" by Paul Viola and Michael Jones addresses three challenges:

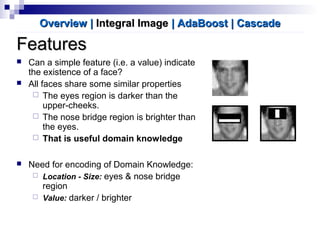

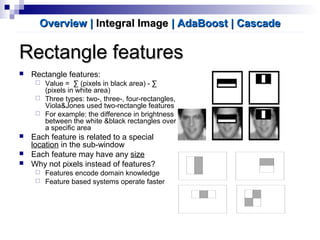

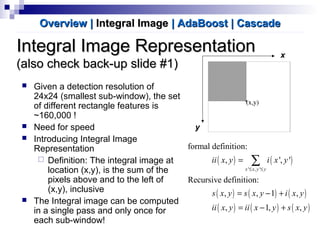

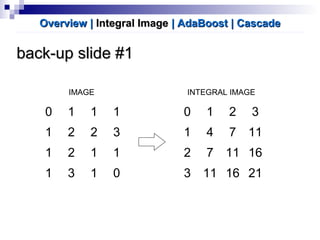

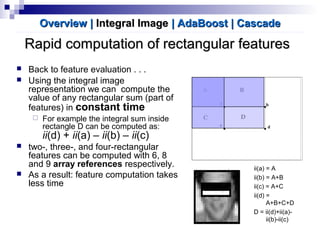

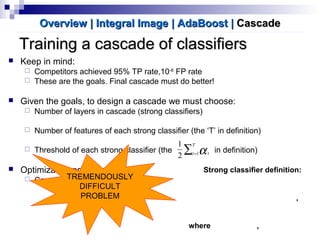

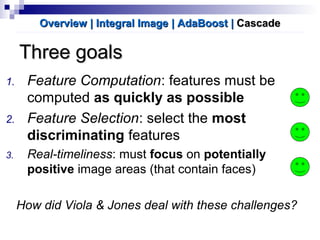

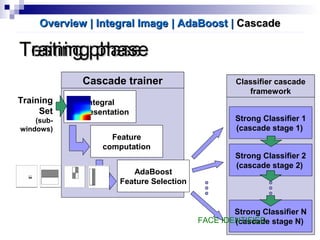

1. Rapidly computing features using an integral image representation

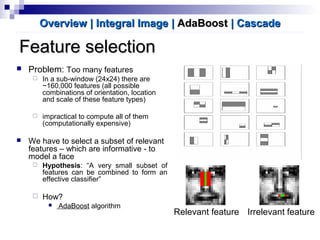

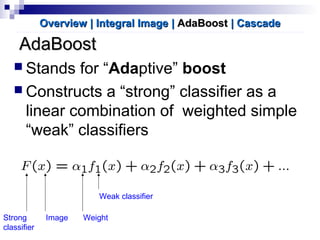

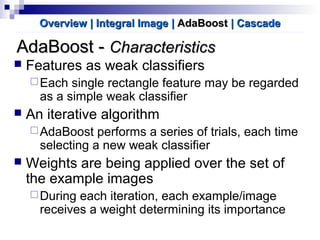

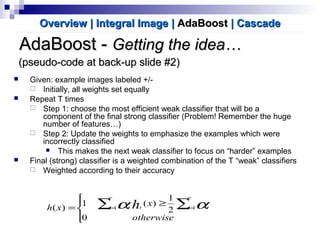

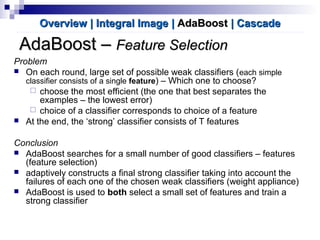

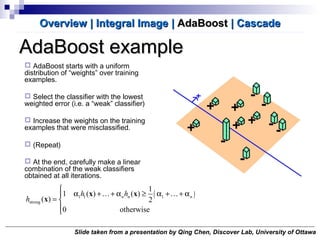
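The integral image from item 1 can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: the function names (`integral_image`, `rect_sum`, `two_rect_feature`) are hypothetical, the table is padded with a leading row and column of zeros for simpler indexing (an assumption made here for convenience), and the sign convention of the two-rectangle feature is chosen arbitrarily.

```python
from typing import List

Image = List[List[int]]


def integral_image(img: Image) -> Image:
    """Return an (h+1) x (w+1) table with ii[y][x] = sum of img rows < y, cols < x.

    The extra zero row/column is an assumption of this sketch; it avoids
    special cases at the image border.
    """
    h = len(img)
    w = len(img[0]) if h else 0
    ii = [[0] * (w + 1) for _ in range(h + 1)]
    for y in range(h):
        row_sum = 0  # cumulative sum of the current row
        for x in range(w):
            row_sum += img[y][x]
            ii[y + 1][x + 1] = ii[y][x + 1] + row_sum
    return ii


def rect_sum(ii: Image, top: int, left: int, height: int, width: int) -> int:
    """Sum of pixels in a rectangle using four table lookups."""
    bottom, right = top + height, left + width
    return ii[bottom][right] - ii[top][right] - ii[bottom][left] + ii[top][left]


def two_rect_feature(ii: Image, top: int, left: int, height: int, width: int) -> int:
    """Horizontal two-rectangle feature: left-half sum minus right-half sum."""
    half = width // 2
    left_sum = rect_sum(ii, top, left, height, half)
    right_sum = rect_sum(ii, top, left + half, height, half)
    return left_sum - right_sum


img = [
    [1, 2, 3, 4],
    [5, 6, 7, 8],
    [9, 10, 11, 12],
    [13, 14, 15, 16],
]
ii = integral_image(img)
center = rect_sum(ii, 1, 1, 2, 2)         # 6 + 7 + 10 + 11
feature = two_rect_feature(ii, 0, 0, 4, 4)  # left two columns minus right two
```

Once the table is built in a single pass, every rectangle sum costs four lookups regardless of the rectangle's size, which is what makes evaluating many rectangle features per window affordable.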

2. Selecting discriminative features using AdaBoost

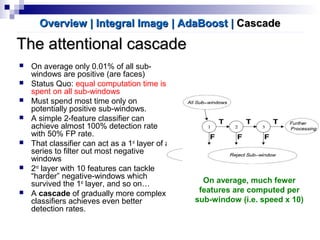

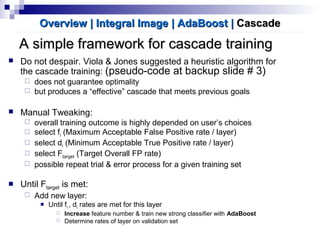

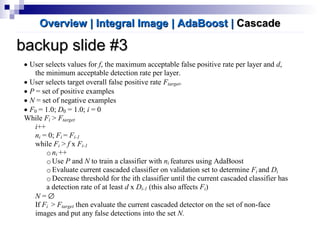
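The feature selection in item 2 can be illustrated with a small boosting loop in which each weak classifier is a threshold on a single feature, so every round effectively picks one feature. This is a rough sketch under stated assumptions: feature values are already computed and stored in `X`, both classes are present in `y`, thresholds are searched exhaustively over observed values, and all names (`stump_predict`, `best_stump`, `adaboost`, `strong_predict`) are hypothetical. The weight update is one common formulation of AdaBoost and may differ in detail from the paper's.

```python
import math
from typing import List, Tuple

Stump = Tuple[int, float, int, float]  # (feature index, threshold, polarity, alpha)


def stump_predict(value: float, theta: float, polarity: int) -> int:
    """Weak classifier: 1 if polarity * value < polarity * theta, else 0."""
    return 1 if polarity * value < polarity * theta else 0


def best_stump(X: List[List[float]], y: List[int], w: List[float]):
    """Exhaustively find the (feature, threshold, polarity) with lowest weighted error."""
    best = (float("inf"), 0, 0.0, 1)
    for j in range(len(X[0])):
        for theta in sorted({row[j] for row in X}):
            for polarity in (1, -1):
                err = sum(
                    wi for row, yi, wi in zip(X, y, w)
                    if stump_predict(row[j], theta, polarity) != yi
                )
                if err < best[0]:
                    best = (err, j, theta, polarity)
    return best


def adaboost(X: List[List[float]], y: List[int], rounds: int) -> List[Stump]:
    n_pos = sum(y)
    n_neg = len(y) - n_pos
    # Start with equal total weight on each class (assumes both are present).
    w = [1 / (2 * n_pos) if yi == 1 else 1 / (2 * n_neg) for yi in y]
    chosen: List[Stump] = []
    for _ in range(rounds):
        total = sum(w)
        w = [wi / total for wi in w]  # normalize to a distribution
        err, j, theta, polarity = best_stump(X, y, w)
        err = min(max(err, 1e-10), 1 - 1e-10)  # guard against log(0)
        beta = err / (1 - err)
        chosen.append((j, theta, polarity, math.log(1 / beta)))
        # Shrink the weight of correctly classified examples.
        w = [
            wi * (beta if stump_predict(row[j], theta, polarity) == yi else 1.0)
            for row, yi, wi in zip(X, y, w)
        ]
    return chosen


def strong_predict(stumps: List[Stump], row: List[float]) -> int:
    """Weighted vote of the selected stumps against half the total weight."""
    score = sum(a * stump_predict(row[j], t, p) for j, t, p, a in stumps)
    return 1 if score >= 0.5 * sum(a for _, _, _, a in stumps) else 0


# Toy data: only feature 1 separates the classes with a single threshold.
X = [[5, 1, 3], [2, 2, 7], [6, 8, 4], [1, 9, 6]]
y = [0, 0, 1, 1]
stumps = adaboost(X, y, rounds=1)
predictions = [strong_predict(stumps, row) for row in X]
```

On this toy data the first round selects feature index 1, since it is the only feature that a single threshold can use to separate the two classes.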

3. Achieving real-time performance through a cascade of classifiers, in which simple early stages reject most non-face regions before more complex stages are applied
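The cascade's early-rejection logic can be sketched as a loop that stops at the first stage to say "not a face". The stages below are toy placeholders invented for illustration (a brightness check, a contrast check, and an arbitrary comparison), not trained classifiers, and `run_cascade` is a hypothetical name.

```python
from typing import Callable, List, Sequence, Tuple

Stage = Callable[[Sequence[float]], bool]


def run_cascade(stages: List[Stage], window: Sequence[float]) -> Tuple[bool, int]:
    """Return (accepted, number of stages evaluated) for one sub-window."""
    for depth, stage in enumerate(stages, start=1):
        if not stage(window):
            return False, depth  # rejected early; later stages never run
    return True, len(stages)


# Hypothetical stages, ordered from cheapest to most specific.
stages: List[Stage] = [
    lambda w: sum(w) / len(w) > 50,    # toy brightness check
    lambda w: max(w) - min(w) > 40,    # toy contrast check
    lambda w: w[0] < w[len(w) // 2],   # toy stand-in for a costlier test
]

dark = run_cascade(stages, [10, 12, 11, 9])
flat = run_cascade(stages, [100, 100, 100, 100])
candidate = run_cascade(stages, [60, 80, 120, 90])
```

Because most sub-windows in an image contain no face, most of them exit after one or two cheap stages, and only the few promising candidates pay for the full sequence.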