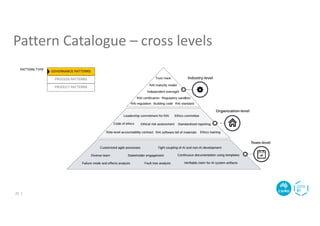

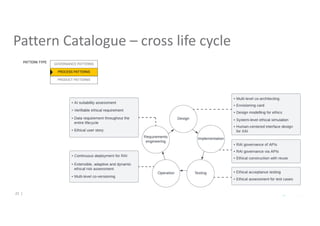

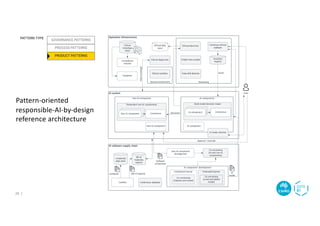

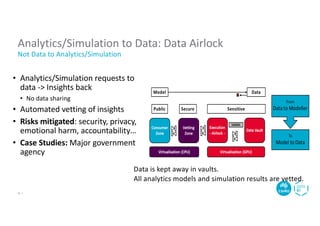

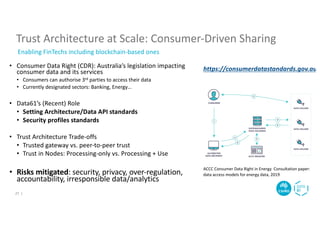

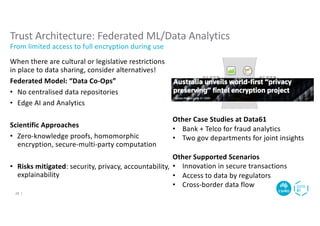

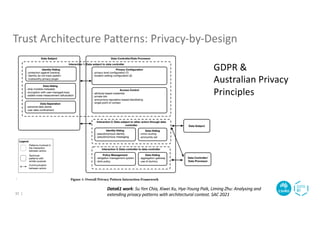

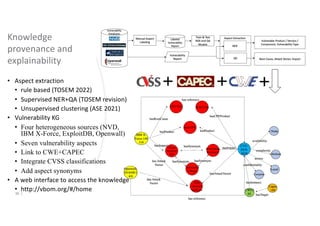

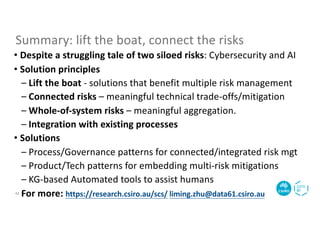

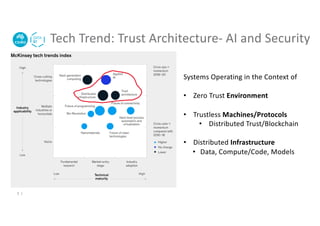

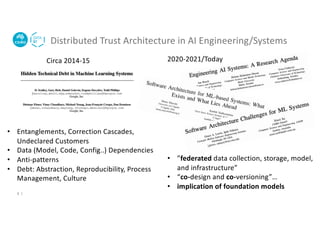

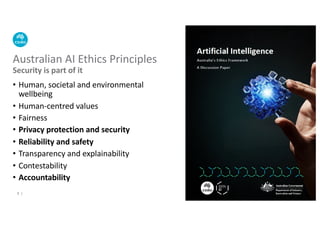

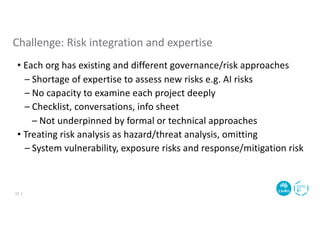

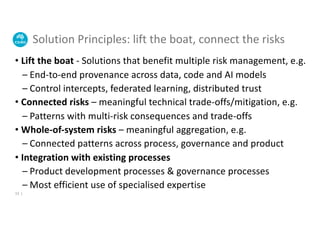

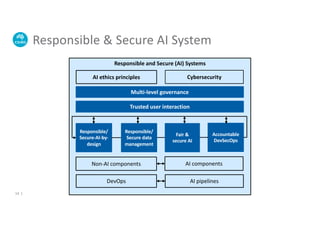

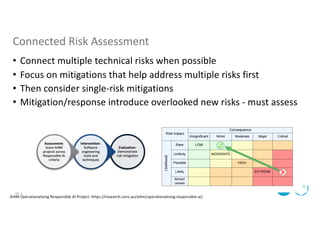

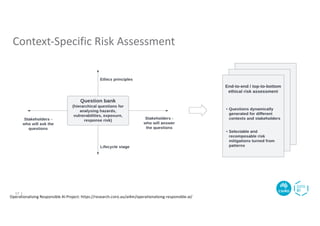

This presentation from CSIRO's Data61, part of Australia's national science agency, discusses the dual challenges of responsible AI and cybersecurity. It emphasizes managing diverse risks through integrated approaches such as federated learning and trust architecture, and highlights the need for ethical frameworks and regulatory compliance. It also presents techniques and tools for data sharing and risk mitigation, illustrating how collaboration and innovation can make AI systems more trustworthy and secure.

![Pattern Catalogue – cross aspects

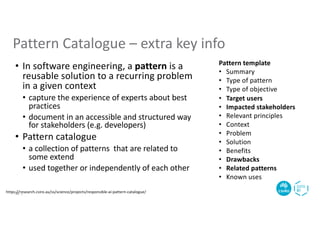

[1] https://research.csiro.au/ss/science/projects/responsible-ai-pattern-catalogue/

20 |](https://image.slidesharecdn.com/aicss22-keynote-20221122-linkedin-230304114212-c4eeef9b/85/Responsible-AI-Cybersecurity-A-tale-of-two-technology-risks-20-320.jpg)