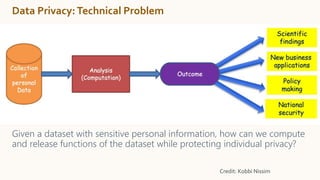

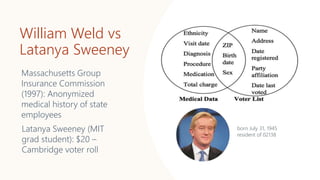

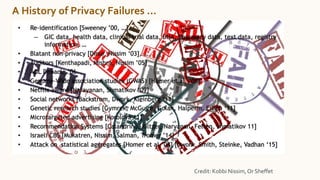

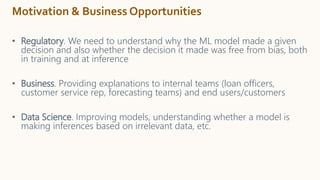

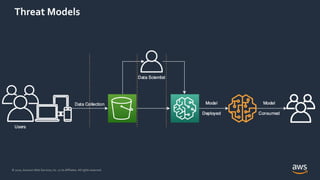

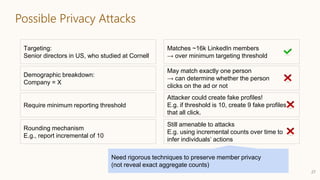

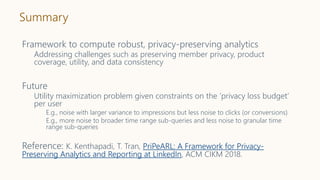

The document discusses privacy in AI/ML systems. It distinguishes data privacy from data security and stresses that analytics must preserve individual privacy. It presents practical challenges and lessons learned, frameworks such as 'PriPeARL' for privacy-preserving analytics, and approaches to bias in machine learning models. It also outlines regulatory motivations, ethical considerations, and the need for rigorous privacy techniques as the data landscape evolves.

![What is Privacy?](https://image.slidesharecdn.com/202011-privacyinaimlsystems-kenthapadi-201118203424/85/Privacy-in-AI-ML-Systems-Practical-Challenges-and-Lessons-Learned-2-320.jpg)

• Right of/to privacy

• “Right to be let alone” [L. Brandeis & S. Warren, 1890]

• “No one shall be subjected to arbitrary interference with [their] privacy, family, home or correspondence, nor to attacks upon [their] honor and reputation.” [The United Nations Universal Declaration of Human Rights]

• “The right of a person to be free from intrusion into or publicity concerning matters of a personal nature” [Merriam-Webster]

• “The right not to have one's personal matters disclosed or publicized; the right to be left alone” [Nolo’s Plain-English Law Dictionary]

![Data Privacy (or Information Privacy)](https://image.slidesharecdn.com/202011-privacyinaimlsystems-kenthapadi-201118203424/85/Privacy-in-AI-ML-Systems-Practical-Challenges-and-Lessons-Learned-3-320.jpg)

• “The right to have some control over how your personal information is collected and used” [IAPP]

• “Privacy has fast-emerged as perhaps the most significant consumer protection issue, if not citizen protection issue, in the global information economy” [IAPP]

![Differential Privacy](https://image.slidesharecdn.com/202011-privacyinaimlsystems-kenthapadi-201118203424/85/Privacy-in-AI-ML-Systems-Practical-Challenges-and-Lessons-Learned-31-320.jpg)

Databases D and D′ are neighbors if they differ in one person’s data.

Differential Privacy: The distribution of the curator’s output M(D) on database D is (nearly) the same as M(D′).

[Figure: the curator's output on the database with your data (+ your data) next to its output on the database without your data (- your data).]

Dwork, McSherry, Nissim, Smith [TCC 2006]

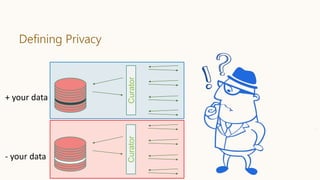

![Differential Privacy](https://image.slidesharecdn.com/202011-privacyinaimlsystems-kenthapadi-201118203424/85/Privacy-in-AI-ML-Systems-Practical-Challenges-and-Lessons-Learned-32-320.jpg)

(ε, δ)-Differential Privacy: The distribution of the curator’s output M(D) on database D is (nearly) the same as M(D′).

$$\forall S:\quad \Pr[M(D) \in S] \le \exp(\varepsilon) \cdot \Pr[M(D') \in S] + \delta$$

• Parameter ε quantifies information leakage

• Parameter δ gives some slack

[Figure: the curator's output on the database with your data (+ your data) next to its output on the database without your data (- your data).]

Dwork, Kenthapadi, McSherry, Mironov, Naor [EUROCRYPT 2006]

Dwork, McSherry, Nissim, Smith [TCC 2006]
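The (ε, δ) inequality can be checked by brute force when the output space is tiny. The sketch below is an illustration only, not something taken from the slides: it assumes a one-person database holding a single bit, uses randomized response as the mechanism M, and enumerates every output set S. The function names `randomized_response_dist` and `satisfies_dp` are hypothetical.

```python
import math
from itertools import chain, combinations


def randomized_response_dist(bit, epsilon):
    """Output distribution of randomized response on one bit.

    The true bit is reported with probability e^eps / (1 + e^eps),
    the flipped bit otherwise.
    """
    p_truth = math.exp(epsilon) / (1.0 + math.exp(epsilon))
    return {bit: p_truth, 1 - bit: 1.0 - p_truth}


def _one_direction(dist_a, dist_b, epsilon, delta, tol):
    """Check Pr[A in S] <= exp(eps) * Pr[B in S] + delta for every set S."""
    outcomes = sorted(set(dist_a) | set(dist_b))
    all_subsets = chain.from_iterable(
        combinations(outcomes, r) for r in range(len(outcomes) + 1)
    )
    for subset in all_subsets:
        p = sum(dist_a.get(o, 0.0) for o in subset)
        q = sum(dist_b.get(o, 0.0) for o in subset)
        if p > math.exp(epsilon) * q + delta + tol:
            return False
    return True


def satisfies_dp(dist_d, dist_d_prime, epsilon, delta=0.0, tol=1e-12):
    """Check the (eps, delta) inequality in both directions (D vs. D' and D' vs. D)."""
    return (_one_direction(dist_d, dist_d_prime, epsilon, delta, tol)
            and _one_direction(dist_d_prime, dist_d, epsilon, delta, tol))


# Neighboring one-person databases: the person's bit is 0 in D and 1 in D'.
dist_d = randomized_response_dist(0, epsilon=1.0)
dist_d_prime = randomized_response_dist(1, epsilon=1.0)
print(satisfies_dp(dist_d, dist_d_prime, epsilon=1.0))             # holds for eps = 1
print(satisfies_dp(dist_d, dist_d_prime, epsilon=0.5))             # fails for a smaller eps
print(satisfies_dp(dist_d, dist_d_prime, epsilon=0.5, delta=0.3))  # slack delta restores it
```

Enumerating all subsets is only feasible for toy output spaces; it is used here to make the roles of ε (multiplicative bound) and δ (additive slack) concrete.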

![Differential Privacy: Random Noise Addition](https://image.slidesharecdn.com/202011-privacyinaimlsystems-kenthapadi-201118203424/85/Privacy-in-AI-ML-Systems-Practical-Challenges-and-Lessons-Learned-33-320.jpg)

If the ℓ1-sensitivity of $f : D \to \mathbb{R}^n$ is

$$\max_{D, D'} \|f(D) - f(D')\|_1 = s,$$

then adding Laplacian noise to the true output,

$$f(D) + \mathrm{Laplace}^n(s/\varepsilon),$$

offers (ε, 0)-differential privacy.

Dwork, McSherry, Nissim, Smith [TCC 2006]
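A minimal sketch of this noise-addition step, assuming the caller already knows the ℓ1-sensitivity s of the query over neighboring databases. The names `laplace_noise` and `laplace_mechanism` and the example counts are hypothetical; the sampler uses only the standard library and relies on the fact that the difference of two i.i.d. exponential variables is Laplace-distributed.

```python
import random


def laplace_noise(scale, rng):
    """Draw one sample from Laplace(0, scale).

    The difference of two i.i.d. exponentials with mean `scale`
    follows a Laplace distribution with that scale.
    """
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def laplace_mechanism(true_output, l1_sensitivity, epsilon, rng=None):
    """Return f(D) plus independent Laplace(s / eps) noise per coordinate."""
    if l1_sensitivity <= 0 or epsilon <= 0:
        raise ValueError("l1_sensitivity and epsilon must be positive")
    rng = rng or random.Random()
    scale = l1_sensitivity / epsilon
    return [value + laplace_noise(scale, rng) for value in true_output]


# Illustrative query: two counts, each of which changes by at most 1 when one
# person's data changes, so the l1-sensitivity is assumed to be at most 2.
true_counts = [120.0, 45.0]
noisy_counts = laplace_mechanism(true_counts, l1_sensitivity=2.0, epsilon=0.5,
                                 rng=random.Random(7))
print(noisy_counts)
```

A smaller ε means a larger noise scale s/ε, which is the privacy versus accuracy trade-off the mechanism exposes. Production systems typically also need to guard against floating-point side channels, which this sketch does not attempt.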

![Simple but effective, privacy-preserving mechanism](https://image.slidesharecdn.com/202011-privacyinaimlsystems-kenthapadi-201118203424/85/Privacy-in-AI-ML-Systems-Practical-Challenges-and-Lessons-Learned-47-320.jpg)

Task: subsample from dataset using additional information in privacy-preserving way.

Building on existing exponential analysis of k-anonymity, amplified by sampling…

Mechanism M is (β, ε, δ)-differentially private

Model uncertainty via Bayesian NN

“Privacy-preserving Active Learning on Sensitive Data for User Intent Classification” [Feyisetan, Balle, Diethe, Drake; PAL 2019]

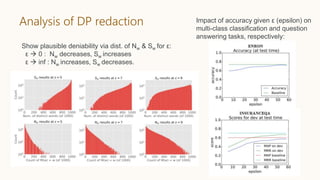

![Differentially-private text redaction](https://image.slidesharecdn.com/202011-privacyinaimlsystems-kenthapadi-201118203424/85/Privacy-in-AI-ML-Systems-Practical-Challenges-and-Lessons-Learned-48-320.jpg)

Task: automatically redact sensitive text for privatizing various ML models.

Perturb sentences but maintain meaning

e.g. “goalie wore a hockey helmet” → “keeper wear the nhl hat”

Apply metric DP and analysis of word embeddings to scramble sentences

Mechanism M is $d_\chi$-differentially private

Establish plausible deniability statistics:

$N_w := \Pr[M(w) = w]$

$S_w :=$ expected number of words output by $M(w)$

“Privacy- and Utility-Preserving Textual Analysis via Calibrated Multivariate Perturbations” [Feyisetan, Drake, Diethe, Balle; WSDM 2020]
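The word-perturbation idea can be sketched on a toy vocabulary. Everything below is an assumption made for illustration: the 2-D embeddings are invented, the noise has density proportional to exp(-ε‖z‖) (a uniform direction with a Gamma-distributed radius), the noisy vector is mapped back to its nearest word, and S_w is estimated as the number of distinct words observed over repeated runs. It is not the authors' implementation.

```python
import math
import random
from collections import Counter

# Hypothetical 2-D word embeddings, invented for this sketch.
TOY_EMBEDDINGS = {
    "goalie": (1.0, 0.9),
    "keeper": (1.1, 1.0),
    "helmet": (-1.0, 0.8),
    "hat": (-0.9, 1.0),
    "hockey": (0.1, -1.0),
    "nhl": (0.2, -1.1),
}


def sample_noise(dim, epsilon, rng):
    """Sample z with density proportional to exp(-epsilon * ||z||)."""
    direction = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    norm = math.sqrt(sum(x * x for x in direction))
    radius = rng.gammavariate(dim, 1.0 / epsilon)  # shape = dim, scale = 1 / epsilon
    return [radius * x / norm for x in direction]


def perturb_word(word, epsilon, rng, embeddings=TOY_EMBEDDINGS):
    """Add noise to the word's embedding and return the nearest vocabulary word."""
    vector = embeddings[word]
    noise = sample_noise(len(vector), epsilon, rng)
    noisy = [v + z for v, z in zip(vector, noise)]
    return min(embeddings, key=lambda w: math.dist(noisy, embeddings[w]))


def plausible_deniability_stats(word, epsilon, trials, rng):
    """Empirical N_w (fraction of runs returning `word`) and S_w (distinct outputs seen)."""
    outputs = Counter(perturb_word(word, epsilon, rng) for _ in range(trials))
    return outputs[word] / trials, len(outputs)


rng = random.Random(0)
for eps in (0.5, 5.0, 50.0):
    n_w, s_w = plausible_deniability_stats("goalie", eps, trials=2000, rng=rng)
    print(f"epsilon={eps}: N_w={n_w:.2f}, S_w={s_w}")
```

In this sketch a lower ε should give a lower N_w and a higher S_w, meaning more plausible deniability for the original word at the cost of more semantic drift.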

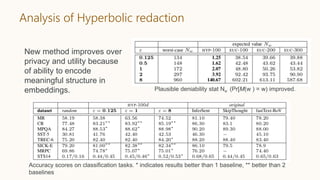

![Improving data utility of DP text redaction](https://image.slidesharecdn.com/202011-privacyinaimlsystems-kenthapadi-201118203424/85/Privacy-in-AI-ML-Systems-Practical-Challenges-and-Lessons-Learned-50-320.jpg)

Task: redact text, but use additional structured information to better preserve utility.

Can we improve redaction for models that fail for extraneous words?

~Recall-sensitive

Extend $d_\chi$ privacy to hyperbolic embeddings [Tifrea 2018] via

Hyperbolic: utilize high-dimensional geometry to infuse embeddings with graph structure

E.g. uni- or bi-directional syllogisms from WebIsADb

New privacy analysis of Poincaré model and sampling procedure

Mechanism takes advantage of density in data to apply perturbations more precisely.

“Leveraging Hierarchical Representations for Preserving Privacy and Utility in Text” [Feyisetan, Drake, Diethe; ICDM 2019]

[Figures: tiling in the Poincaré disk; hyperbolic GloVe embeddings projected into the B² Poincaré disk.]
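For readers unfamiliar with the Poincaré model, the sketch below computes the standard distance between two points of the open unit ball, which is the kind of metric a hyperbolic variant of metric DP would be defined over. It illustrates the geometry only; the function name `poincare_distance` is hypothetical, and the mechanism and sampling procedure from the cited paper are not reproduced here.

```python
import math


def poincare_distance(u, v):
    """Hyperbolic distance between two points inside the open unit ball.

    d(u, v) = arcosh(1 + 2 * ||u - v||^2 / ((1 - ||u||^2) * (1 - ||v||^2)))
    """
    sq_u = sum(x * x for x in u)
    sq_v = sum(x * x for x in v)
    if sq_u >= 1.0 or sq_v >= 1.0:
        raise ValueError("points must lie strictly inside the unit ball")
    sq_diff = sum((a - b) ** 2 for a, b in zip(u, v))
    return math.acosh(1.0 + 2.0 * sq_diff / ((1.0 - sq_u) * (1.0 - sq_v)))


# The same Euclidean gap (0.1) costs far more hyperbolic distance near the
# boundary of the disk than near its center.
print(poincare_distance((0.0, 0.0), (0.1, 0.0)))
print(poincare_distance((0.85, 0.0), (0.95, 0.0)))
```

This growth of distances toward the boundary is what lets tree-like hierarchies embed with low distortion, with general terms near the center and specific terms near the edge.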