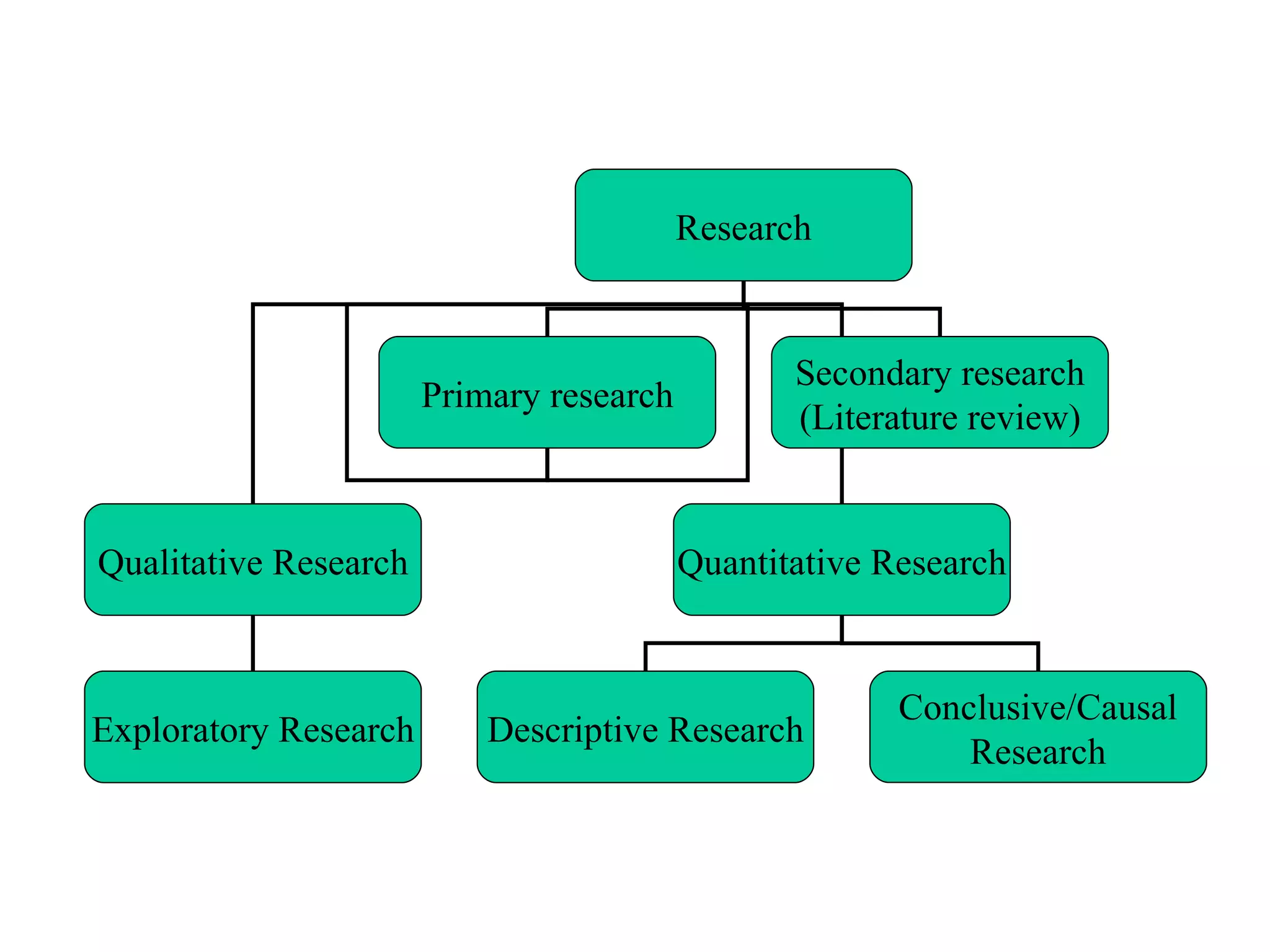

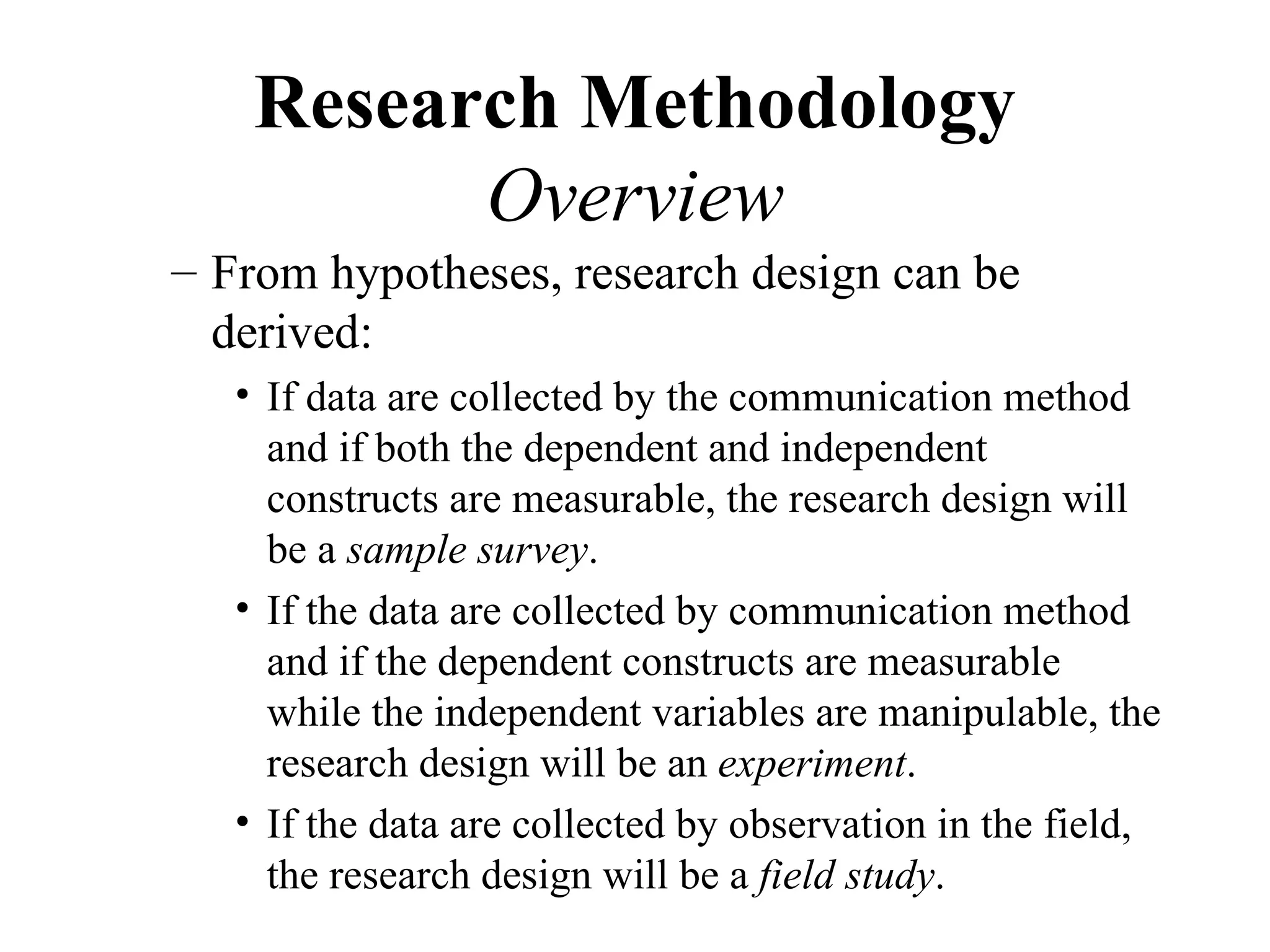

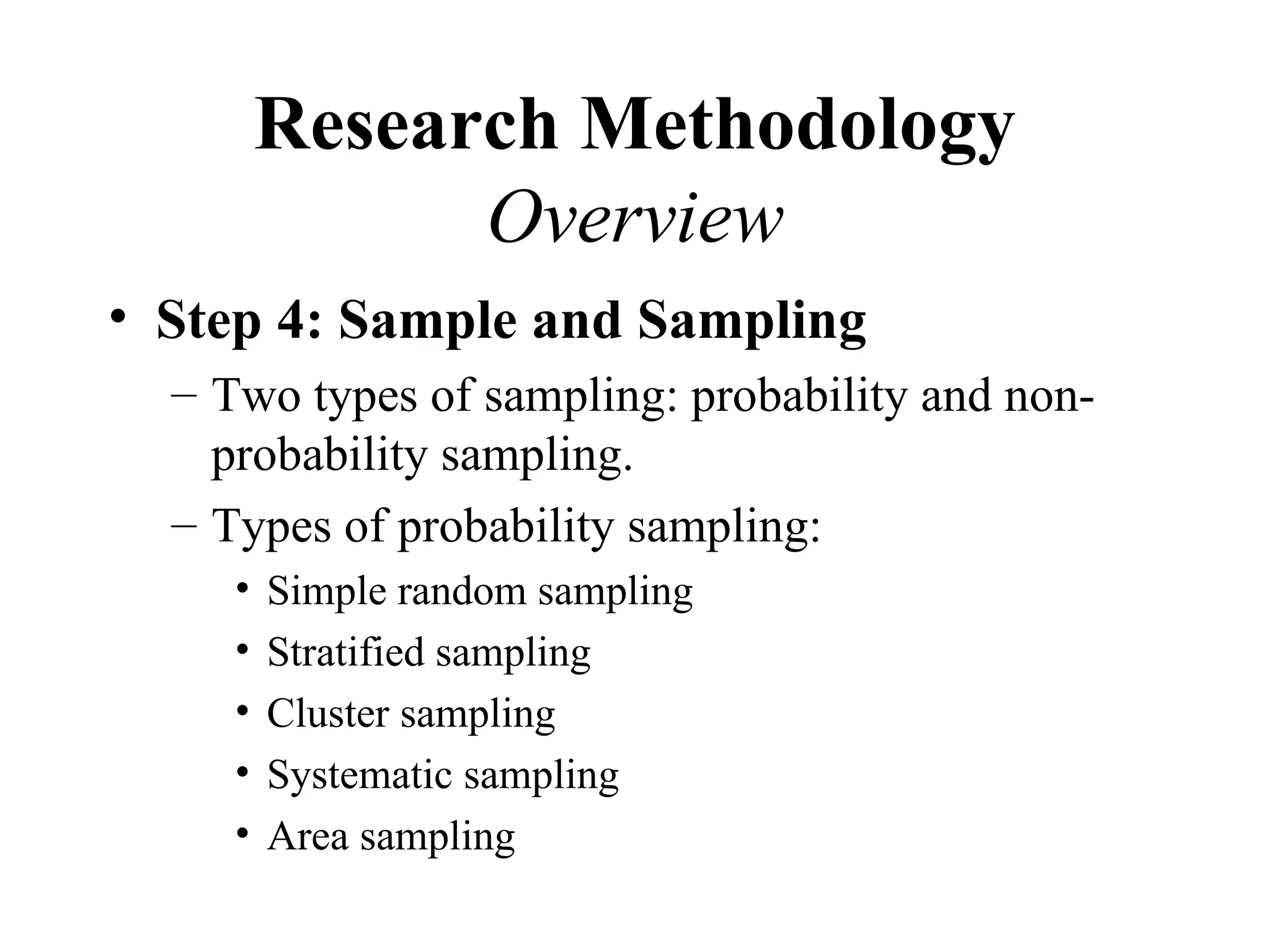

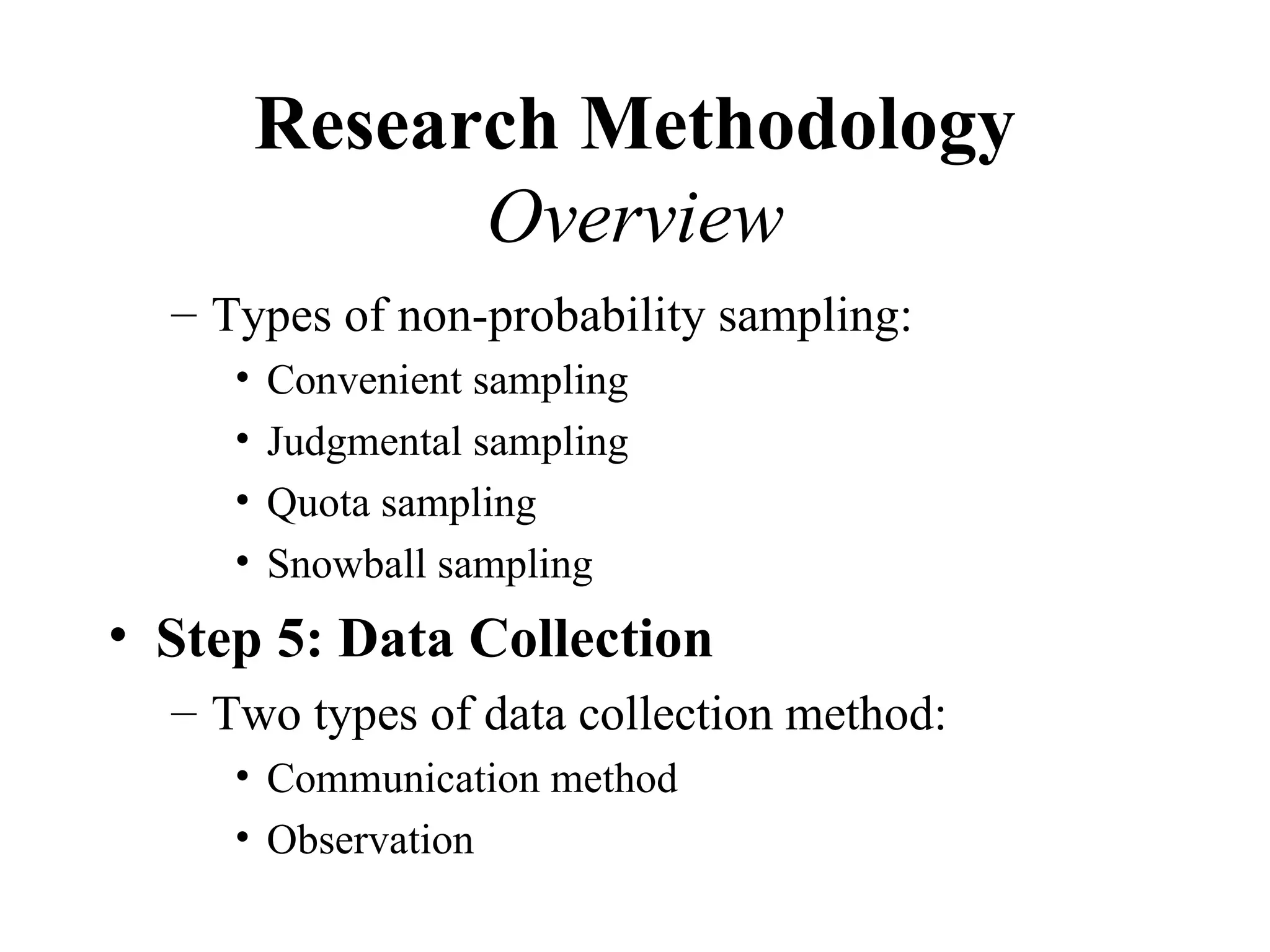

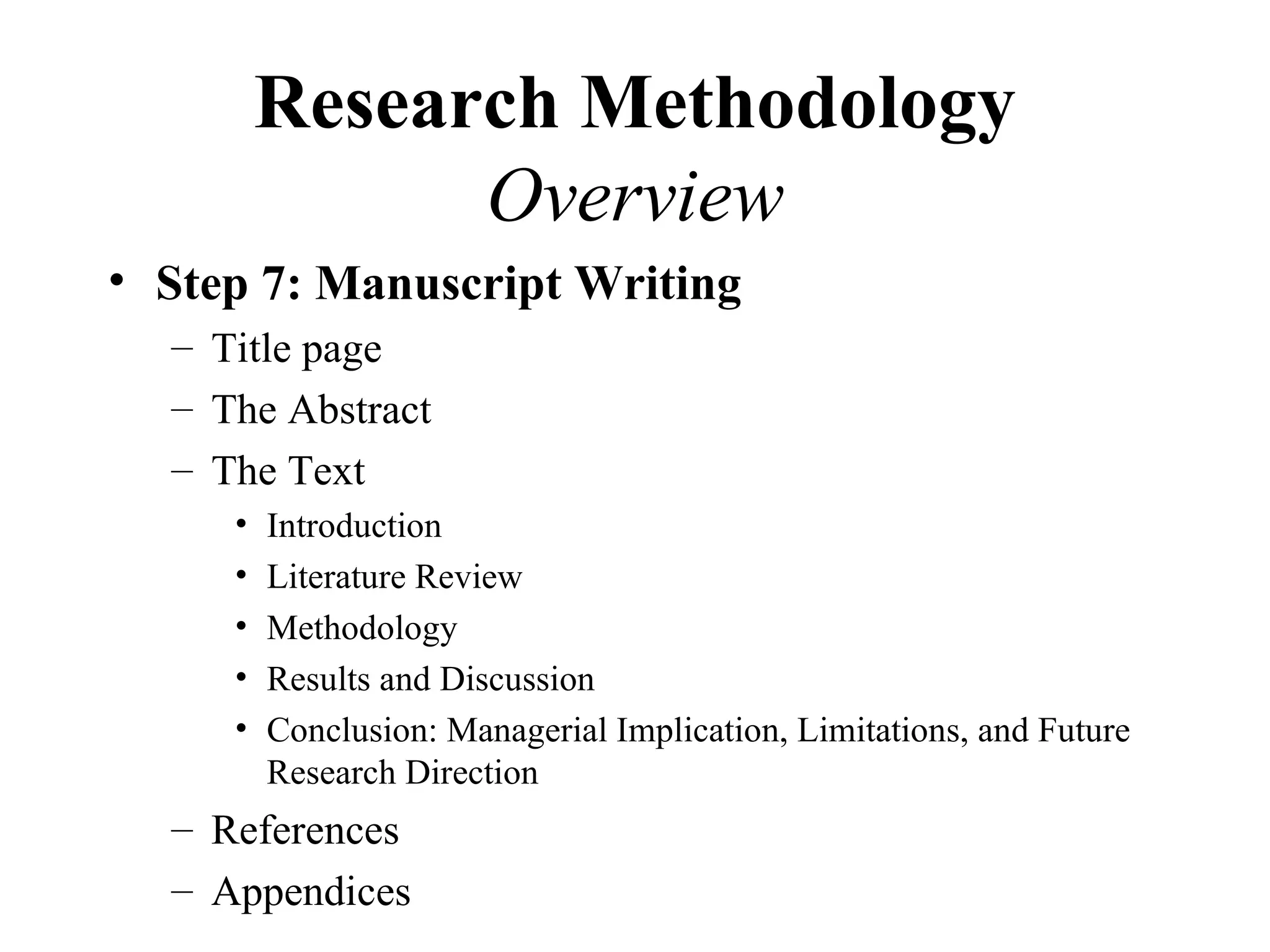

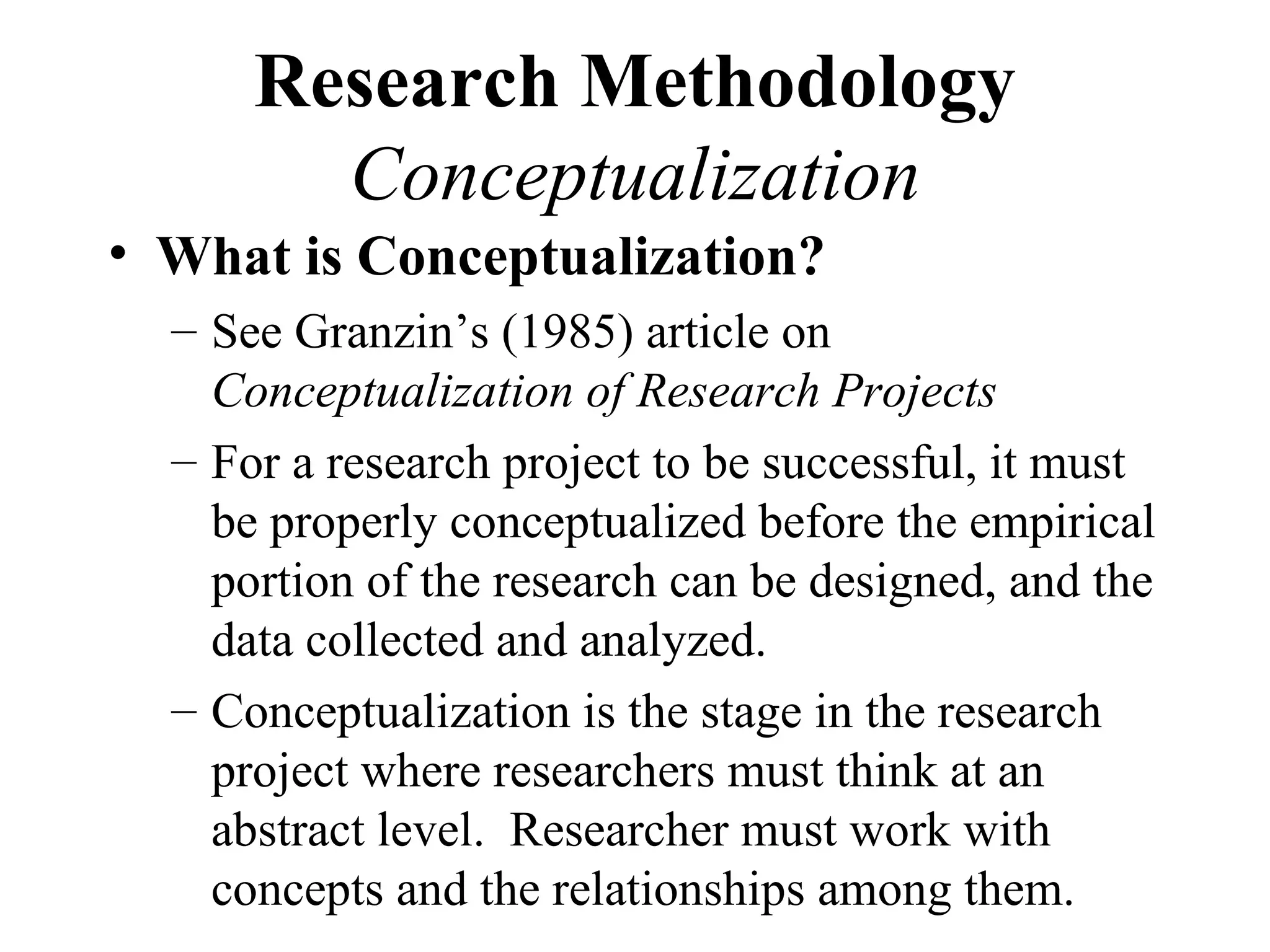

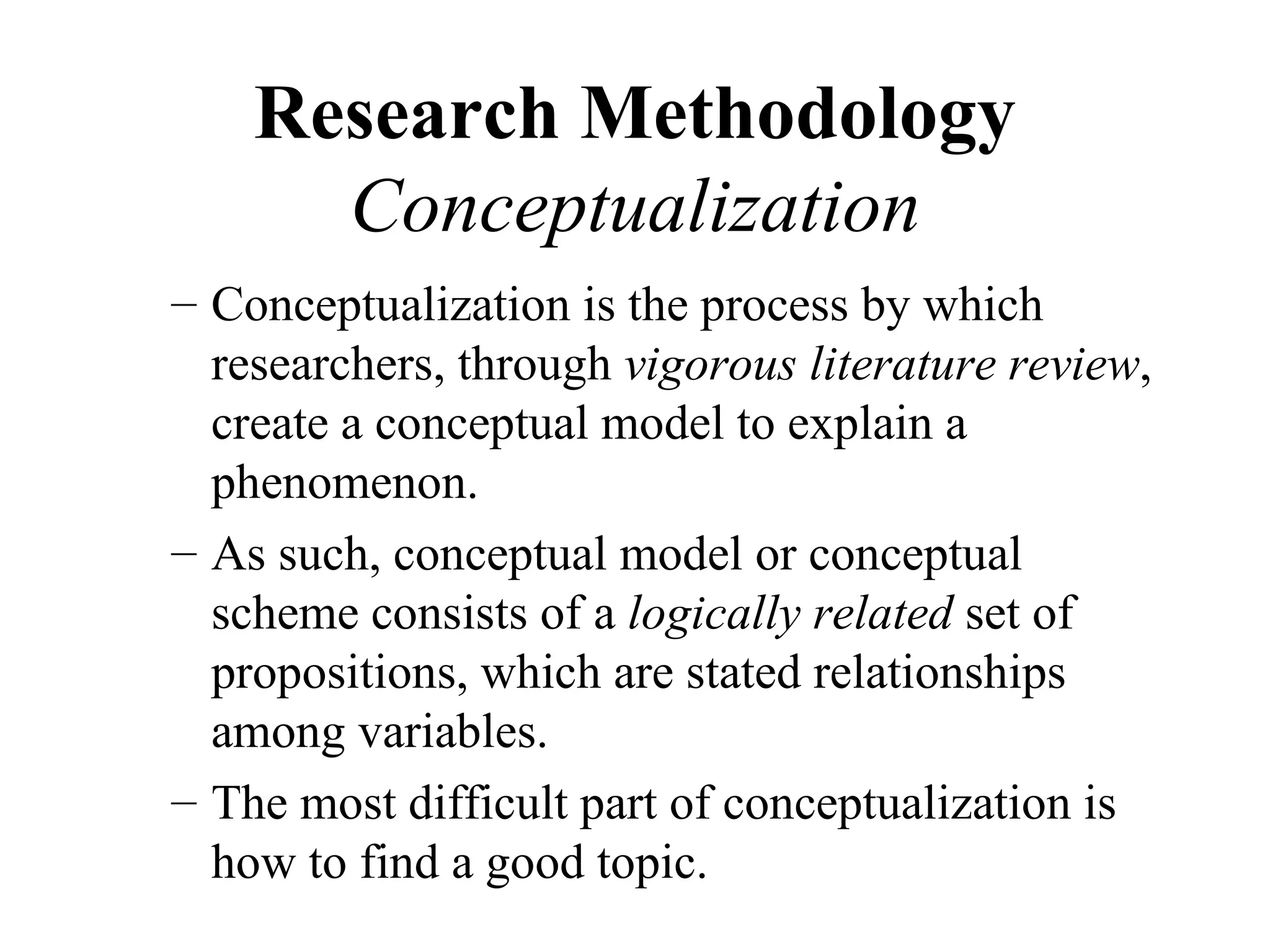

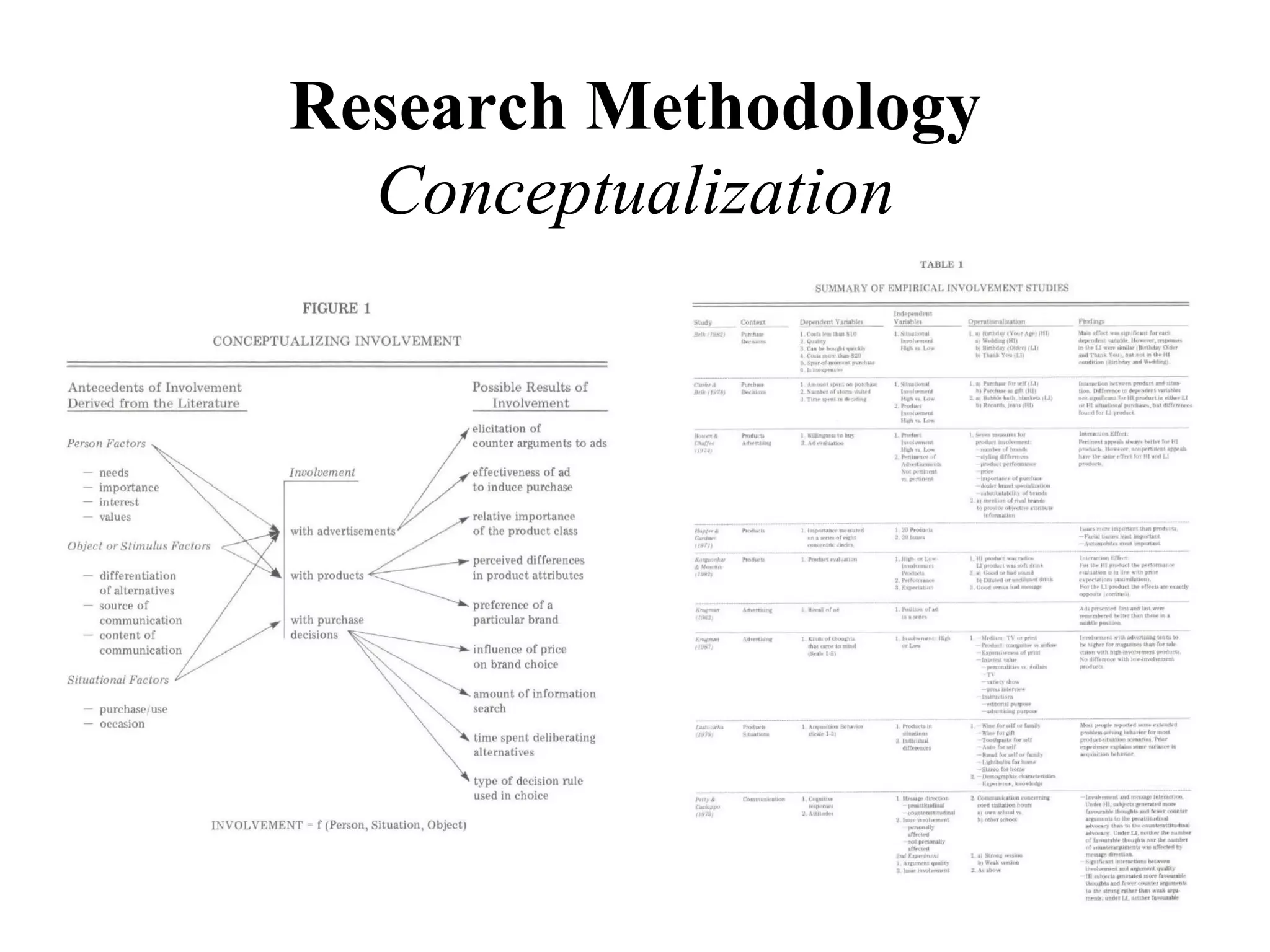

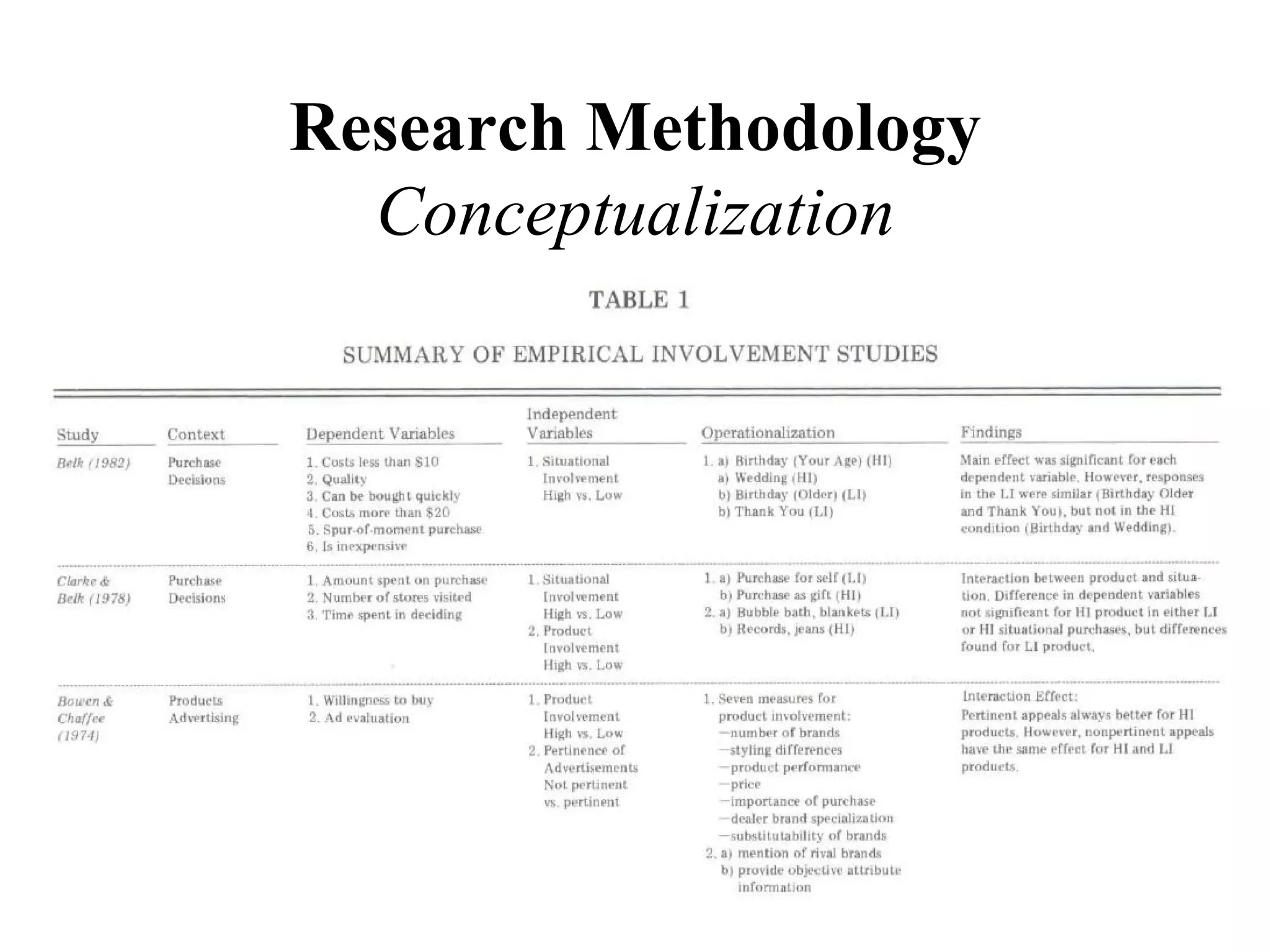

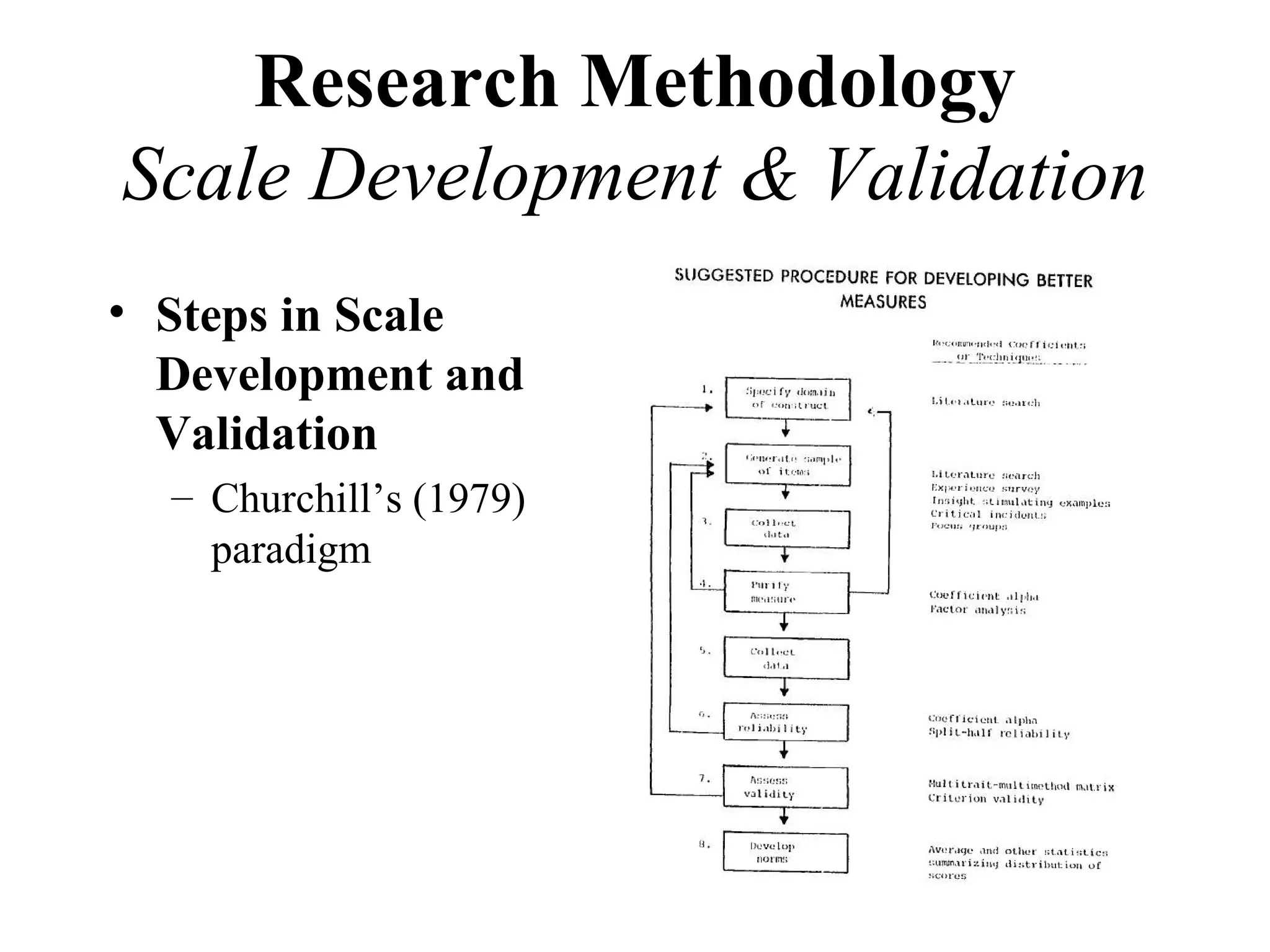

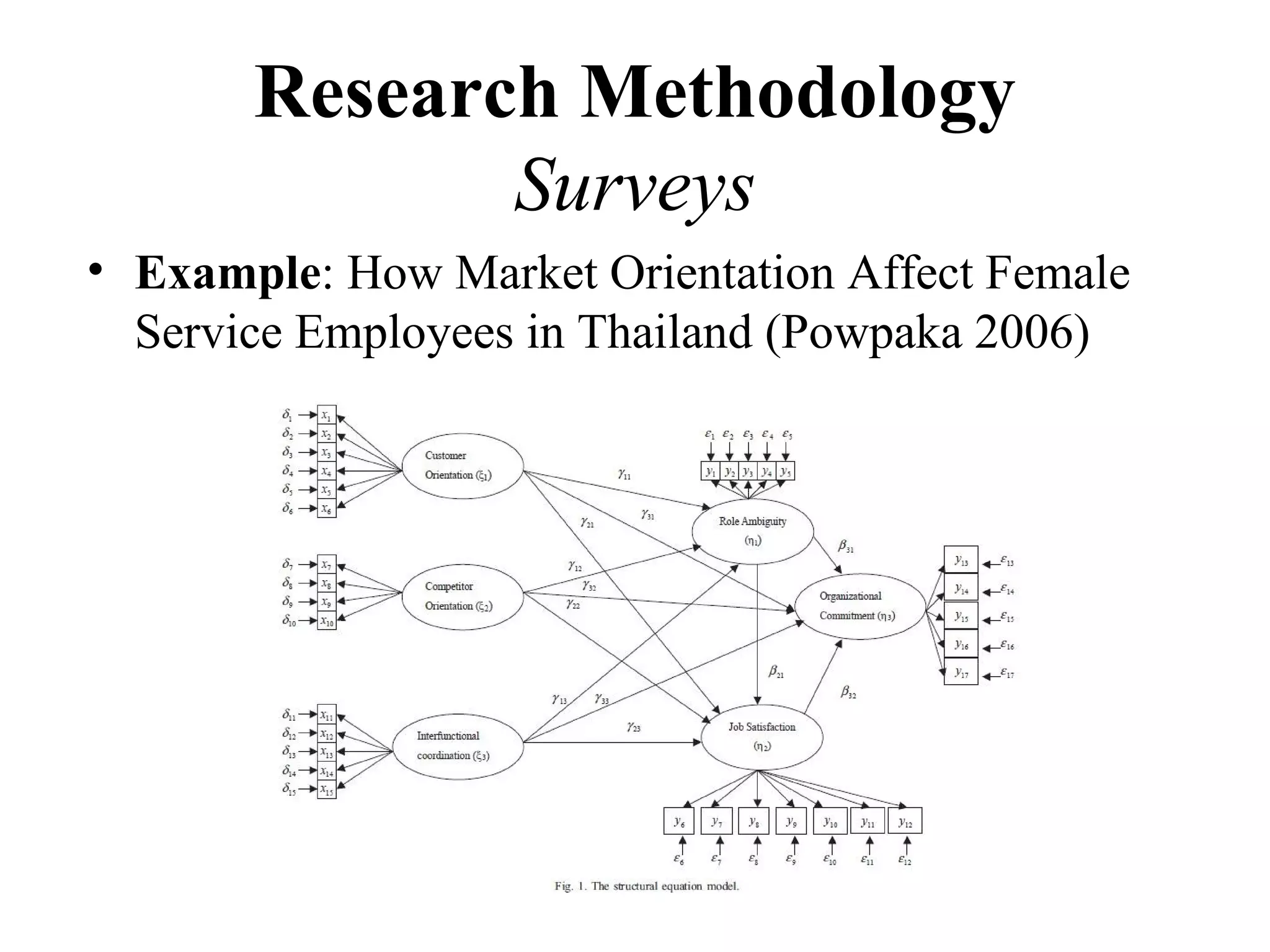

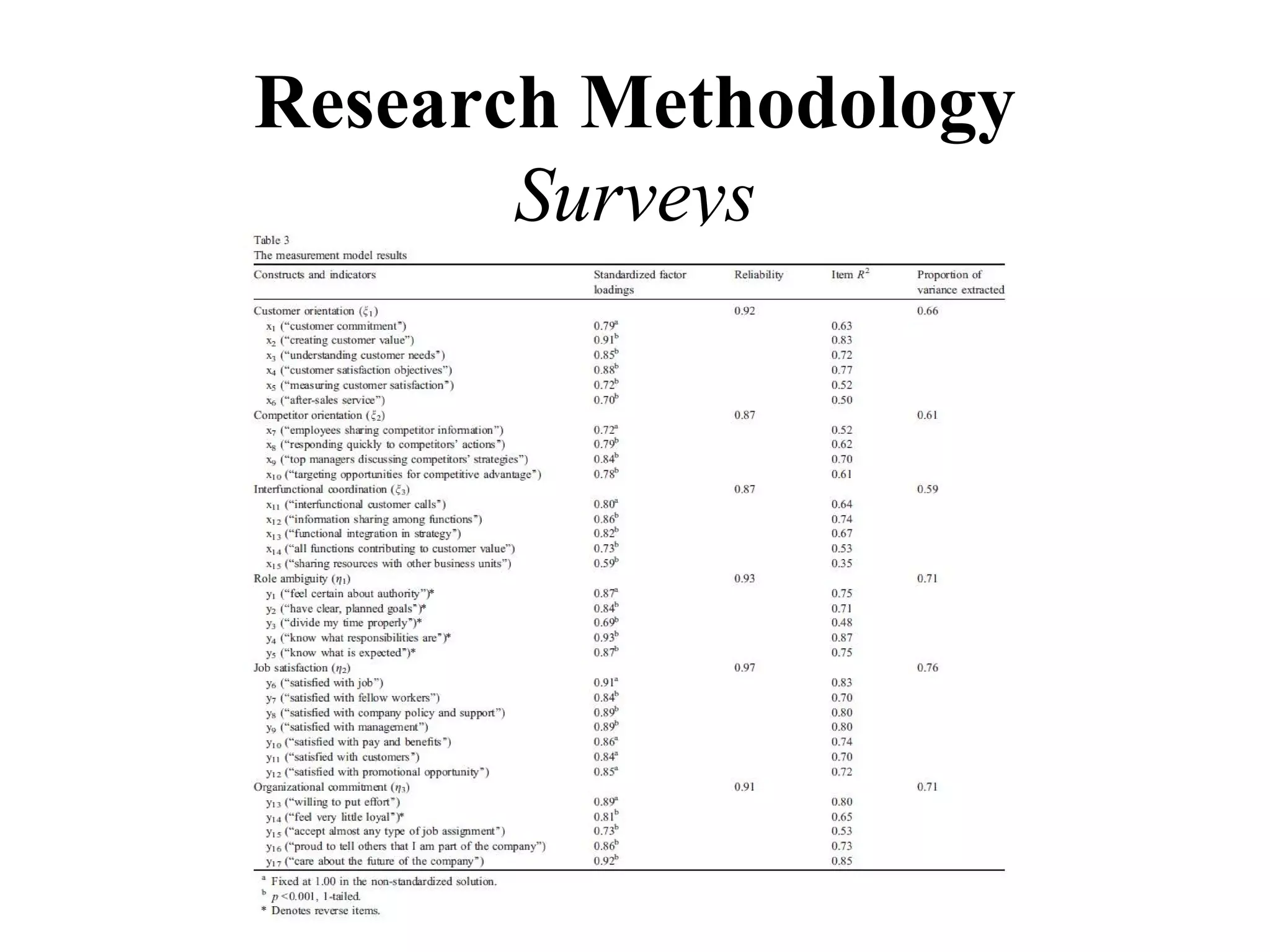

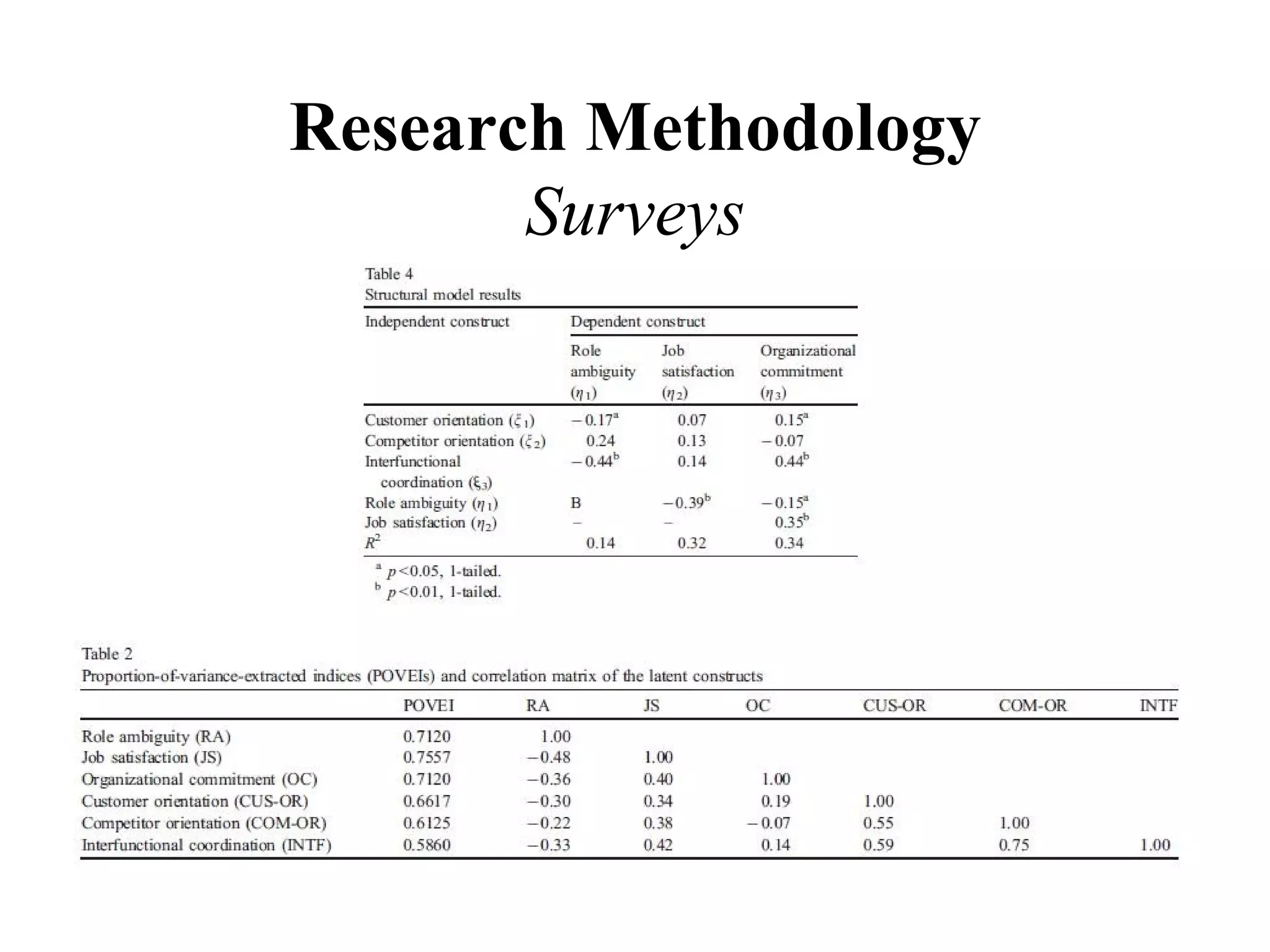

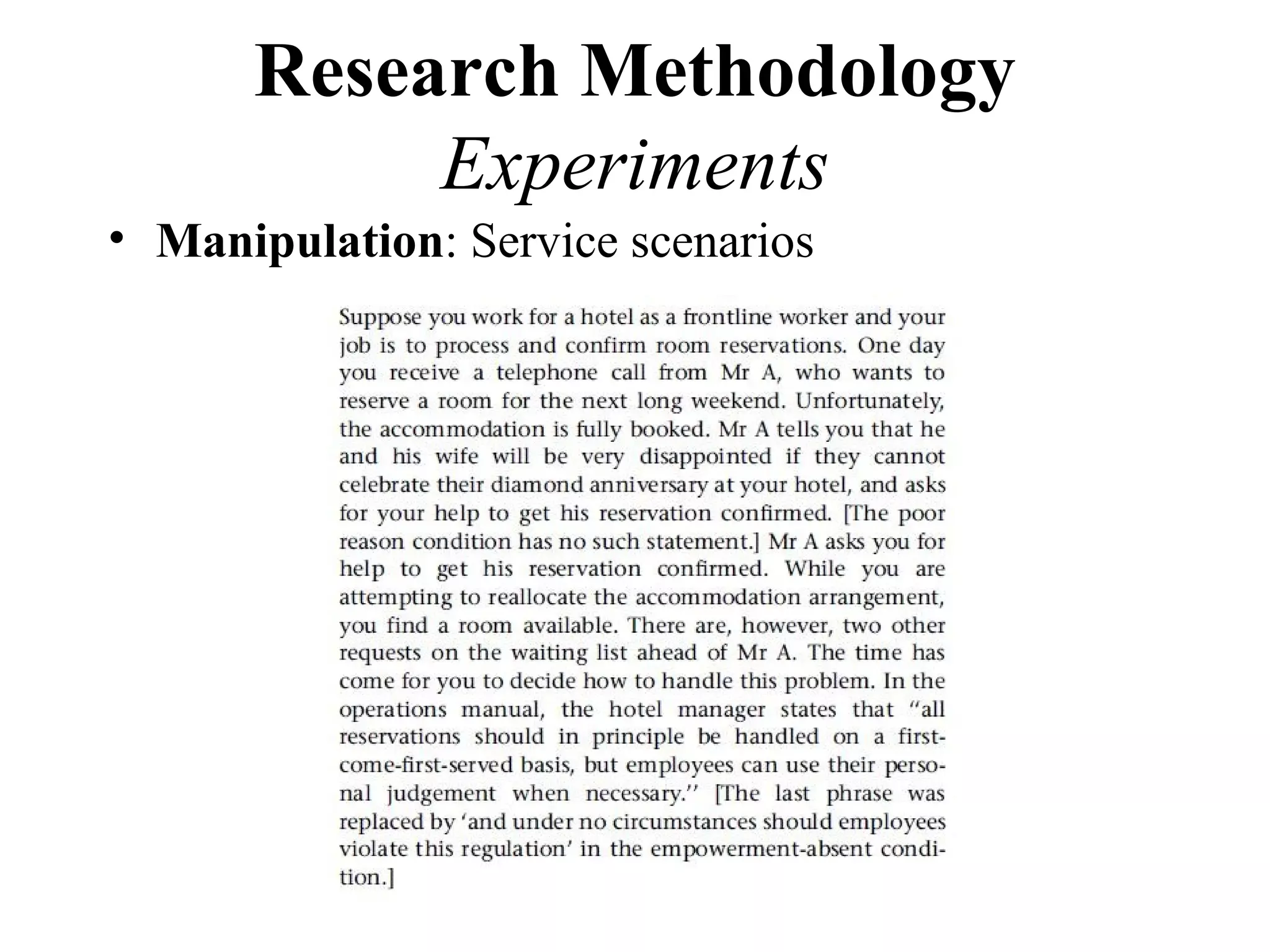

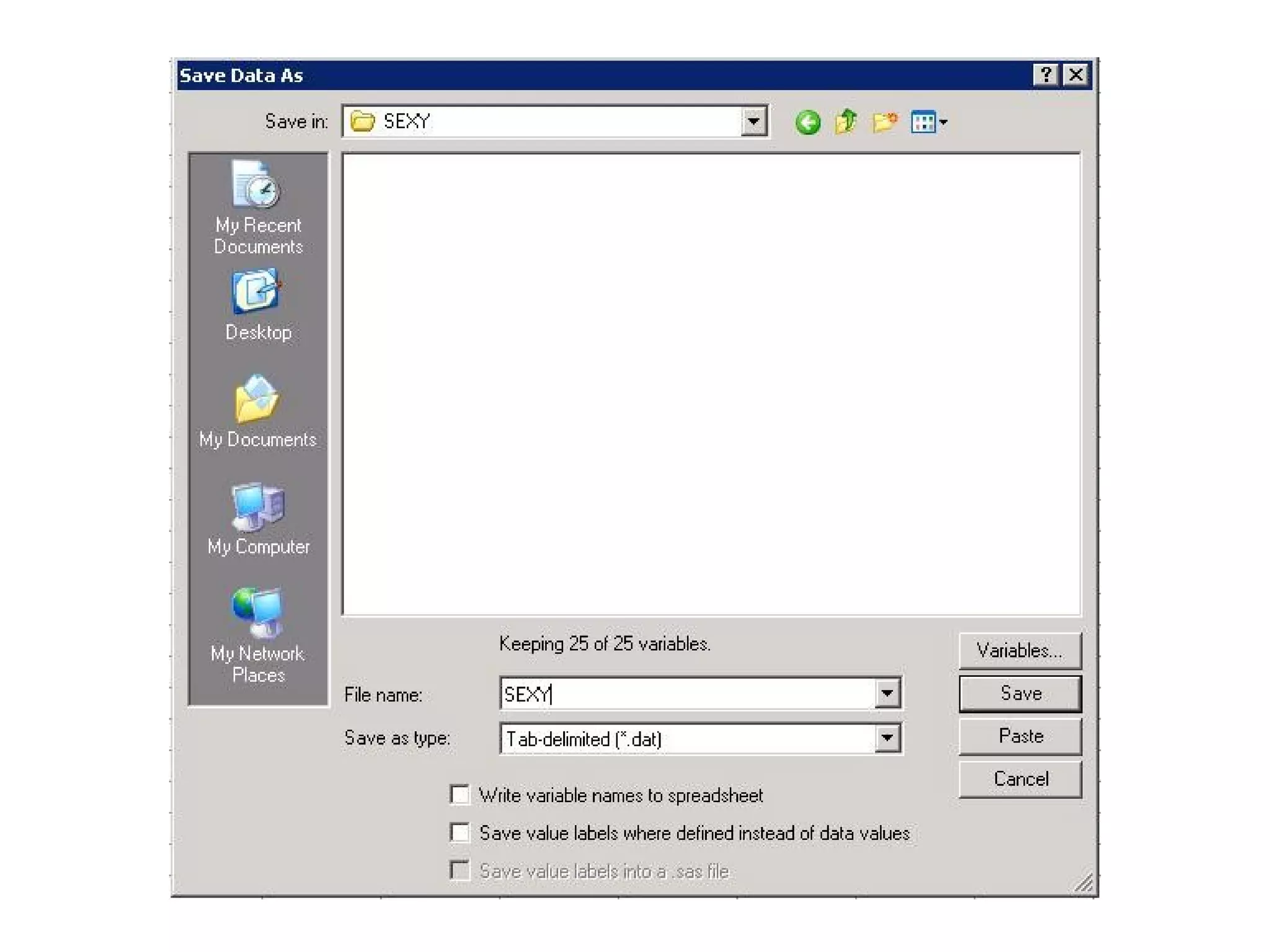

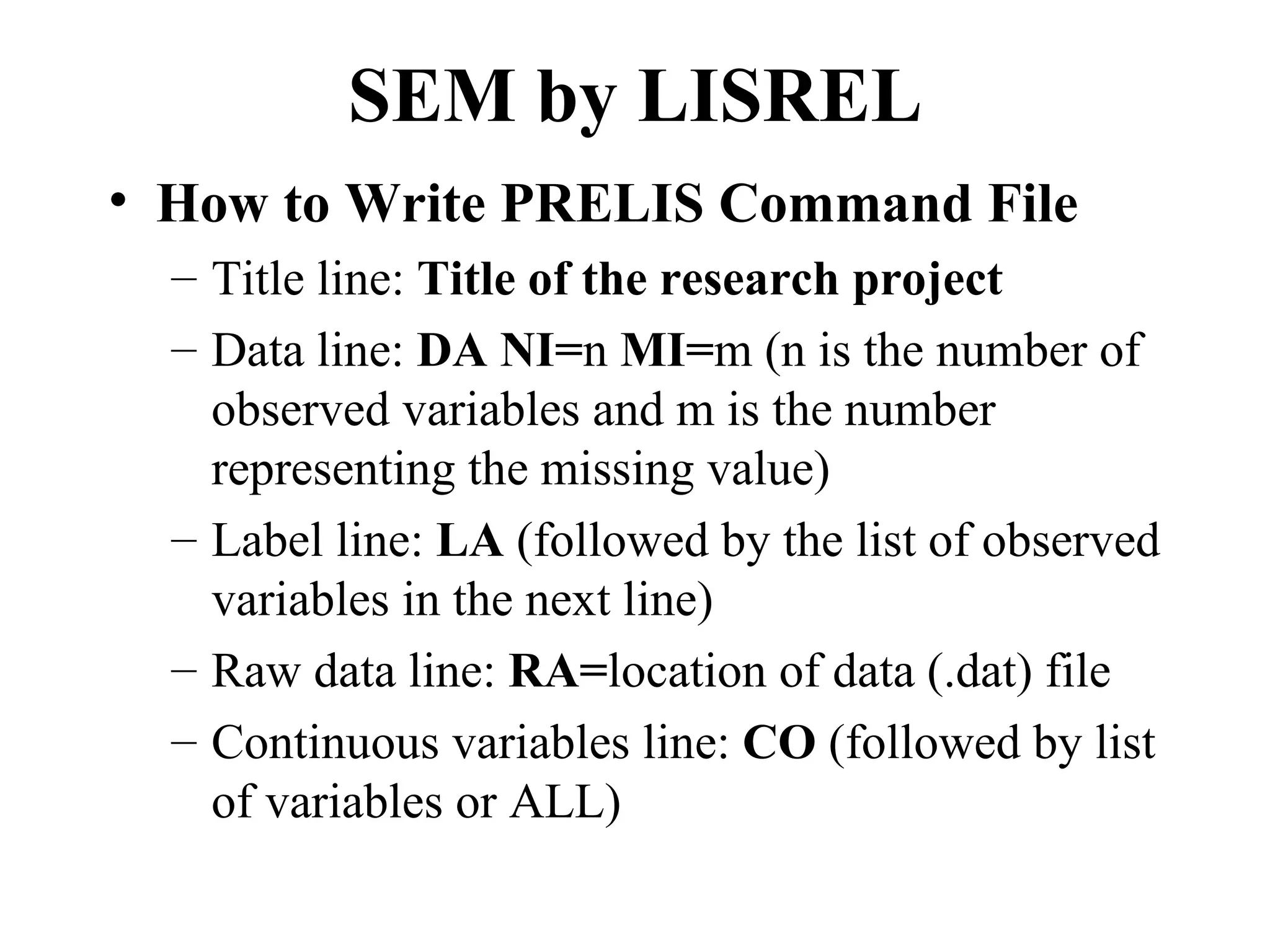

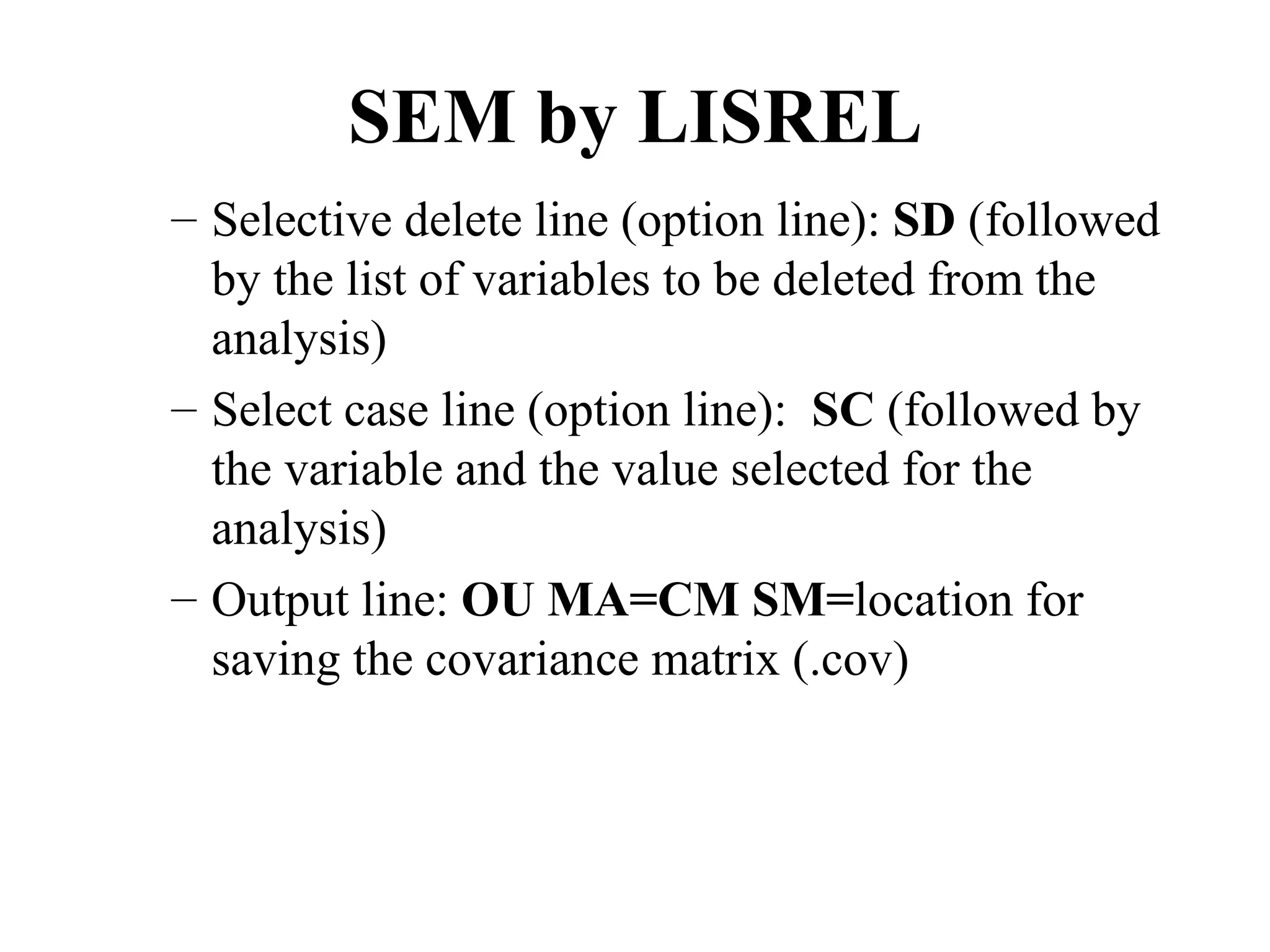

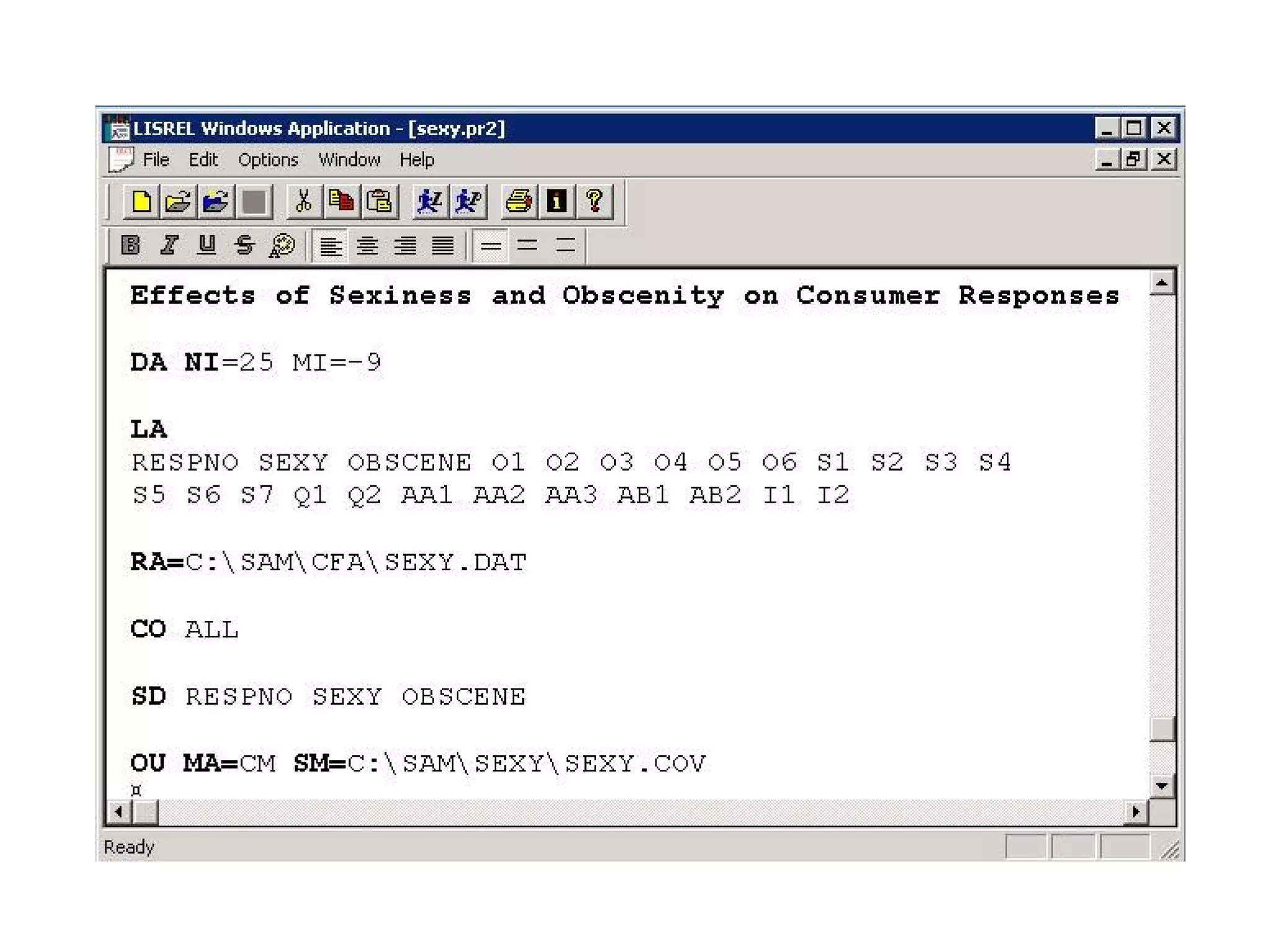

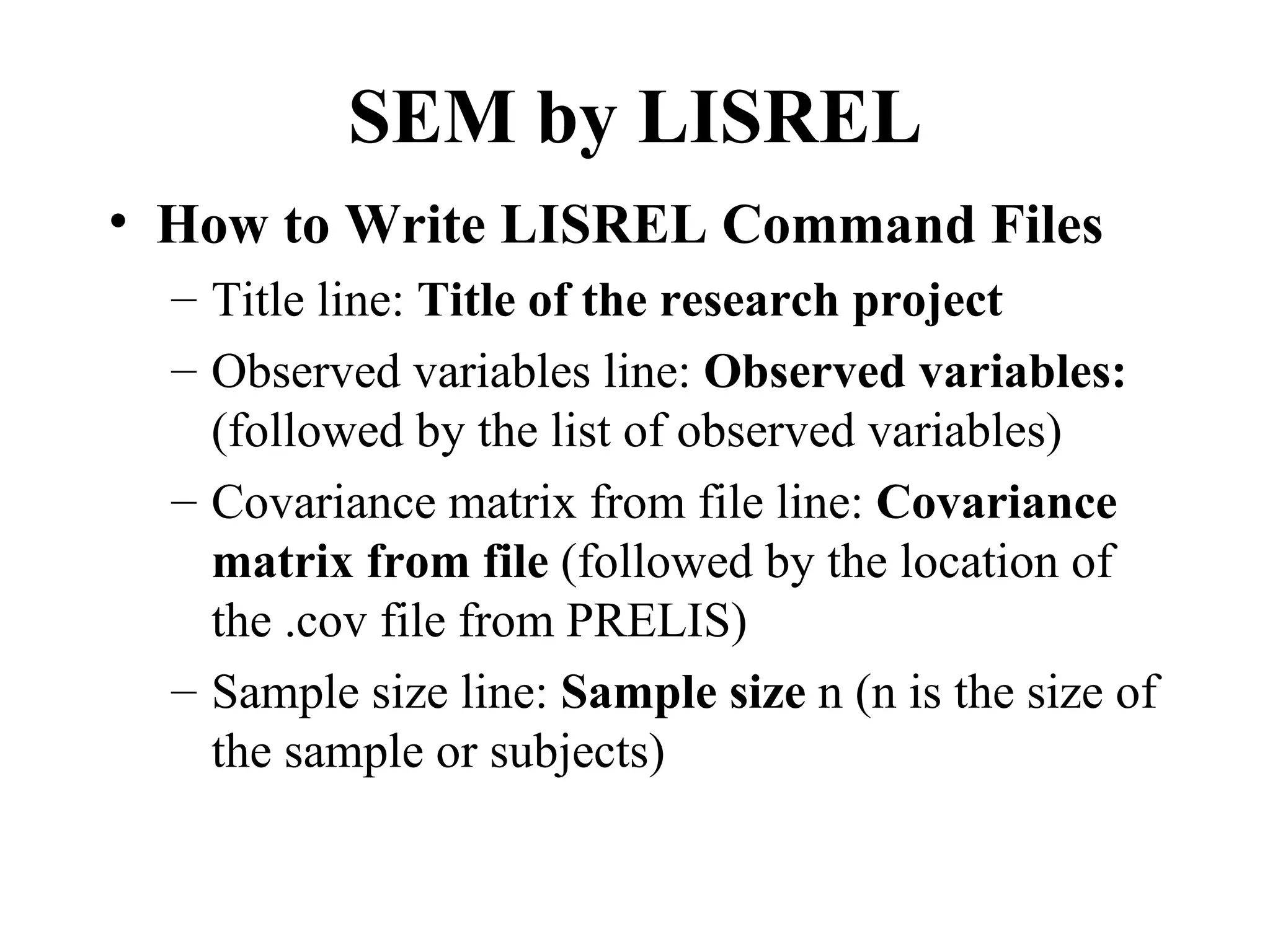

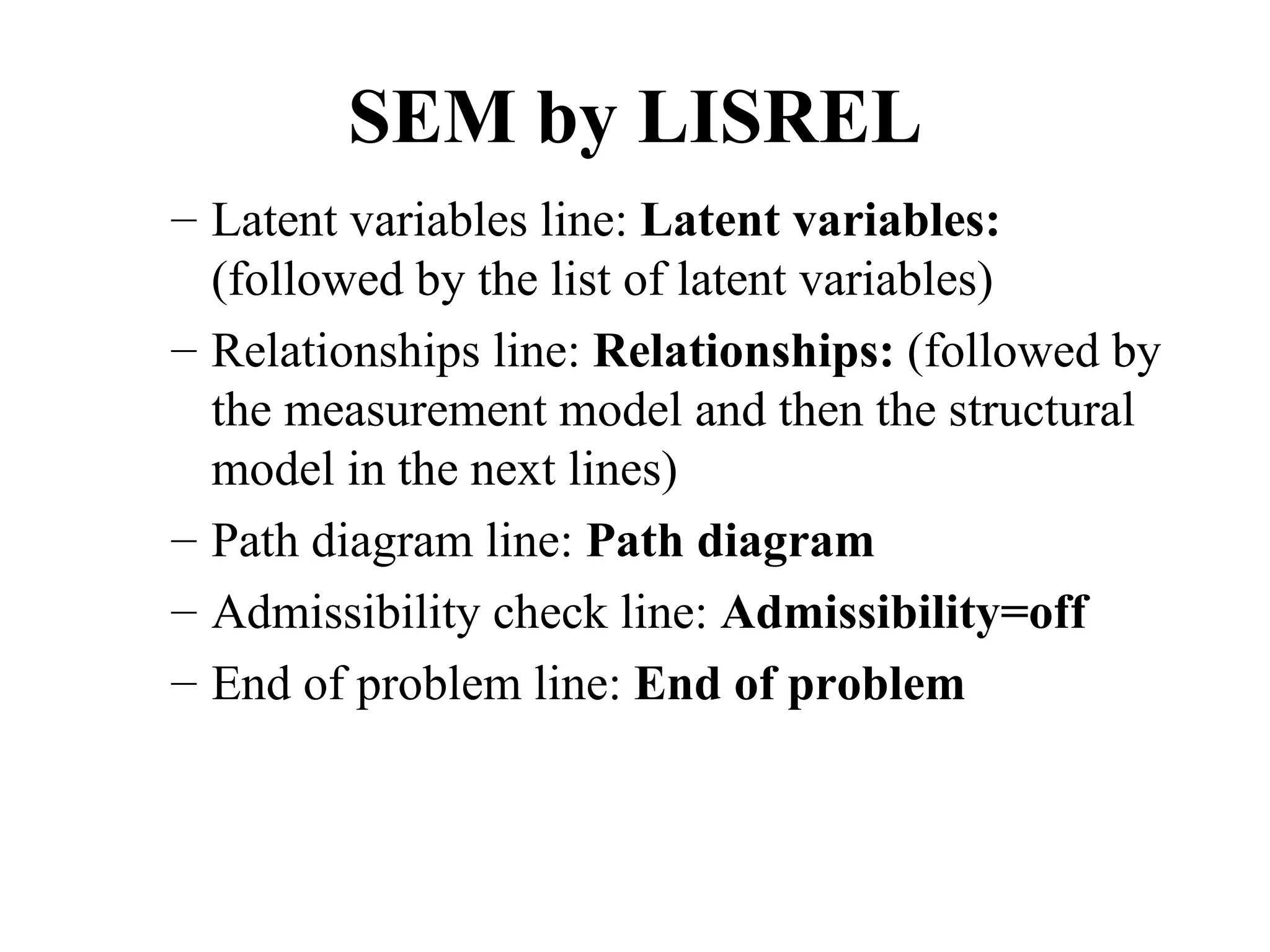

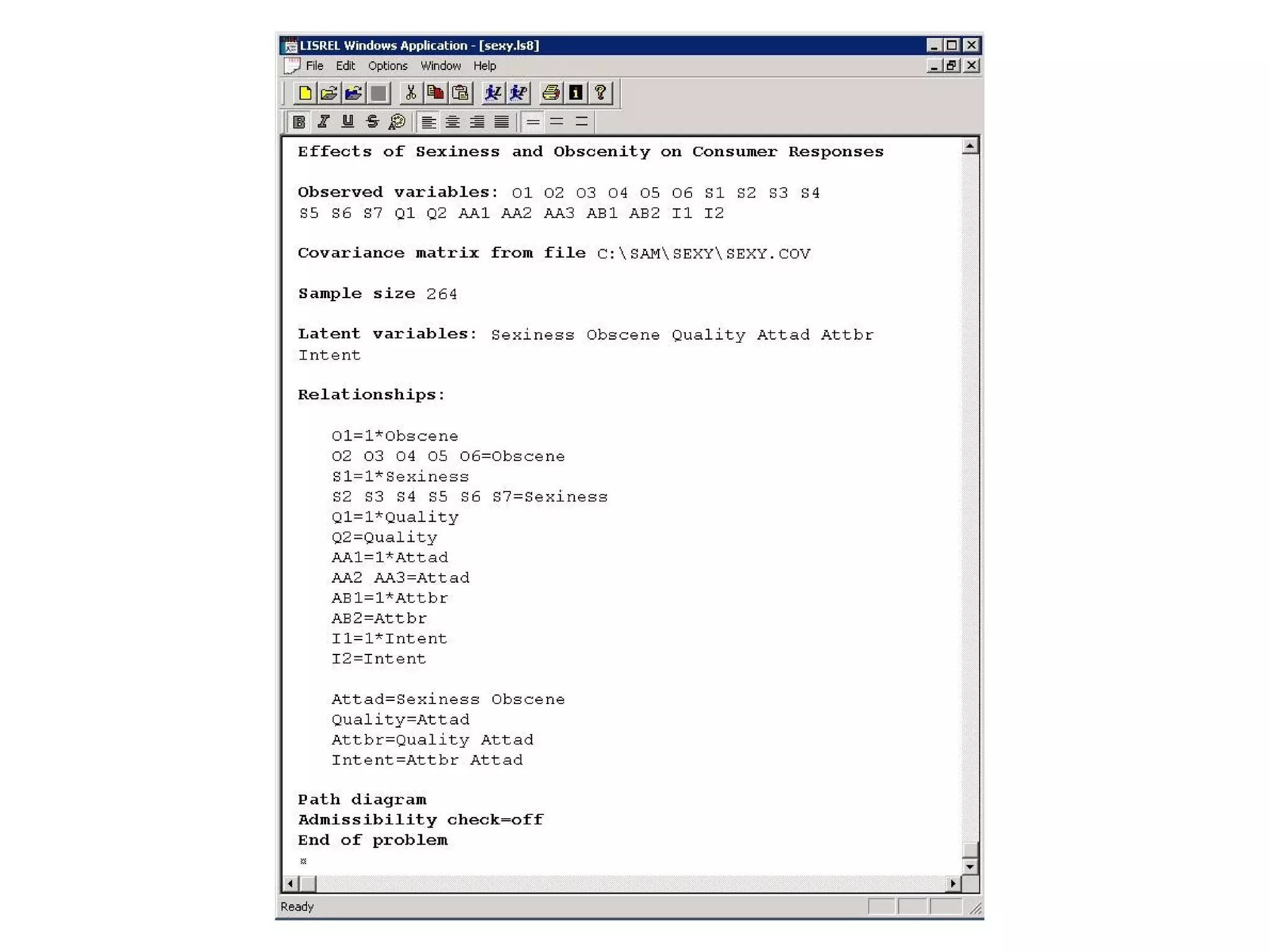

This document gives an overview of research methodology for behavioral research, written to introduce new Ph.D. students to research methodology and multivariate data analysis. Topics covered include conceptualization, measurement, research design, multivariate analysis, and structural equation modeling. The goal is to give students hands-on experience with structural equation modeling software such as LISREL for analyzing behavioral research questions.