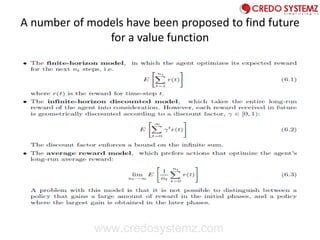

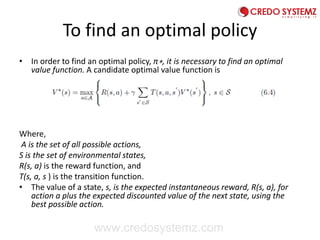

Reinforcement learning (RL) involves teaching an agent to learn optimal actions through trial and error by rewarding or punishing actions based on performance feedback. Key components of RL include a policy for decision making, a reward function, and a value function to evaluate long-term goals. The document discusses model-free RL methods such as temporal difference learning and Q-learning, and explores the integration of neural networks to enhance RL capabilities.

![1. Temporal Difference Learning

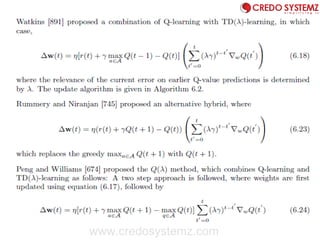

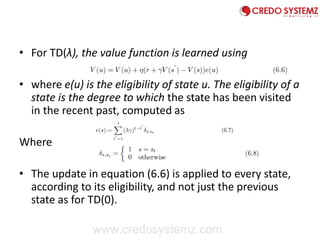

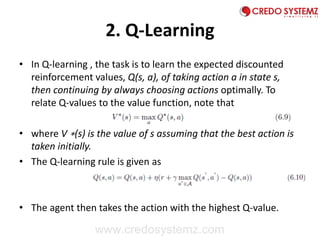

• Temporal difference (TD) learning [824] learns the value policy using

the update rule,

• where η is a learning rate, r is the immediate reward, γ is the

discount factor, s is the current state, and s is a future state. Based

on equation (6.5), whenever a state, s, is visited, its estimated value

is updated to be closer to r + ηV (s ` ).

• The above model is referred to as TD(0), where only one future step

is considered.

• The TD method has been generalized to TD(λ) strategies , where λ ∈

[0, 1] is a weighting on the relevance of recent temporal differences

of previous predictions

www.credosystemz.com](https://image.slidesharecdn.com/reinforcementlearning-converted1-190920044635/85/Reinforcement-Learning-Guide-For-Beginners-10-320.jpg)

![1. RPROP

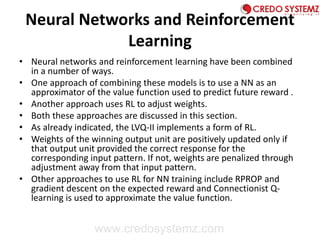

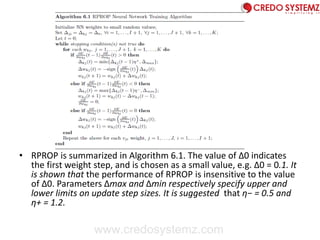

• Resilient propagation (RPROP) [727, 728] performs a direct adaptation of

the weight step using local gradient information. Weight adjustments are

implemented in the form of a reward or punishment, as follows: If the

partial derivative,

• Of weight vji (or wkj) changes its sign, the weight update value, Δji (Δkj), is

decreased by the factor, η−. The reason for this penalty is because the last

weight update was too large, causing the algorithm to jump over a local

minimum. On the other hand, if the derivative retains its sign, the update

value is increased by factor η+ to accelerate convergence.

• For each weight, vji (and wkj), the change in weight is determined as

• Where

• Using the above

www.credosystemz.com](https://image.slidesharecdn.com/reinforcementlearning-converted1-190920044635/85/Reinforcement-Learning-Guide-For-Beginners-14-320.jpg)

![2 . Gradient Descent Reinforcement

Learning

• For problems where only the immediate reward is maximized (i.e. there is no value

function, only a reward function), Williams [911] proposed weight update rules

that perform a gradient descent on the expected reward. These rules are then

integrated with back-propagation. Weights are updated as follows:

• where ηkj is a non-negative learning rate, rp is the reinforcement associated with

pattern zp, θk is the reinforcement threshold value, and ekj is the eligibility of

weight wkj, given as

• Where

• is the probability density function used to randomly generate actions, based on

whether the target was correctly predicted or not. Thus, this NN reinforcement

learning rule computes a GD in probability space.

• Similar update equations are used for the vji weights.

www.credosystemz.com](https://image.slidesharecdn.com/reinforcementlearning-converted1-190920044635/85/Reinforcement-Learning-Guide-For-Beginners-16-320.jpg)

![3. Connectionist Q-Learning

• Neural networks have been used to learn the Q-function in Q-

learning.

• The NN is used to approximate the mapping between states and

actions, and even to generalize between states.

• The input to the NN is the current state of the environment, and

the output represents the action to execute. If there are na actions,

then either one NN with na output units can be used, or na NNs,

one for each of the actions,can be used.

• Assuming that one NN is used per action, Lin [527] used the Q-

learning in equation (6.10) to update weights as follows:

• where Q(t) is used as shorthand notation for Q(s(t), a(t)) and ∇wQ(t)

is a vector of the output gradients, ∂Q ∂w (t), which are calculated

by means of back-propagation. Similar equations are used for the vj

weights.

www.credosystemz.com](https://image.slidesharecdn.com/reinforcementlearning-converted1-190920044635/85/Reinforcement-Learning-Guide-For-Beginners-17-320.jpg)