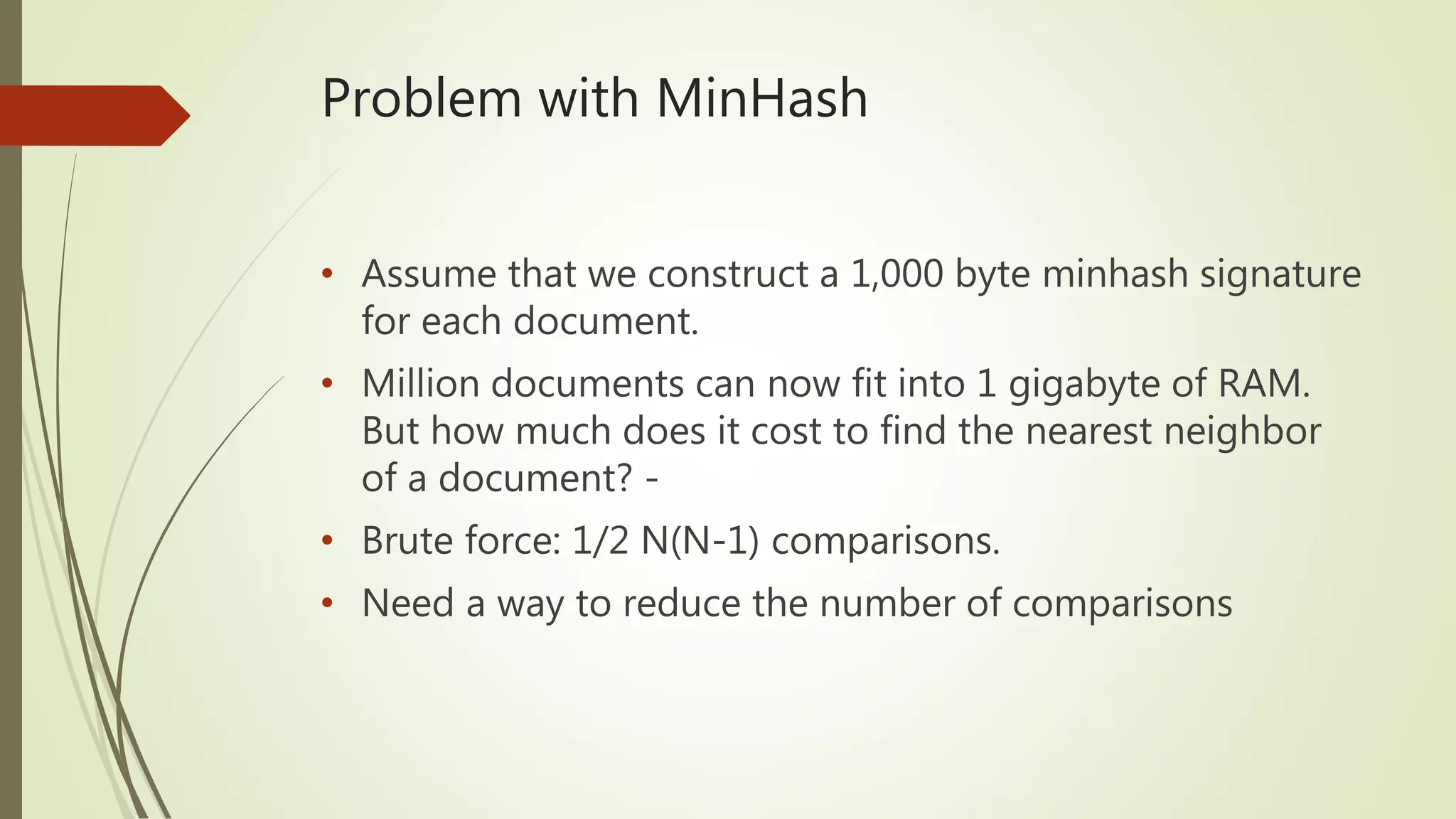

Four main types of probabilistic data structures are described: membership, cardinality, frequency, and similarity. Bloom filters and cuckoo filters are discussed as membership data structures that can tell if an element is definitely not or may be in a set. Cardinality structures like HyperLogLog are able to estimate large cardinalities with small error rates. Count-Min Sketch is presented as a frequency data structure. MinHash and locality sensitive hashing are covered as similarity data structures that can efficiently find similar documents in large datasets.

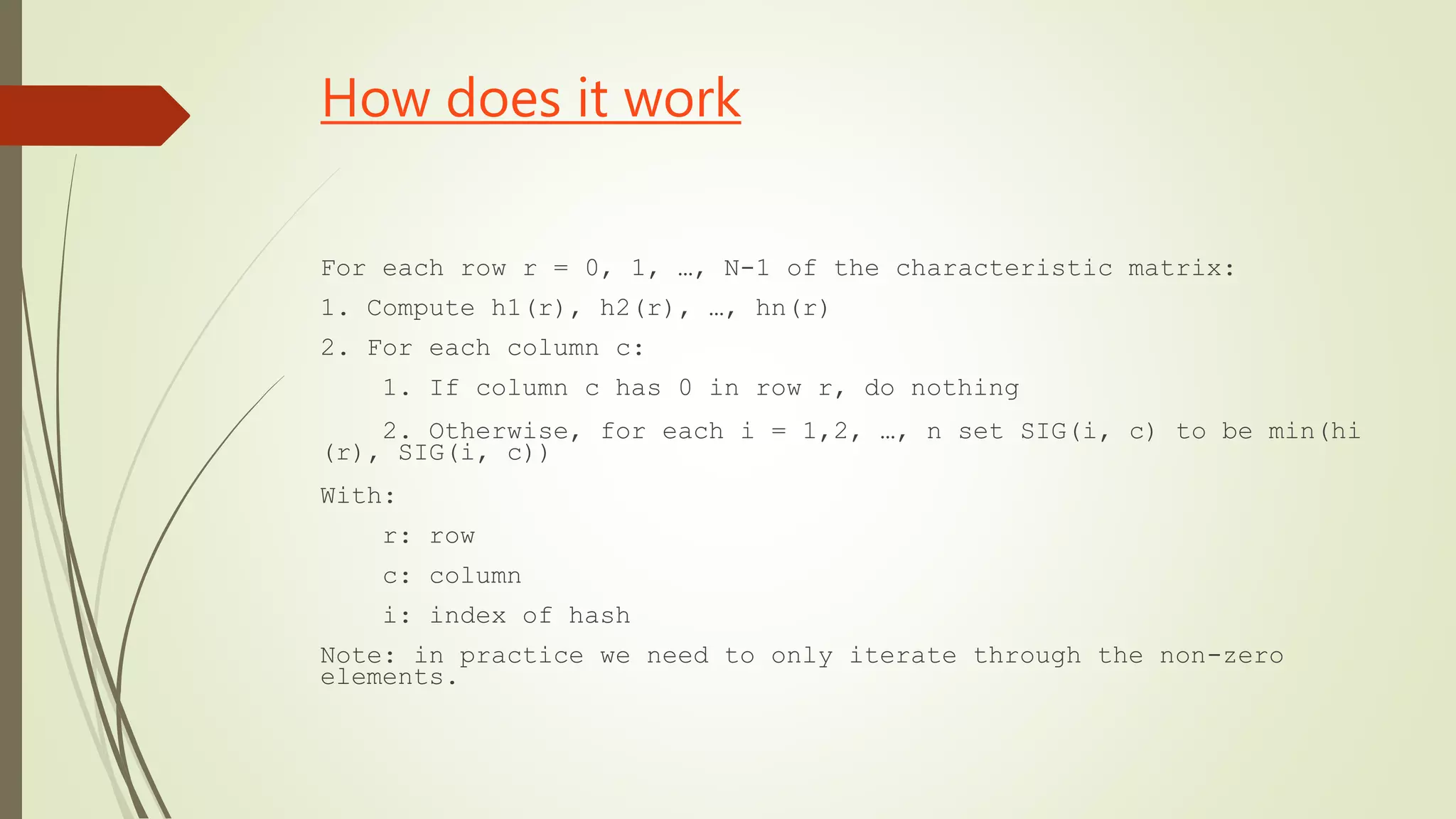

![How does it work

• Parameters of the Filter:

• 1. Two Hash Functions: h1 and h2

• 2. An array B with n buckets. The i-th bucket will be called B[i]

• Input: L, a list of elements to be inserted into the cuckoo filter.](https://image.slidesharecdn.com/probabilisticdatastructure-180307090753/75/Probabilistic-data-structure-10-2048.jpg)

![How does it work

While L is not empty:

Let x be the first item in the list L. Remove x from the list.

If B[h1(x)] is empty:

place x in B[h1(x)]

Else, If B[h2(x) is empty]:

place x in B[h2(x)]

Else:

Let y be the element in B[h2(x)].

Prepend y to L

place x in B[h2(x)]](https://image.slidesharecdn.com/probabilisticdatastructure-180307090753/75/Probabilistic-data-structure-11-2048.jpg)

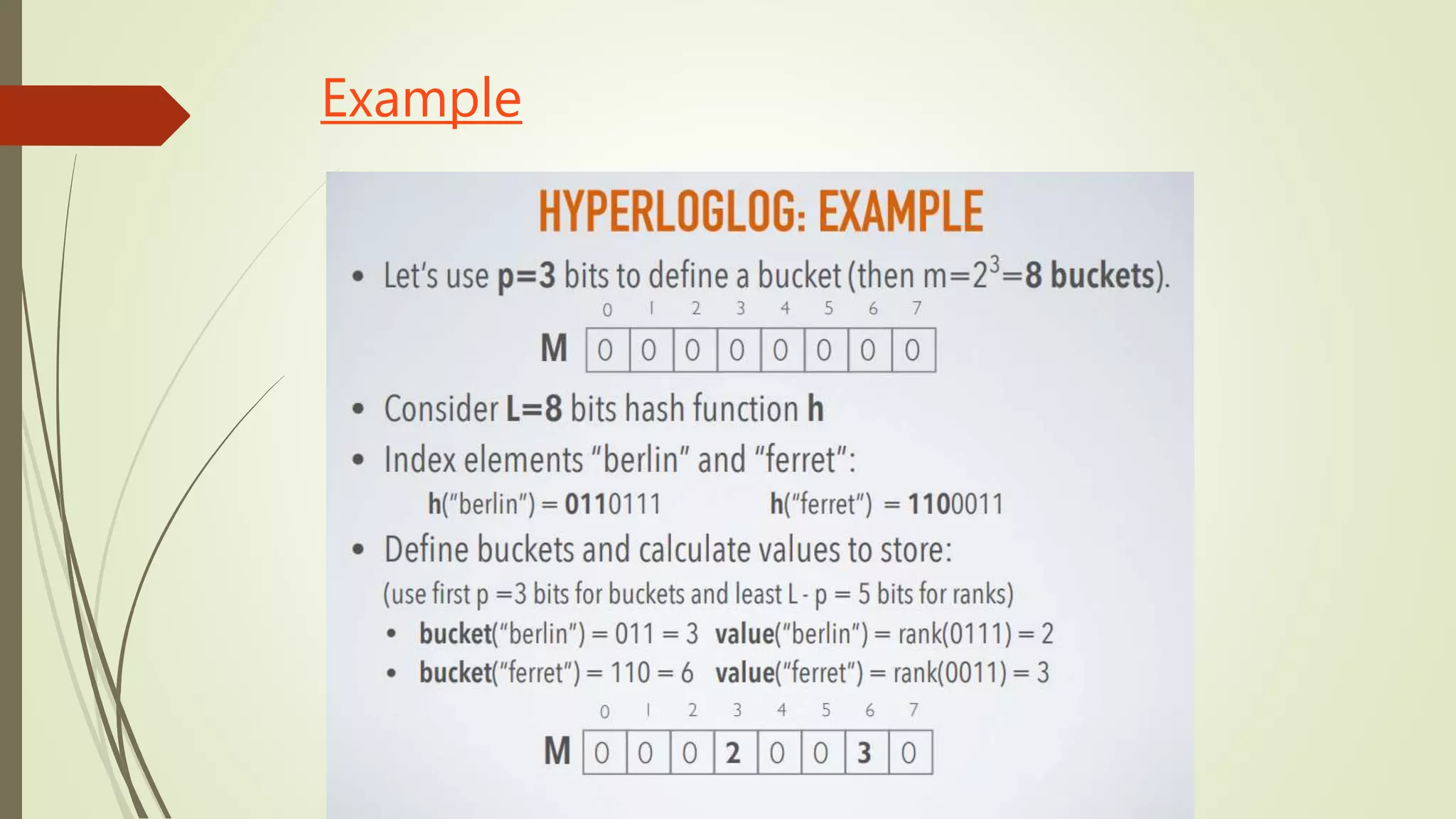

![How does it work

• Stochastic averaging is used to reduce the large variability:

• The input stream of data elements S is divided into m substreams S(i) using the first p

bits of the hash values (m = 2^p)

• In each substream, the rank (after the initial p bits that are used for substreaming) is

measured independently.

• These numbers are kept in an array of registers M, where M[i] stores the maximum rank

it seen for the substream with index i.

• The cardinality formula is calculated computes to approximate the cardinality of a

multiset.](https://image.slidesharecdn.com/probabilisticdatastructure-180307090753/75/Probabilistic-data-structure-20-2048.jpg)

![Properties

• Idea:

• From minHash, divide the signature matrix rows into b bands

of r rows hash the columns in each band with a basic hash

function each band divided to buckets [i.e a hashtable for

each band]

• If sets S and T have same values in a band, they will be

hashed into the same bucket in that band.

• For nearest-neighbor, the candidates are the items in the

same bucket as query item, in each band](https://image.slidesharecdn.com/probabilisticdatastructure-180307090753/75/Probabilistic-data-structure-29-2048.jpg)