This document explains how to use Perl to connect to and manipulate databases. It covers:

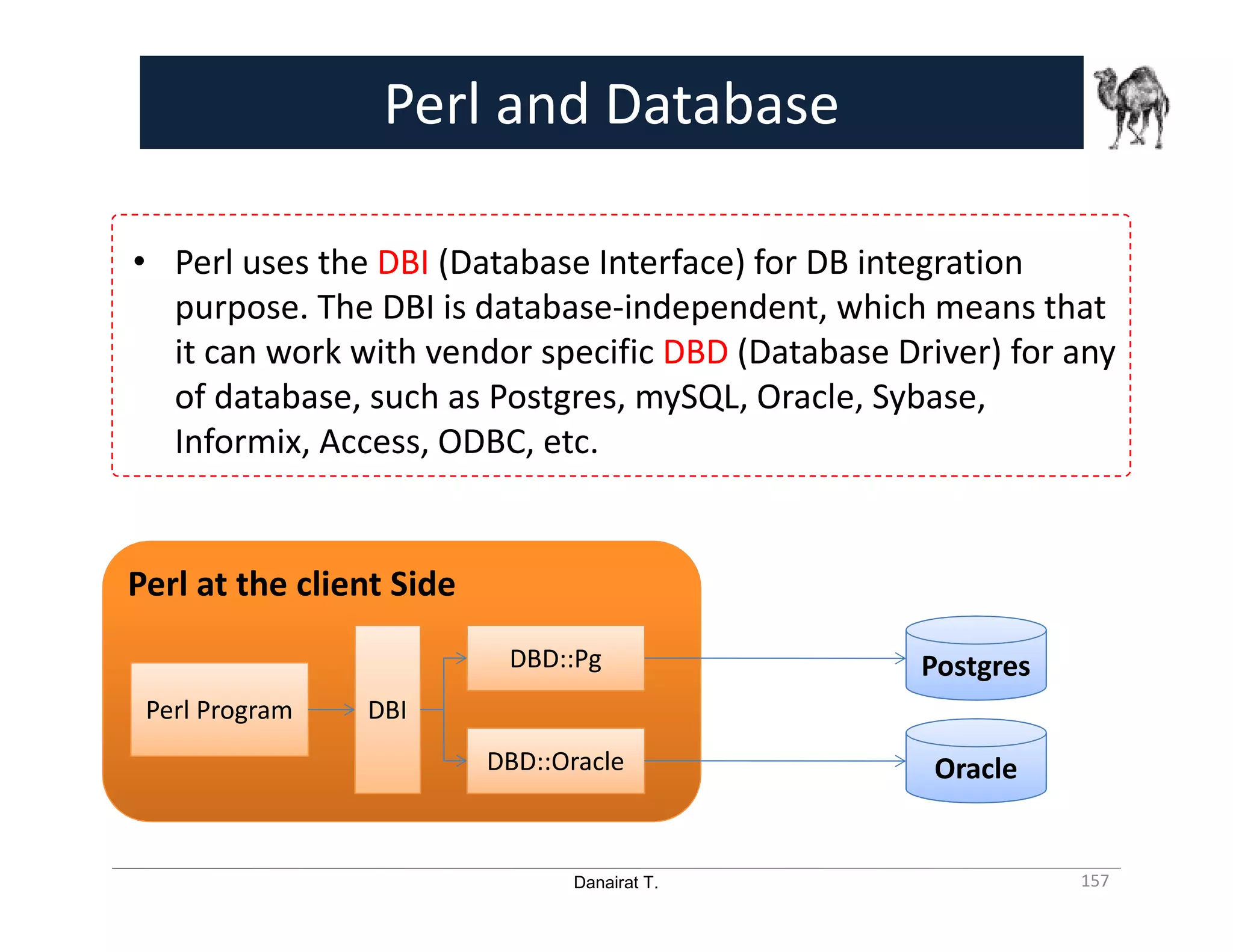

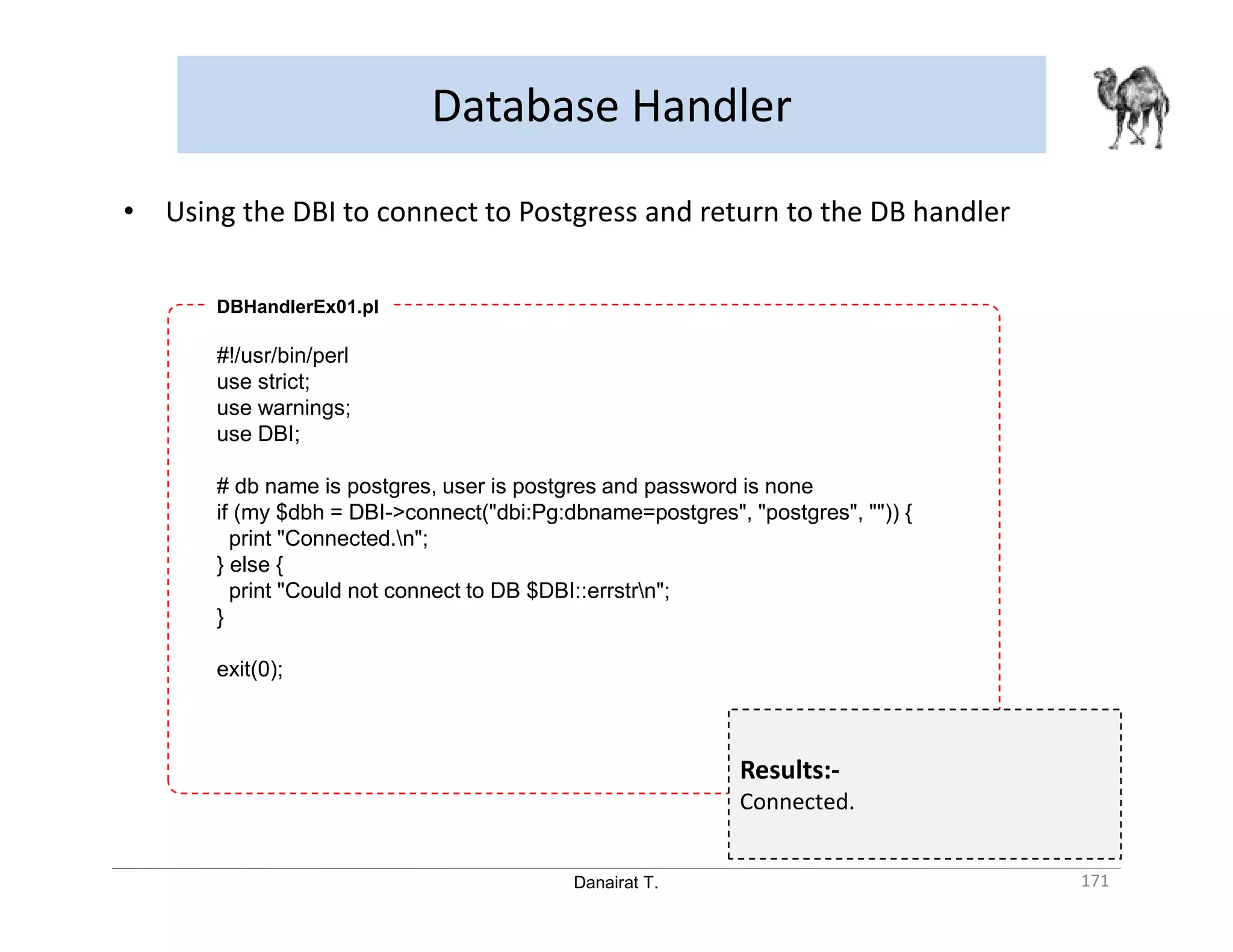

- Using the DBI module to connect to databases in a vendor-independent way

- Installing Perl modules such as DBI, along with the DBD drivers needed to connect to specific databases such as Postgres

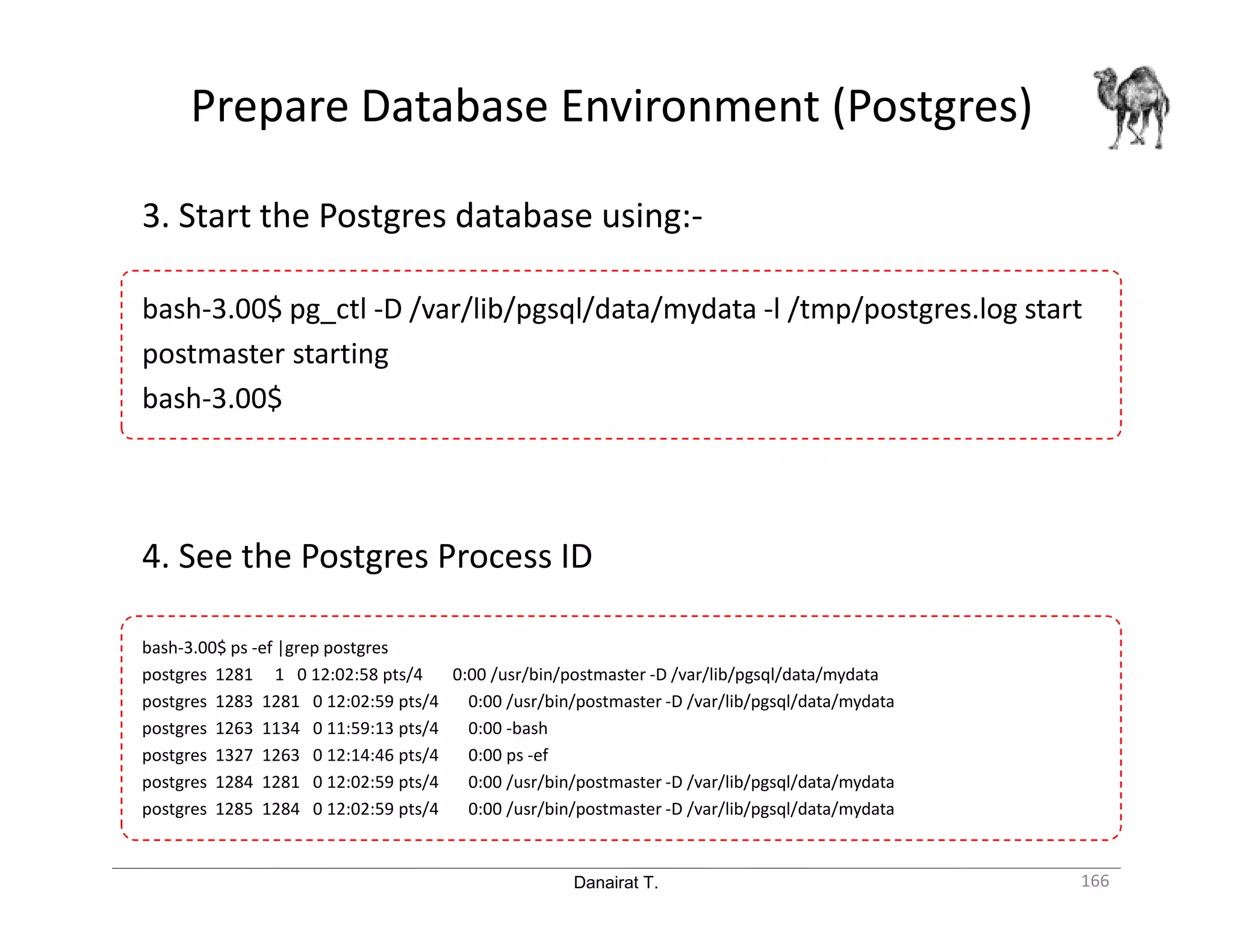

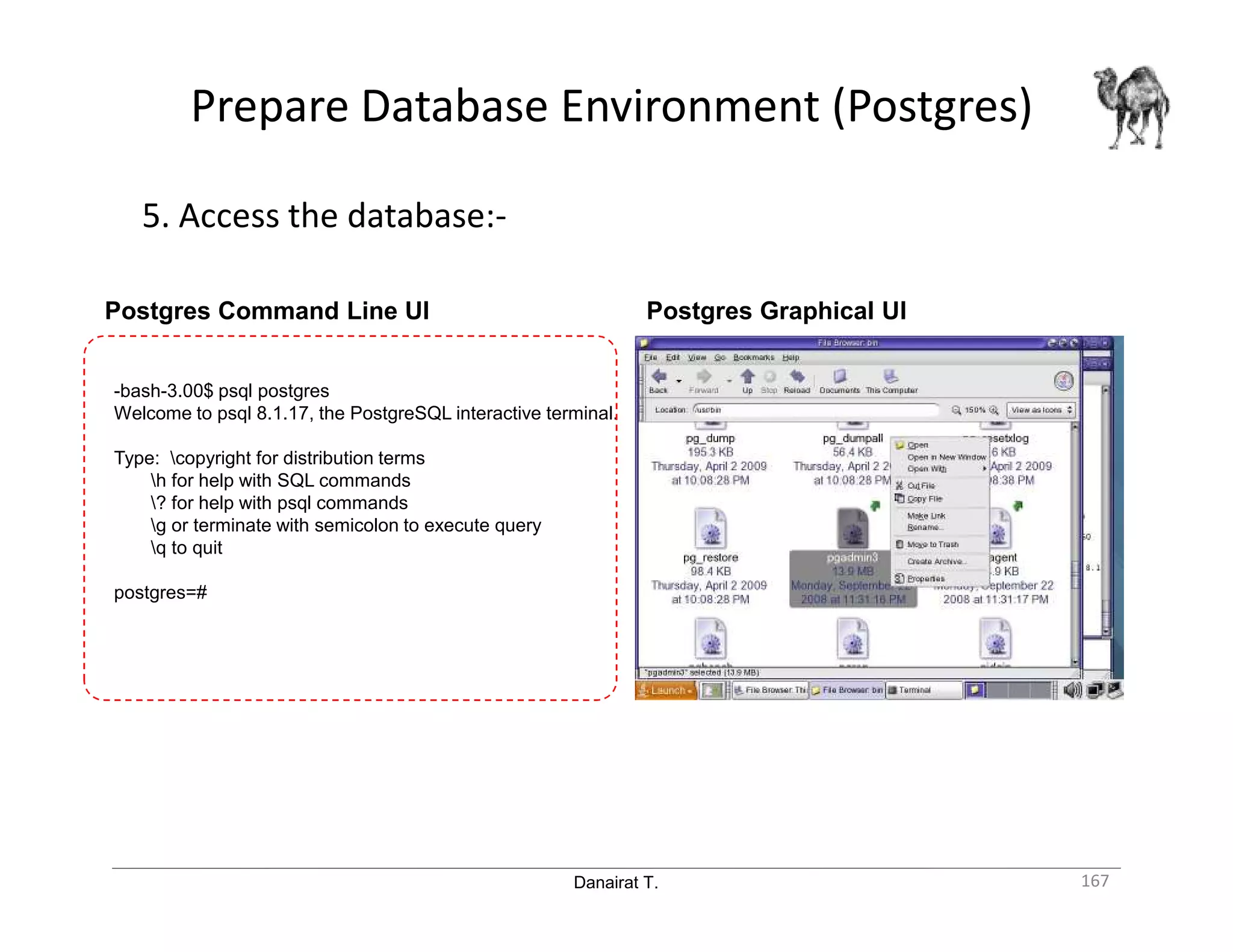

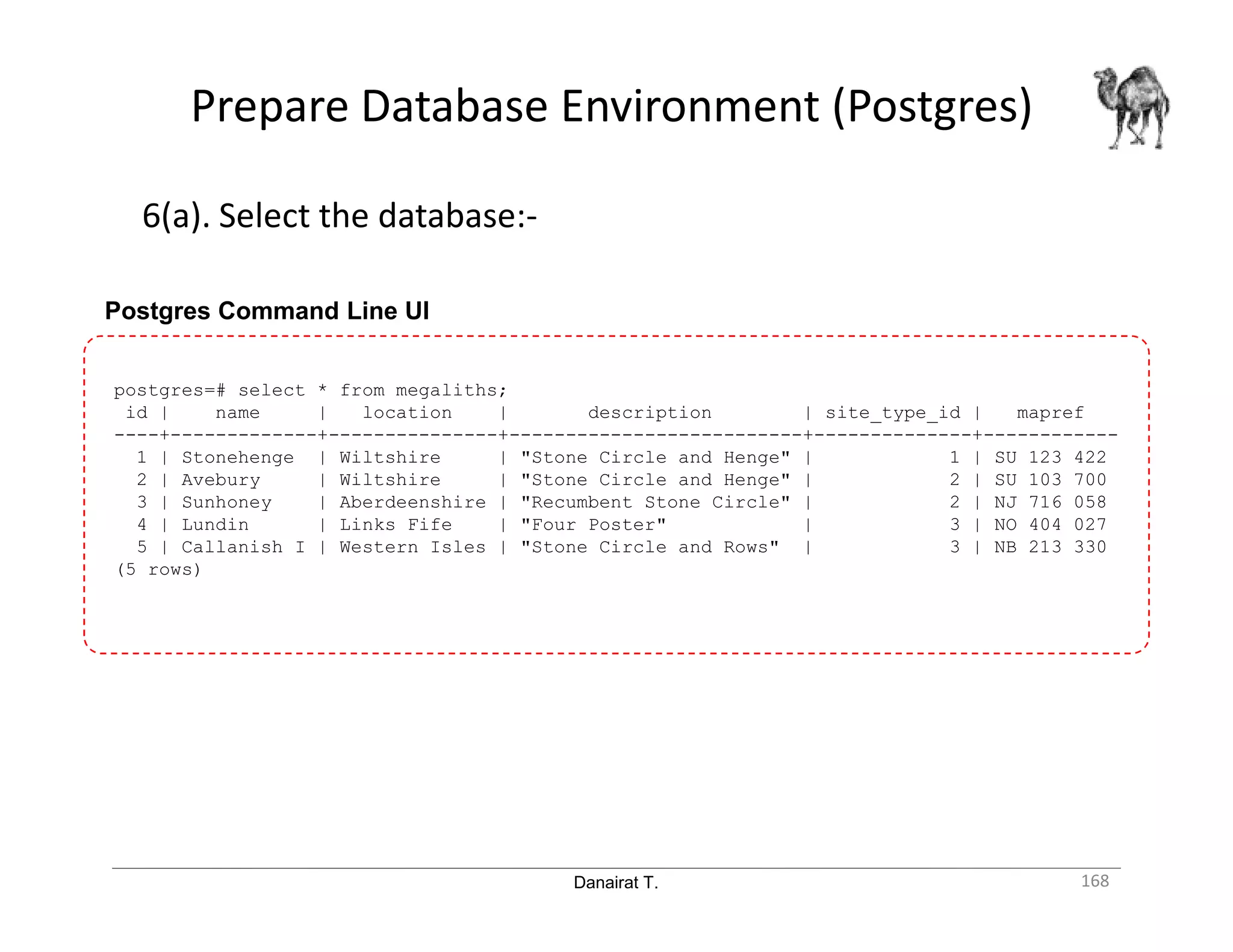

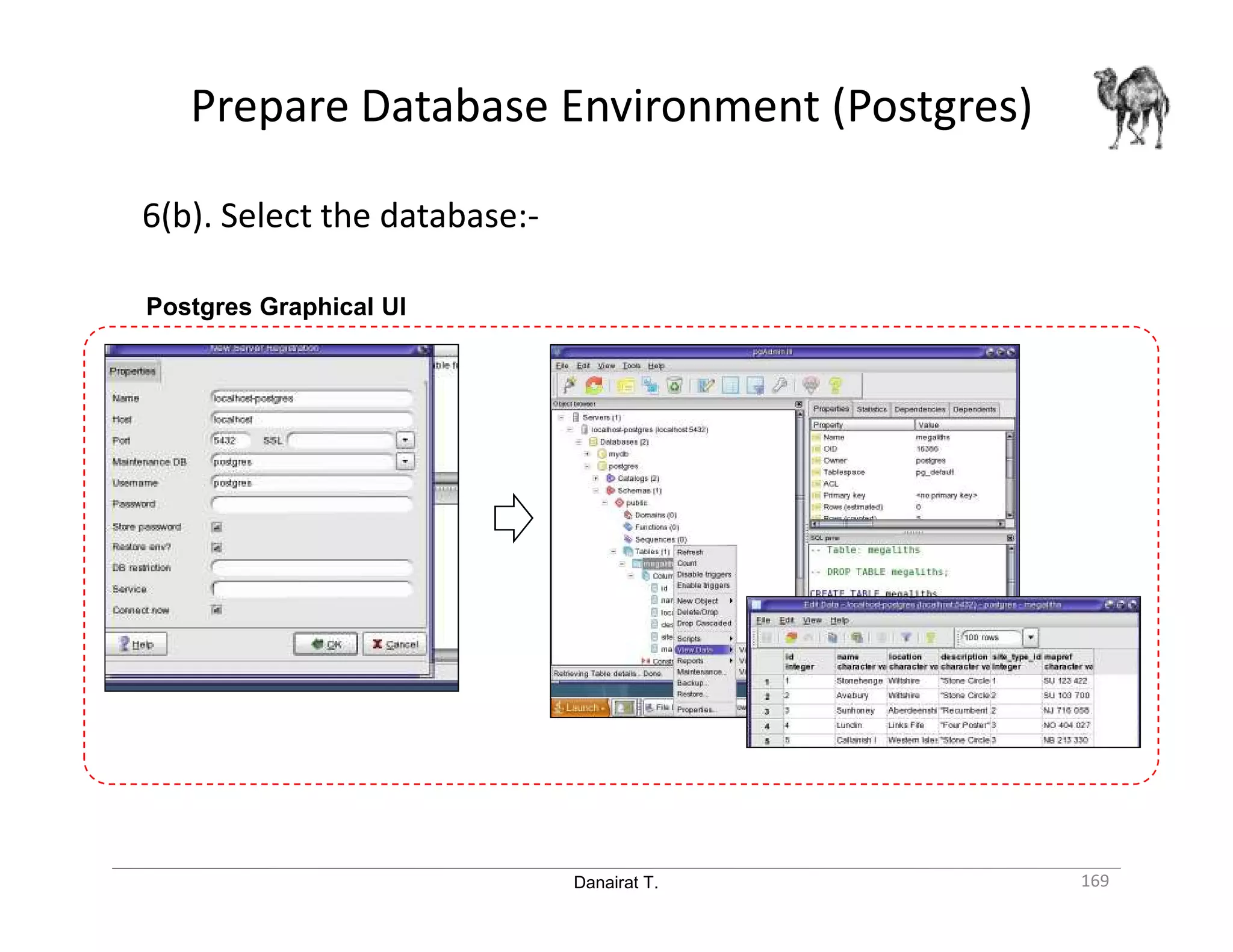

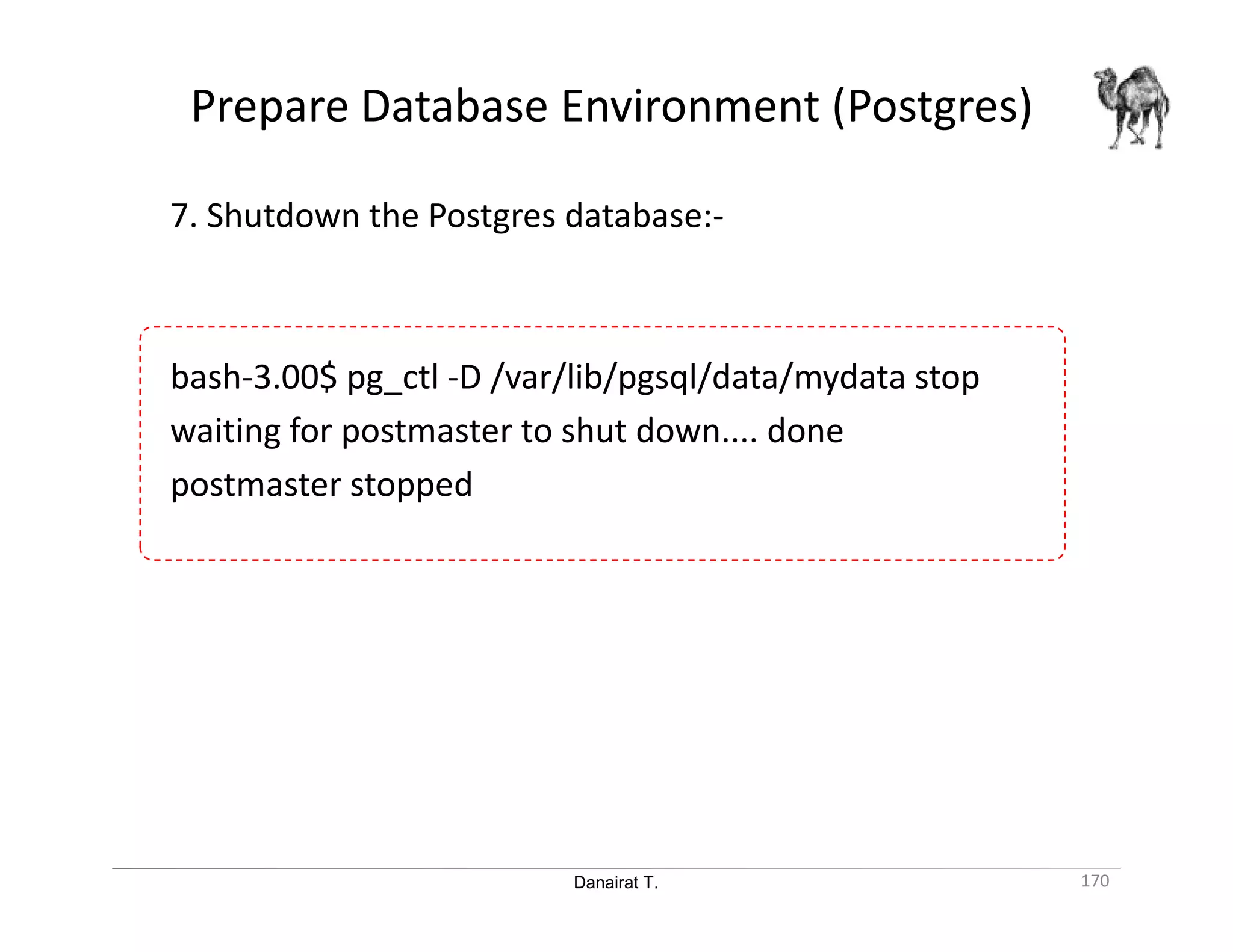

- Preparing the Postgres database environment, including initializing and starting the database

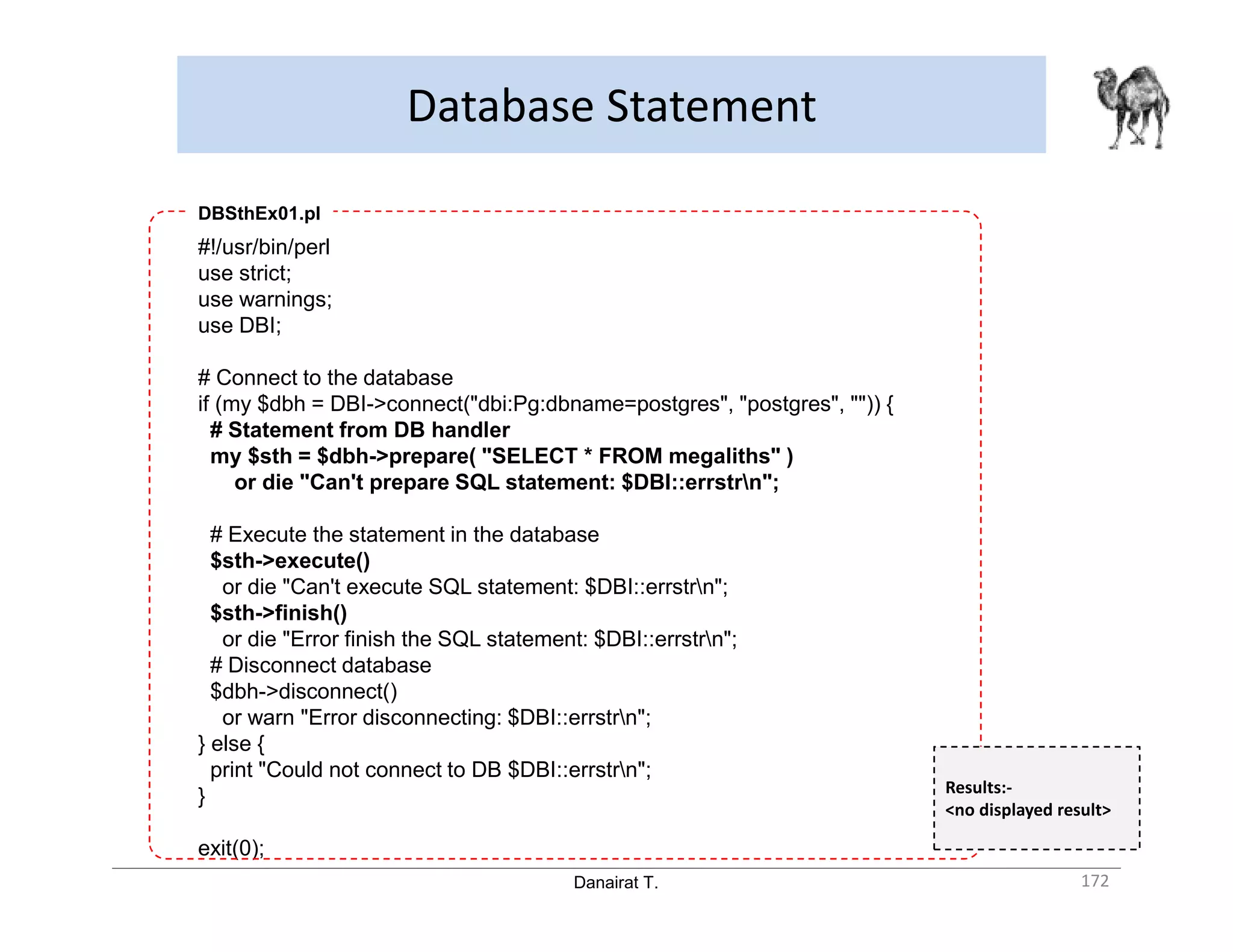

- Using DBI database handles and statement handles to connect to the database and execute queries

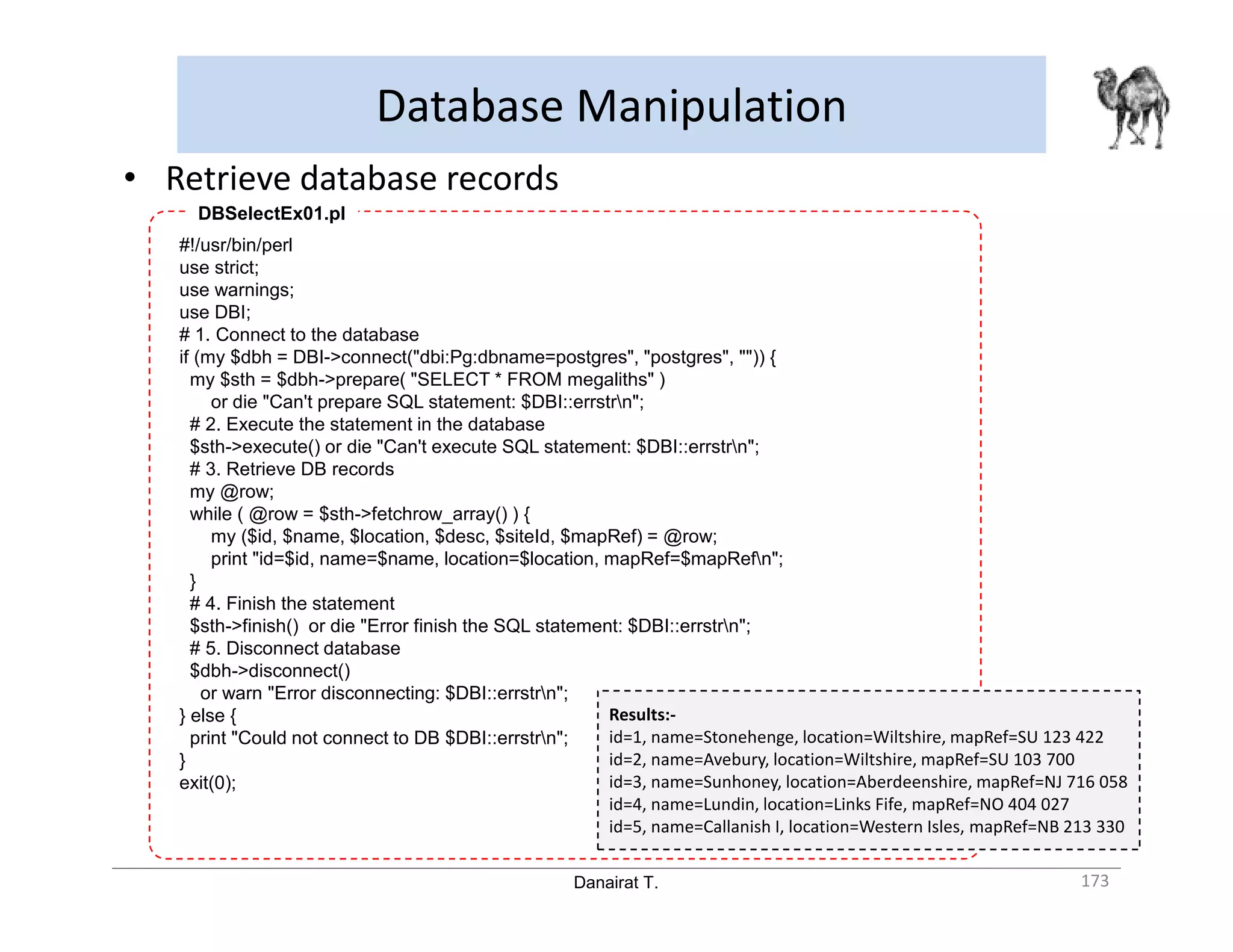

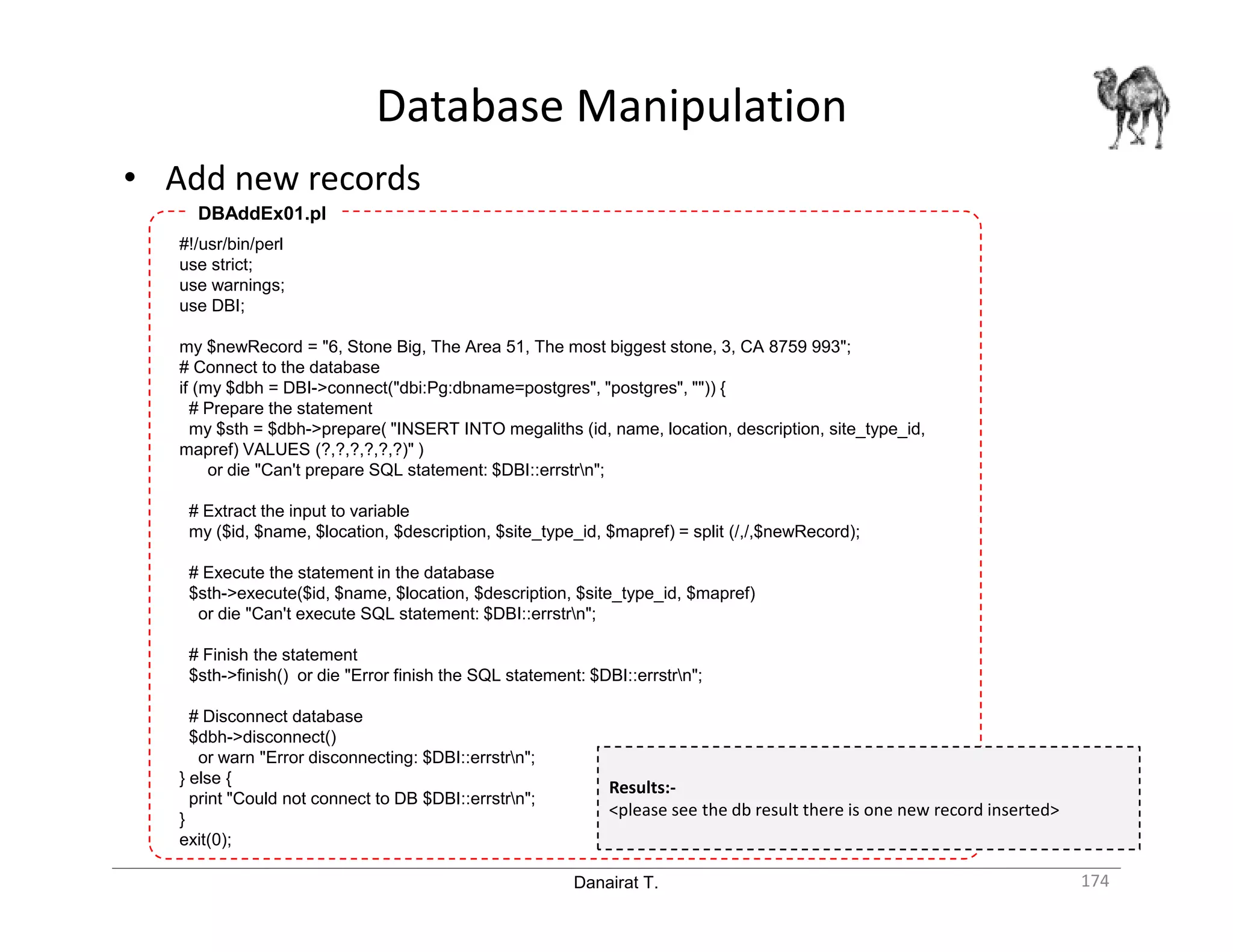

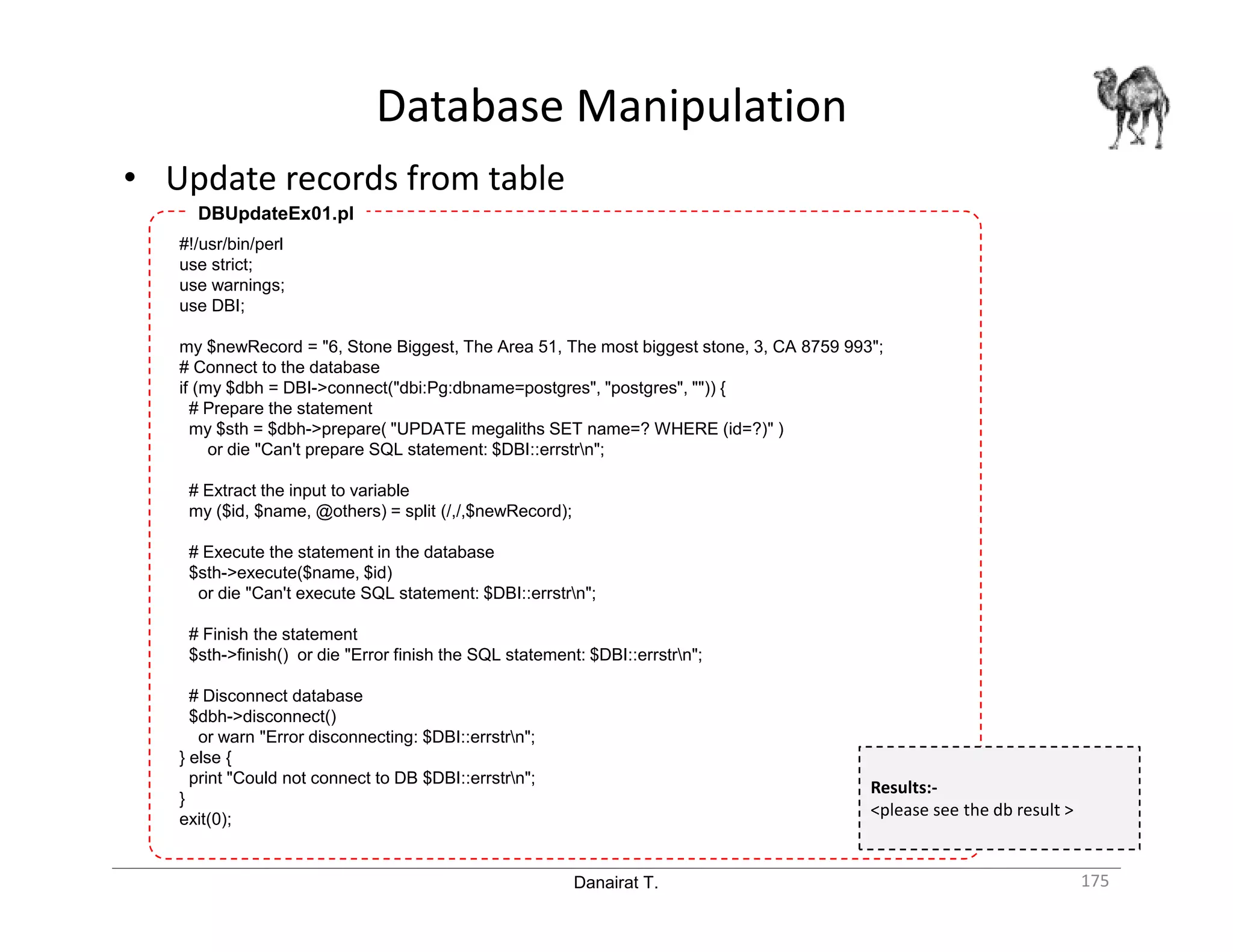

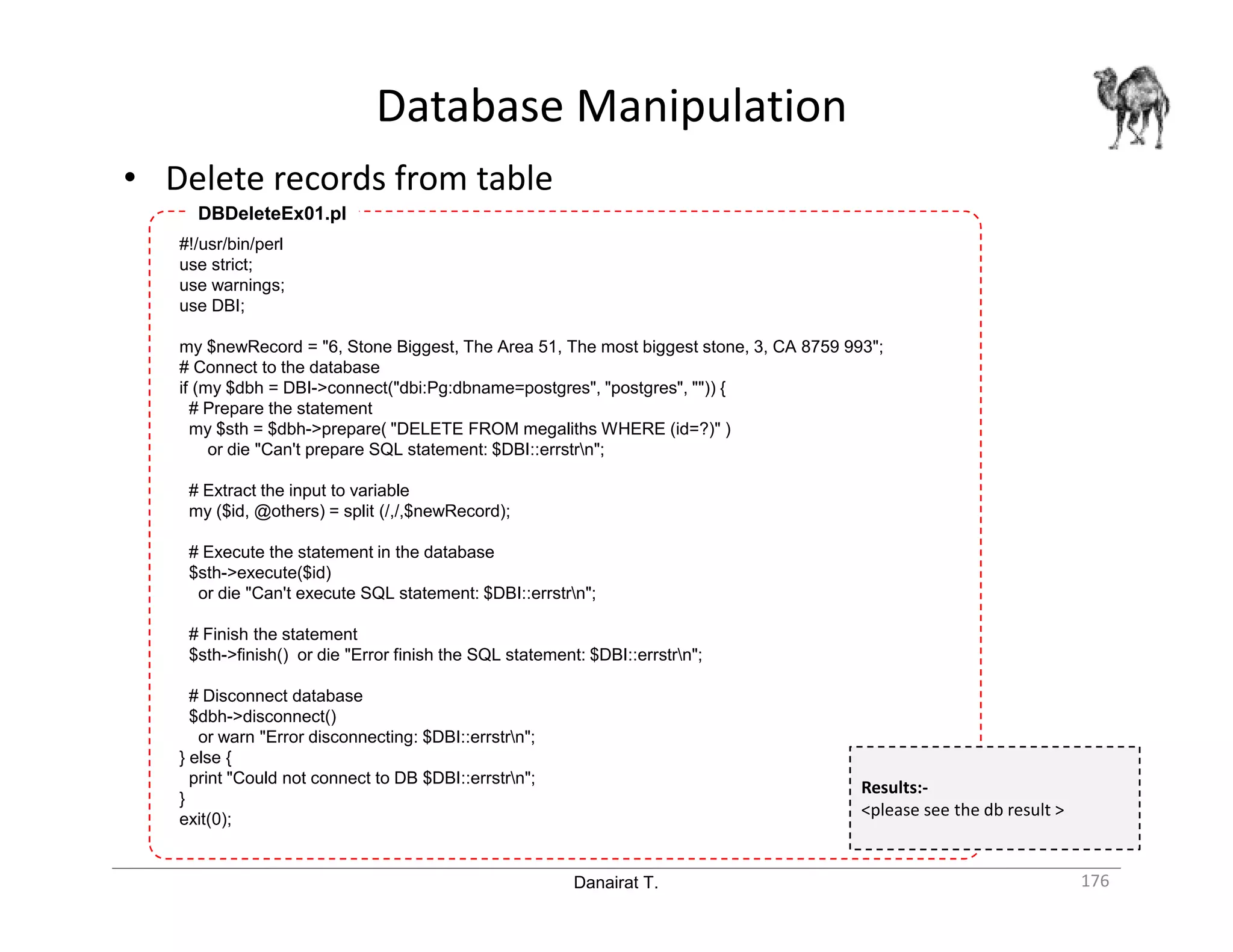

- Retrieving records with SELECT queries and modifying the database, for example by inserting new records
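The topics listed above can be illustrated with a minimal sketch. It assumes the DBI module and the DBD::Pg driver are installed and that a Postgres server is running. The database name `testdb`, the `employees` table with its columns, the credentials, and the `--run` flag are all hypothetical choices made for illustration; they are not taken from the original document.

```perl
#!/usr/bin/perl
use strict;
use warnings;

# Hypothetical connection settings; adjust them for your environment.
my %cfg = (
    dbname   => 'testdb',
    host     => 'localhost',
    port     => 5432,
    user     => 'postgres',
    password => '',
);

# Build a DBD::Pg data source name (DSN) from the settings.
sub build_dsn {
    my (%c) = @_;
    return "dbi:Pg:dbname=$c{dbname};host=$c{host};port=$c{port}";
}

sub run_demo {
    # Loaded here so the helper above works even without DBI installed.
    require DBI;

    # Connect and obtain a database handle; RaiseError makes DBI die on errors.
    my $dbh = DBI->connect(build_dsn(%cfg), $cfg{user}, $cfg{password},
        { RaiseError => 1, AutoCommit => 1 });

    # Add a new record. The placeholders (?) let the driver quote values safely.
    # The employees table is assumed to exist already.
    my $ins = $dbh->prepare('INSERT INTO employees (name, salary) VALUES (?, ?)');
    $ins->execute('Alice', 50000);

    # Retrieve records through a statement handle.
    my $sth = $dbh->prepare('SELECT name, salary FROM employees WHERE salary > ?');
    $sth->execute(40000);
    while (my $row = $sth->fetchrow_hashref) {
        print "$row->{name}\t$row->{salary}\n";
    }

    $dbh->disconnect;
}

# Only touch the database when explicitly asked to (hypothetical --run flag).
run_demo() if @ARGV && $ARGV[0] eq '--run';
```

Running the script with `--run` connects to the database, inserts one row, and prints the matching rows. Because the DSN begins with `dbi:Pg:`, DBI loads the Postgres driver; pointing the same code at another database should mostly be a matter of changing the DSN, which is the vendor independence the first bullet refers to.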

The document provides code examples for connecting to Postgres with Perl, executing queries to retrieve data, and manipulating the database through operations like inserting new records. It focuses on