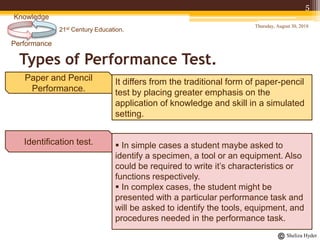

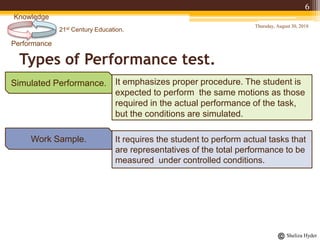

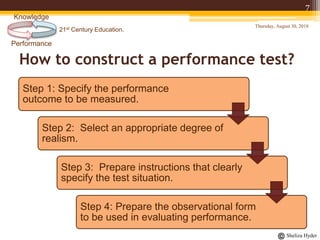

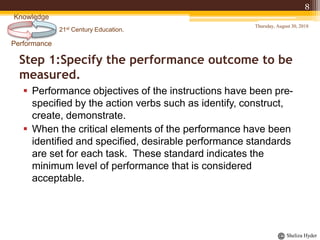

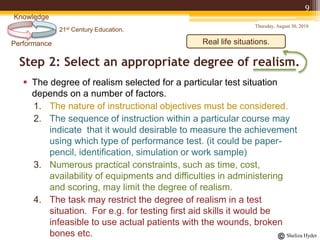

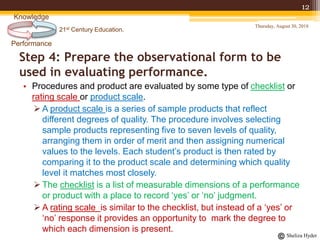

The document discusses performance tests in 21st-century education, emphasizing their role in assessing practical skills rather than knowledge alone. It describes the types of performance tests and provides a systematic approach to constructing them: specifying outcomes, selecting an appropriate degree of realism, preparing instructions, and creating evaluation forms. Overall, it highlights the importance of performance tests across academic fields for evaluating students' skills and competencies effectively.