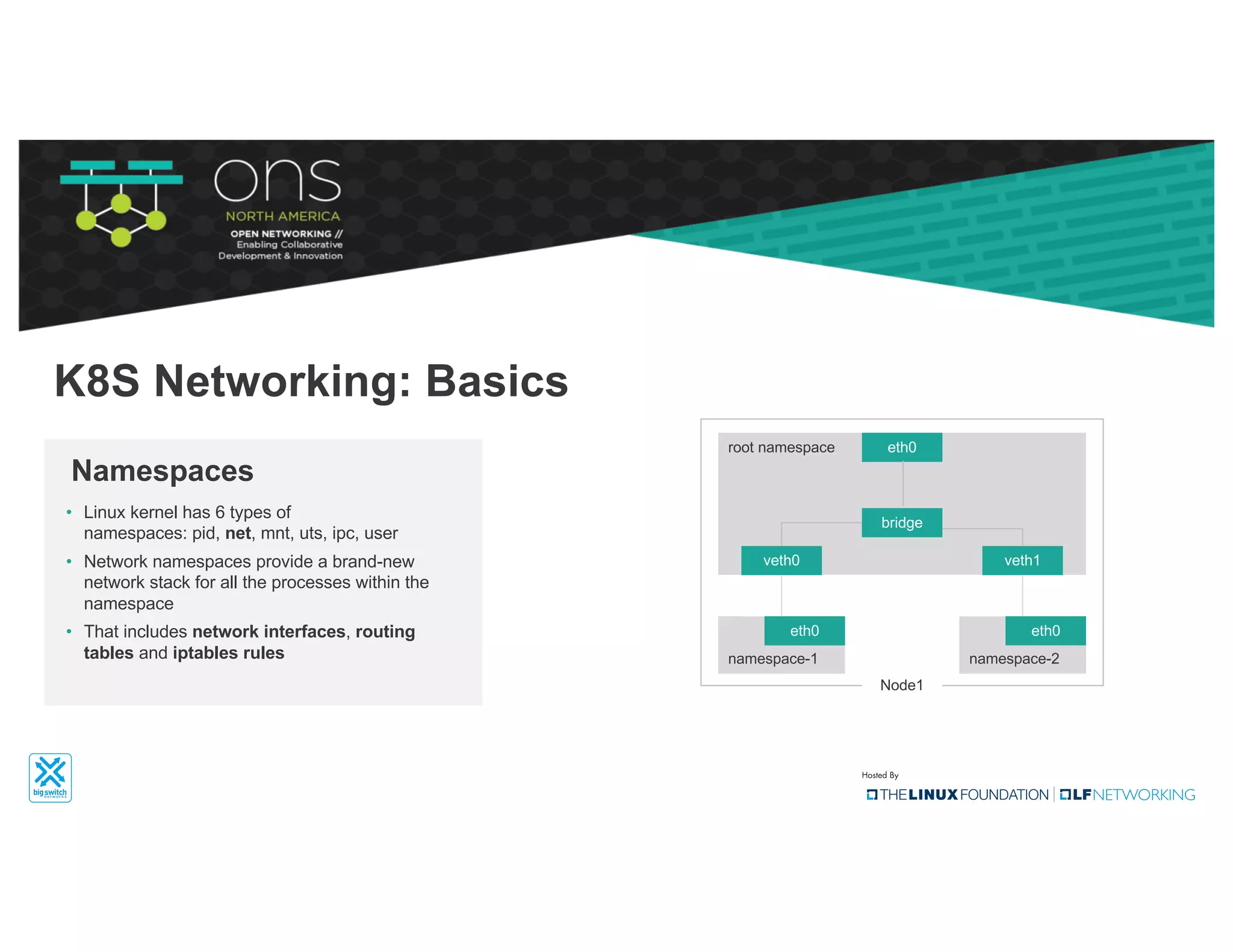

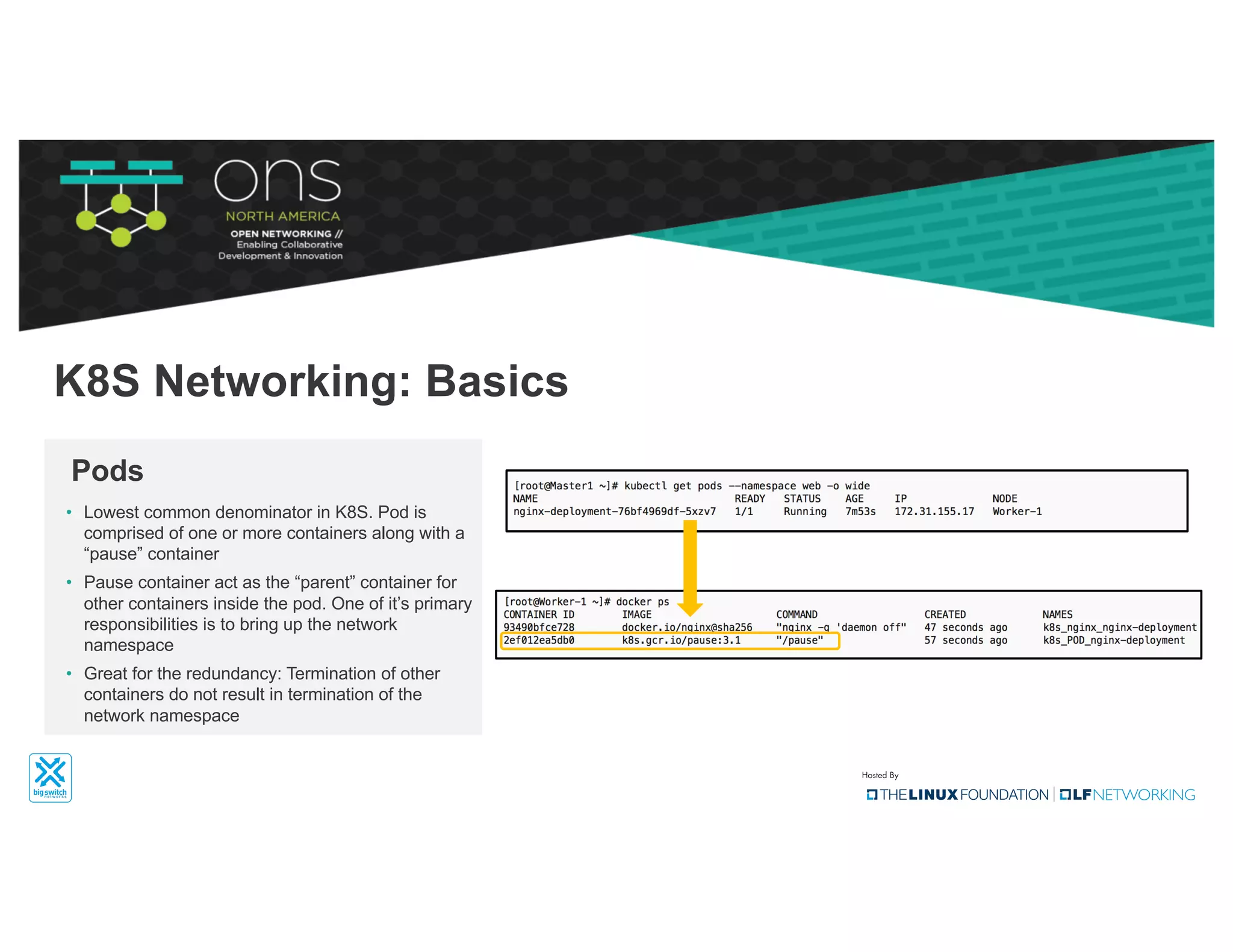

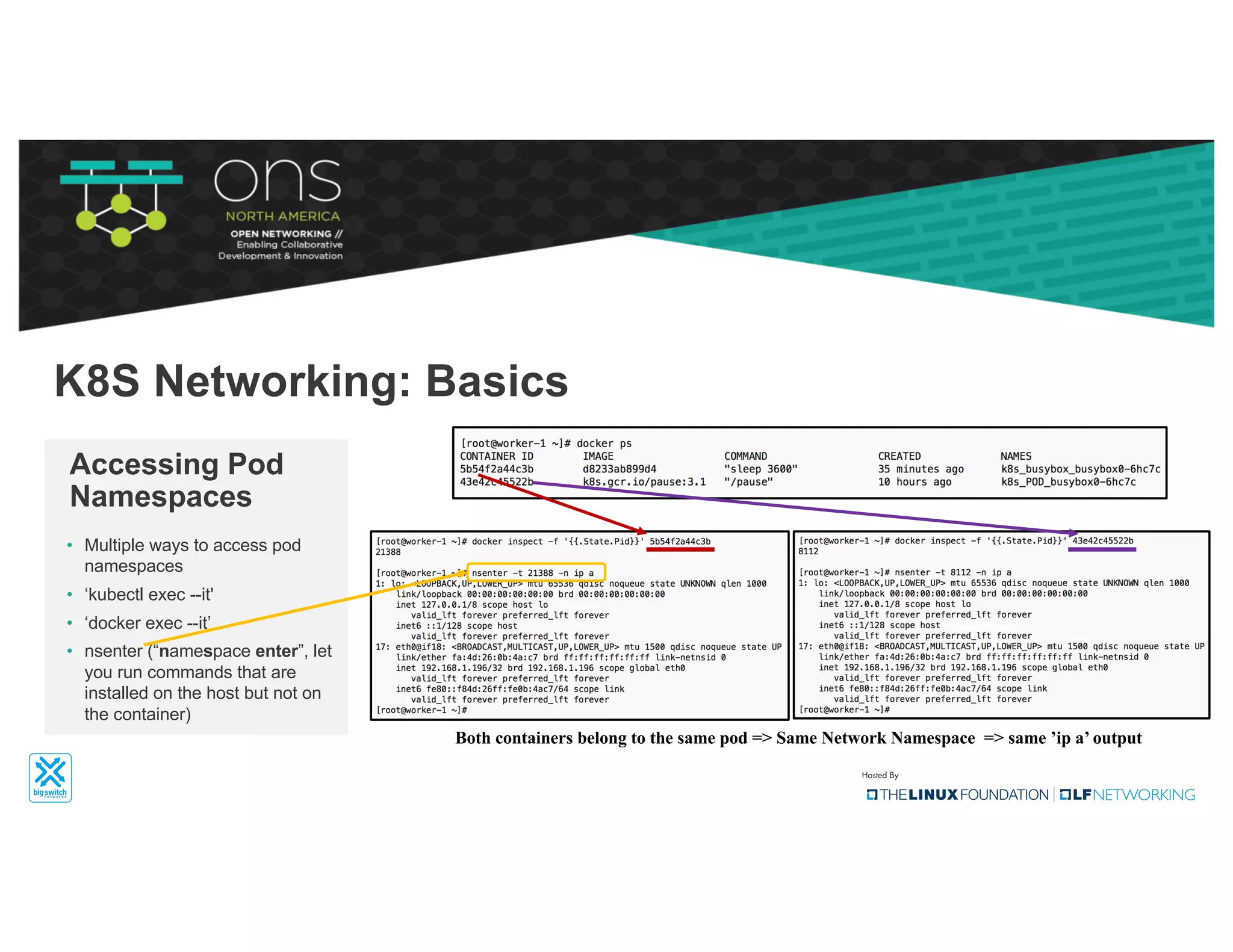

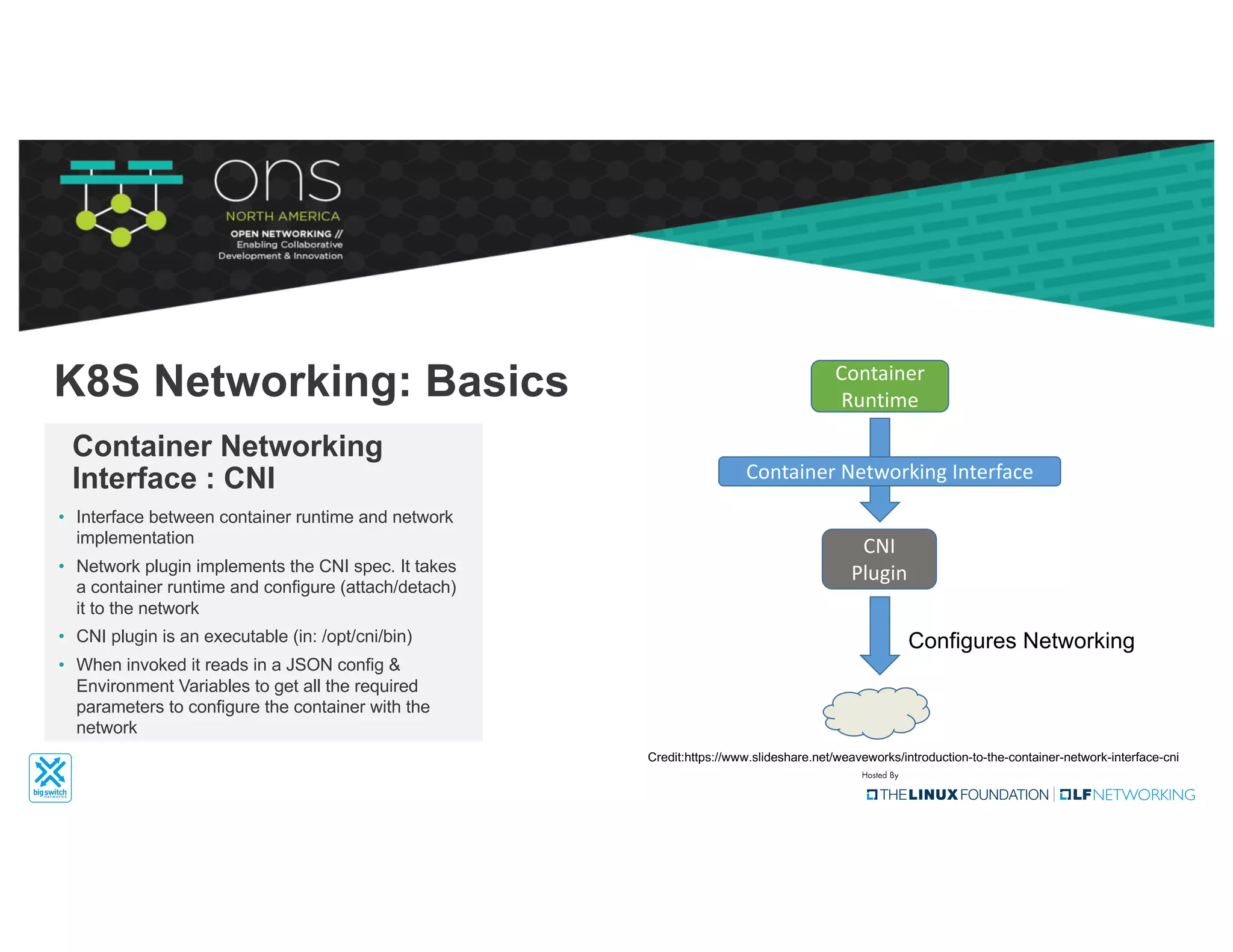

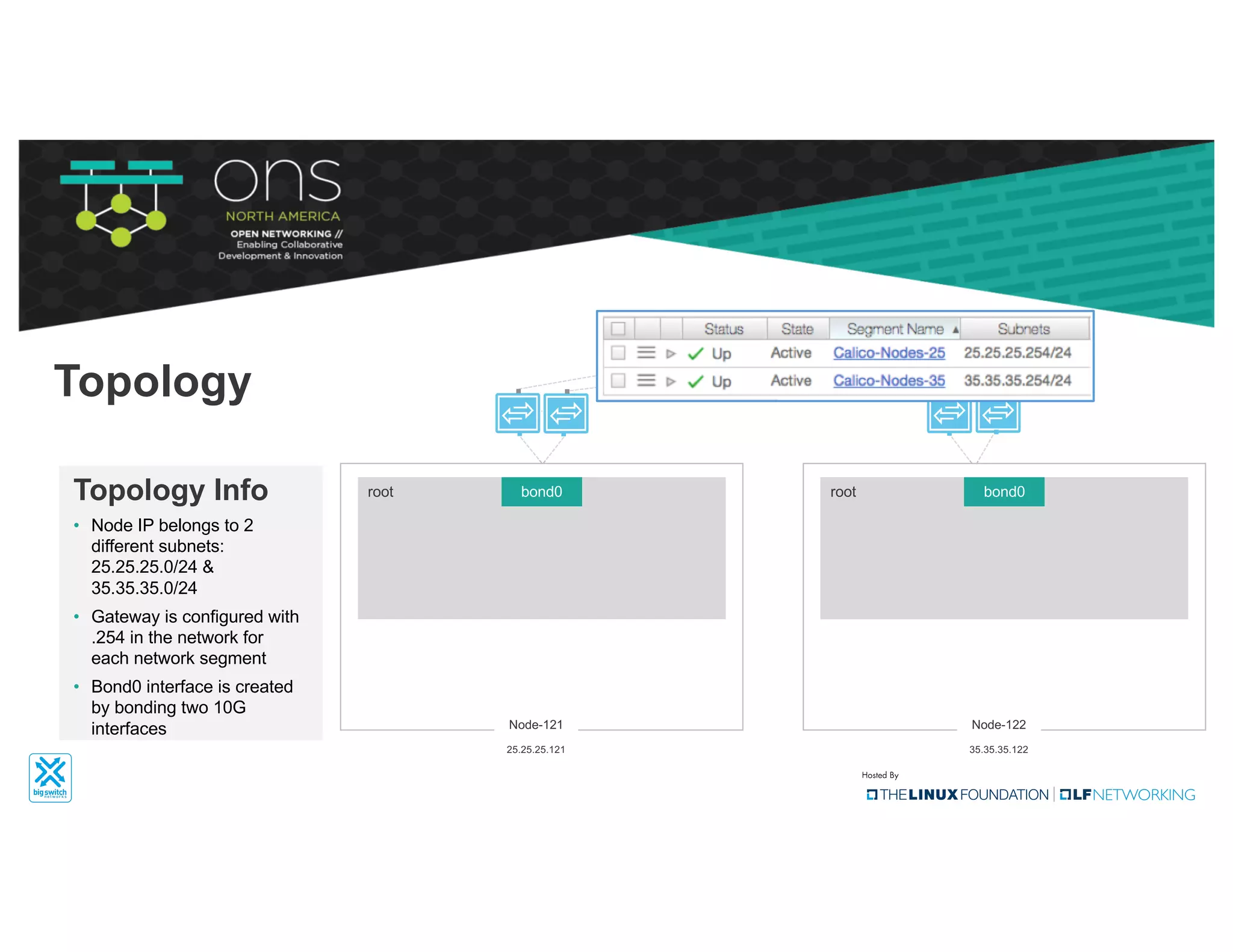

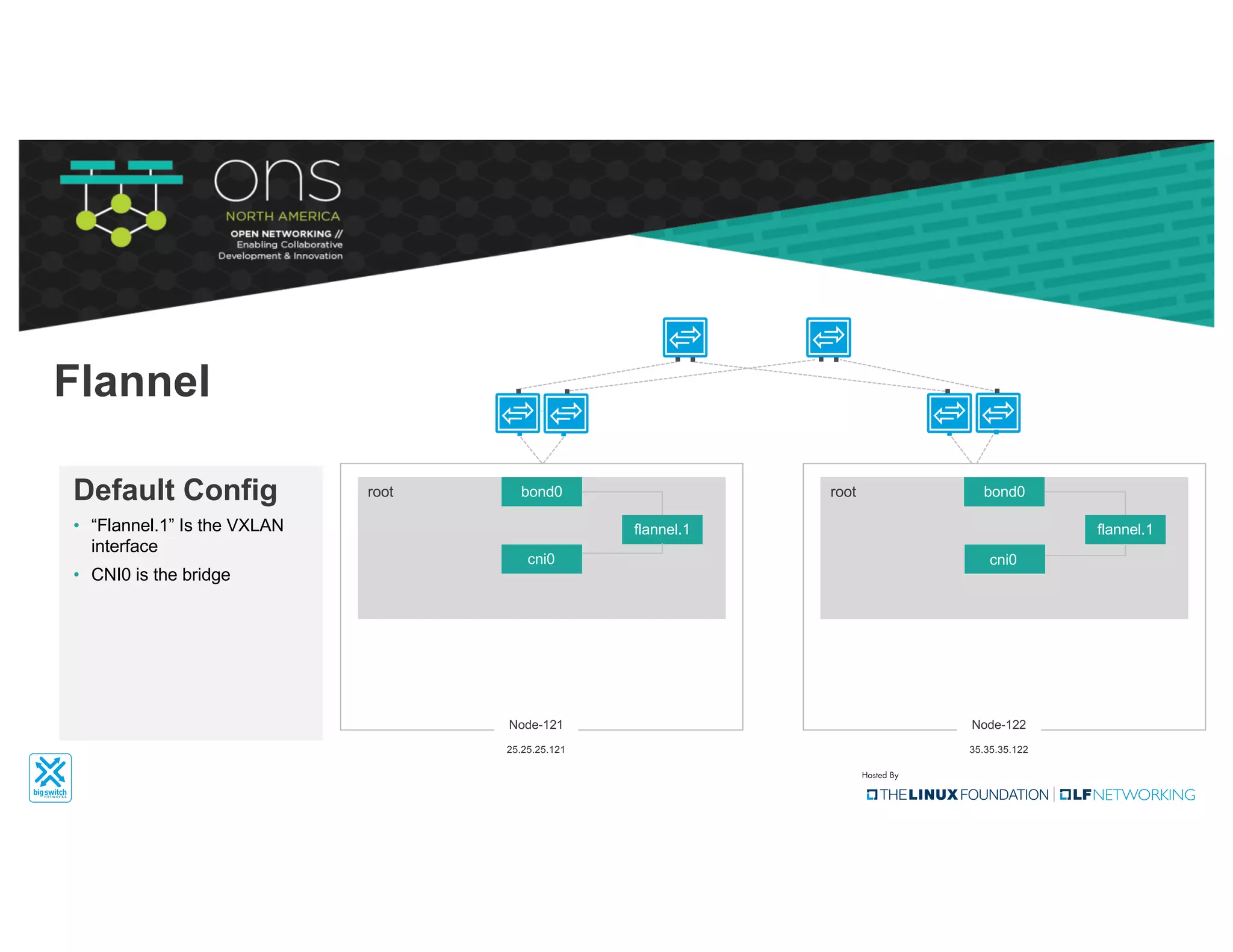

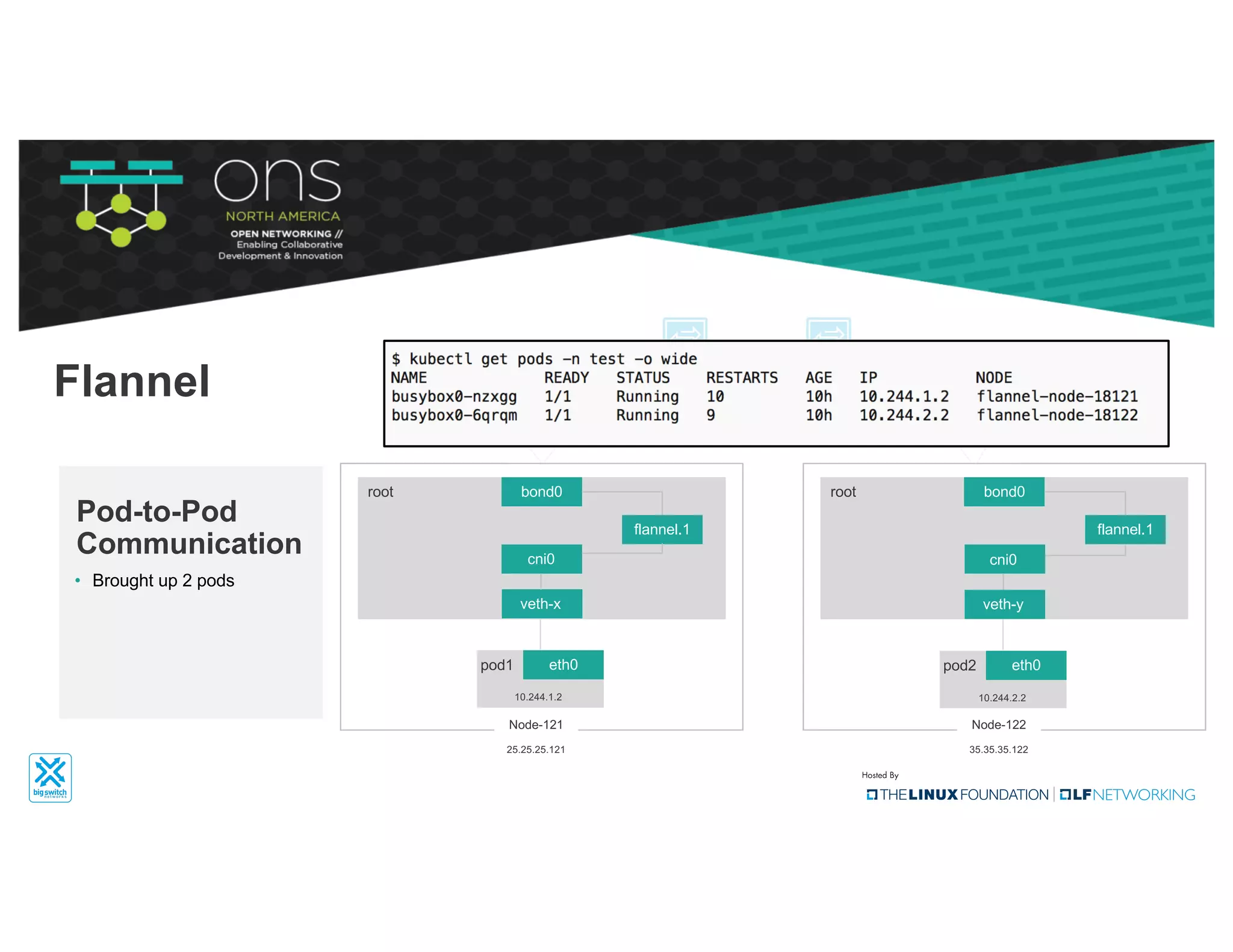

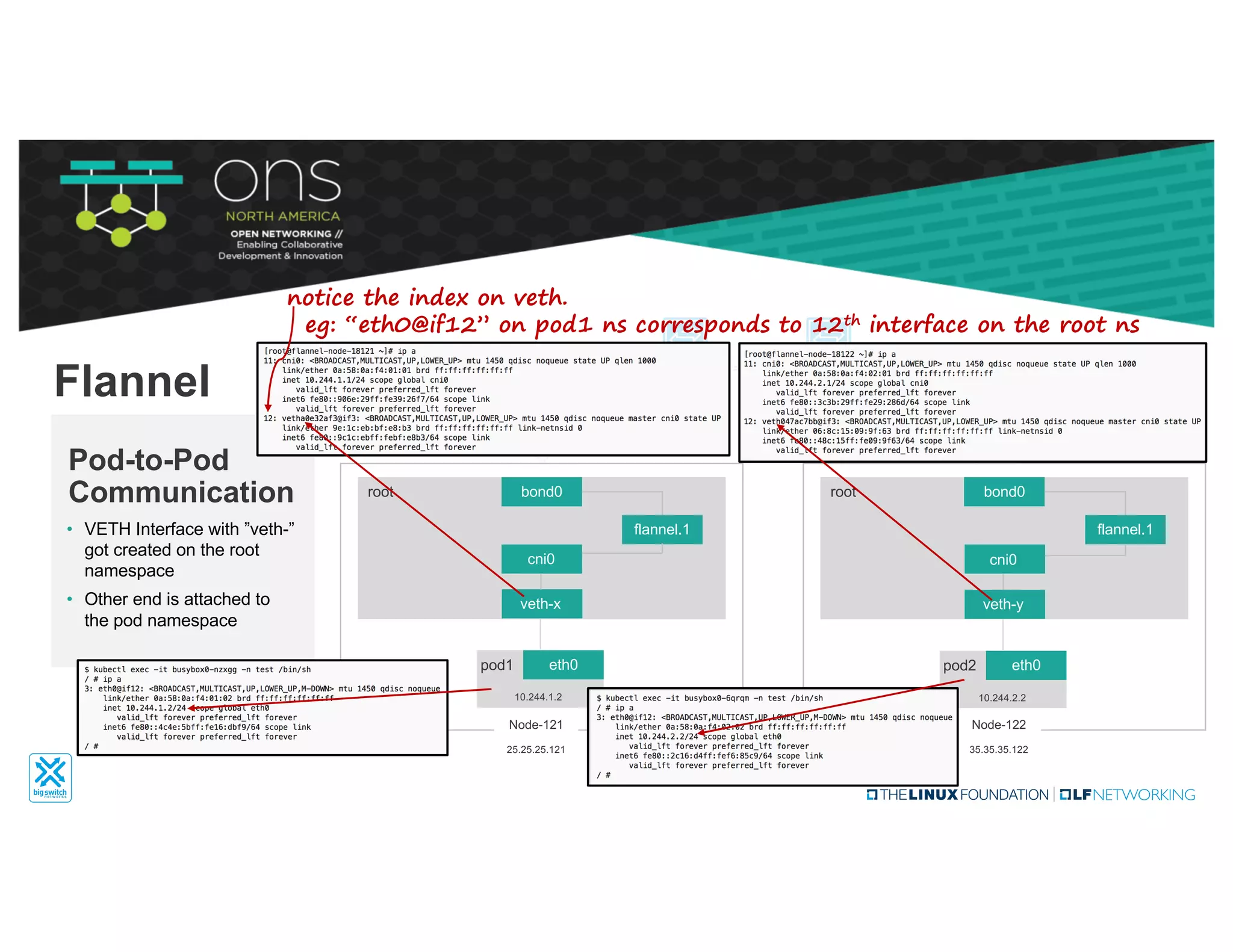

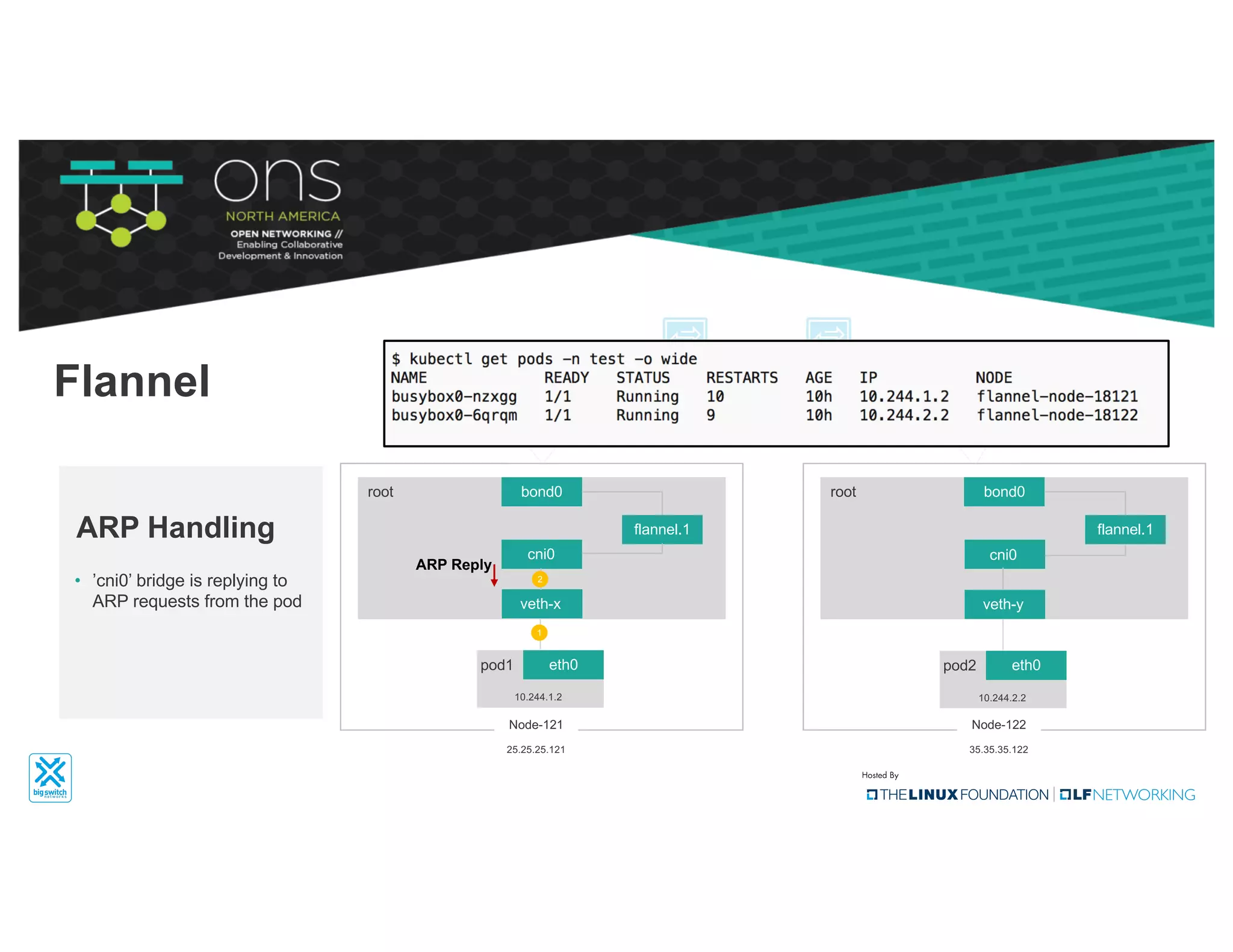

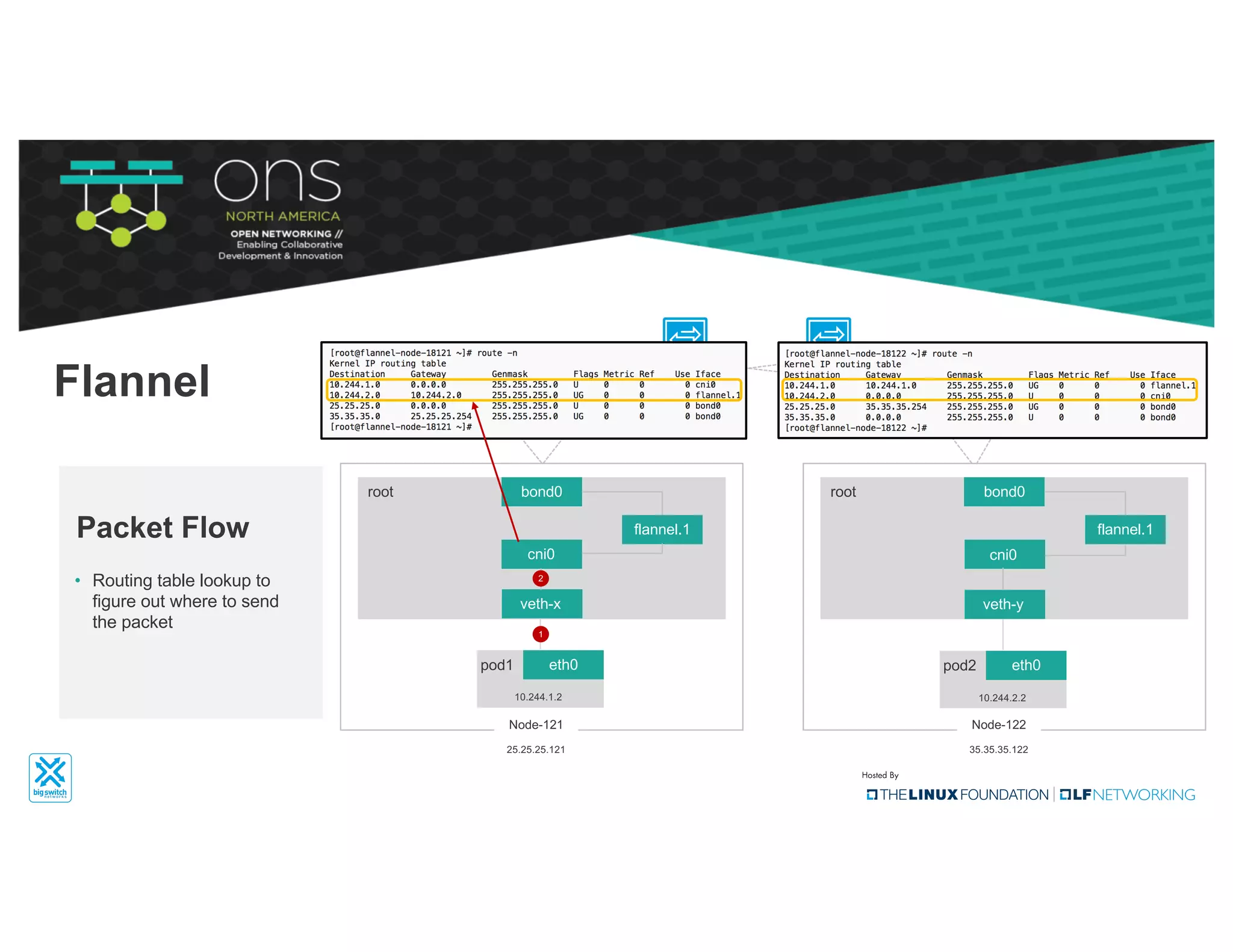

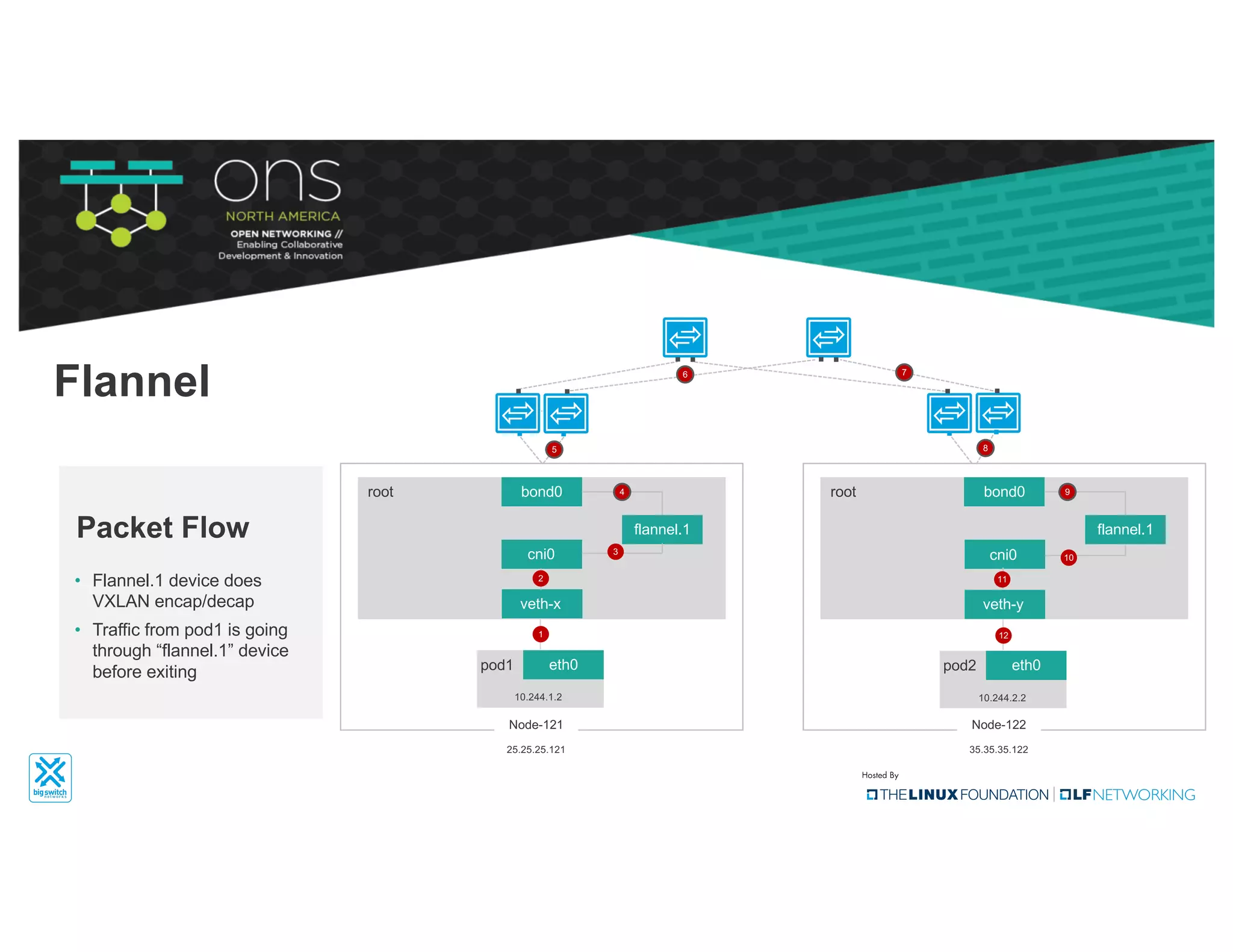

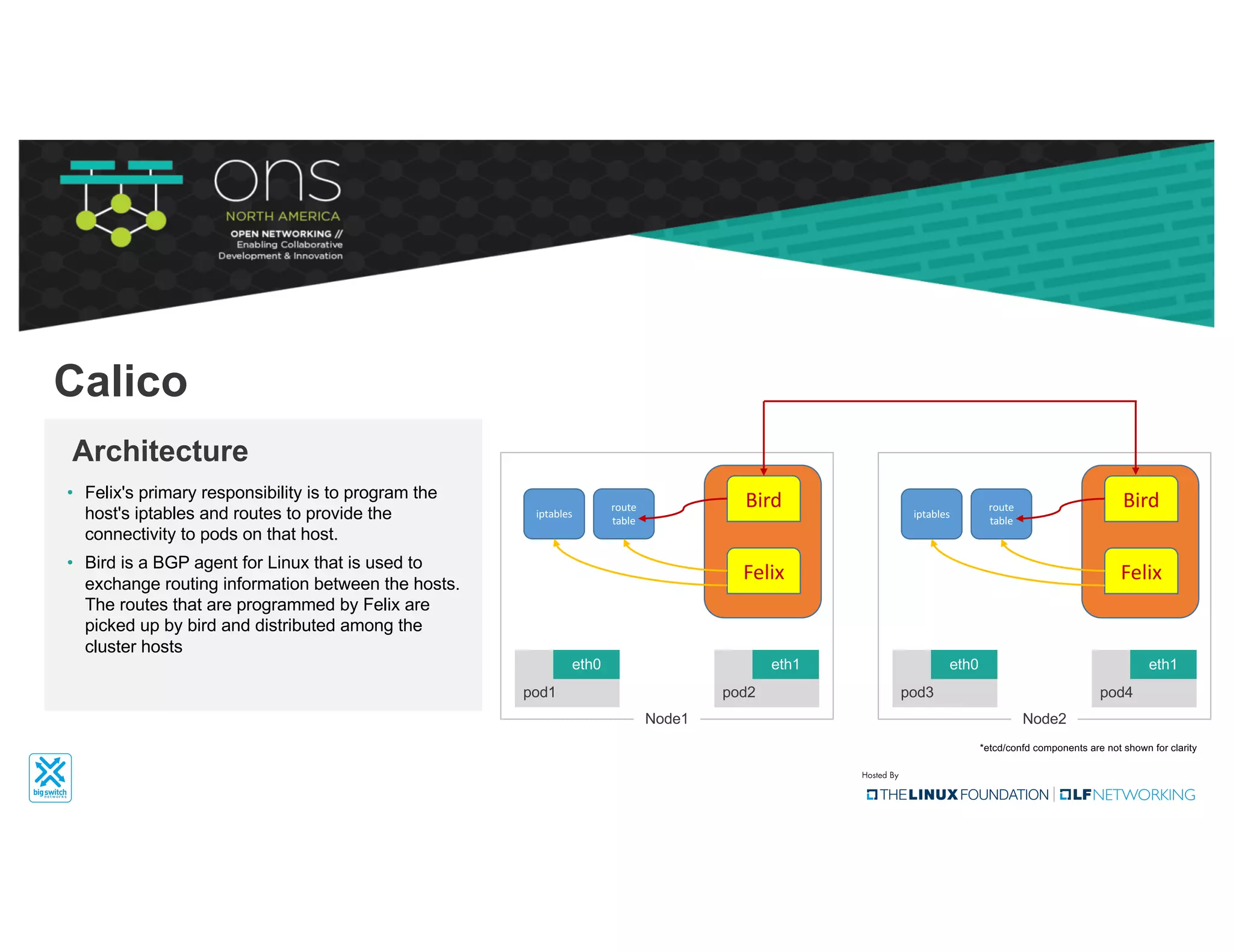

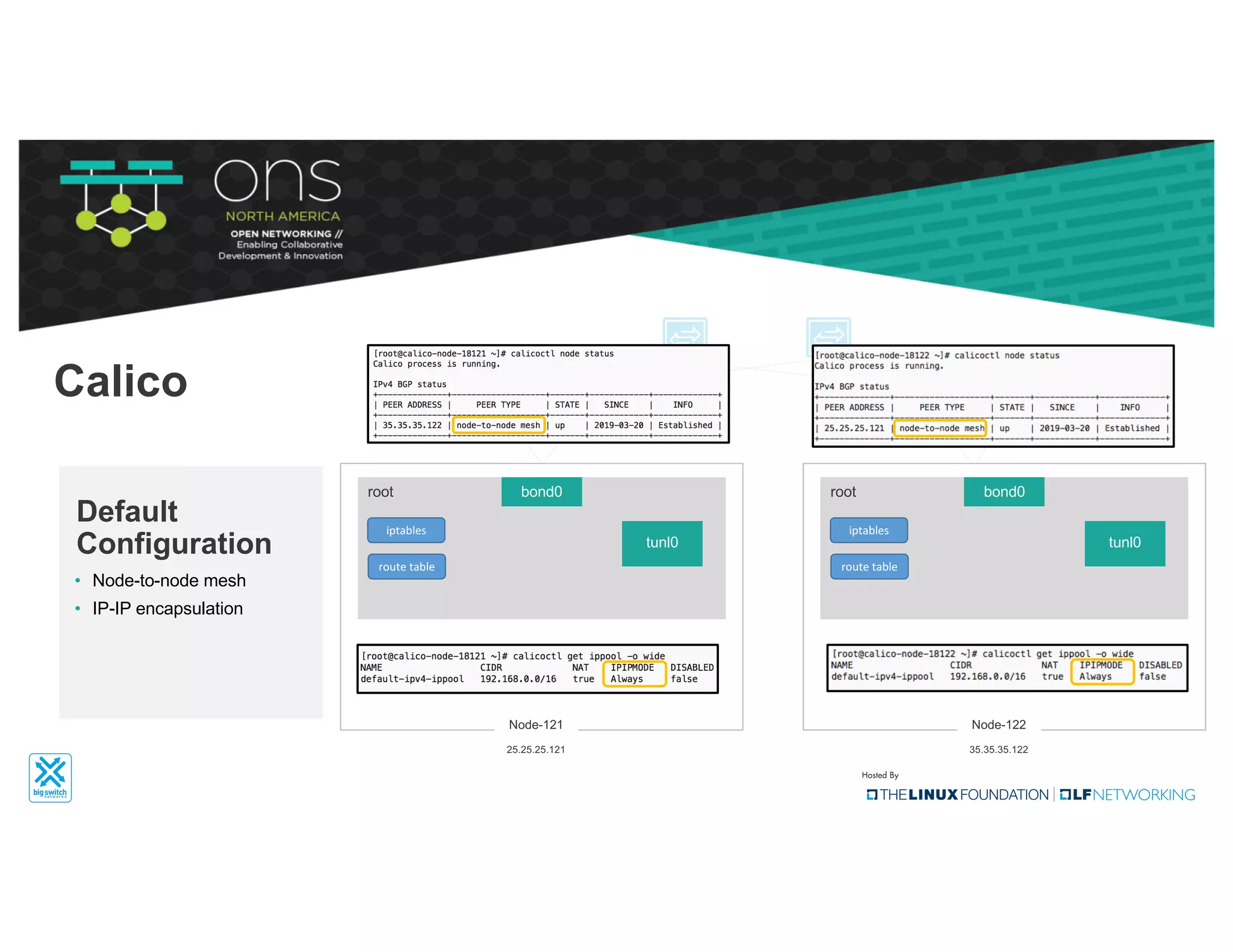

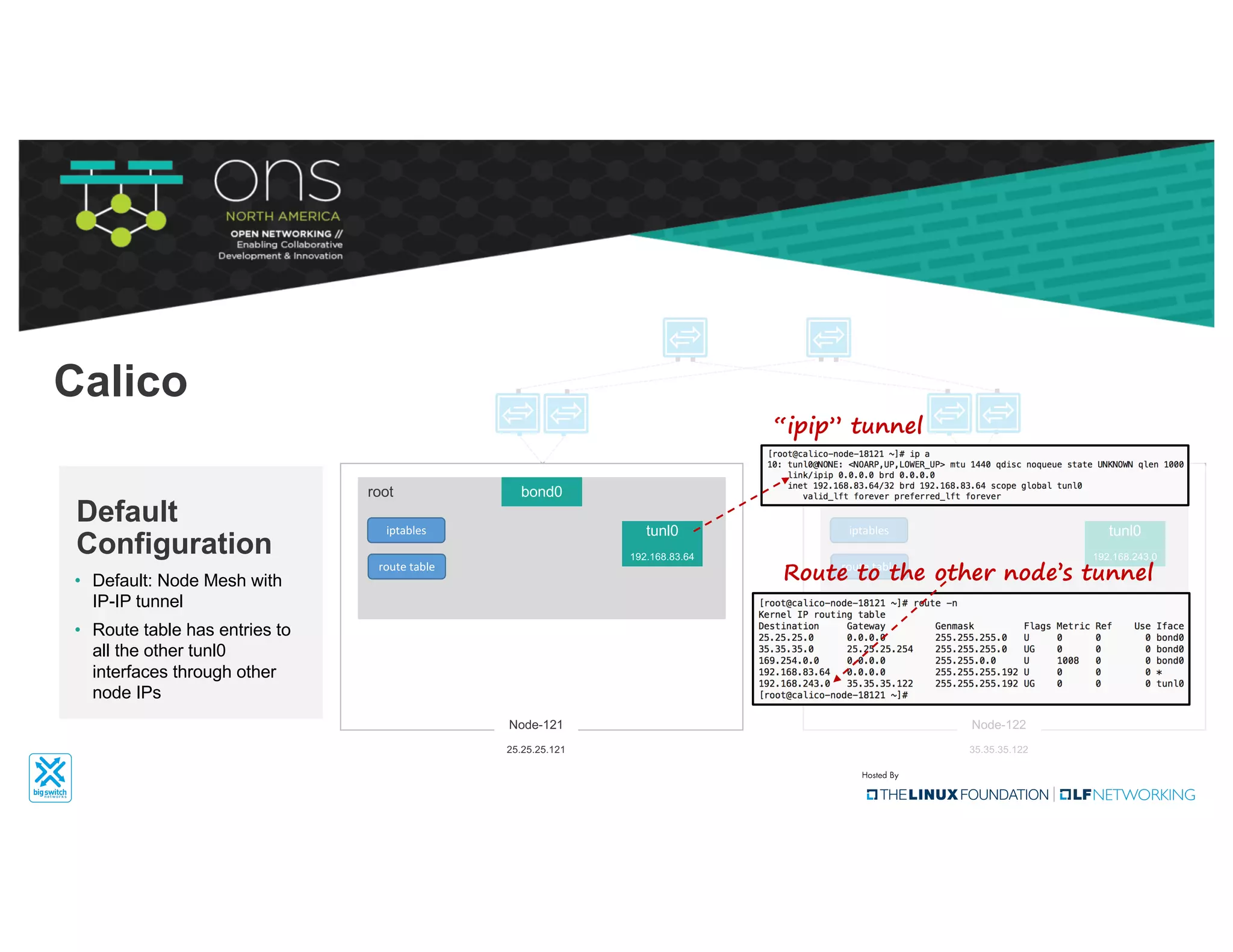

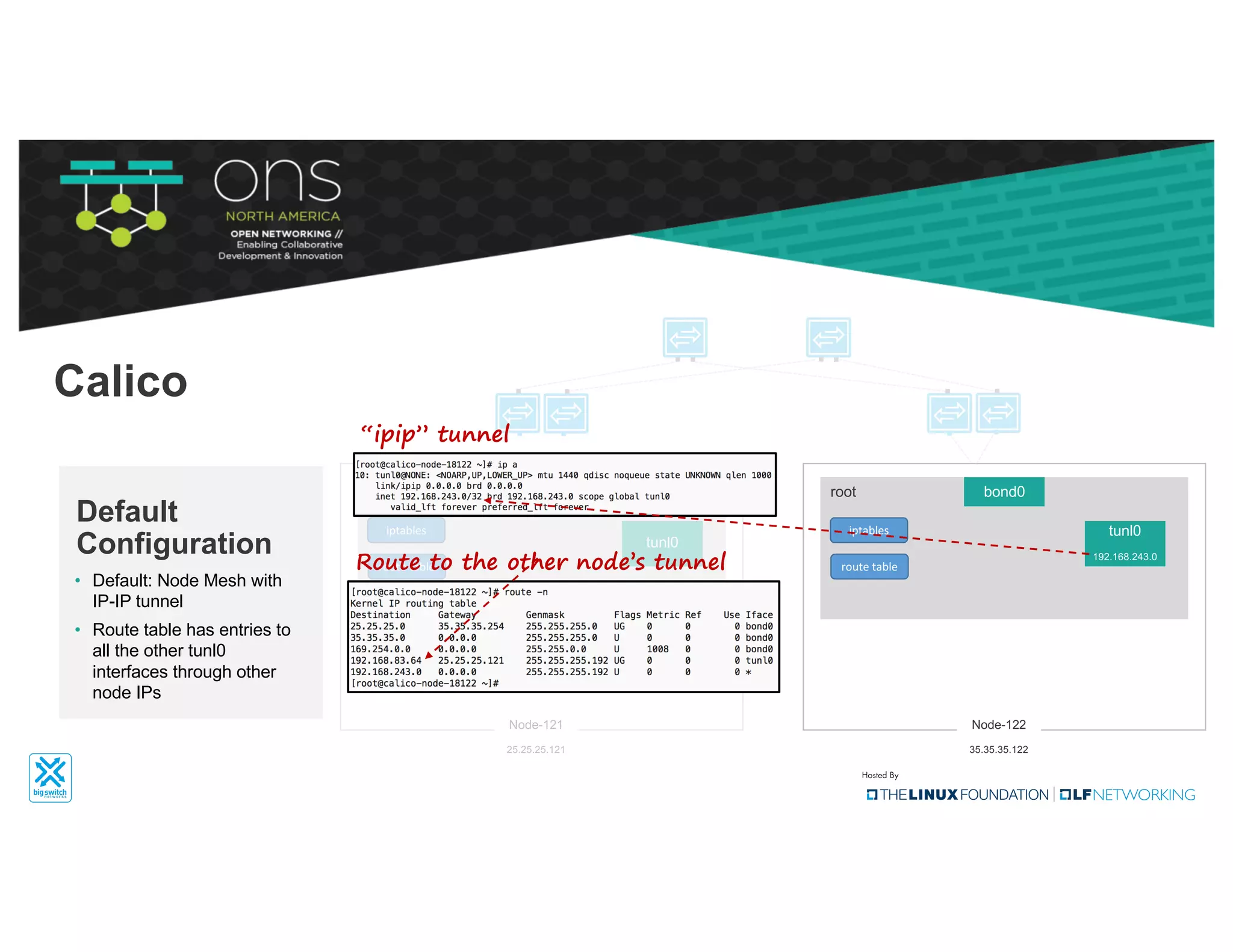

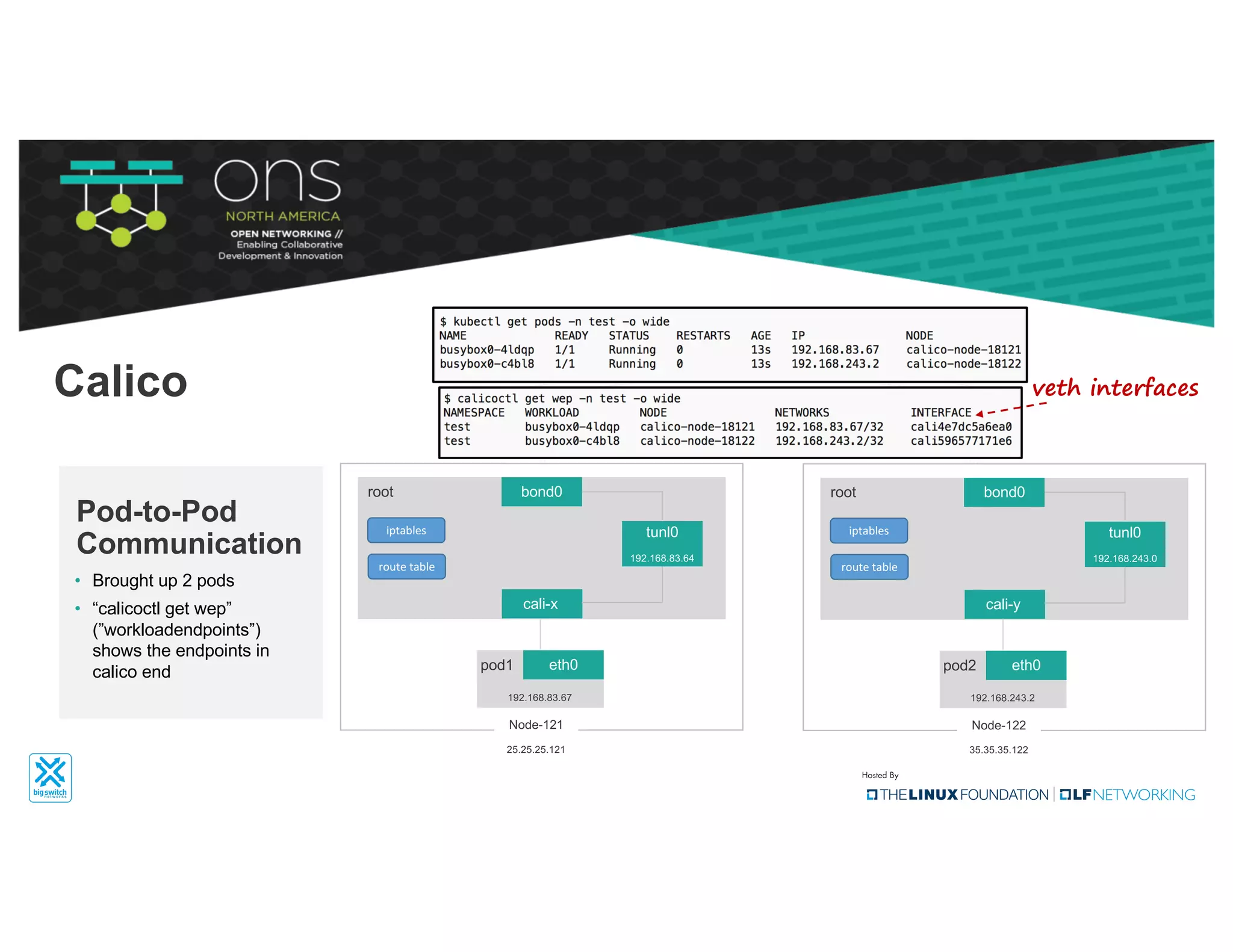

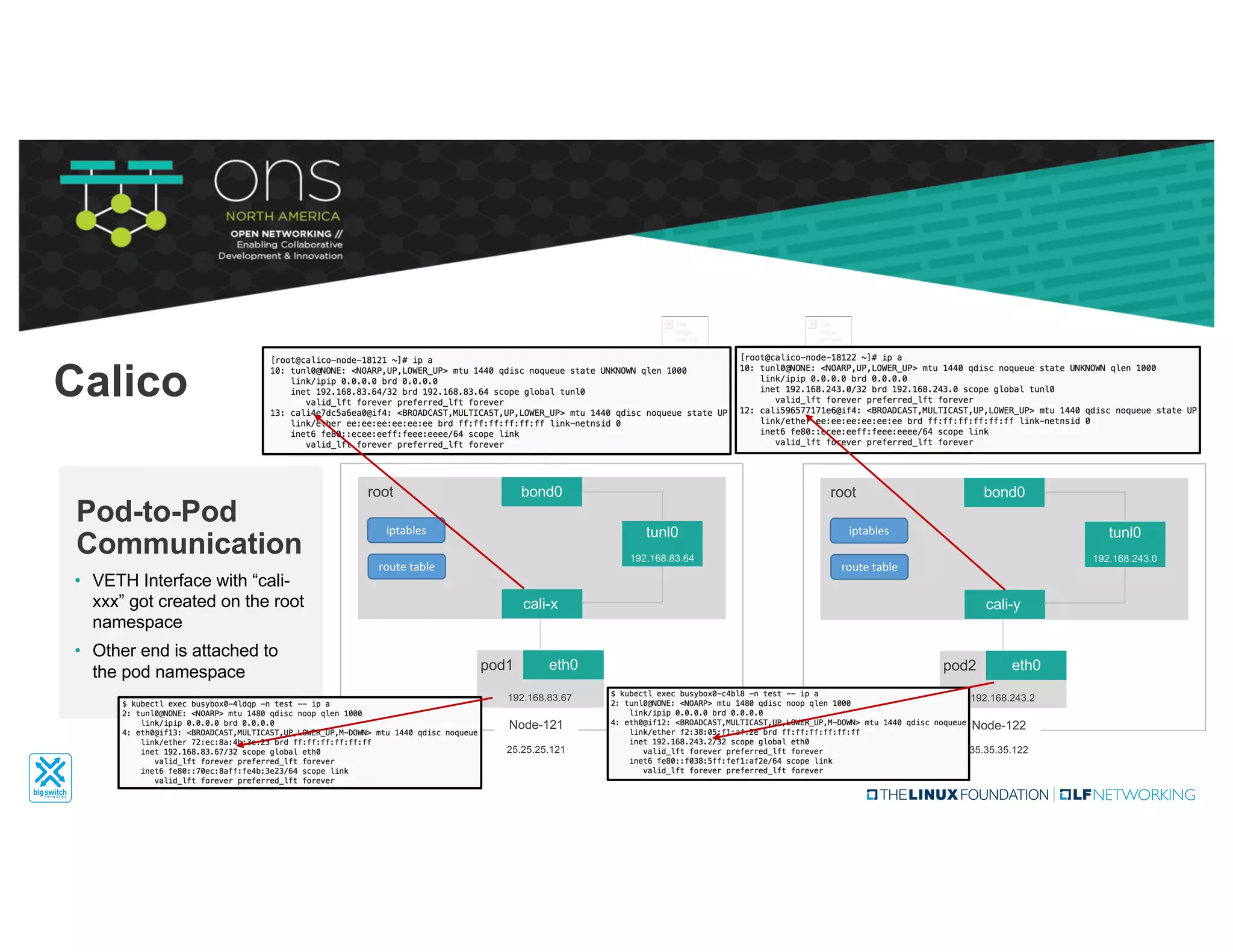

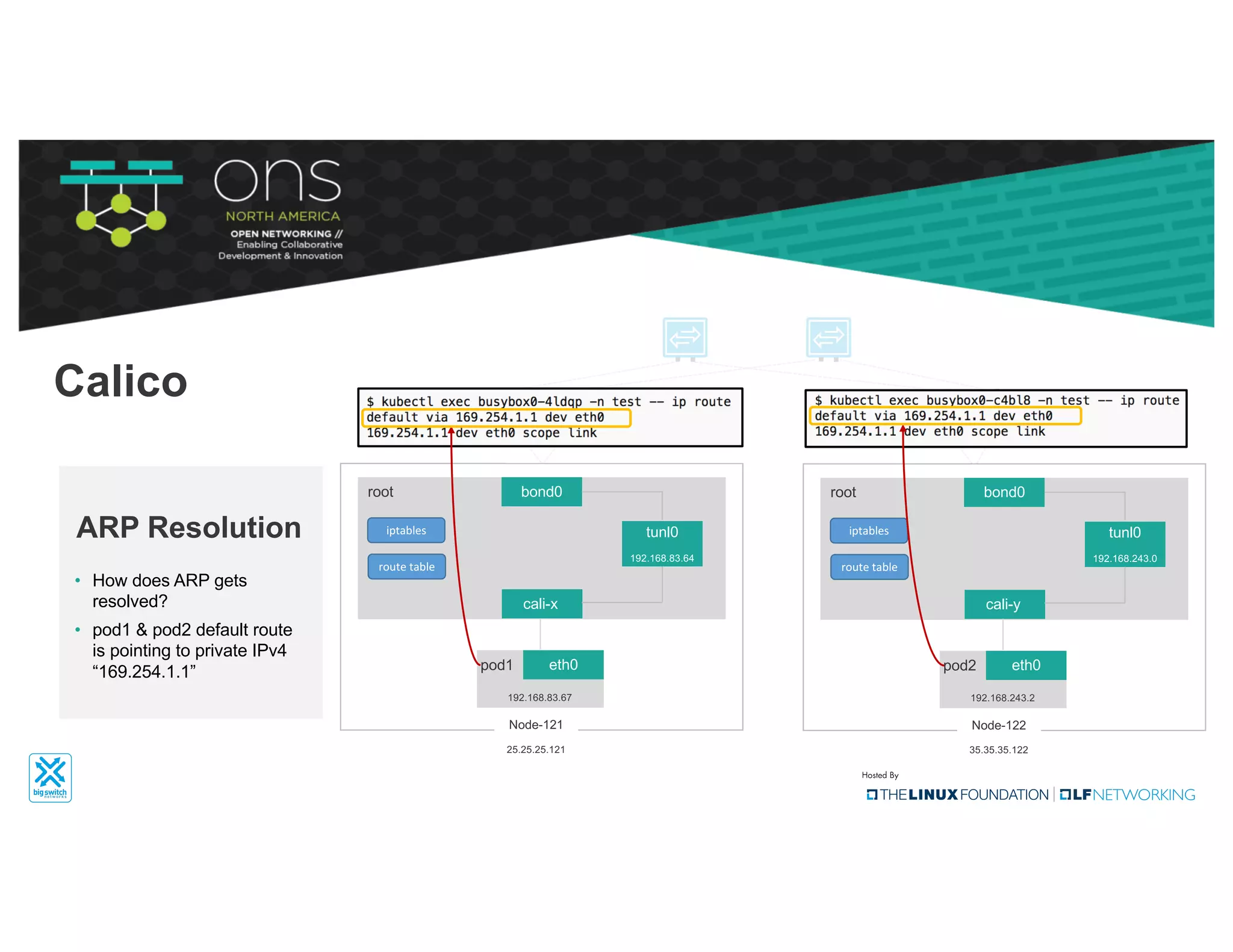

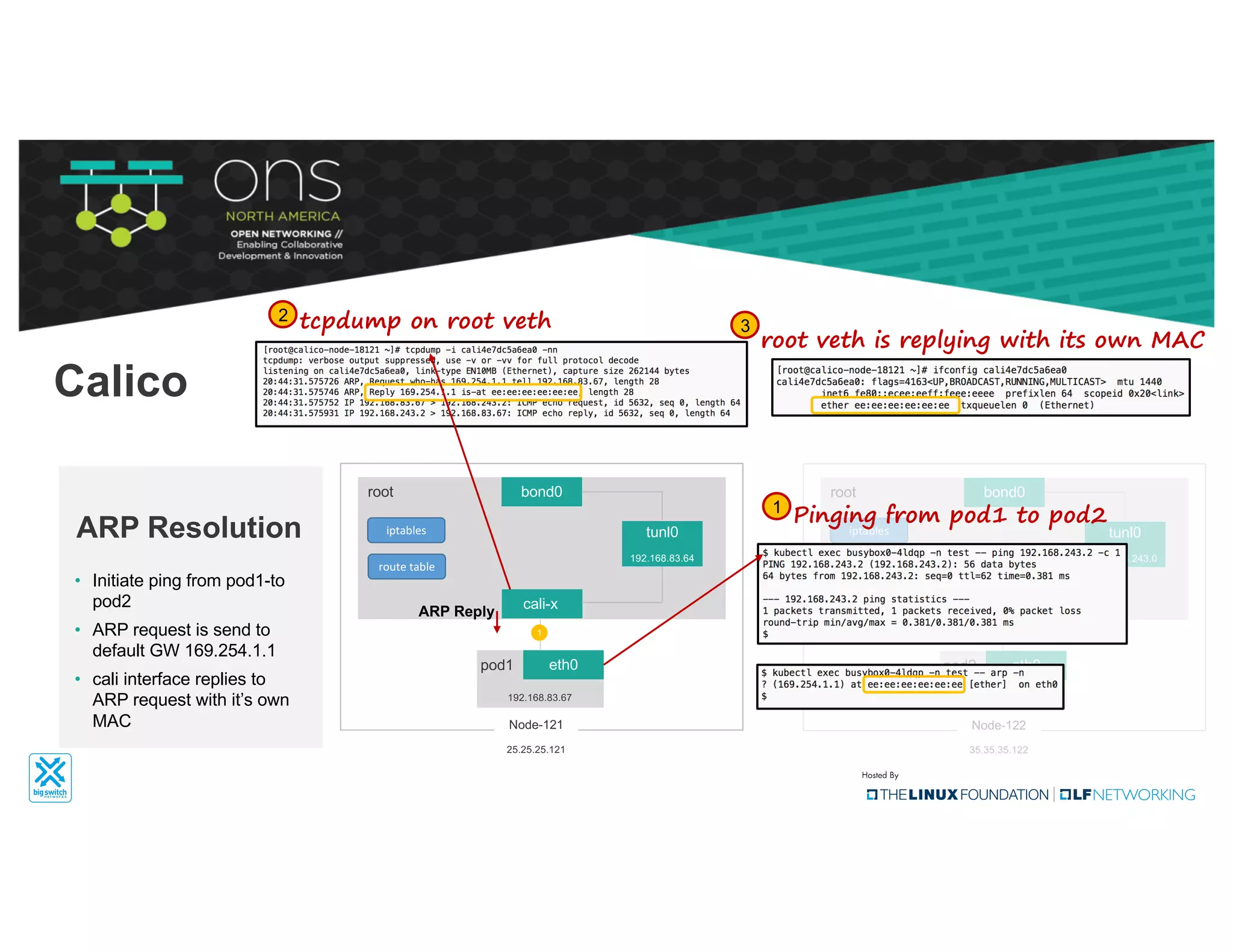

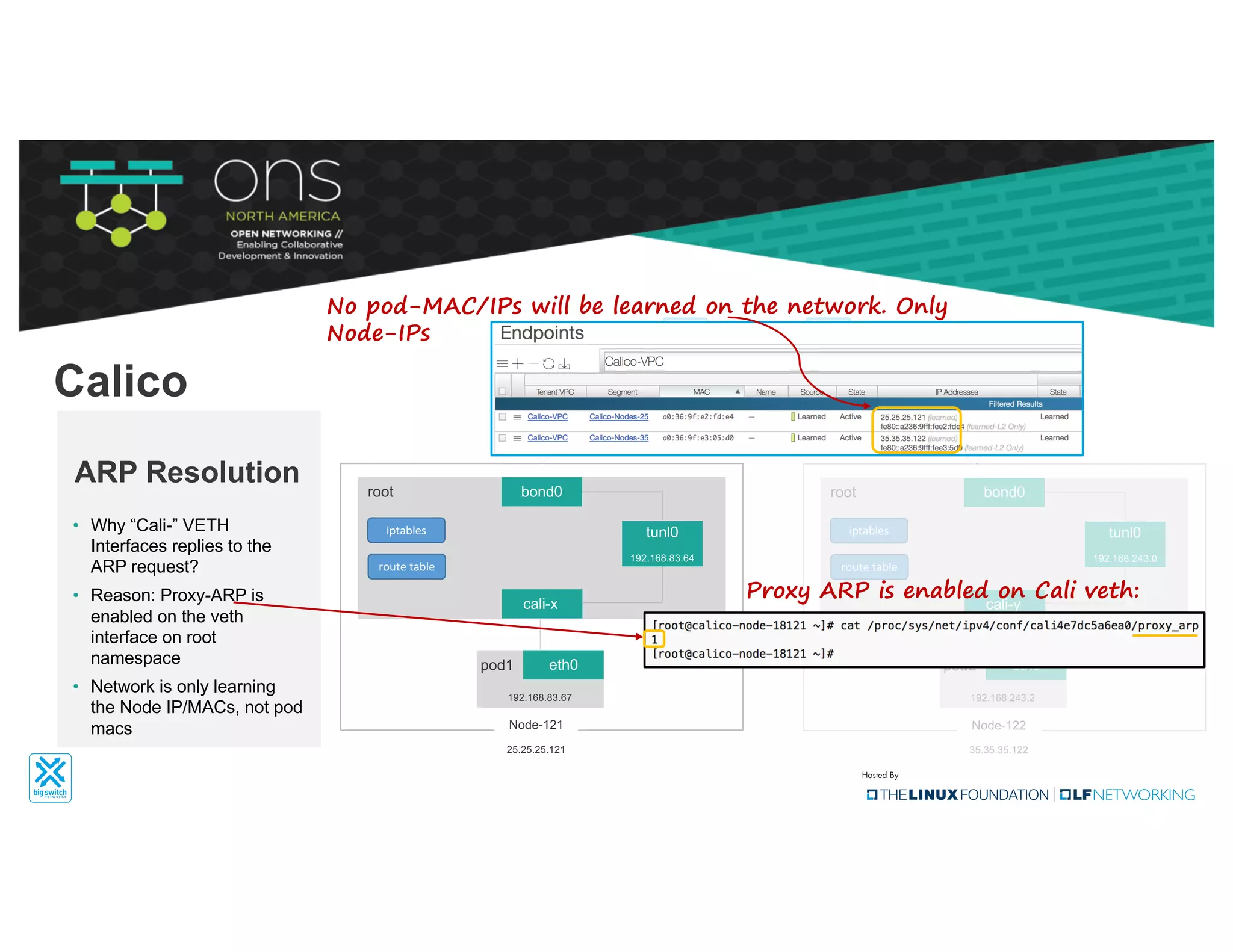

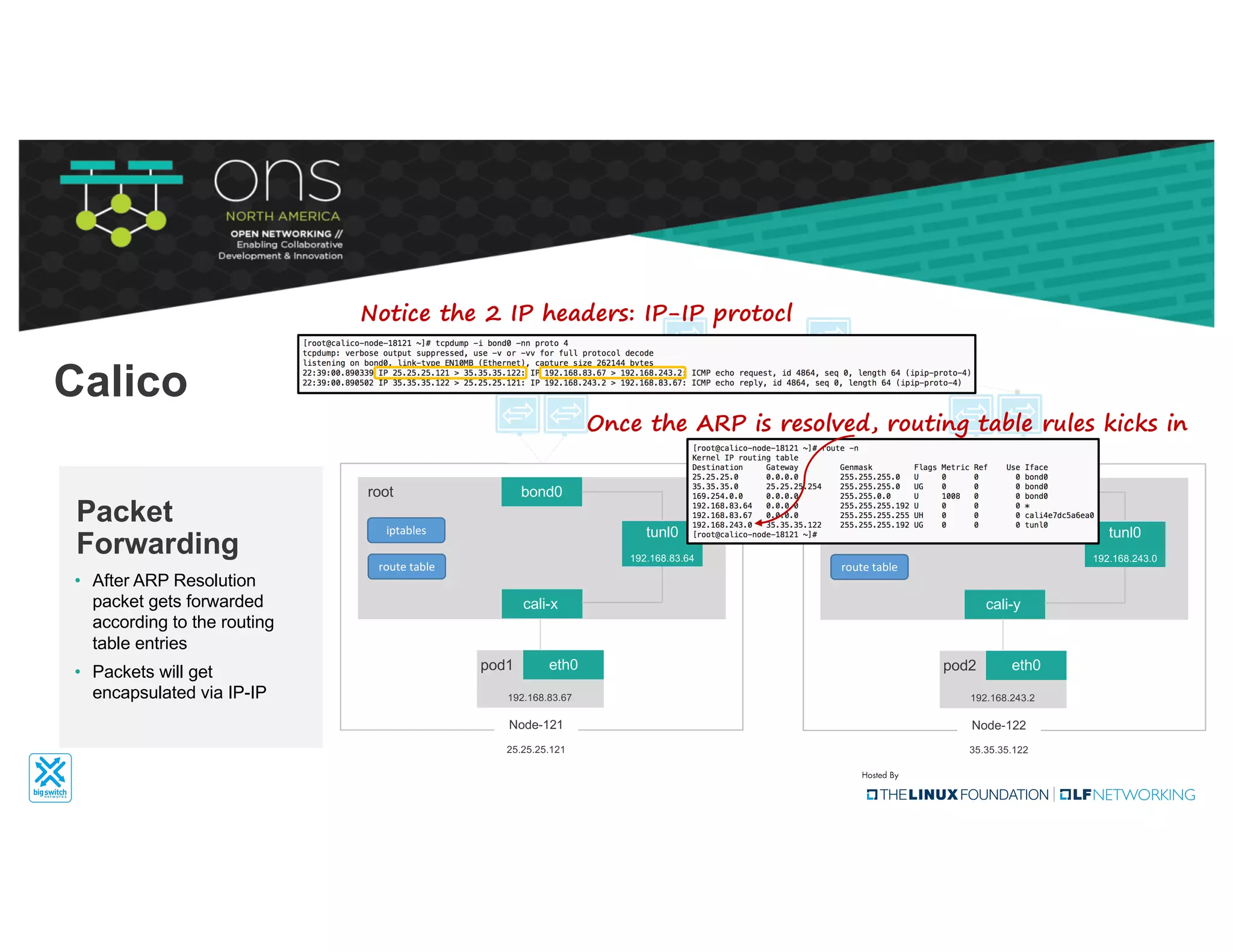

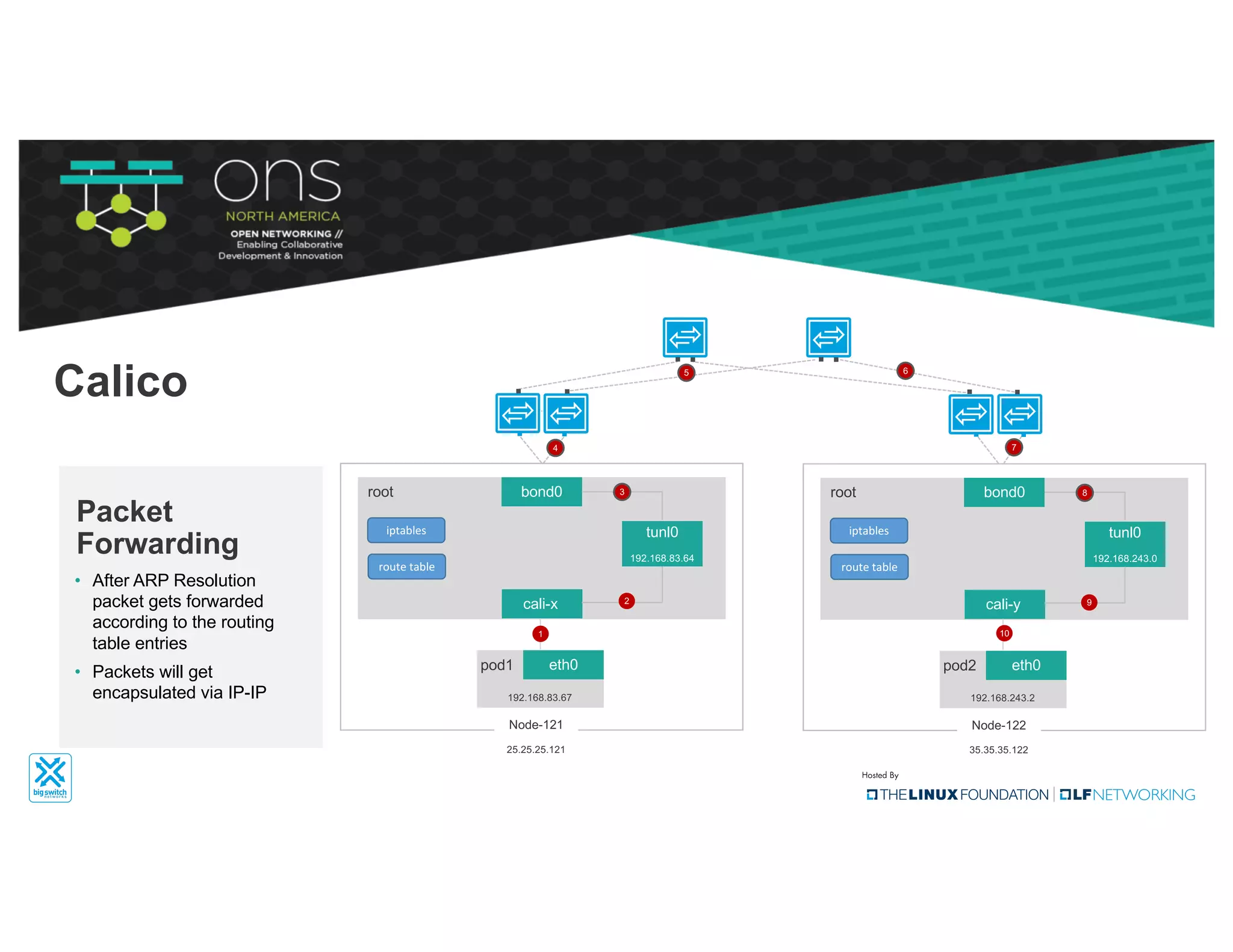

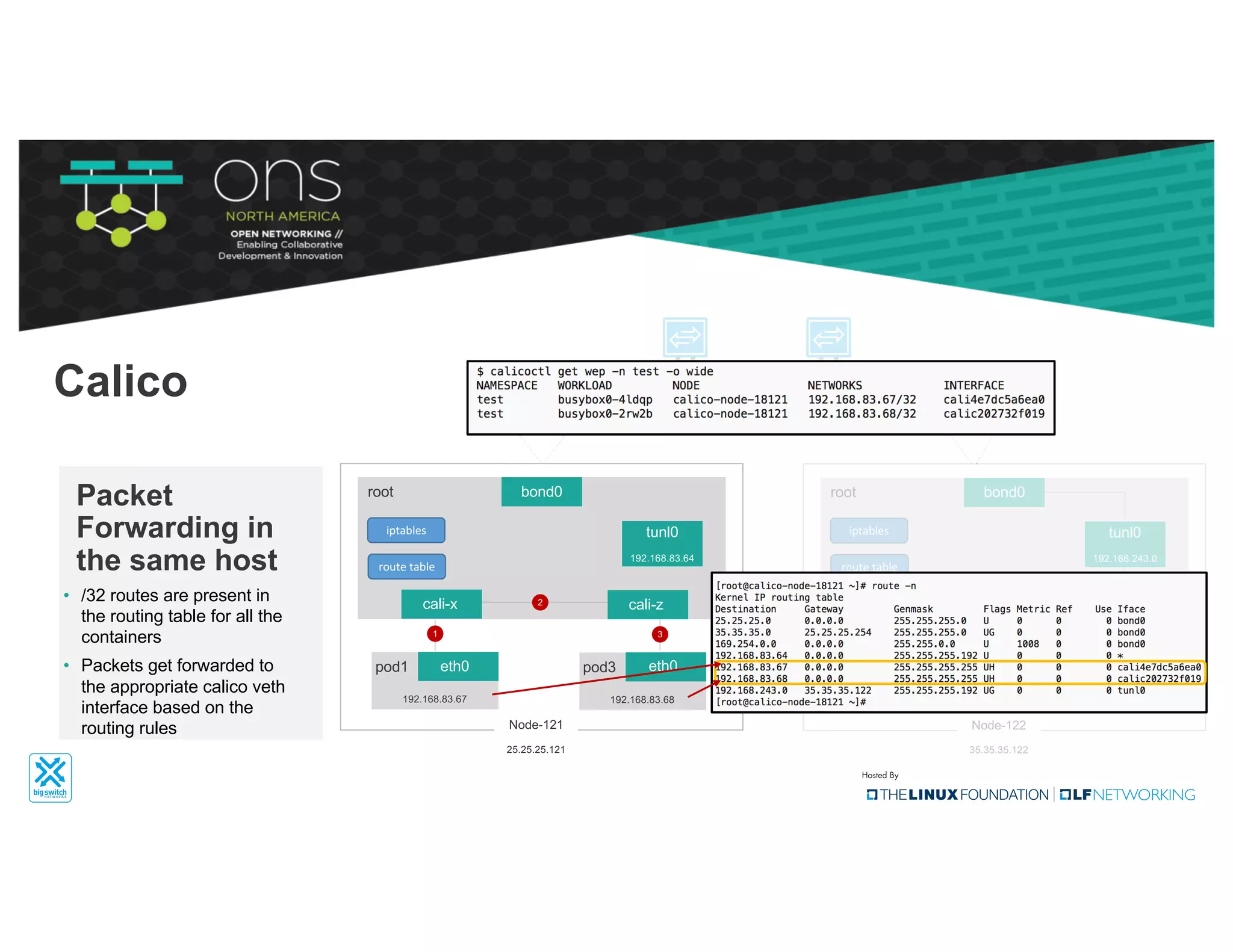

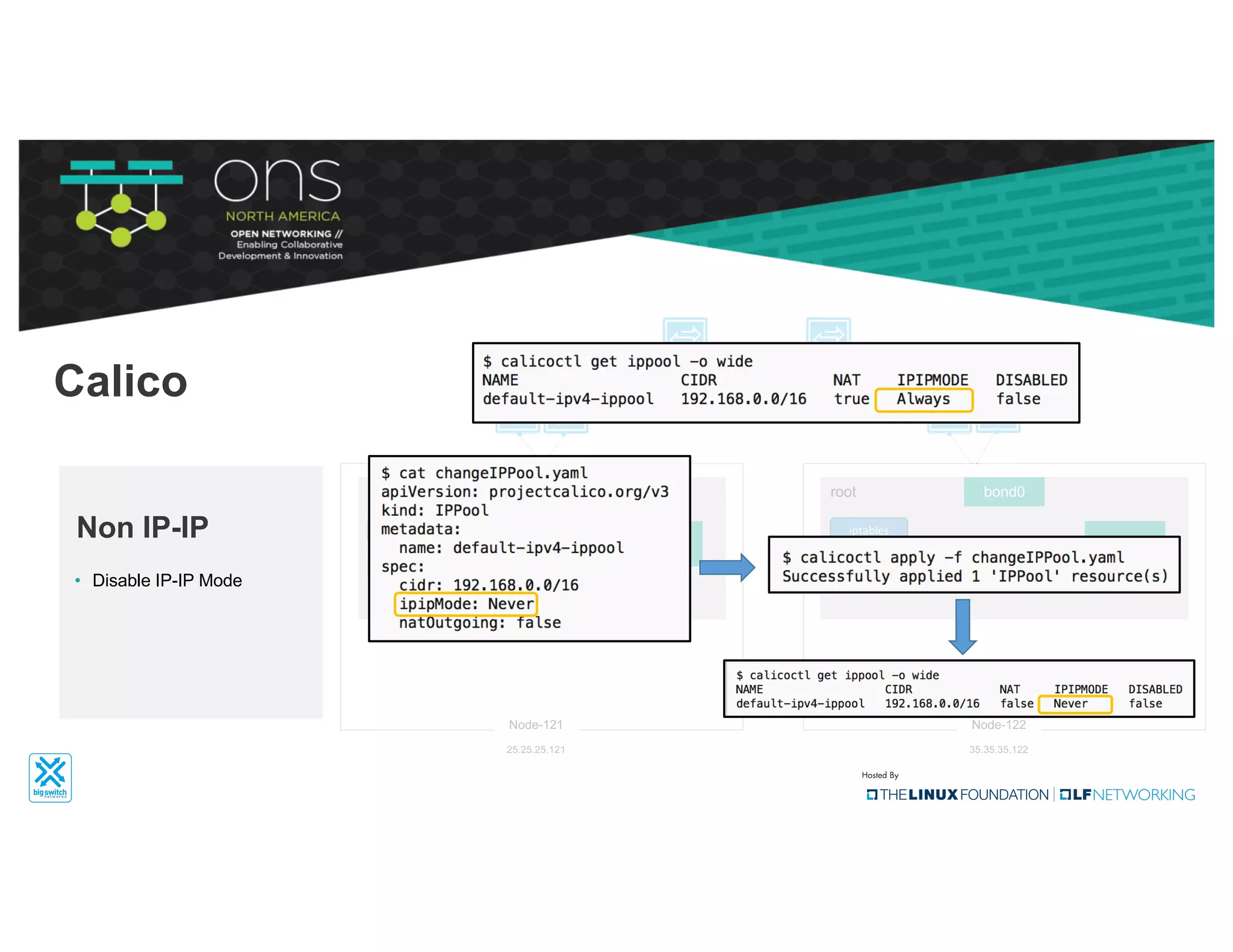

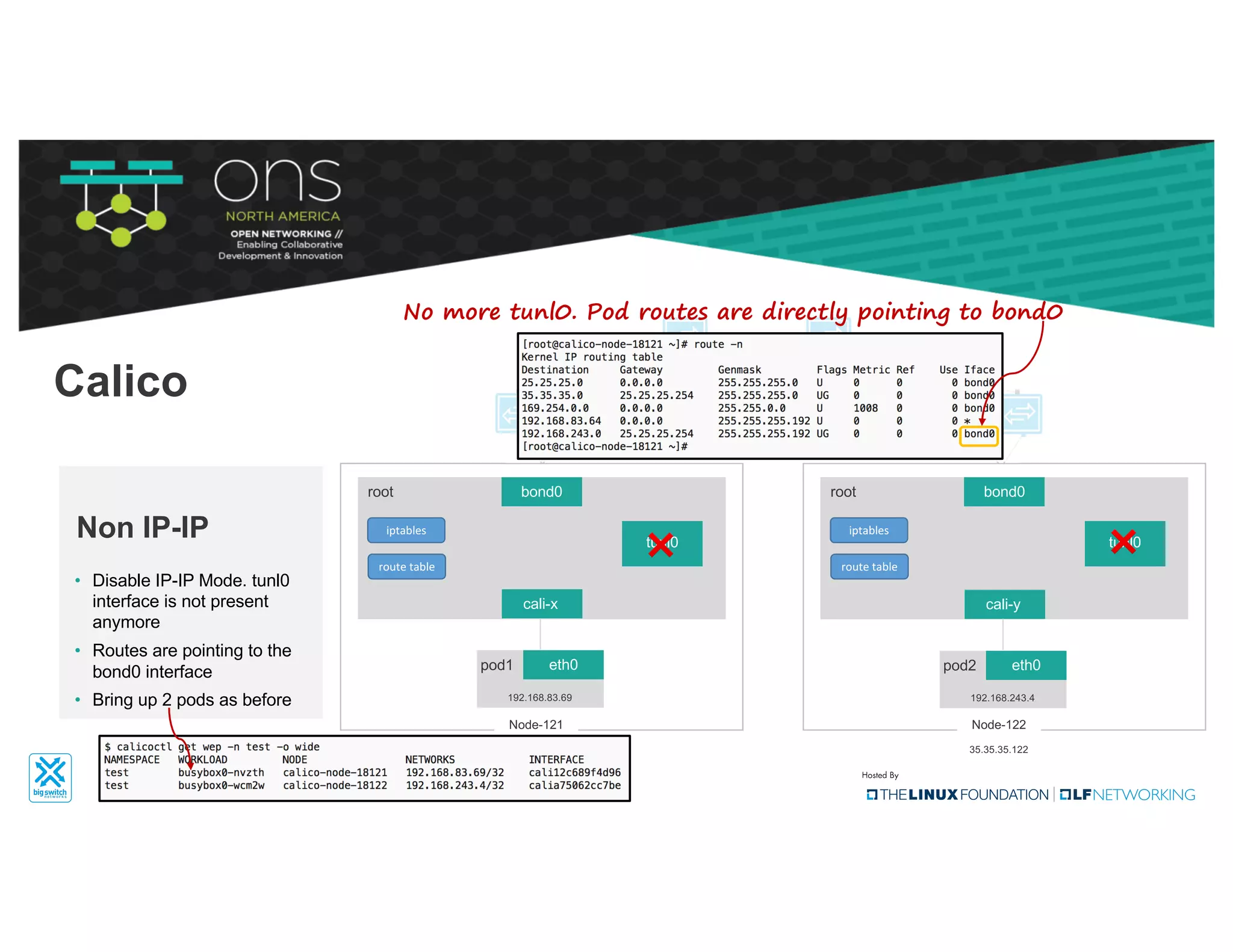

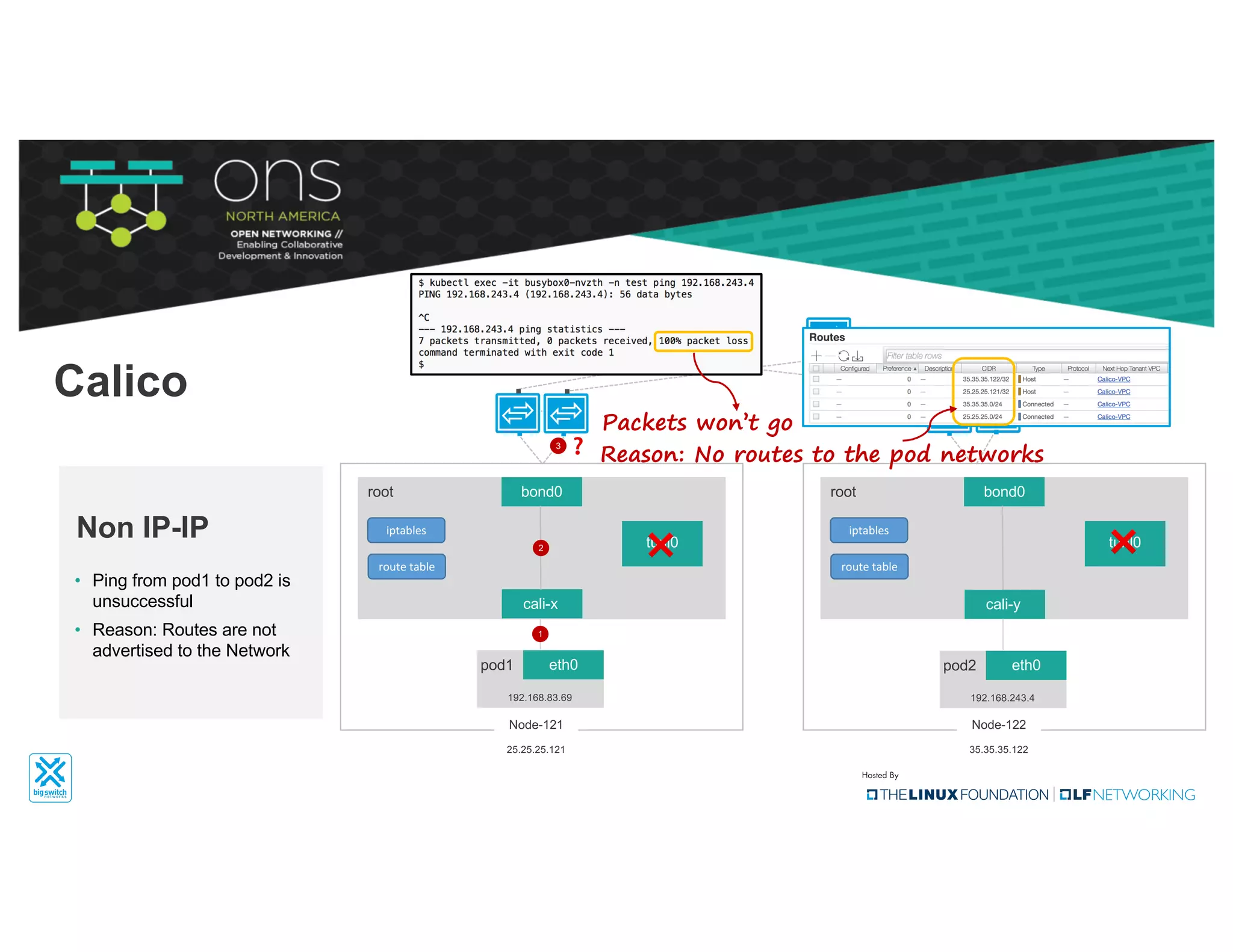

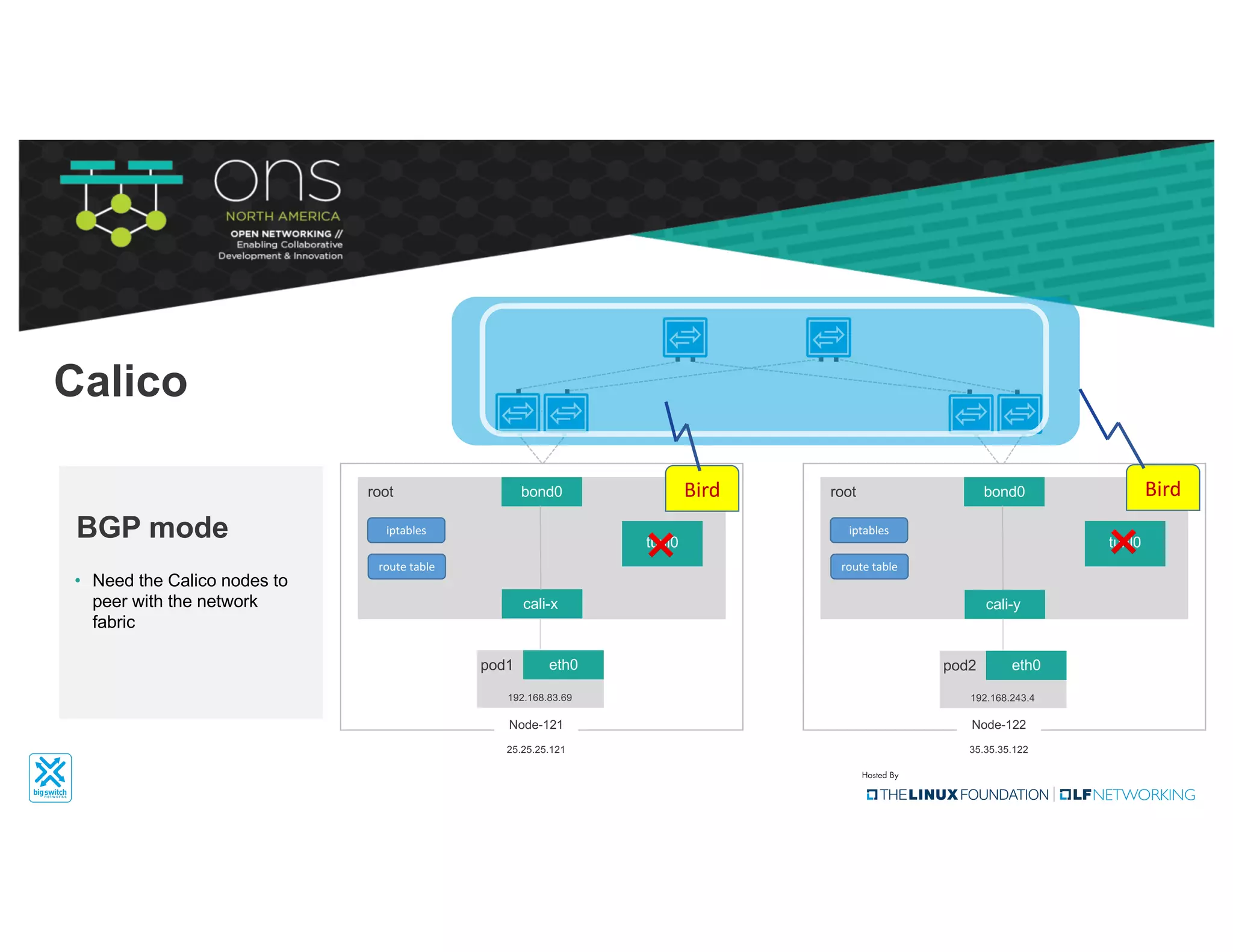

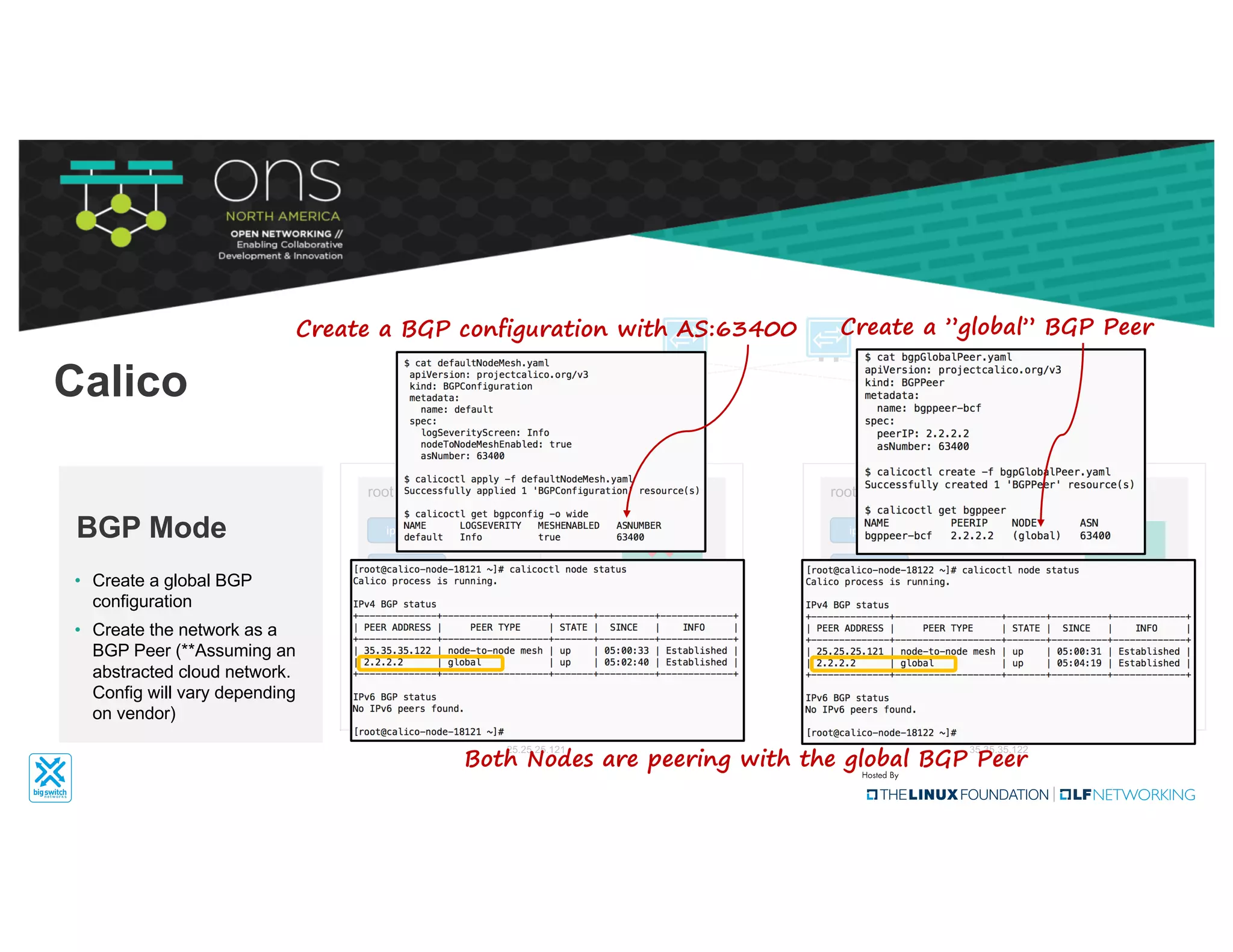

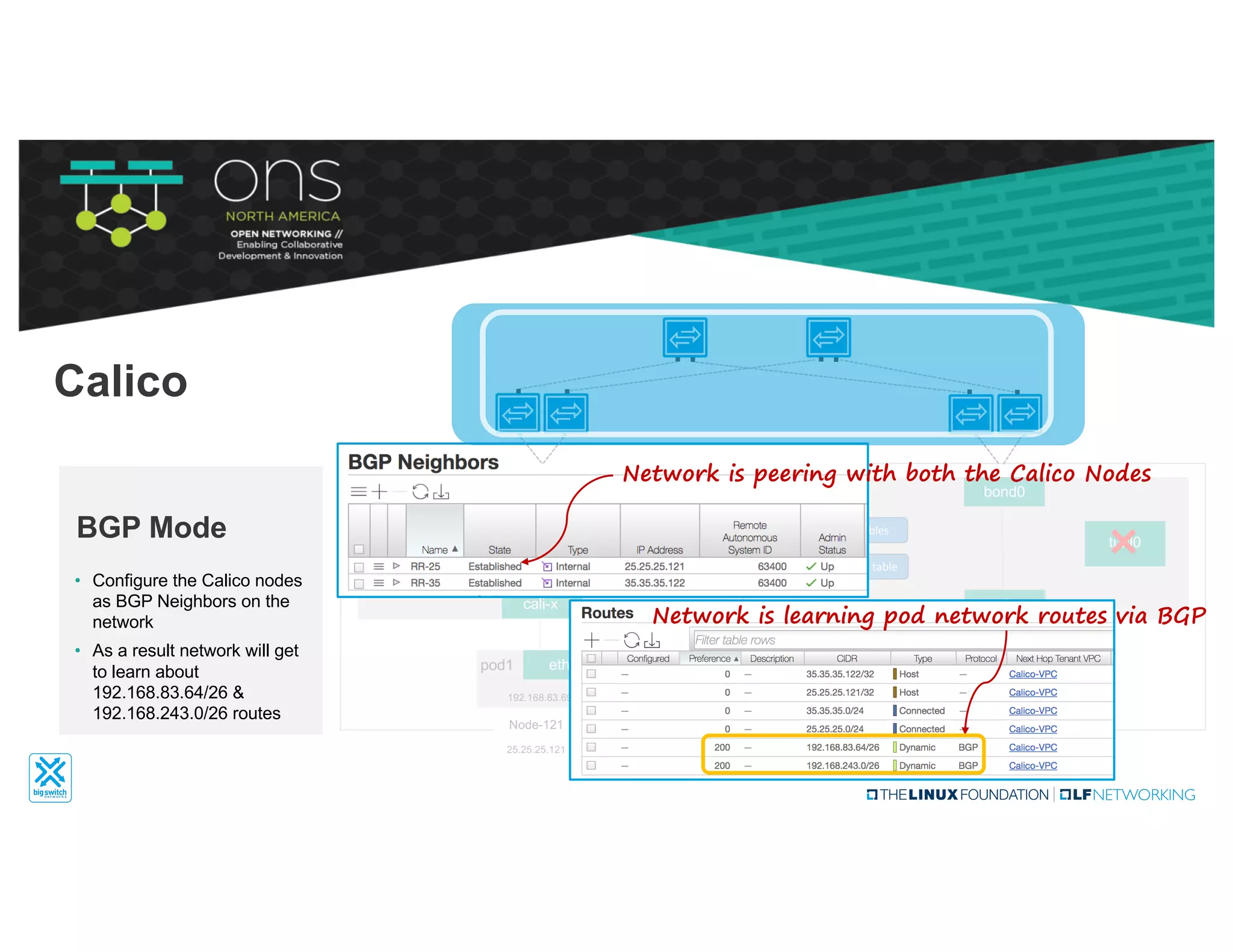

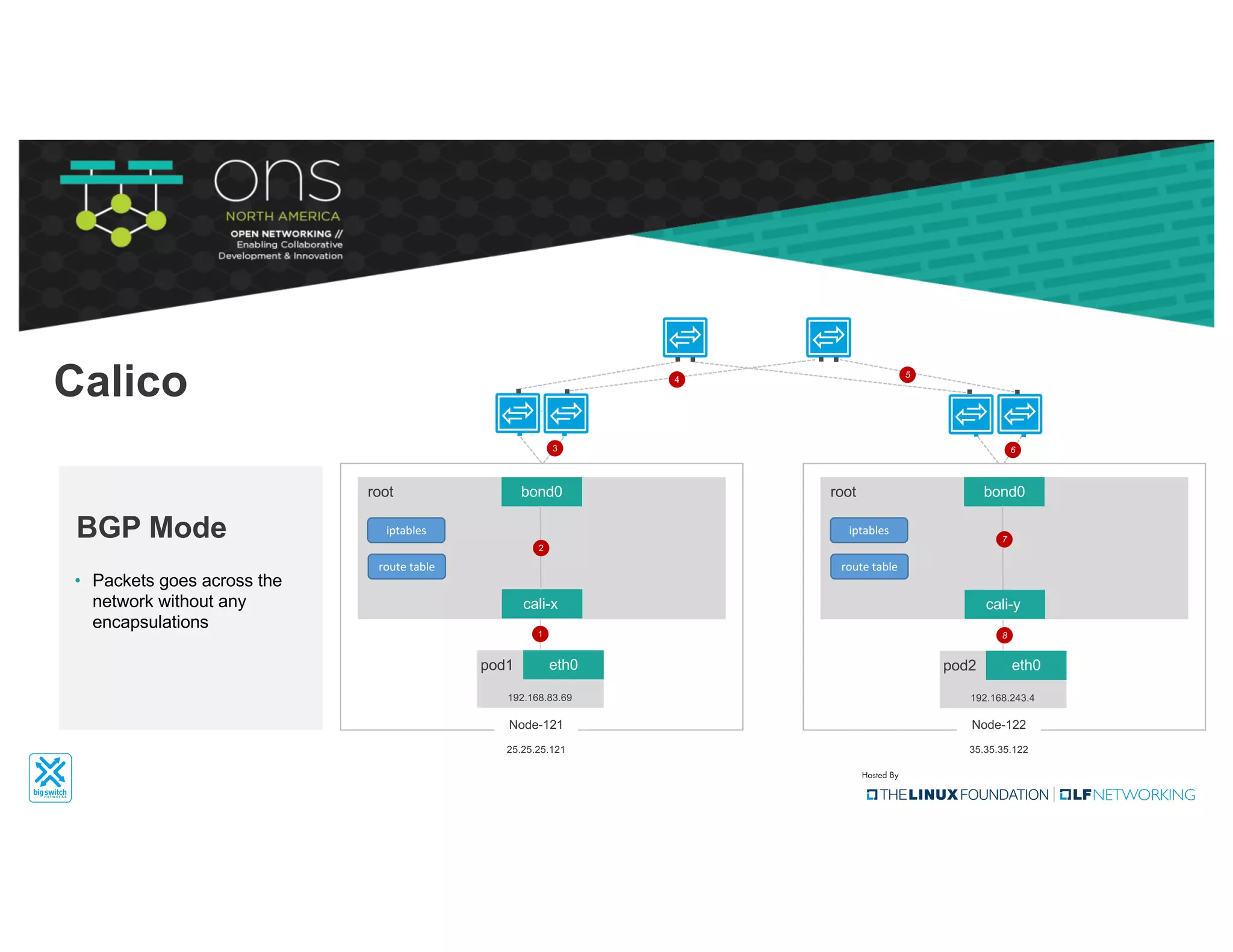

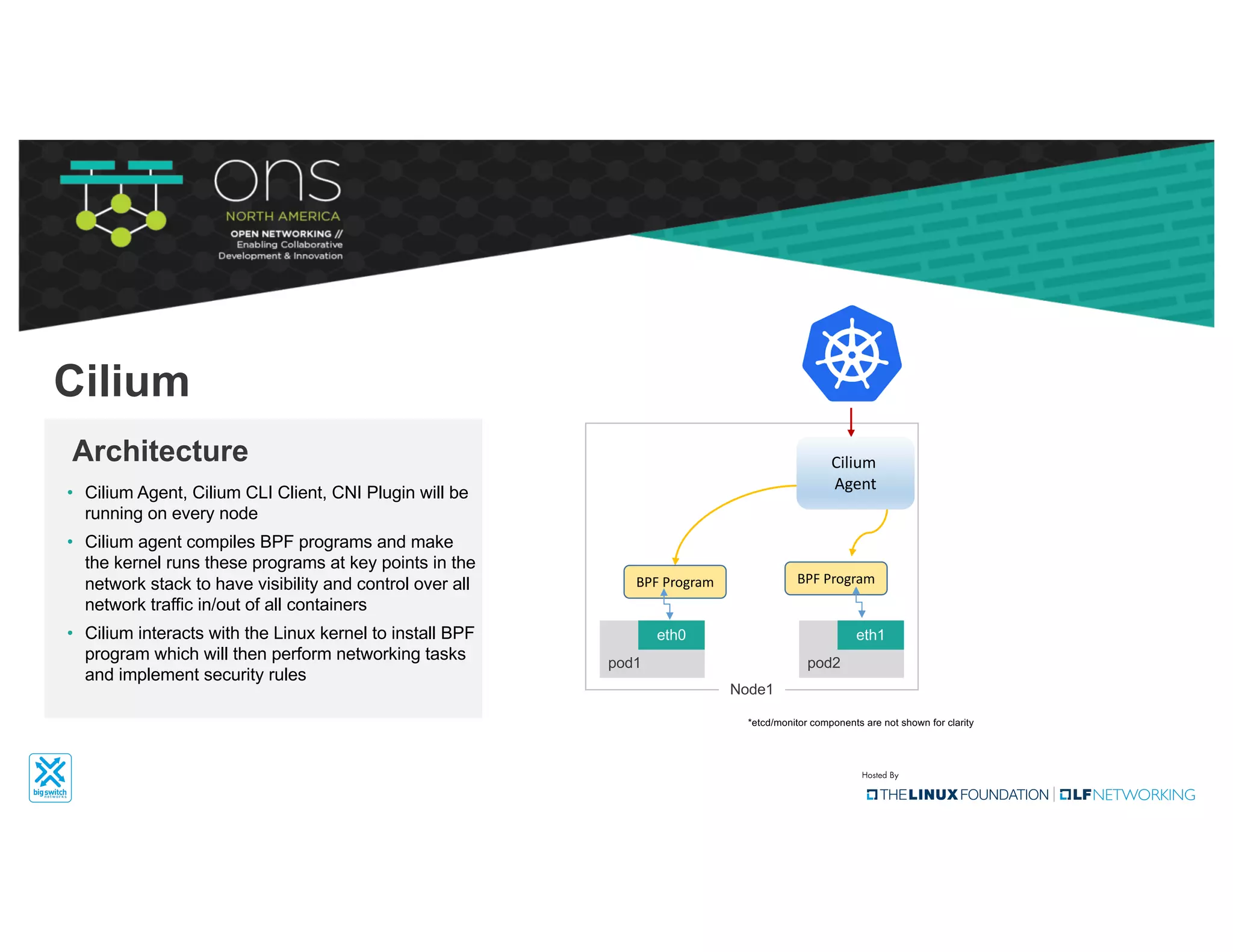

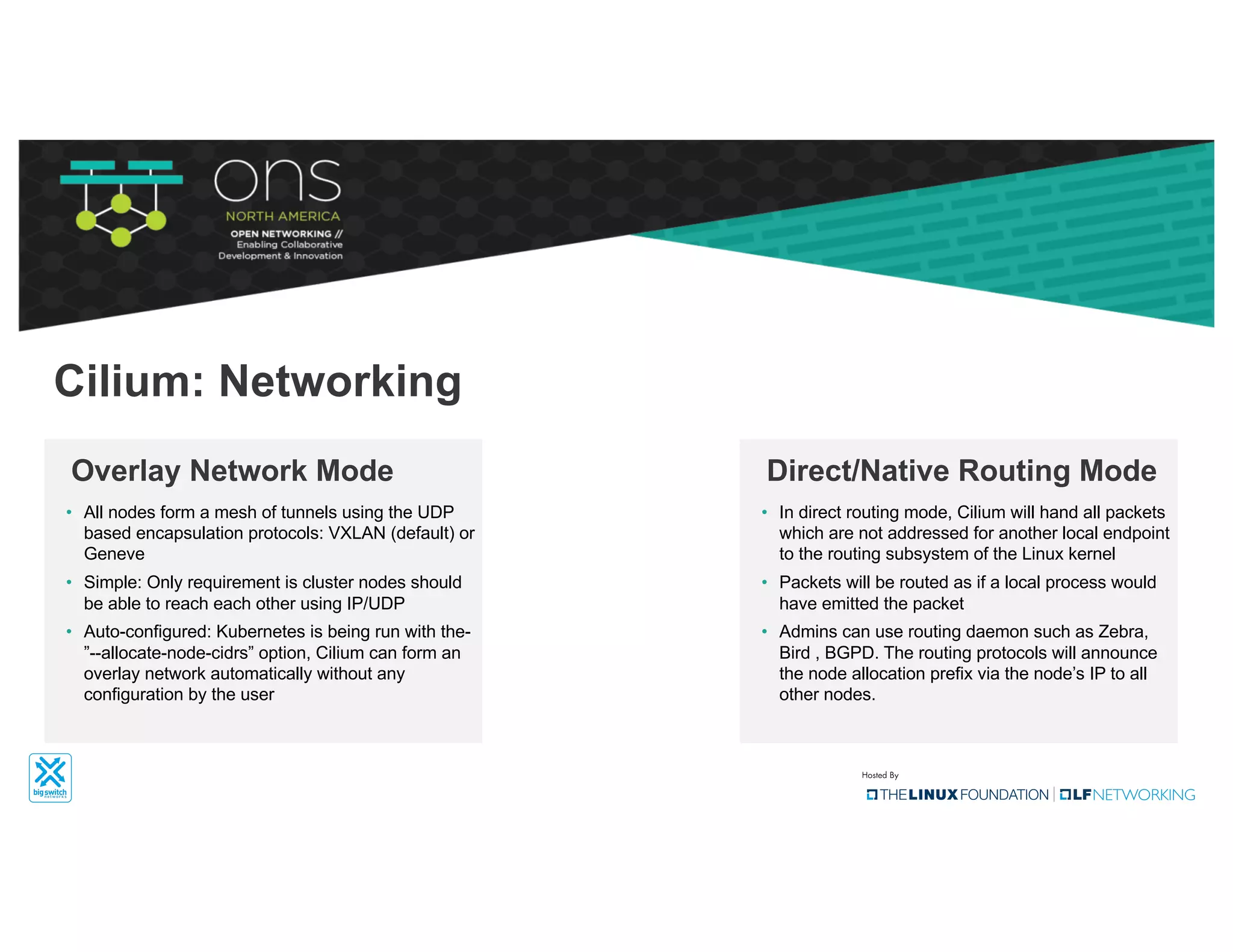

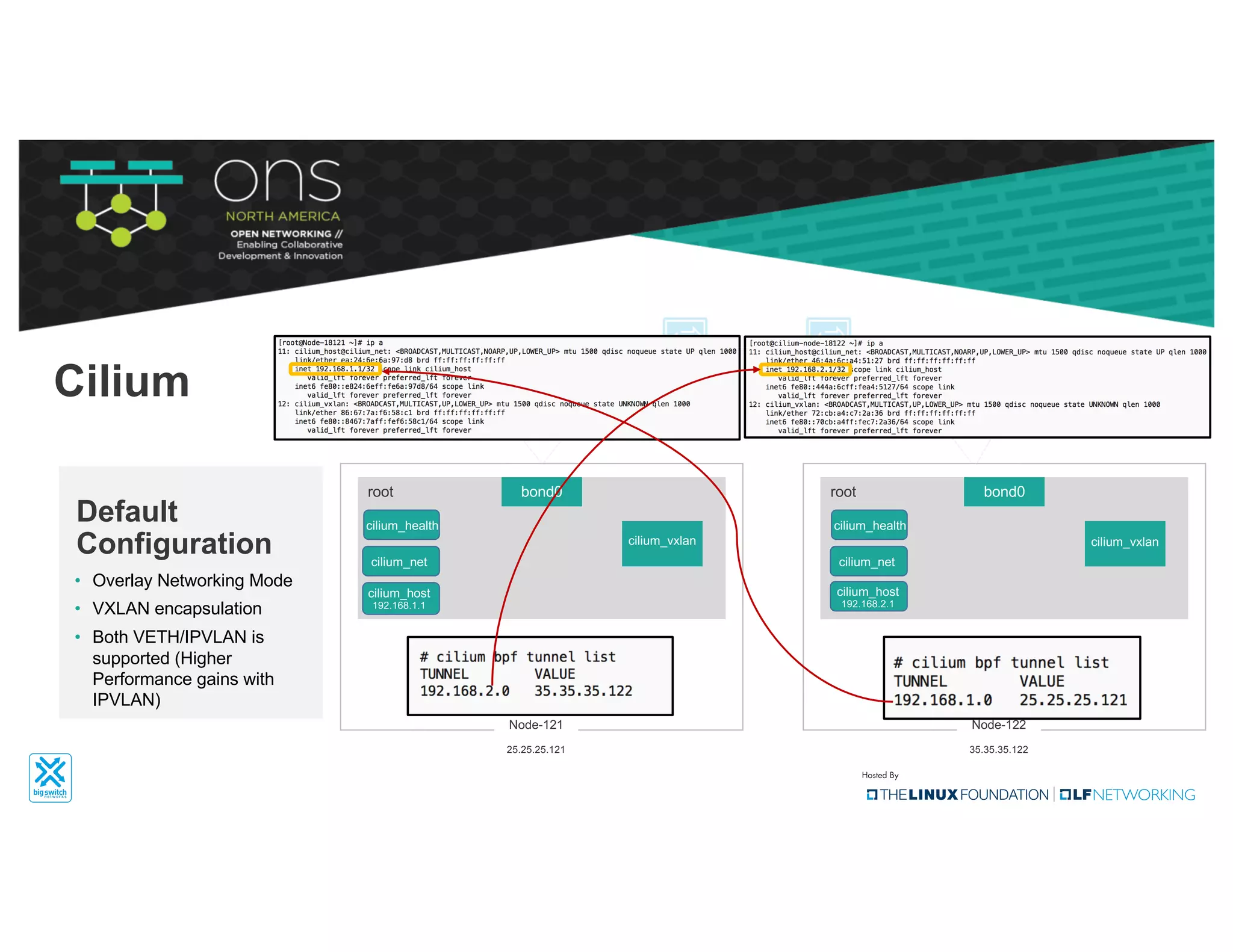

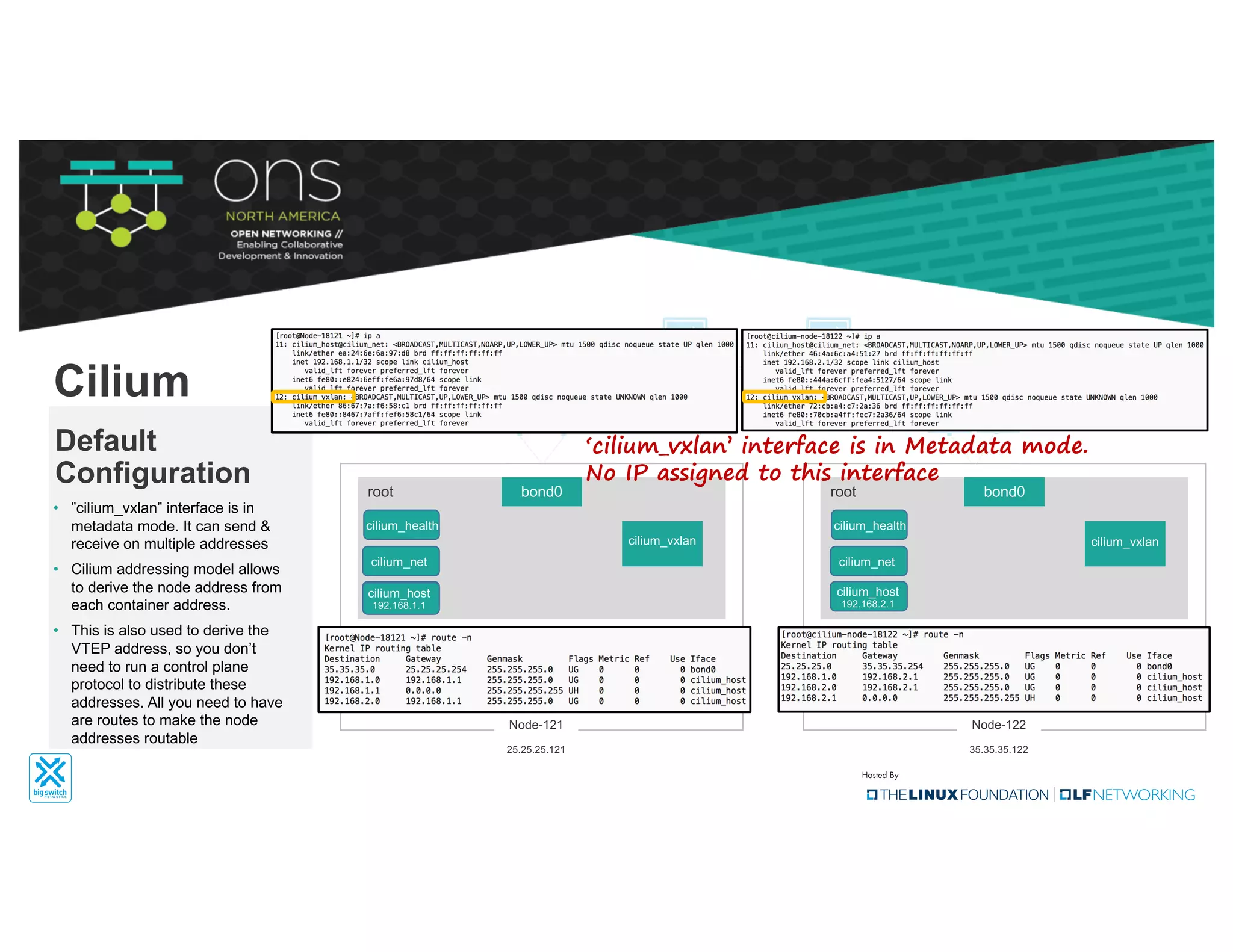

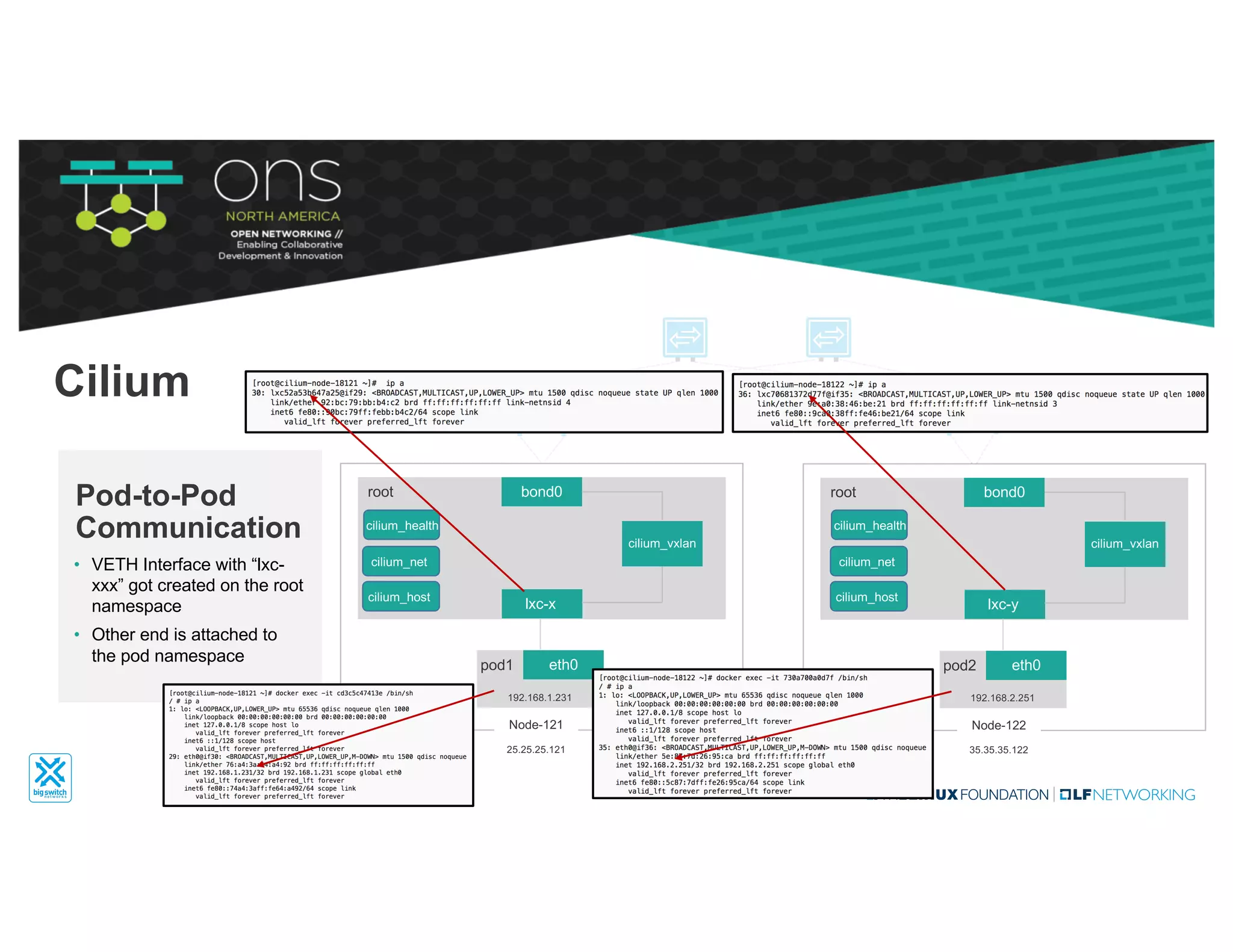

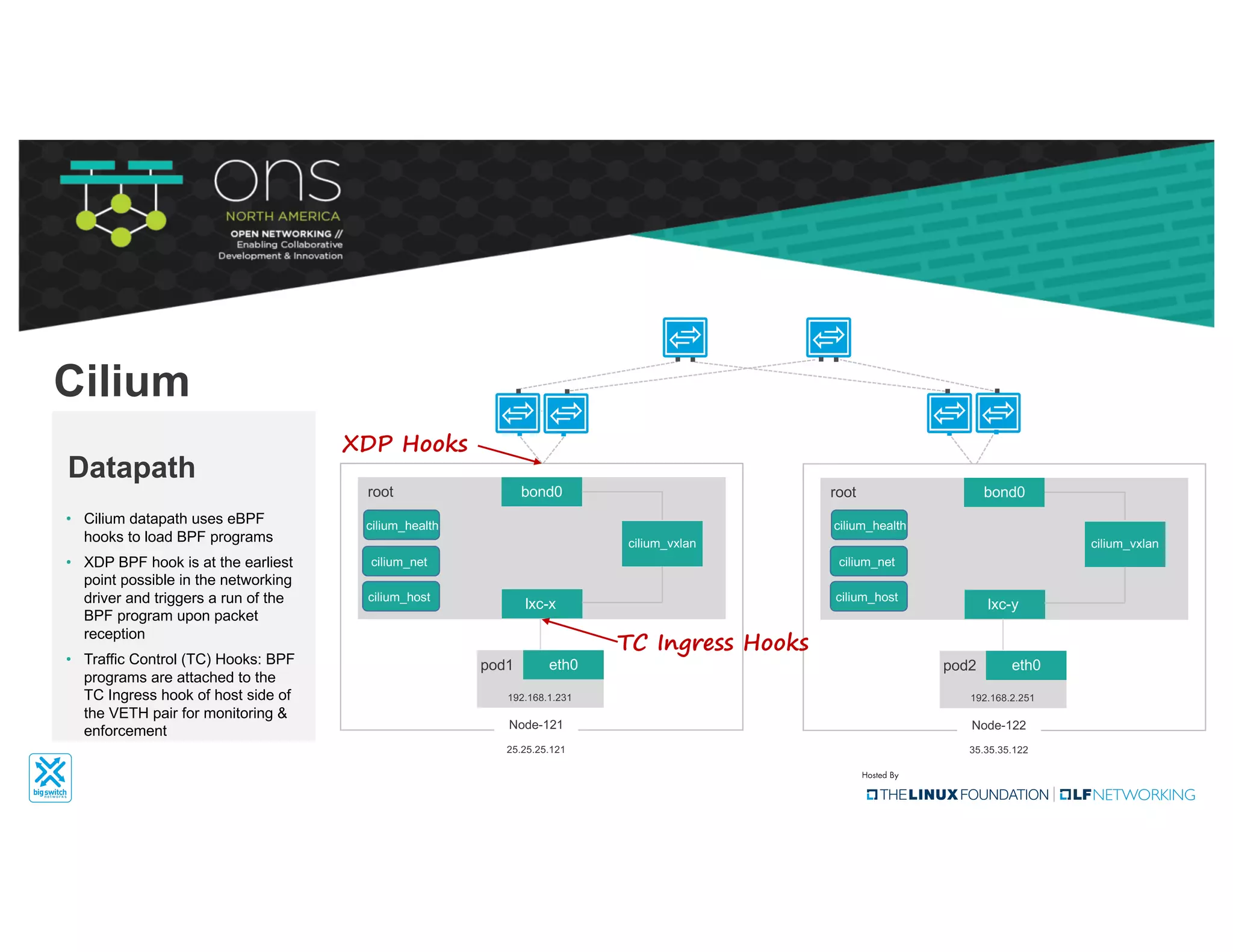

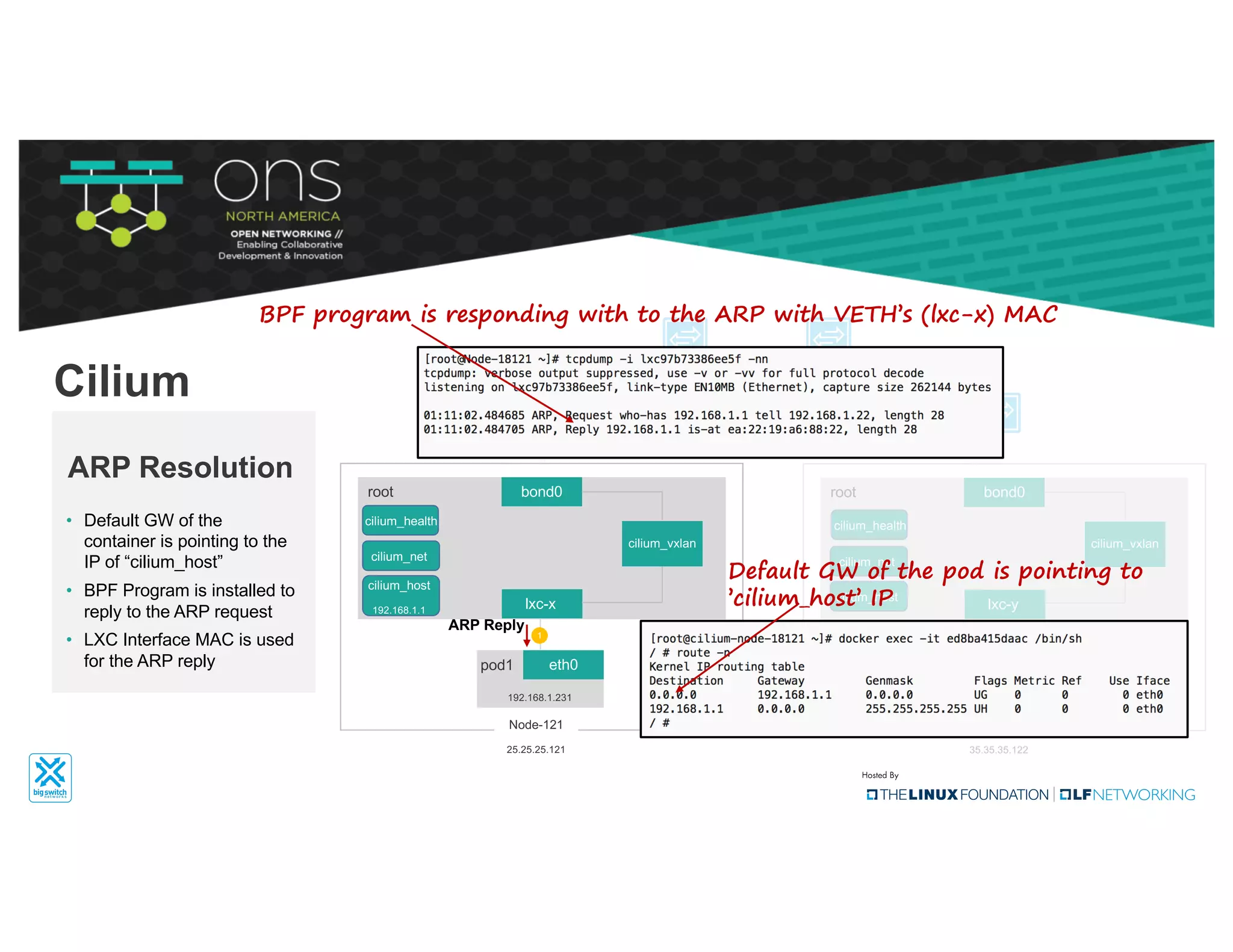

The document gives an overview of Kubernetes networking concepts and two common networking solutions, Flannel and Calico. It first describes how Kubernetes networking works at a basic level using network namespaces and CNI plugins. It then looks more closely at how Flannel and Calico connect pods across nodes, using techniques such as VXLAN tunnels, IP-in-IP encapsulation, and BGP routing. Finally, it walks through examples of pod-to-pod communication, showing how packets flow through ARP resolution and routing in both Flannel and Calico networks.
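The pod-to-pod routing decision summarized above can be sketched in a few lines of Python. This is a simplified illustration, not how Flannel or Calico is implemented: the node names, the subnets, and the `next_hop` helper are all hypothetical, and the sketch assumes each node owns one pod subnet. A real node makes this decision in the kernel routing table, and a remote destination is then reached through a VXLAN or IP-in-IP tunnel, or through plain routing learned over BGP.

```python
import ipaddress

# Hypothetical per-node pod subnets (illustrative values only).
# In a real cluster these are assigned by the CNI plugin or its IPAM.
NODE_POD_SUBNETS = {
    "node-a": ipaddress.ip_network("10.244.1.0/24"),
    "node-b": ipaddress.ip_network("10.244.2.0/24"),
}


def next_hop(local_node, dst_pod_ip):
    """Classify a destination pod IP as seen from local_node.

    Returns ("local", node) when the pod lives on this node,
    ("remote", node) when it lives on another node and the packet
    must be tunneled or routed there, and ("default", None) when
    the address is outside every known pod subnet.
    """
    dst = ipaddress.ip_address(dst_pod_ip)
    for node, subnet in NODE_POD_SUBNETS.items():
        if dst in subnet:
            kind = "local" if node == local_node else "remote"
            return (kind, node)
    return ("default", None)


if __name__ == "__main__":
    print(next_hop("node-a", "10.244.1.5"))  # same node: delivered via the local bridge or veth
    print(next_hop("node-a", "10.244.2.7"))  # other node: encapsulated or routed to node-b
    print(next_hop("node-a", "8.8.8.8"))     # not a pod address: falls through to the default route
```

The lookup is a linear scan for readability; the kernel uses a longest-prefix match over its routing table instead.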