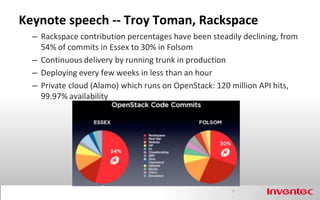

- The keynote at the OpenStack 2012 Fall Summit highlighted two points: Rackspace's share of OpenStack commits has decreased over time, and Rackspace's private cloud, which runs OpenStack, sees high usage.

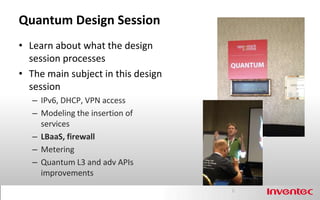

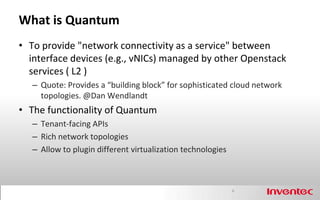

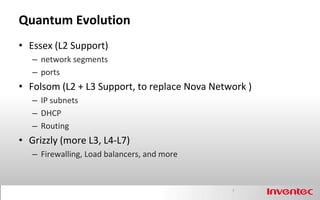

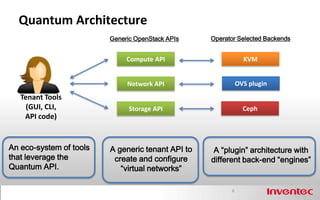

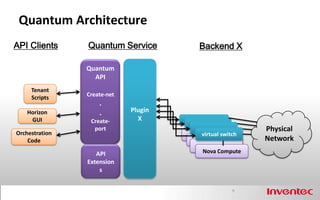

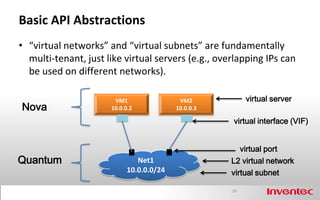

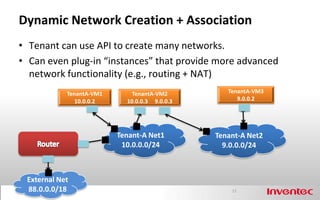

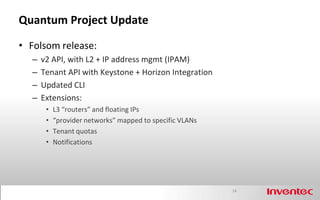

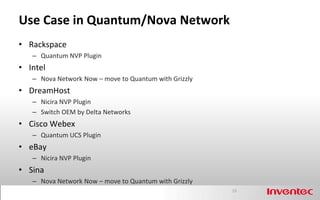

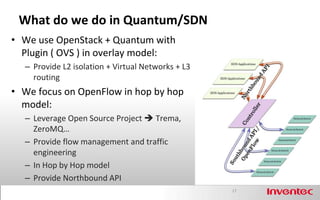

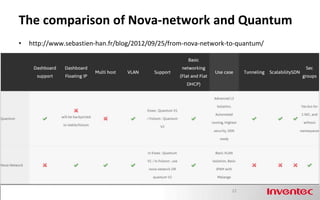

- The Quantum project in OpenStack provides network connectivity as a service and allows different virtualization technologies to be plugged in as backends. It has since evolved to add L3 and L4-L7 network services.

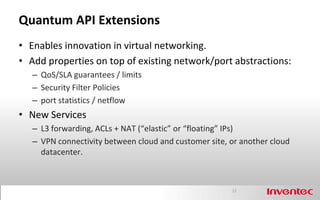

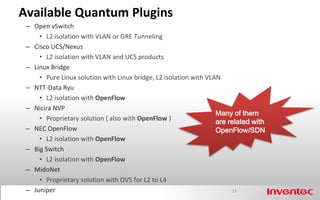

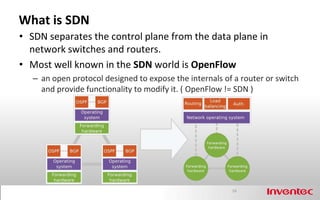

- Quantum uses a plugin architecture so that different virtual network backends, such as Open vSwitch or Linux bridge, can be used. Extensions allow additional network properties and new services, such as routing and load balancing, to be added.
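
The plugin idea can be illustrated with a minimal Python sketch. This is not Quantum's actual code or API: the class names (`NetworkPlugin`, `InMemoryPlugin`, `NetworkService`), the `PLUGINS` registry, the `load_plugin` helper, and the choice of which backend advertises the `"router"` extension are all hypothetical, chosen only to show how a service layer might delegate to an interchangeable backend and query it for optional extensions.

```python
from abc import ABC, abstractmethod
import uuid


class NetworkPlugin(ABC):
    """Hypothetical backend interface (an assumption, not Quantum's real class)."""

    # Aliases of optional extensions this backend claims to support.
    supported_extensions = frozenset()

    @abstractmethod
    def create_network(self, name):
        """Create a virtual network and return a dict describing it."""


class InMemoryPlugin(NetworkPlugin):
    """Shared toy implementation: records networks in a dict, touches no real switch."""

    backend = "generic"

    def __init__(self):
        self.networks = {}

    def create_network(self, name):
        net_id = str(uuid.uuid4())
        network = {"id": net_id, "name": name, "backend": self.backend}
        self.networks[net_id] = network
        return network


class OpenVSwitchPlugin(InMemoryPlugin):
    backend = "openvswitch"
    # Illustrative assumption: this backend advertises a routing extension.
    supported_extensions = frozenset({"router"})


class LinuxBridgePlugin(InMemoryPlugin):
    backend = "linuxbridge"


# Hypothetical registry mapping a configuration name to a plugin class.
PLUGINS = {
    "openvswitch": OpenVSwitchPlugin,
    "linuxbridge": LinuxBridgePlugin,
}


def load_plugin(name):
    """Instantiate the backend selected by name, as a config option might."""
    try:
        return PLUGINS[name]()
    except KeyError:
        raise ValueError(f"unknown plugin: {name!r}") from None


class NetworkService:
    """API-facing layer that is unaware of which backend it is driving."""

    def __init__(self, plugin):
        self.plugin = plugin

    def create_network(self, name):
        return self.plugin.create_network(name)

    def has_extension(self, alias):
        return alias in self.plugin.supported_extensions


if __name__ == "__main__":
    service = NetworkService(load_plugin("openvswitch"))
    print(service.create_network("demo-net"))
    print("router extension available:", service.has_extension("router"))
```

In this sketch, swapping the backend means changing only the string passed to `load_plugin`; the service layer and its callers stay the same, which is the property the plugin architecture is meant to provide.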