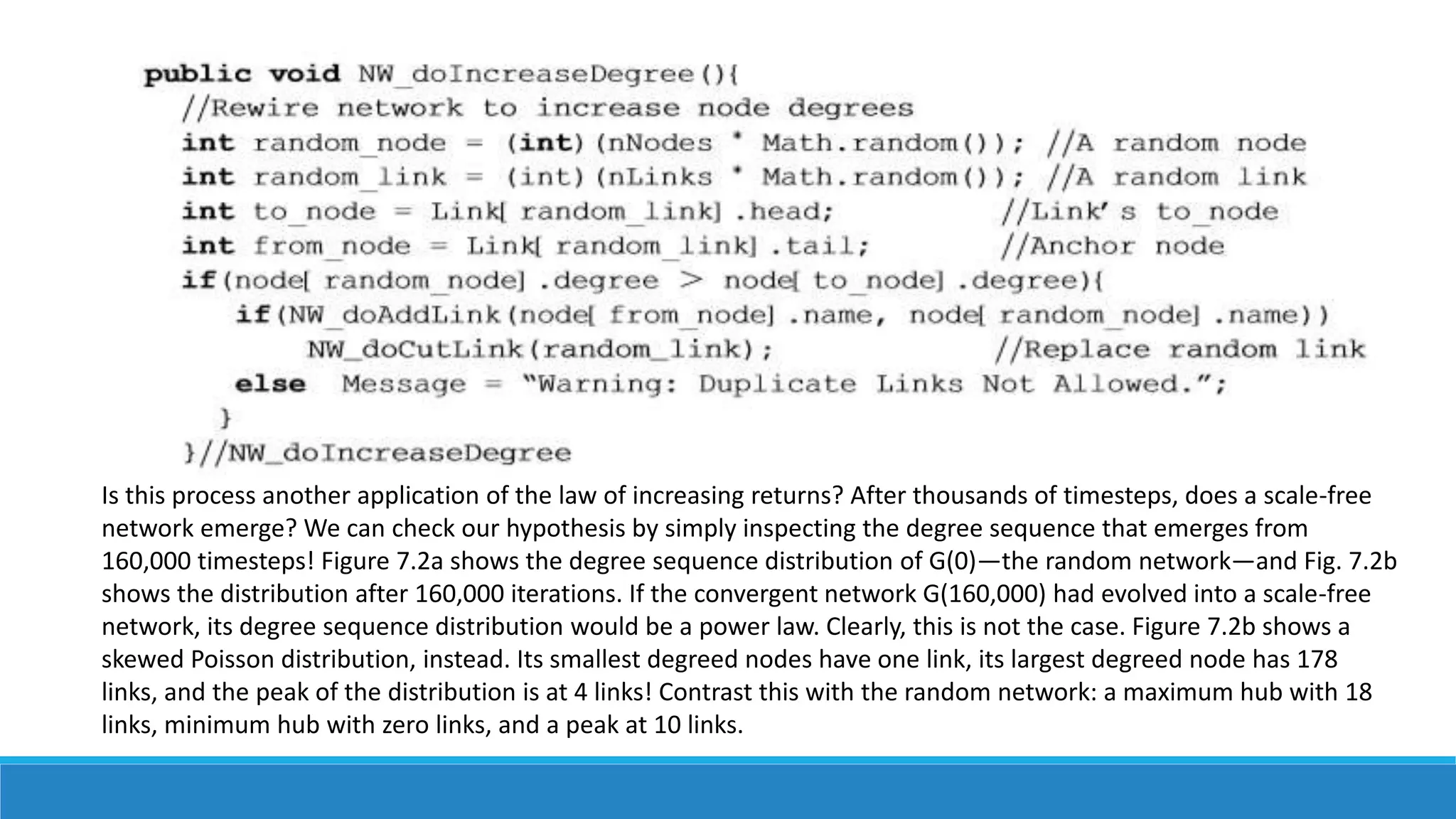

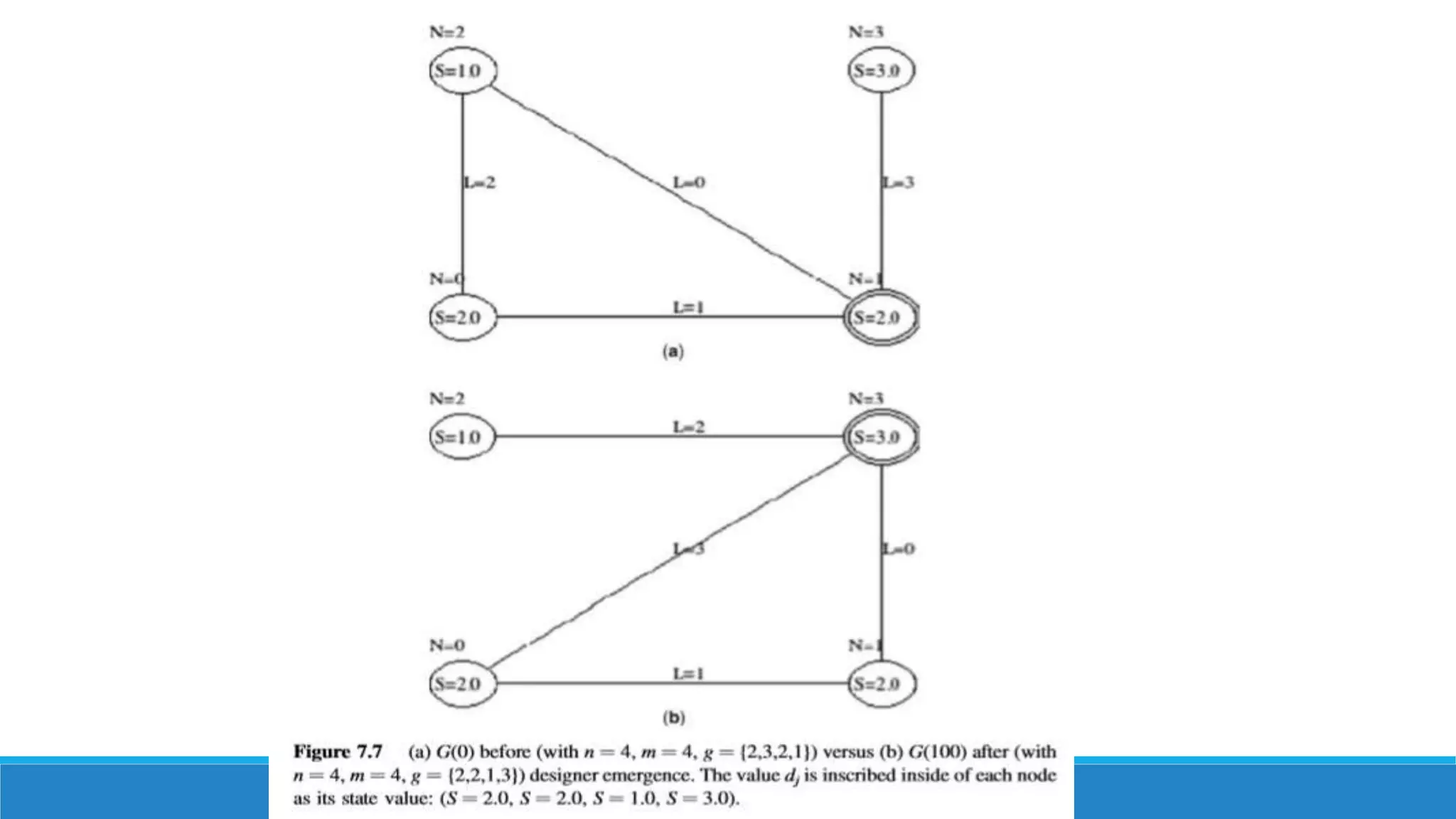

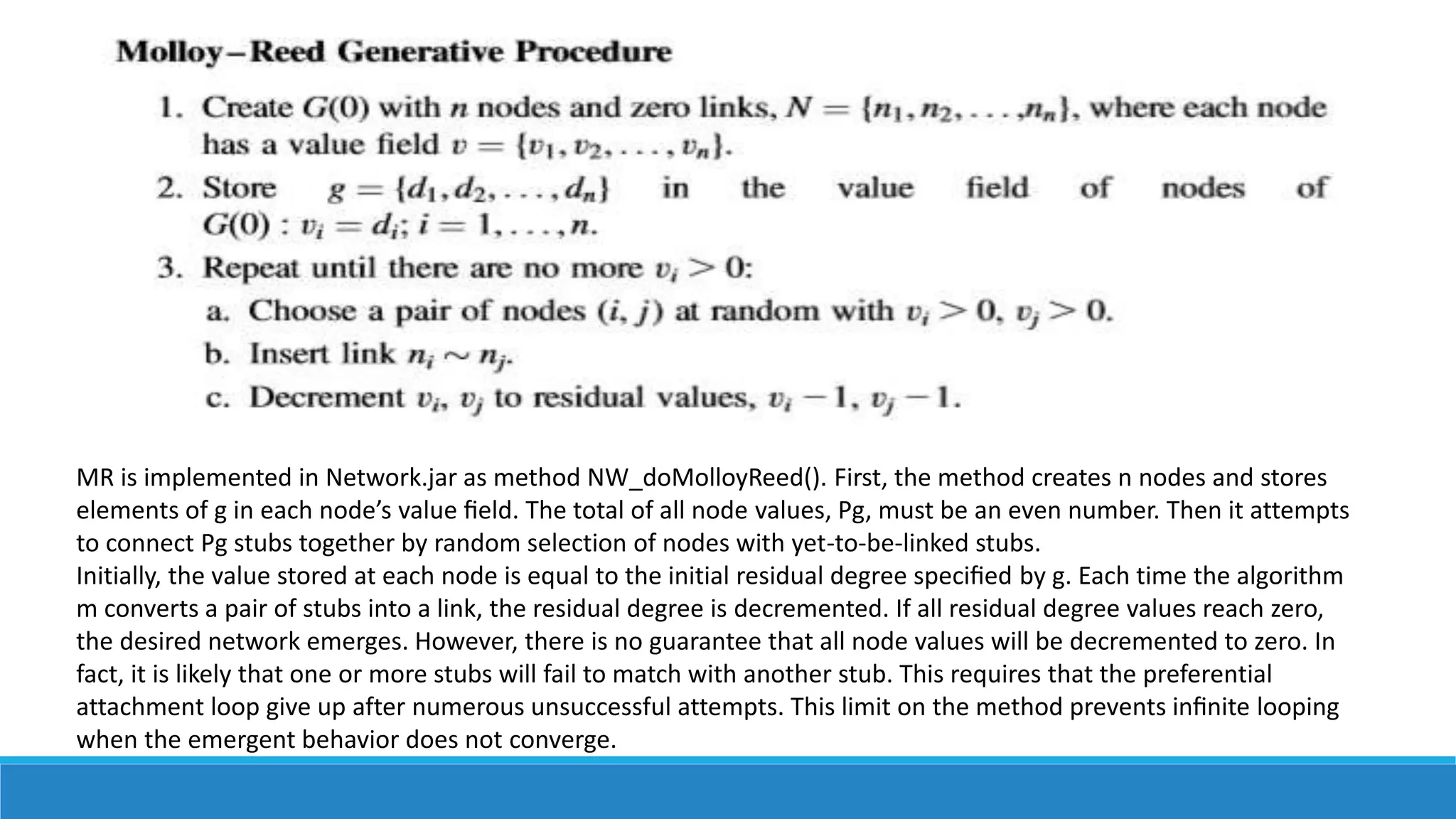

The document discusses network emergence and genetic evolution, with examples of emergence drawn from the social sciences, the physical sciences, and biology. Emergence occurs when the interaction of parts produces properties or behaviors that none of the parts exhibits on its own. The document also covers two further kinds of emergence. In open-loop emergence, a network absorbs energy and changes over time through the repeated application of micro-level rules. In genetic emergence, micro-rules are applied repeatedly to observe how a network evolves. Designer networks with a given degree sequence can be generated using algorithms such as the Molloy-Reed algorithm.
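The sketch below shows how a designer network might be built from a degree sequence. It assumes the stub-matching procedure commonly associated with Molloy and Reed: each node receives as many "stubs" (half-edges) as its target degree, and the stubs are then paired at random. The function name `molloy_reed` and its parameters are hypothetical. This version keeps any self-loops and duplicate edges that random pairing produces rather than rejecting and retrying, so every node ends up with exactly its requested degree.

```python
import random
from collections import Counter


def molloy_reed(degrees, seed=None):
    """Build an edge list whose node degrees match `degrees` (hypothetical helper).

    Node i gets degrees[i] stubs; the shuffled stubs are paired off two at a time.
    Self-loops (u, u) and repeated edges are kept, so a self-loop adds 2 to a
    node's degree and the realized degree sequence equals the input exactly.
    """
    if any(d < 0 for d in degrees):
        raise ValueError("degrees must be non-negative")
    if sum(degrees) % 2 != 0:
        raise ValueError("sum of degrees must be even")

    rng = random.Random(seed)
    # One stub per unit of degree, labeled with the node that owns it.
    stubs = [node for node, d in enumerate(degrees) for _ in range(d)]
    rng.shuffle(stubs)
    # Pair consecutive stubs in the shuffled list to form edges.
    return [(stubs[i], stubs[i + 1]) for i in range(0, len(stubs), 2)]


def realized_degrees(edges, n):
    """Count how many edge endpoints land on each of the n nodes."""
    counts = Counter()
    for u, v in edges:
        counts[u] += 1
        counts[v] += 1
    return [counts[i] for i in range(n)]


if __name__ == "__main__":
    target = [3, 2, 2, 1, 1, 1]
    edges = molloy_reed(target, seed=42)
    print(edges)
    print(realized_degrees(edges, len(target)))
```

Because the pairing is uniform over stubs, this version may produce self-loops or multi-edges. Reaching a simple graph would take extra steps, such as rejecting and redrawing or rewiring afterward. Those steps are not shown here.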