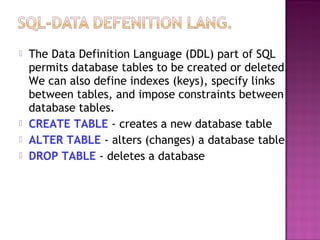

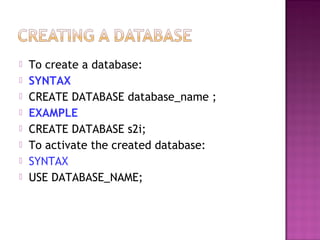

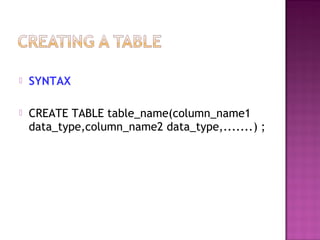

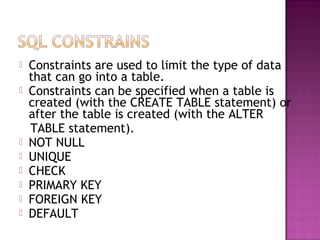

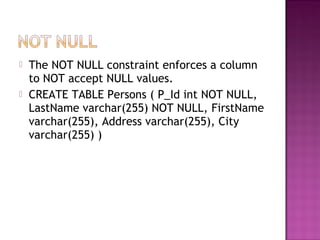

This document provides an overview of SQL (Structured Query Language) concepts including data definition and manipulation commands, data types, constraints, joins, aggregate and scalar functions. Key topics covered include using DDL commands to create and modify database tables, DML commands to insert, update, delete and select data, and SQL clauses like WHERE, ORDER BY, GROUP BY and more. The document also discusses database concepts like primary keys, foreign keys, indexes and constraints.

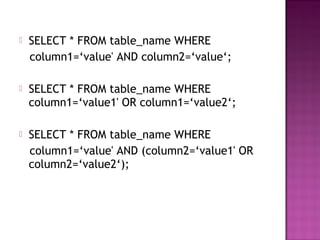

![ All values stored in mysql is in array formate.

$var1=mysql_query(“select*from table_name”,

$connection_name);

While($var=mysql_fetch_array($var1))

{

Echo “<br/>”;

Echo $var[‘column_name’];

}](https://image.slidesharecdn.com/mysql-120831075600-phpapp01-130817225354-phpapp02/85/Mysql-120831075600-phpapp01-15-320.jpg)

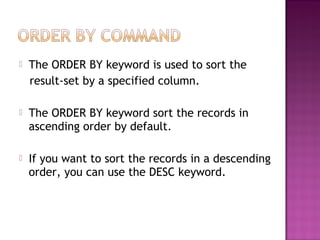

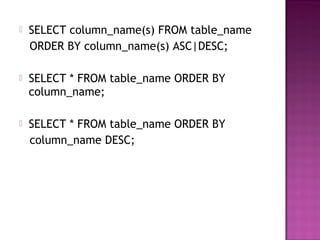

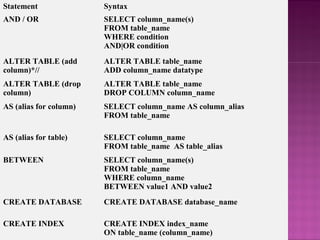

![ORDER BY SELECT column_name(s)

FROM table_name

ORDER BY column_name

[ASC|DESC]

SELECT SELECT column_name(s)

FROM table_name

SELECT * SELECT *

FROM table_name

SELECT DISTINCT SELECT DISTINCT

column_name(s)

FROM table_name

SELECT INTO

(used to create backup copies

of tables)

SELECT *

INTO new_table_name

FROM original_table_name

or

SELECT column_name(s)

INTO new_table_name

FROM original_table_name](https://image.slidesharecdn.com/mysql-120831075600-phpapp01-130817225354-phpapp02/85/Mysql-120831075600-phpapp01-49-320.jpg)

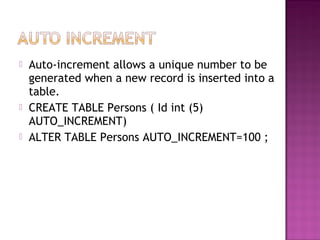

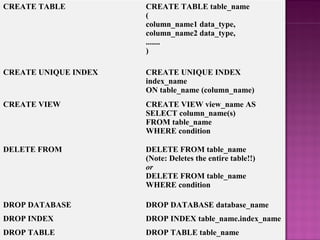

![SELECT INTO

(used to create backup copies of tables)

SELECT *

INTO new_table_name

FROM original_table_name

or

SELECT column_name(s)

INTO new_table_name

FROM original_table_name

TRUNCATE TABLE

(deletes only the data inside the table)

TRUNCATE TABLE table_name

UPDATE UPDATE table_name

SET column_name=new_value

[, column_name=new_value]

WHERE column_name=some_value

WHERE SELECT column_name(s)

FROM table_name

WHERE condition](https://image.slidesharecdn.com/mysql-120831075600-phpapp01-130817225354-phpapp02/85/Mysql-120831075600-phpapp01-50-320.jpg)