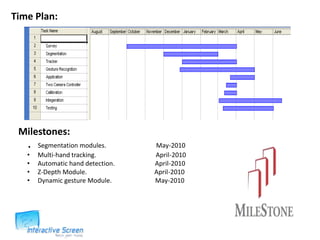

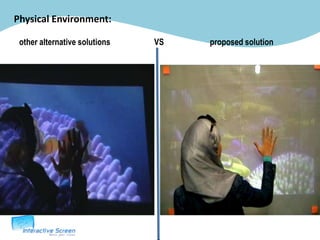

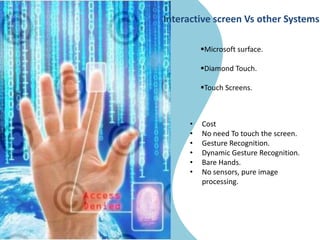

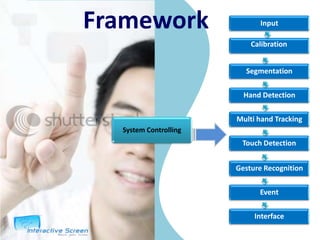

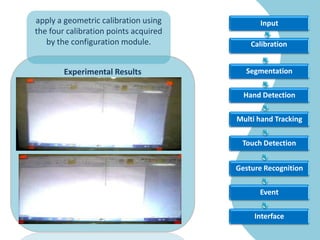

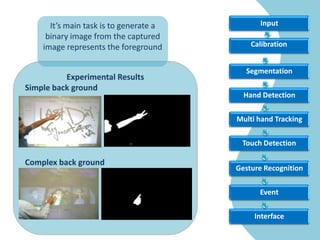

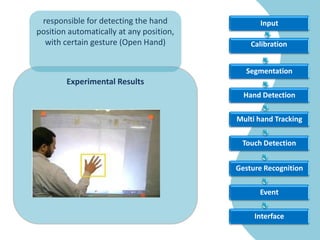

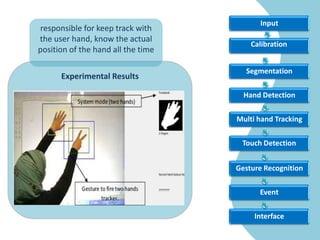

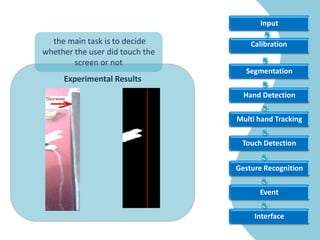

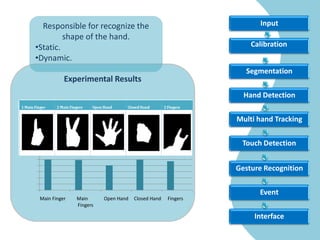

The document outlines the development of a multi-touch interactive screen system that enables human-computer interaction through gesture recognition, with the aim of eliminating the need for conventional input devices. It discusses the system's framework, components, applications, and limitations, along with future enhancements such as multi-user capability and advanced body tracking. It cites prior research and existing technologies to support the project's foundation and goals.