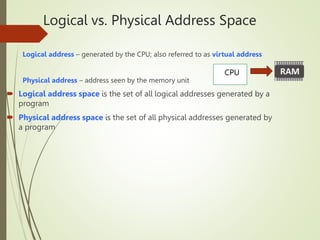

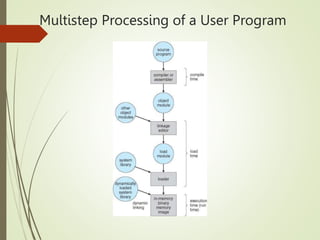

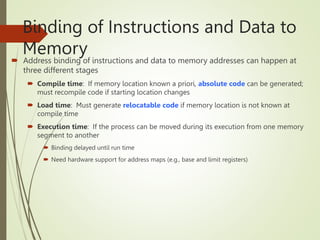

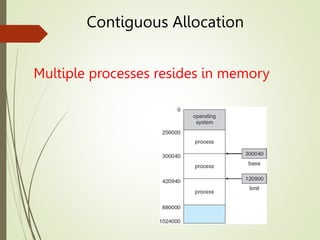

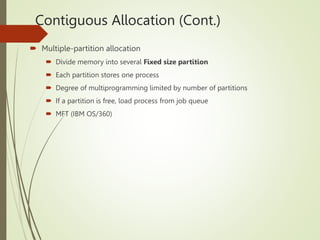

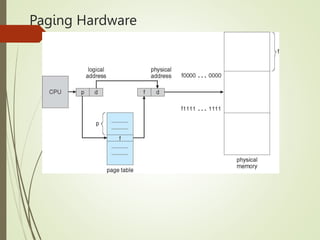

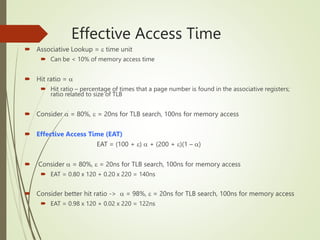

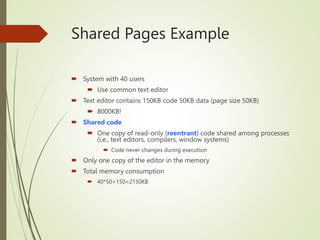

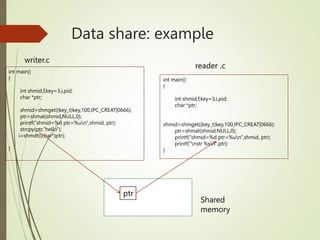

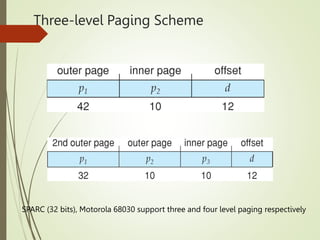

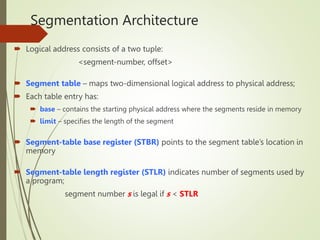

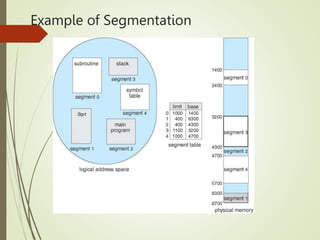

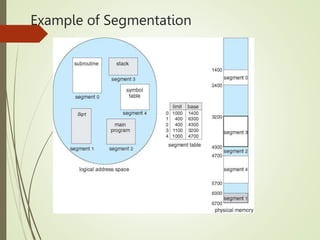

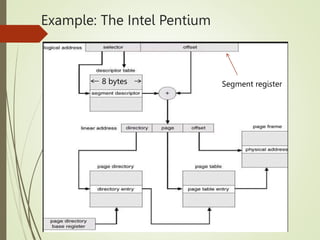

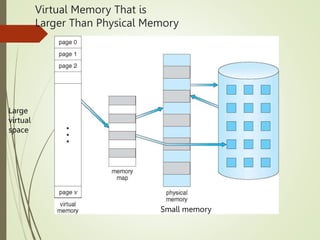

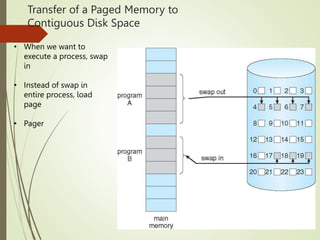

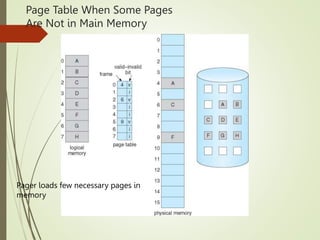

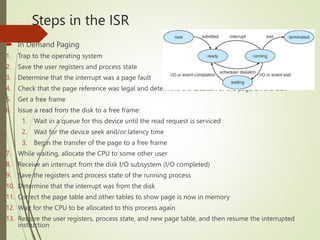

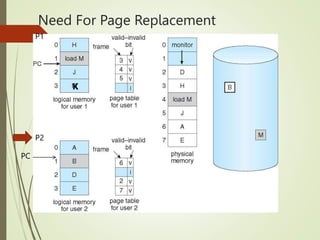

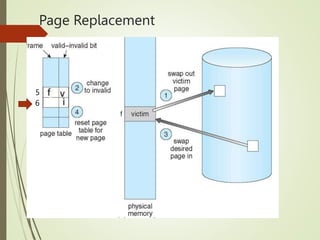

The document discusses memory management techniques. It begins by explaining logical versus physical address spaces and the need for memory protection when multiple processes reside in memory at the same time. It then covers memory allocation schemes: contiguous allocation, paging, and segmentation. Paging divides physical memory into fixed-size frames and logical memory into pages of the same size. Address translation splits a logical address into a page number and an offset, then uses a page table to map the page number to a frame number. Hierarchical paging is introduced to keep large page tables manageable by paging the page table itself.
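The translation step summarized above can be illustrated with a minimal sketch. The 4 KiB page size, the dictionary used as a page table, the 10-bit inner index, and the names `translate` and `split_two_level` are all assumptions made for illustration; they are not taken from the slides.

```python
PAGE_SIZE = 4096                          # assumed page/frame size in bytes (power of two)
OFFSET_BITS = PAGE_SIZE.bit_length() - 1  # 12 offset bits for a 4 KiB page


def translate(logical_address, page_table):
    """Map a logical address to a physical address with a single-level page table.

    page_table is a hypothetical dict of page number -> frame number.
    """
    page_number = logical_address >> OFFSET_BITS
    offset = logical_address & (PAGE_SIZE - 1)
    if page_number not in page_table:
        # A real system would trap to the OS here; this sketch just raises.
        raise LookupError(f"page {page_number} is not mapped")
    frame_number = page_table[page_number]
    # The offset is unchanged; only the page number is replaced by the frame number.
    return (frame_number << OFFSET_BITS) | offset


def split_two_level(logical_address, inner_bits=10):
    """Split a logical address for two-level (hierarchical) paging.

    The page number is divided into an outer index (selects an inner page
    table) and an inner index (selects an entry within that table).
    """
    offset = logical_address & (PAGE_SIZE - 1)
    page_number = logical_address >> OFFSET_BITS
    inner_index = page_number & ((1 << inner_bits) - 1)
    outer_index = page_number >> inner_bits
    return outer_index, inner_index, offset


if __name__ == "__main__":
    table = {0: 5, 1: 2, 2: 7}            # made-up mappings for the example
    print(translate(4100, table))         # page 1, offset 4, mapped to frame 2
    print(split_two_level(0x00403004))    # (outer index, inner index, offset)
```

With these assumed values, logical address 4100 falls in page 1 at offset 4, so it maps to frame 2 at the same offset. The two-level split shows why hierarchical paging helps: only the inner tables that are actually in use need to exist, instead of one table covering the whole logical address space.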