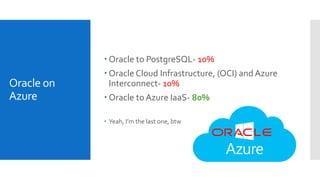

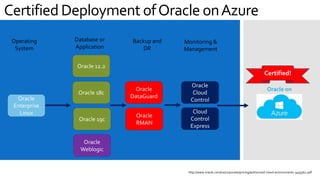

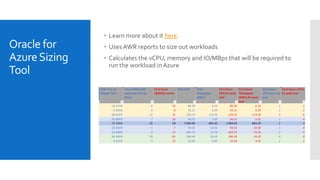

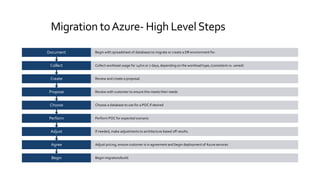

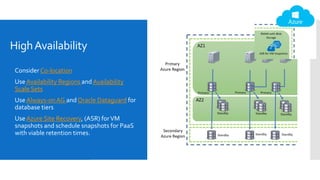

This document gives an overview of how to migrate Oracle workloads to Microsoft Azure. It begins by introducing the presenter and their experience, then discusses why customers might want to move to the cloud and which Azure database options are available. The bulk of the document outlines the key steps in planning and executing an Oracle workload migration to Azure: sizing, deployment, monitoring, backup strategies, and high availability. It emphasizes adapting architectures for the cloud rather than porting on-premises systems unchanged. The document concludes with recommendations on automation, educational resources, and references for Oracle on Azure configurations.