Histograms and Descriptive Statistics Scoring Guide

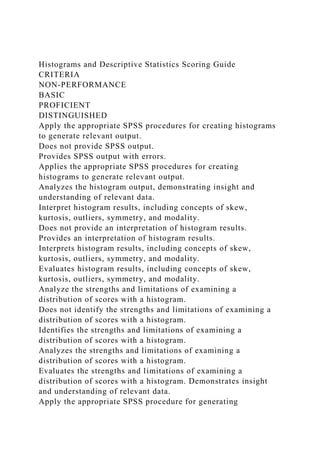

| Criteria | Non-performance | Basic | Proficient | Distinguished |
|---|---|---|---|---|
| Apply the appropriate SPSS procedures for creating histograms to generate relevant output. | Does not provide SPSS output. | Provides SPSS output with errors. | Applies the appropriate SPSS procedures for creating histograms to generate relevant output. | Analyzes the histogram output, demonstrating insight and understanding of relevant data. |
| Interpret histogram results, including concepts of skew, kurtosis, outliers, symmetry, and modality. | Does not provide an interpretation of histogram results. | Provides an interpretation of histogram results. | Interprets histogram results, including concepts of skew, kurtosis, outliers, symmetry, and modality. | Evaluates histogram results, including concepts of skew, kurtosis, outliers, symmetry, and modality. |
| Analyze the strengths and limitations of examining a distribution of scores with a histogram. | Does not identify the strengths and limitations of examining a distribution of scores with a histogram. | Identifies the strengths and limitations of examining a distribution of scores with a histogram. | Analyzes the strengths and limitations of examining a distribution of scores with a histogram. | Evaluates the strengths and limitations of examining a distribution of scores with a histogram. Demonstrates insight and understanding of relevant data. |
| Apply the appropriate SPSS procedure for generating descriptive statistics to generate relevant output. | Does not provide SPSS output. | Includes some, but not all, of the required output. Numerous errors in SPSS output. | Applies the appropriate SPSS procedure for generating descriptive statistics to generate relevant output. | Applies the appropriate SPSS procedure for generating descriptive statistics to generate relevant output. Includes all relevant output; no irrelevant output is included. No errors in SPSS output. |
| Analyze meaningful versus meaningless variables reported in descriptive statistics. | Does not identify meaningful versus meaningless variables reported in descriptive statistics. | Identifies meaningful versus meaningless variables reported in descriptive statistics. | Analyzes meaningful versus meaningless variables reported in descriptive statistics. | Evaluates meaningful versus meaningless variables reported in descriptive statistics. |
| Interpret descriptive statistics for meaningful variables. | Does not identify meaningful variables. | Identifies meaningful variables. | Interprets descriptive statistics for meaningful variables. | Evaluates descriptive statistics for meaningful variables. |
| Apply the appropriate SPSS procedures for creating z scores and descriptive statistics to generate relevant output. | Does not provide SPSS output. | Provides SPSS output with errors. | Applies the appropriate SPSS procedures for creating z scores and descriptive statistics to generate relevant output. | Analyzes the z scores and descriptive statistics output, demonstrating insight and understanding of relevant data. |
| Analyze the relevant data from the computation, interpretation, and application of z scores. | Does not identify the relevant data or generate output. | Identifies the relevant data and generates output. | Analyzes the relevant data from the computation, interpretation, and application of z scores. | Evaluates the relevant data from the computation, interpretation, and application of z scores. Justifies the meaningfulness of selected variables. |
| Analyze real-world application of Type I and Type II errors, and the research decisions that influence the relative risk of each. | Does not describe a real-world application of Type I and Type II errors and the research decisions that influence the relative risk of each. | Describes, but does not analyze, a real-world application of Type I and Type II errors and the research decisions that influence the relative risk of each. | Analyzes a real-world application of Type I and Type II errors and the research decisions that influence the relative risk of each. | Evaluates real-world application of Type I and Type II errors and the research decisions that influence the relative risk of each. |
| Apply the logic of null hypothesis testing to cases. | Does not apply the logic for null hypothesis testing. | Inconsistently applies the logic for null hypothesis testing. | Applies the logic of null hypothesis testing to cases. | Analyzes the logic of null hypothesis testing. Demonstrates insight and understanding of relevant data to either reject or not reject the null hypothesis. |
| Communicate in a manner that is scholarly, professional, and consistent with expectations for members of the identified field of study. | Does not communicate in a manner that is scholarly, professional, and consistent with the expectations for members of the identified field of study. | Inconsistently communicates in a manner that is scholarly, professional, and consistent with the expectations for members of the identified field of study. | Communicates in a manner that is scholarly, professional, and consistent with the expectations for members of the identified field of study. | Communicates in a manner that is professional, scholarly, and consistent with expectations for members of the identified field of study. Adheres to APA guidelines, and work is appropriate for publication. |

Running head: Z SCORE ASSESSMENT ANSWER TEMPLATE

z-Score Assessment Answer Template

Student Name

Capella University

Unit 4 Assignment 1 Answer Template

The following assignment includes three sections:

1. z scores in SPSS.
2. Case studies of Type I and Type II errors.
3. Case studies of null hypothesis testing.

Additional notes:

- Answer in complete sentences.
- Follow APA rules for scholarly writing.
- Include a reference list if necessary.
- Save your answers and upload this template to the assignment area for grading.

Section 1: z Scores in SPSS

A z score is typically analyzed when the population mean (µ) and population standard deviation (σ) are known. However, in SPSS, we can still calculate z scores with the grades.sav data by using the sample mean (M) and sample standard deviation (s). To do this, open grades.sav in SPSS. On the Analyze menu, point to Descriptive Statistics, and then click Descriptives… You will be calculating and interpreting z scores for the total variable.

In the Descriptives dialog box, move the total variable into the Variable(s) box. Select the Save standardized values as variables option and click OK. SPSS displays descriptive statistics for total in the Output window. SPSS also creates a new variable named Ztotal in the far-right column of the Data Editor. Ztotal provides a z score for each case on the total variable. You are now prepared to answer the following Section 1 questions.

Question 1

What are the sample mean (M) and sample standard deviation (s) for total? You will use these values in Question 2 below.

[Answer here in complete sentences. Also insert the output from SPSS here. Replace this prompt and the prompts below, using as much space as necessary to answer questions.]

Question 2

A z score for this sample is calculated as (X − M) ÷ s. Locate Case #53's unstandardized total score (X) in the Data Editor. In the formula below, replace X, M, s, and ? to show how the z score in Ztotal is derived for Case #53.

(X − M) ÷ s = ?

Question 3

Run Descriptives… on Ztotal. What are the mean and standard deviation of Ztotal? (Hint: "0E7" in SPSS is scientific notation for 0.) Are the mean and standard deviation what you would expect? Justify your answer.
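The Descriptives procedure above applies the formula (X − M) ÷ s to every case. The Python below is a minimal sketch of that arithmetic, not SPSS's own implementation. It standardizes a short list of hypothetical total scores (not values from grades.sav) using the sample mean and the N − 1 sample standard deviation. The helper names `z_scores` and `percentile_rank` are illustrative. The percentile conversion assumes the scores are approximately normally distributed.

```python
from statistics import NormalDist, mean, stdev


def z_scores(scores):
    """Standardize scores as (X - M) / s, using the sample mean M and
    the sample standard deviation s (which divides by N - 1)."""
    m = mean(scores)
    s = stdev(scores)
    return [(x - m) / s for x in scores]


def percentile_rank(z):
    """Approximate percentile rank of a z score, assuming normality:
    the percentage of the standard normal curve at or below z,
    rounded to a whole number."""
    return round(NormalDist().cdf(z) * 100)


# Hypothetical total scores (illustrative only, not from grades.sav).
totals = [80, 90, 100, 110, 120]
z = z_scores(totals)

for x, zx in zip(totals, z):
    print(f"X = {x}: z = {zx:.2f}, percentile rank about {percentile_rank(zx)}")

# Standardized scores have a mean of 0 and a standard deviation of 1
# (up to floating-point rounding), whatever the original scale.
print(f"mean of z = {mean(z):.2f}, SD of z = {stdev(z):.2f}")
```

A hand calculation for Question 2 should therefore reproduce the saved Ztotal value up to rounding, provided the same sample mean and standard deviation reported in the Output window are used.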

[Answer here in complete sentences. Also place the SPSS output here.]

Question 4

Case number 6 has a Ztotal score of 1.22. What does a z value of 1.22 represent?

[Answer here in complete sentences.]

Question 5

Identify the case with the lowest z score. Refer to Z Scores in Suggested Resources. Interpret the percentile rank of this z score, rounded to a whole number.

[Answer here in complete sentences.]

Question 6

Identify the case with the highest z score. Refer to Z Scores in Suggested Resources. Interpret the percentile rank of this z score, rounded to a whole number.

[Answer here in complete sentences.]

Section 2: Case Studies of Type I and Type II Errors

Question 7

A jury must determine the guilt of a criminal defendant (not guilty, guilty). Identify how the jury would make a correct decision. Analyze how the jury would commit a Type I error versus a Type II error.

[Answer here in complete sentences.]

Question 8

An I/O psychologist asks employees to complete surveys measuring job satisfaction and organizational citizenship behavior. She intends to measure the strength of association between these two variables. The researcher is concerned that she will commit a Type I error. What research decision influences the magnitude of risk of a Type I error in her study?

[Answer here in complete sentences.]

Question 9

A clinical psychologist is studying the efficacy of a new medication for depression. The study includes a placebo group (no active medication) and a treatment group (new medication). He then measures the differences in depressive symptoms between the two groups. What would a Type I error represent within the context of his study? How can he reduce the risk of committing a Type I error? How does this decision affect the risk of committing a Type II error?

[Answer here in complete sentences.]

Section 3: Case Studies of Null Hypothesis Testing

Question 10

You are running a series of statistical tests in SPSS using the standard criterion for rejecting a null hypothesis. You obtain the following p values:

- Test 1 calculates group differences with p = .07.
- Test 2 calculates the strength of association between two variables with p = .50.
- Test 3 calculates group differences with p = .001.

For each test below, state whether or not you reject the null hypothesis. For each test, also explain what your decision implies in terms of group differences (Test 1 and Test 3) and in terms of the strength of association between two variables (Test 2).

Test 1 (group differences) =

Test 2 (strength of association) =

Test 3 (group differences) =

Question 11

A researcher calculates a statistical test and obtains a p value of .86. He decides to reject the null hypothesis. Is this decision correct, or has he committed a Type I or Type II error? Explain your answer.

[Answer here in complete sentences.]

Question 12

You are proposing a research study that you would like to conduct while attending Capella University. During the proposal, a committee member asks you to explain in your own words what you meant by saying "p less than .05." Provide an explanation.

[Answer here in complete sentences.]

References

Provide references if necessary.

This concludes Unit 4 Assignment 1. Save your answers and upload this template to the assignment area.

Warner, R. M. (2013). *Applied statistics: From bivariate through multivariate techniques* (2nd ed.). Thousand Oaks, CA: Sage.

Copy/Export Output Instructions

SPSS output can be selectively copied and pasted into Word by using the Copy command:

1. Click on the SPSS output in the Viewer window.
2. Right-click for options.
3. Click the Copy command.
4. Paste the output into a Microsoft Word document.

The Copy command will preserve the formatting of the SPSS tables and charts when pasting into Microsoft Word.

An alternative method is to use the Export command:

1. Click on the SPSS output in the Viewer window.
2. Right-click for options.
3. Click the Export command.
4. Save the file as Word/RTF (.doc) to your computer.
5. Open the .doc file.

Data Set Instructions

The grades.sav file is a sample SPSS data set. The fictional data represent a teacher's recording of student demographics and performance on quizzes and a final exam across three sections of the course. Each section consists of about 35 students (N = 105).

Software Installation

Make sure that IBM SPSS Statistics Standard GradPack is fully licensed, installed on your computer, and running properly. You need either the Standard or the Premium version of SPSS, both of which include the full range of statistics. Proper software installation is required to complete your first SPSS data assignment in Assessment 1.

Next, click grades.sav in the Assessment 1 Resources to download the file to your computer. You will use grades.sav throughout the course.

The definitions of the variables in the grades.sav data set are found in the Assessment 1 Context. Understanding these variable definitions is necessary for interpreting SPSS output. In Assessment 1, you will define values and scales of measurement for all variables in your grades.sav file. Verify the values and scales of measurement assigned in the grades.sav file using the information in the Data Set section of this document.

Data Set

There are 21 variables in grades.sav. Open your grades.sav file and go to the Variable View tab. Make sure you have the following values and scales of measurement assigned.

| SPSS variable | Definition | Values | Scale of measurement |
|---|---|---|---|
| id | Student identification number | | Nominal |
| lastname | Student last name | | Nominal |
| firstname | Student first name | | Nominal |
| gender | Student gender | 1 = female; 2 = male | Nominal |
| ethnicity | Student ethnicity | 1 = Native; 2 = Asian; 3 = Black; 4 = White; 5 = Hispanic | Nominal |
| year | Class rank | 1 = freshman; 2 = sophomore; 3 = junior; 4 = senior | Scale |
| lowup | Lower or upper division | 1 = lower; 2 = upper | Ordinal |
| section | Class section | | Nominal |
| gpa | Previous grade point average | | Scale |
| extcr | Did extra credit project? | 1 = no; 2 = yes | Nominal |
| review | Attended review sessions? | 1 = no; 2 = yes | Nominal |
| quiz1 | Quiz 1: number of correct answers | | Scale |
| quiz2 | Quiz 2: number of correct answers | | Scale |
| quiz3 | Quiz 3: number of correct answers | | Scale |
| quiz4 | Quiz 4: number of correct answers | | Scale |
| quiz5 | Quiz 5: number of correct answers | | Scale |
| final | Final exam: number of correct answers | | Scale |
| total | Total number of points earned | | Scale |
| percent | Final percent | | Scale |
| grade | Final grade | | Nominal |
| passfail | Passed or failed the course? | | Nominal |

Assessment 1 Context

Transitioning from Descriptive Statistics to Inferential Statistics

In this assessment, we begin the transition from descriptive statistics to inferential statistics, which include correlation, t tests, and analysis of variance (ANOVA). This context document includes information on key concepts related to descriptive statistics, as well as concepts related to probability and the logic of null hypothesis testing (NHT).

Scales of Measurement

As we first try to understand statistical analysis, it is important to be sure we understand the raw materials it works with. Statistical methods are methods of analyzing data. What does that mean? For the most part, it means that statistics provide various ways of answering specific questions about data. To understand statistics, we must first develop a basic vocabulary for describing data and learn a system of names for different categories and kinds of data. It may serve us well to back up and make sure the fundamental units of statistical data are understood.

In statistical analysis we make use of a concept called variables. A variable is an abstract placeholder, or reserved space, for one kind of information. For instance, we may have a variable named GENDER. In this example, GENDER has two possible values, male or female.

The concept of a variable is often easiest to understand in a concrete sense by likening data to a table like those seen in textbooks, or to a spreadsheet on a computer: a series of rows and columns that cross each other. A column in a table may record the gender of each person in a group. We may have a list of names in the first column, one name per row, and then a second column with the letter M or F for each name. The title, or heading, of this second column might read "gender." A variable is like the column where the gender is recorded: the column is the reserved space for gender data, and the column heading is the name of the variable. Notice that the values of gender (male and female) vary among the people in the rows. This is why the entire column is called a variable: its values vary from row to row.

Given this concept, be sure you understand that male and female are NOT variables. The variable corresponds to the name of the column where the information is recorded in the table. In this example, the name of the variable would probably be GENDER, not male or female. Make sure you understand this distinction so you do not use the term variable incorrectly, because misusing it causes a great deal of confusion when you communicate with others about statistics. For the data we are discussing here, it would make no sense, and would confuse whoever you were talking to, if you referred to "the male variable" or said that you were working with two variables, male and female.

Consider again the table where our list of people's names was recorded along with their gender values. We now have two columns: the first contains names, and the second contains the gender value for each person. Suppose there was a third column in the table, in which we write down each person's height in inches. At the top of that column we would place the title HEIGHT, and each row would contain the height of the person named in the first column of that row. HEIGHT is another variable, but it is a fundamentally different kind of variable from GENDER: it is a number that corresponds to how tall the person is, while GENDER has only two qualitative values, male and female.

This points to another important idea about data. There are several different types of data, and you must be able to use the system of names that has been developed to distinguish between different types of variables. Understanding these categories of data is critical to launching your effort to understand statistics. If you do not learn this system before beginning your exposure to statistical analysis, you will be completely lost more often than you are successful.
It is worth spending the time and effort required to understand the concepts we are about to discuss before you speed ahead through your introduction to statistics just to be finished. Of course, that is only true if you want to understand the statistics. Suppose you would rather be completely lost, call your instructor later in the course to ask for extensions while you seek help, or request an incomplete grade because you cannot comprehend what you are studying or do the assignments. In that case, simply browse through the levels of measurement without understanding them. You are all but guaranteed to suffer that fate.

There are two ways to understand what is important about the different types of variables. The first is the most extensive and formal. The second is a rough approximation that will usually serve your purposes.

First, an important concept in understanding descriptive statistics is the four levels of measurement: nominal, ordinal, interval, and ratio (Warner, 2013). These are sometimes called "scales of measurement," "levels of data," or simply "data levels." You need these scales of measurement, or kinds of variables, from the start of your study. The reason is that each analysis technique you encounter is designed only for specified kinds of variables, or levels of measurement. To know which kinds of variables to use in an analysis, and which kinds are involved in its different parts, you must be able to recognize a variable's level of measurement when you see its definition.

Two considerations determine the level of measurement of a variable. It is critical to know the characteristics of the variable's values (such as male or female, or height in inches). It is equally critical to know what those values are being used to measure or designate in the research project where the variable is used. Consider that last statement again. We must know (1) the kinds of values written in the column where the variable is recorded, and (2) what those values correspond to in the people they describe. Failure to consider both ideas will result in misunderstanding levels of measurement.

It is instructive to begin by simply describing the four levels, and then move on to some examples and explanations. First, remember that the four levels form a hierarchy. They are ordered from lowest to highest, and each successive level has all the qualities of the levels before it, plus a new kind of information.

- Nominal data are numbers (or letters) arbitrarily assigned to represent group membership, such as gender (male = 1; female = 2). Nominal data are useful for comparing groups. Apart from the mode, however, they are meaningless in terms of the measures of central tendency and dispersion reviewed below. It does not matter whether the values of a nominal variable are numbers or letters. If they are numbers, they are mathematically meaningless: 2 is not "more" than 1.
- Ordinal data represent ranks, such as finishing first, second, or third in a marathon. However, ordinal data do not tell us how large the differences between measurements are. The first-place and second-place finishers could finish 1 second apart, whereas the third-place finisher arrives 2 minutes later. Ordinal data lack equal intervals, which again prevents most mathematical interpretations, such as adding or averaging the values.
- Interval data have equal intervals between data points. This is the first level of measurement at which the values can have general mathematical meaning. An example is temperature measured in degrees Fahrenheit. The drawback of interval data is the lack of a "true zero" (water freezes at 32 degrees Fahrenheit, and 0 degrees does not mean "no heat"). The most serious consequence of lacking a true zero is that one cannot reason using ratios: 4 is not necessarily twice as large as 2, and 5 is not necessarily half as much as 10.
- Ratio data do have a true zero. An example is heart rate, where 0 represents a heart that is not beating. This level allows full mathematical interpretation of the values, including ratios.

These four scales of measurement are routinely reviewed in introductory statistics textbooks as the "classic" way of differentiating measurements. However, the boundaries between the scales are fuzzy; for example, is intelligence quotient (IQ) measured on an ordinal or an interval scale? Recently, researchers have argued for a simpler dichotomy when selecting an appropriate statistic. Most of the time, classifying a variable into one of the following two categories, categorical versus quantitative, will serve your purposes:

- A categorical variable simply sorts things according to group membership (for example, apple = 1, banana = 2, grape = 3).
- A quantitative measure represents a difference in the magnitude of something, such as a continuum from "low" to "high" statistics anxiety. In contrast to categorical variables designated by arbitrary values, a quantitative measure allows comparisons (=, <, >) and arithmetic operations (+, −, ×, ÷). These operations generate the descriptive statistics discussed next.

Categorical variables generally correspond to the nominal and ordinal levels of measurement in the previous system, and quantitative variables typically correspond to the interval and ratio levels. In SPSS, quantitative variables (those at the interval or ratio level of measurement) are designated as scale variables.
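The difference between interval and ratio data can be checked numerically. The Python sketch below uses hypothetical temperatures and heart rates (not course data) and the standard Fahrenheit-to-Celsius conversion. `fahrenheit_to_celsius` is an illustrative helper name. The sketch shows three things:

- A "twice as much" statement on an interval scale does not survive a change of units.
- Differences on an interval scale do survive a change of units.
- Ratios on a ratio scale are preserved.

```python
def fahrenheit_to_celsius(f):
    """Convert a temperature from degrees Fahrenheit to degrees Celsius."""
    return (f - 32) * 5 / 9


# Interval scale: 40 degrees F looks like "twice" 20 degrees F ...
f_low, f_high = 20.0, 40.0
c_low, c_high = fahrenheit_to_celsius(f_low), fahrenheit_to_celsius(f_high)
print("ratio in Fahrenheit:", f_high / f_low)
# ... but the same two temperatures give a very different ratio in Celsius,
# because neither scale has a true zero.
print("ratio in Celsius:", round(c_high / c_low, 2))

# Equal intervals are preserved: any 20-degree F gap is the same Celsius gap.
gap_a = fahrenheit_to_celsius(40) - fahrenheit_to_celsius(20)
gap_b = fahrenheit_to_celsius(80) - fahrenheit_to_celsius(60)
print("20-degree F gaps in Celsius:", round(gap_a, 2), round(gap_b, 2))

# Ratio scale: 0 beats means no heartbeat in any unit, so 120 is twice 60
# whether heart rate is expressed in beats per minute or beats per second.
bpm_low, bpm_high = 60.0, 120.0
print("ratio in beats per minute:", bpm_high / bpm_low)
print("ratio in beats per second:", (bpm_high / 60) / (bpm_low / 60))
```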

To determine the level of measurement of a variable, one must consider the nature of the values as well as what those values represent, that is, what the variable is measuring. For instance, the same type of variable may have two different levels of measurement if the two versions measure different things in a research project. The level of measurement refers to the construct being measured in the research project, not to the values of the variable itself.

A clear example is a pair of variables that are exactly the same except for their operational definitions in the research project. Consider a score on a teacher-made test, where the score is the number of correct answers out of 100 questions. The variable can be defined in two ways:

1. An index of the amount of knowledge the test taker has in the topic area covered by the test.
2. The number of grade points the test taker earned on the test.

Every person will have the same values on each of these two variables, so it is tempting to say there is no difference between them. A closer look reveals that the two variables are at different levels of measurement.

Under the first definition (amount of knowledge), we know very little about the meaning of the numbers. We do not know whether each question represents the same amount of knowledge. We certainly cannot say that a score of zero means the person has "no knowledge." This restricts the variable to the ordinal level of measurement, because we cannot be sure that the requirement for interval-level measurement is met: equal distances between points in terms of amount of knowledge. We cannot say that a person who scores 10 has twice as much knowledge as a person who scores 5. Nor can we say that the difference in knowledge between two people who score 5 and 6 is the same as the difference between two people who score 1 and 2.

Under the second definition, however, the level of measurement is ratio. All the requirements for ratio-level data are met: zero means no grade points, and a person who scores 6 has twice as many grade points as a person who scores 3. The point of this example is that the level of measurement of a variable depends on what it is being used for, that is, what is being measured in the research project.

Measures of Central Tendency and Dispersion

Descriptive statistics summarize a set of scores in terms of central tendency (for example, mean, median, mode) and dispersion (for example, range, variance, standard deviation). As an example, consider a psychologist who measures 5 participants' heart rates in beats per minute: 62, 72, 74, 74, and 118.

- The simplest measure of central tendency is the mode, the most frequent score in a distribution of scores (here, the two scores of 74). A distribution can have two or more modes. An advantage of the mode is that it can be applied to categorical data, and it is not sensitive to extreme scores. This measure is suitable for all levels of measurement.
- The median is the midpoint of a distribution. To find it, arrange all scores in ascending order; the score in the middle is the median. In the five heart rates above, the middle score is 74. If you have an even number of scores, the average of the two middle scores is used. Like the mode, the median is not sensitive to extreme scores. This measure is suitable only for data at the ordinal level of measurement or above.
- The mean is probably what most people consider to be an average score. In the example above, the mean heart rate is (62 + 72 + 74 + 74 + 118) ÷ 5 = 80. The mean is more sensitive to extreme scores (for example, 118) than the mode and median. However, it tends to vary less from sample to sample, and it is the best estimate of the population mean. It is also used in many of the inferential statistics studied in this course, such as t tests and analysis of variance (ANOVA). This measure is suitable only for interval- and ratio-level variables (quantitative measures), because it relies on arithmetic (sums and quotients) that is not valid for ordinal measures.
- In contrast to measures of central tendency, measures of dispersion summarize how widely scores are spread across a distribution. The range is a basic measure of dispersion: the distance between the lowest and highest scores in a distribution (here, 118 − 62 = 56).
- A deviance is the difference between an individual score and the mean. For example, the deviance for the first heart rate score is 62 − 80 = −18. Calculating the deviance of each score from the mean of 80 gives −18, −8, −6, −6, and +38. Deviances always sum to 0, so their sum is not an informative measure of dispersion.
- An alternative measure of dispersion is the interquartile range (IQR), along with the semi-interquartile range (sIQR). If the values are placed in order from lowest to highest, each value can be assigned a rank. The lowest value has rank 1, and the highest value has a rank equal to the number of values in the data set. The ranks can be used to identify two important scores. The 25th percentile is the score at or below which 25% of the scores fall, and the 75th percentile is the score above which 25% of the scores fall. The IQR is the difference between the scores at the 75th and 25th percentiles, and the sIQR is half of that value. These measures are suitable for variables at the ordinal level of measurement and above.
- A somewhat more informative measure of dispersion is the sum of squares (SS), which you will see again in the study of analysis of variance (ANOVA). To get around the problem of deviances summing to zero, the sum of squares squares each deviance and then sums those squares. In the example above, $SS = (-18)^2 + (-8)^2 + (-6)^2 + (-6)^2 + (+38)^2 = 324 + 64 + 36 + 36 + 1444 = 1904$. The problem with SS is that it grows as the number of data points grows (Field, 2009), so it is still not a very informative measure of dispersion. This measure is suitable only for interval and ratio levels of measurement.
- This problem is solved by next calculating the variance ($s^2$), which is essentially the average squared deviance from the mean. Instead of dividing SS by 5 for the example above, we divide by the degrees of freedom, N − 1, or 4. The variance is therefore SS ÷ (N − 1) = 1904 ÷ 4 = 476. The problem with interpreting variance is that it is expressed in "squared units." What is, for example, a "squared" heart rate score? This measure is suitable only for interval and ratio levels of measurement.
- The final step is calculating the standard deviation (s), which is simply the square root of the variance: in our example, √476 ≈ 21.82. The standard deviation expresses the typical distance of scores from the mean in the original units. It is not literally the average absolute distance, but it is interpreted in a similar way. Here, heart rates typically fall about 21.82 beats per minute from the mean. If the extreme score of 118 is replaced with a score closer to the mean, such as 90, then s ≈ 10.04. Thus, small standard deviations (relative to the mean) represent a small amount of dispersion, and large standard deviations (relative to the mean) represent a large amount of dispersion (Field, 2009). The standard deviation is an important component of the normal distribution. This measure is suitable only for interval and ratio levels of measurement.

Notice that the various measures of central tendency and dispersion are suitable for different levels of measurement.
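The heart-rate example can be checked with Python's standard `statistics` module (Python 3.8 or later, for `multimode` and `quantiles`). This is a sketch for verifying the arithmetic, not an SPSS procedure. The quartiles use the module's default `exclusive` method, which is an assumption. SPSS or a given textbook may define percentiles slightly differently and so report different quartiles for a sample this small.

```python
from statistics import mean, median, multimode, quantiles, stdev

# Heart rates (beats per minute) from the worked example above.
heart_rates = [62, 72, 74, 74, 118]

# Central tendency
modes = multimode(heart_rates)  # every most-frequent score (there can be several)
mid = median(heart_rates)       # middle score after sorting
m = mean(heart_rates)           # sum of scores divided by N

# Dispersion
score_range = max(heart_rates) - min(heart_rates)
deviances = [x - m for x in heart_rates]  # distances from the mean; they sum to 0
ss = sum(d ** 2 for d in deviances)       # sum of squares (SS)
var = ss / (len(heart_rates) - 1)         # sample variance: SS / (N - 1)
sd = var ** 0.5                           # standard deviation: square root of variance

# 25th and 75th percentiles (Python's default "exclusive" interpolation)
q1, _, q3 = quantiles(heart_rates, n=4)
iqr = q3 - q1
siqr = iqr / 2

print("mode:", modes, "median:", mid, "mean:", m)
print("range:", score_range, "deviances:", deviances, "sum:", sum(deviances))
print("SS:", ss, "variance:", var, "SD:", round(sd, 2))
print("Q1:", q1, "Q3:", q3, "IQR:", iqr, "sIQR:", siqr)

# Replacing the extreme score 118 with 90 shrinks the standard deviation.
print("SD with 90 instead of 118:", round(stdev([62, 72, 74, 74, 90]), 2))
```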
A brief summary of the measures typically used with each level of measurement is shown in the table below:

| Level of measurement | Type | Typical measure of central tendency | Typical measure of dispersion |
| --- | --- | --- | --- |
| Nominal | Categorical | Mode | k* |
| Ordinal | Categorical | Median | sIQR |
| Interval | Quantitative | Mean | Standard deviation |
| Ratio | Quantitative | Mean | Standard deviation |

Note: The number of categories or groups (often designated as k) is the primary expression of variation for nominal level data.

Visual Inspection of a Distribution of Scores

An assumption of the statistical tests that you will study in this course is that the scores for a dependent variable, like a range of heart rate scores, are normally (or approximately normally) distributed. The assumption is first checked by examining a histogram of the distribution. This method is meaningful only for quantitative variables (interval or ratio levels of measurement); it makes no sense to create histograms of nominal or ordinal (categorical) variables. Departures from normality and symmetry are assessed in terms of skew and kurtosis.

Skewness is the extent to which a distribution deviates from symmetry around the mean. A distribution that is positively skewed has a longer tail extending to the right (that is, the "positive" side of the distribution). A distribution that is negatively skewed has a longer tail extending to the left (that is, the "negative" side of the distribution). In contrast to skewness, kurtosis is commonly described as the peakedness of a distribution of scores, although it mainly reflects how heavy the tails are compared with a normal distribution. The use of these terms is not limited to your description of a distribution following a visual inspection. Skew and kurtosis values are included in your list of descriptive statistics and should be reported when analyzing your distribution of scores. Skew and kurtosis values near zero indicate a shape that is symmetric or close to normal, respectively. Values between −1 and +1 are considered ideal, whereas values between −2 and +2 are considered acceptable for psychometric purposes.

Outliers

Outliers are extreme scores in either the left or the right tail of a distribution, and they can influence the overall shape of that distribution. There are a variety of methods for identifying and adjusting for outliers; for example, outliers can be detected by calculating z-scores or by inspecting a box plot. Once an outlier is detected, the researcher must determine how to handle it. The outlier may represent a data entry error that should be corrected. Alternatively, the outlier may be a valid extreme score, which can be left alone, deleted, or transformed. Whatever decision is made regarding an outlier, the researcher must be transparent about it and justify it.

Probability and Hypothesis Testing

The interpretation of inferential statistics requires an understanding of probability and the logic of null hypothesis testing (NHT). The logic and interpretive skills addressed in this assessment are vital to your success in interpreting data in this course, as well as in advanced courses in statistics. It is useful to first consider some general ideas about hypothesis testing to be sure the terminology is clear and automatically understood. Inaccuracies in vocabulary can present barriers that follow you into the advanced concepts and spoil your learning experience, so it is best to clear up common errors before studying the more complex ideas.

A hypothesis is simply a precise statement or prediction of a fact we think is true. The first thing you should note is that we are using two words here: hypothesis and hypotheses.
The only difference is the "is" and "es" in the last two letters. This is simply the distinction between singular and plural: we may examine a single hypothesis, or we may speak of many different hypotheses. The first term rhymes with "this" and the second rhymes with "these."

In fundamental statistics (and even in most advanced methods), we encounter hypotheses of two types. Confusion between these types is a common barrier in learning statistics, so it will be valuable for you to study these ideas carefully in order to provide an advance organizer for the material to come. The two types of hypothesis tests we will study are tests related to (1) group differences and tests related to (2) association between variables. Each of these types of hypothesis tests has two mutually exclusive and conflicting statistical hypotheses, called the null hypothesis and the alternative hypothesis. In each type, the purpose of the statistical test is to show that results like those observed would be improbable if the null hypothesis were true. This is usually done to support the alternative hypothesis, because if the null hypothesis is false, the alternative hypothesis must be true.

Group Differences

A hypothesis of group differences is related to the question of whether or not two or more groups have the same central tendency (such as equal means). There are two possible hypotheses in this category: the groups have equal means, or the groups do not have equal means. The first hypothesis, that the means are equal, is called the null hypothesis. The hypothesis that the groups do not have equal means is the alternative hypothesis. This is true regardless of what the researcher is interested in (whether the researcher wants the groups to be equal or not). It follows from the mathematical structure of the tests and is a constant in the statistical analysis. The purpose of the statistical test is to demonstrate that results like those observed would be very improbable if the null hypothesis were true.
If the null hypothesis is not true, then the alternative hypothesis must be true.

Association Between Variables

The second type of hypothesis concerns whether or not two variables are associated (related or correlated). When two variables are associated, scores or values are analyzed in pairs. The hypothesis is related to the idea that the values of the first variable somehow predict the values of the second. The relationship between the two variables is usually expressed by some type of correlation coefficient, typically an index that varies between −1 and +1. If the correlation between the two variables is zero, then there is no (linear) association between them: the value on the first variable has nothing to do with the value on the second. If the correlation coefficient is positive (between 0 and +1), cases with high values on the first variable tend to have high values on the second. If the correlation coefficient is negative (between −1 and 0), high values on the first variable tend to match up with low values on the second. The magnitude of the correlation coefficient (its distance from zero and closeness to −1 or +1) indicates the strength of the relationship between the two variables. Consider a table of data where students are listed in rows and variables are listed in columns, like a teacher's gradebook. There may be two variables, such as total number of absences in one column and final exam scores in another. Each case (each student) has a value on both of these variables. We might expect students with many absences to tend to have lower final exam scores, so we would expect the correlation between absences and exam scores to be negative. Statistical hypotheses are generally related to questions of whether there is a relationship between the two variables or not.
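The Pearson correlation coefficient is one common way to express the kind of association described above. The sketch below computes it from its definition in Python; the absence and exam-score values are hypothetical numbers invented for illustration (not data from any study), and the function name `pearson_r` is likewise illustrative.

```python
from math import sqrt

def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of paired scores."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length lists with at least 2 pairs")
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    # Cross-products of paired deviations: positive when both scores are above
    # (or both below) their means, negative when they move in opposite directions
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined when a variable has no variability")
    return sxy / sqrt(sxx * syy)

# Hypothetical gradebook: one position per student (illustrative values only)
absences = [0, 1, 2, 4, 6, 9]
final_exam = [94, 90, 85, 80, 72, 60]

r = pearson_r(absences, final_exam)
print(f"r = {r:.2f}")  # negative: more absences go with lower exam scores
```

Dividing the cross-products by the spread of each variable is what keeps r between −1 and +1, so its sign gives the direction and its distance from zero gives the strength of the relationship.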
In this type of statistical test, there are two hypotheses: (1) the correlation between the two variables is zero (the null hypothesis), or (2) the correlation between the two variables is not zero (the alternative hypothesis). This is true regardless of what the researcher is interested in (regardless of whether the researcher wants the variables to be related, or thinks they are related, or not). The purpose of the statistical test is to demonstrate that results like those observed would be very improbable if the null hypothesis were true. If the null hypothesis is not true, then the alternative hypothesis must be true.

The Standard Normal Distribution and z-Scores

A student receives an intelligence quotient (IQ) score of 115 on a standardized intelligence test. What is his or her percentile rank? To calculate the percentile rank, you must understand the logic and application of the standard normal distribution. The advantage of the standard normal distribution is that the proportion of the area under the curve between any two z values is constant. This constancy allows for the calculation of the percentile rank of an individual score X for a given distribution of scores. When a population mean (µ) and population standard deviation (σ) are known, such as for the distribution of scores on a standardized intelligence test, the standard normal distribution determines the percentile rank of a given X score (for example, an IQ score of 100 falls at the 50th percentile). About two-thirds of scores (68.27%) fall within ±1 population standard deviation of the population mean. Approximately 95 percent of scores fall within ±2 population standard deviations, and approximately 99.7 percent fall within ±3 population standard deviations. Knowing this, we can begin the process of determining a percentile rank from an individual score.

Consider a standardized IQ test where µ = 100 and σ = 15. A standard score, or z-score, rescales individual X scores so that µ = 0 and σ = 1. The formula for a z-score is $z = (X - \mu) / \sigma$. For example, an IQ score of X = 100 would be rescaled to z = 0.00 [(100 − 100) ÷ 15 = 0].
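The z-score formula and the standard normal areas described above can be sketched in Python with the standard library's `statistics.NormalDist` (Python 3.8 or later). The IQ parameters µ = 100 and σ = 15 come from the text; the helper names `z_score`, `percentile_rank`, and `is_extreme`, and the use of the ±1.96 cutoff to flag "extreme" scores, are illustrative choices, not part of the original material. Percentile ranks computed this way assume the scores really are normally distributed.

```python
from statistics import NormalDist

MU, SIGMA = 100, 15           # standardized IQ test parameters from the text
STANDARD = NormalDist(0, 1)   # the standard normal distribution (mean 0, SD 1)

def z_score(x, mu=MU, sigma=SIGMA):
    """Rescale a raw score X to a z-score: (X - mu) / sigma."""
    return (x - mu) / sigma

def percentile_rank(x, mu=MU, sigma=SIGMA):
    """Percentage of the population scoring at or below X (assumes normality)."""
    return 100 * STANDARD.cdf(z_score(x, mu, sigma))

for iq in (100, 85, 130, 115):
    print(iq, z_score(iq), round(percentile_rank(iq), 2))

# Proportion of the area within +/-1, 2, and 3 standard deviations of the mean
# (about 0.6827, 0.9545, and 0.9973)
for k in (1, 2, 3):
    print(k, round(STANDARD.cdf(k) - STANDARD.cdf(-k), 4))

# Two-tailed 5% cutoff: about 2.5% of the area lies beyond each side
cutoff = STANDARD.inv_cdf(0.975)
print(round(cutoff, 2))  # 1.96

def is_extreme(x, mu=MU, sigma=SIGMA, cutoff=cutoff):
    """Flag a score whose z-score lies beyond +/- cutoff (one way to screen outliers)."""
    return abs(z_score(x, mu, sigma)) > cutoff
```

For the opening question, an IQ of 115 gives z = 1.00 and a percentile rank of about 84.13.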

A z-score of 0 means that the X score is 0 standard deviations above or below the mean. An IQ score of X = 85 would be rescaled to z = −1.00 [(85 − 100) ÷ 15 = −1.00]. A z-score of −1 represents an IQ score 1 standard deviation below the mean. An IQ score of 130 would be rescaled to z = 2.00 [(130 − 100) ÷ 15 = 2.00]. A z-score of 2 represents an IQ score 2 standard deviations above the mean. In short, a negative z-score falls to the left of the population mean, whereas a positive z-score falls to the right of it. Once the z-score for a given X score is calculated, its percentile rank can be determined, and the proportion of the area under the curve above or below it can also be calculated. An important z-score is ±1.96: 95 percent of the area under the curve falls between −1.96 and +1.96, whereas 2.5 percent falls to the left and 2.5 percent falls to the right (2.5% + 2.5% = 5%). A z-score beyond these cutoffs is typically considered to be "extreme" (Warner, 2013). In addition, we will see below that most inferential statistics set "statistical significance" at obtained probability values of less than 5 percent.

Hypothesis Testing

Probability is crucial for hypothesis testing. In hypothesis testing, you want to know how likely it is that your results occurred by chance. As discussed in the introduction to hypothesis testing, we are generally concerned with how probable our results would be if the null hypothesis were true (recall that a null hypothesis is a hypothesis that two group means are equal, or that two variables have no relationship). Even when two groups have equal means in a population, taking random samples from those two groups, computing their means, and examining the difference between those means will rarely yield a difference of exactly zero. Because the samples are selected randomly and do not contain all members of the population, there will tend to be some small or negligible difference in means for almost any two samples. These differences are due to chance alone in the random selection process. In the same way, whenever two variables are completely unrelated in a population, random selection of a small sample will rarely result in the two variables having exactly zero correlation in that sample. There will be some small amount of correlation between the two variables in most random samples by chance alone.

No matter how unlikely, there is always the possibility that your results occurred by chance, even if that probability is less than 1 in 20 (5%). However, you are likely to feel more confident in your inferences if the probability that your results occurred by chance is less than 5 percent rather than, say, 50 percent. Most psychologists find it reasonable to designate a less than 5 percent chance as the cutoff point for determining statistical significance. This cutoff point is referred to as the alpha level. An alpha level is set to determine when a researcher will reject or fail to reject a null hypothesis (discussed next). The alpha level is set before data are analyzed to avoid "fishing" for statistical significance. In high-stakes research (for example, testing a new cancer drug), researchers may want to be even more conservative in designating an alpha level, such as less than 1 in 100 (1%) that the results are due to chance.

Null and Alternative Hypotheses

The null hypothesis ($H_0$) is a statement about the difference between a group mean and a given population parameter, such as a population mean of 100 on IQ, or about the difference between two group means. Imagine that we ask two groups of students to complete a standardized IQ test, and then we calculate the mean IQ score for each group. We observe that the mean IQ for Group A is 100 ($M_A = 100$), whereas the mean IQ for Group B is 115 ($M_B = 115$). Is a mean difference of 15 IQ points statistically significant or just due to chance? The null hypothesis predicts that $H_0: M_A = M_B$.
That is, the null hypothesis predicts "no" difference between groups. Remember that "null" also means "zero," so we could also state the null hypothesis as $H_0: M_A - M_B = 0$. When comparing groups, in general, the null hypothesis predicts that group means will not differ. When testing the strength of a relationship between two variables, such as the correlation between IQ scores and grade point average (GPA), the null hypothesis is, in general, that the relationship (expressed as a single correlation coefficient) between Variable x and Variable y is zero. By contrast, the alternative hypothesis ($H_1$) predicts a difference between two groups or, in the case of relationships, that the two variables are related. An alternative hypothesis can be directional (for example, $H_1$: Group A has a higher mean score than Group B) or nondirectional ($H_1$: Group A and Group B will differ).

In hypothesis testing, you either reject or fail to reject the null hypothesis. Note that failing to reject is not the same as stating, "accept the null hypothesis as true." By default, if you reject the null hypothesis, you accept the alternative hypothesis (because the hypotheses are mutually exclusive). However, if you do not reject the null hypothesis, you cannot accept the null hypothesis as true. You have simply failed to find statistical justification to reject the null hypothesis, which was assumed to begin with; the test provided no evidence for it. The test was done only to try to show that the null hypothesis was false, in order to support the claim that the alternative hypothesis was true.

Type I and Type II Errors

If you commit a Type I error, you have incorrectly rejected a true null hypothesis. You have incorrectly concluded that there is a significant difference between groups, or a significant relationship, where no such difference or relationship actually exists.
Type I errors have real-world significance, such as concluding that an expensive new cancer drug works when it actually does not, costing money and potentially endangering lives. Keep in mind that you will probably never know whether the null hypothesis is "true" or not, as we can only determine whether our data reject it or fail to reject it.
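Two ideas from this section, that sample means rarely match exactly even when population means are equal and that a true null hypothesis is still rejected some of the time, can be illustrated with a small Monte Carlo simulation. This is a sketch under stated assumptions: both groups are drawn from the same normal population of IQ scores (µ = 100, σ = 15), σ is treated as known so that a two-sample z test can be computed with Python's standard library alone (the t-tests covered later in the course do not require a known σ), and the sample size of 30 per group, the number of repetitions, the random seed, and the function names are arbitrary illustrative choices.

```python
import random
from statistics import NormalDist, fmean

def two_sample_z_p(a, b, sigma):
    """Two-tailed p value for a difference in means, assuming a known population sigma."""
    se = sigma * (1 / len(a) + 1 / len(b)) ** 0.5   # standard error of the difference
    z = (fmean(a) - fmean(b)) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))

def simulate_true_null(reps=10_000, n=30, mu=100, sigma=15, alpha=0.05, seed=1):
    """Draw both groups from the SAME population, so the null hypothesis is true."""
    rng = random.Random(seed)
    exact_ties = 0         # sample means that happen to be exactly equal
    false_rejections = 0   # Type I errors: rejecting a true null hypothesis
    for _ in range(reps):
        a = [rng.gauss(mu, sigma) for _ in range(n)]
        b = [rng.gauss(mu, sigma) for _ in range(n)]
        if fmean(a) == fmean(b):
            exact_ties += 1
        if two_sample_z_p(a, b, sigma) < alpha:
            false_rejections += 1
    return exact_ties, false_rejections / reps

ties, type_i_rate = simulate_true_null()
print(ties)                   # exact ties are vanishingly unlikely, so expect 0
print(round(type_i_rate, 3))  # should land close to alpha = .05
```

Because every comparison here is made under a true null hypothesis, each rejection is a Type I error, and the long-run proportion of rejections tracks the chosen alpha level.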

If you commit a Type II error, you have NOT rejected a false null hypothesis when you should have rejected it. You have incorrectly concluded that no differences or relationships exist when they actually do exist. Type II errors also have real-world significance, such as concluding that a new cancer drug does not work when it actually does work and could save lives. Your alpha level will affect the likelihood of making a Type I or a Type II error. If your alpha level is small (for example, .01, a less than 1 in 100 chance), you are less likely to reject the null hypothesis, so you are less likely to commit a Type I error. However, you are more likely to commit a Type II error. You can decrease the chances of committing a Type II error by increasing the alpha level (for example, to .10, a less than 1 in 10 chance). However, you are then more likely to commit a Type I error. Because the chances of committing Type I and Type II errors are inversely related, you will have to decide which type of error is more serious. You need to assess the risk associated with each type of error, and your research questions will help in this decision. In standard psychological research, the alpha level is set to .05 (that is, a 1 in 20 chance of committing a Type I error when the null hypothesis is true). An alpha level of .05 is used throughout the remainder of this course.

Probability Values and the Null Hypothesis

The statistic used to determine whether or not to reject a null hypothesis is referred to as the calculated probability value, or p value, denoted p. When you run an inferential statistic in SPSS, it will provide you with a p value for that statistic (SPSS labels p values as "Sig."). If the test statistic has a probability value of less than 1 in 20 (.05), we can say "p < .05, the null hypothesis is rejected." Keep in mind in the coming weeks that we are looking for values less than .05 to reject the null hypothesis.
This may seem counterintuitive at first, because usually we assume that "bigger is better." In null hypothesis testing, the opposite is the case: if we expect to reject a null hypothesis, remember that, for p values, "smaller is better." Any p value less than .05, such as .02, .01, or .001, means that we reject the null hypothesis. Any p value greater than .05, such as .15, .33, or .78, means that we do not reject the null hypothesis. Make sure you understand this point, as it is a common area of confusion among statistics learners.

Based on your understanding of the null hypothesis, the alternative hypothesis, the alpha level, and the p value, you can begin to make statements about your research results. If your results fall within the rejection region (that is, p < .05), you can claim that they are "statistically significant," and you reject the null hypothesis. In other words, you will conclude that groups do differ in some way or that two variables are significantly related. If the results do not fall within the rejection region, you cannot make this claim; your data fail to reject the null hypothesis. In other words, you cannot conclude that the groups differ in some way, or that the two variables are related.

This assessment covers the terminology and concepts behind hypothesis testing, which prepares you for the remaining assessments in this course. The statistical tests include:

· Correlations.
· t-Tests.
· Analysis of Variance.

References

Farris, J. R. (2012). Organizational commitment and job satisfaction: A quantitative investigation of the relationships between affective, continuance, and normative constructs (Doctoral dissertation, Capella University). Available from ProQuest Dissertations and Theses database. (UMI No. 3527687)

Field, A. (2009). Discovering statistics using SPSS (3rd ed.). Thousand Oaks, CA: Sage Publications.

George, D., & Mallery, P. (2014). IBM SPSS statistics 21 step by step: A simple guide and reference (13th ed.). Upper Saddle River, NJ: Pearson.

Lane, D. M. (2013). HyperStat online statistics textbook. Retrieved from http://davidmlane.com/hyperstat/index.html

Onwuegbuzie, A. J. (1999). Statistics anxiety among African American graduate students: An affective filter? Journal of Black Psychology, 25, 189–209.

Pan, W., & Tang, M. (2004). Examining the effectiveness of innovative instructional methods on reducing statistics anxiety for graduate students in the social sciences. Journal of Instructional Psychology, 31, 149–159.

Scoville, D. M. (2012). Hope and coping strategies among individuals with chronic neuropathic pain (Doctoral dissertation, Capella University). Available from ProQuest Dissertations and Theses database. (UMI No. 3518448)

Stewart, A. M. (2012). An examination of bullying from the perspectives of public and private high school children (Doctoral dissertation, Capella University). Available from ProQuest Dissertations and Theses database. (UMI No. 3505818)

Warner, R. M. (2013). Applied statistics: From bivariate through multivariate techniques (2nd ed.). Thousand Oaks, CA: Sage.