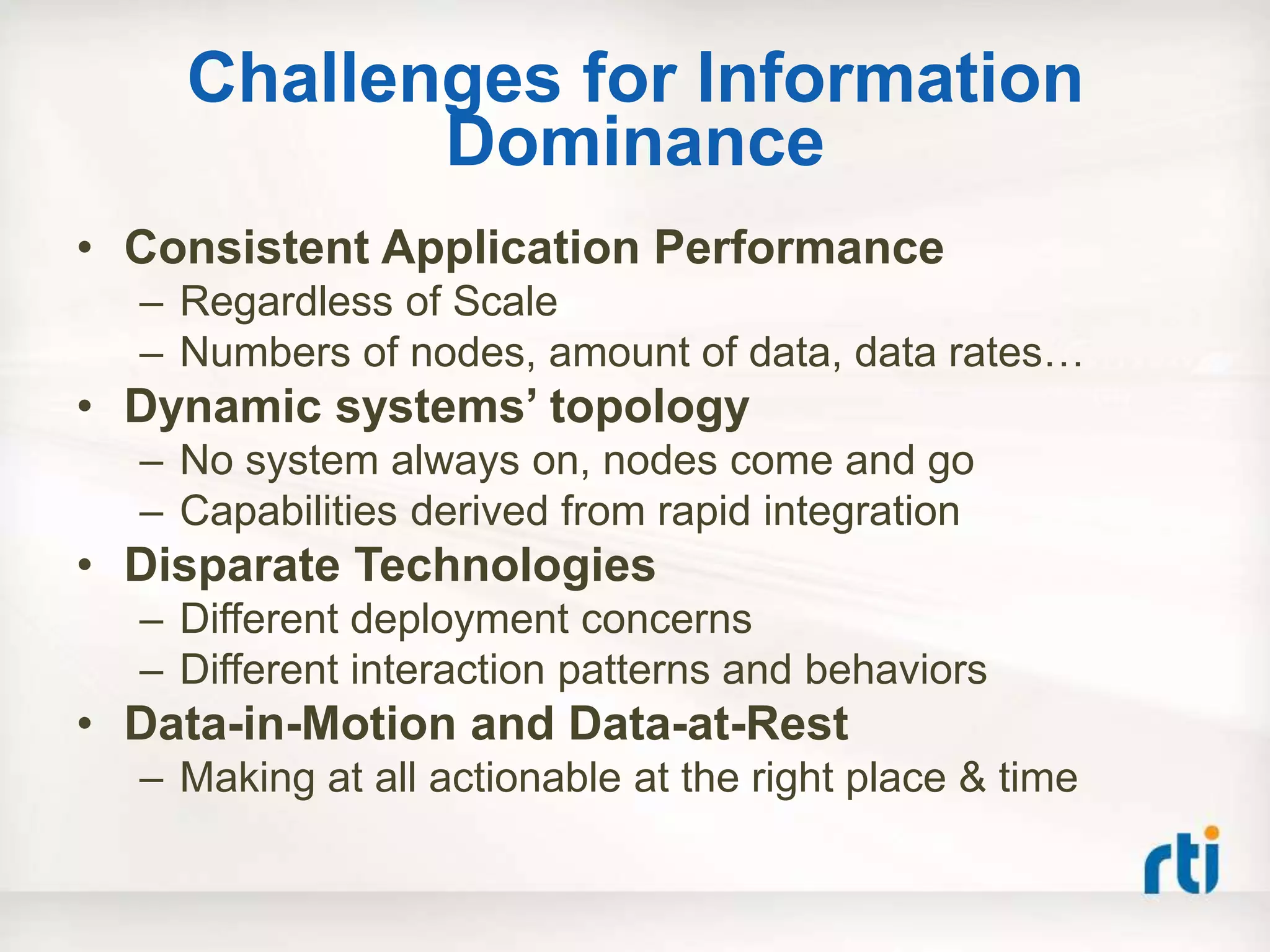

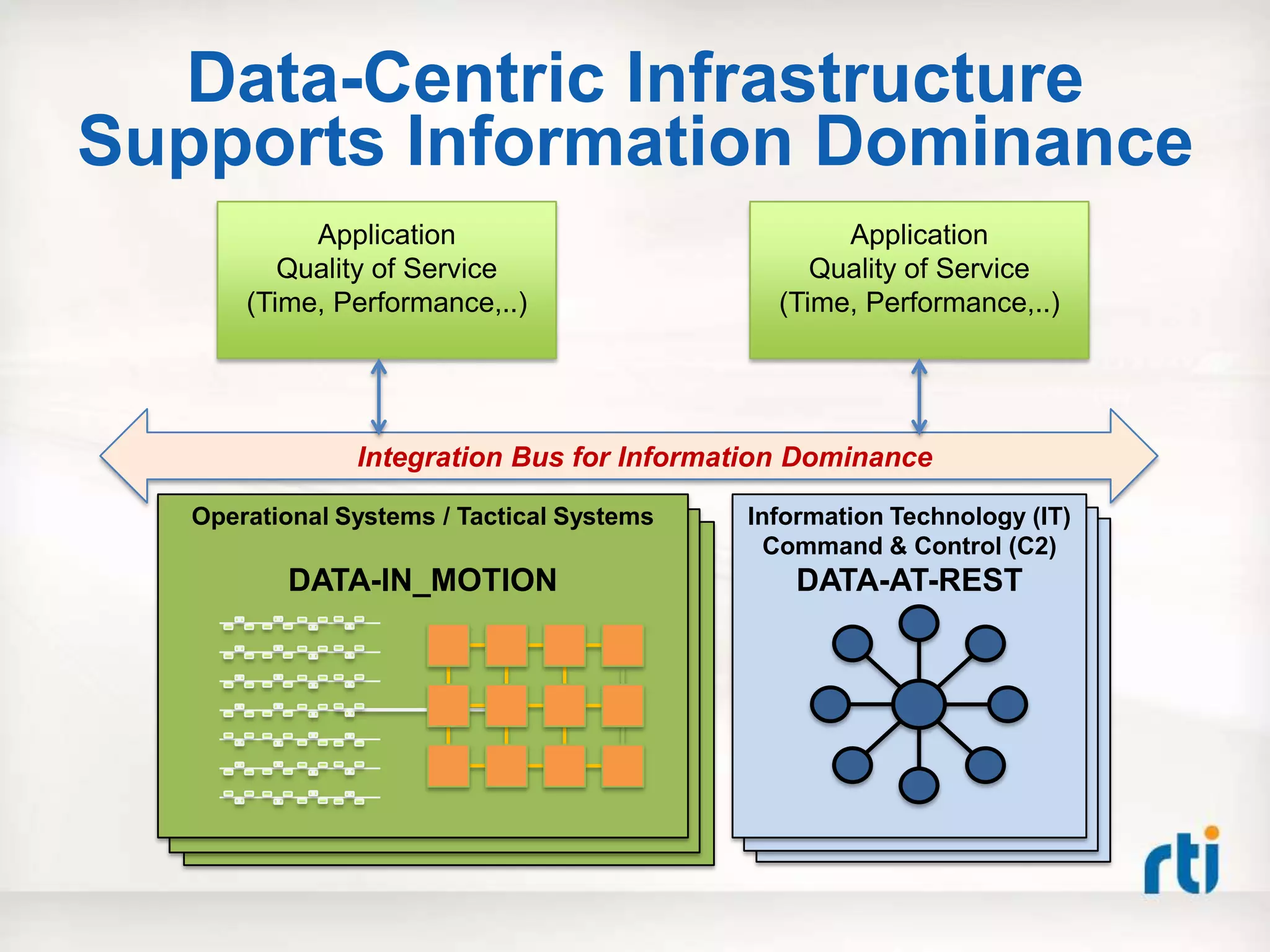

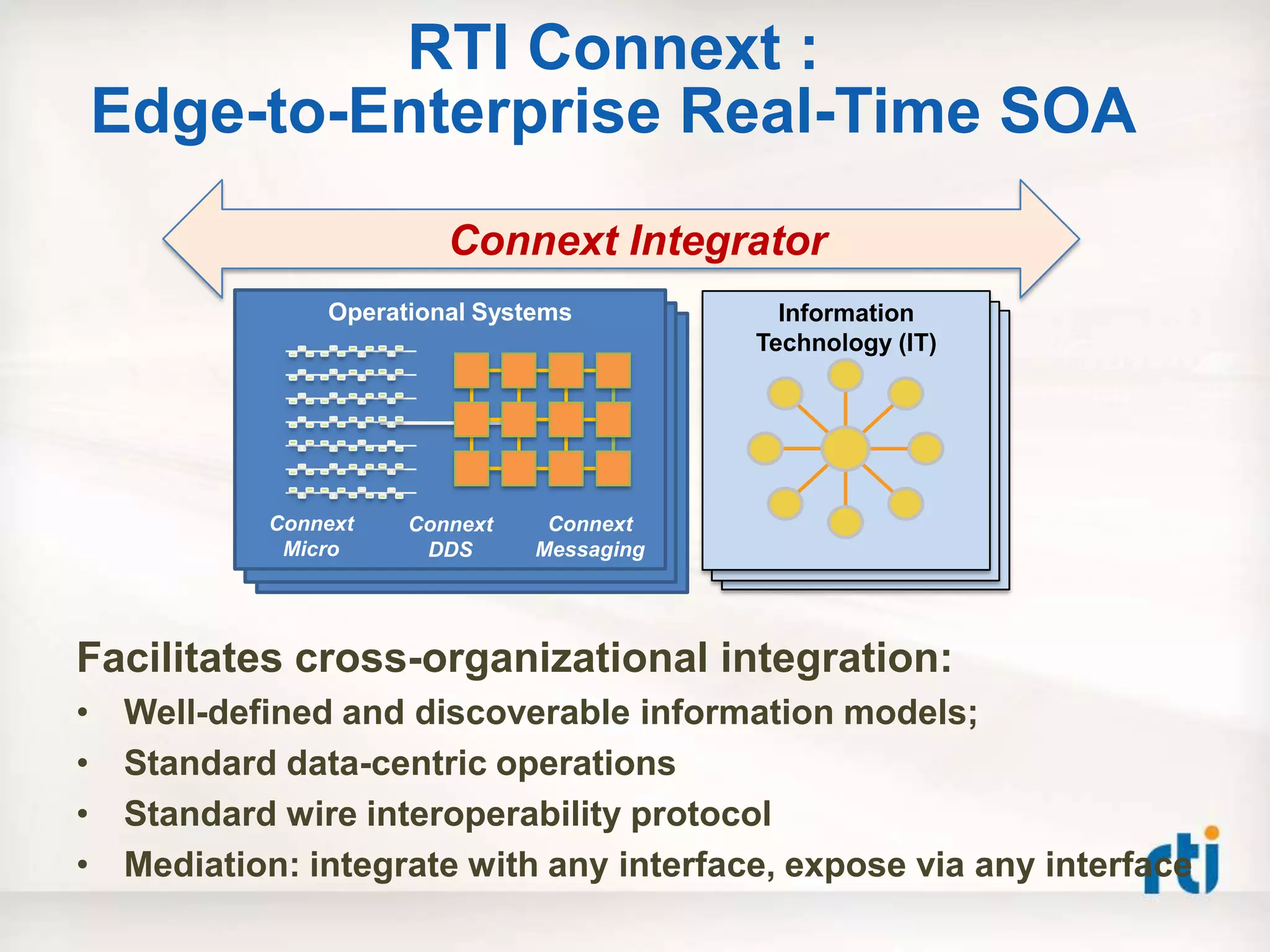

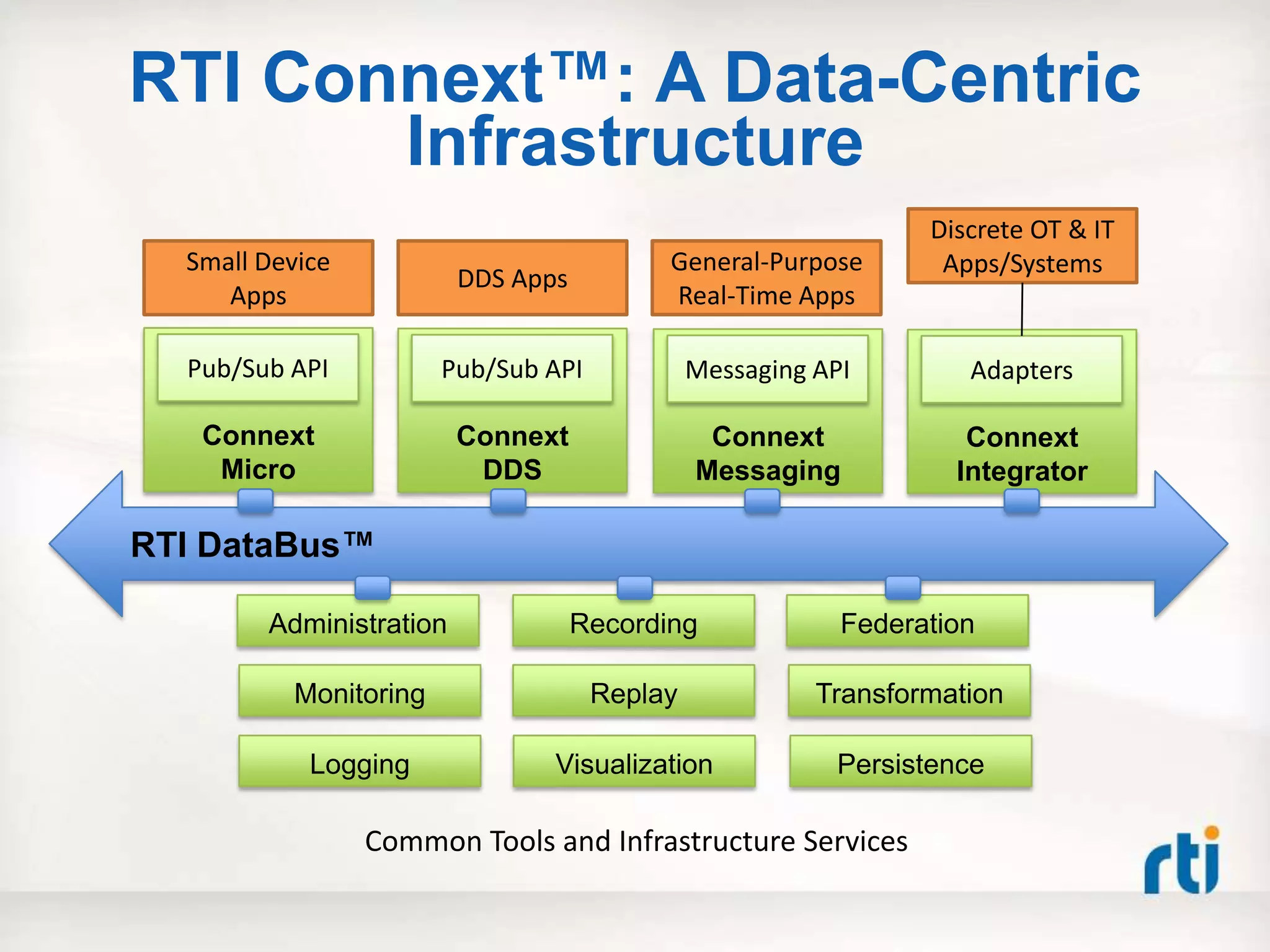

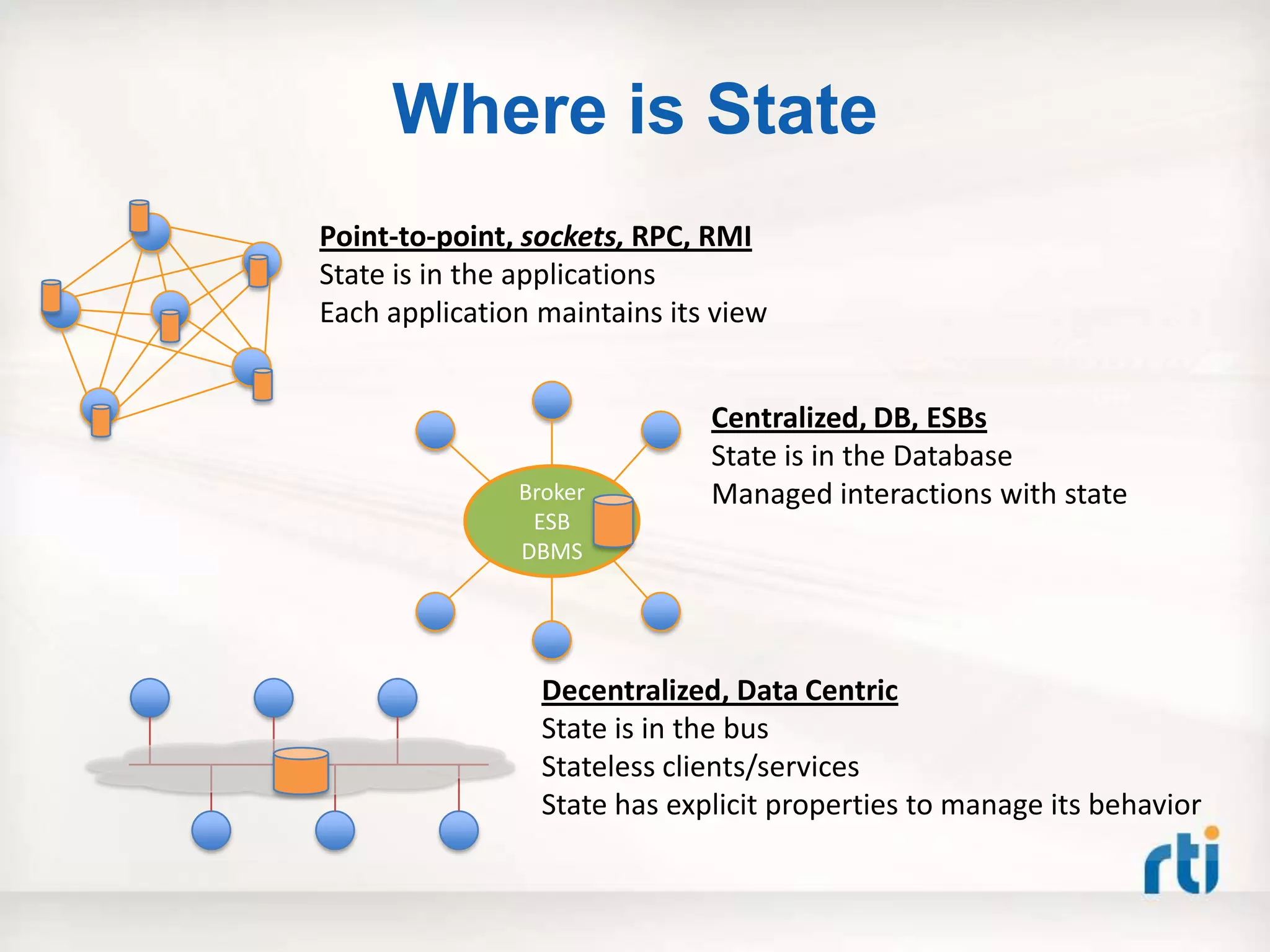

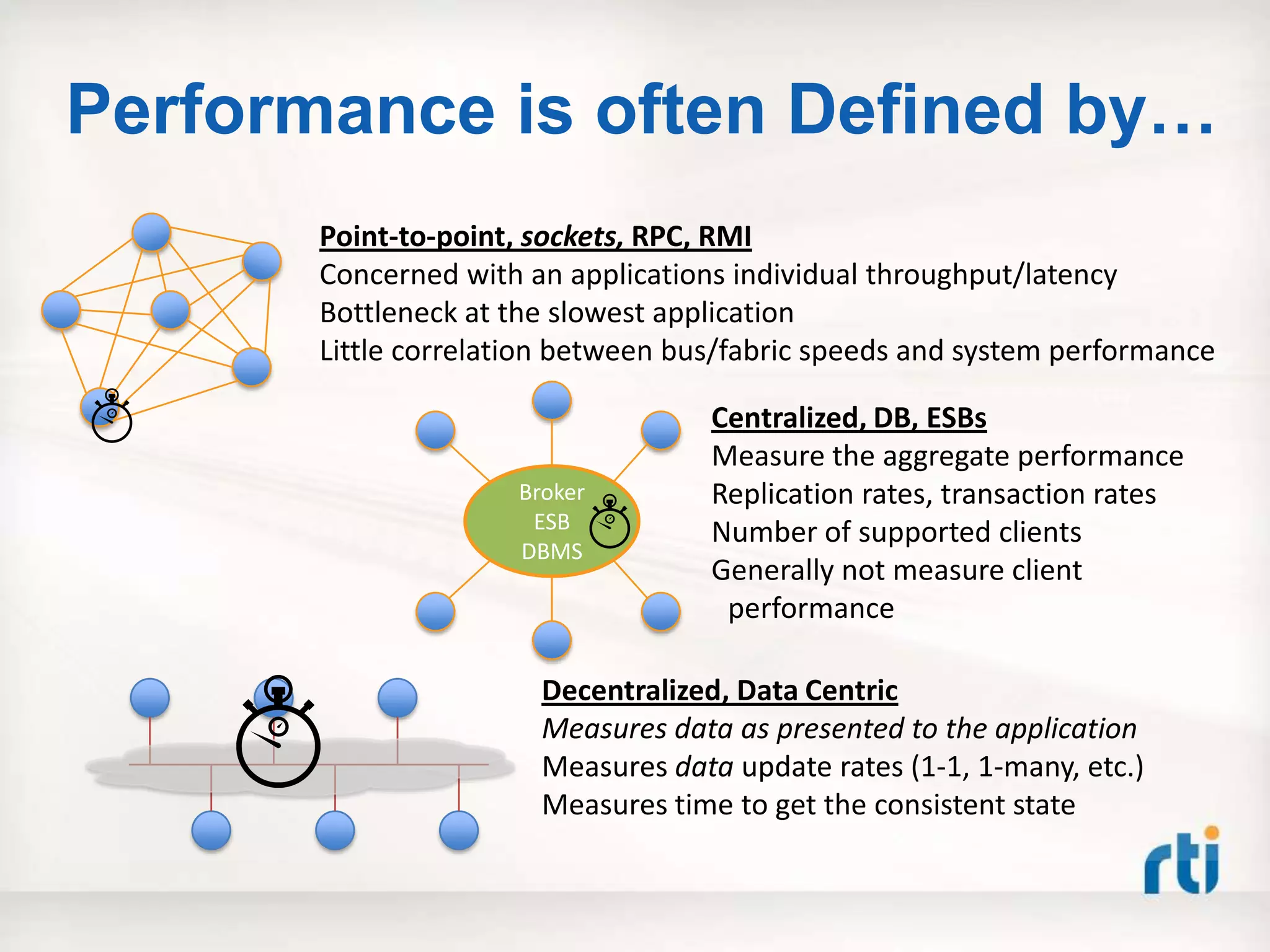

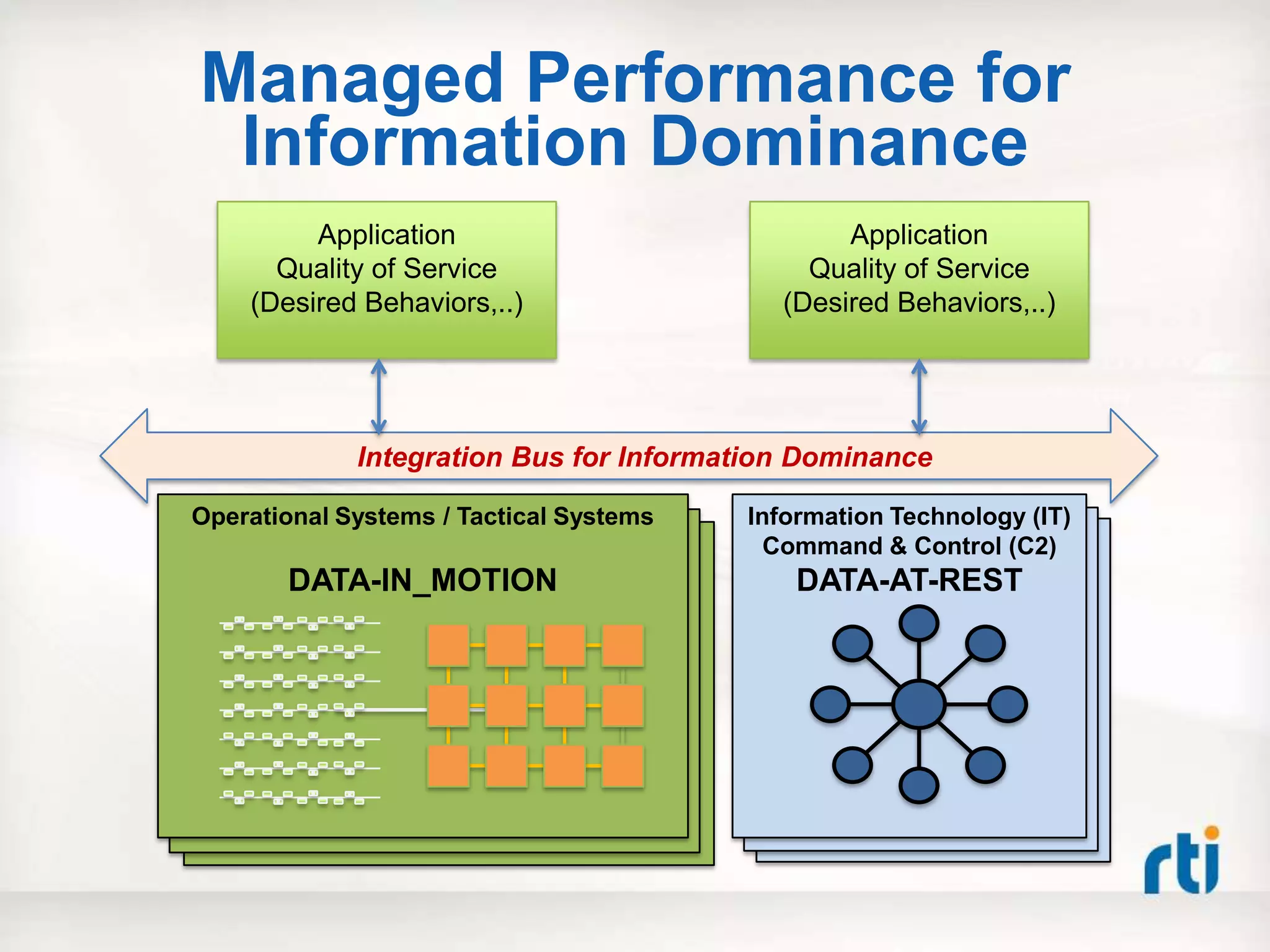

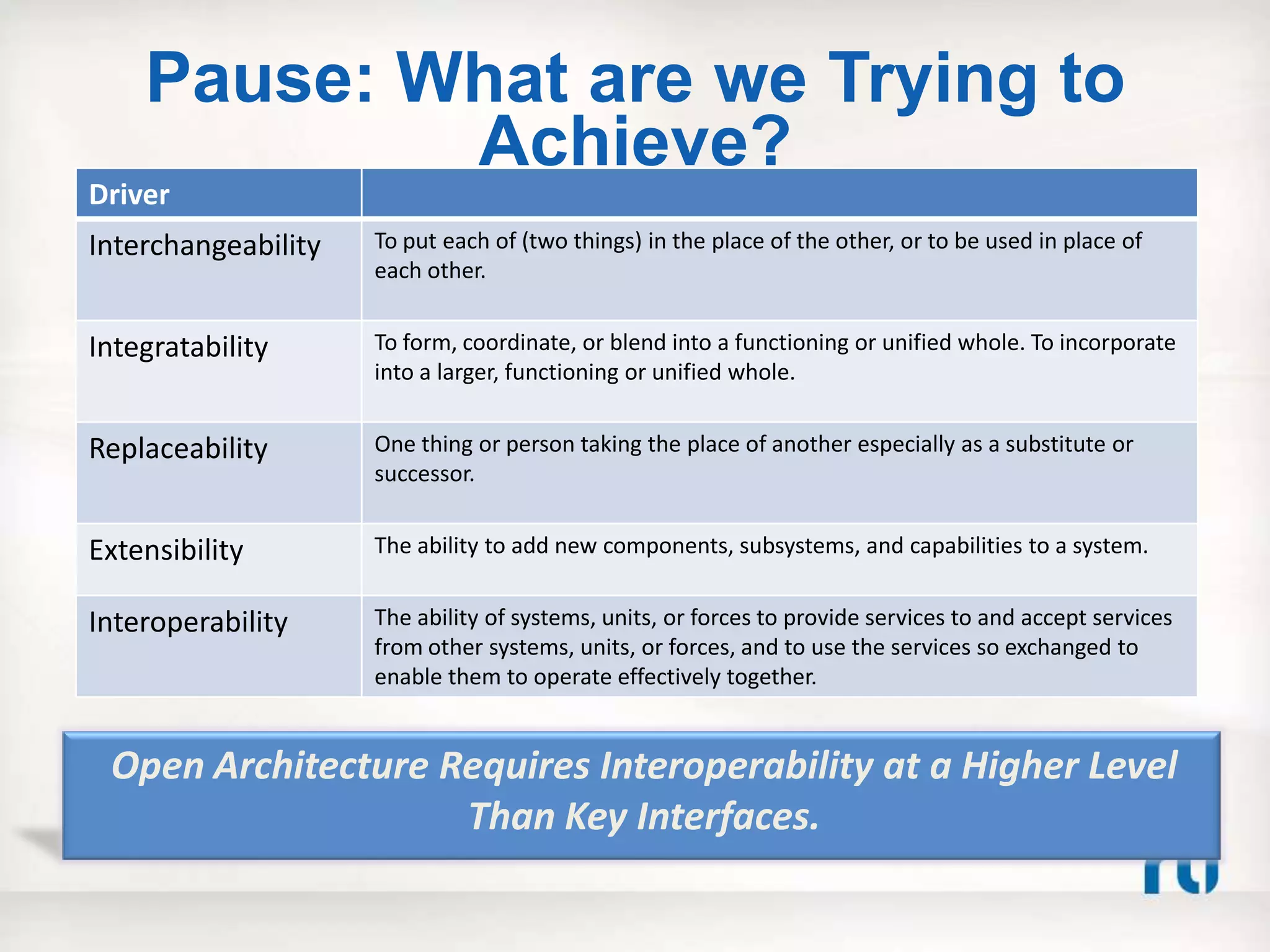

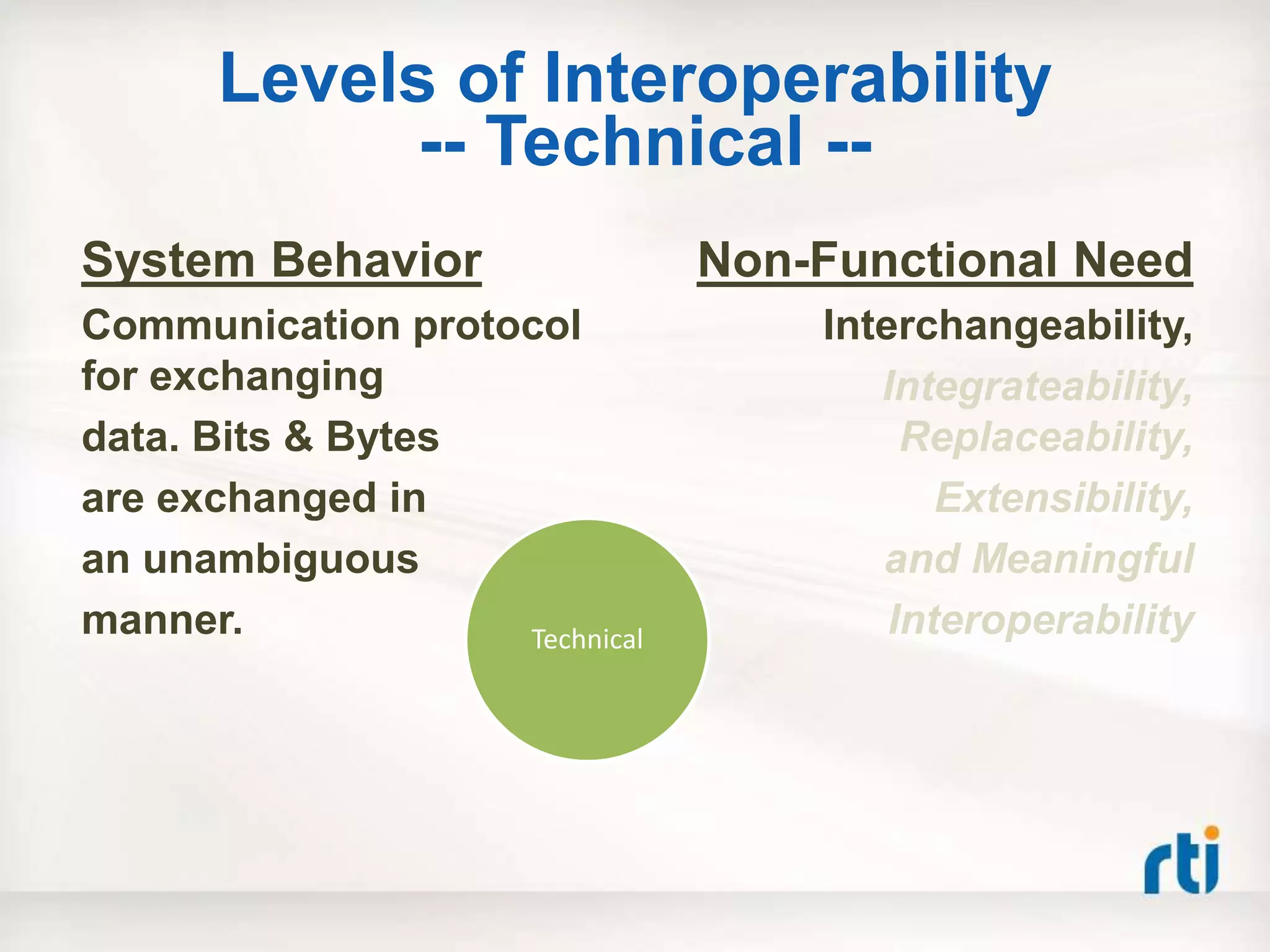

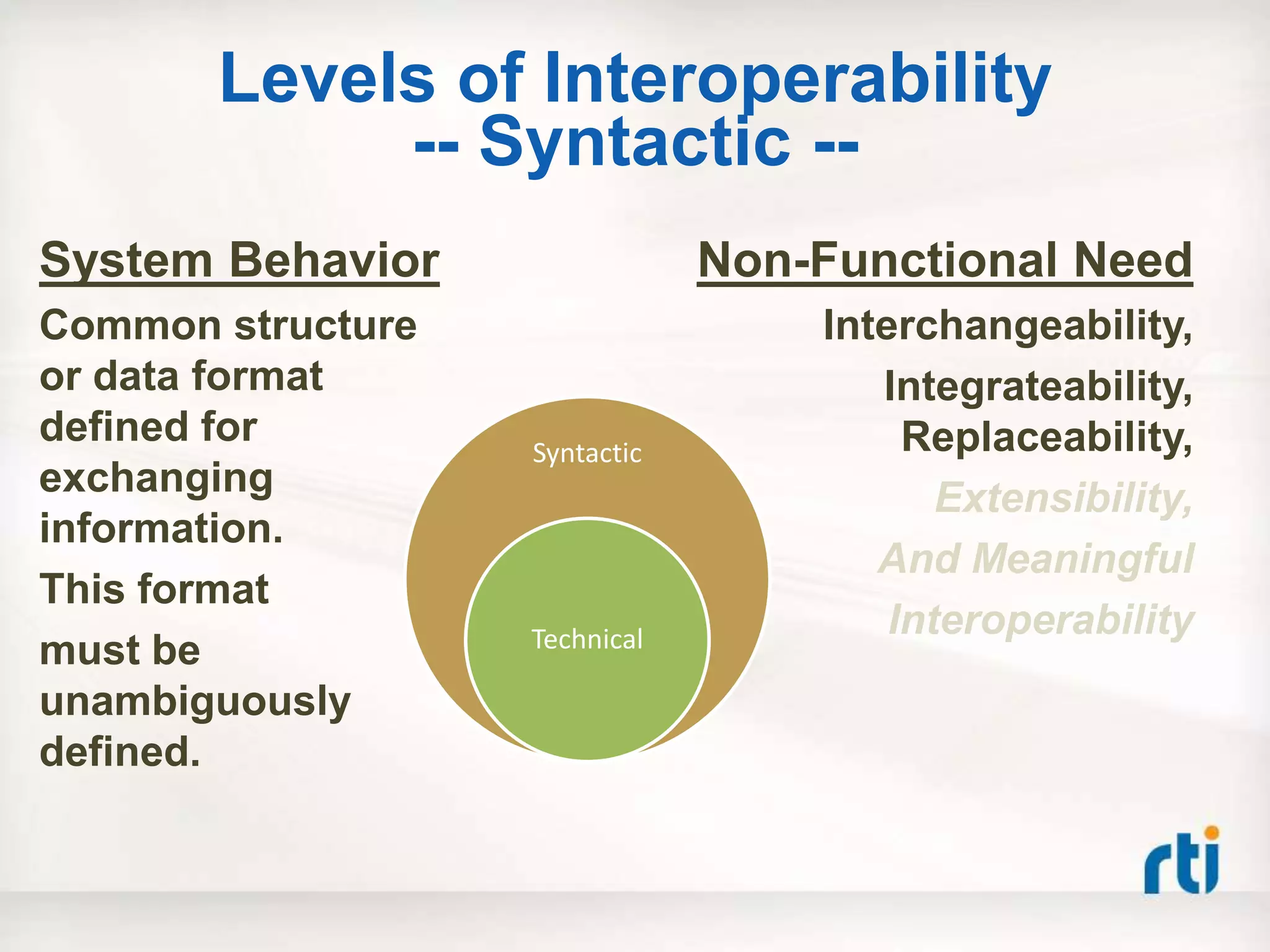

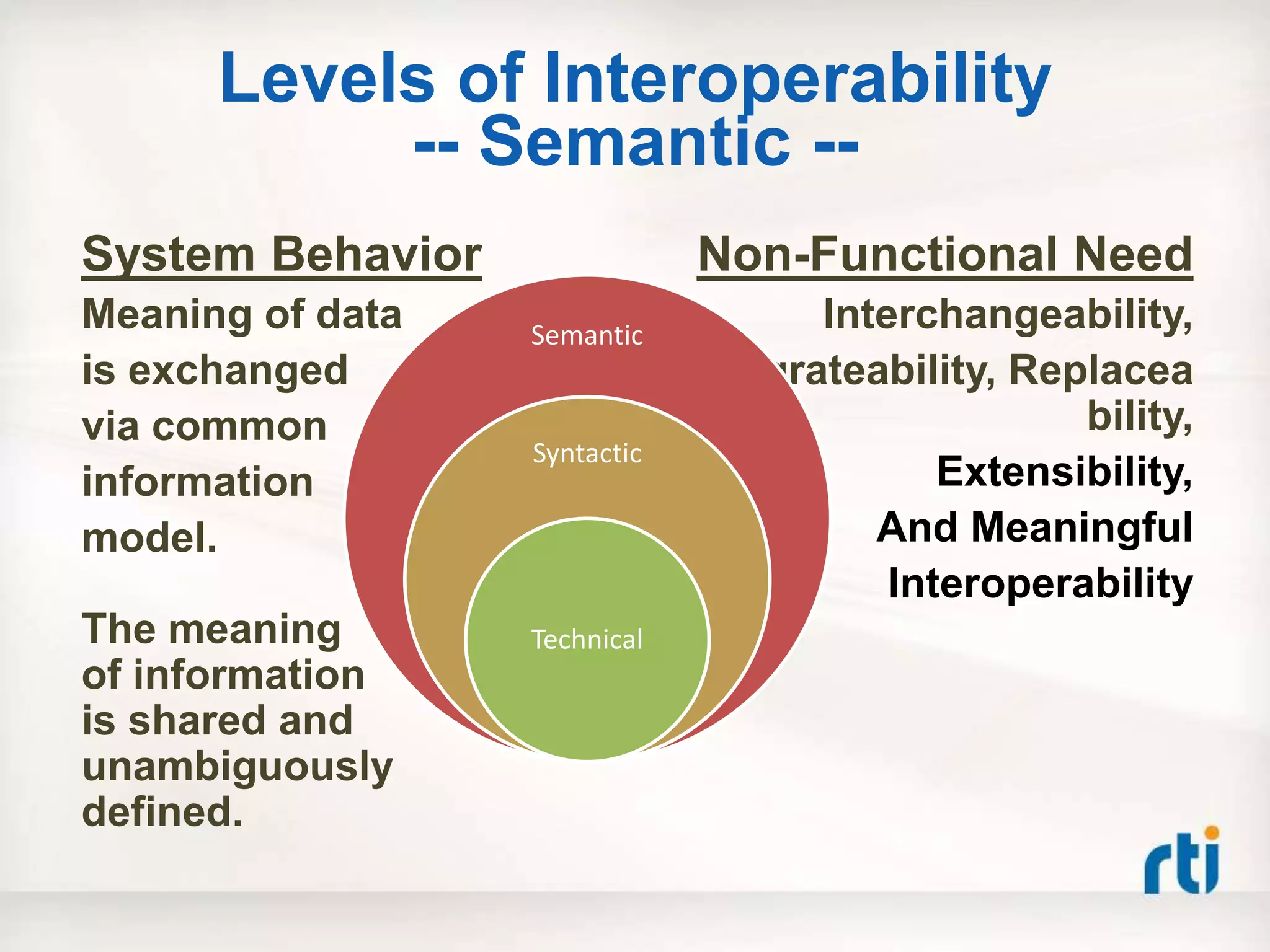

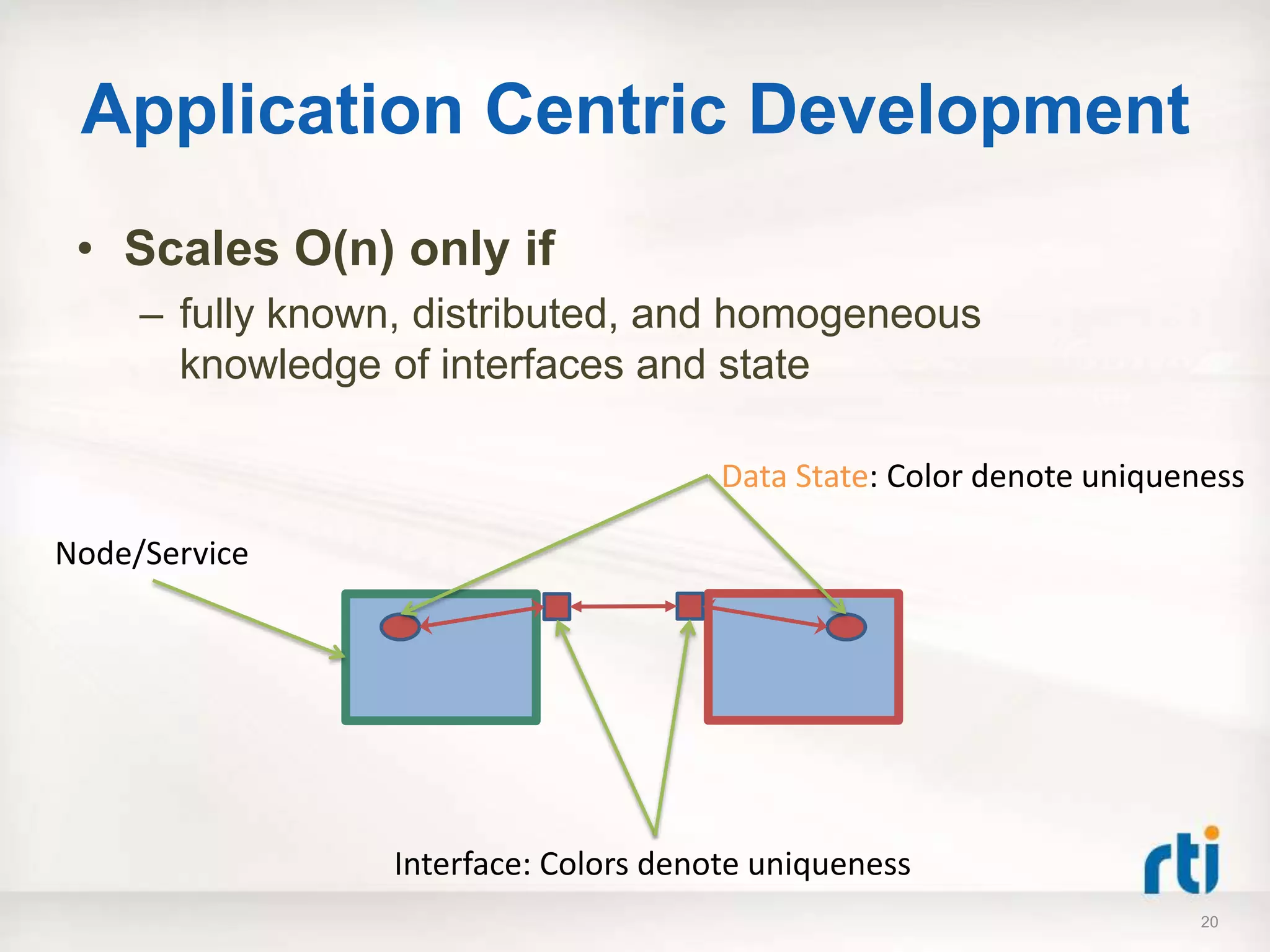

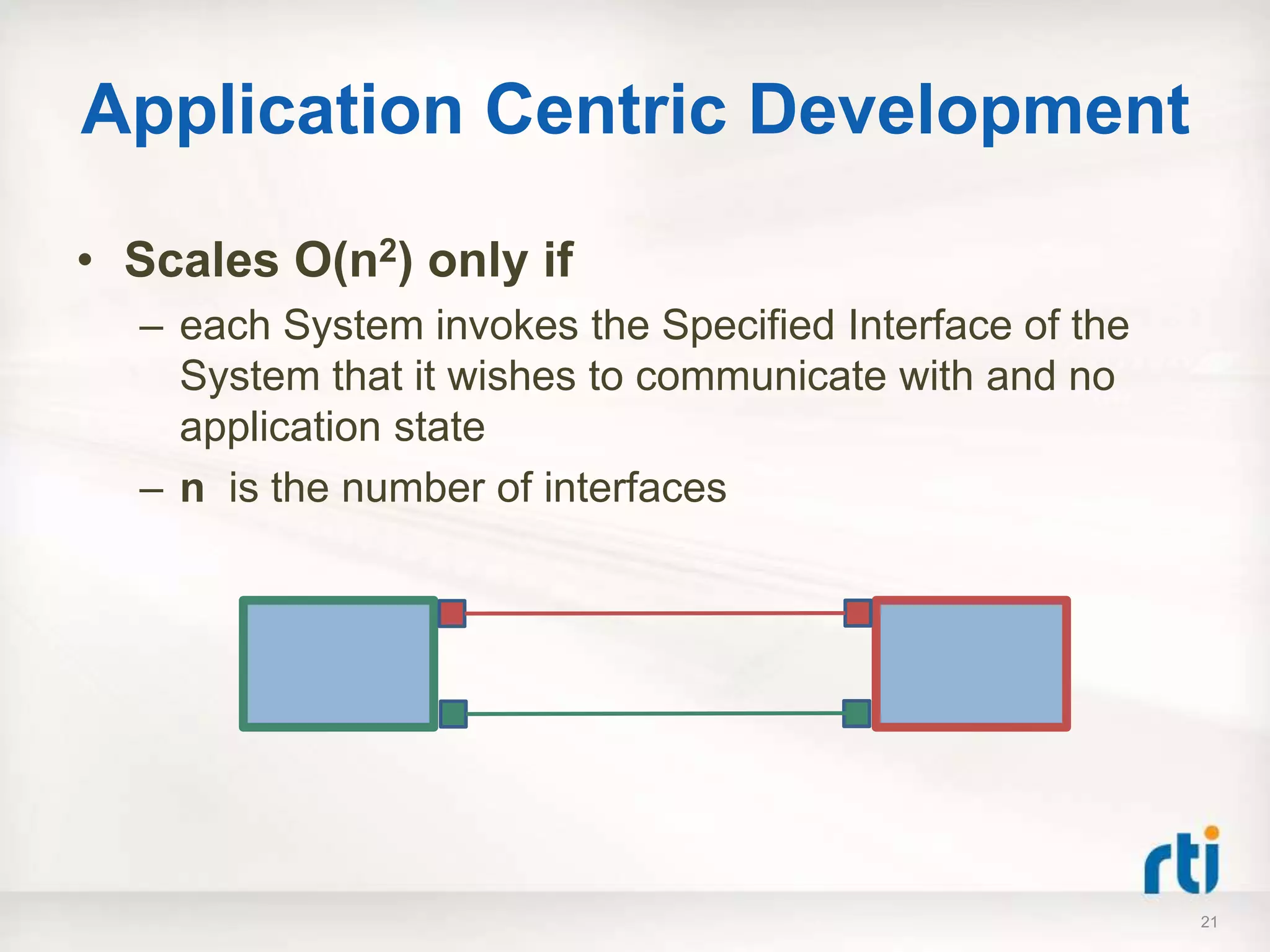

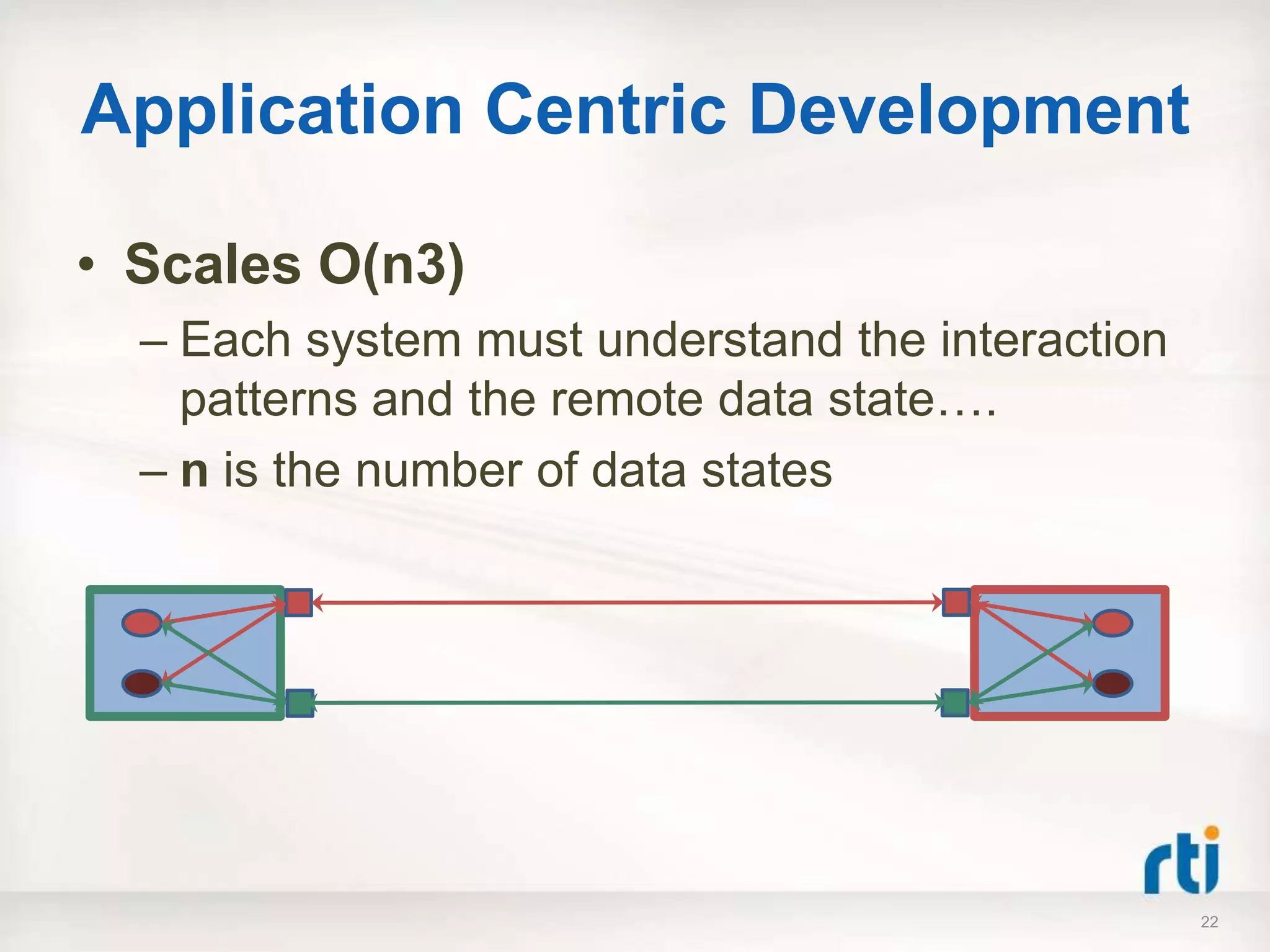

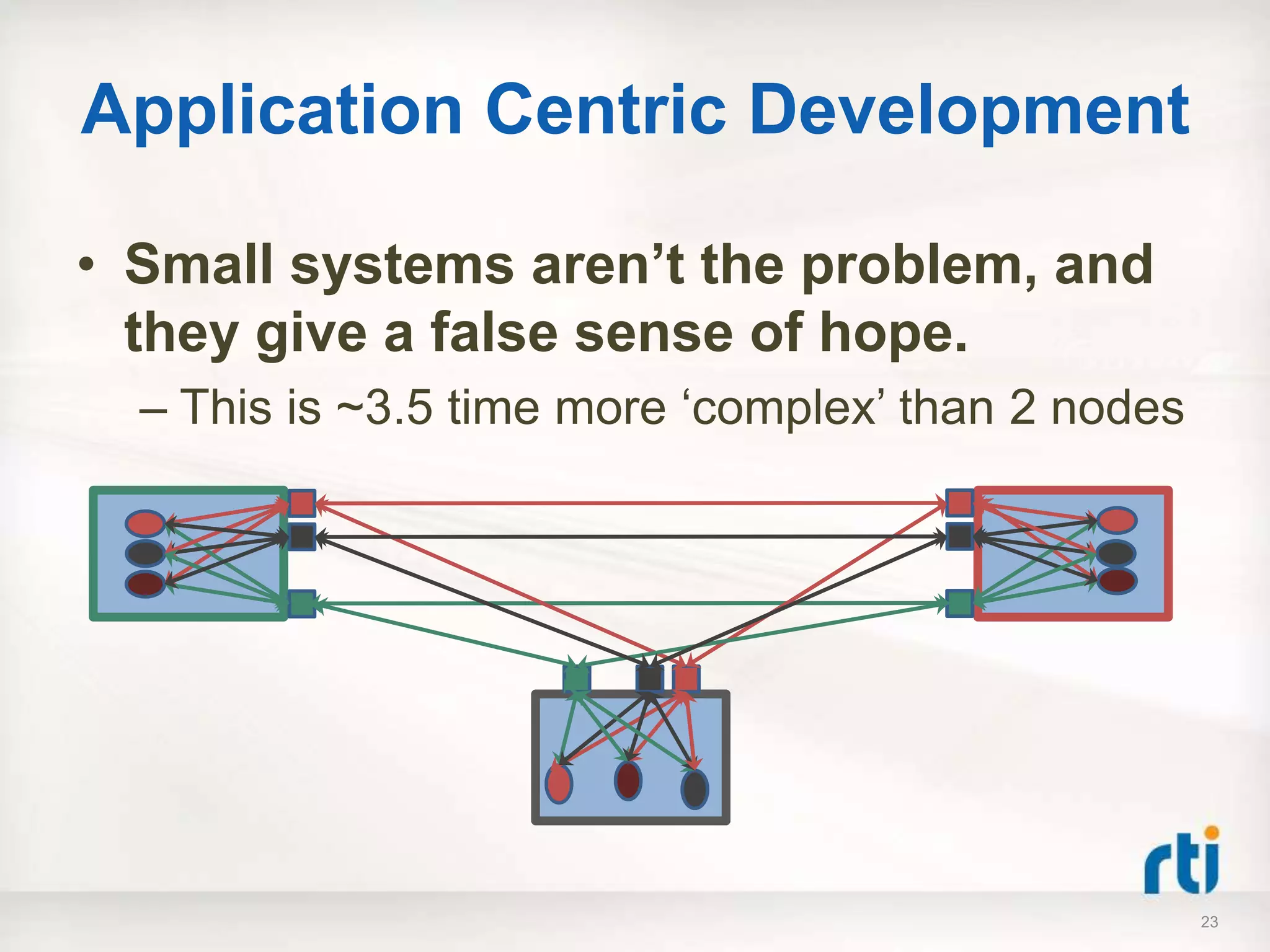

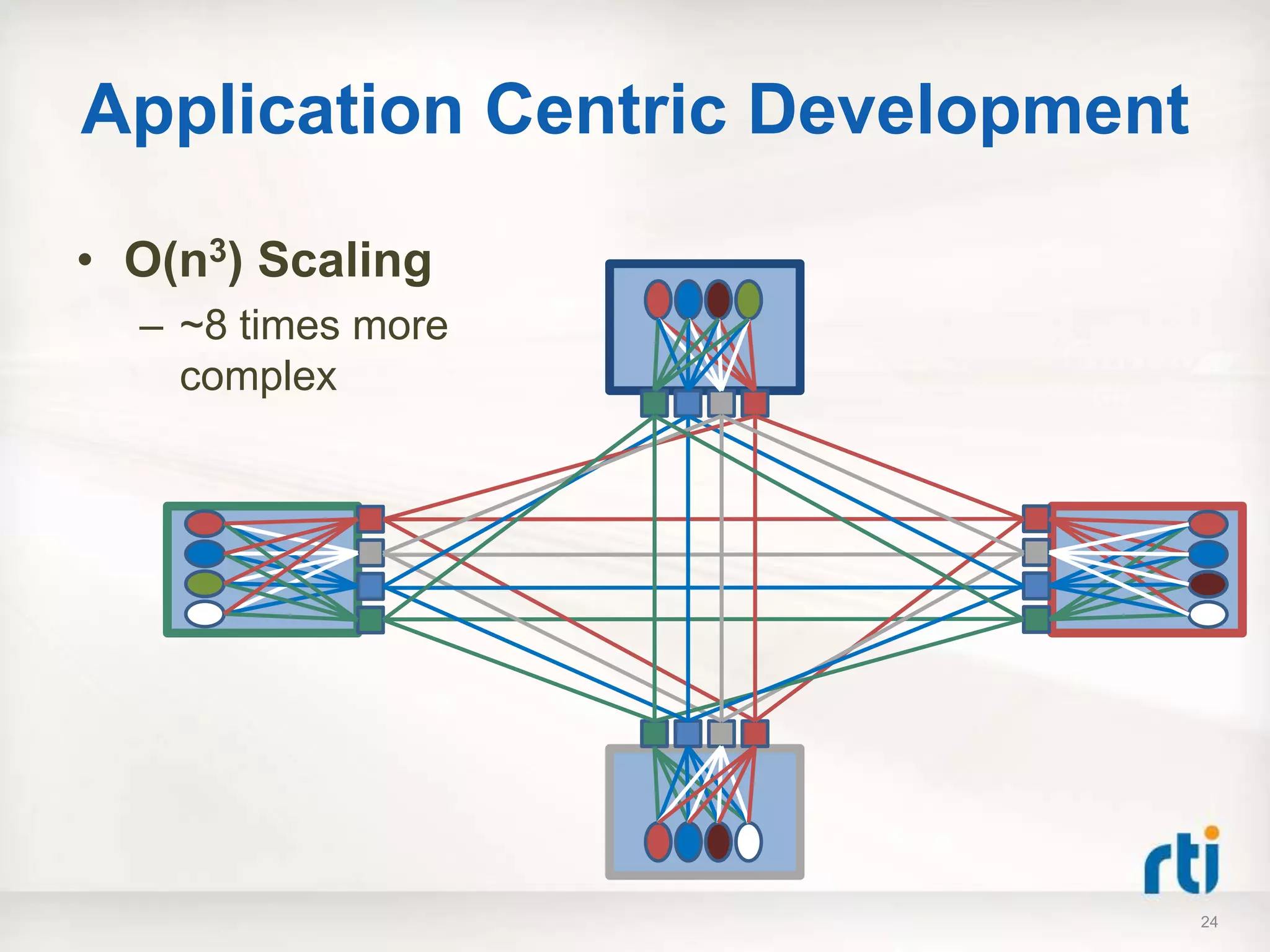

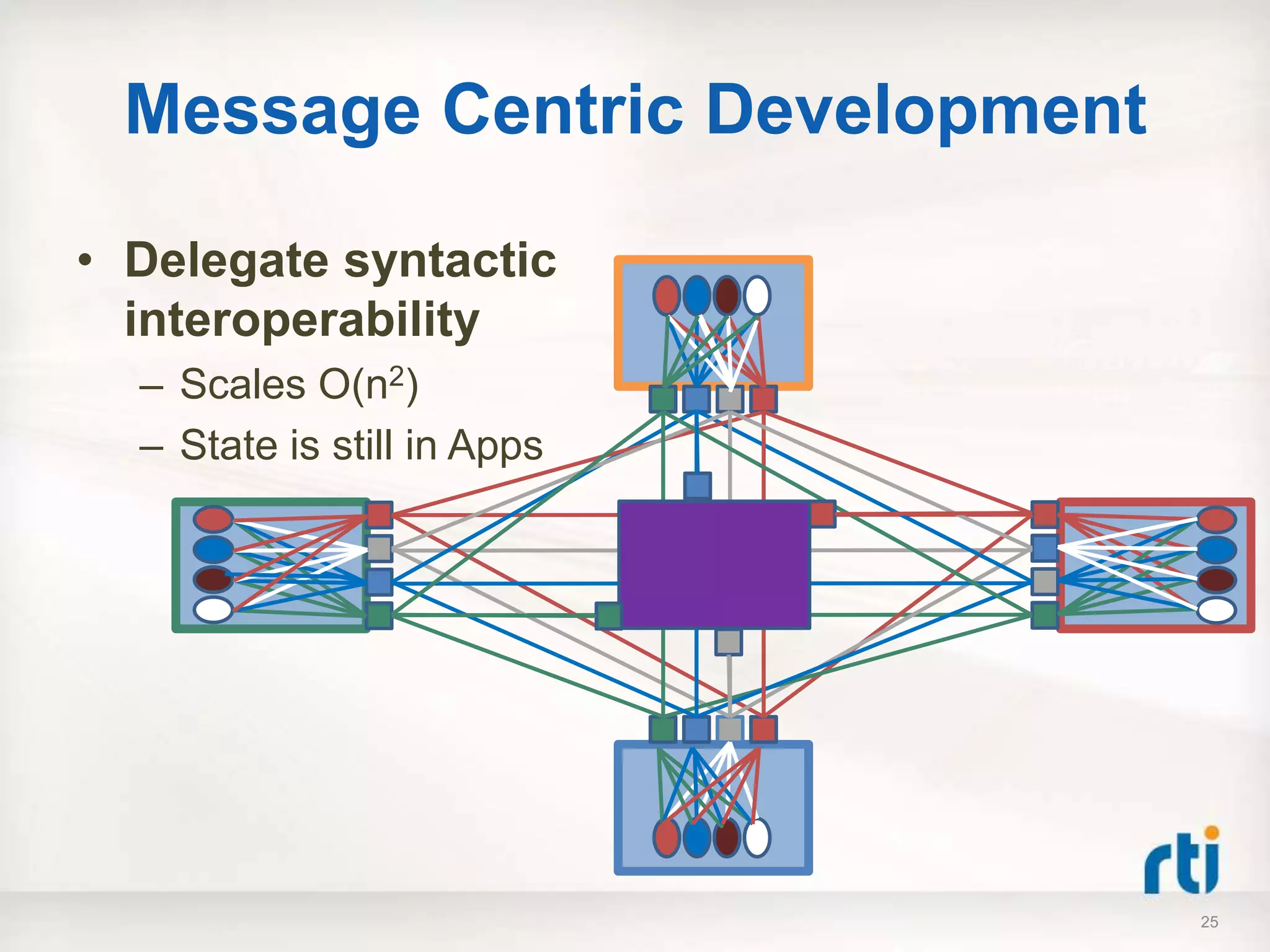

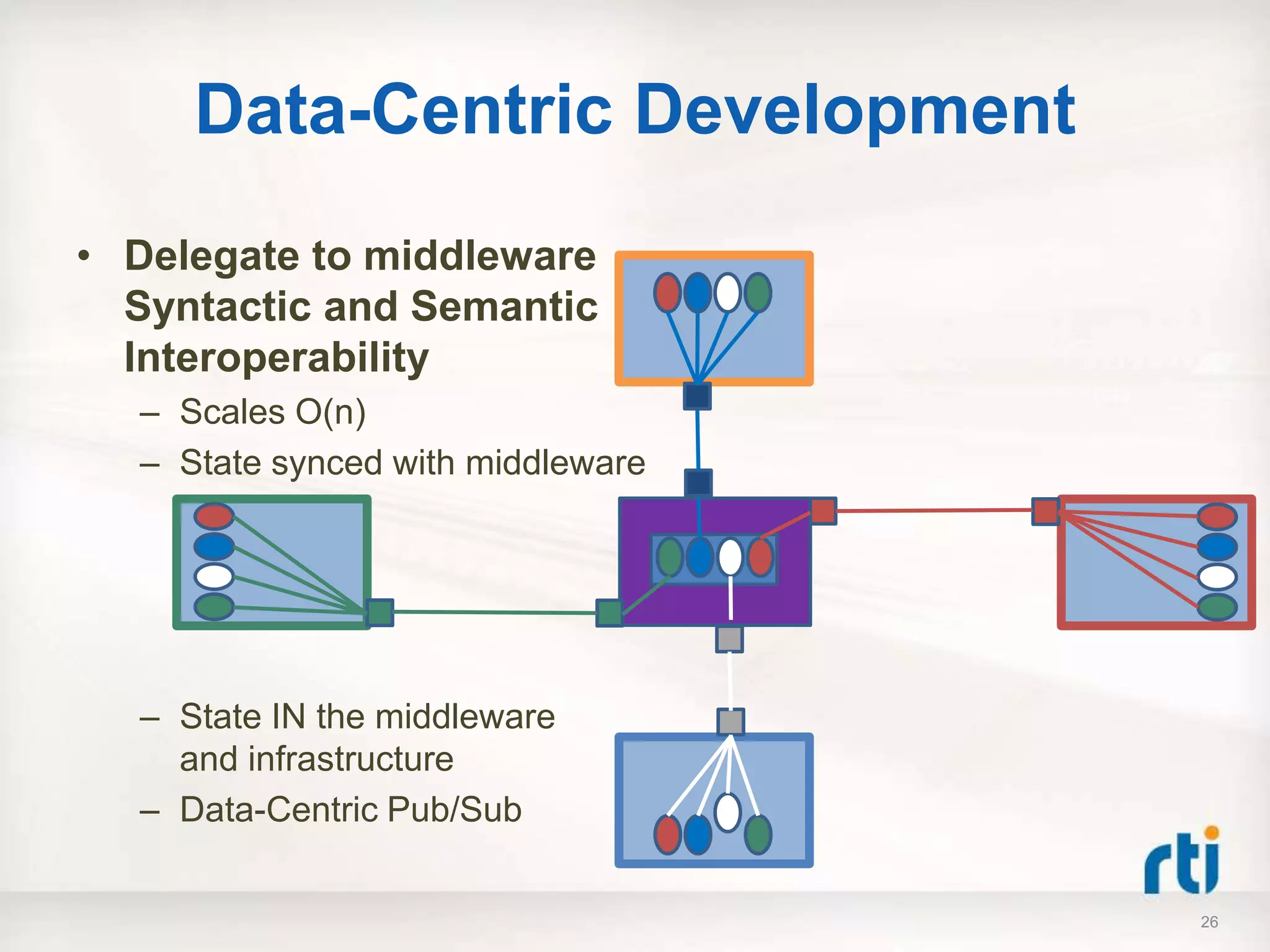

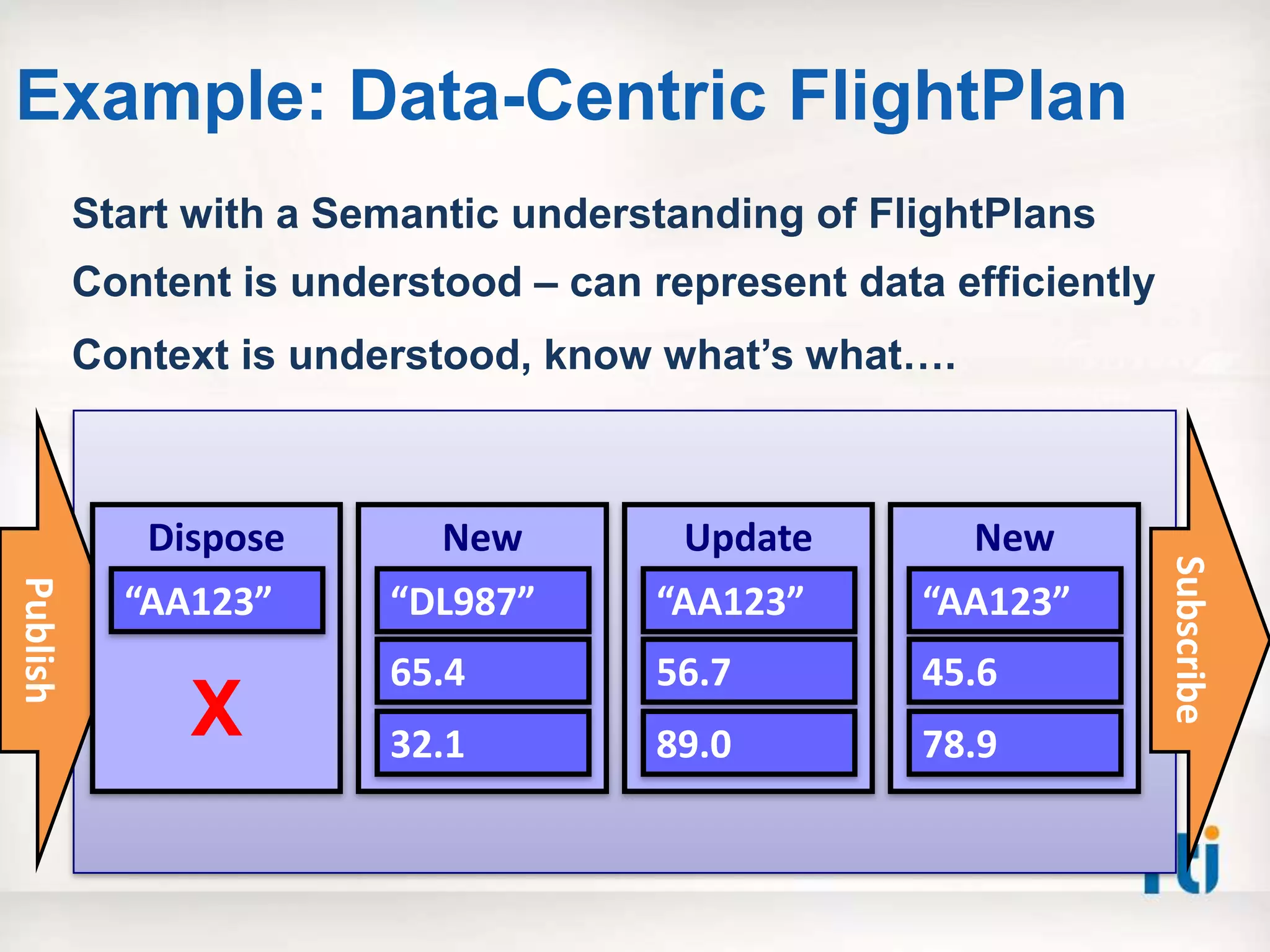

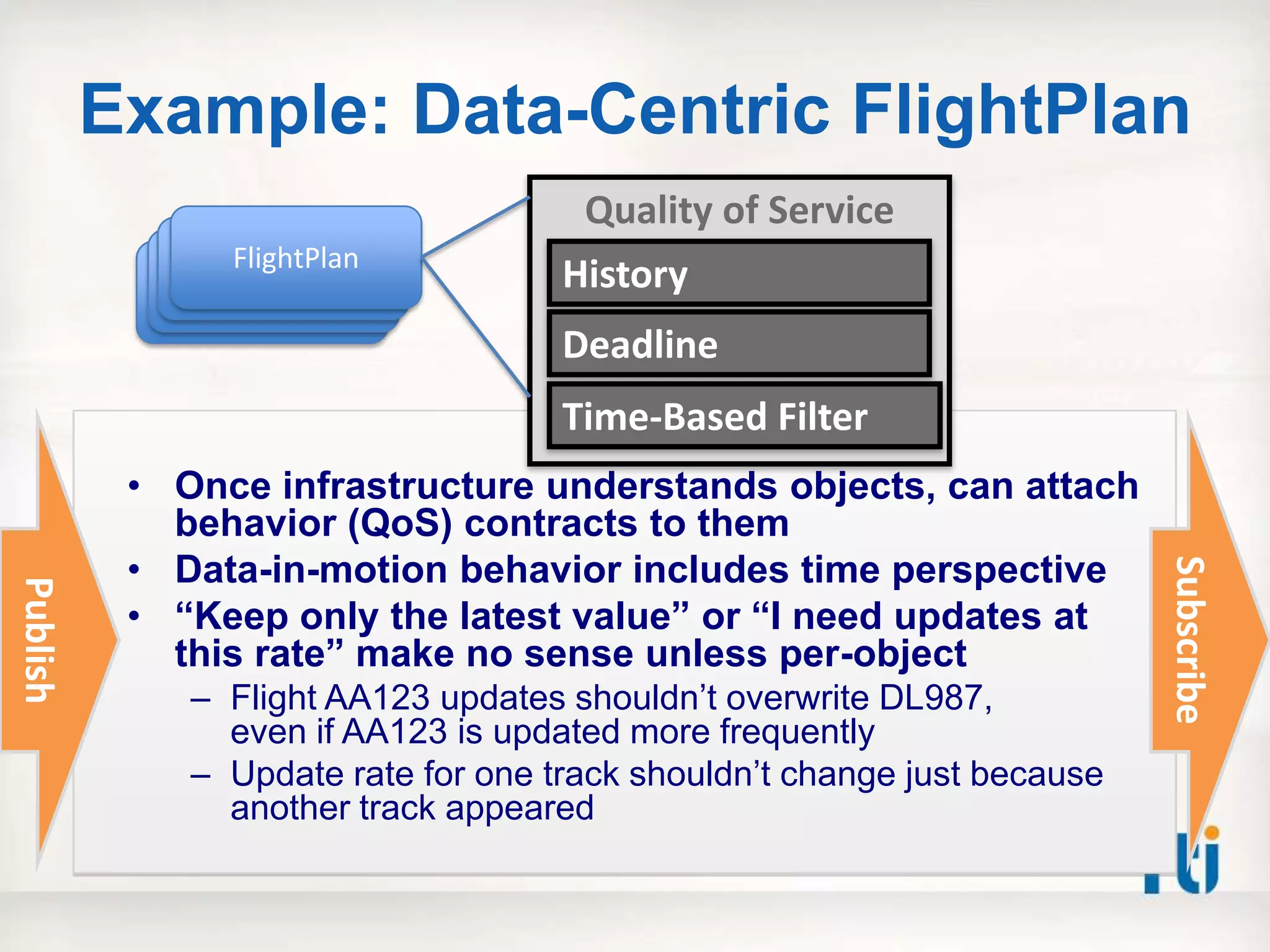

The document presents a high-performance interoperable architecture for achieving information dominance and stresses the need to manage application performance, behavior, and data state effectively. It outlines several levels of interoperability and compares three architectural approaches: application-centric, message-centric, and data-centric. It favors the data-centric model, in which the middleware, rather than each application, takes on responsibility for interoperability. It also covers integration challenges and best practices, arguing that decoupling the application's view of the data from the infrastructure's management of state improves operational efficiency.
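To make the data-centric idea concrete, here is a minimal Python sketch under stated assumptions. It is not the architecture the slides describe. The class `DataSpace` and its `write`, `read`, and `subscribe` methods are hypothetical names invented for illustration; they are not the API of DDS or any other middleware product. What it shows is where state lives: the middleware keeps the latest value of every keyed data instance, so a writer can publish a partial update and any reader, including one that joins late, sees the current state without replaying or merging messages itself. In a message-centric design, by contrast, each application would receive only the delta (for example `lon=21.5`) and would have to maintain that state on its own.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Tuple

# Hypothetical toy example: DataSpace, write(), read() and subscribe() are
# illustrative names only, not the API of DDS or any real middleware.

Key = Tuple[str, str]  # (topic name, instance key)
Listener = Callable[[str, Dict[str, Any]], None]


@dataclass
class DataSpace:
    """Toy data-centric 'middleware' that owns the current state of each
    keyed data instance, so applications do not rebuild state themselves."""

    _state: Dict[Key, Dict[str, Any]] = field(default_factory=dict)
    _listeners: Dict[str, List[Listener]] = field(default_factory=dict)

    def write(self, topic: str, key: str, **fields: Any) -> None:
        # Merge a (possibly partial) update into the stored instance.
        instance = self._state.setdefault((topic, key), {})
        instance.update(fields)
        # Notify subscribers with a copy of the full, current instance.
        for listener in self._listeners.get(topic, []):
            listener(key, dict(instance))

    def read(self, topic: str) -> Dict[str, Dict[str, Any]]:
        # Return copies of the latest value of every instance on the topic;
        # a reader that joins late still sees the current state.
        return {k: dict(v) for (t, k), v in self._state.items() if t == topic}

    def subscribe(self, topic: str, listener: Listener) -> None:
        # Register a callback that receives (instance key, current instance).
        self._listeners.setdefault(topic, []).append(listener)


if __name__ == "__main__":
    space = DataSpace()
    space.write("track", "T1", lat=10.0, lon=20.0)
    space.write("track", "T1", lon=21.5)  # partial update: only lon changes
    space.write("track", "T2", lat=-3.0, lon=7.0)
    print(space.read("track"))
    # {'T1': {'lat': 10.0, 'lon': 21.5}, 'T2': {'lat': -3.0, 'lon': 7.0}}
```

One design choice worth noting: `read` and the subscriber callbacks receive copies, so an application's view of the data cannot corrupt the state the infrastructure manages. This mirrors, in very simplified form, the decoupling of application views from infrastructure-managed state that the document argues for.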

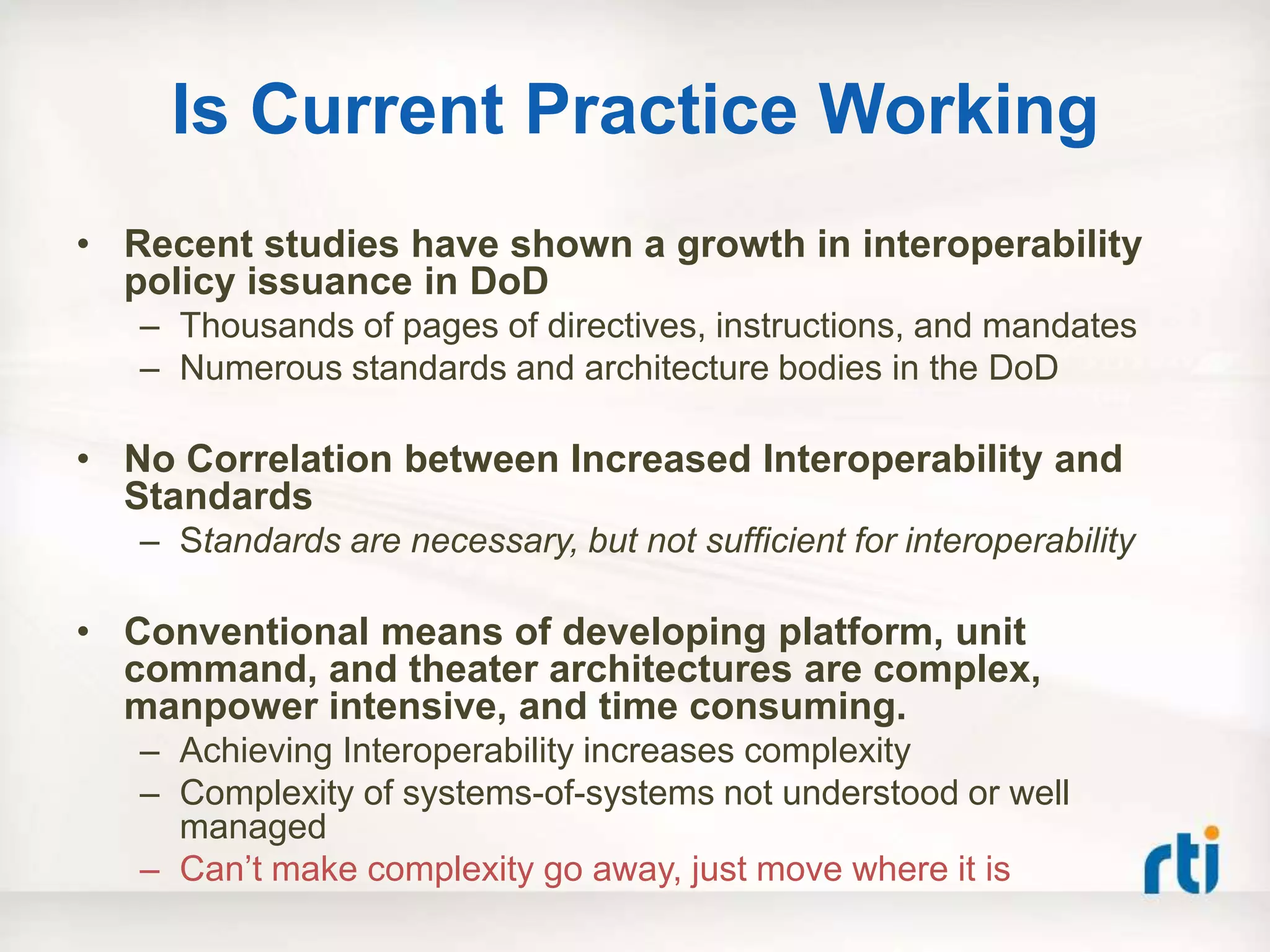

![Agenda](https://image.slidesharecdn.com/informaiondominance-finalv2-120709133746-phpapp02/75/High-Performance-Interoperable-Architecture-for-Information-Dominance-2-2048.jpg)

- High Performance
  - It really is about time…
- Interoperable Architecture
  - What is Interoperability
  - Architecture foundations
- [for] Information Dominance
  - Putting it all together
  - Right time – right data – right place

![[for] Information Dominance: Making it Happen, Steps you can take…](https://image.slidesharecdn.com/informaiondominance-finalv2-120709133746-phpapp02/75/High-Performance-Interoperable-Architecture-for-Information-Dominance-32-2048.jpg)