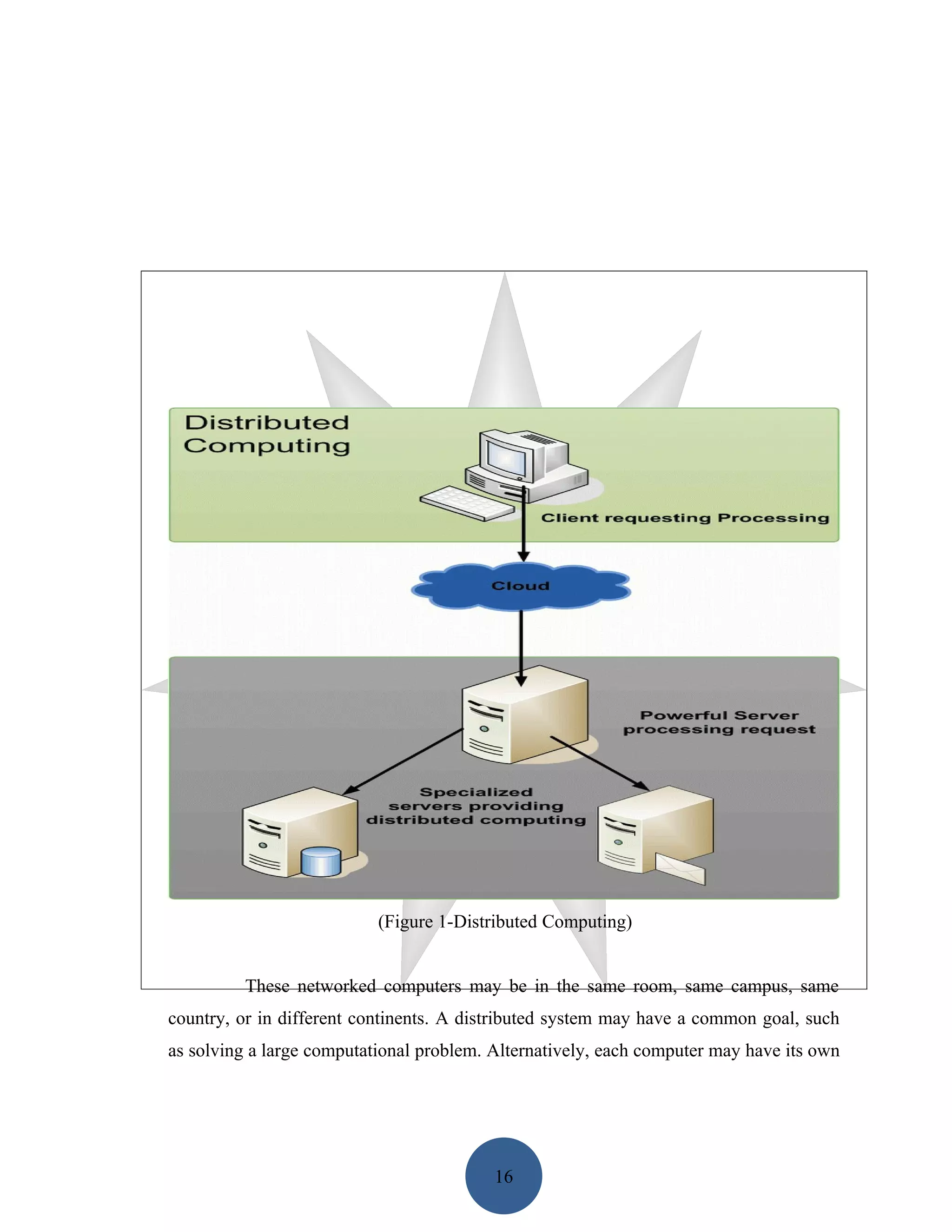

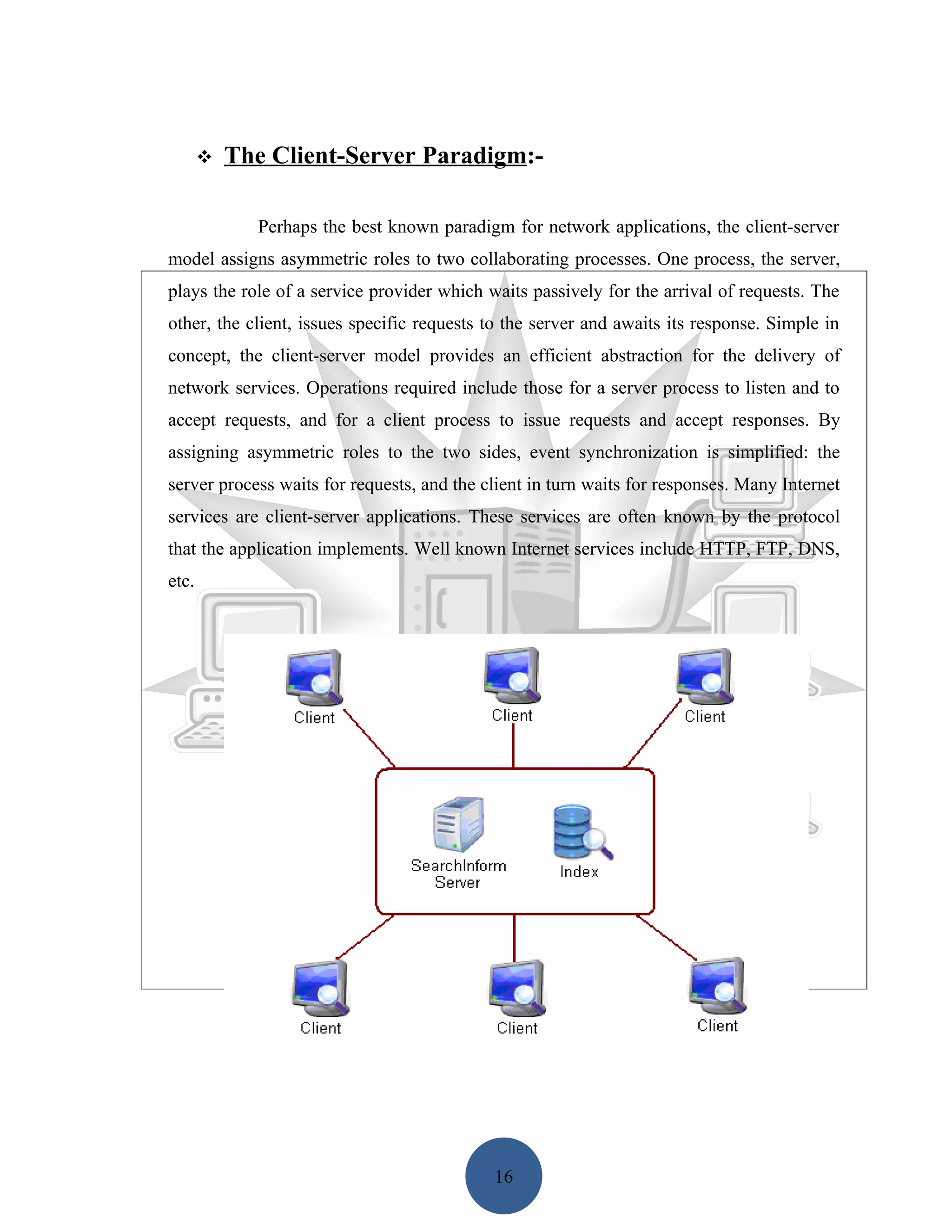

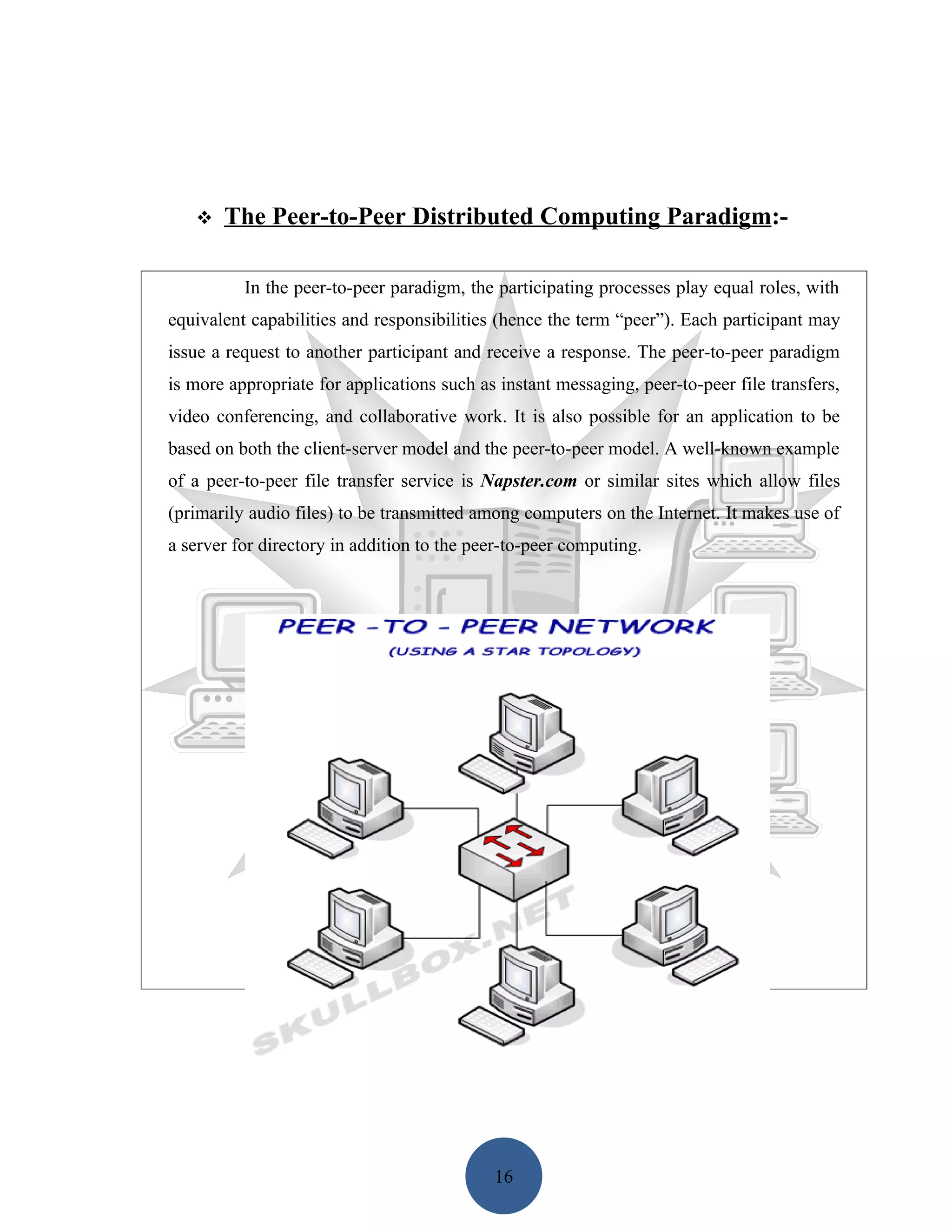

Distributed computing lets computers connected over a network coordinate their activities and share resources, so that the collection appears to users as a single, integrated system. Its key characteristics are resource sharing, openness, concurrency, scalability, fault tolerance, and transparency. Common architectures include client-server, n-tier, and peer-to-peer. Paradigms for distributed applications include message passing between processes; the client-server model, in which the roles are asymmetric (clients issue requests and servers answer them); and the peer-to-peer model, in which all participants have equal roles and each can both request and provide services.
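The message-passing and client-server paradigms can be illustrated with a minimal sketch. It makes several assumptions: two threads in one program stand in for processes on separate machines, a `queue.Queue` stands in for the network channel, and the names `server`, `client`, and `run_demo` are hypothetical, chosen only for this illustration. The point is the asymmetry of roles: the server waits passively for requests, while the client initiates each exchange and waits for the reply.

```python
import queue
import threading


def server(requests: queue.Queue, stop: object) -> None:
    """Passive role: wait for requests and send one reply per request."""
    while True:
        item = requests.get()
        if item is stop:  # sentinel message tells the server to shut down
            break
        payload, reply_box = item
        # The "service" here is trivial: upper-case the text it receives.
        reply_box.put(payload.upper())


def client(requests: queue.Queue, payload: str) -> str:
    """Active role: send a request message, then block until the reply arrives."""
    reply_box: queue.Queue = queue.Queue(maxsize=1)
    requests.put((payload, reply_box))
    return reply_box.get(timeout=5)


def run_demo() -> list[str]:
    requests: queue.Queue = queue.Queue()  # stands in for the network channel
    stop = object()
    worker = threading.Thread(target=server, args=(requests, stop))
    worker.start()
    replies = [client(requests, word) for word in ("ping", "hello")]
    requests.put(stop)
    worker.join()
    return replies


if __name__ == "__main__":
    print(run_demo())
```

In a peer-to-peer arrangement, by contrast, each participant would run both the requesting and the answering logic rather than only one of them. A real deployment would also replace the in-memory queue with sockets or a messaging library and would have to handle lost messages and failed nodes, which this sketch does not attempt.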