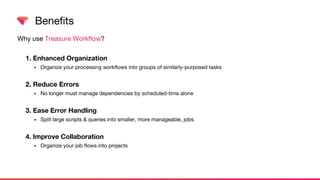

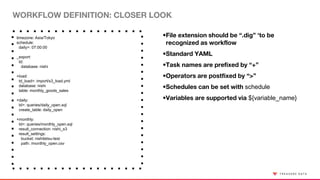

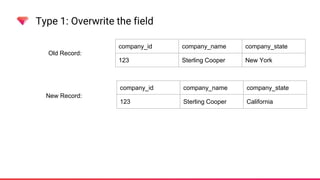

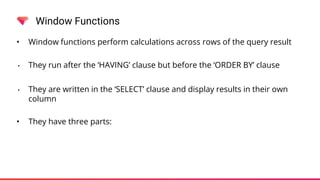

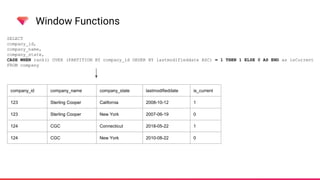

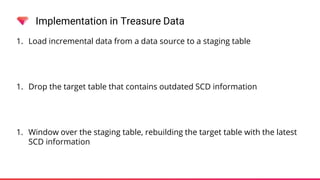

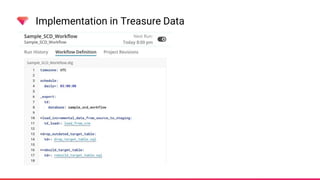

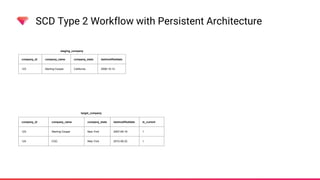

This document covers managing Slowly Changing Dimensions (SCDs) with Treasure Data's CDP and workflows. It outlines SCD Types 1, 2, 3, and 4, and explains the advantages of Treasure Data workflows for data orchestration and error handling. It also provides practical implementations and examples that use window functions to manage SCDs within the platform.
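
As a rough illustration of the window-function idea, the sketch below derives Type 2 validity ranges from a change log in plain Python. It is not Treasure Data's implementation: the `changes` data, the column names, and the `build_scd2` helper are all hypothetical. The loop mimics what a SQL `LEAD(changed_at) OVER (PARTITION BY customer_id ORDER BY changed_at)` expression would compute.

```python
from itertools import groupby
from operator import itemgetter

# Hypothetical change log: (customer_id, city, changed_at).
# All names and values here are illustrative assumptions, not from the source.
changes = [
    ("c1", "Tokyo", "2023-01-01"),
    ("c2", "Paris", "2023-02-15"),
    ("c1", "Osaka", "2023-06-01"),
    ("c1", "Kyoto", "2024-03-10"),
]


def build_scd2(rows):
    """Build Type 2 rows: each version is valid until the next change for the same key."""
    # Sort by key, then by change date (ISO dates sort correctly as strings).
    ordered = sorted(rows, key=itemgetter(0, 2))
    result = []
    # groupby plays the role of PARTITION BY customer_id.
    for _, group in groupby(ordered, key=itemgetter(0)):
        versions = list(group)
        for i, (customer_id, city, valid_from) in enumerate(versions):
            # Equivalent of LEAD(changed_at): the next version's start date, if any.
            valid_to = versions[i + 1][2] if i + 1 < len(versions) else None
            result.append({
                "customer_id": customer_id,
                "city": city,
                "valid_from": valid_from,
                "valid_to": valid_to,
                "is_current": valid_to is None,
            })
    return result


history = build_scd2(changes)
for row in history:
    print(row)
```

Each key's latest version keeps an open-ended `valid_to` and is flagged as current, which is the usual Type 2 convention. A Type 1 approach would instead keep only the latest row per key and discard the history.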