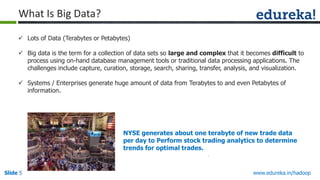

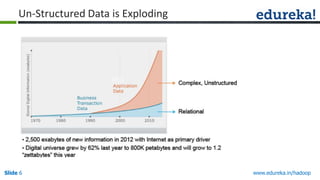

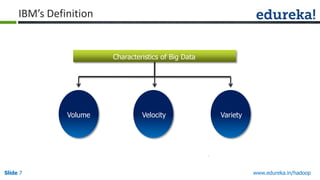

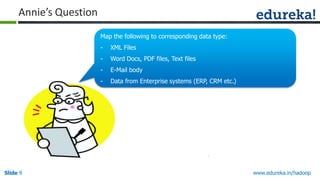

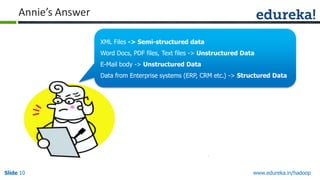

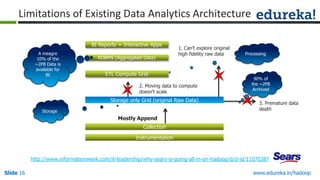

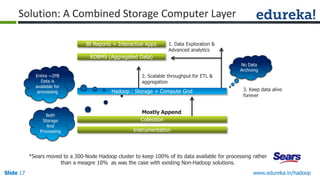

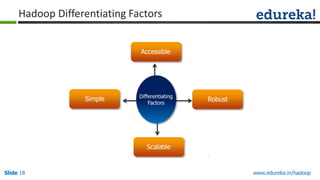

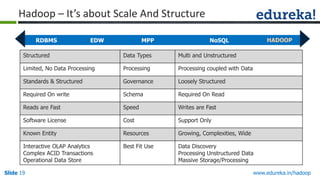

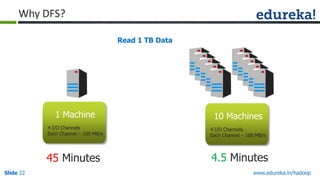

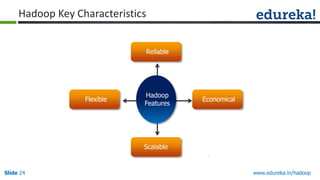

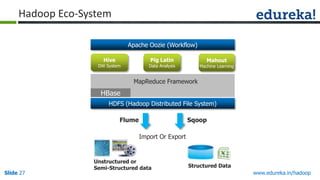

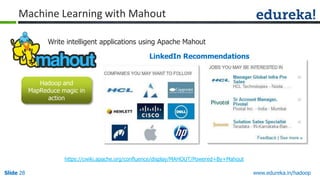

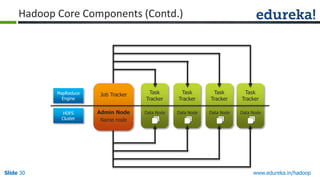

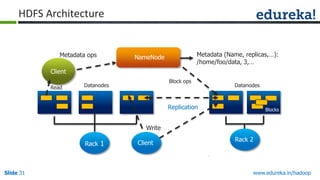

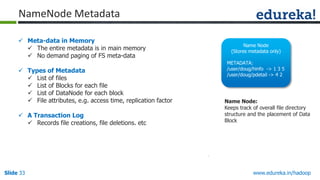

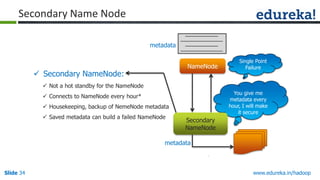

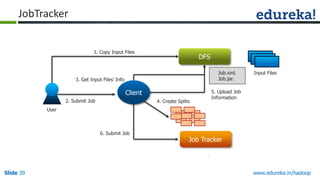

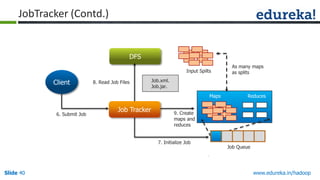

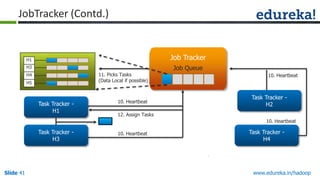

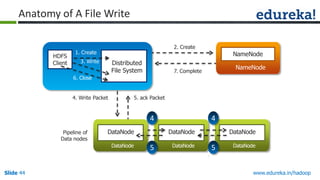

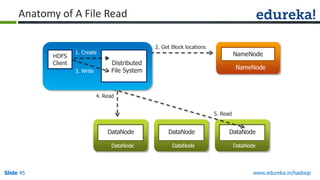

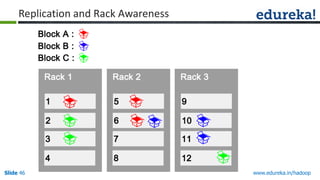

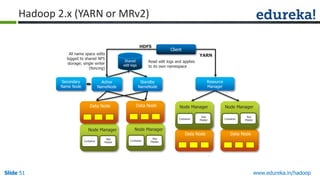

The document presents an overview of a comprehensive Hadoop course offered by Edureka, detailing its features, its topics, and the importance of Hadoop in managing and processing big data. It covers the structure of big data and the workings of Hadoop's architecture, and it introduces core components such as HDFS and MapReduce. The document also highlights real-world applications and common scenarios in which big data analytics can provide value across industries.