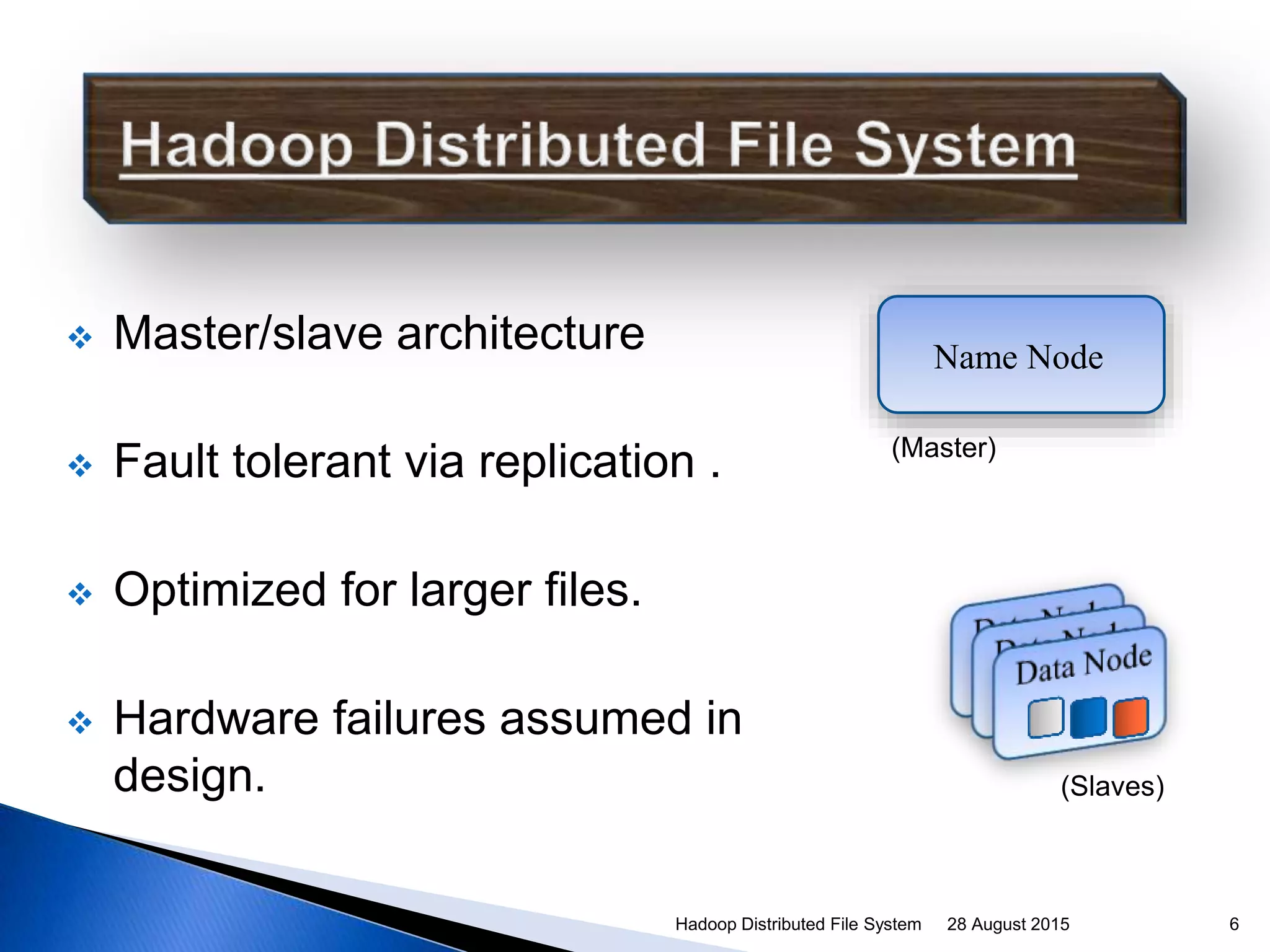

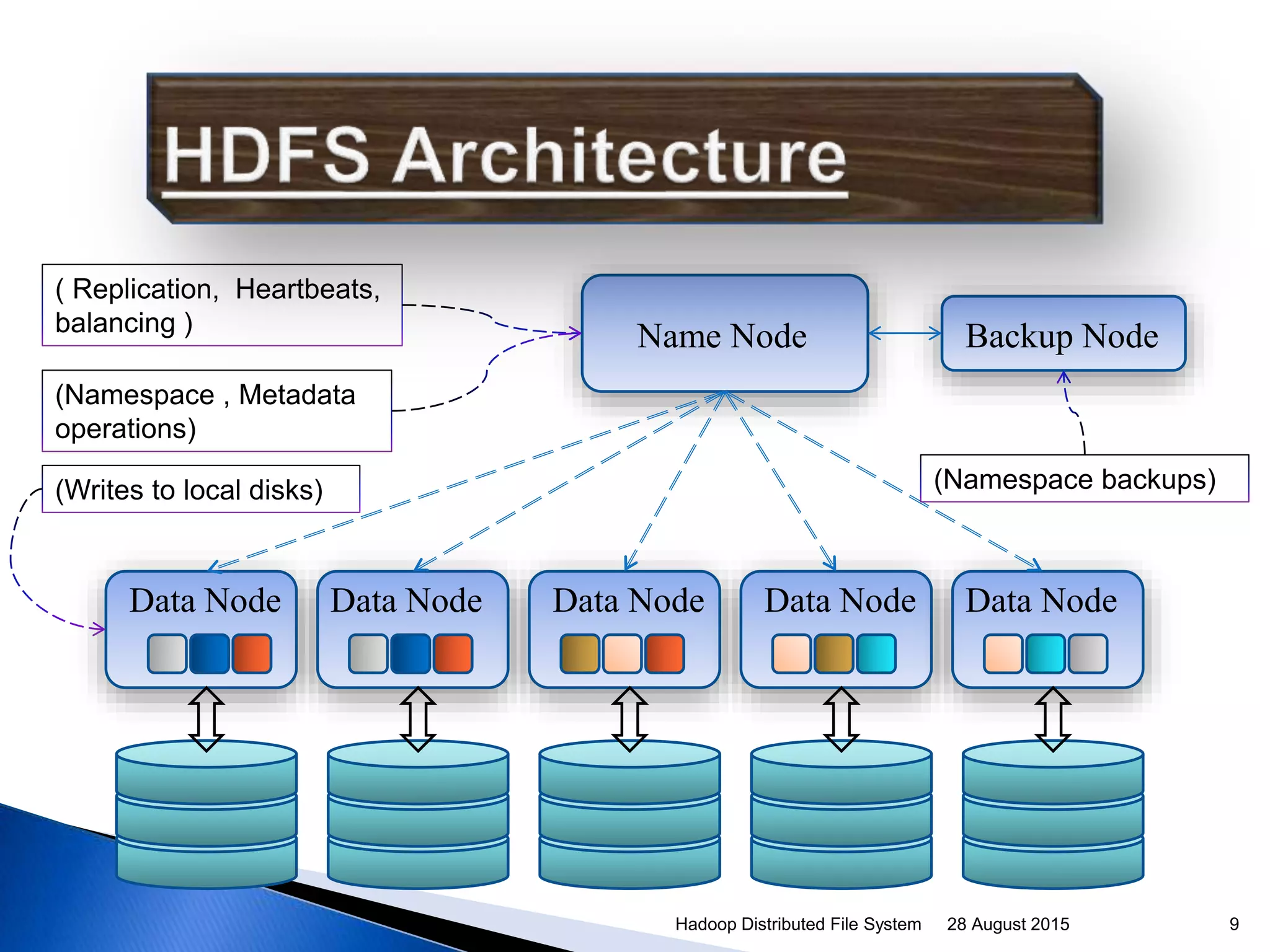

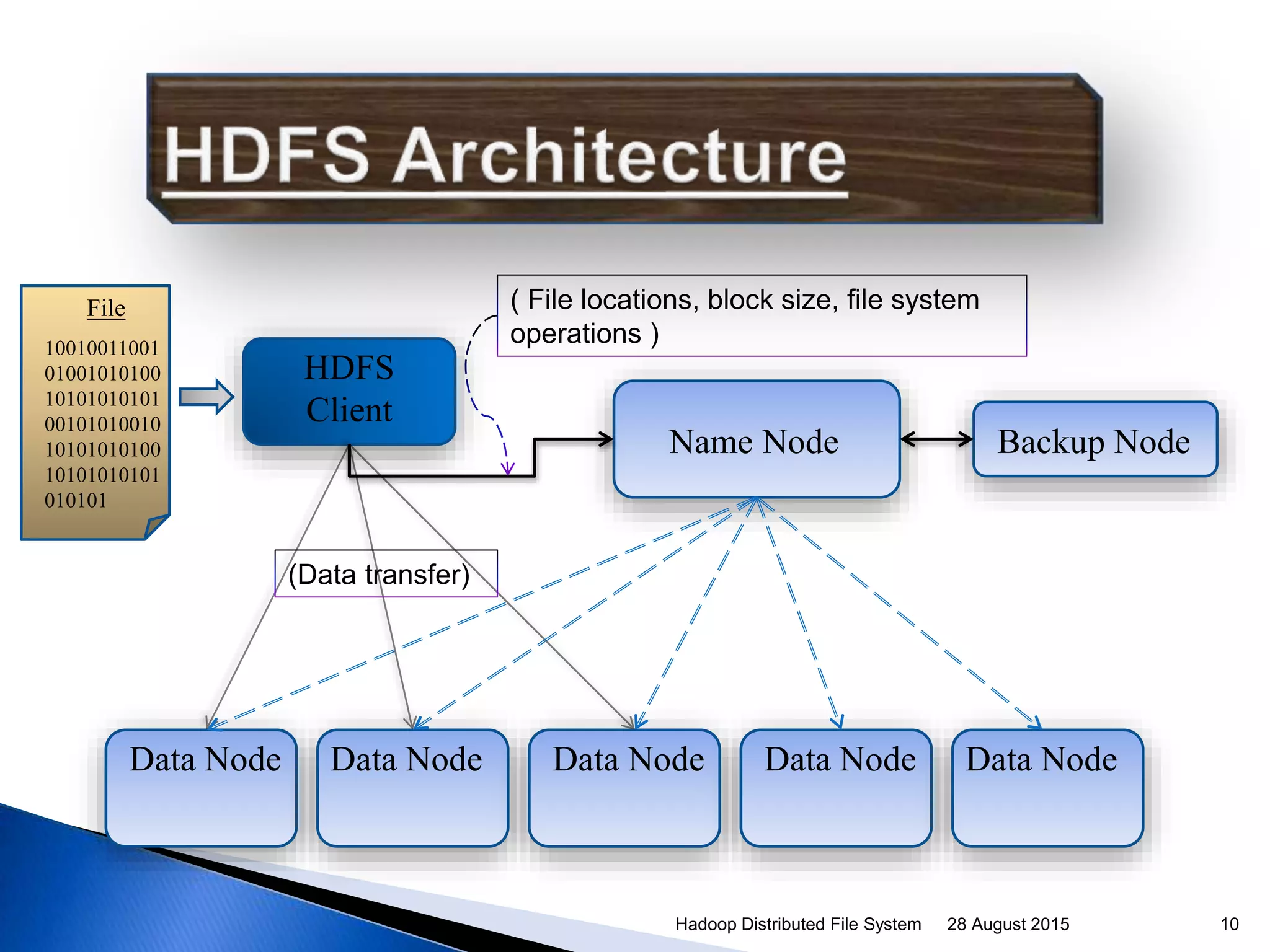

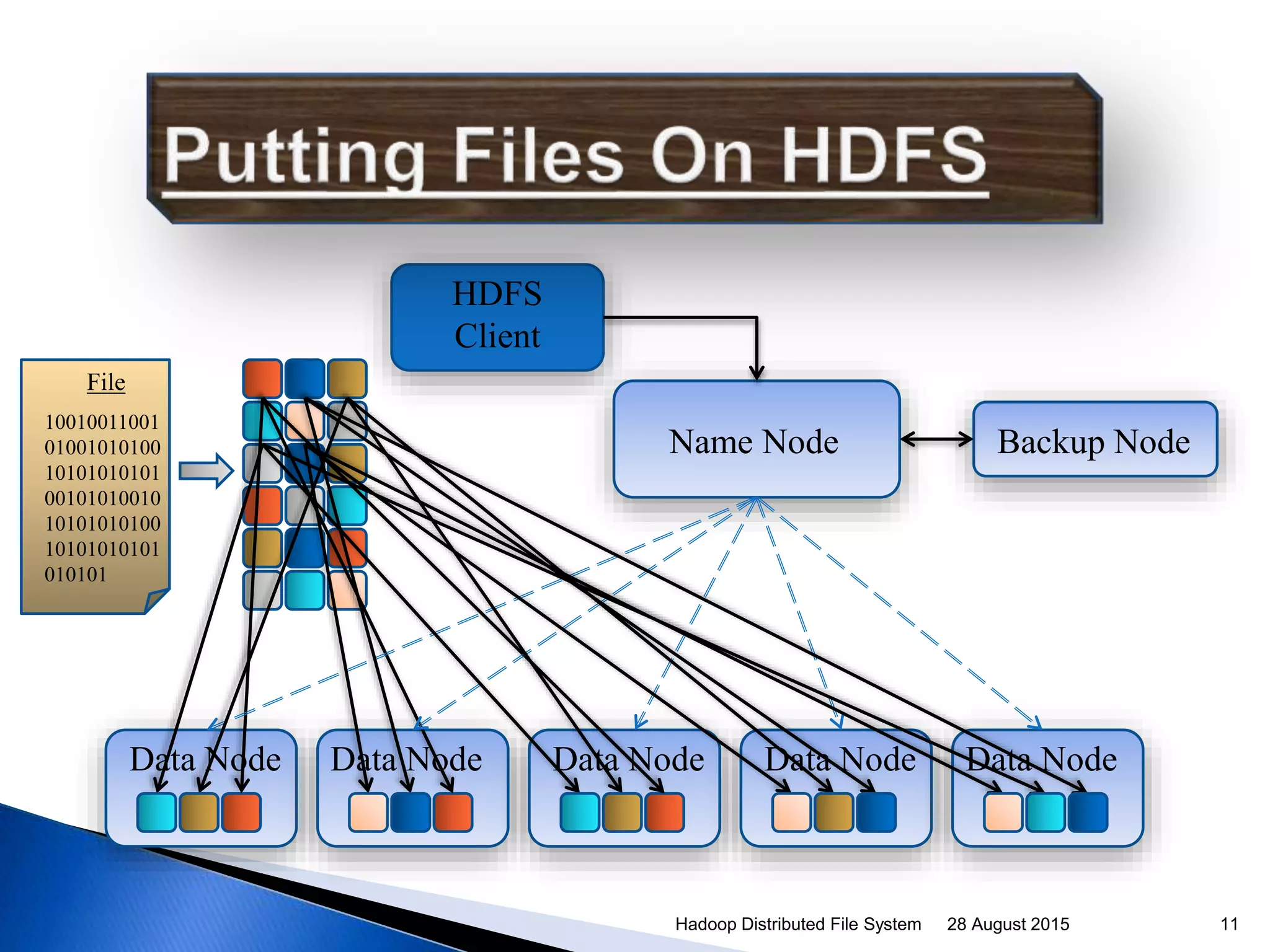

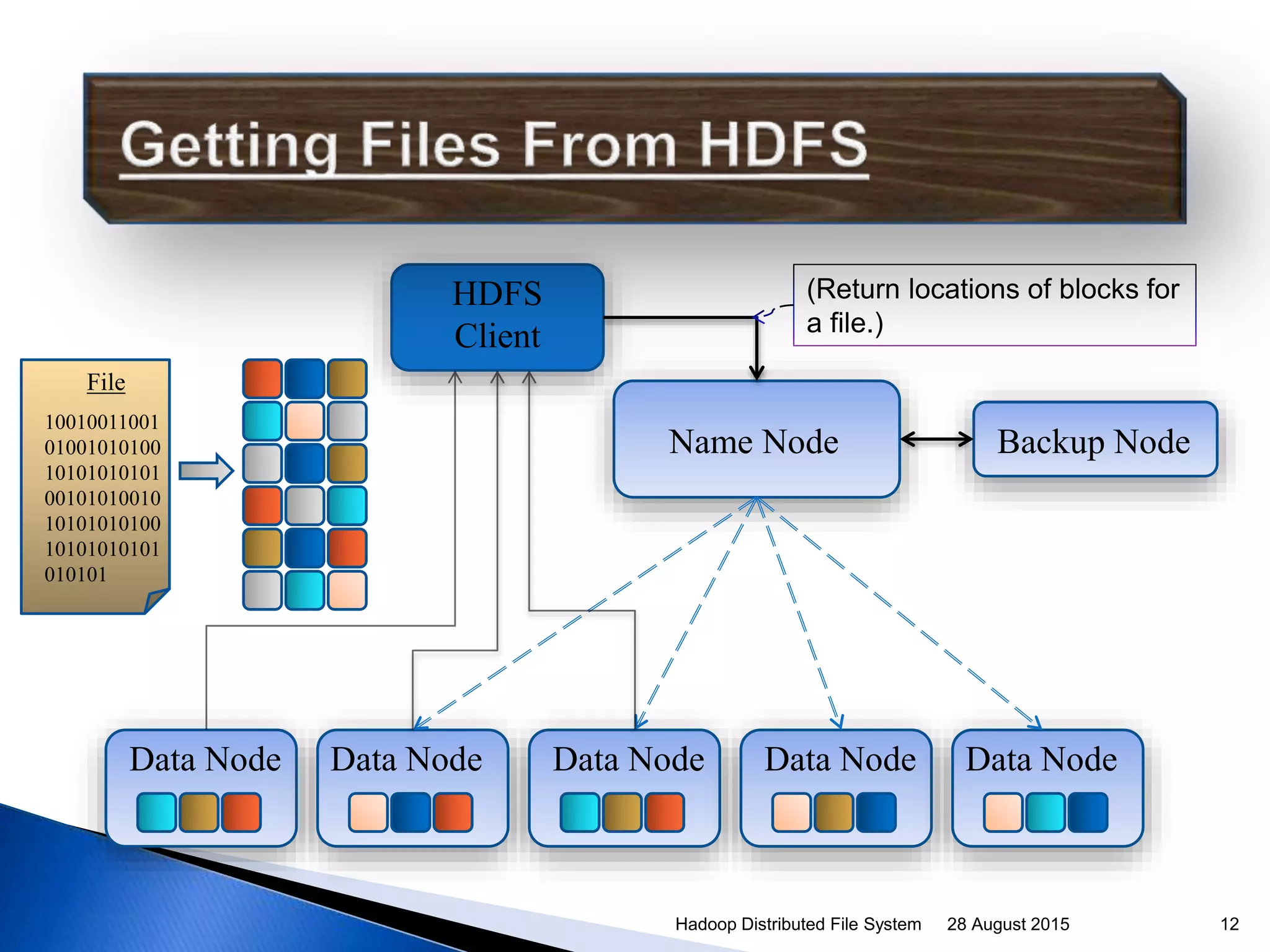

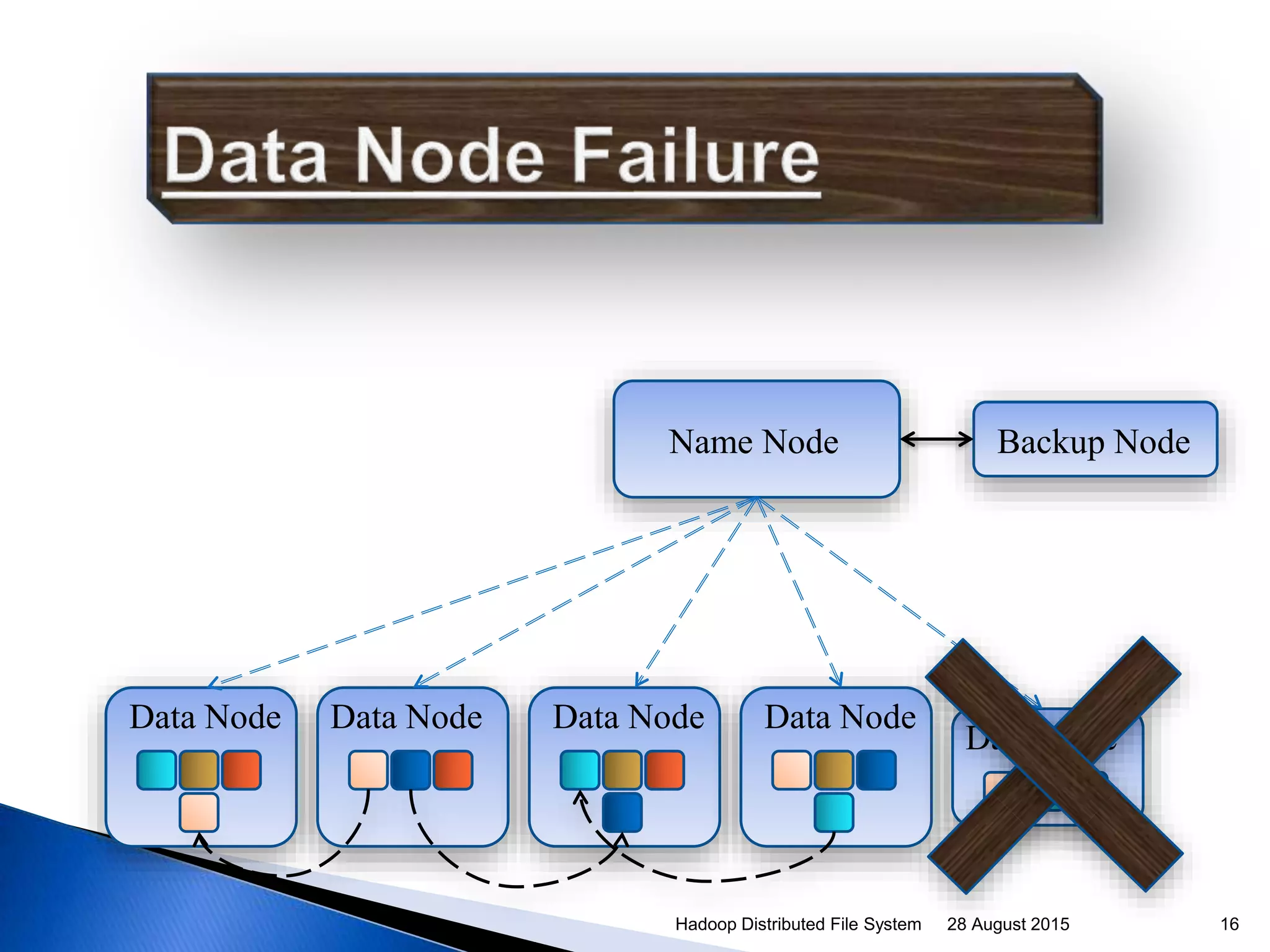

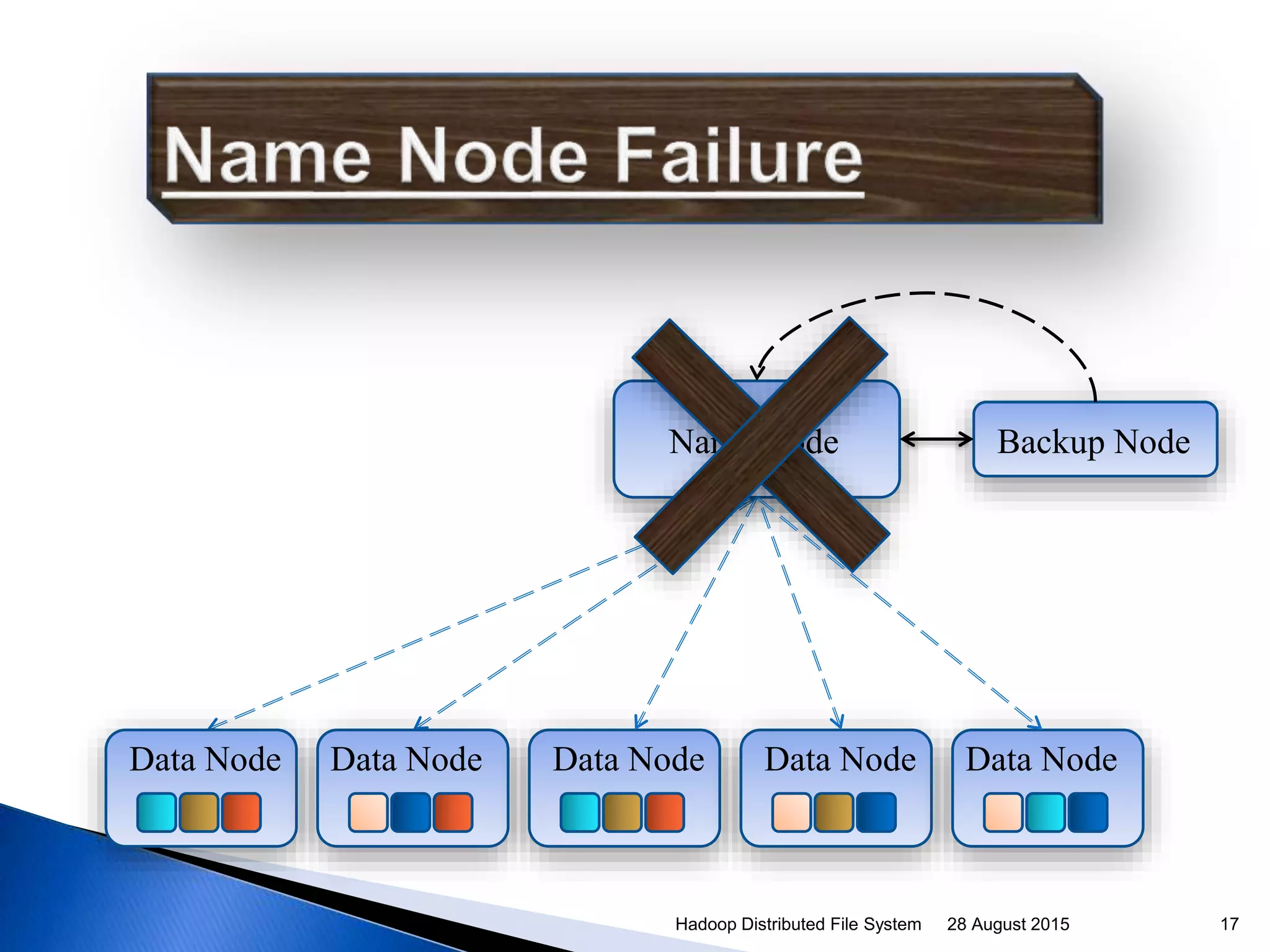

The document discusses the Hadoop Distributed File System (HDFS), the storage layer of Hadoop, a project originally created by Doug Cutting to address the need for large-scale data processing. HDFS is designed for streaming access to large data sets on commodity hardware and uses a master/slave architecture with one NameNode master and multiple DataNodes. The NameNode manages the file system namespace and regulates client access to files; the DataNodes store the data blocks, which are replicated across nodes for fault tolerance.
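The master/slave split described above can be illustrated with a small toy model. This is only a sketch under stated assumptions, not Hadoop's actual API: the class names `NameNode` and `DataNode`, the round-robin placement, and the tiny 4-byte block size are all hypothetical choices made for illustration. Real HDFS uses much larger blocks and rack-aware placement, and clients stream data to DataNodes directly rather than through the NameNode.

```python
from dataclasses import dataclass, field


@dataclass
class DataNode:
    """Toy stand-in for a DataNode: stores block bytes keyed by block id."""
    name: str
    blocks: dict = field(default_factory=dict)


class NameNode:
    """Toy stand-in for the NameNode: tracks the namespace and block locations."""

    def __init__(self, datanodes, block_size=4, replication=3):
        self.datanodes = datanodes
        self.block_size = block_size  # hypothetical; real blocks are far larger
        self.replication = min(replication, len(datanodes))
        self.namespace = {}   # file path -> ordered list of block ids
        self.locations = {}   # block id -> names of the nodes holding a replica
        self._next_start = 0  # rotating start index for round-robin placement

    def _pick_targets(self):
        # Simplified round-robin placement (an assumption, not HDFS's policy).
        count = len(self.datanodes)
        targets = [self.datanodes[(self._next_start + i) % count]
                   for i in range(self.replication)]
        self._next_start = (self._next_start + 1) % count
        return targets

    def write(self, path, data):
        # For brevity the write path is collapsed into the NameNode here.
        block_ids = []
        for offset in range(0, len(data), self.block_size):
            block_id = f"{path}#{offset // self.block_size}"
            targets = self._pick_targets()
            for node in targets:
                node.blocks[block_id] = data[offset:offset + self.block_size]
            self.locations[block_id] = [node.name for node in targets]
            block_ids.append(block_id)
        self.namespace[path] = block_ids

    def read(self, path, failed=()):
        # Reassemble the file, skipping replicas on nodes listed as failed.
        by_name = {node.name: node for node in self.datanodes}
        result = b""
        for block_id in self.namespace[path]:
            live = [n for n in self.locations[block_id] if n not in failed]
            if not live:
                raise IOError(f"no live replica for {block_id}")
            result += by_name[live[0]].blocks[block_id]
        return result


if __name__ == "__main__":
    nodes = [DataNode(f"dn{i}") for i in range(4)]
    master = NameNode(nodes)
    master.write("/demo.txt", b"hello hdfs!")
    # The file is still readable with two of the four DataNodes down.
    print(master.read("/demo.txt", failed={"dn1", "dn2"}))
```

Because every block is held by three of the four toy nodes, losing any two nodes still leaves at least one replica of each block, which is the fault-tolerance property the summary refers to.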

![“Framework for running [distributed] applications on large cluster built of commodity hardware” (from the Hadoop Wiki). Originally created by Doug Cutting. Named the project after his son’s toy elephant. Inspired by Google’s architecture: MapReduce and GFS. 28 August 2015, Hadoop Distributed File System, slide 3](https://image.slidesharecdn.com/9467edb1-e638-4f17-974e-7cc8867c2655-150828152436-lva1-app6892/75/Hadoop-Distributed-File-System-3-2048.jpg)