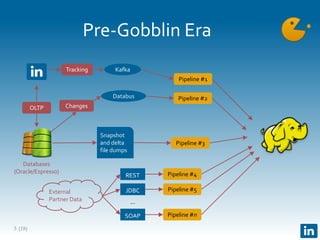

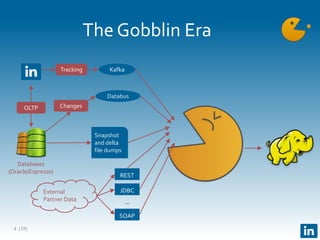

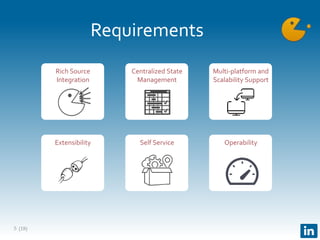

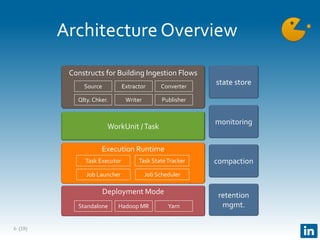

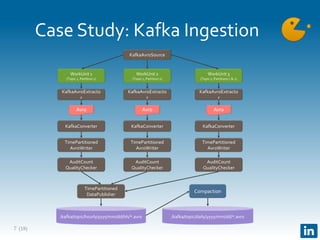

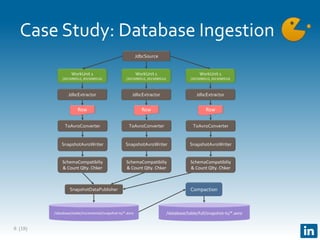

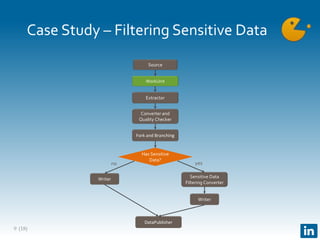

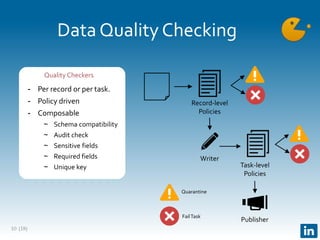

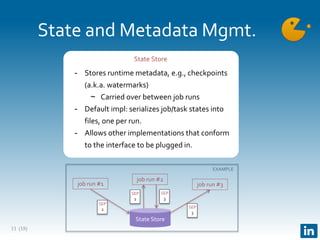

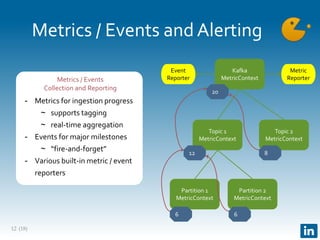

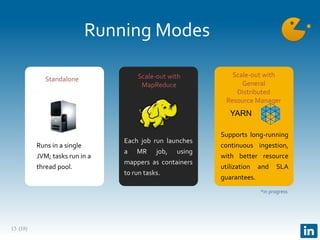

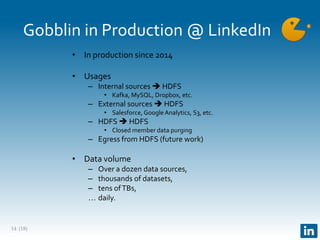

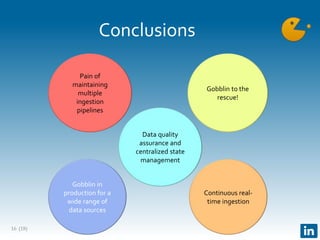

The document presents Gobblin, a unified data ingestion system developed at LinkedIn to address the challenges of ingesting data from many different platforms and sources. It covers Gobblin's architecture, case studies, and features such as centralized state management and data quality checking, and notes that Gobblin has been in production use since 2014 with multiple data sources. Planned future work includes enhancements for real-time ingestion and tighter integration with other data processing tools.