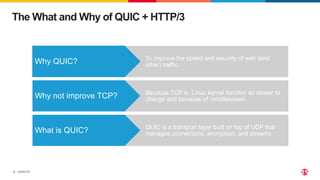

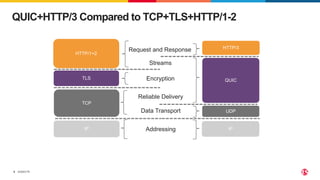

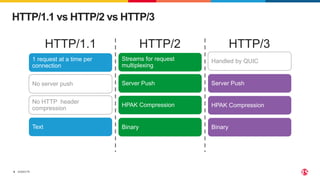

This webinar covers QUIC and HTTP/3 and their advantages over the stack of TCP, TLS, and HTTP/1 and HTTP/2. It explains how QUIC establishes a connection and what that means for latency and security, and it gives practical guidance on configuring QUIC in NGINX. Additional resources and a hands-on lab session are also included.

![©2023 F5

A Simple NGINX QUIC Configuration

http {

log_format quic '$remote_addr - $remote_user [$time_local] '

'"$request" $status $body_bytes_sent '

'"$http_referer" "$http_user_agent" "$server_protocol"';

access_log logs/access.log quic;

server {

# for better compatibility it's recommended

# to use the same port for quic and https

listen 8443 http3 reuseport;

listen 8443 ssl;

ssl_certificate certs/example.com.crt;

ssl_certificate_key certs/example.com.key;

ssl_protocols TLSv1.3;

location / {

# required for browsers to direct them into quic port

add_header Alt-Svc 'h3=":8443"; ma=86400';

}

}

}](https://image.slidesharecdn.com/nginx101quic-230419201234-dd264875/85/Get-Hands-On-with-NGINX-and-QUIC-HTTP-3-15-320.jpg)
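The `Alt-Svc` header in the configuration above is what tells a browser that HTTP/3 is available and on which port. As a rough illustration of what a client reads from that value, here is a minimal Python sketch. The names `AltService` and `parse_alt_svc` are hypothetical and not part of NGINX or any library, and the parsing is deliberately simplified: it assumes no commas or semicolons inside quoted strings and is not a full RFC 7838 parser.

```python
import re
from typing import List, NamedTuple, Optional


class AltService(NamedTuple):
    """One advertised alternative service (hypothetical helper type)."""
    protocol: str            # ALPN identifier, e.g. "h3"
    host: str                # empty string means "same host as the origin"
    port: int                # port the alternative service listens on
    max_age: Optional[int]   # value of the "ma" parameter in seconds, None if absent


def parse_alt_svc(value: str) -> List[AltService]:
    """Parse a simple Alt-Svc header value into a list of AltService entries."""
    services = []
    for entry in value.split(","):
        parts = [p.strip() for p in entry.split(";")]
        # First part looks like: h3=":8443" or h3="alt.example.com:8443"
        match = re.fullmatch(r'([^=\s]+)="([^":]*):(\d+)"', parts[0])
        if match is None:
            continue  # skip "clear" and anything this sketch does not understand
        max_age = None
        for param in parts[1:]:
            name, _, val = param.partition("=")
            if name.strip() == "ma" and val.strip().isdigit():
                max_age = int(val.strip())
        services.append(
            AltService(match.group(1), match.group(2), int(match.group(3)), max_age)
        )
    return services


if __name__ == "__main__":
    # The header value used in the slide's location block.
    for svc in parse_alt_svc('h3=":8443"; ma=86400'):
        print(svc)
```

For the value from the slide, the sketch yields a single entry: protocol `h3`, an empty host (meaning the same host), port 8443, and a max age of 86400 seconds, after which the client has to learn about the alternative service again.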