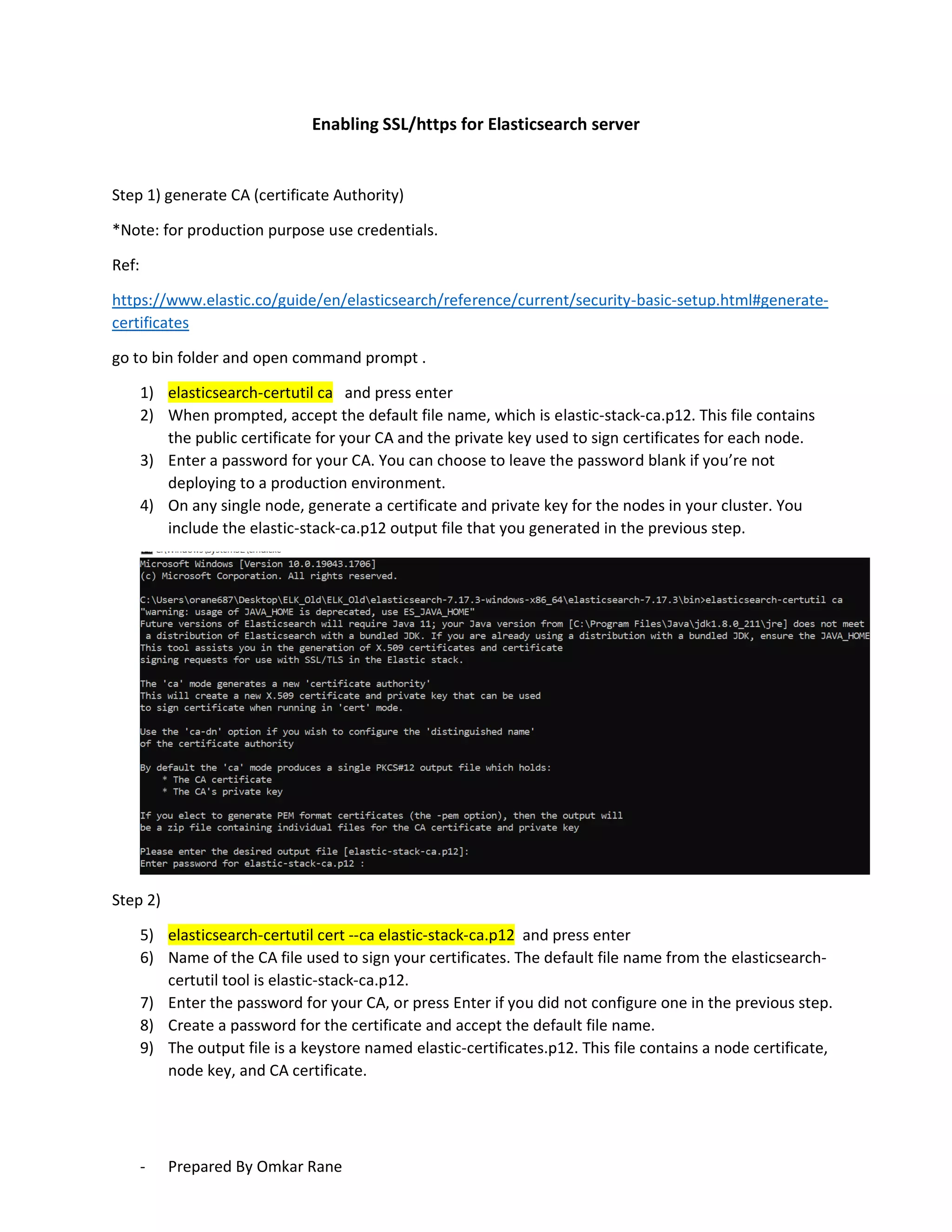

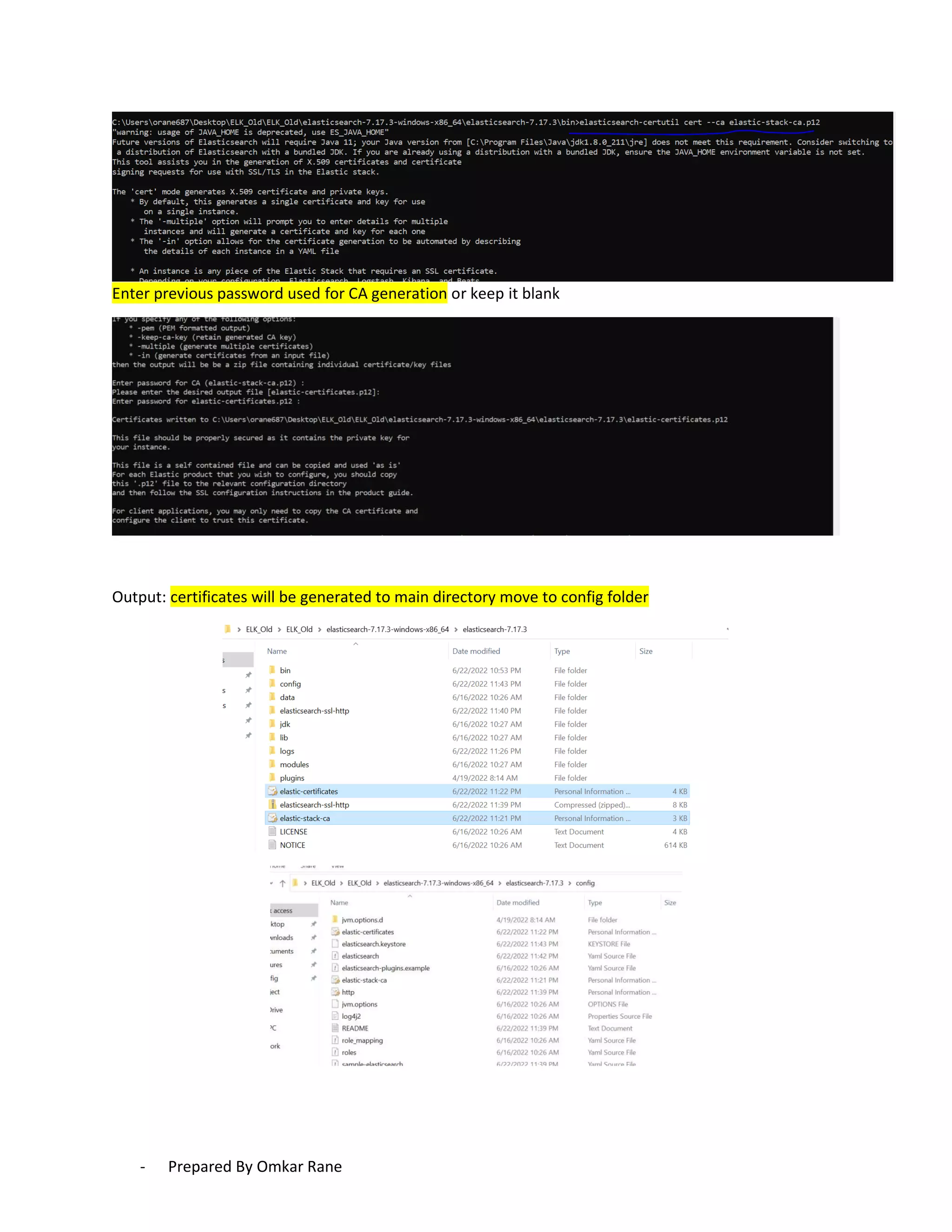

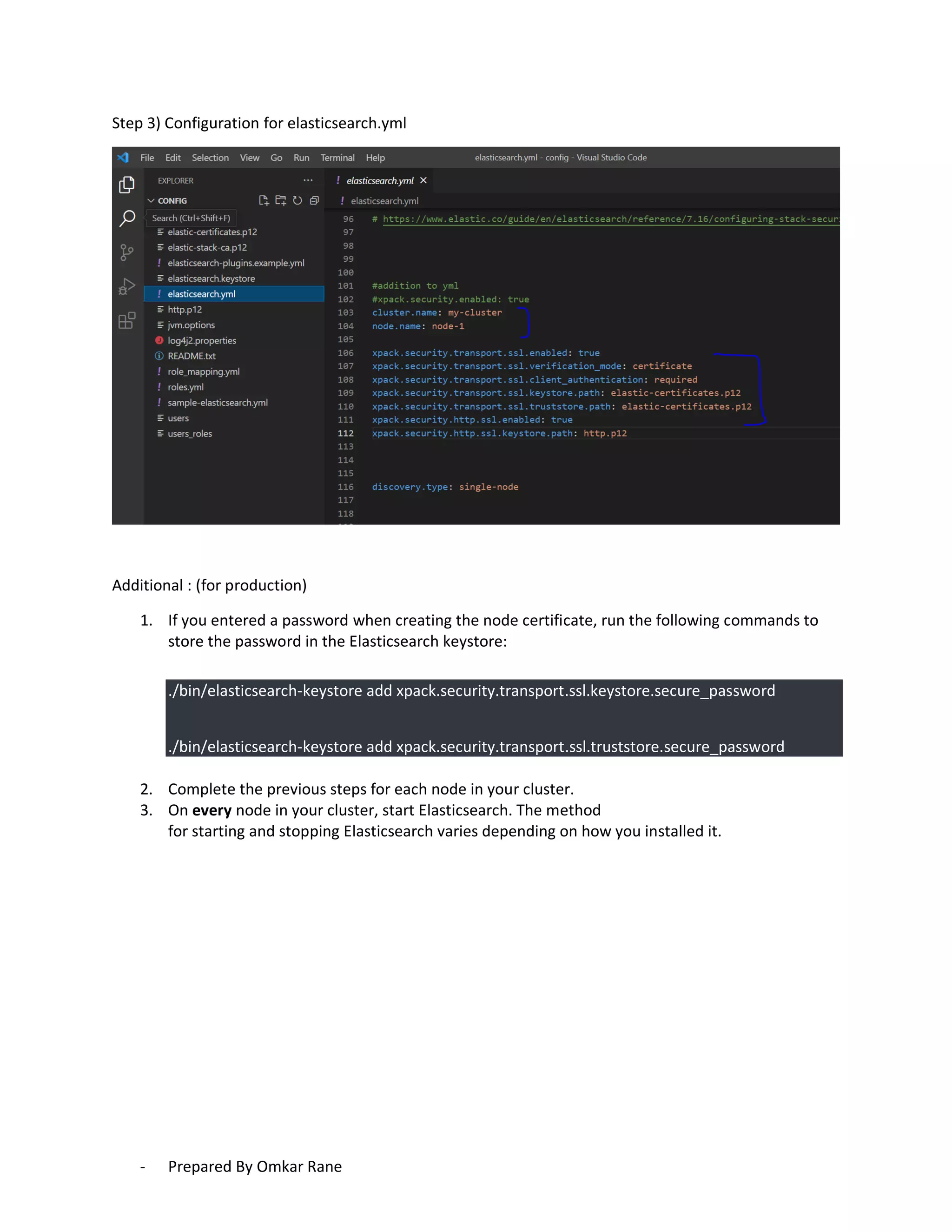

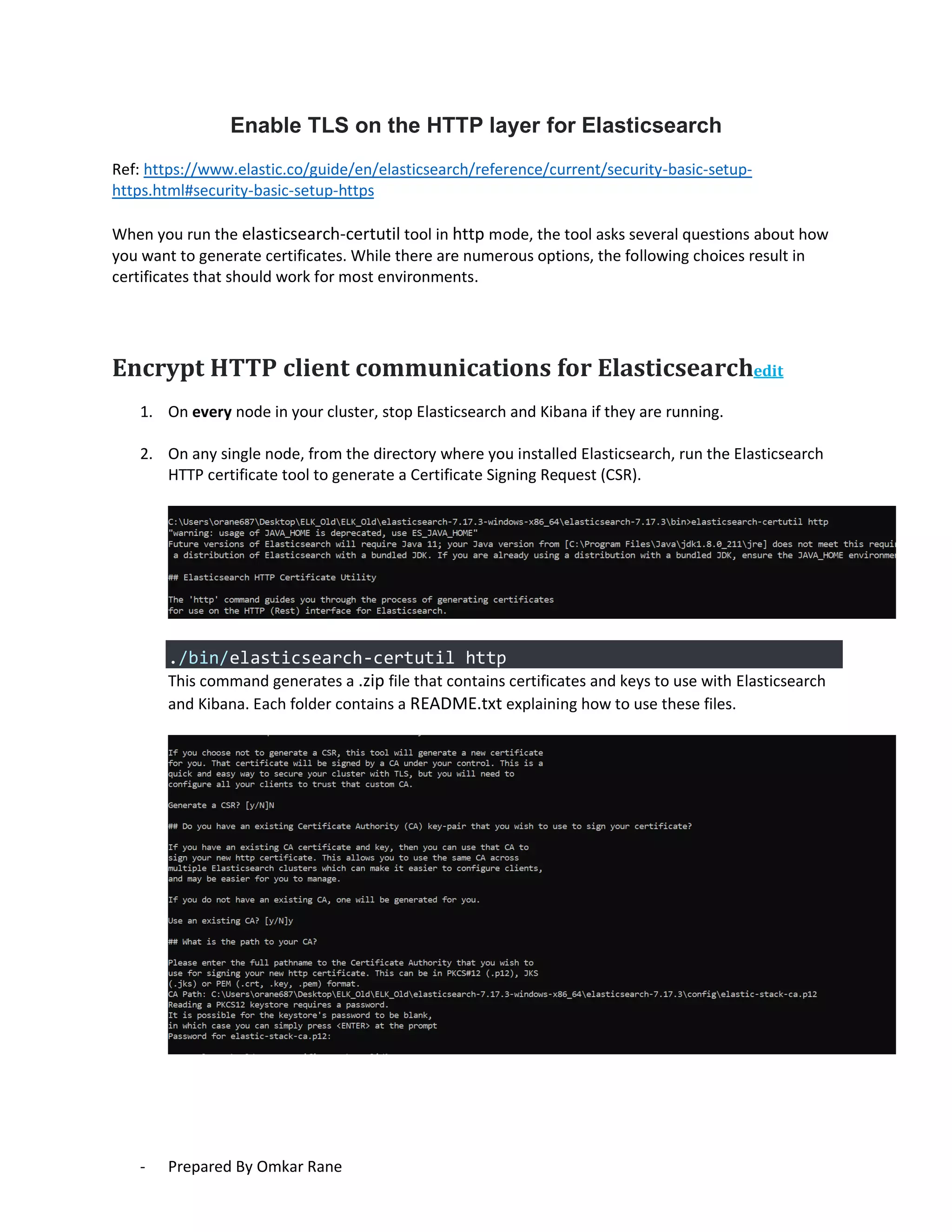

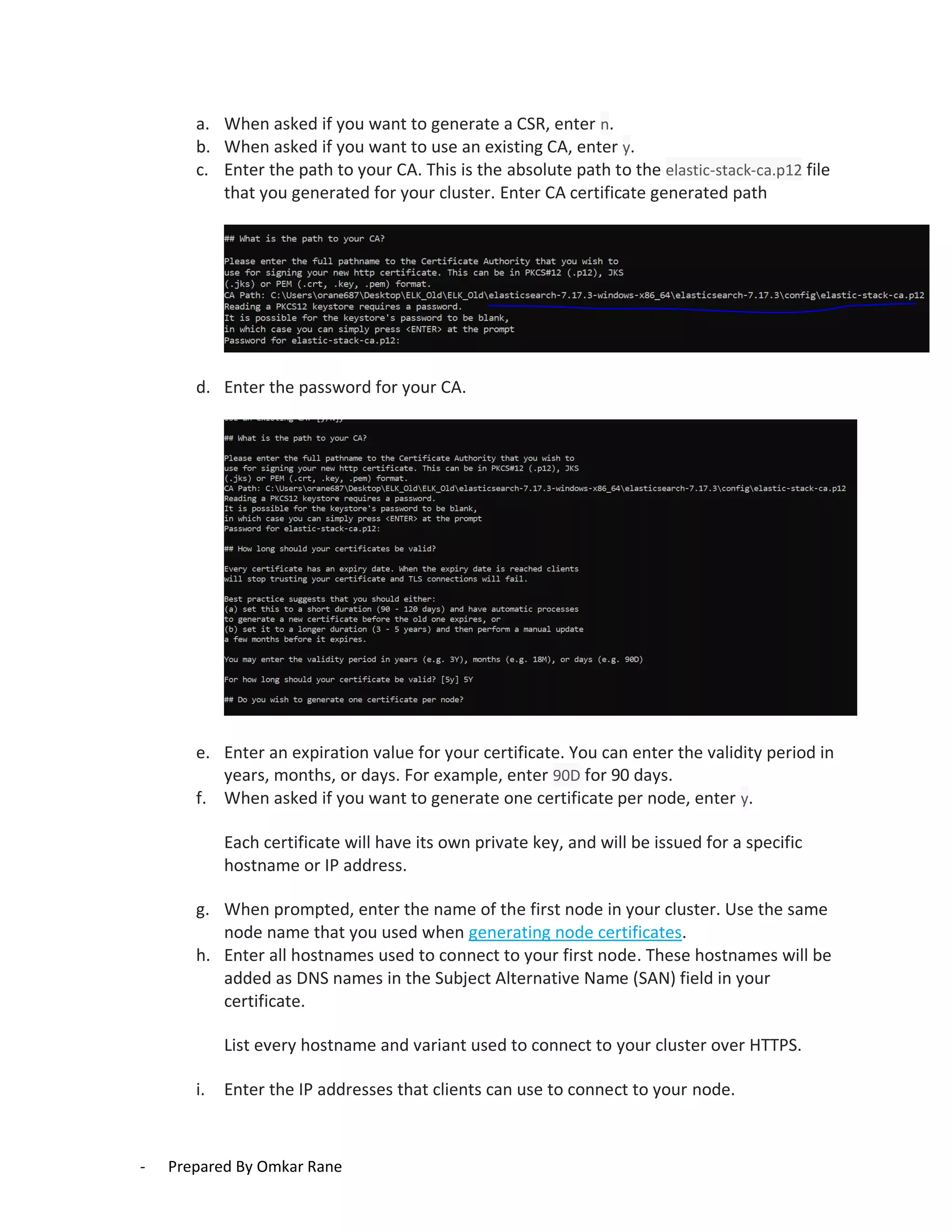

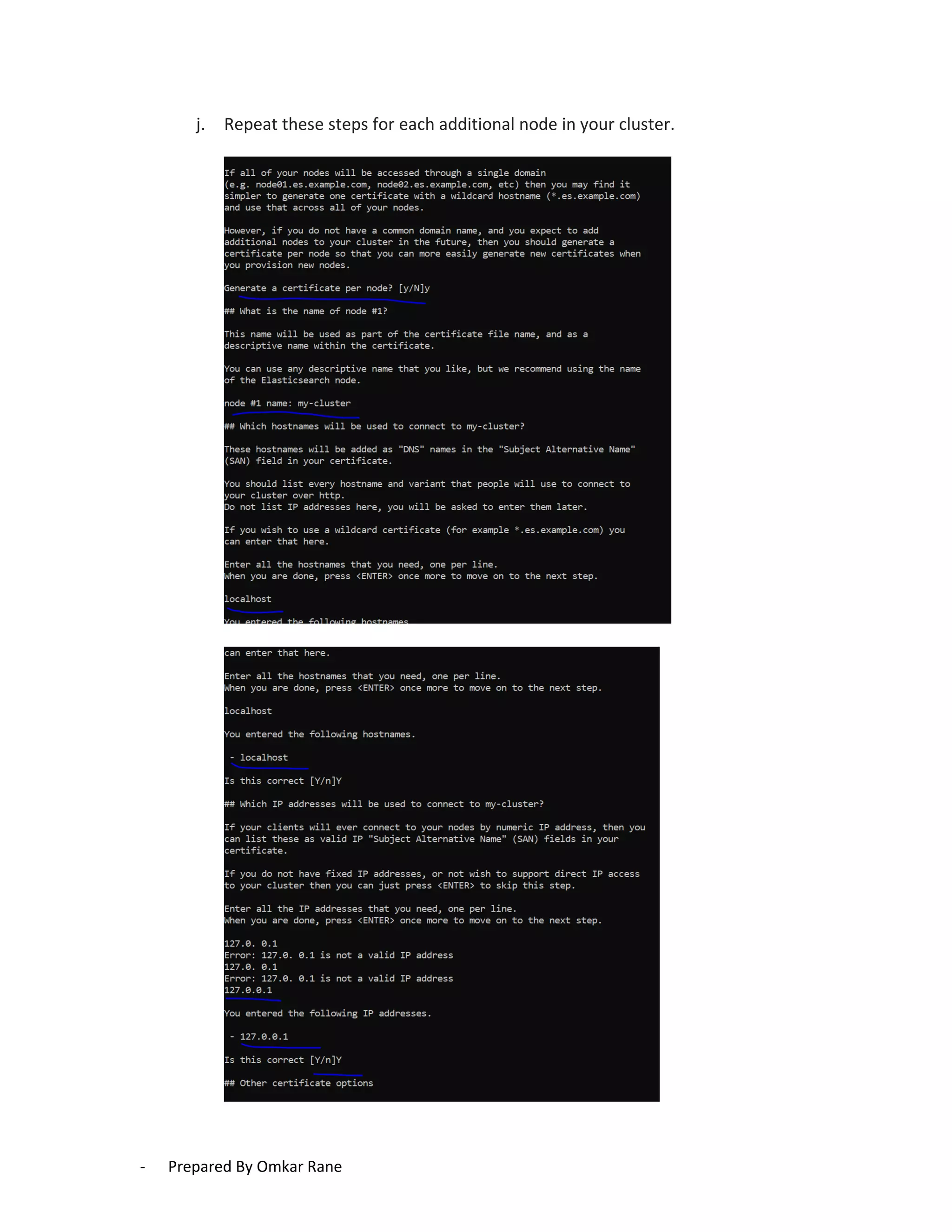

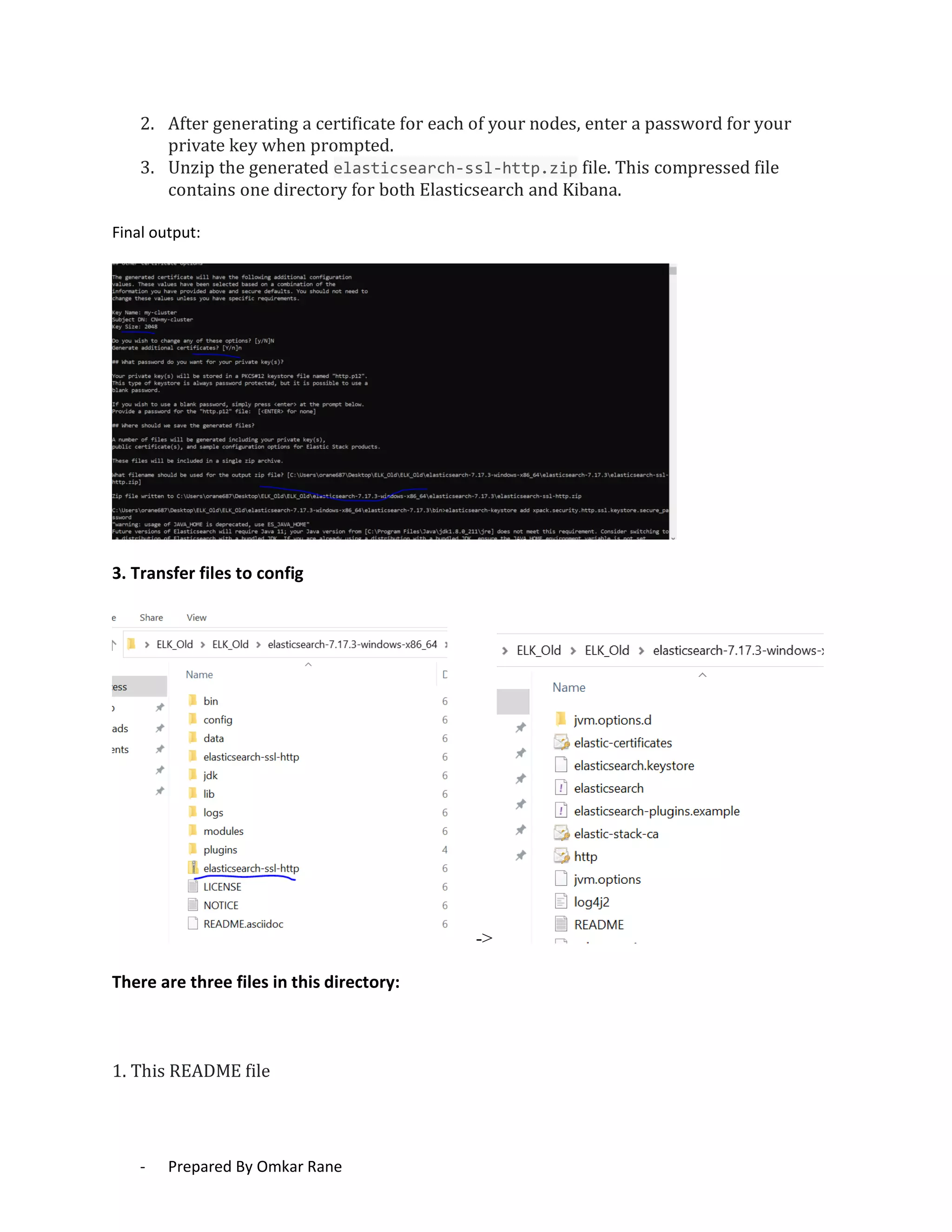

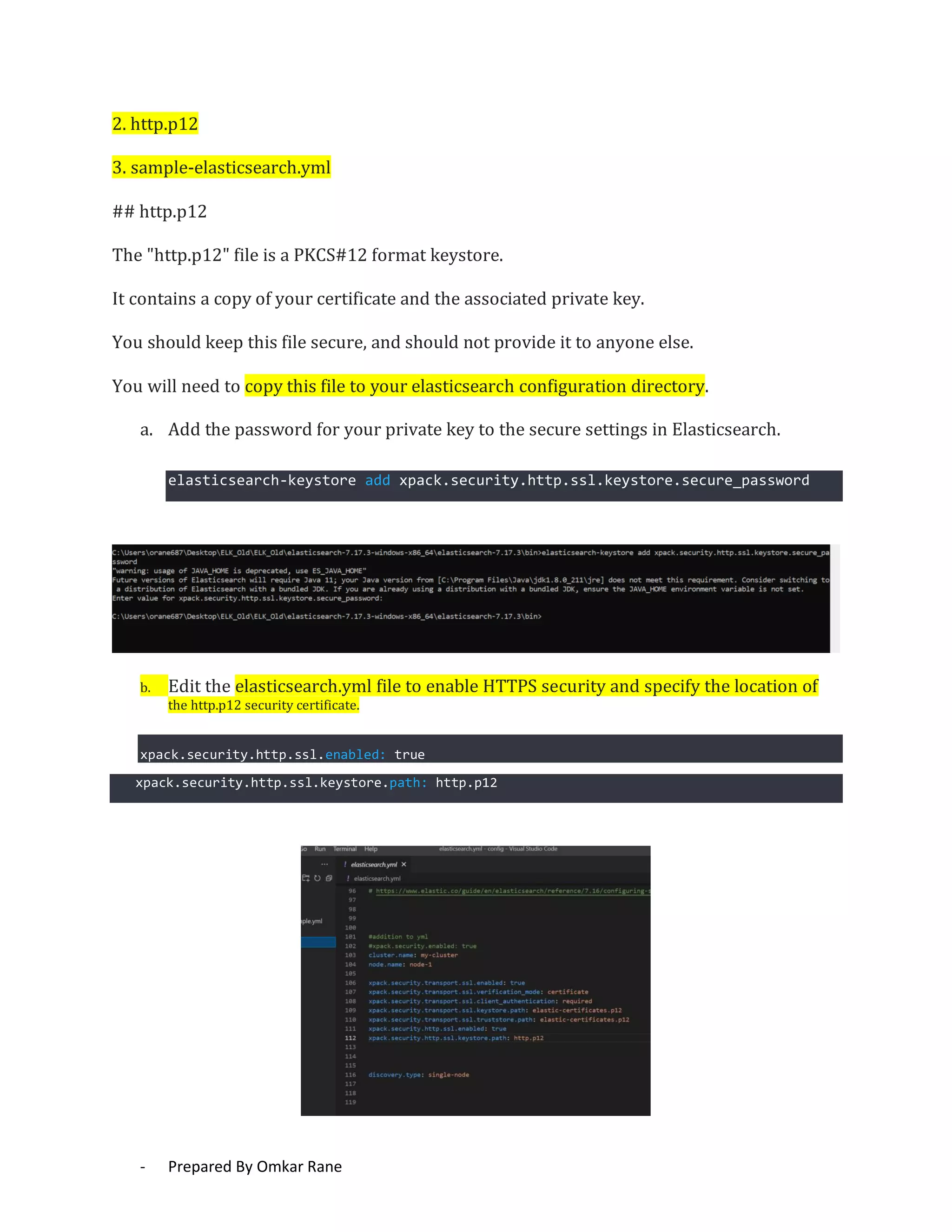

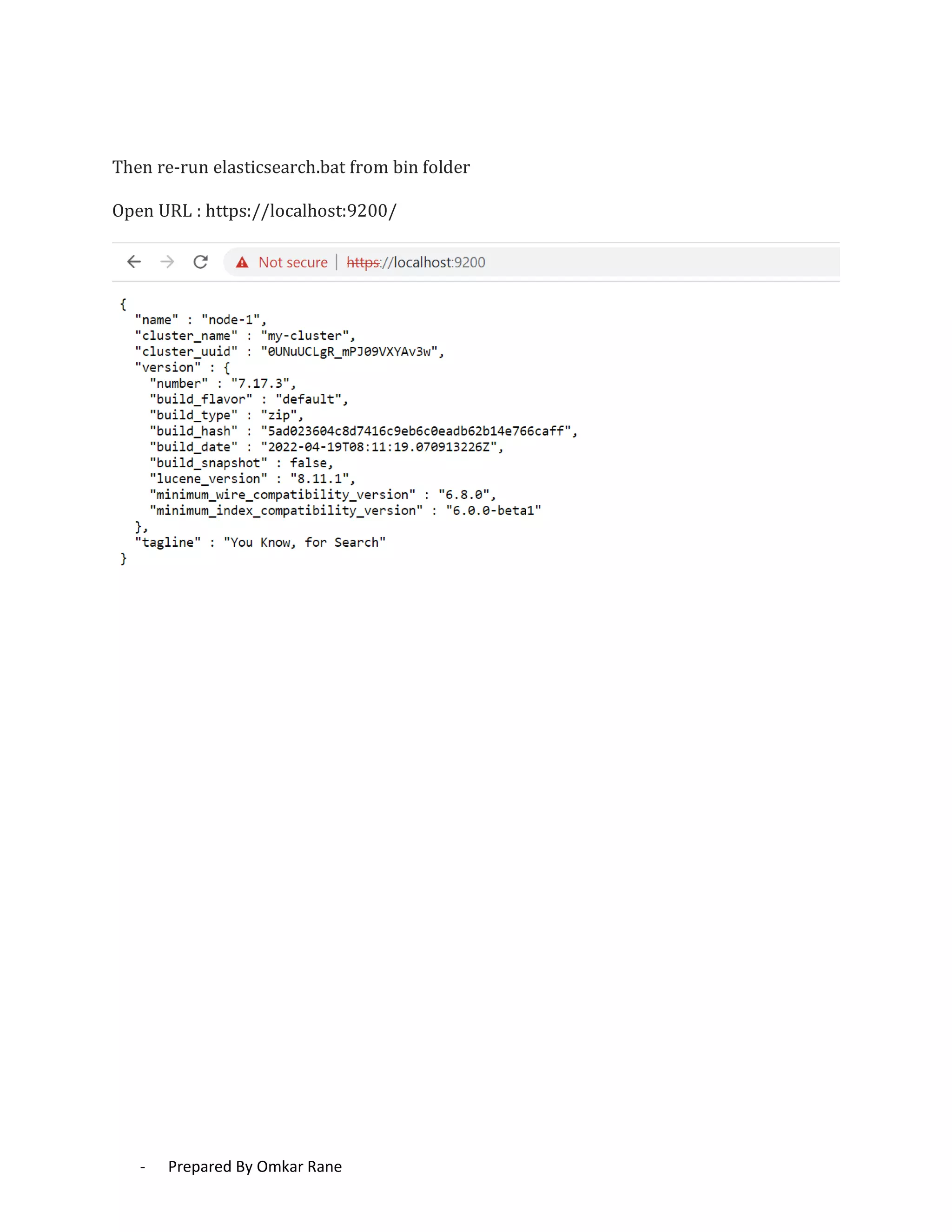

This document describes how to enable SSL/HTTPS on an Elasticsearch server by turning on TLS for both the transport and HTTP layers. The process has four steps: generate a CA certificate, create node certificates signed by that CA, edit the Elasticsearch configuration file to reference the certificates, and restart Elasticsearch so that it serves HTTPS.
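As a rough illustration of the configuration step, the fragment below shows what the TLS-related part of `elasticsearch.yml` might look like. It is a minimal sketch, not the exact configuration used in the screenshots. It assumes the node certificate and CA are bundled in a single PKCS#12 file named `elastic-certificates.p12` (the default output name of `bin/elasticsearch-certutil cert`), placed in the Elasticsearch config directory, and reused for both layers. The file name, its location, and the choice of `verification_mode` are assumptions; adjust them to match the certificates actually generated.

```yaml
# elasticsearch.yml (TLS-related settings only; a sketch under the assumptions above)

# Security features must be enabled for the TLS settings to take effect.
xpack.security.enabled: true

# Transport layer: node-to-node traffic inside the cluster.
xpack.security.transport.ssl.enabled: true
# "certificate" checks that the peer certificate is signed by a trusted CA
# without verifying the hostname (assumed here; "full" is stricter).
xpack.security.transport.ssl.verification_mode: certificate
# Hypothetical file name; relative paths are resolved against the config directory.
xpack.security.transport.ssl.keystore.path: elastic-certificates.p12
xpack.security.transport.ssl.truststore.path: elastic-certificates.p12

# HTTP layer: REST API traffic from clients (this is what makes the server answer on https://).
xpack.security.http.ssl.enabled: true
xpack.security.http.ssl.keystore.path: elastic-certificates.p12
xpack.security.http.ssl.truststore.path: elastic-certificates.p12
```

If the certificates are PEM files rather than a PKCS#12 bundle, the `keystore.path` and `truststore.path` settings would be replaced by the corresponding `key`, `certificate`, and `certificate_authorities` settings. If the keystore is password-protected, the password belongs in the Elasticsearch keystore rather than in this file. After restarting Elasticsearch, clients need to use `https://` and trust the CA certificate.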