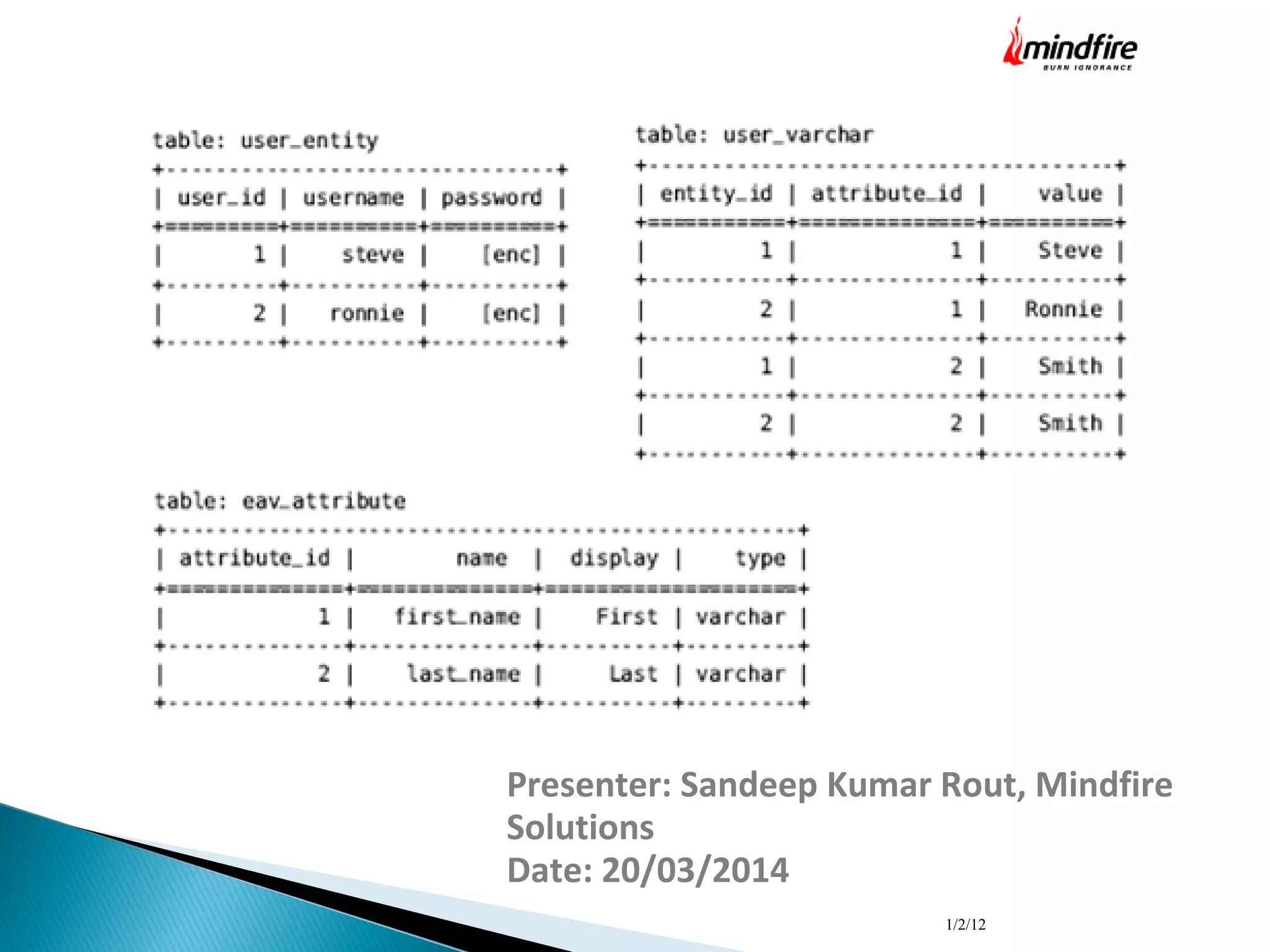

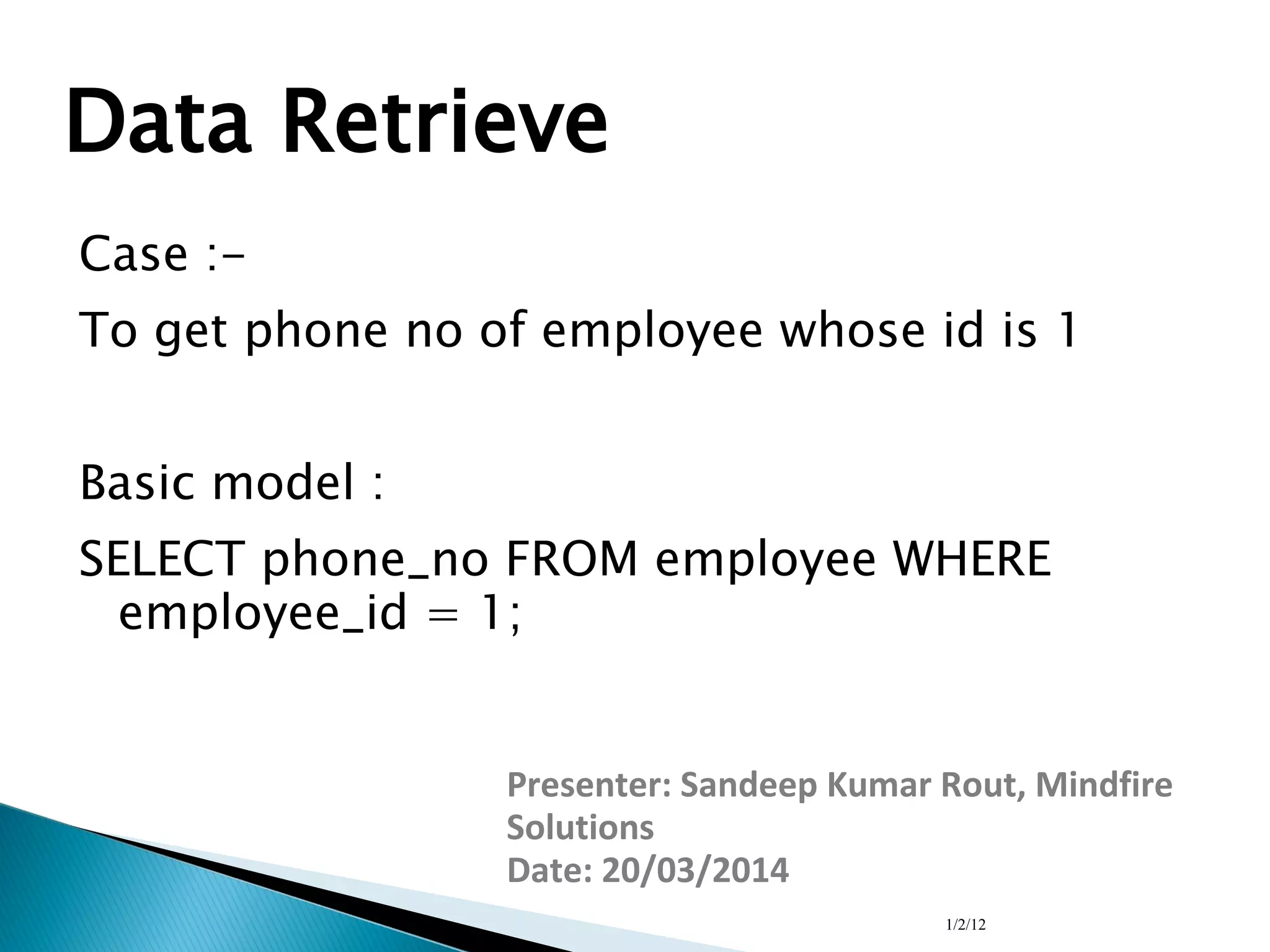

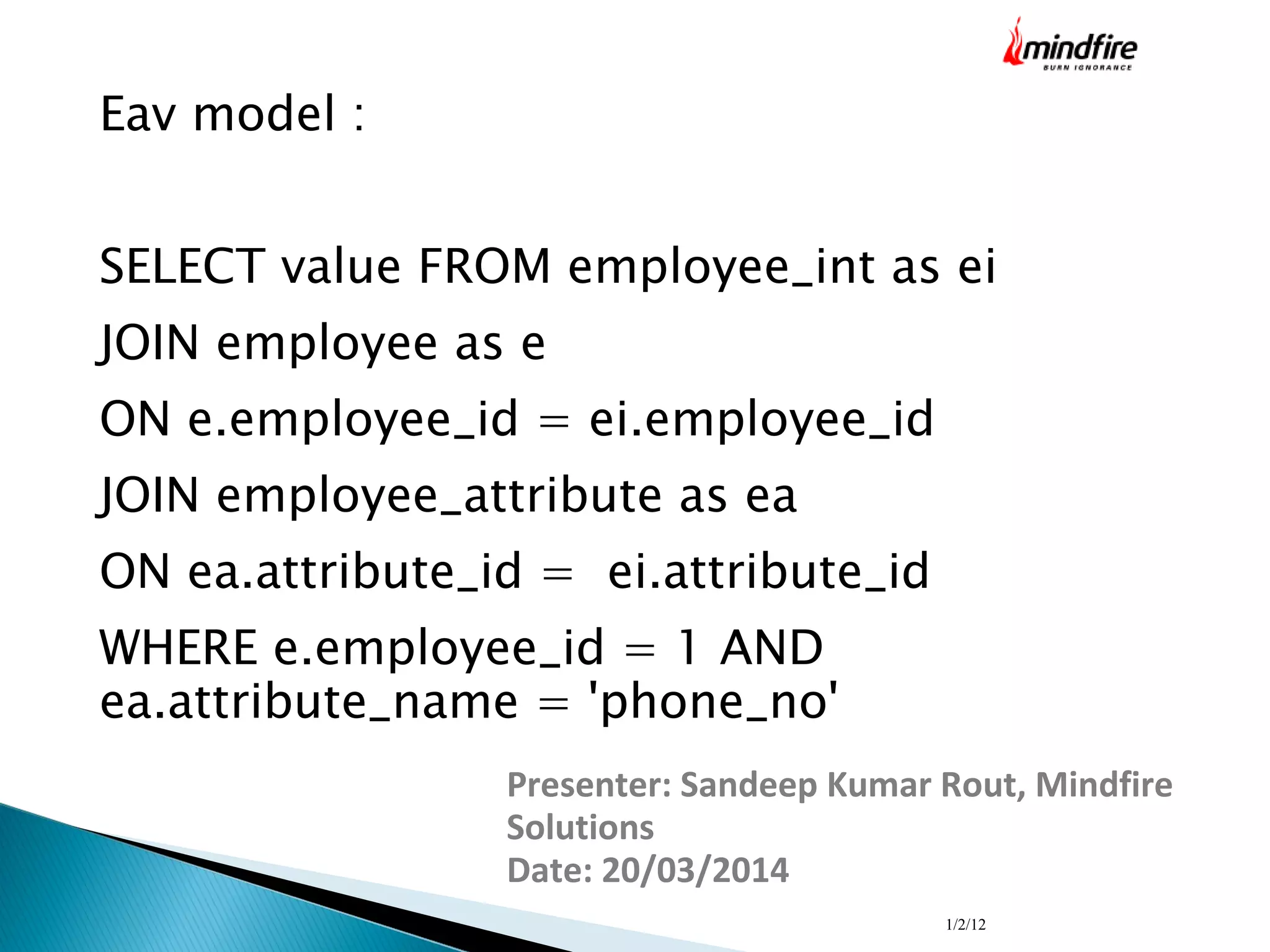

The document presents an overview of the Entity Attribute Value (EAV) data model by Sandeep Kumar Rout from Mindfire Solutions, detailing its structure, history, and use cases. It explains the components of the EAV model—entities, attributes, and values—as well as the advantages (flexibility, scalability) and disadvantages (performance issues) associated with it. Additionally, it describes the process of indexing to enhance data retrieval performance in an EAV structured database.