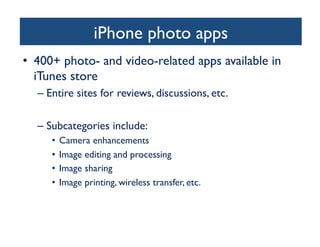

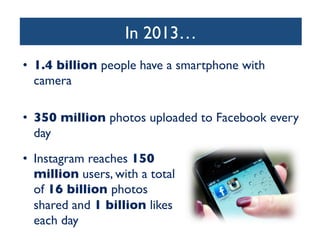

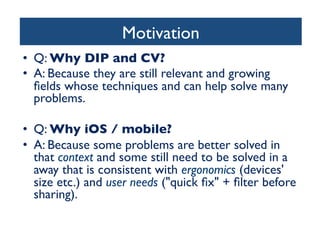

- Image processing and computer vision applications are becoming more common on mobile devices such as the iPhone and iPad, which creates opportunities for apps that improve how users work with images and videos.

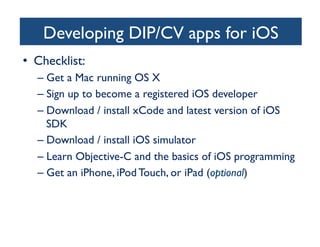

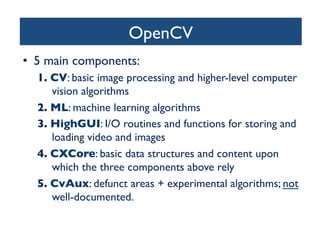

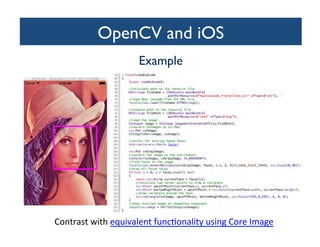

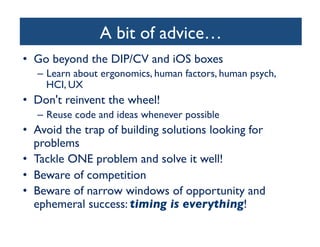

- The talk provided an overview of developing image and computer vision apps for iOS, including recommended tools like Core Image and OpenCV. It also offered advice on focusing an app idea on solving a specific problem and being aware of competition and market timing.

- Mobile image processing and computer vision have a promising future, and many specific problems in this area still lack good solutions that developers could build.

![Slide: Content-Based Image Retrieval (CBIR) using the Query-By-Example (QBE) paradigm. "The example is right there, in front of the user!" The slide reproduces an article excerpt on robust mobile image recognition with bag-of-features retrieval (feature extraction, feature matching, geometric verification).](https://image.slidesharecdn.com/dipcviphoneipadiosday2013marques-131219072221-phpapp01/85/Image-Processing-and-Computer-Vision-in-iOS-13-320.jpg)
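
The slide above refers to bag-of-features (bag-of-words) retrieval, where each image is represented by quantized feature IDs ("visual words") and an inverted file maps each word to the images that contain it. The following is a minimal sketch of that lookup step only, not the method of any particular system: the database contents, the image names, and the helper names `build_inverted_index` and `shortlist` are hypothetical, and feature extraction and geometric verification are left out.

```python
from collections import Counter, defaultdict


def build_inverted_index(database):
    """Map each visual word ID to the set of image names that contain it."""
    index = defaultdict(set)
    for image_name, words in database.items():
        for word in words:
            index[word].add(image_name)
    return index


def shortlist(query_words, index, top_k=2):
    """Rank database images by the number of distinct visual words shared with the query."""
    votes = Counter()
    for word in set(query_words):
        # Only images listed under this word are touched, which is what
        # makes the inverted file fast compared with scanning every image.
        for image_name in index.get(word, ()):
            votes[image_name] += 1
    return votes.most_common(top_k)


# Hypothetical database: image name -> visual word IDs (assumed to be
# produced earlier by feature extraction and quantization).
database = {
    "book_cover": [3, 7, 7, 12, 19],
    "cd_cover": [1, 3, 8, 21],
    "poster": [2, 5, 8, 30],
}

index = build_inverted_index(database)
candidates = shortlist([7, 12, 19, 3, 40], index)
print(candidates)
```

In a full pipeline, the shortlisted candidates would then go through geometric verification, which checks that the matched features form a consistent spatial pattern before a match is accepted.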