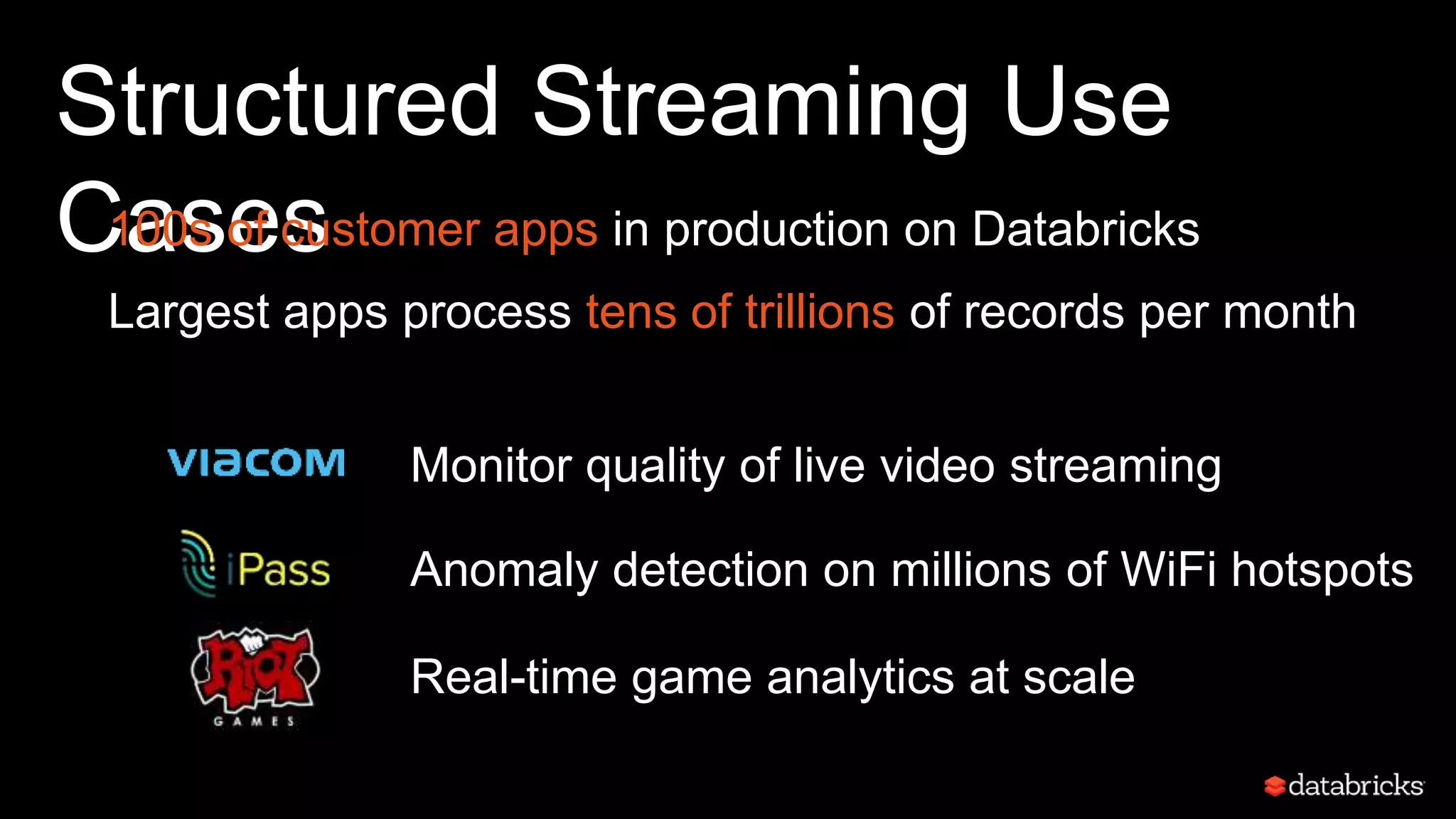

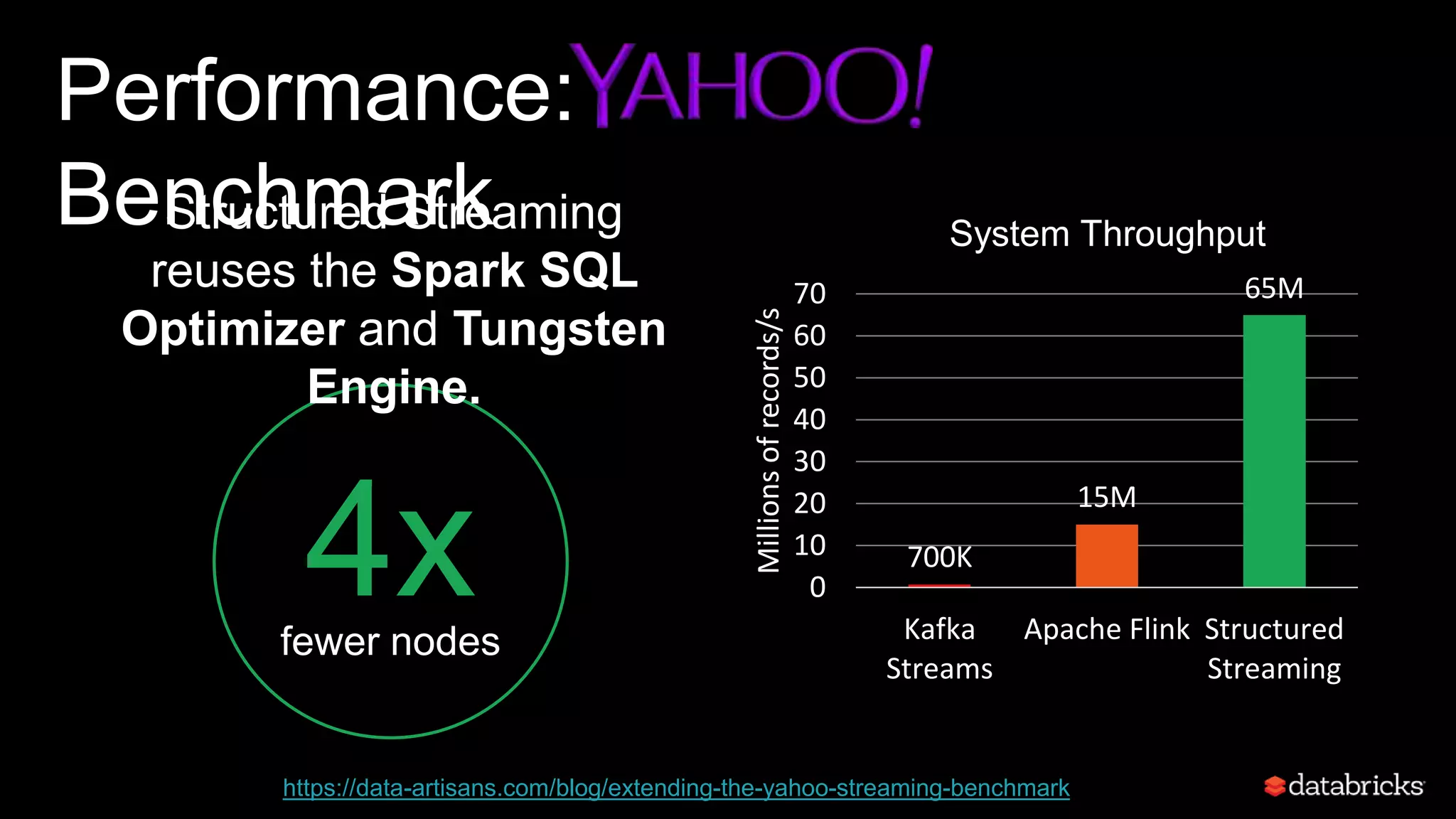

The document discusses advancements in Apache Spark 2.x, particularly focusing on structured streaming and deep learning capabilities. It highlights the introduction of a high-level API for streaming that simplifies processes and allows for end-to-end applications, as well as the integration of deep learning frameworks to enhance usability. Key features include improved performance, cost efficiency, and the goal of making deep learning accessible to a broader range of users.

![Example: Model Search

est = KerasImageFileEstimator()

grid = ParamGridBuilder()

.addGrid(est.modelFile, ["InceptionV3", "ResNet50"])

.addGrid(est.kerasParams, [{'batch': 32}, {'batch': 64}])

.build()

CrossValidator(est, eval, grid).fit(image_df)

InceptionV3

batch size 32

ResNet50

batch size 32

InceptionV3

batch size 64

ResNet50

batch size 64

Spark

Driver](https://image.slidesharecdn.com/sseu-2017-val-171026084953/75/Deep-Learning-and-Streaming-in-Apache-Spark-2-x-with-Matei-Zaharia-22-2048.jpg)