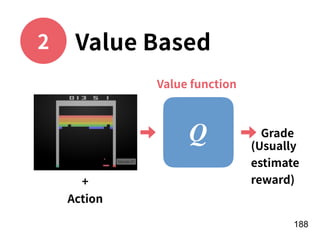

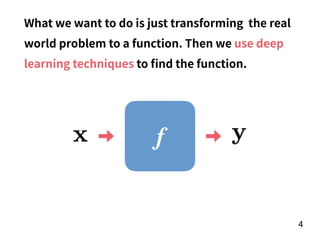

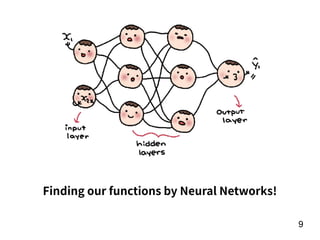

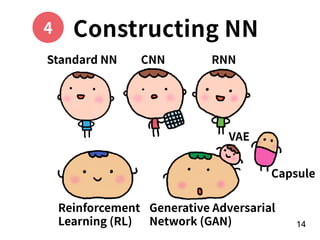

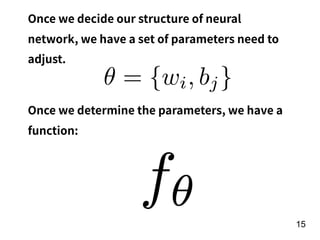

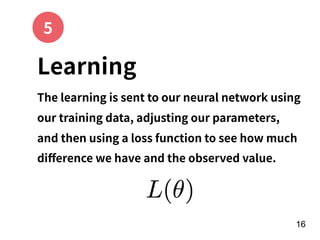

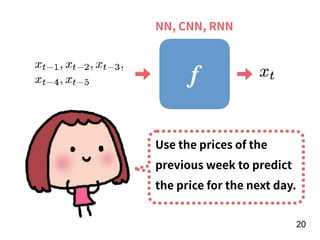

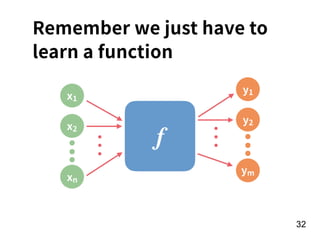

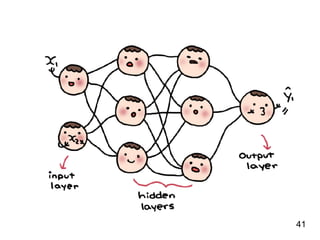

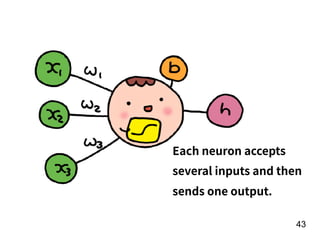

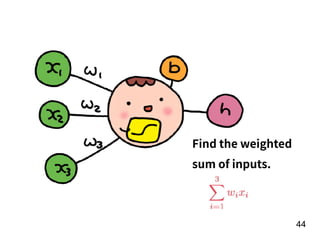

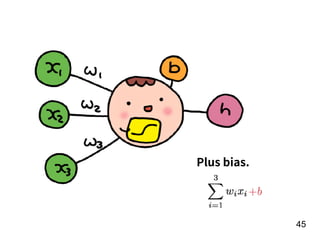

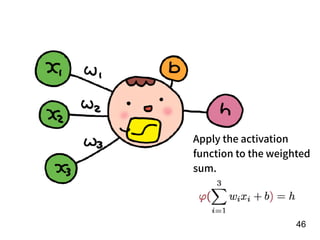

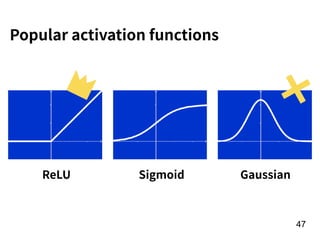

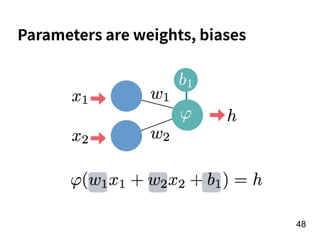

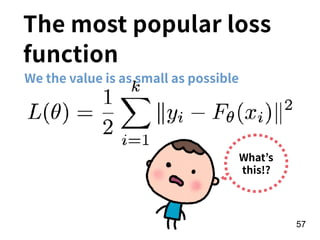

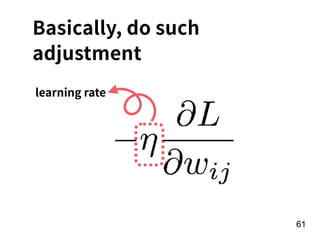

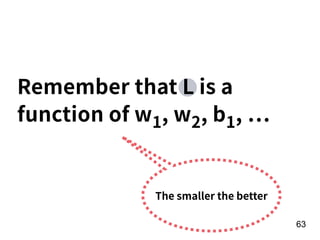

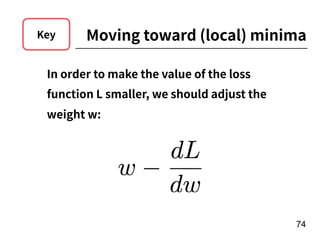

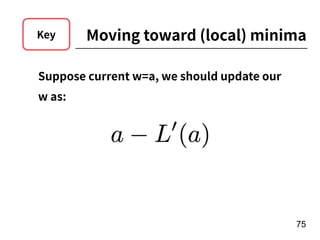

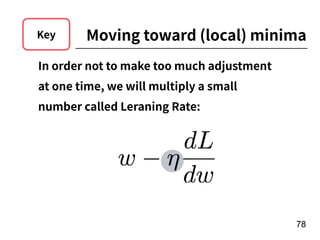

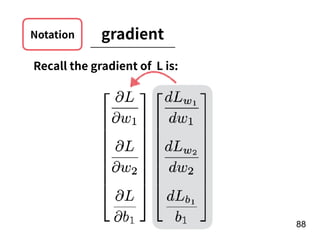

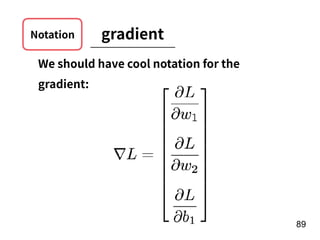

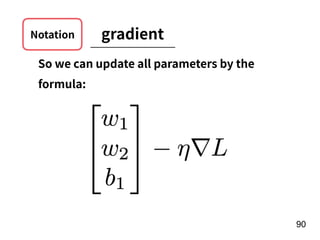

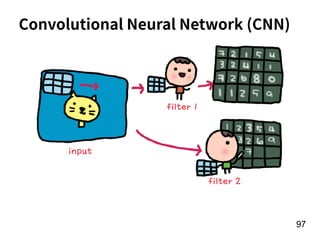

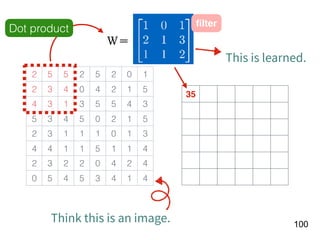

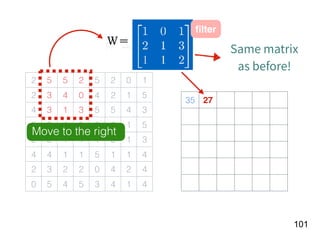

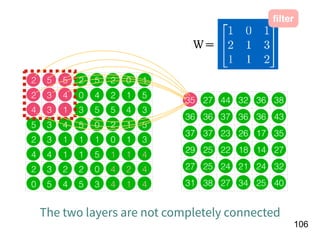

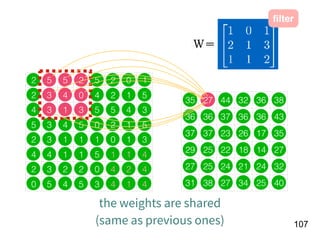

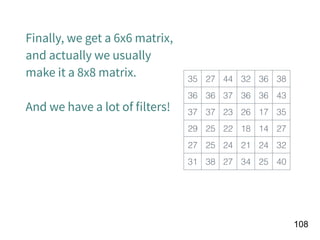

The document discusses how deep learning recasts real-world problems as functions that a neural network can learn to approximate. It covers network structures, training methods, architectures such as CNNs and RNNs, and core concepts such as gradient descent and loss functions. It also highlights practical applications, including image recognition and chatbots.

![!22

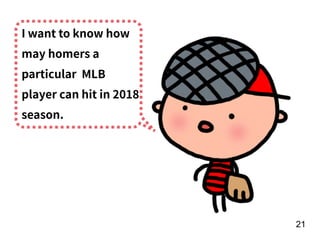

Player

preformance

in the year of

t-1

[Age, G, PA, AB, R, H, 2B, 3B, HR,

RBI, SB, BB, SO, OPS+, TB]

15 features!

f

Number of

homers in the

year of t

RNN](https://image.slidesharecdn.com/deeplearninganddesignthinking-180509111347/85/Deep-Learning-and-Design-Thinking-22-320.jpg)
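Slide 22 labels this mapping as an RNN. The following is a minimal sketch of that idea, assuming a plain Elman-style recurrence that reads a player's seasons oldest first and emits one number at the end. The weights are random and untrained, and `HIDDEN`, `init_params`, and `rnn_predict` are hypothetical names chosen for illustration, not taken from the deck.

```python
import math
import random

# The 15 per-season features listed on the slide.
FEATURES = ["Age", "G", "PA", "AB", "R", "H", "2B", "3B", "HR",
            "RBI", "SB", "BB", "SO", "OPS+", "TB"]

HIDDEN = 4  # hypothetical hidden-state size, chosen only for the sketch


def init_params(n_in, n_hidden, seed=0):
    """Small random weights; a real model would learn these from data."""
    rng = random.Random(seed)

    def mat(rows, cols):
        return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]

    return {
        "W_xh": mat(n_hidden, n_in),      # input -> hidden
        "W_hh": mat(n_hidden, n_hidden),  # previous hidden -> hidden
        "b_h": [0.0] * n_hidden,
        "w_out": [rng.uniform(-0.1, 0.1) for _ in range(n_hidden)],
        "b_out": 0.0,
    }


def rnn_predict(seasons, params):
    """Run the recurrence over seasons (oldest first) and return one number."""
    h = [0.0] * len(params["b_h"])
    for x in seasons:
        if len(x) != len(FEATURES):
            raise ValueError("each season needs exactly 15 features")
        # New hidden state depends on this season's features and the old state.
        h = [
            math.tanh(
                sum(w * xi for w, xi in zip(params["W_xh"][j], x))
                + sum(w * hi for w, hi in zip(params["W_hh"][j], h))
                + params["b_h"][j]
            )
            for j in range(len(h))
        ]
    # Linear read-out of the final hidden state: the (untrained) homer estimate.
    return sum(w * hi for w, hi in zip(params["w_out"], h)) + params["b_out"]
```

A call such as `rnn_predict([[0.1] * 15, [0.2] * 15], init_params(15, HIDDEN))` runs two placeholder seasons through the recurrence. The inputs here are dummy values, not real player statistics, and the output is meaningless until the weights are trained against a loss.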

![Slide 156](https://image.slidesharecdn.com/deeplearninganddesignthinking-180509111347/85/Deep-Learning-and-Design-Thinking-156-320.jpg)

Slide 156: the 15 features from year t-1 [Age, G, PA, AB, R, H, 2B, 3B, HR, RBI, SB, BB, SO, OPS+, TB] are passed through f to give the number of homers in year t.
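The slide frames the prediction task as a single function f from 15 numbers to one number. As a rough illustration of that framing, the sketch below builds such an f as a one-hidden-layer network with ReLU activations. The architecture, the layer size, and the names `make_f` and `n_hidden` are assumptions made for this example, and the weights are random rather than trained.

```python
import random

# The 15 input features named on the slide, in the order shown there.
FEATURES = ["Age", "G", "PA", "AB", "R", "H", "2B", "3B", "HR",
            "RBI", "SB", "BB", "SO", "OPS+", "TB"]


def make_f(n_hidden=8, seed=0):
    """Return an untrained f: 15 season stats (year t-1) -> one number (year t)."""
    rng = random.Random(seed)
    W1 = [[rng.uniform(-0.1, 0.1) for _ in FEATURES] for _ in range(n_hidden)]
    b1 = [0.0] * n_hidden
    w2 = [rng.uniform(-0.1, 0.1) for _ in range(n_hidden)]
    b2 = 0.0

    def f(stats):
        # Fix the feature order so the same weight always meets the same stat.
        x = [float(stats[name]) for name in FEATURES]
        # Hidden layer: weighted sum followed by ReLU.
        hidden = [max(0.0, sum(w * xi for w, xi in zip(row, x)) + b)
                  for row, b in zip(W1, b1)]
        # Output layer: a single linear unit.
        return sum(w * h for w, h in zip(w2, hidden)) + b2

    return f
```

Calling `f = make_f()` and then `f(stats)`, where `stats` is a dict keyed by the names in `FEATURES`, returns a single float. Training would consist of adjusting `W1`, `b1`, `w2`, and `b2` so that this output approaches the observed home-run count for year t.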