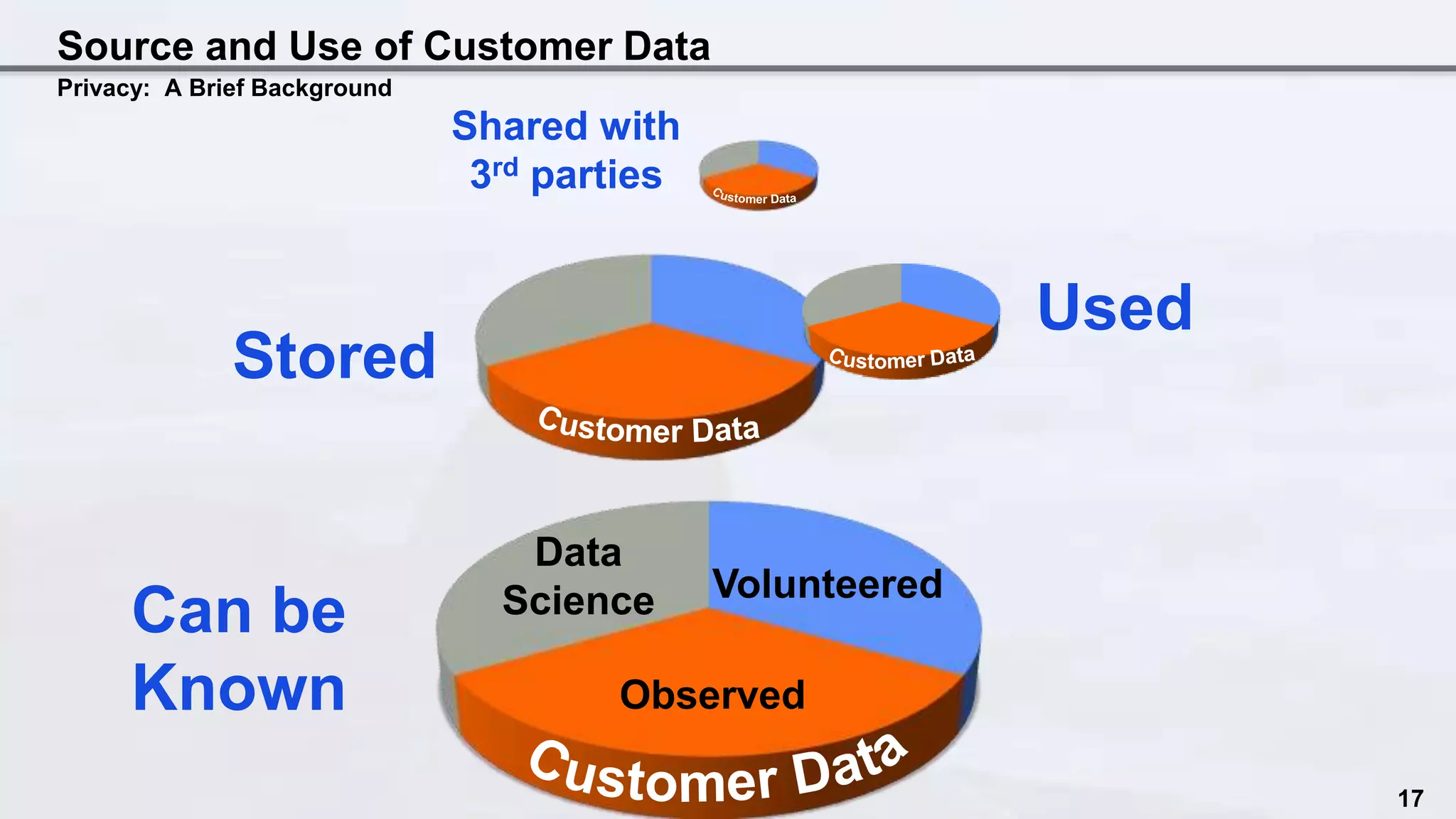

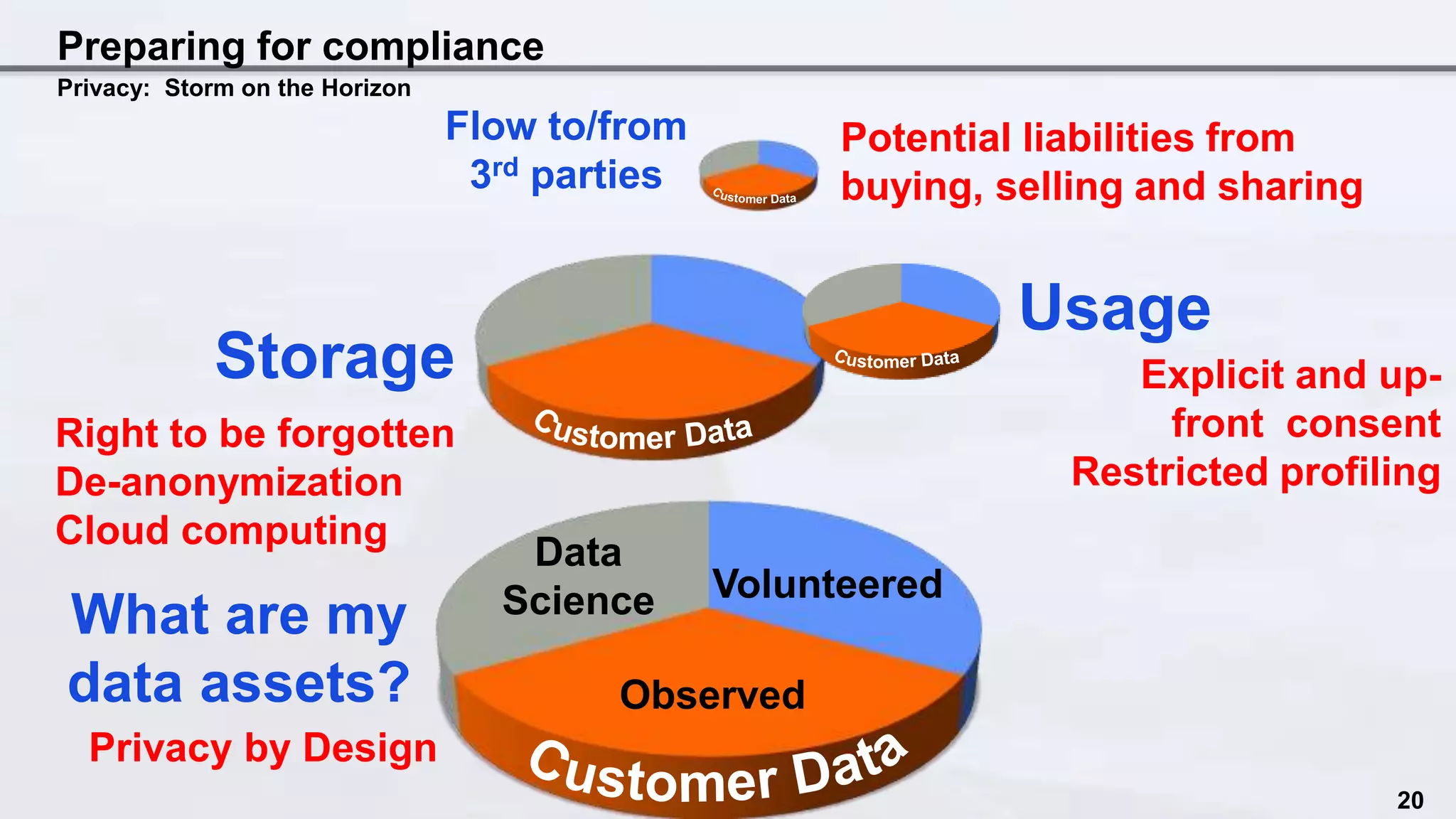

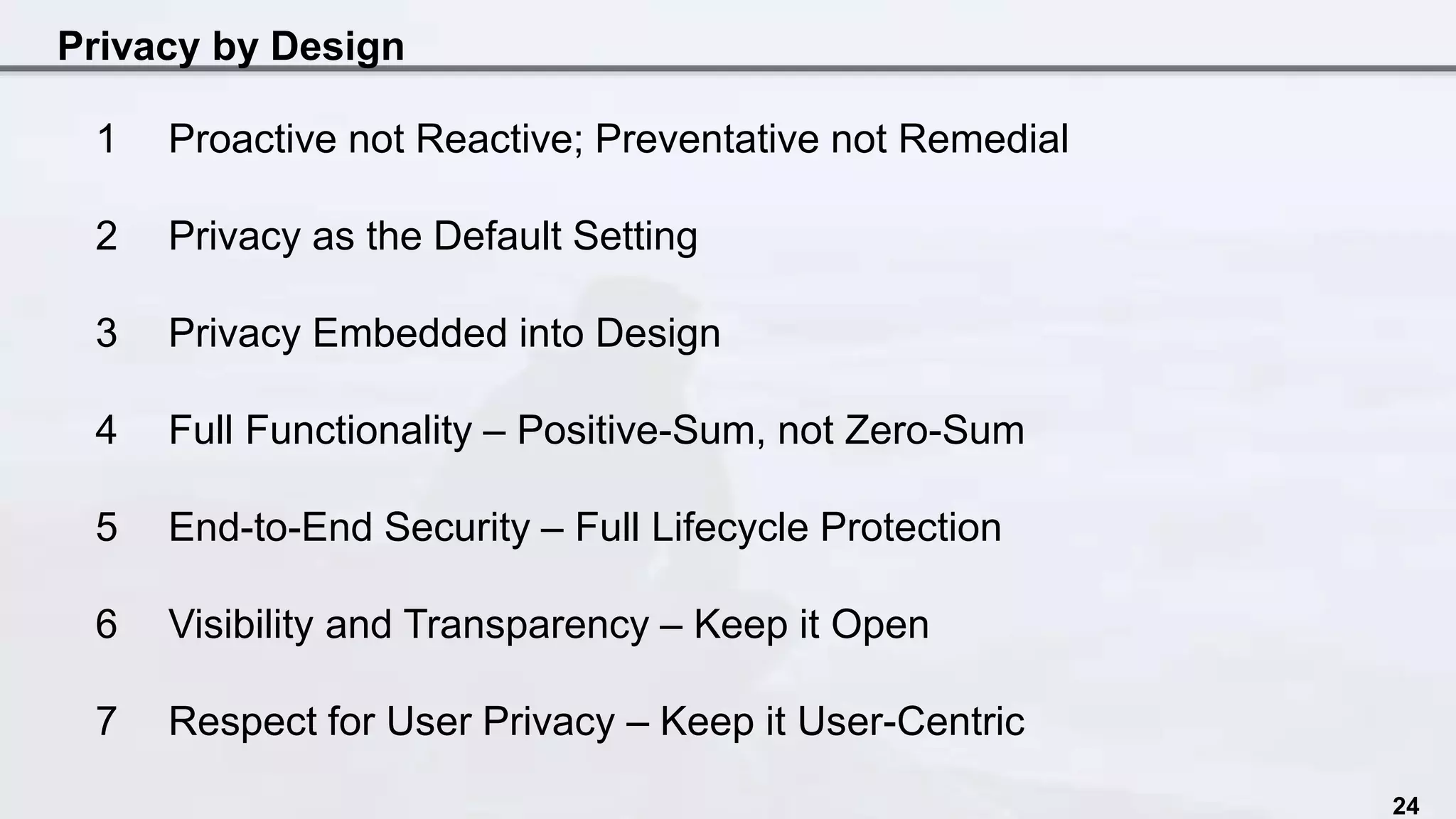

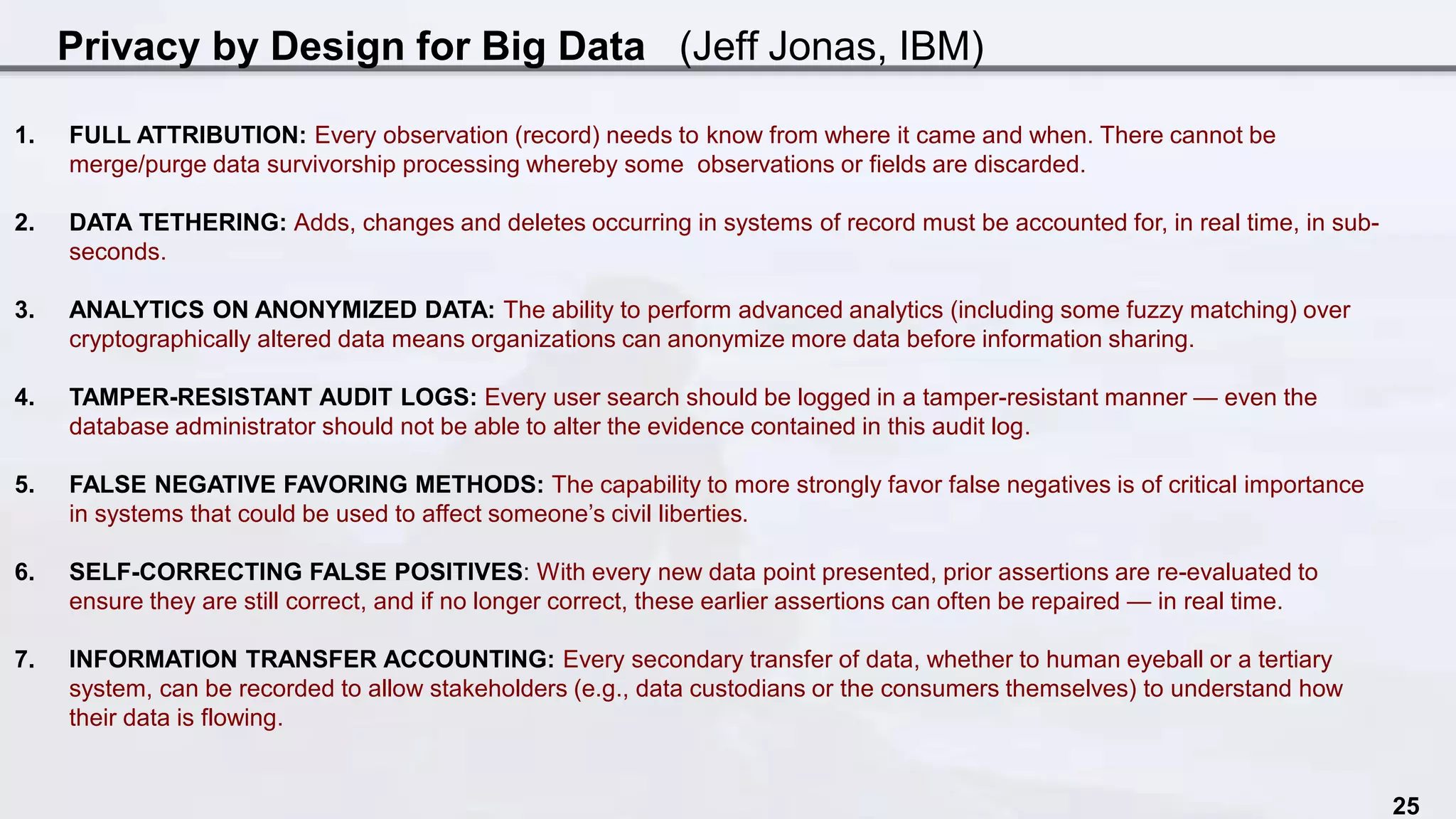

The document discusses the intersection of data science and EU privacy regulations, highlighting the growth and use of data alongside rising privacy concerns. It presents case studies of privacy violations and emphasizes the need to comply with privacy legislation, advocating proactive measures such as privacy by design. Its key recommendations are to audit data ecosystems, obtain user consent, and be transparent about data handling practices.