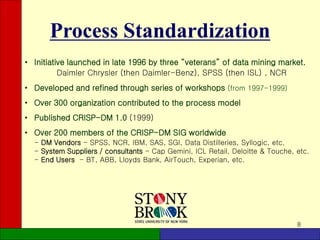

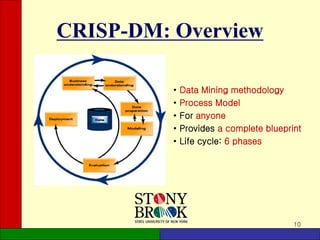

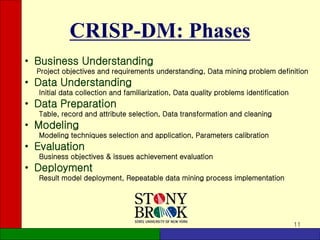

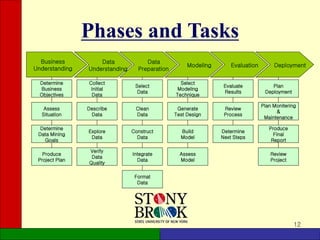

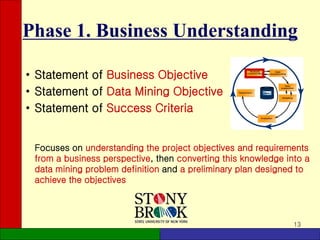

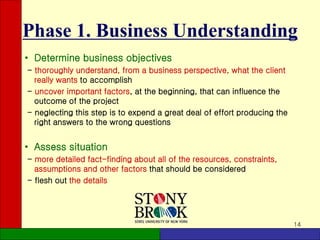

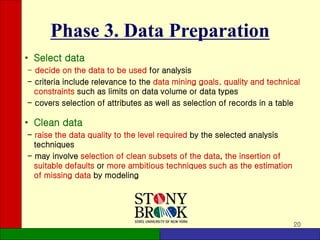

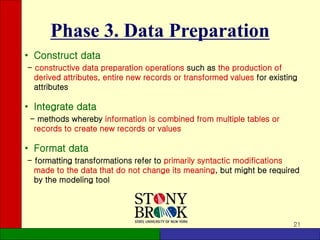

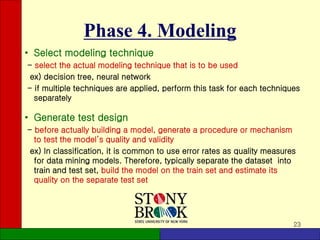

The document gives an overview of CRISP-DM (Cross-Industry Standard Process for Data Mining), a standard process for data mining projects. CRISP-DM consists of six phases: business understanding, data understanding, data preparation, modeling, evaluation, and deployment. Each phase has defined tasks that guide users through a data mining project from start to finish. The document outlines the objectives and key activities of each phase to give readers a high-level understanding of the methodology.
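
As a rough illustration of the phase ordering described above, the six phases can be written down as an ordered enumeration. This is only a minimal sketch: the names `Phase`, `next_phase`, and `phase_names` are hypothetical and do not come from the document, and the strictly linear ordering is a simplification, since CRISP-DM is usually described as an iterative process in which a project can move back to an earlier phase.

```python
from enum import IntEnum


class Phase(IntEnum):
    """The six CRISP-DM phases, numbered in the order the summary lists them.

    Hypothetical helper type for illustration; not part of any CRISP-DM tooling.
    """
    BUSINESS_UNDERSTANDING = 1
    DATA_UNDERSTANDING = 2
    DATA_PREPARATION = 3
    MODELING = 4
    EVALUATION = 5
    DEPLOYMENT = 6


def next_phase(phase):
    """Return the phase that follows `phase`, or None after deployment.

    Assumes a simple forward walk; real projects often loop back.
    """
    if phase is Phase.DEPLOYMENT:
        return None
    return Phase(phase + 1)


def phase_names():
    """List the phases as readable lowercase names, in order."""
    return [p.name.replace("_", " ").lower() for p in Phase]


if __name__ == "__main__":
    for number, name in enumerate(phase_names(), start=1):
        print(f"{number}. {name}")
```

An integer-backed enumeration keeps the order explicit, so a checklist or project tracker built on it could compare phases directly (for example, `Phase.MODELING < Phase.EVALUATION`).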