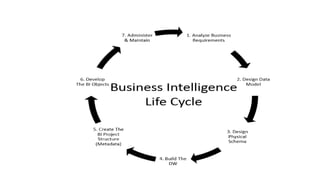

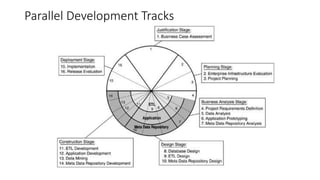

The document discusses the business intelligence (BI) lifecycle, which comprises six stages: 1) analyzing business requirements, 2) designing a data model, 3) designing the physical schema, 4) building the data warehouse, 5) creating project metadata, and 6) developing BI objects. It also describes the Enterprise Performance Lifecycle (EPLC) framework, which manages project deliverables and reviews across its stages to minimize risk and ensure that best practices are followed throughout the project lifecycle.