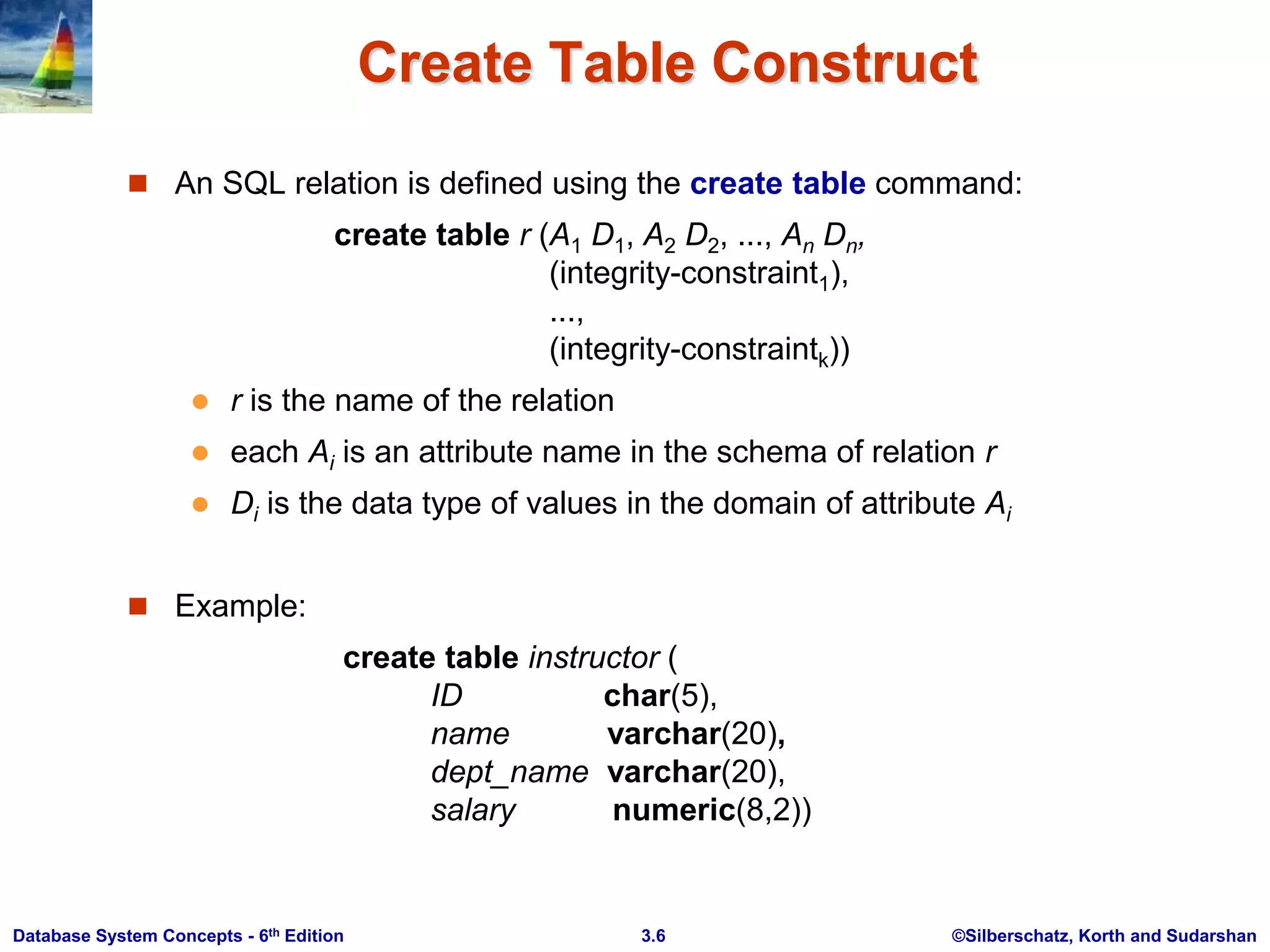

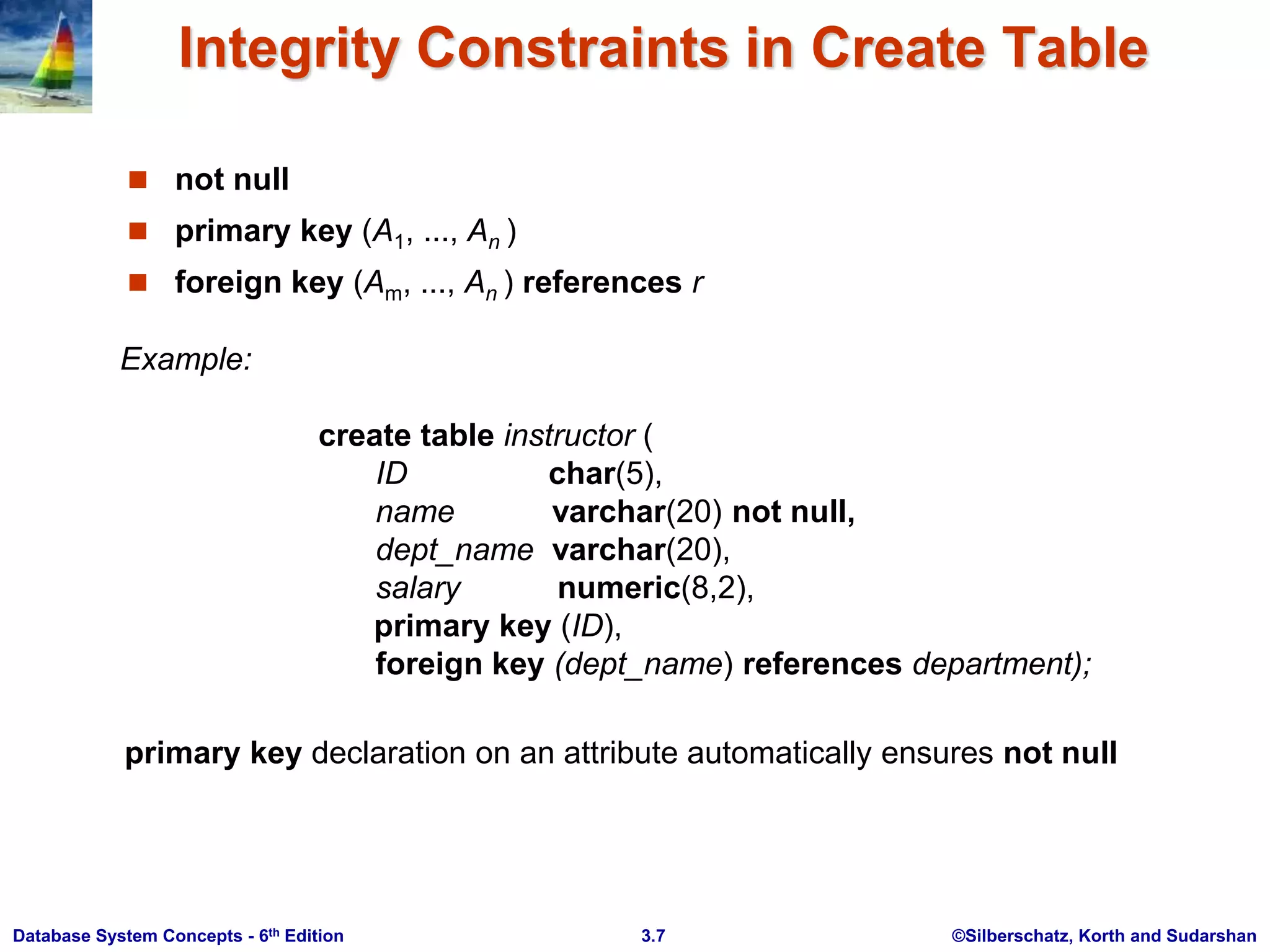

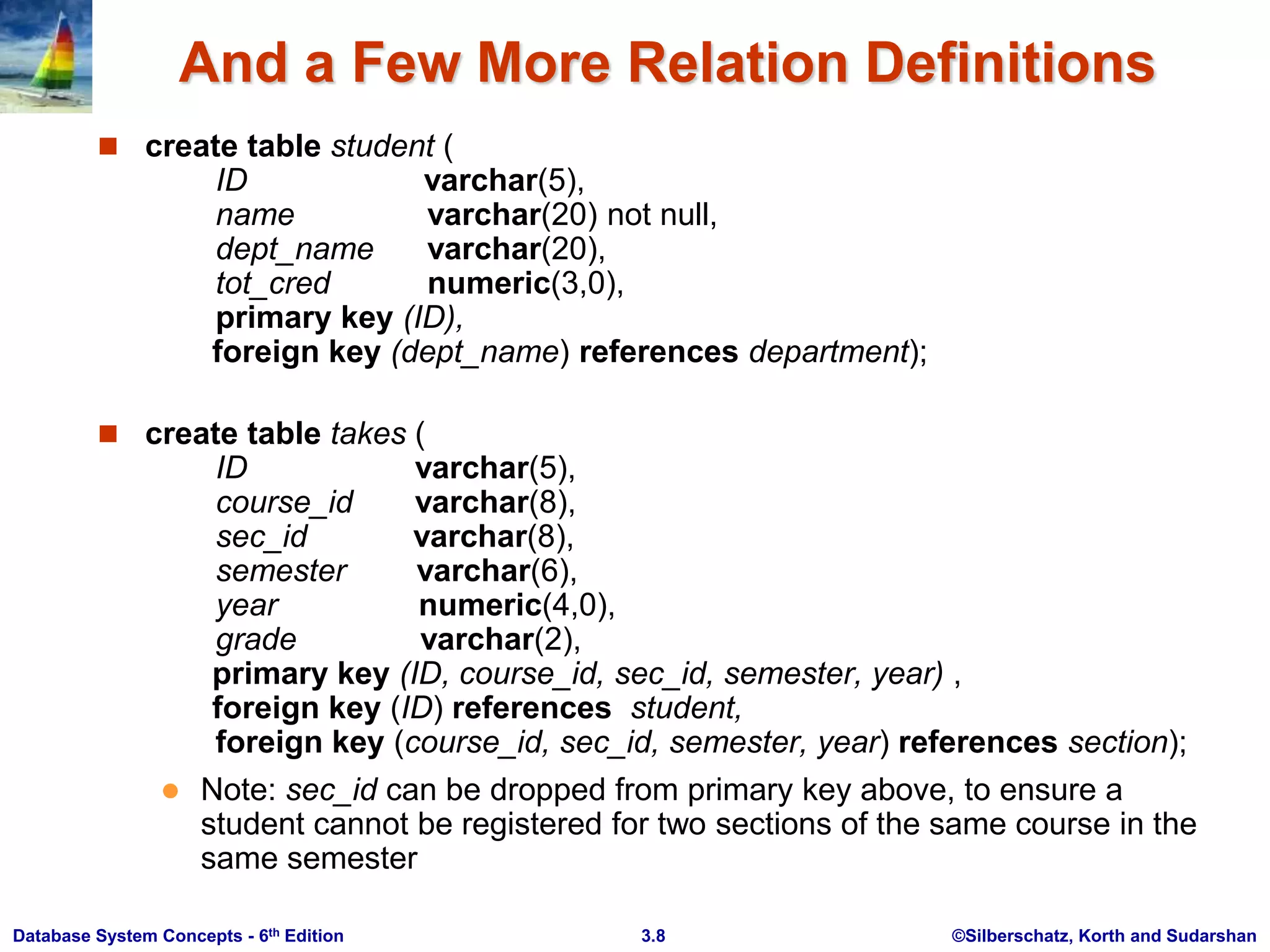

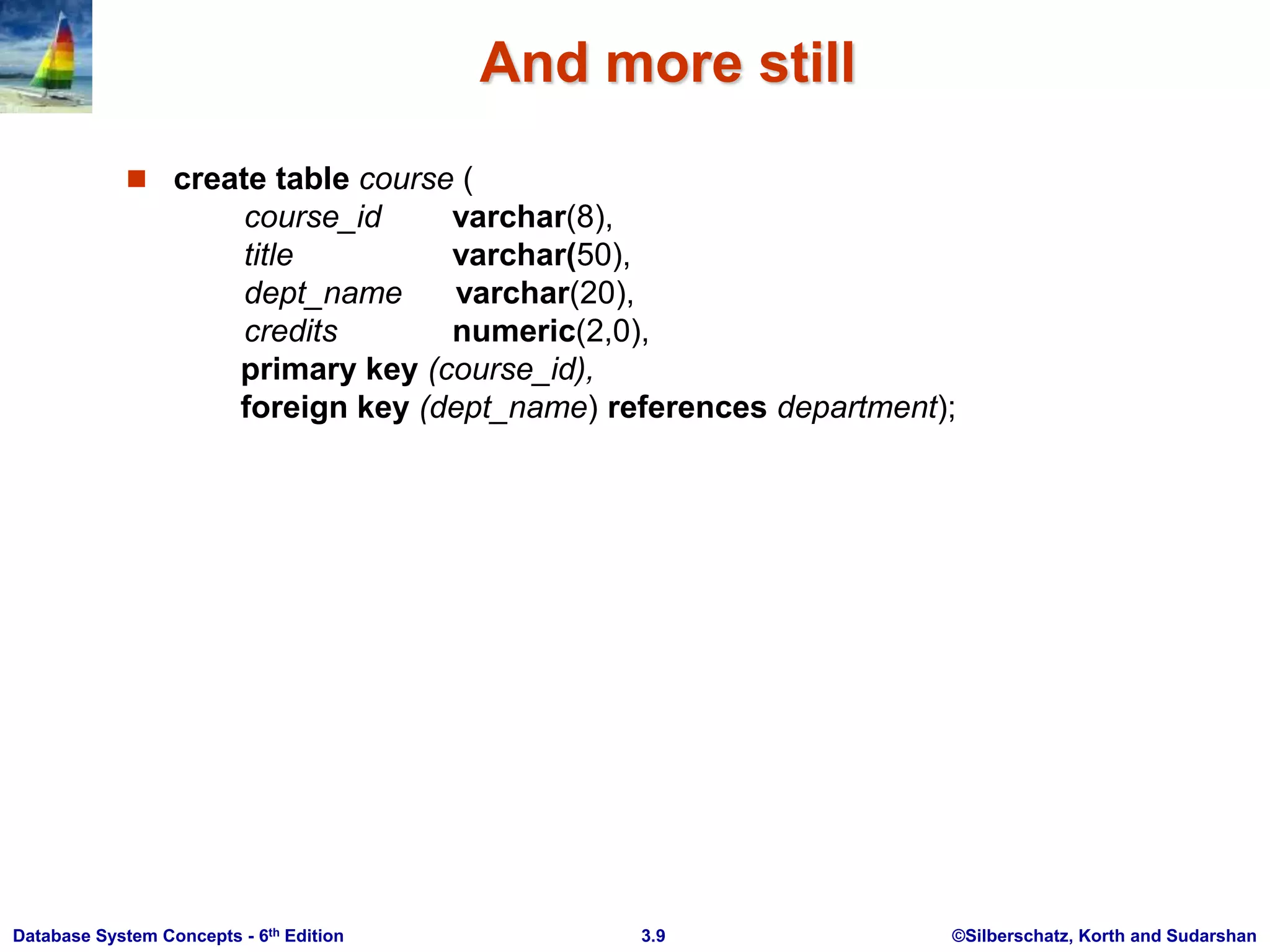

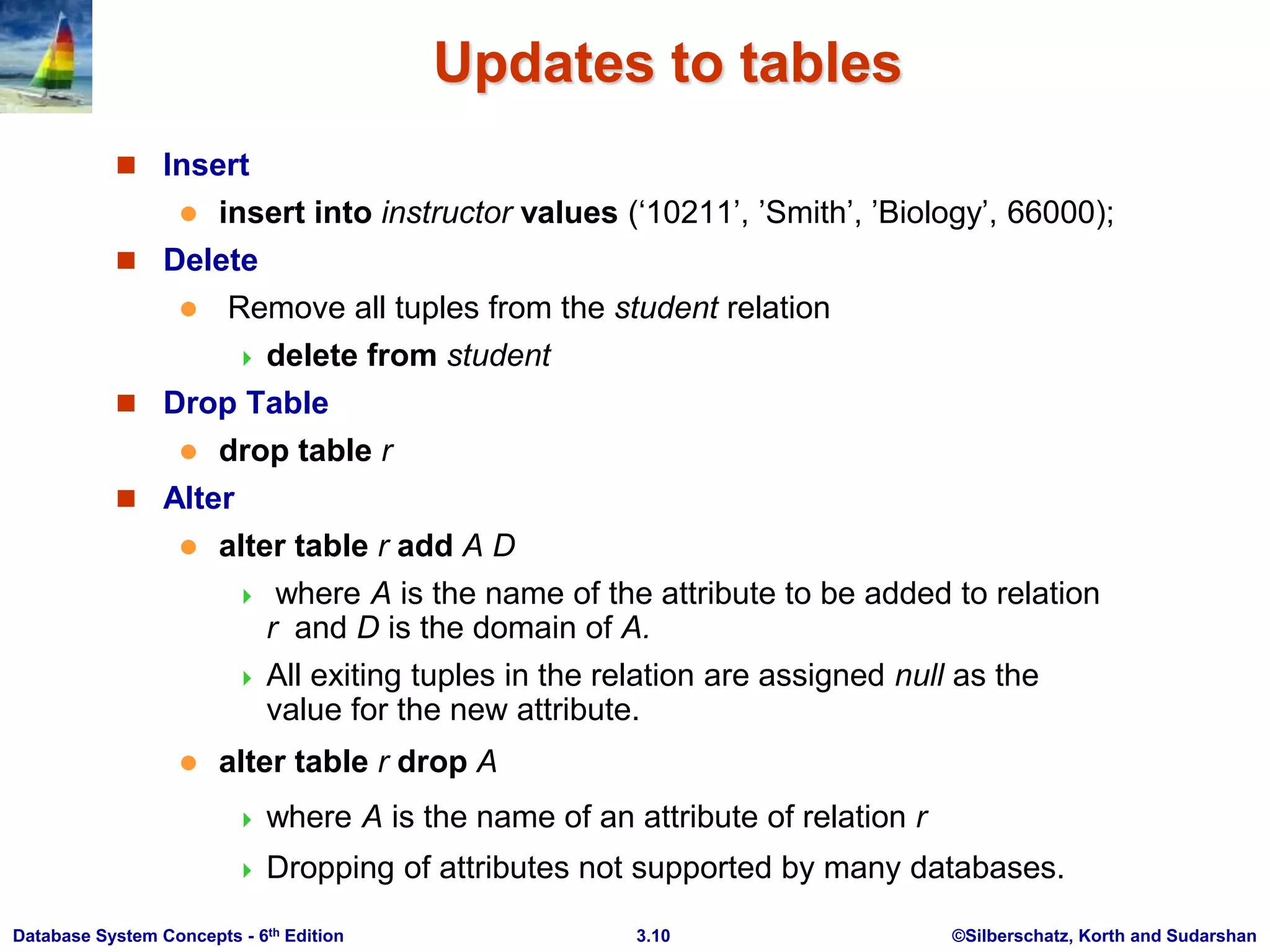

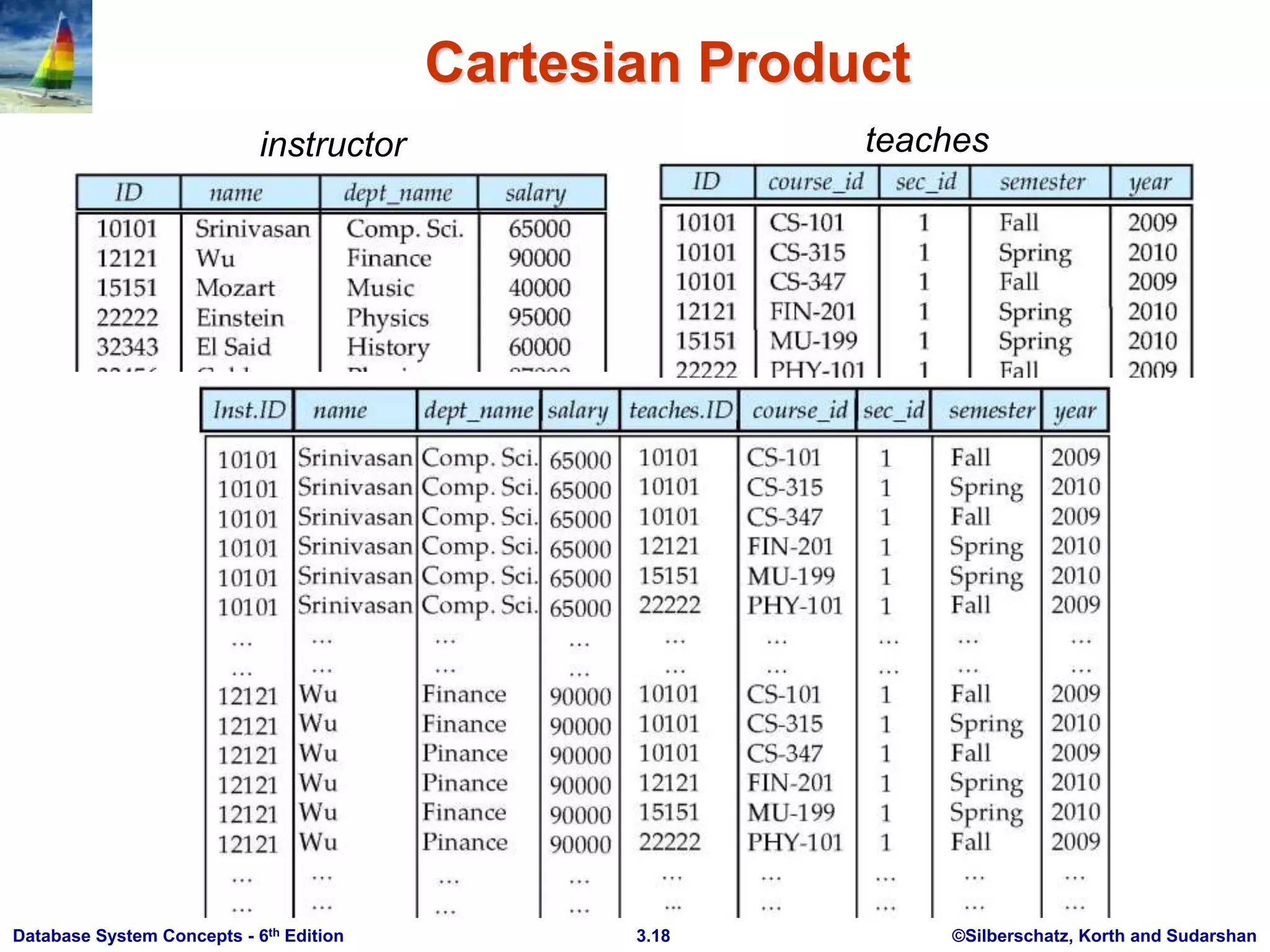

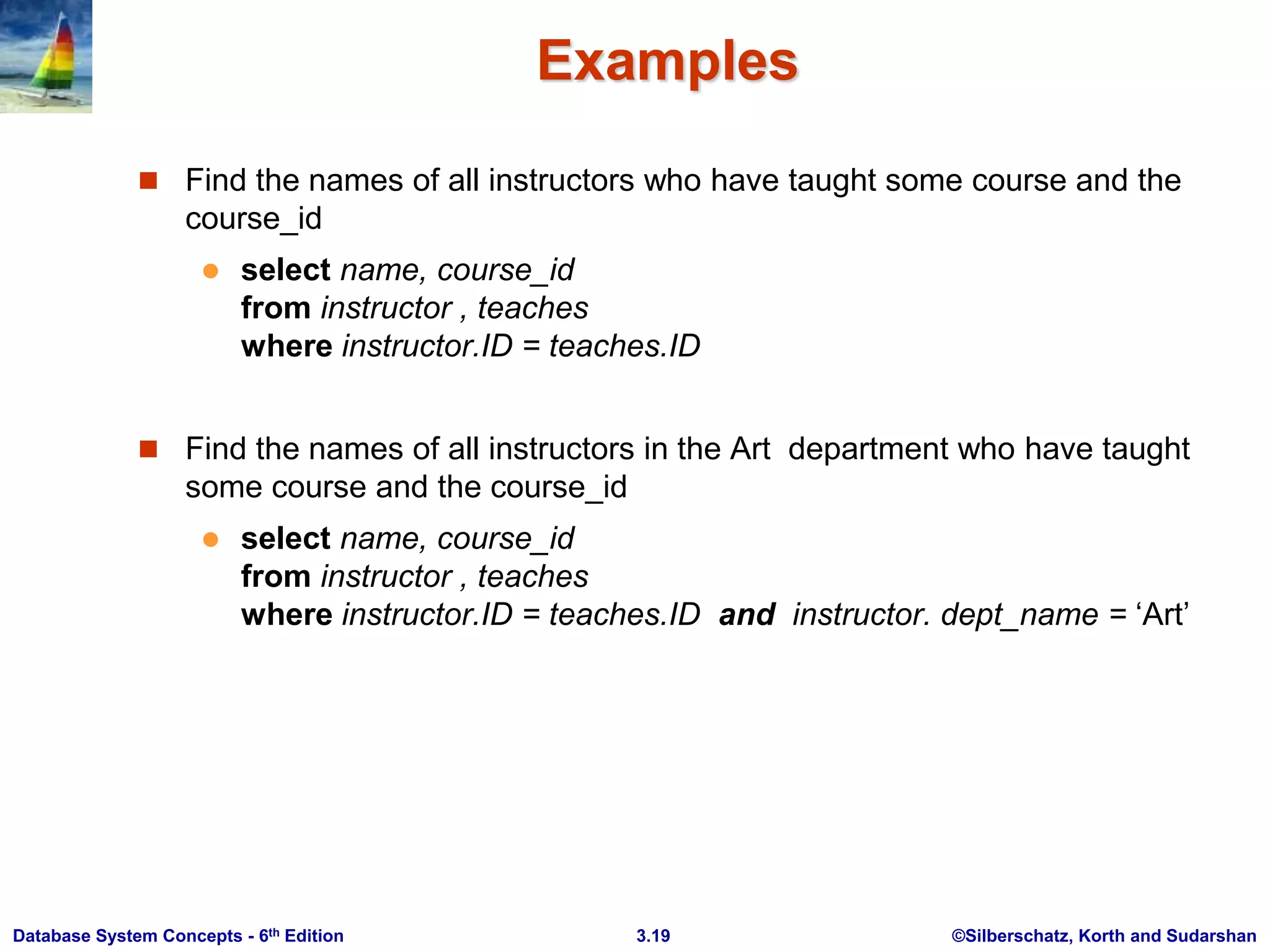

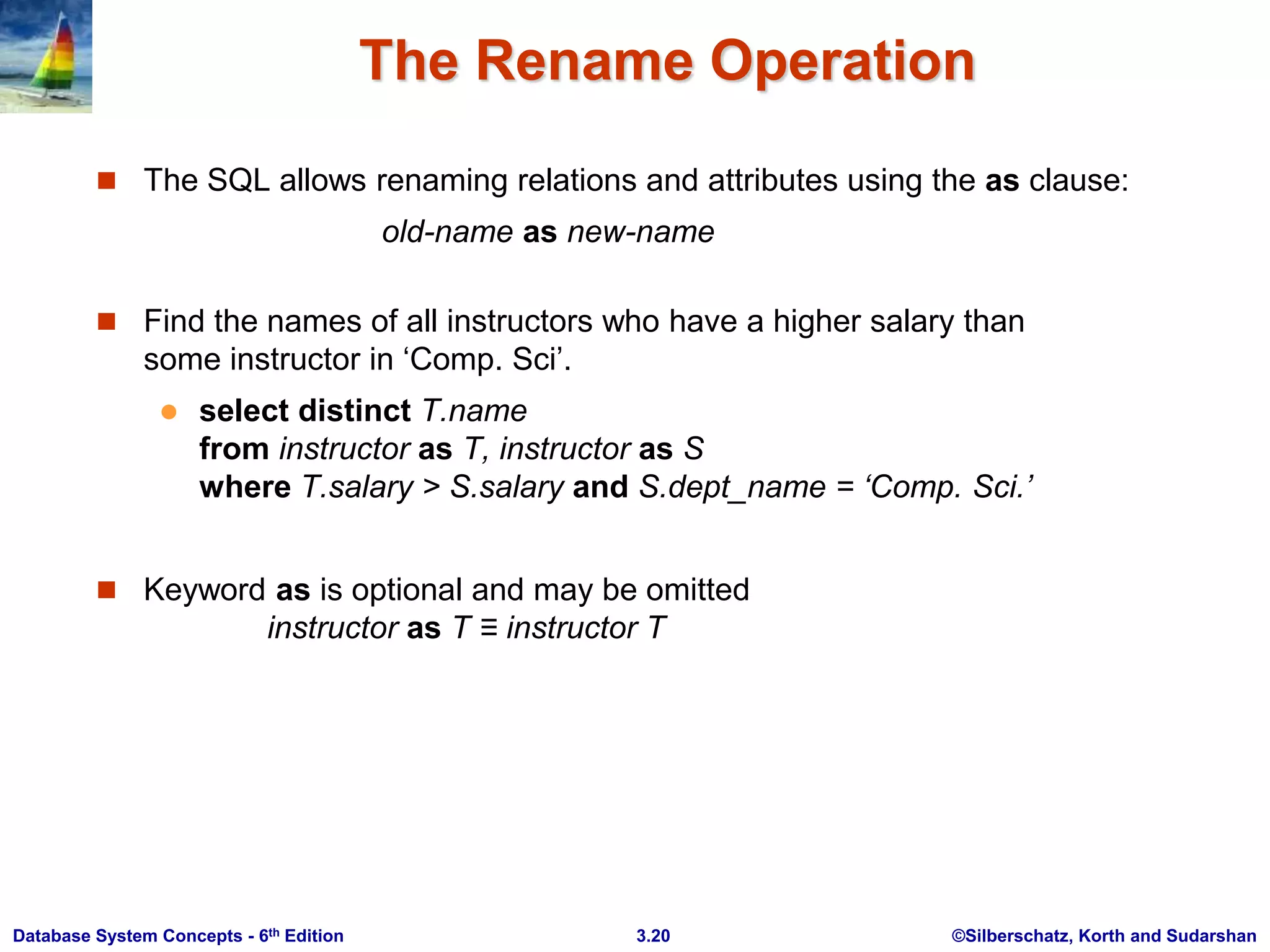

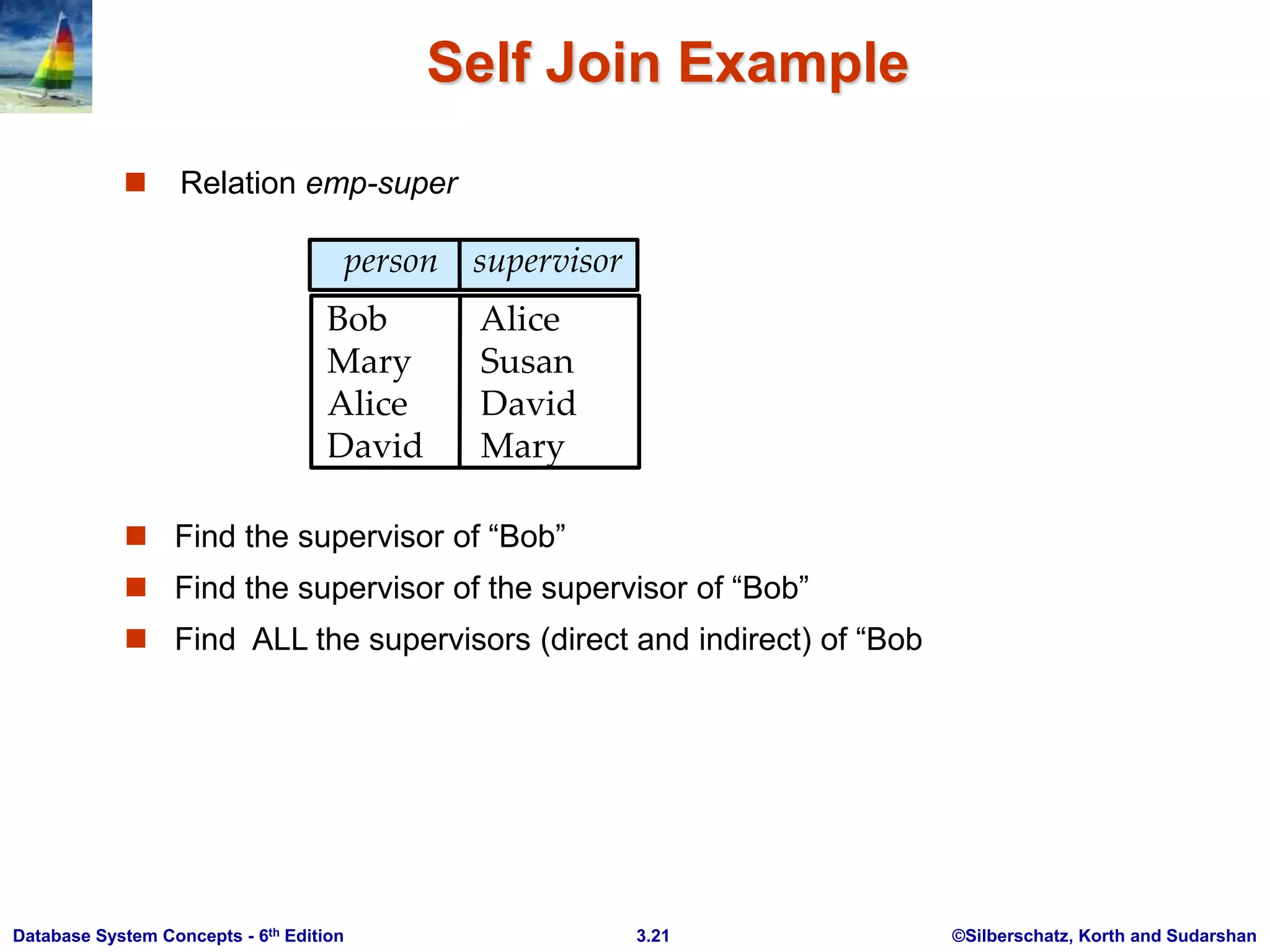

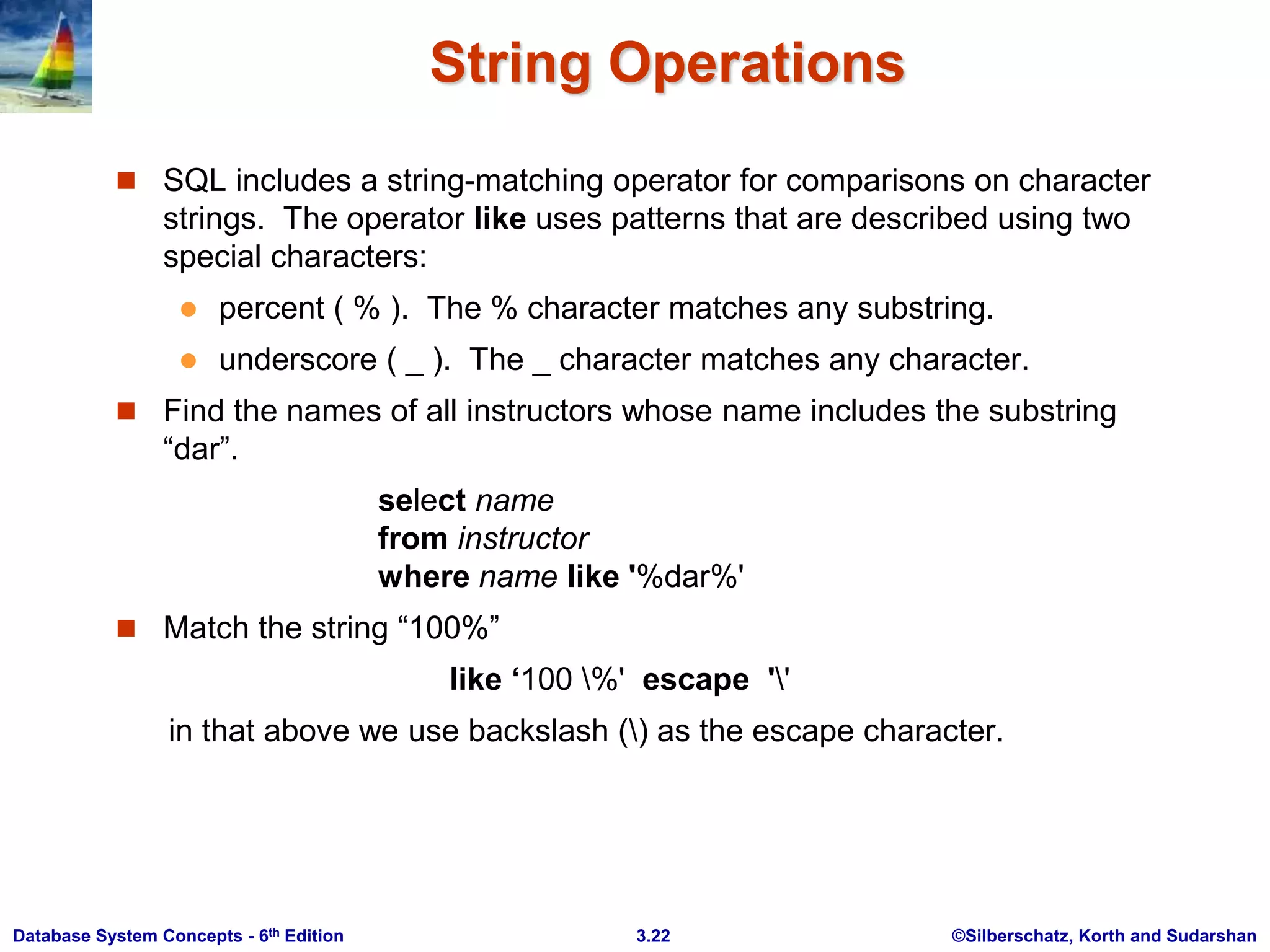

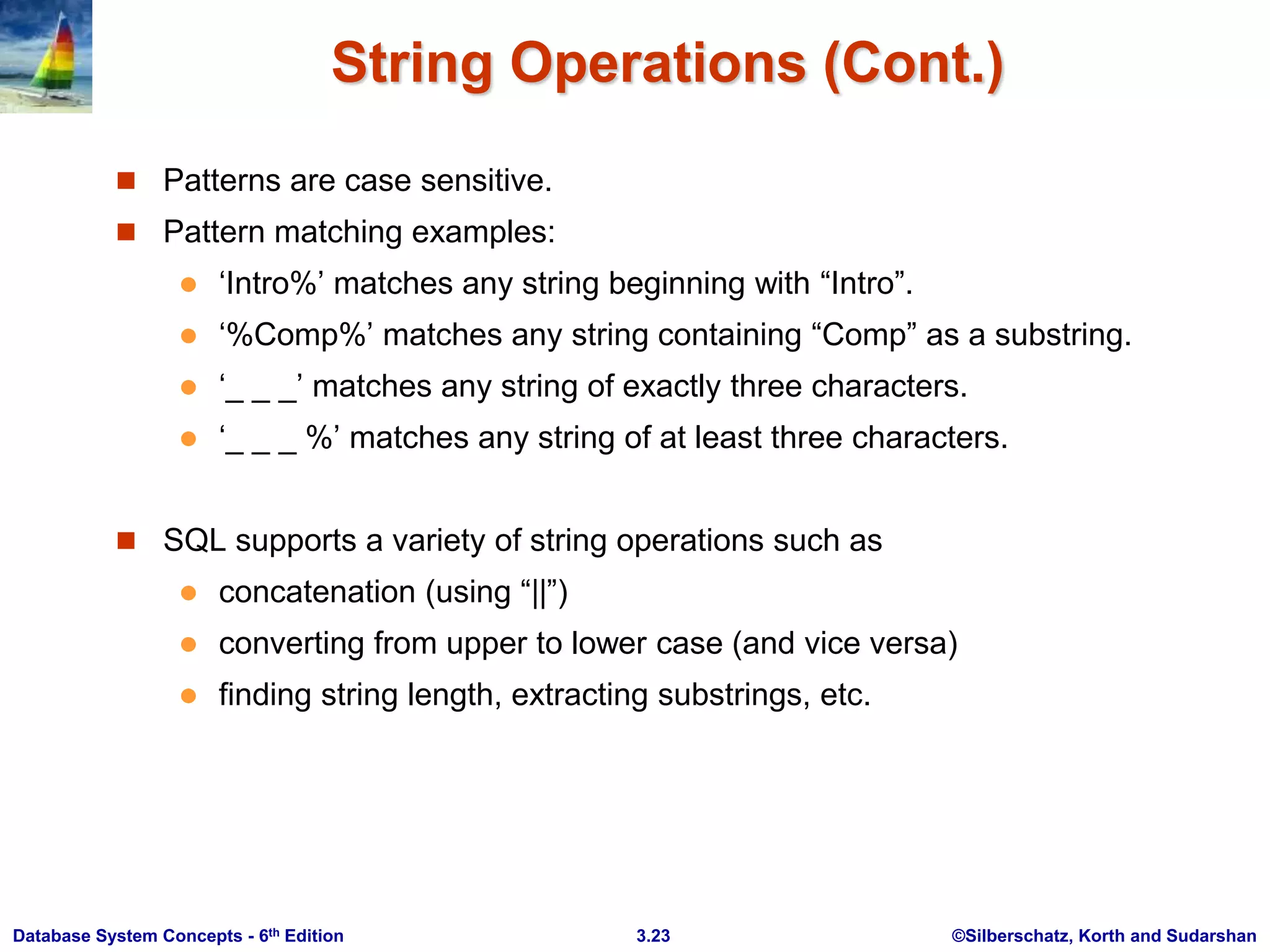

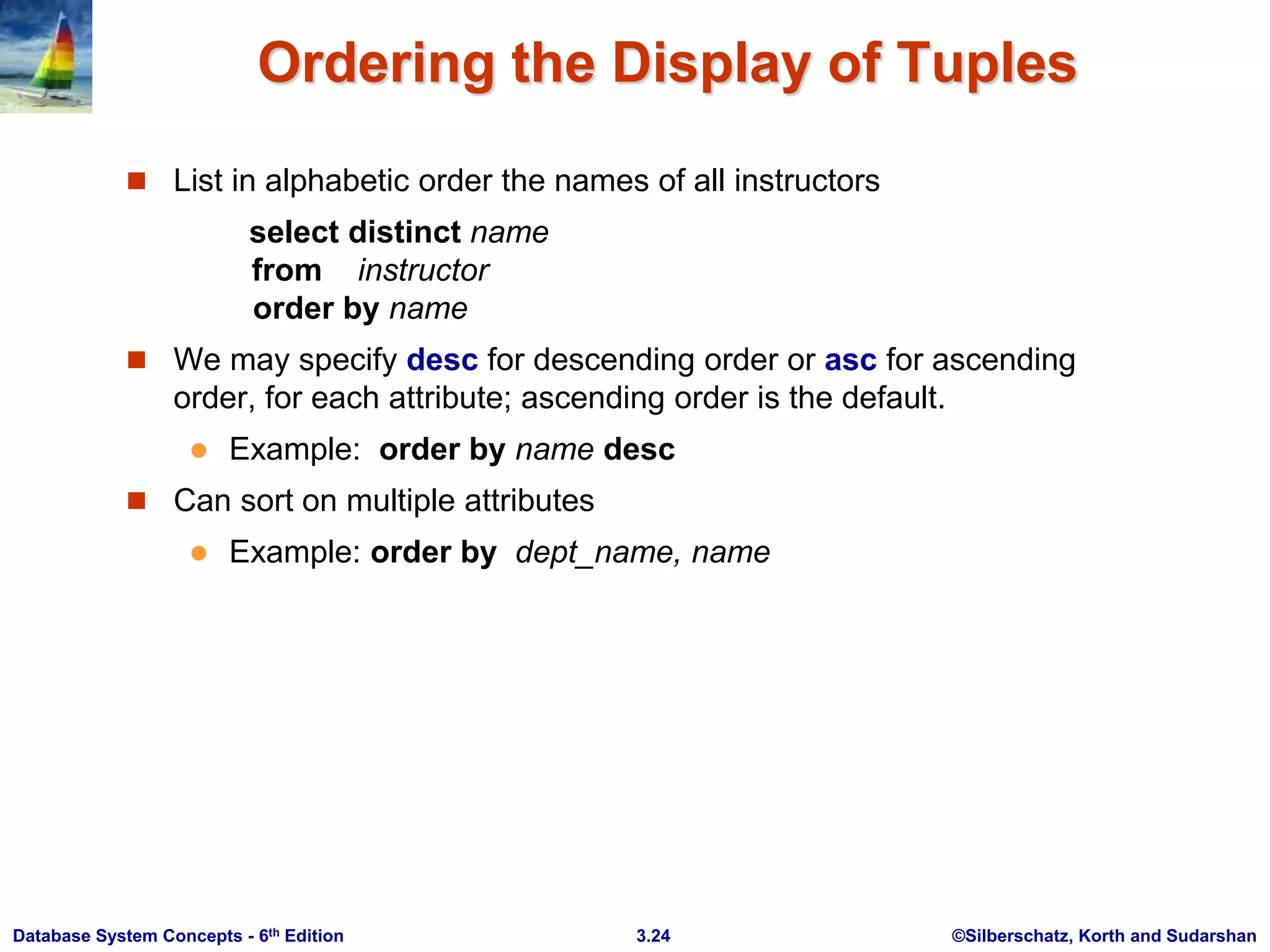

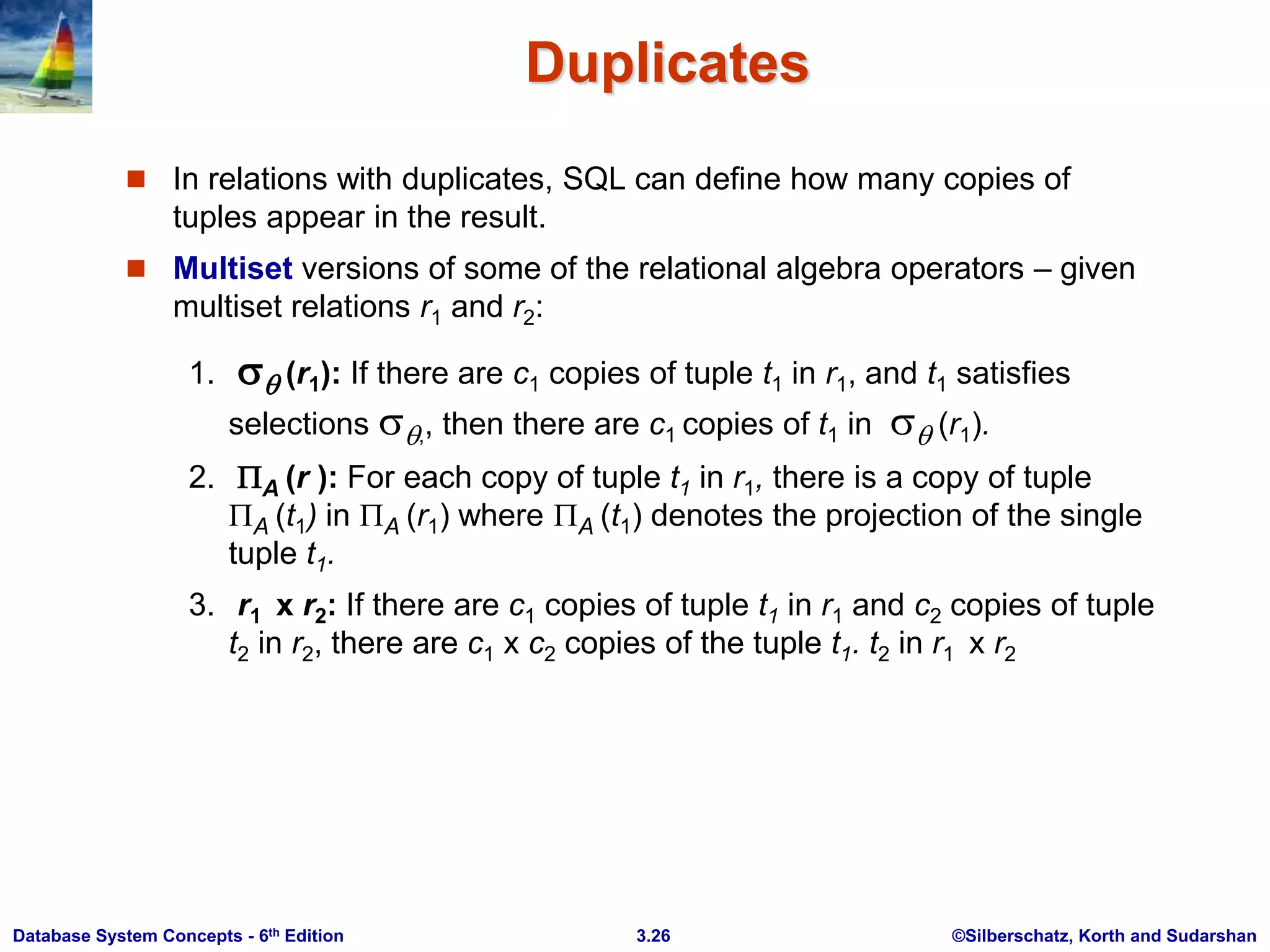

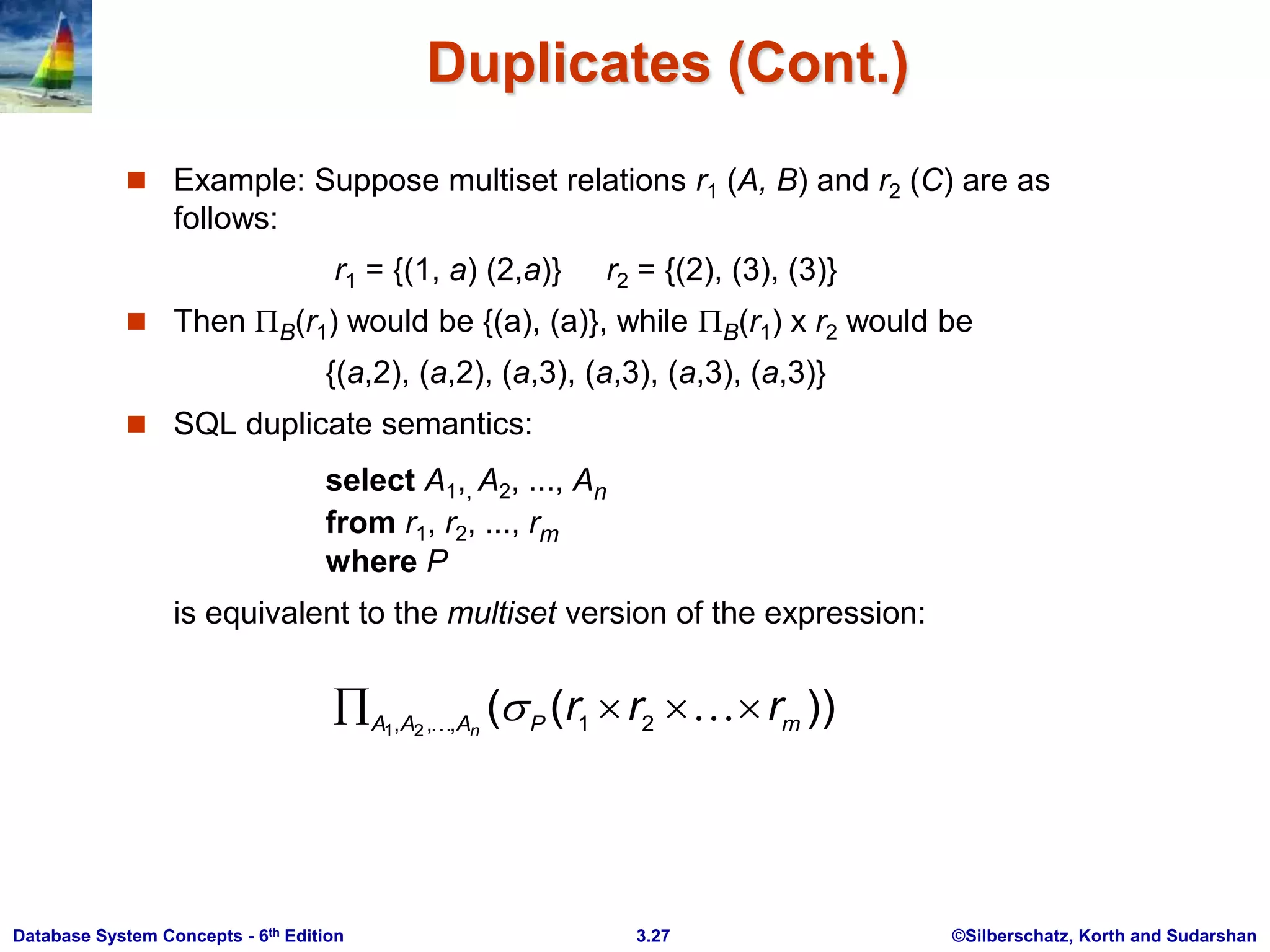

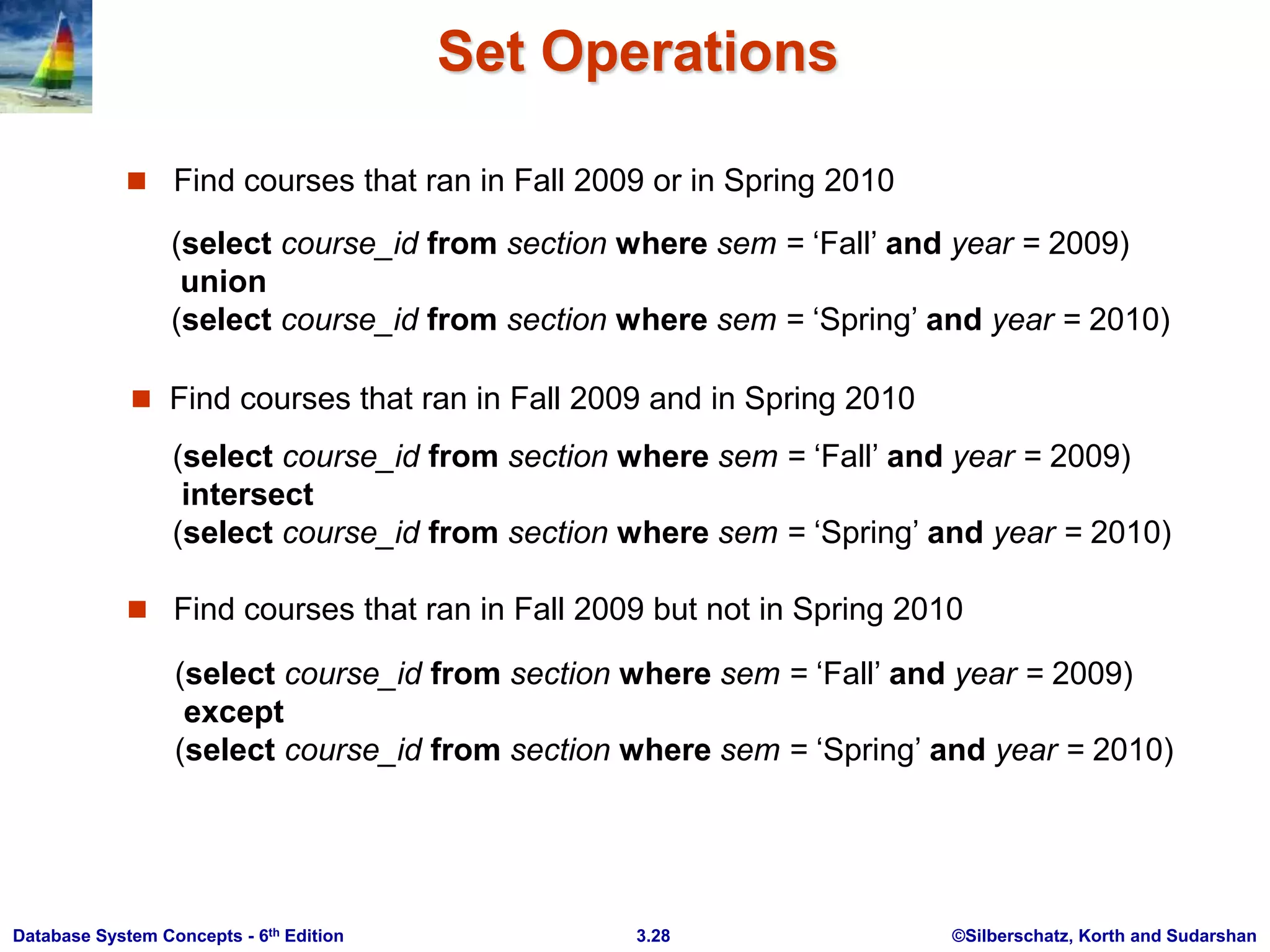

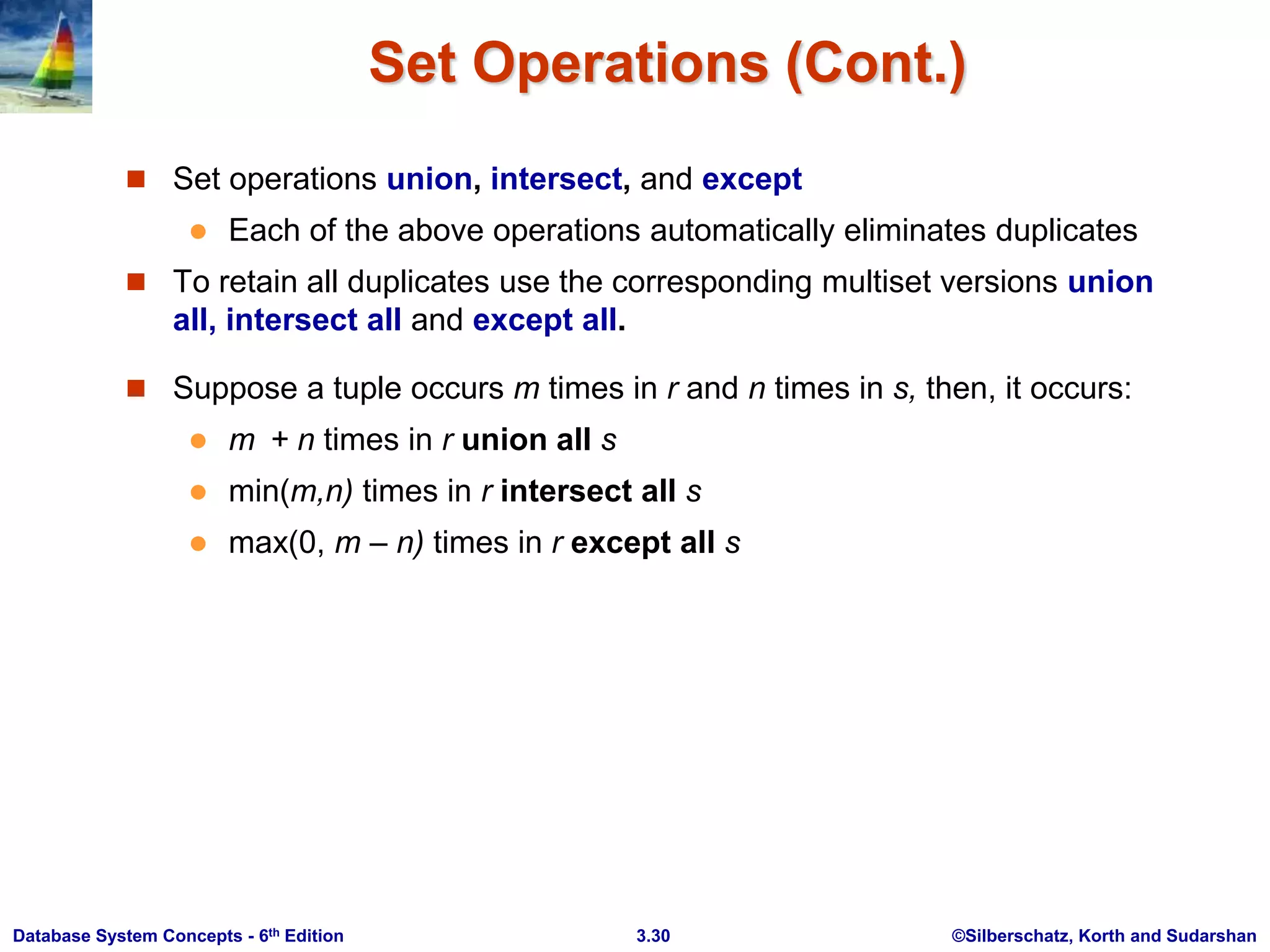

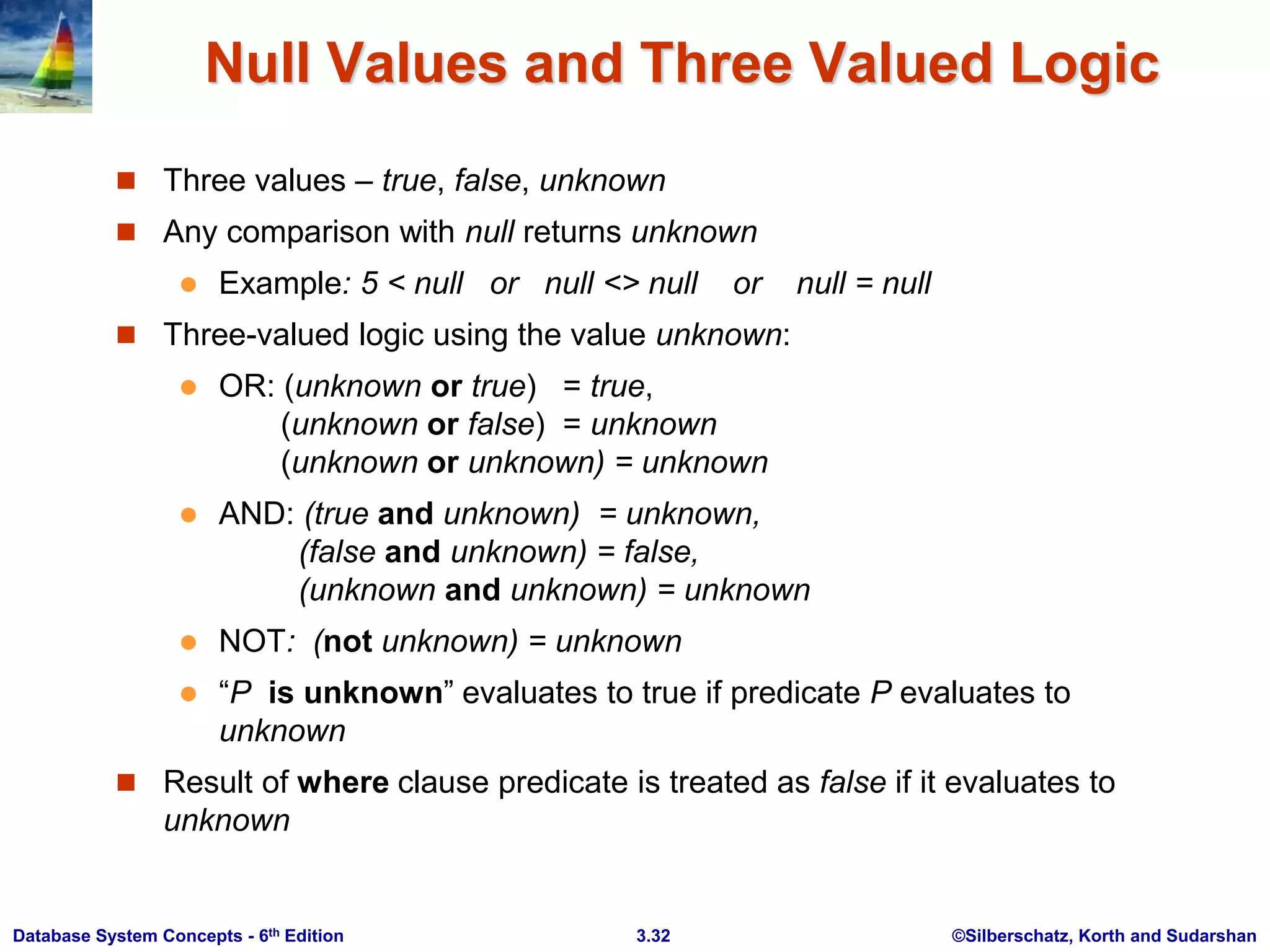

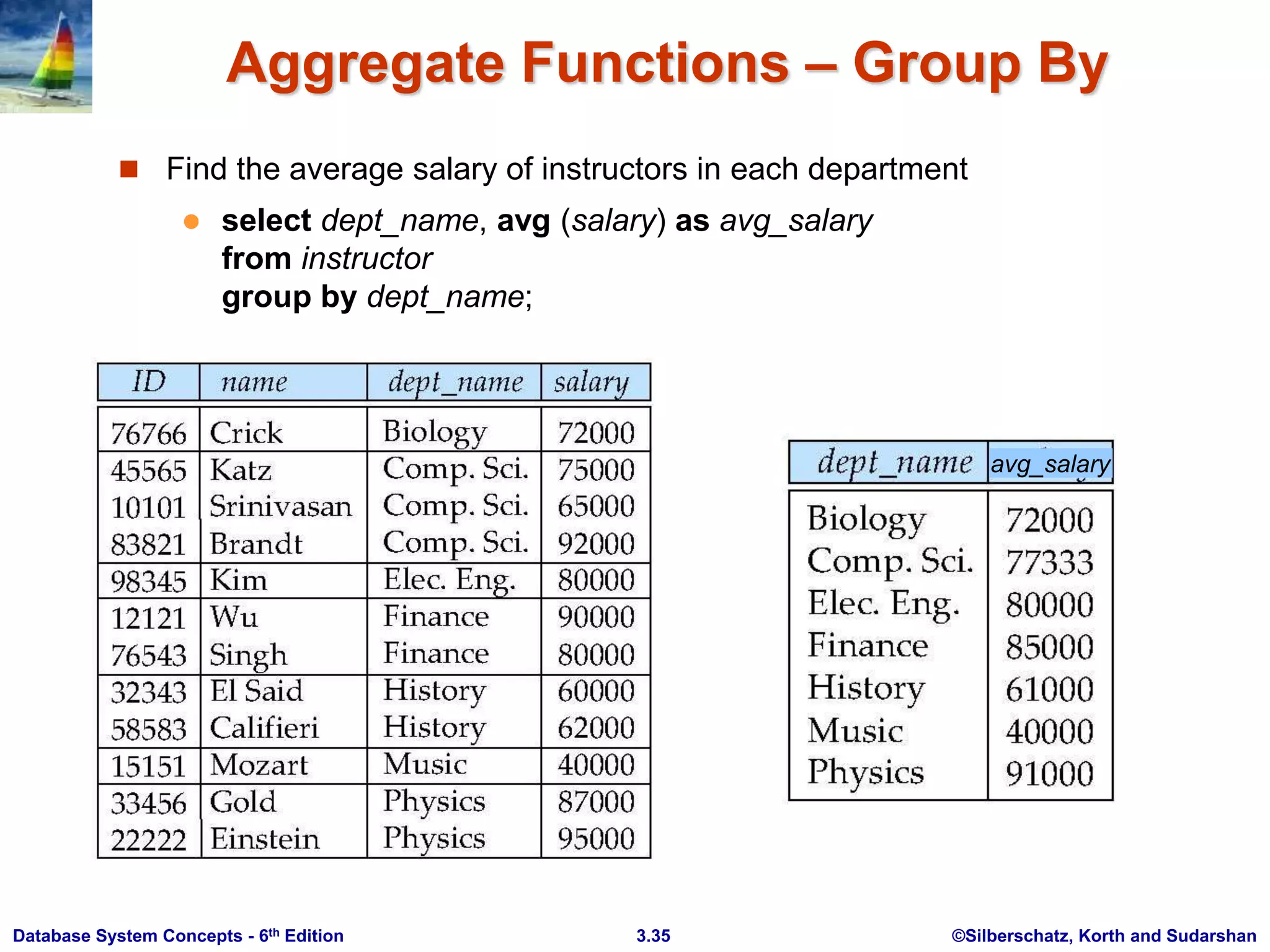

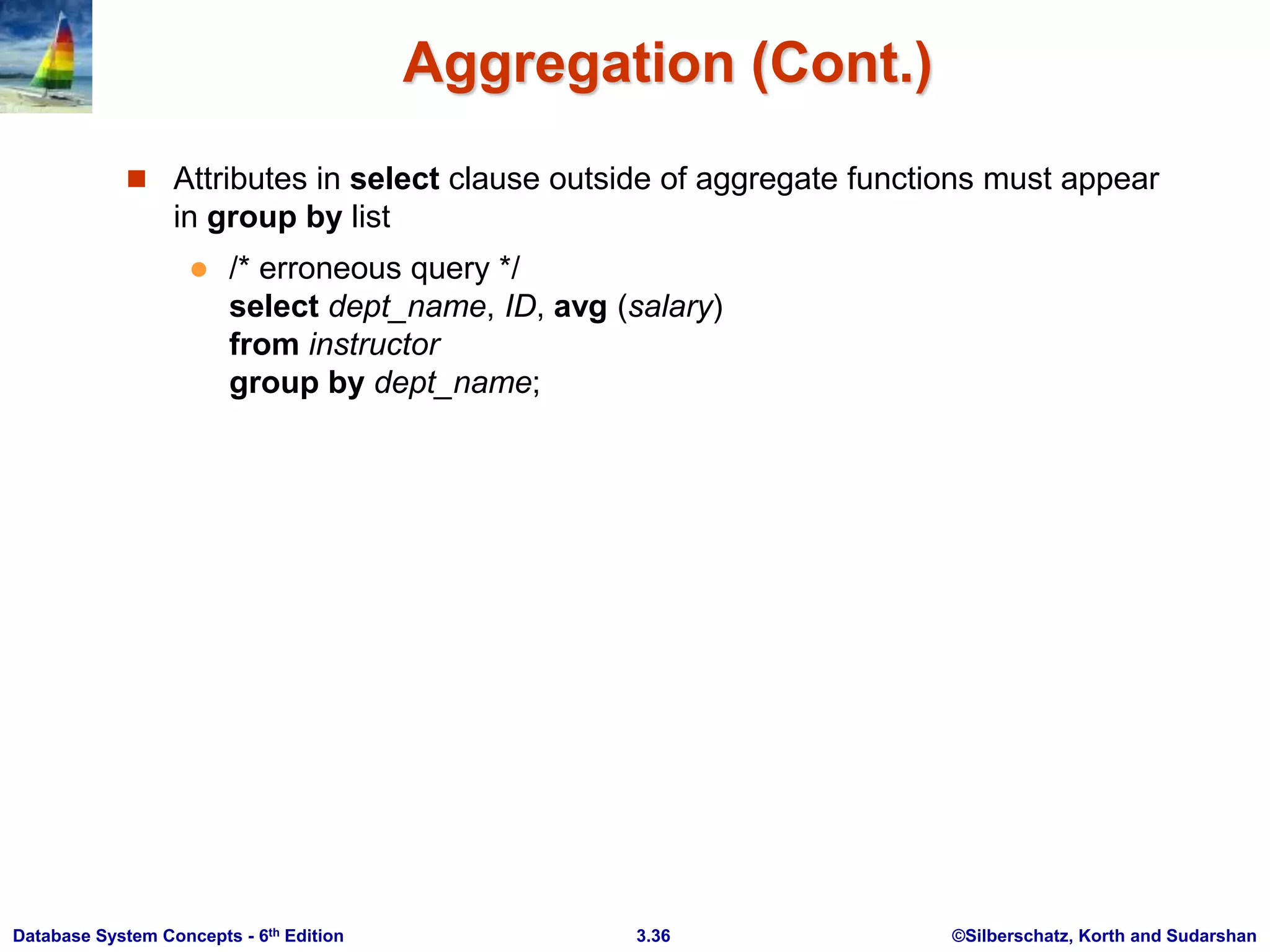

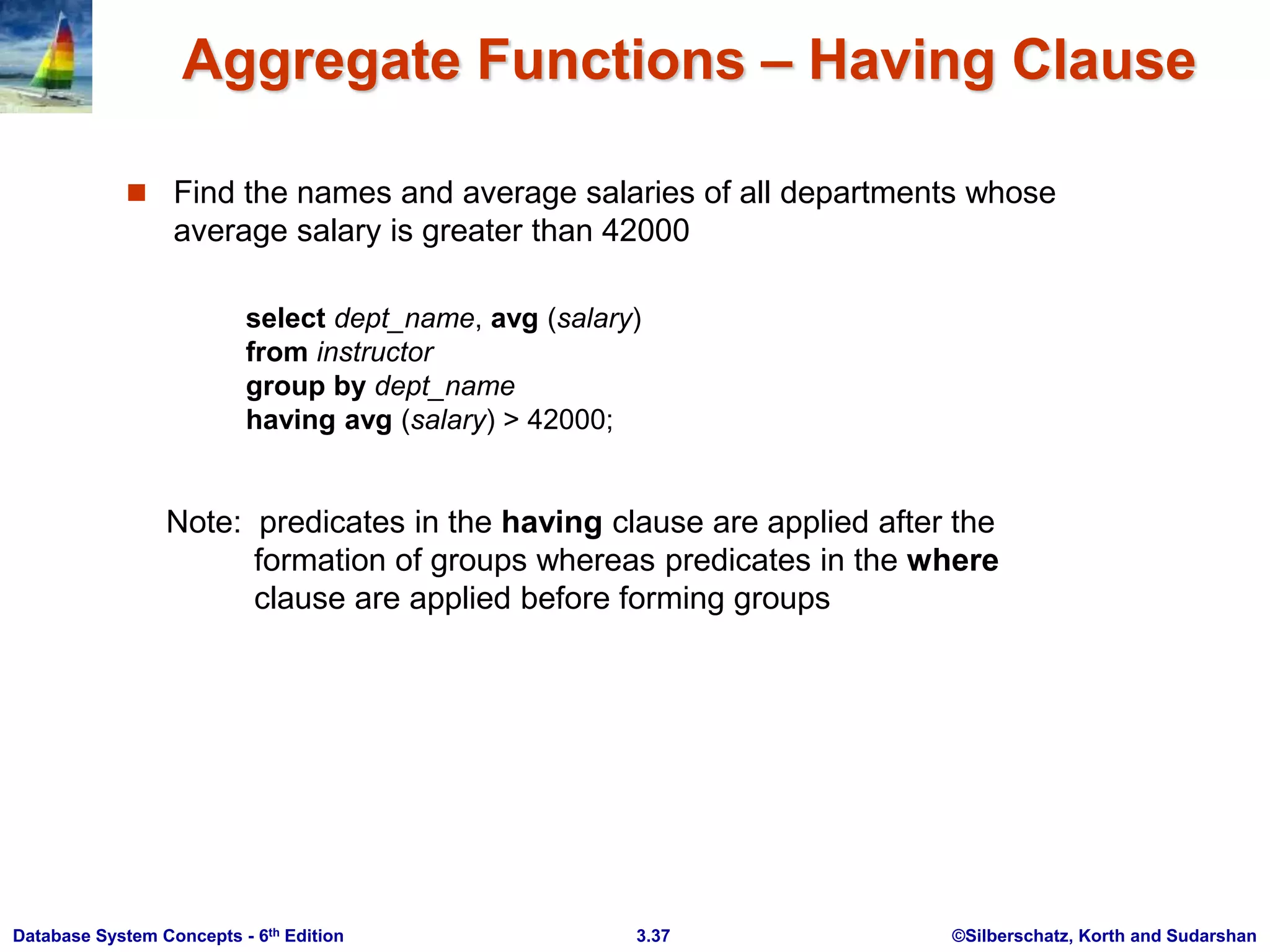

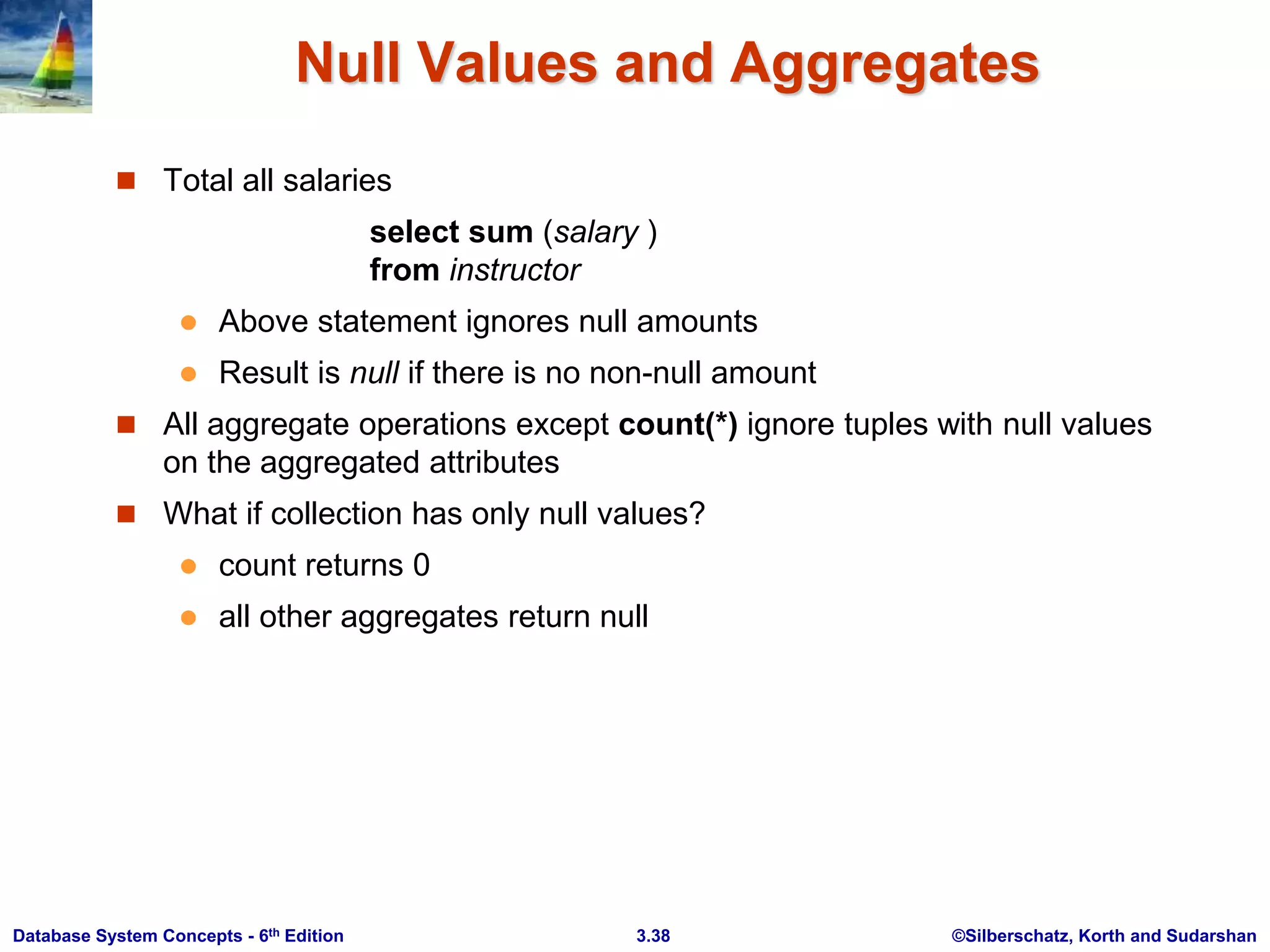

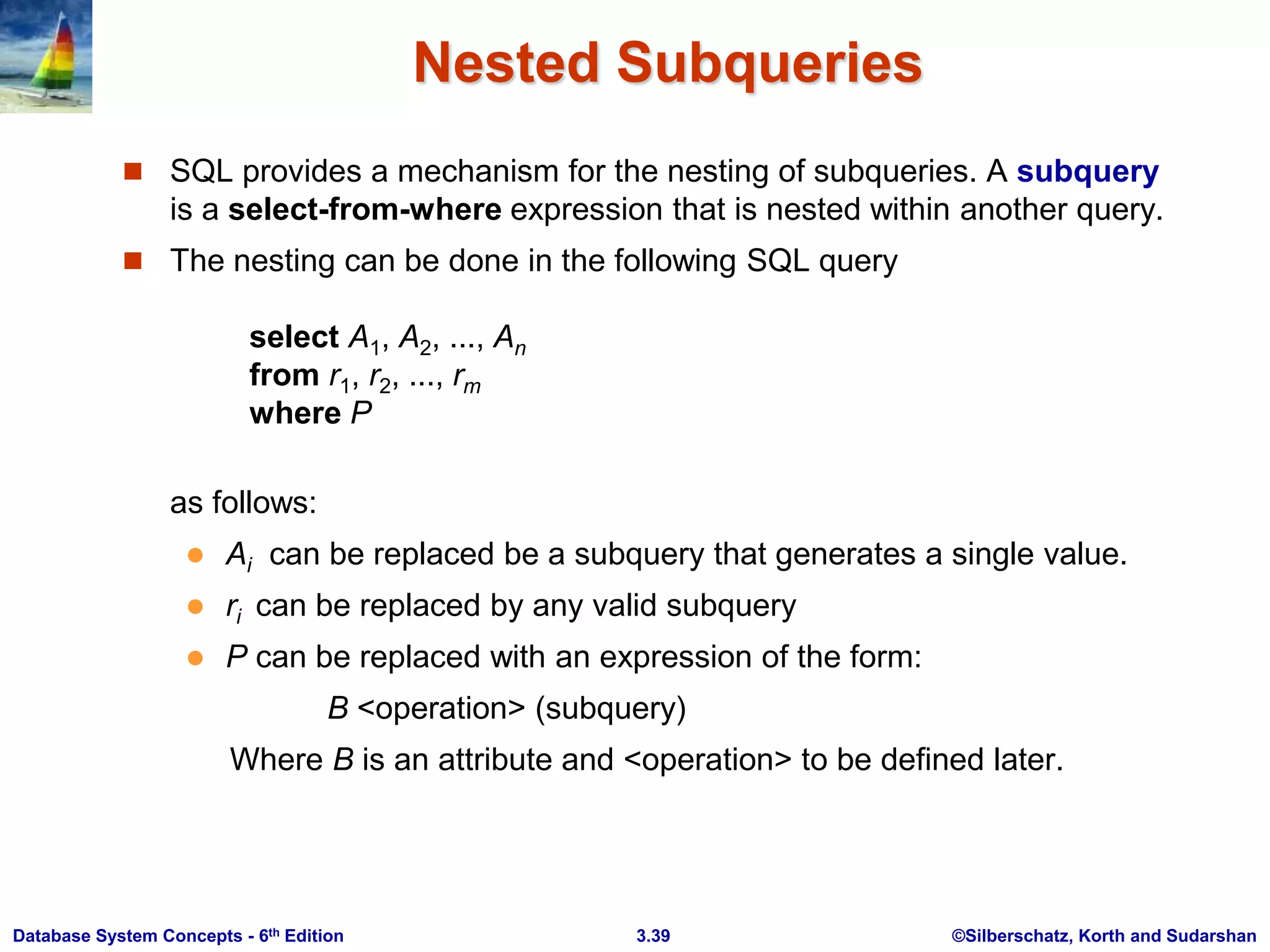

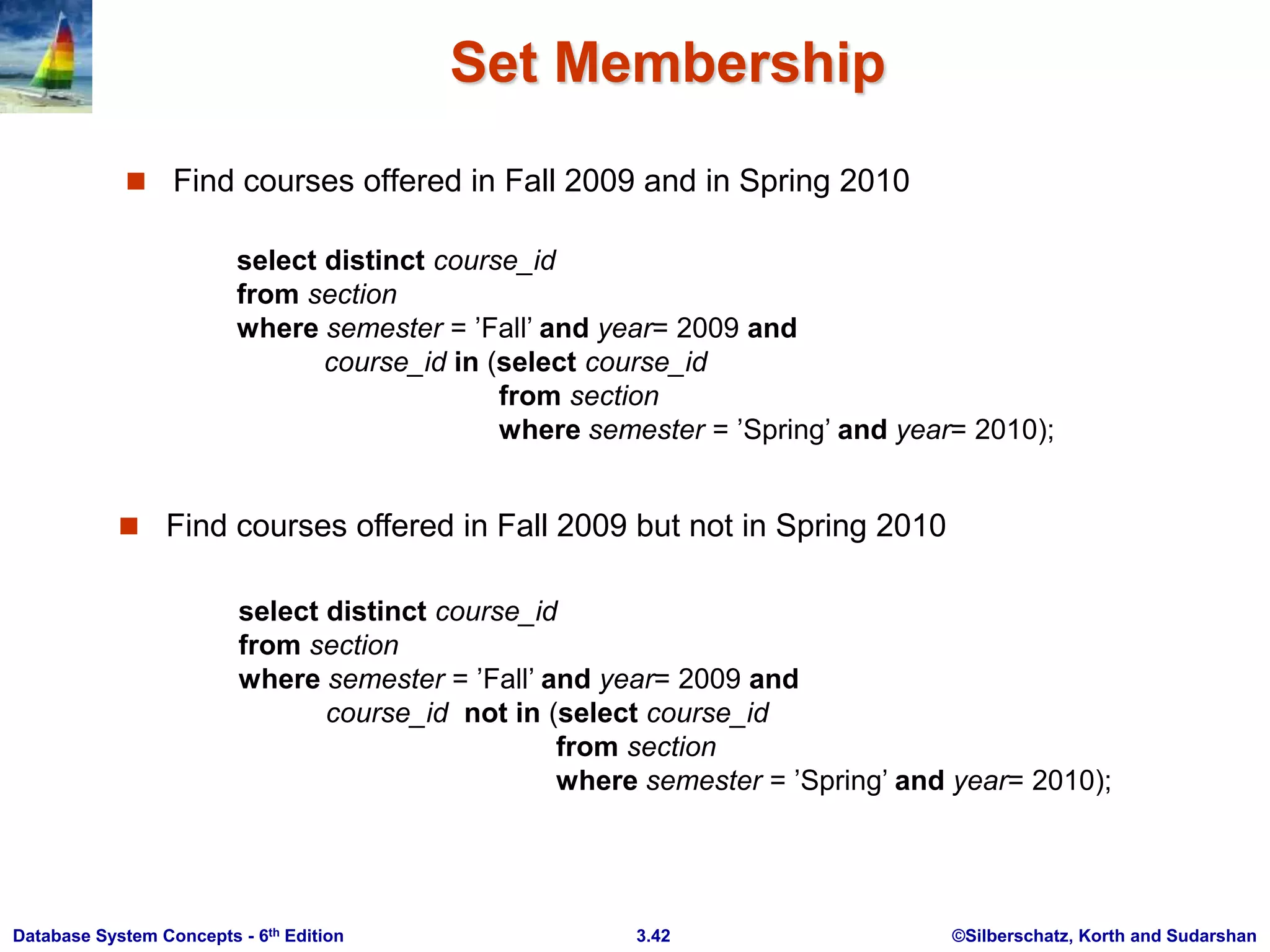

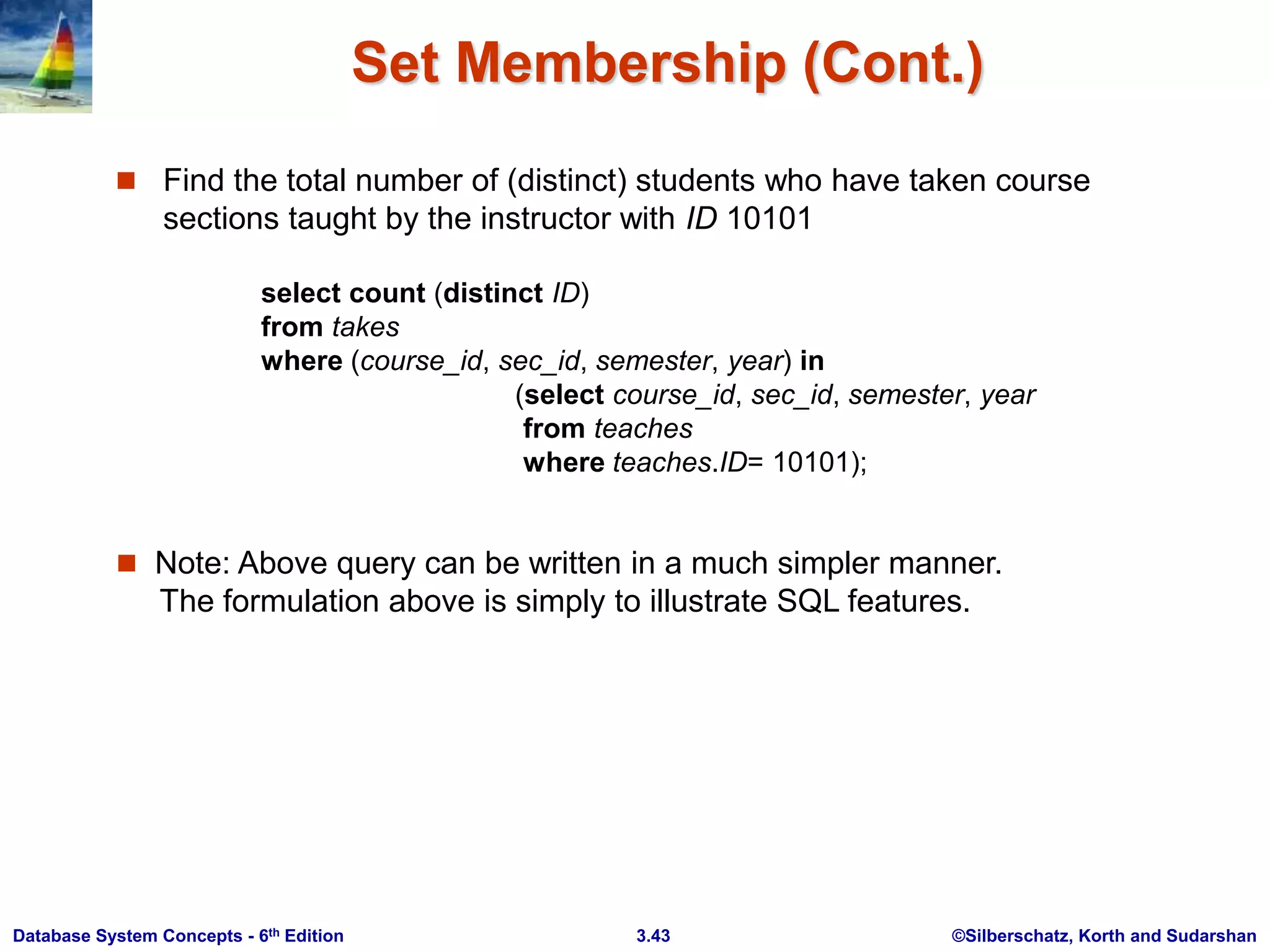

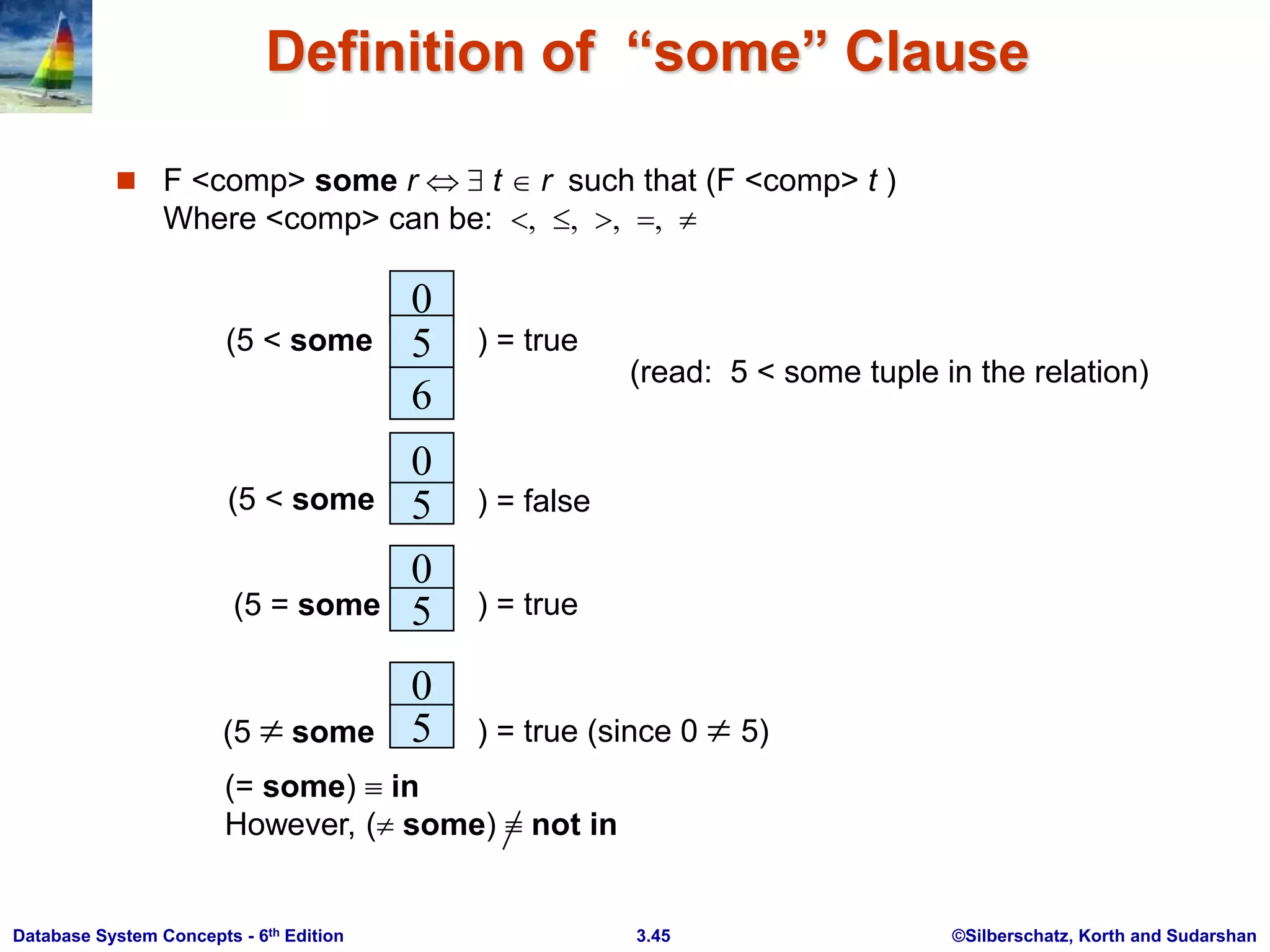

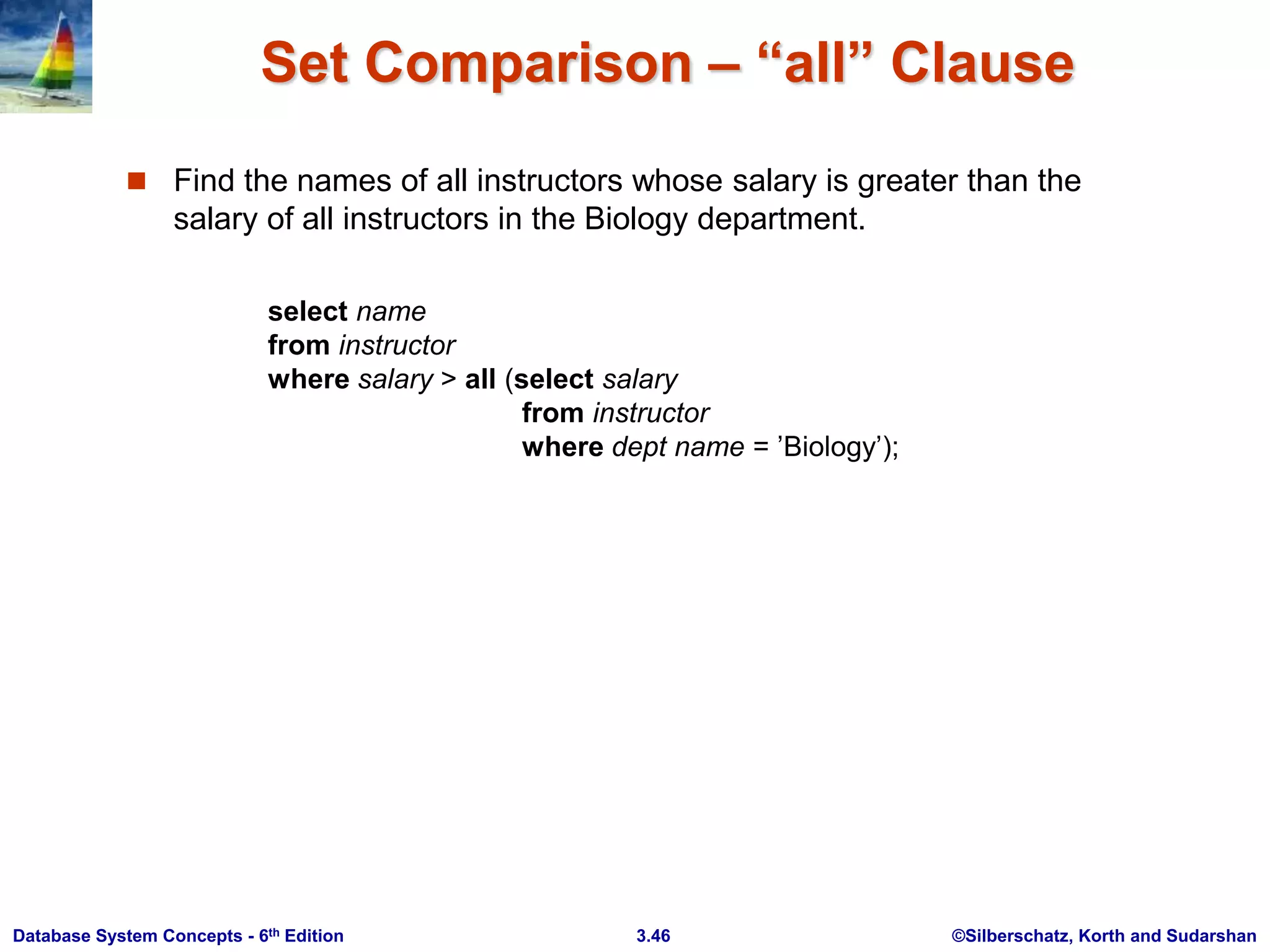

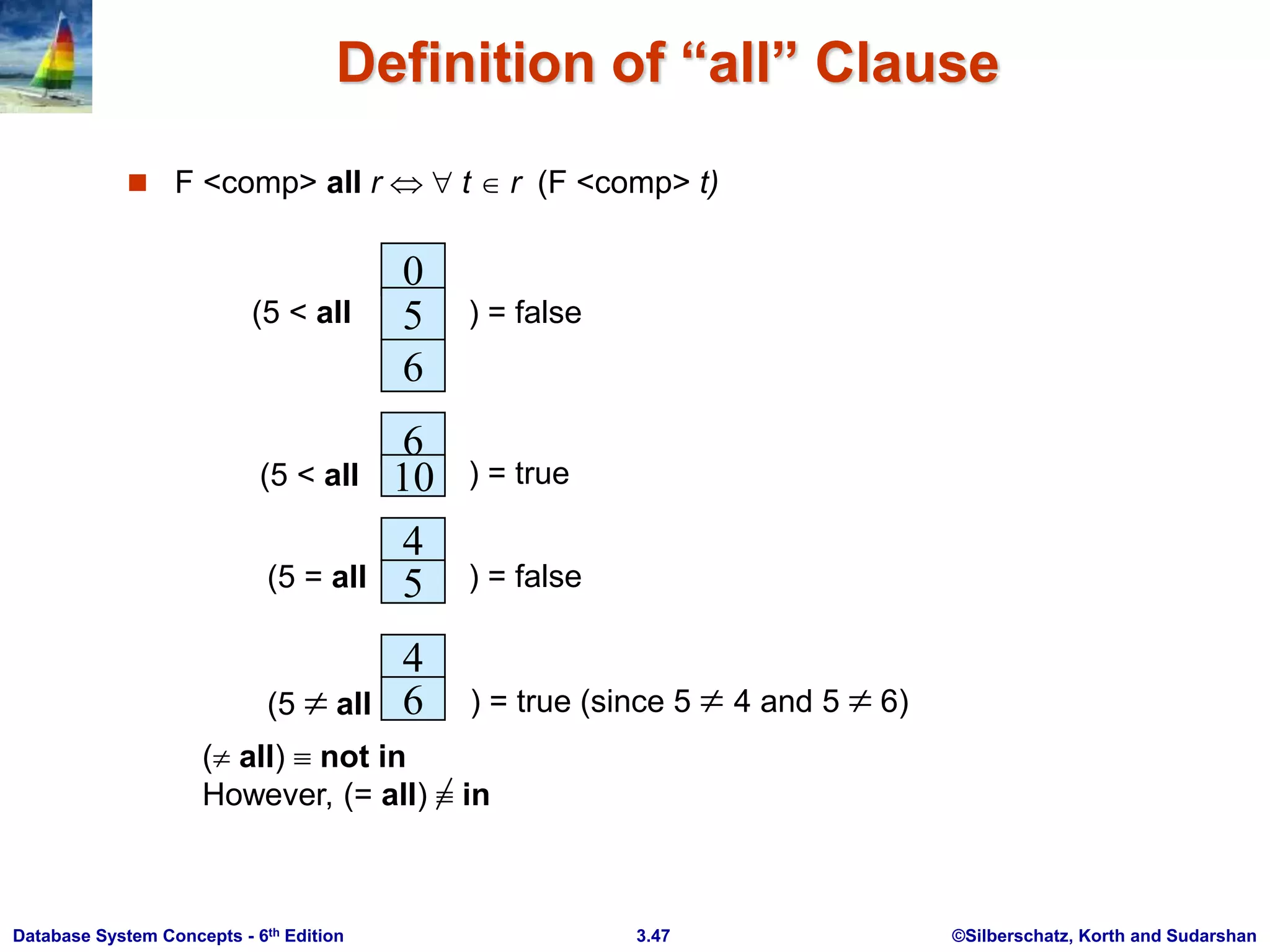

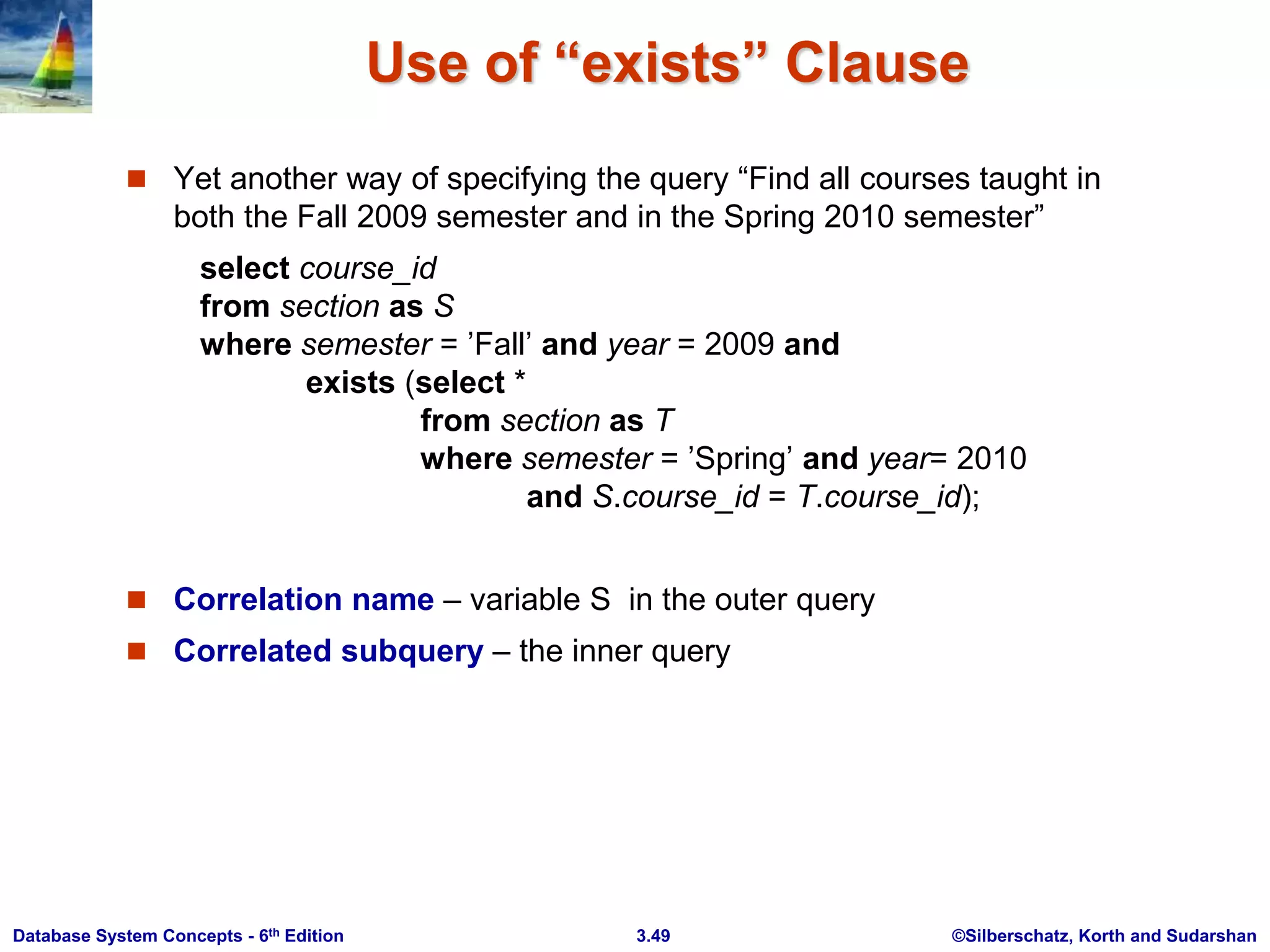

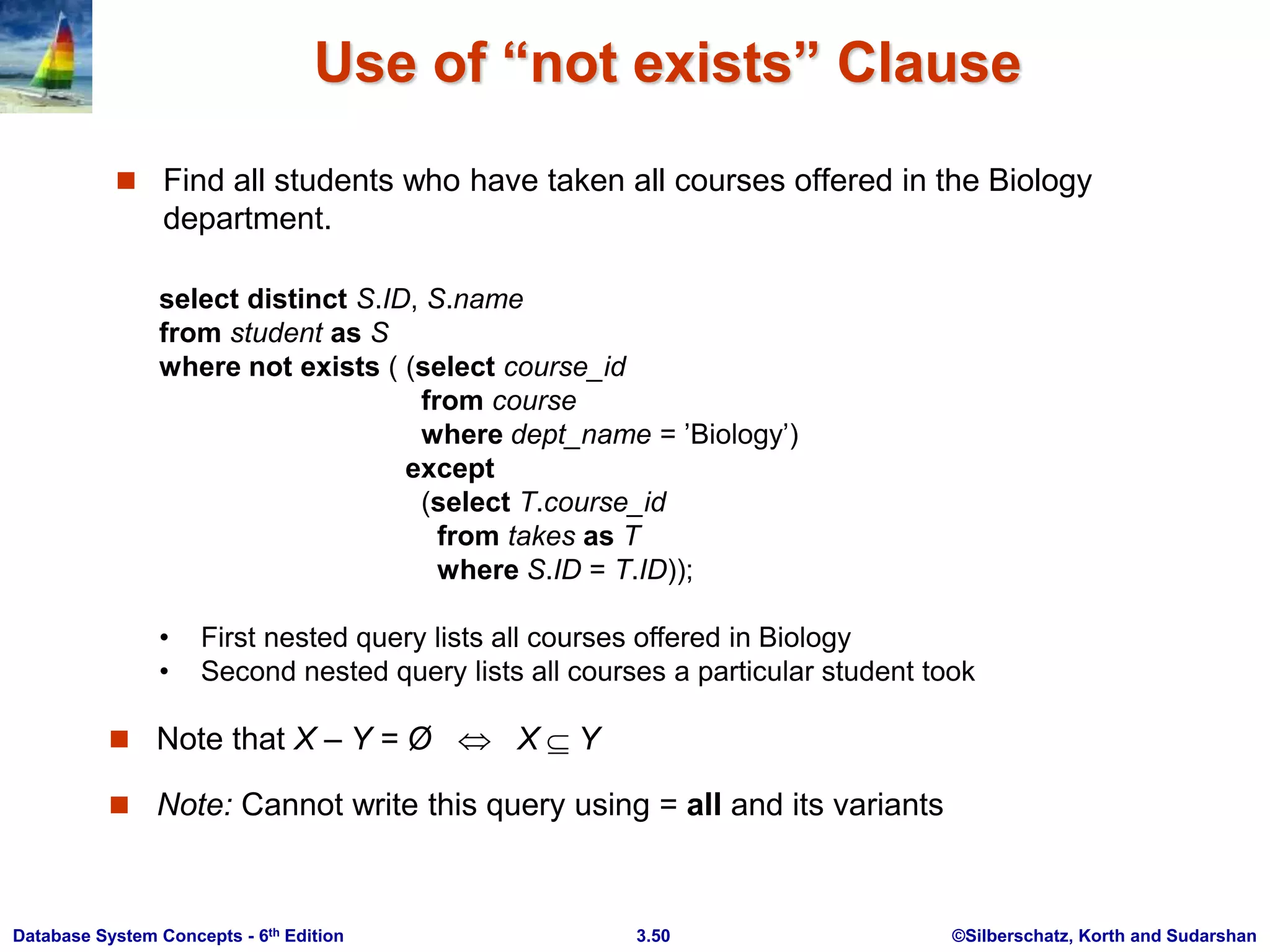

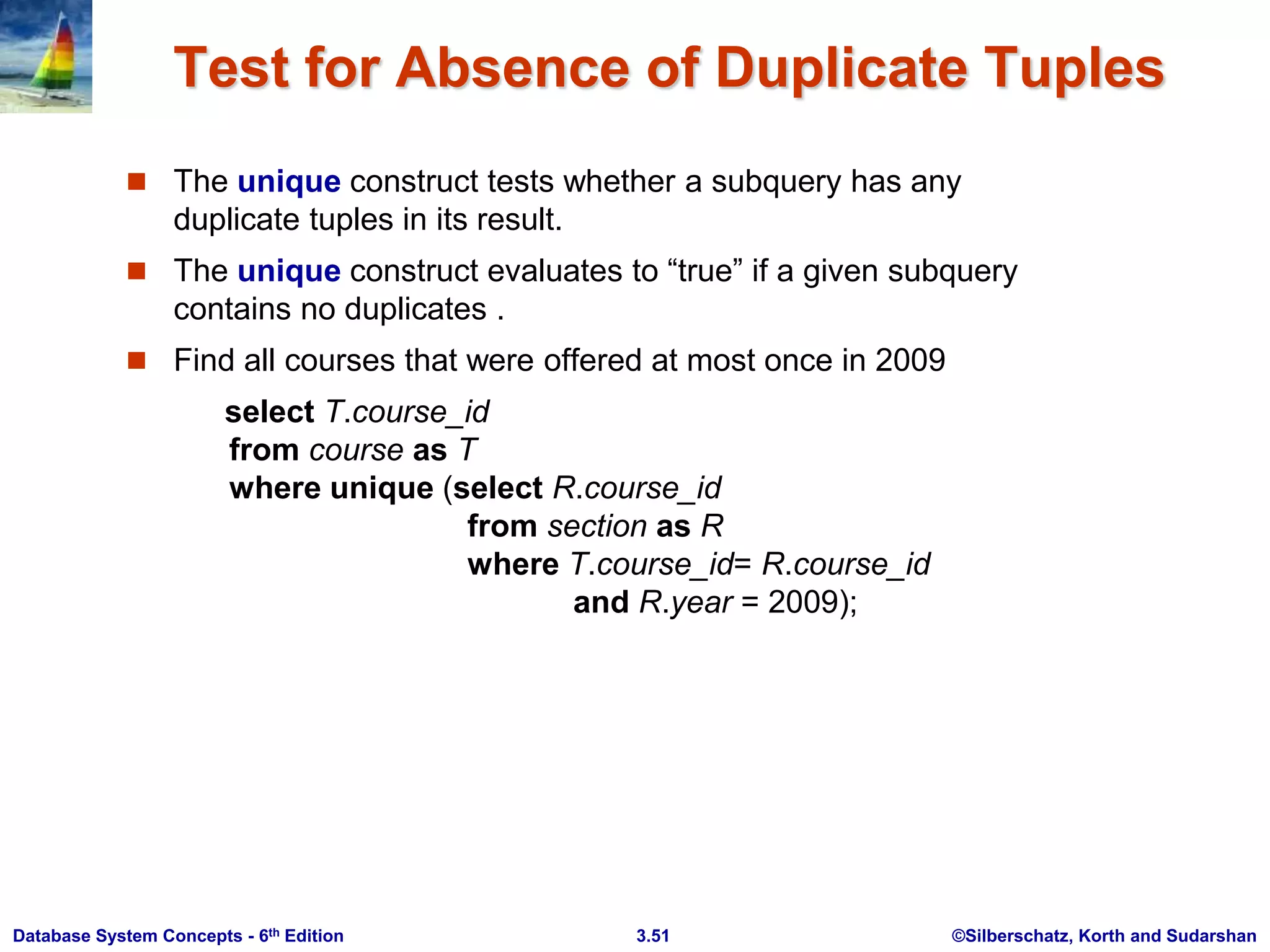

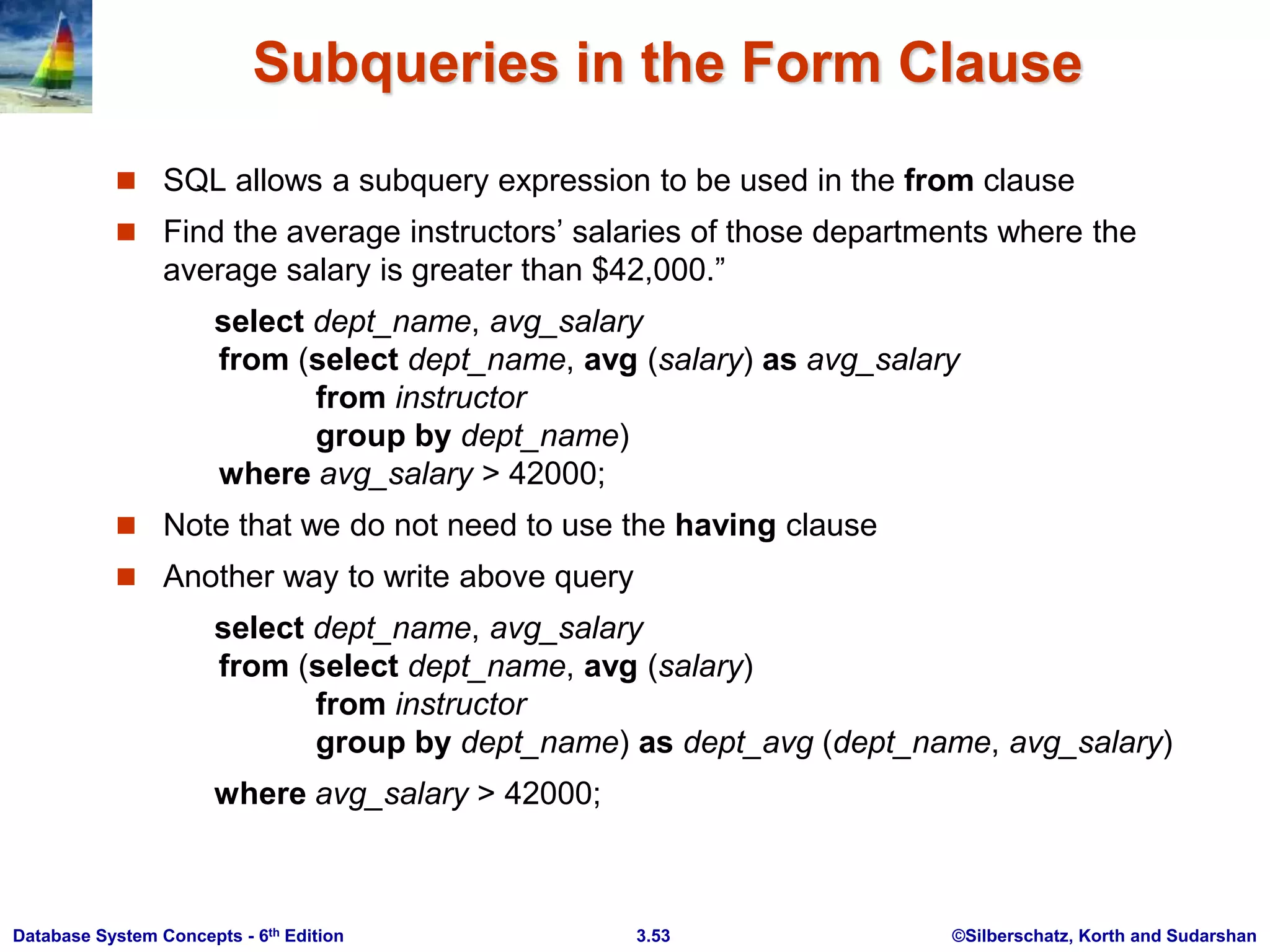

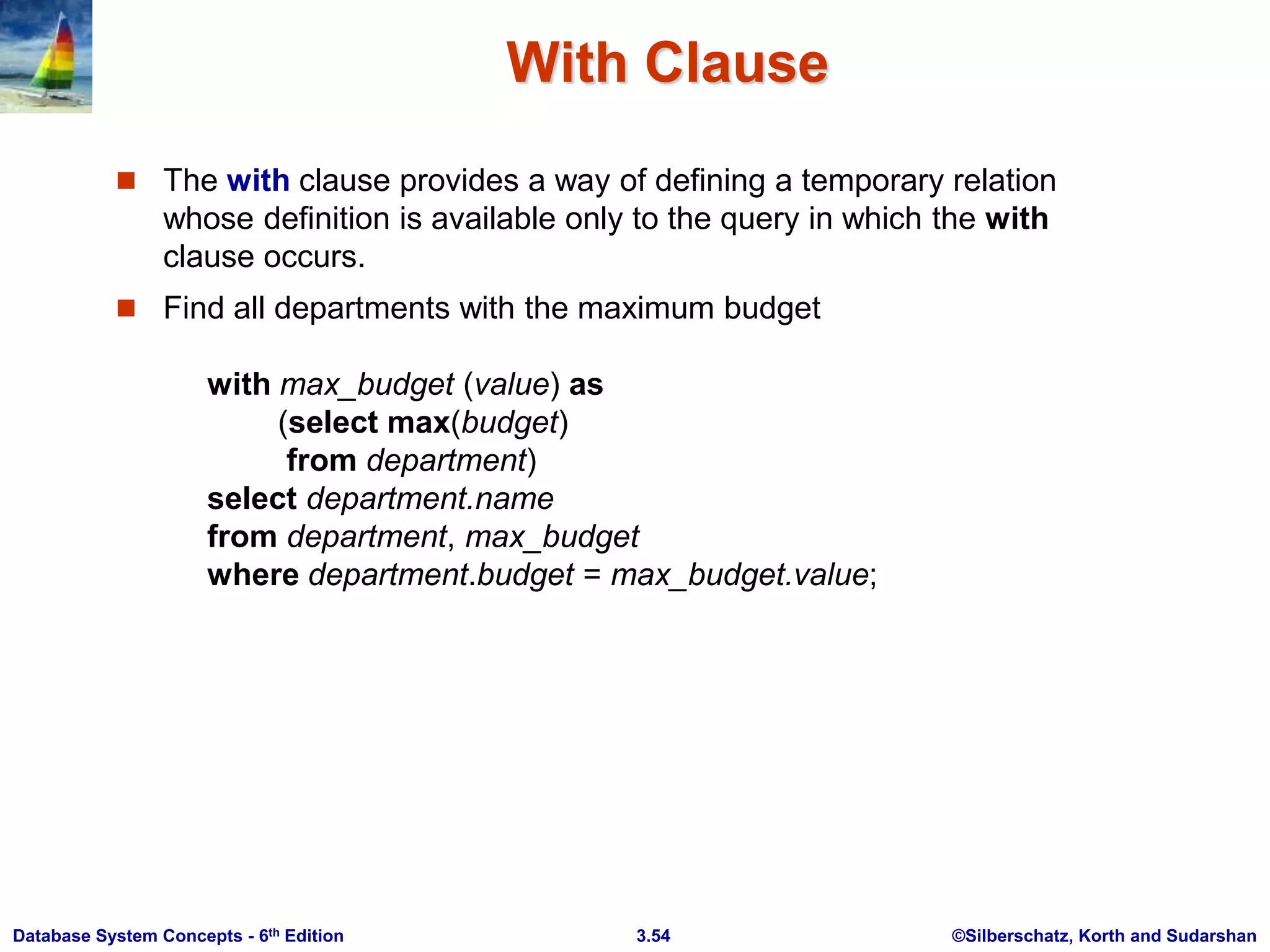

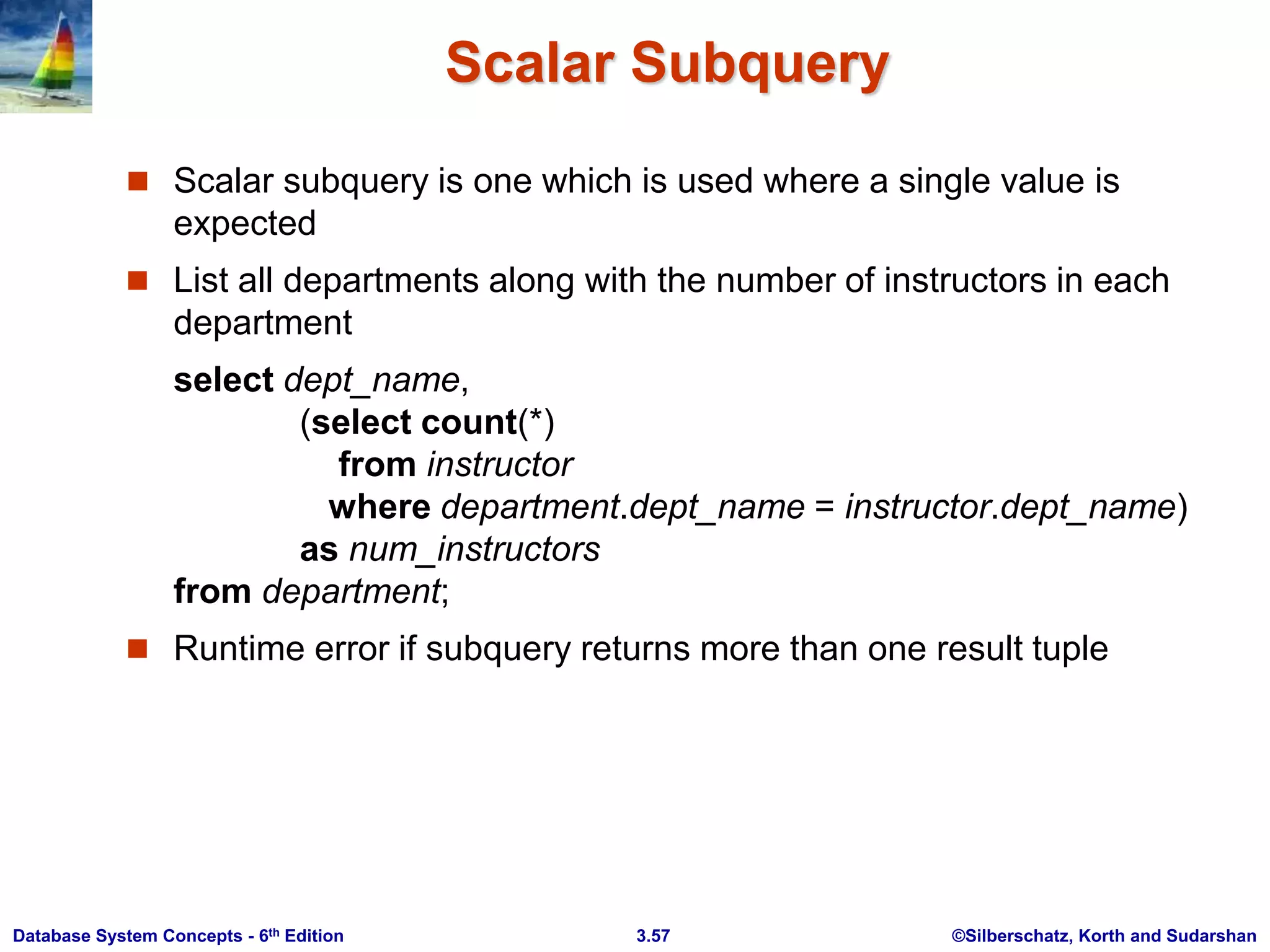

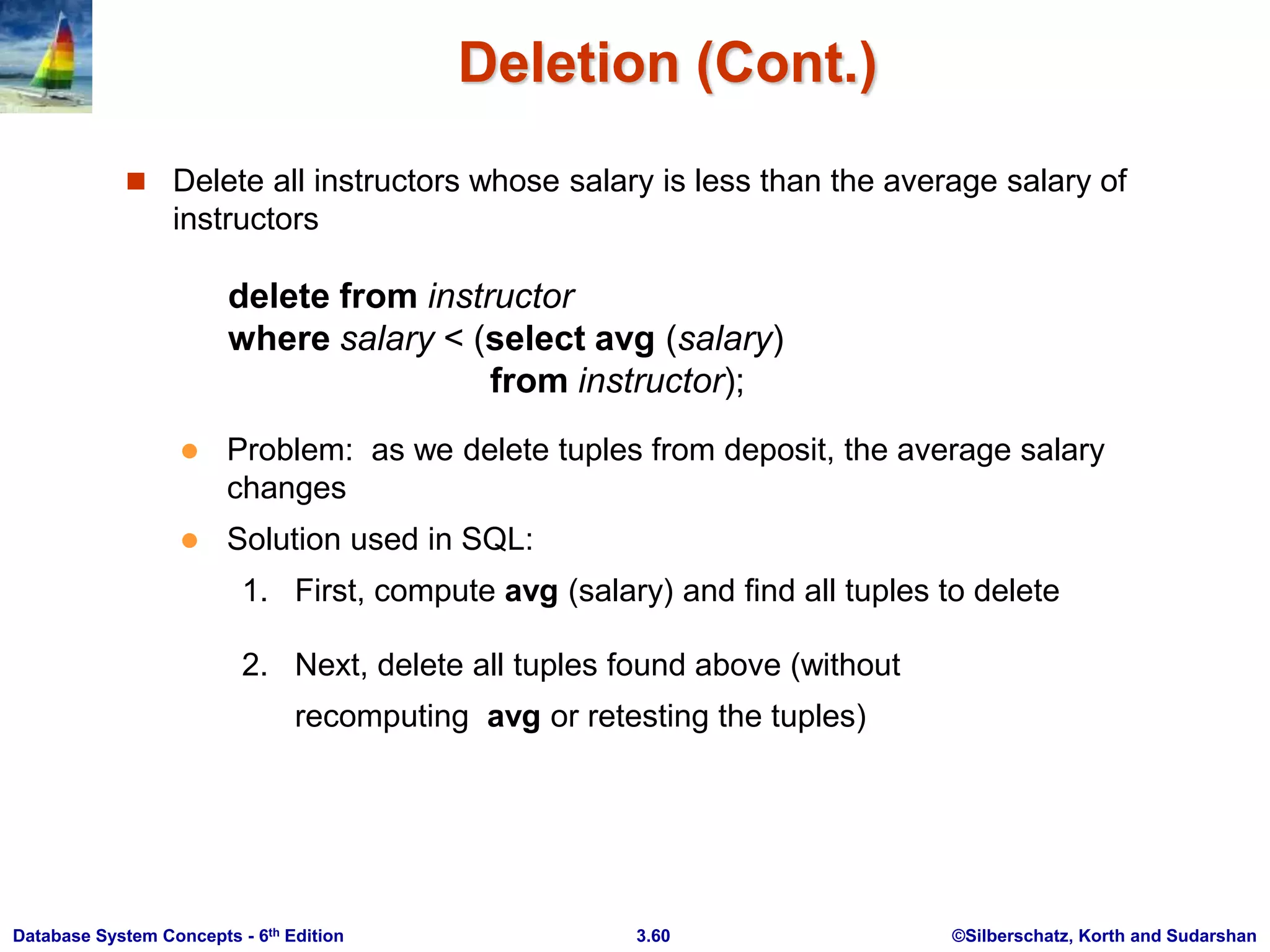

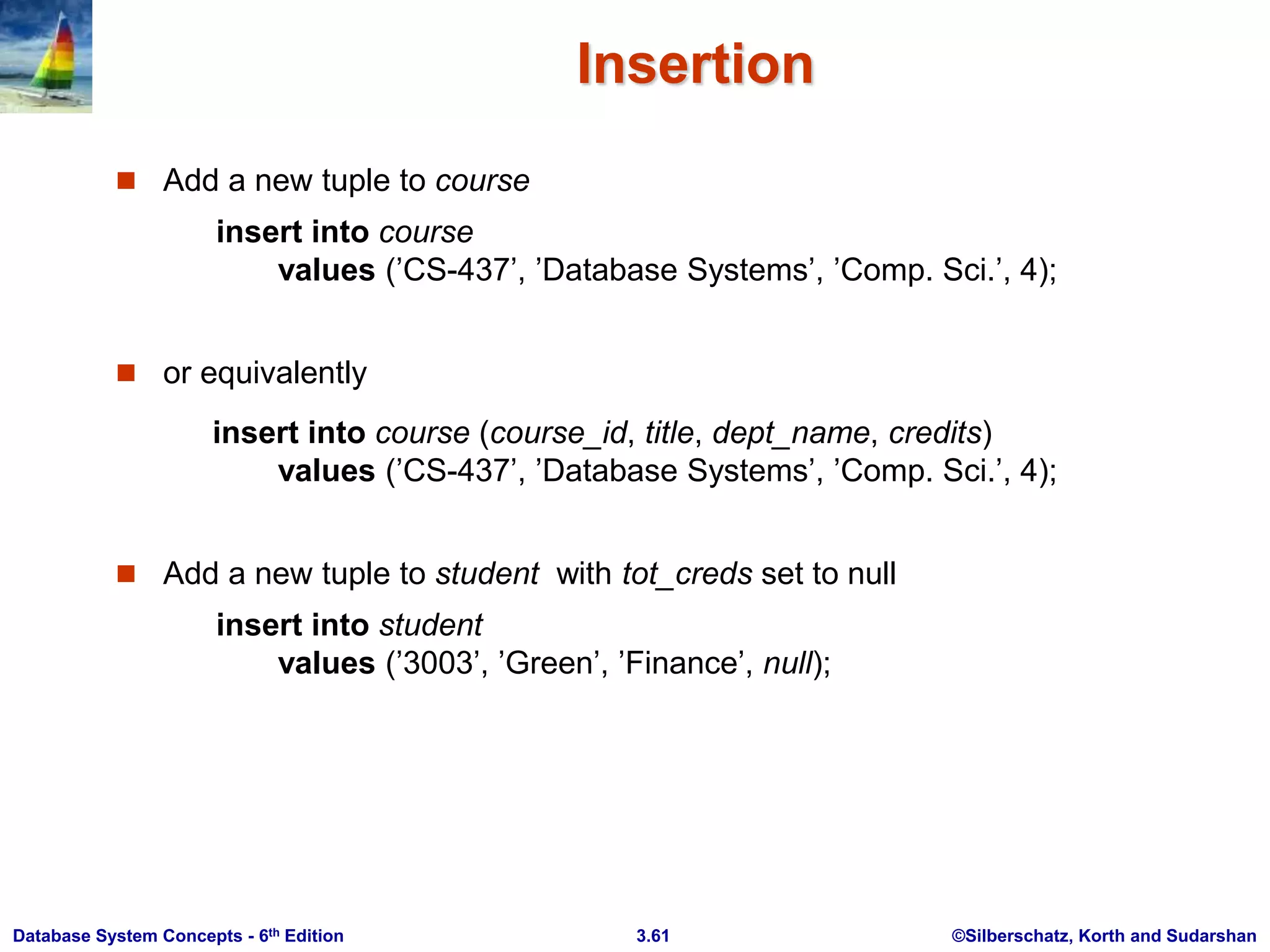

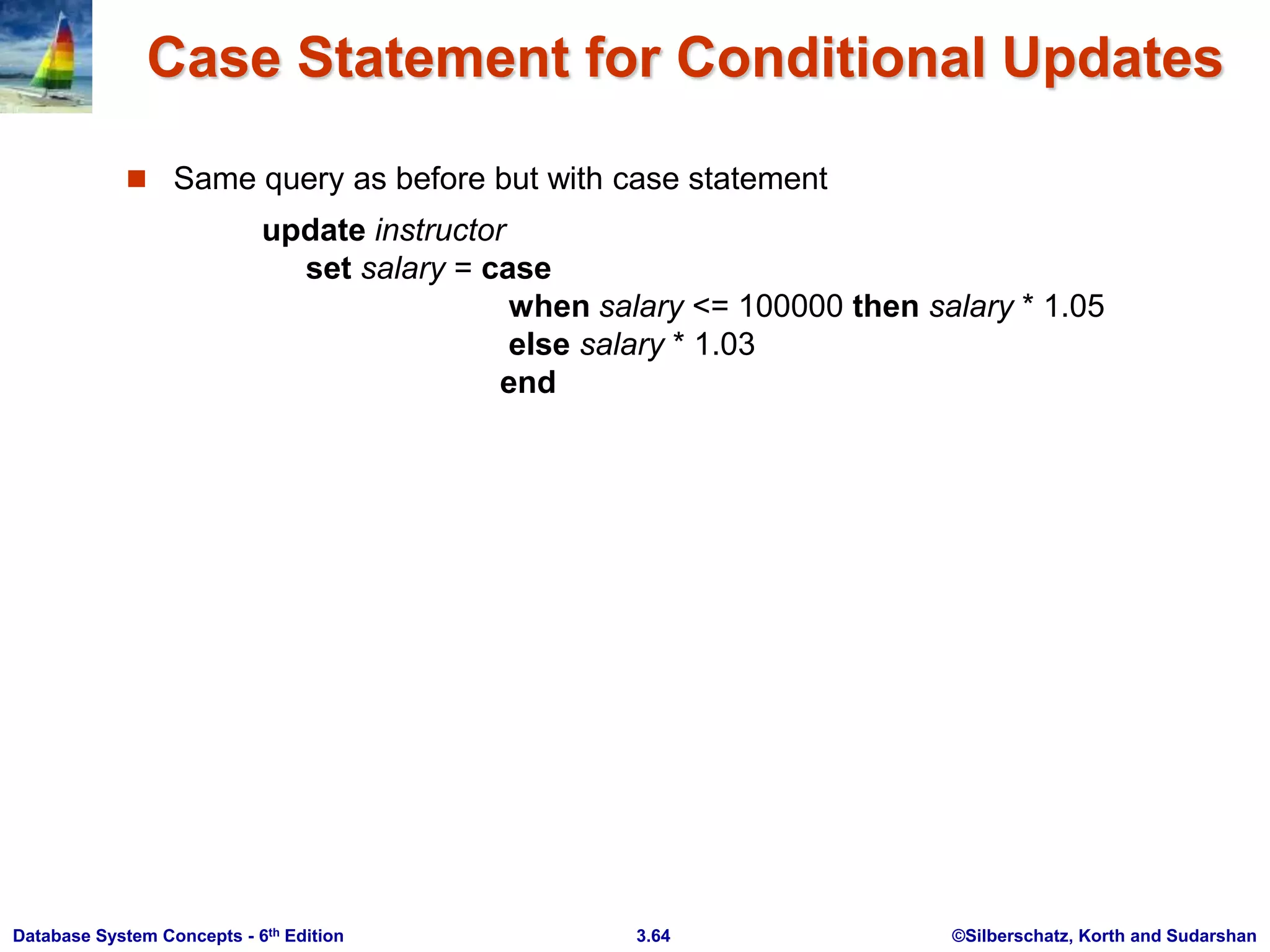

This document provides an overview of Chapter 3 of the textbook "Database System Concepts, 6th Ed." by Silberschatz, Korth, and Sudarshan. The chapter introduces SQL: its history, the data definition language, data types, the basic query structure built from the SELECT, FROM, and WHERE clauses, and additional query capabilities such as aggregation, subqueries, and string operations. It also covers changing the database with INSERT, DELETE, ALTER, and DROP statements.
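
As a rough illustration of the statement types listed above, the following sketch runs one of each against an in-memory SQLite database through Python's standard `sqlite3` module. The `instructor` table, its columns, and the sample rows are assumptions made for this example and are not taken from the slides. SQLite's dialect also differs in places from the standard SQL the chapter describes, so treat this as a sketch rather than a reference.

```python
import sqlite3


def run_demo():
    """Run one example of each statement type; all data here is illustrative."""
    conn = sqlite3.connect(":memory:")
    cur = conn.cursor()

    # Data definition: create a table with typed columns and a primary key.
    cur.execute(
        """
        CREATE TABLE instructor (
            id        TEXT PRIMARY KEY,
            name      TEXT NOT NULL,
            dept_name TEXT,
            salary    NUMERIC
        )
        """
    )

    # INSERT: add rows (hypothetical sample data).
    cur.executemany(
        "INSERT INTO instructor VALUES (?, ?, ?, ?)",
        [
            ("1", "Ada", "Comp. Sci.", 90000),
            ("2", "Bo", "Comp. Sci.", 70000),
            ("3", "Cy", "Physics", 80000),
        ],
    )

    # Basic query structure: SELECT ... FROM ... WHERE.
    cs_names = [row[0] for row in cur.execute(
        "SELECT name FROM instructor "
        "WHERE dept_name = 'Comp. Sci.' ORDER BY name"
    )]

    # Aggregation: average salary per department.
    avg_by_dept = dict(cur.execute(
        "SELECT dept_name, AVG(salary) FROM instructor GROUP BY dept_name"
    ))

    # Subquery: instructors earning more than the overall average.
    above_avg = [row[0] for row in cur.execute(
        "SELECT name FROM instructor "
        "WHERE salary > (SELECT AVG(salary) FROM instructor) ORDER BY name"
    )]

    # String operation: pattern matching with LIKE.
    starts_with_a = [row[0] for row in cur.execute(
        "SELECT name FROM instructor WHERE name LIKE 'A%'"
    )]

    # DELETE: remove the rows that match a predicate.
    cur.execute("DELETE FROM instructor WHERE dept_name = 'Physics'")
    remaining = cur.execute("SELECT COUNT(*) FROM instructor").fetchone()[0]

    # ALTER: add a column to the existing table.
    cur.execute("ALTER TABLE instructor ADD COLUMN office TEXT")
    columns = [row[1] for row in cur.execute("PRAGMA table_info(instructor)")]

    # DROP: remove the table and its schema entirely.
    cur.execute("DROP TABLE instructor")
    conn.close()

    return {
        "cs_names": cs_names,
        "avg_by_dept": avg_by_dept,
        "above_avg": above_avg,
        "starts_with_a": starts_with_a,
        "remaining": remaining,
        "columns": columns,
    }


if __name__ == "__main__":
    for key, value in run_demo().items():
        print(key, value)
```

Note that DELETE removes rows but leaves the table in place, whereas DROP removes the table itself. That is why the example can still add a column with ALTER after the delete but could not after the drop.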