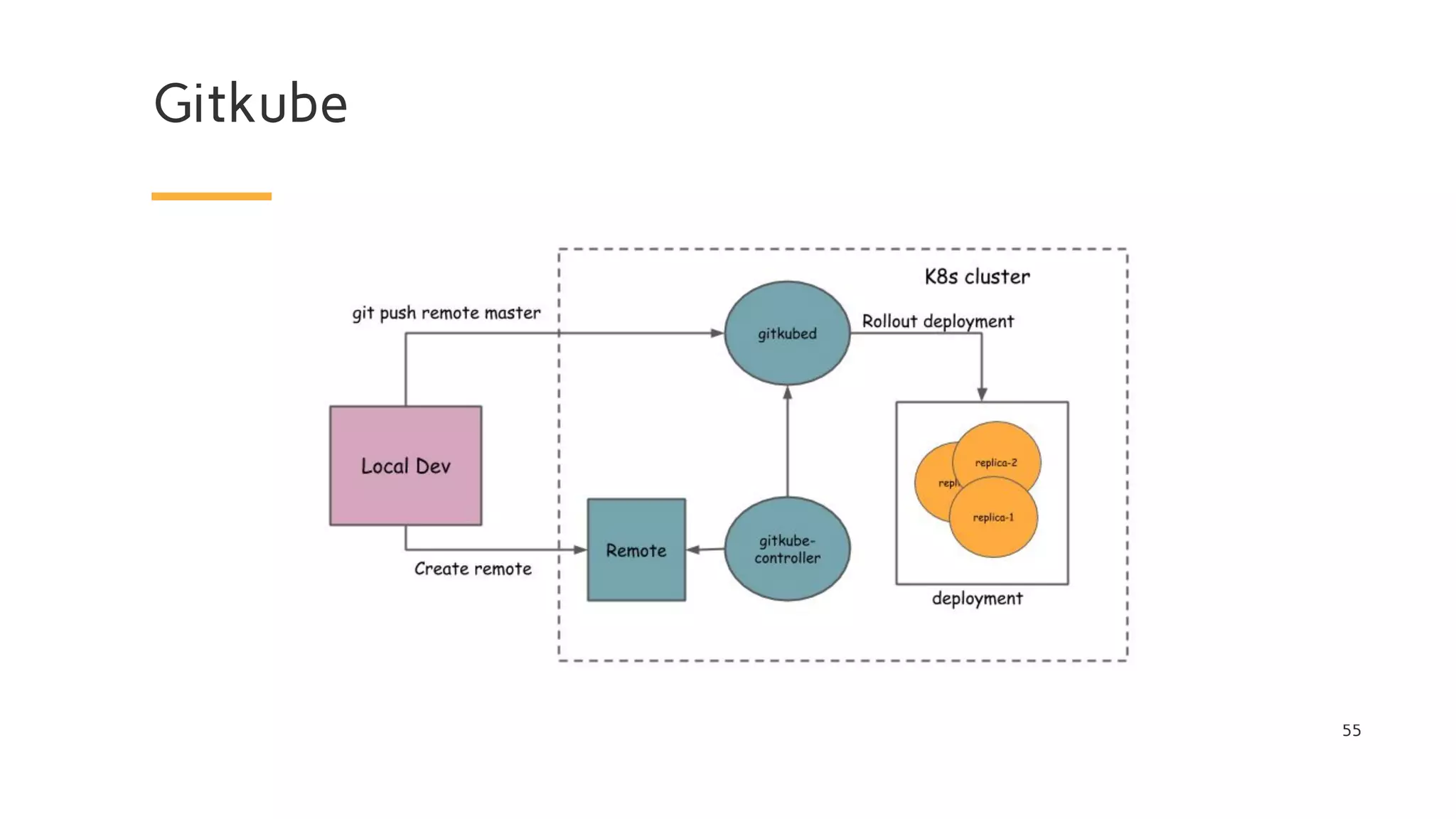

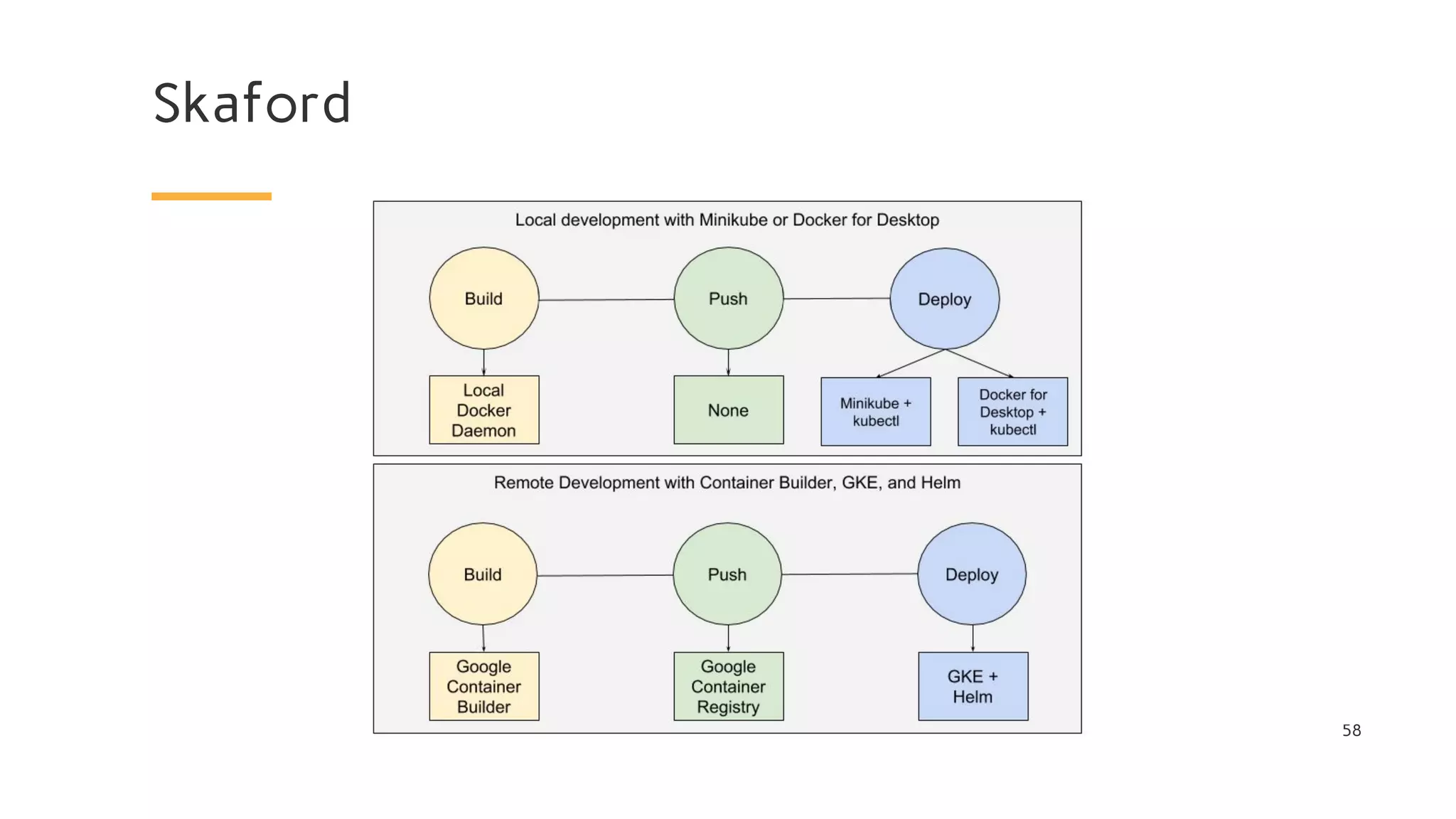

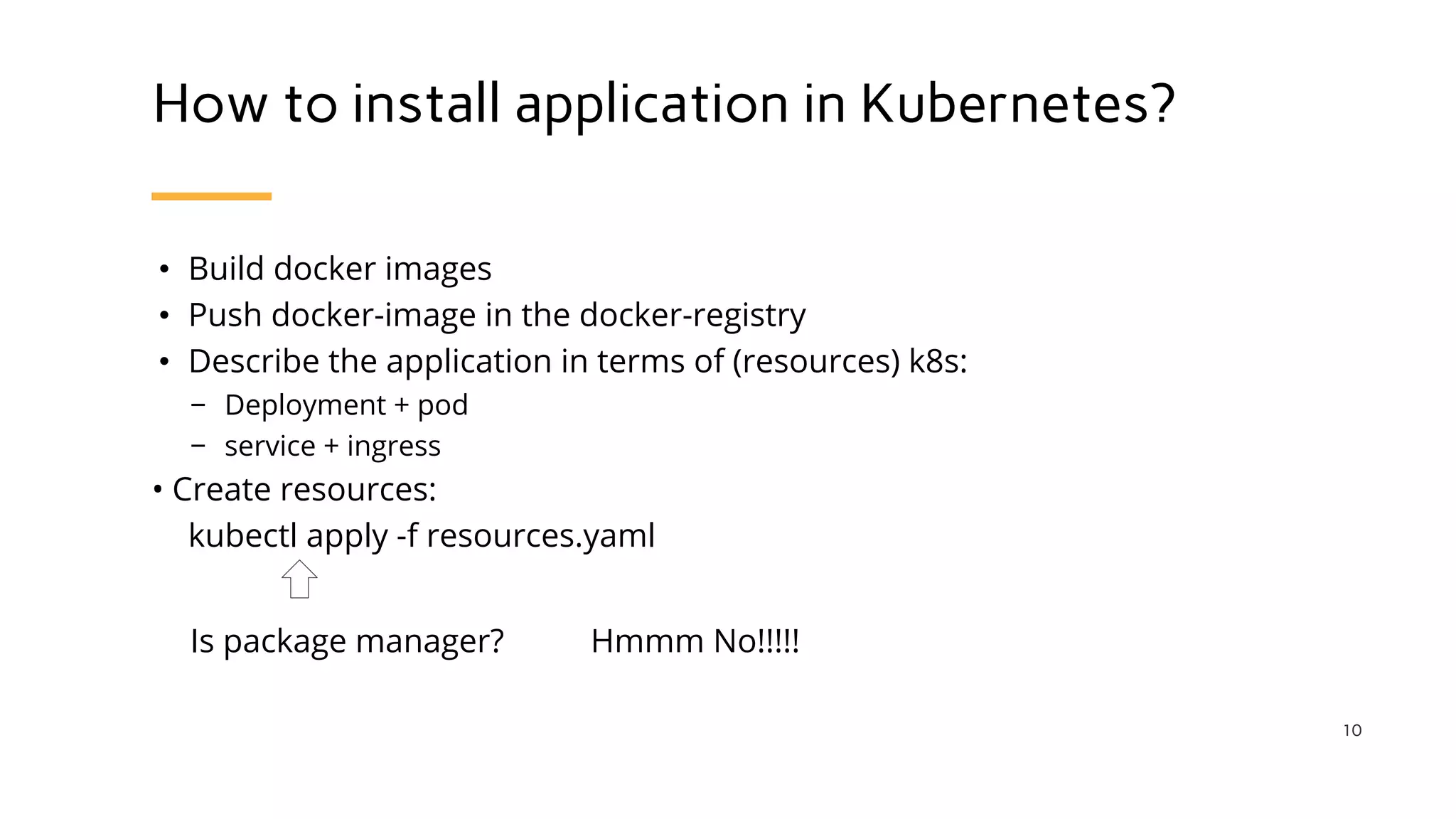

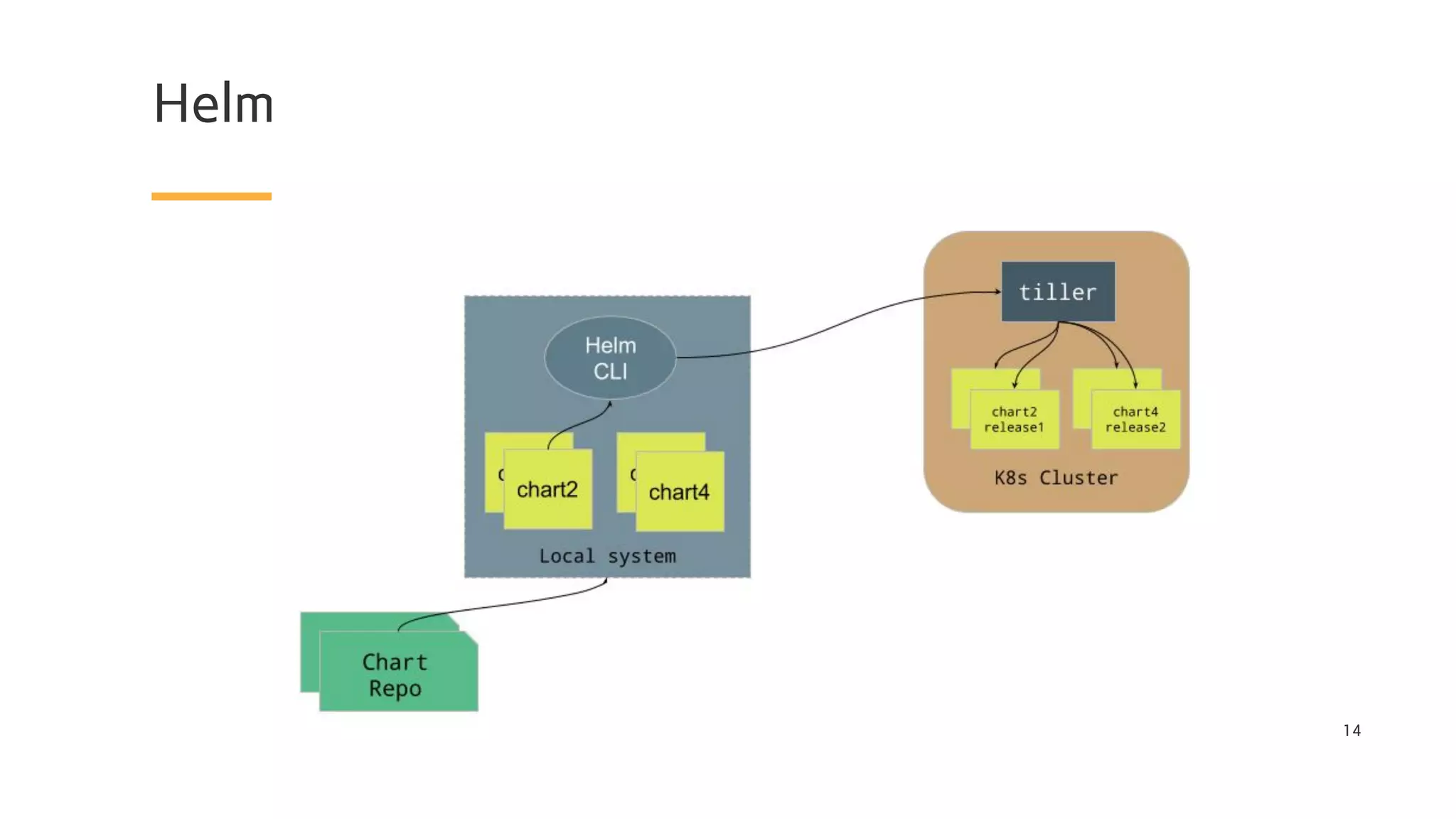

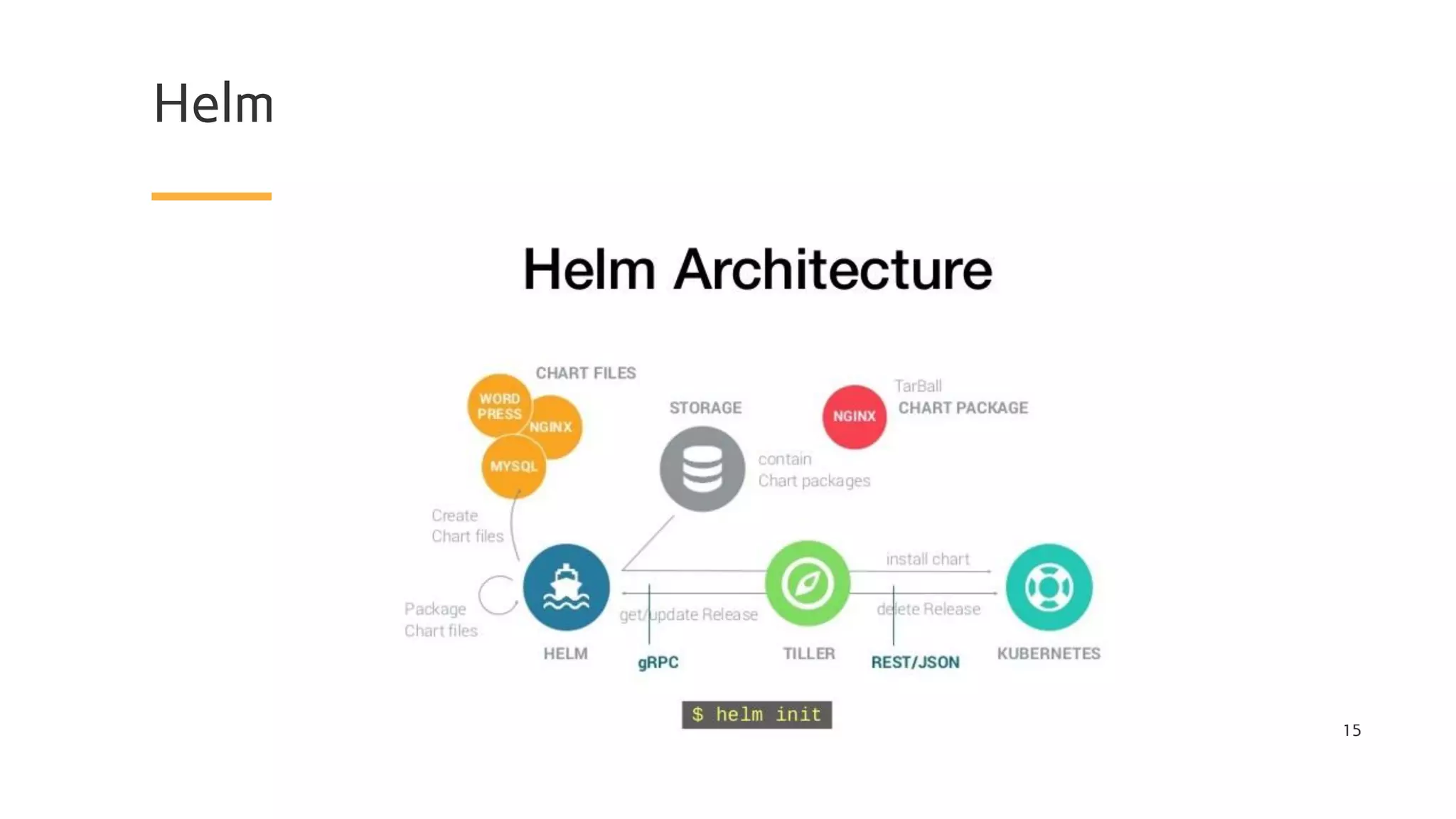

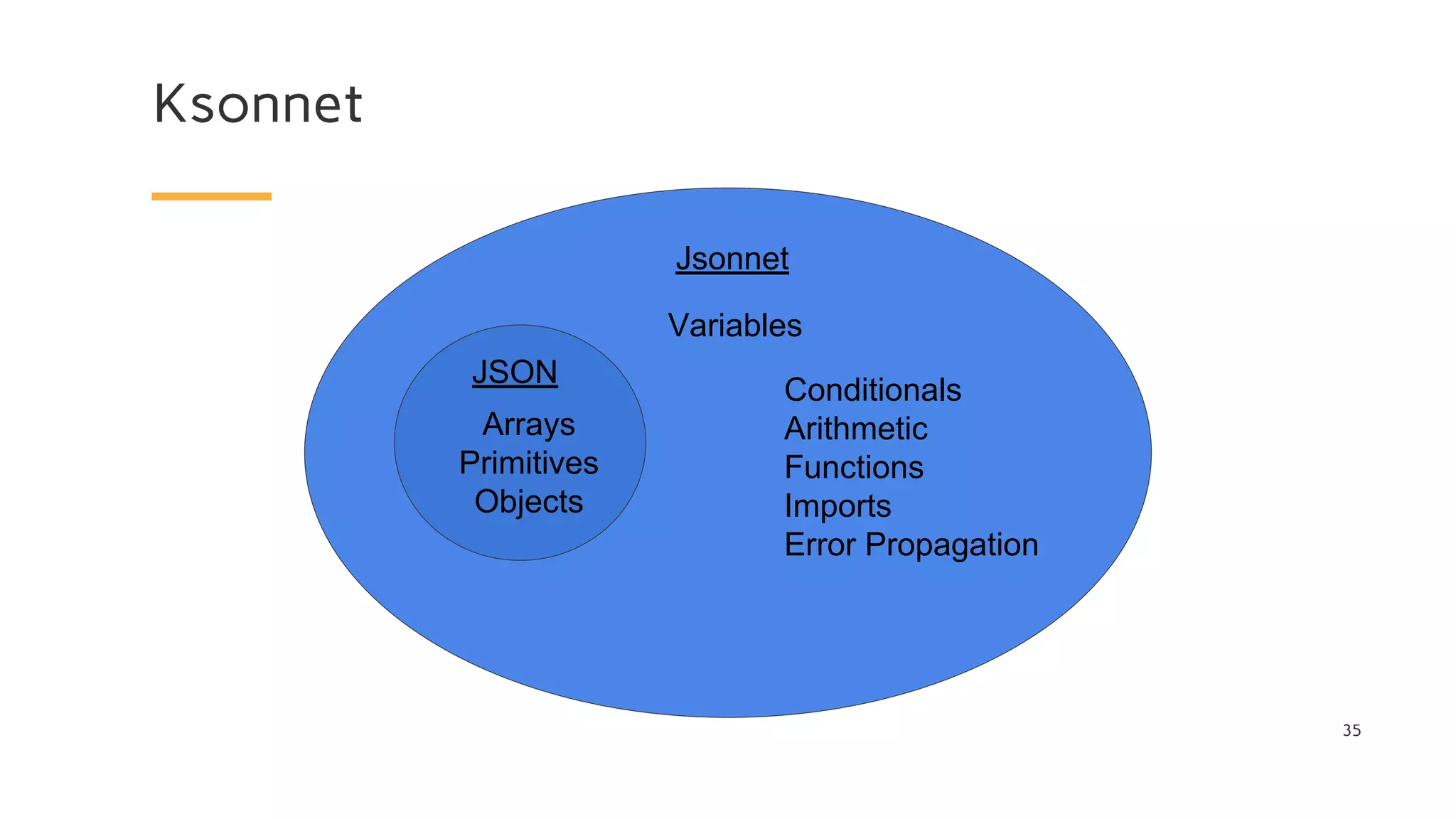

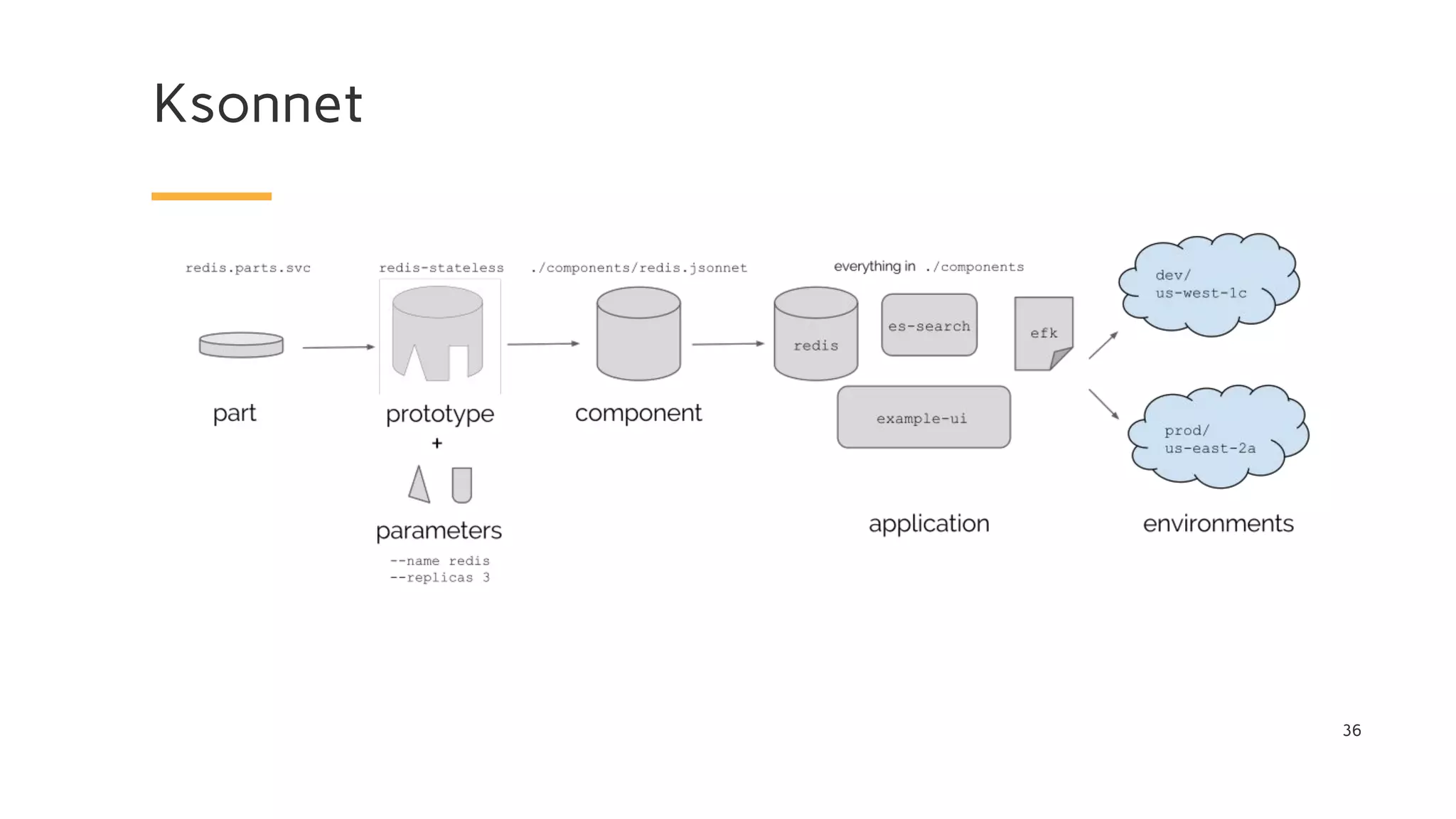

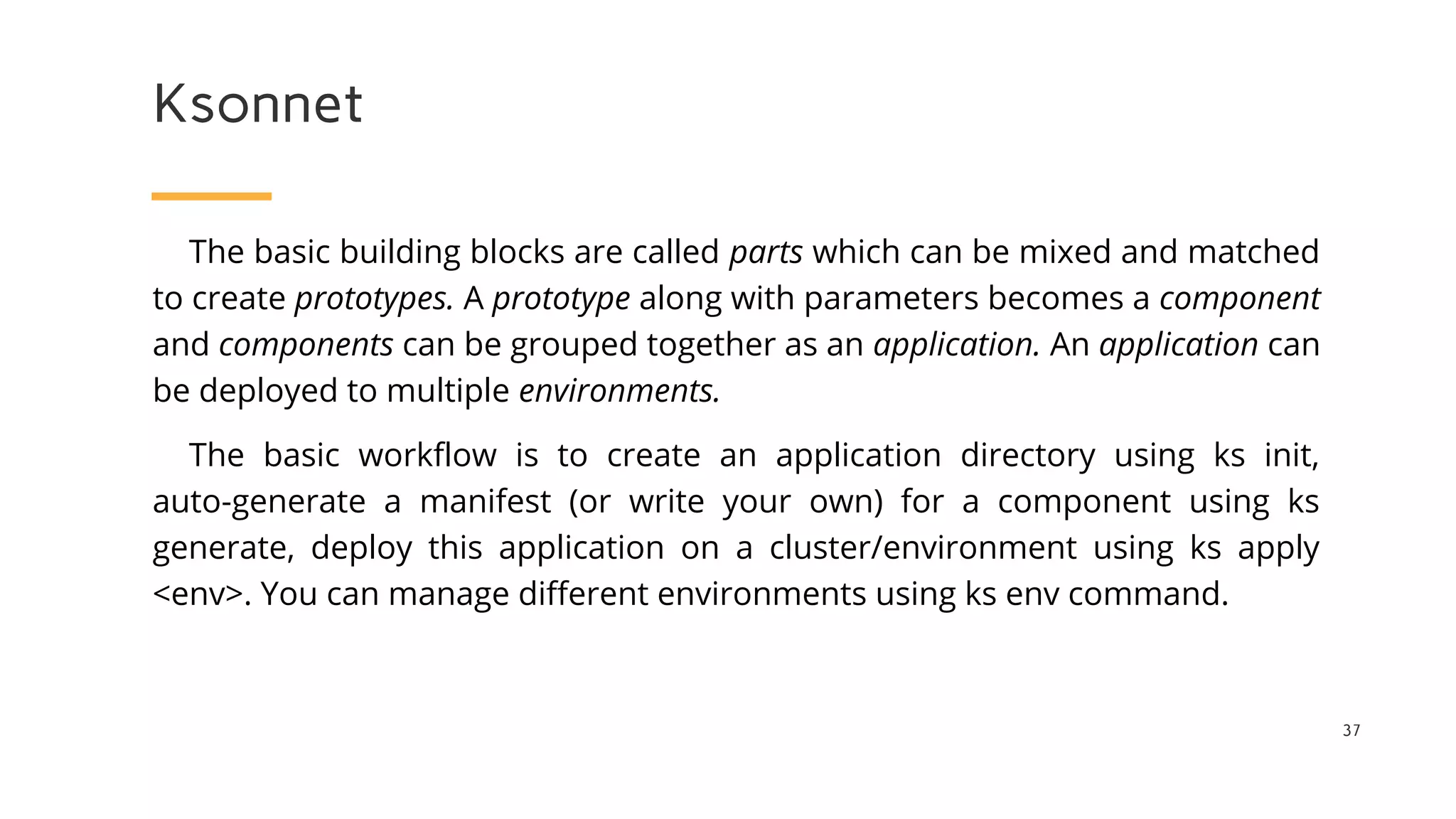

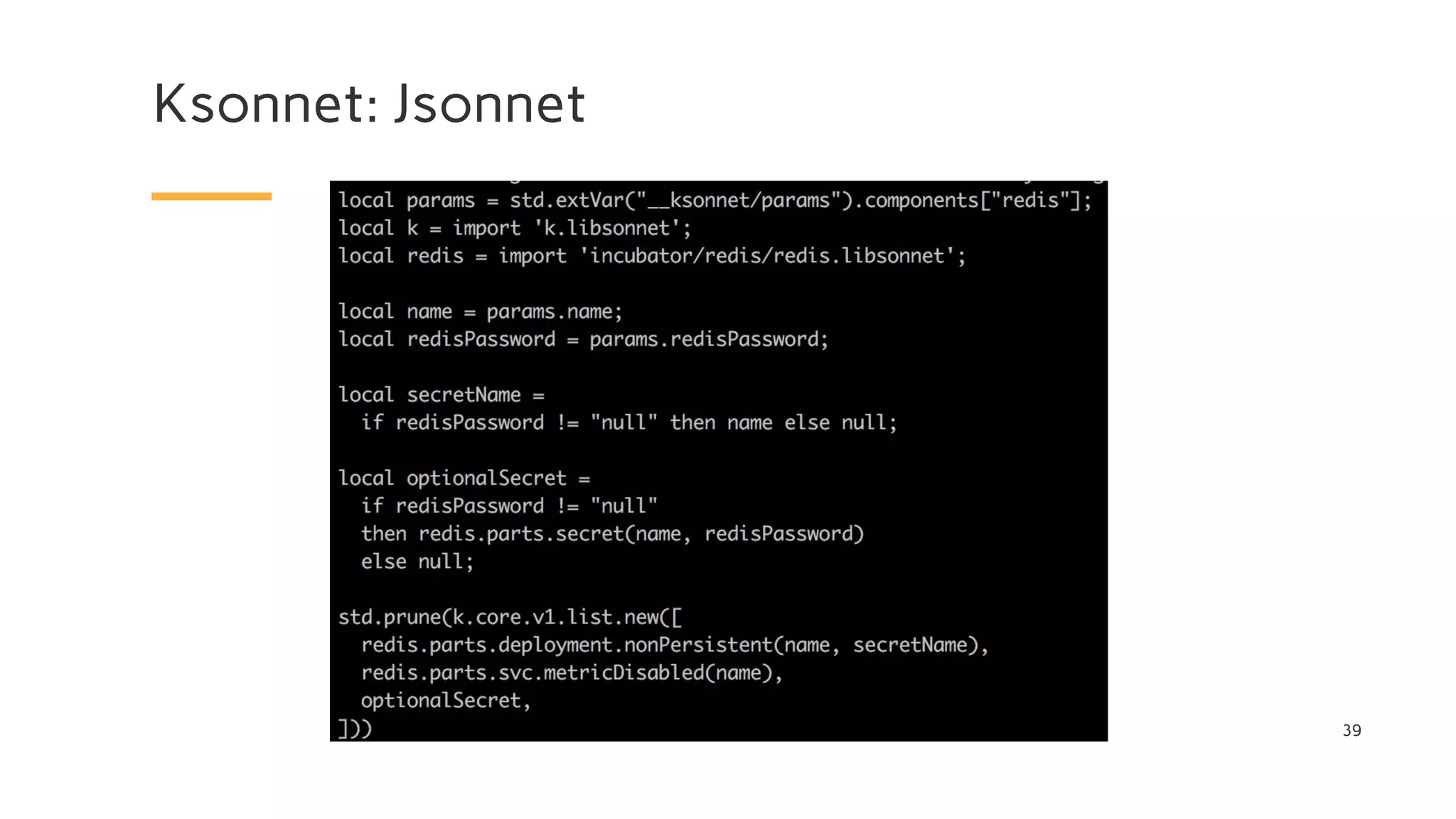

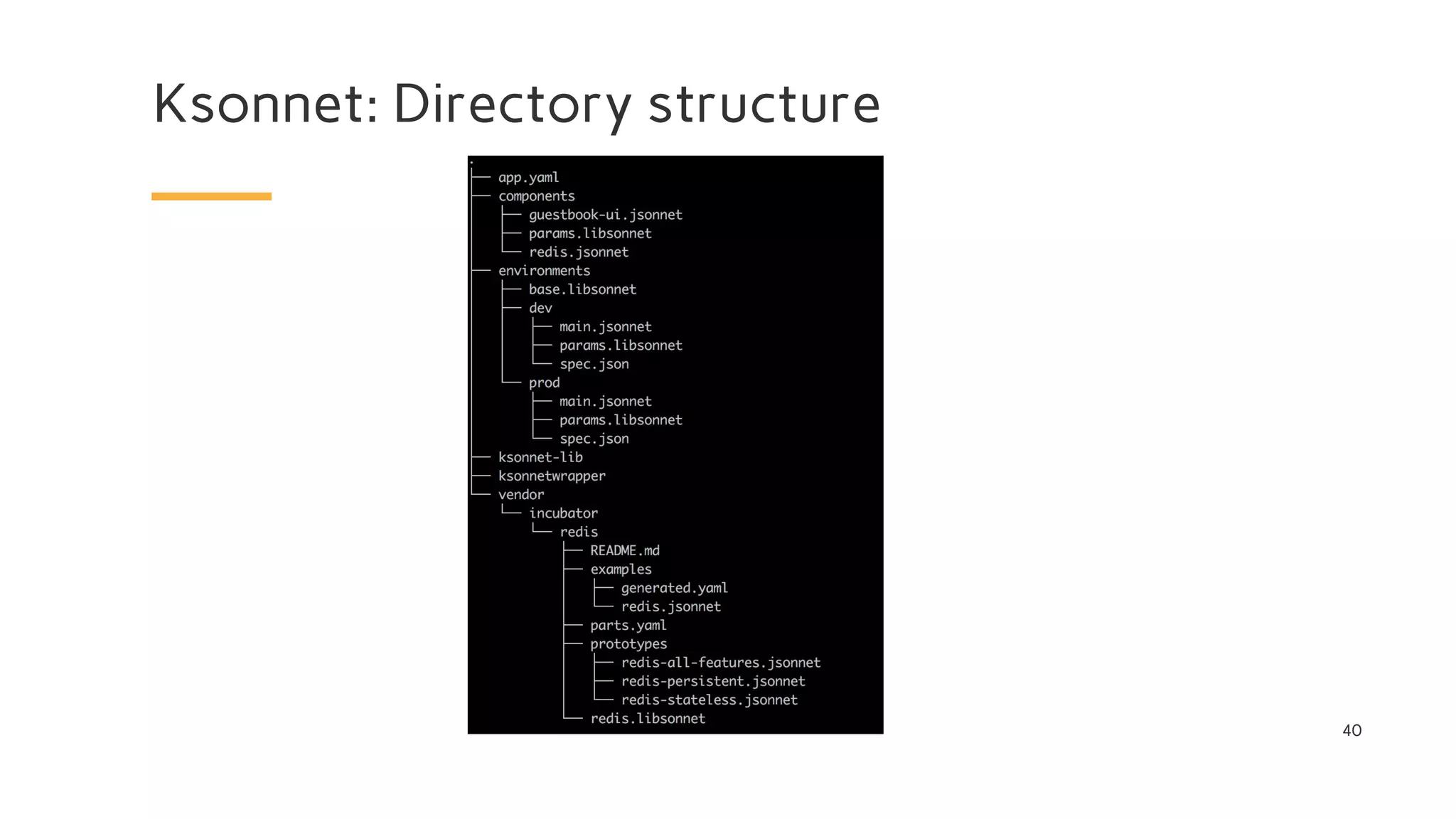

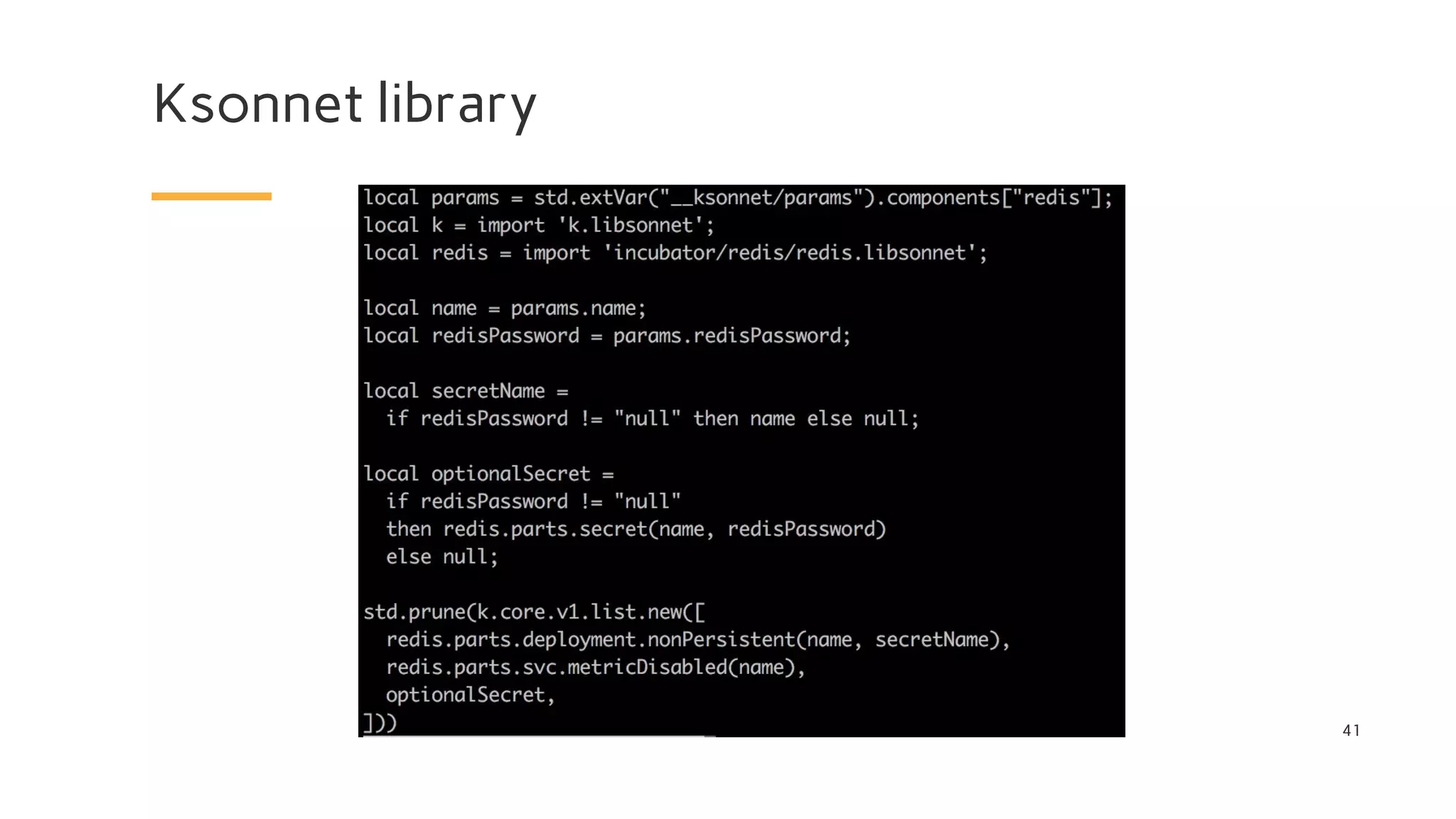

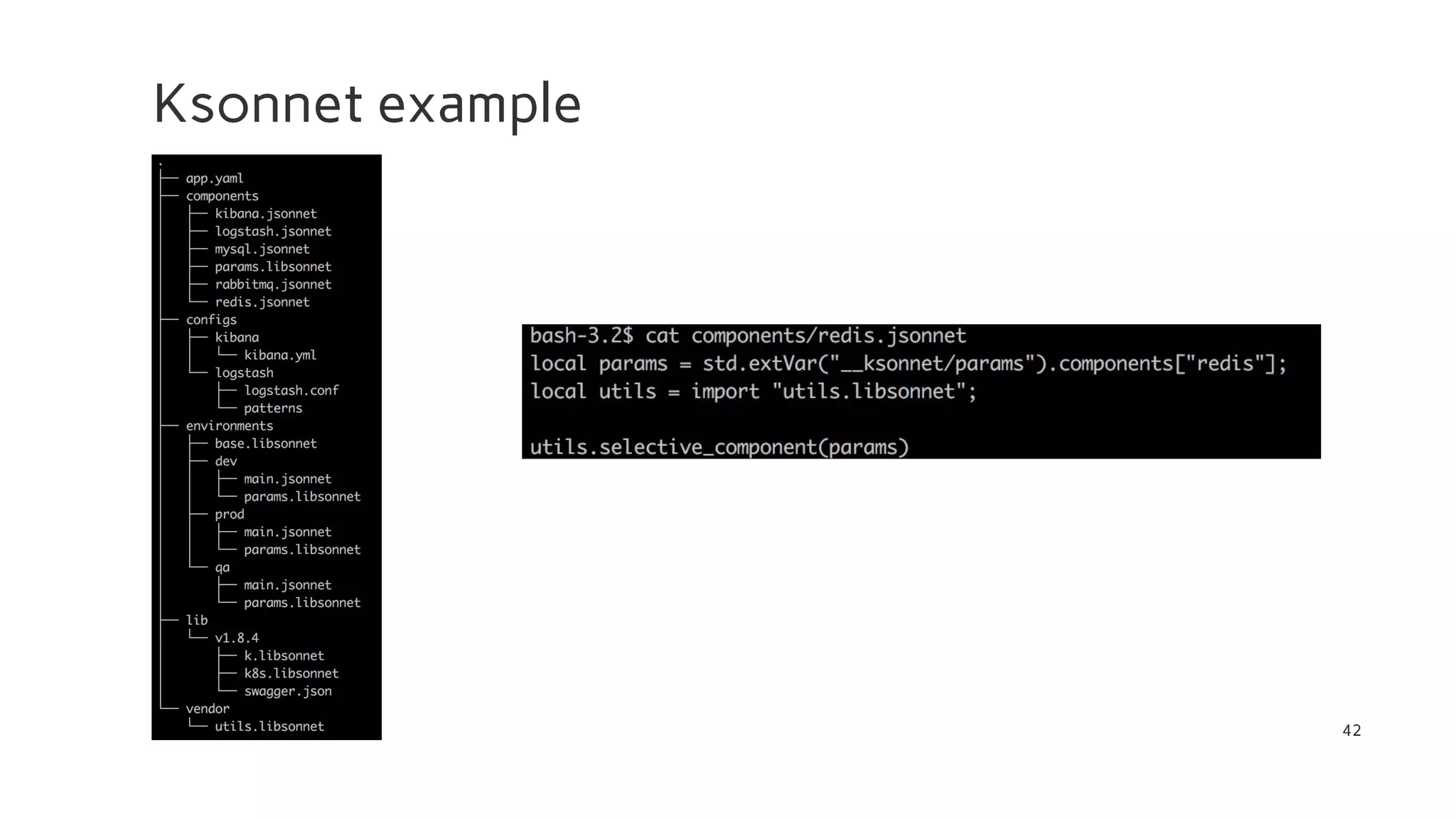

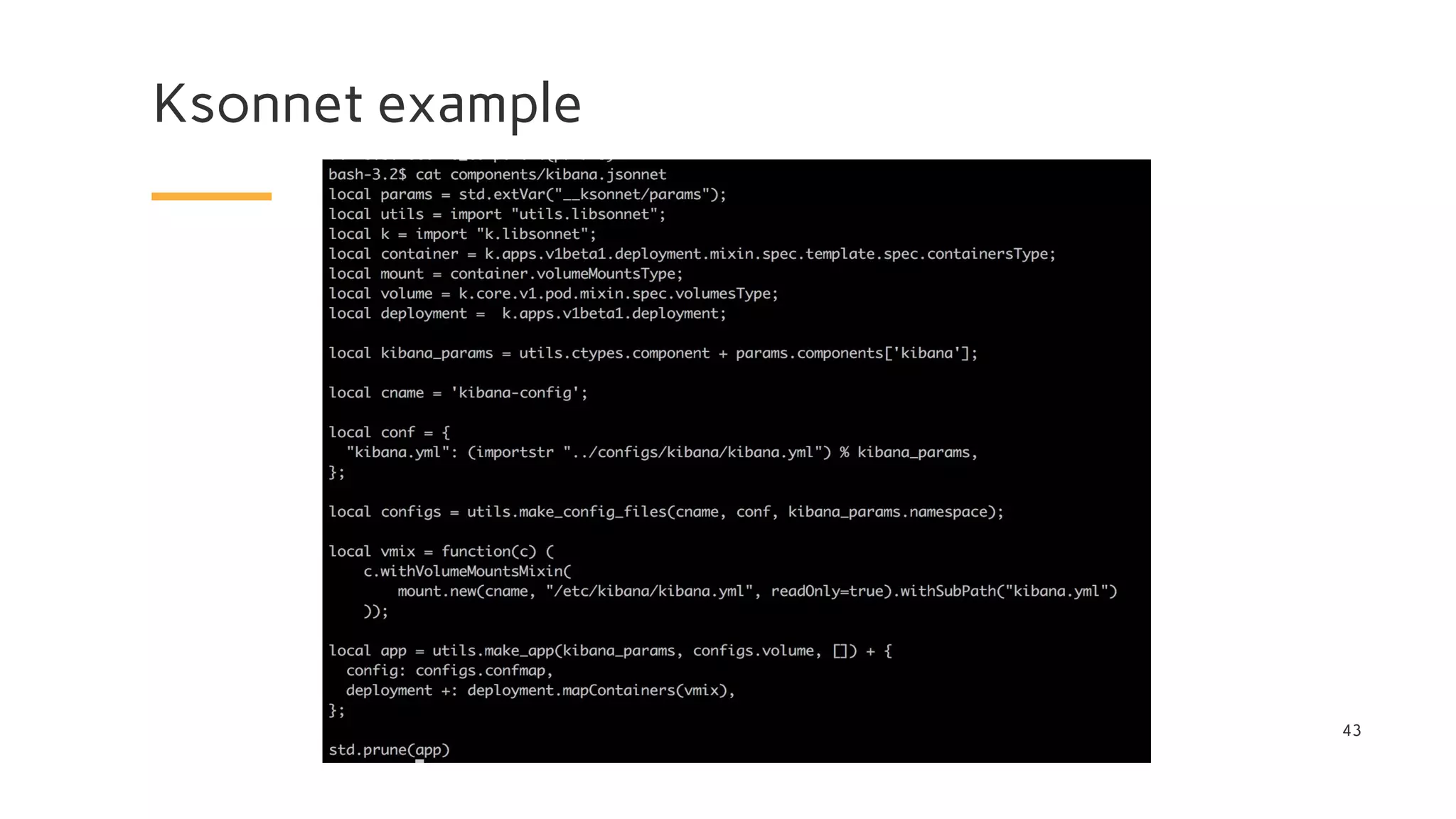

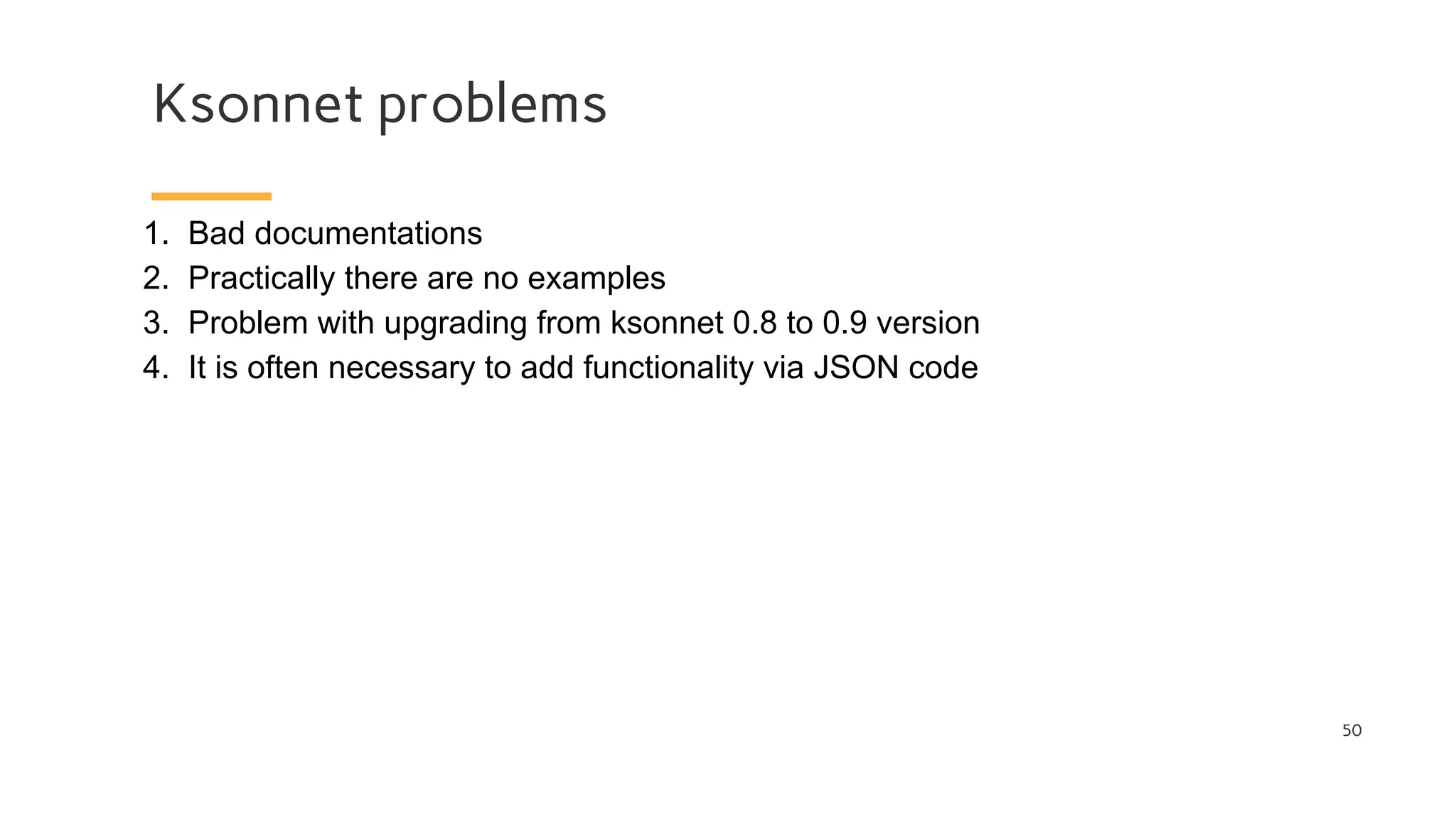

This document discusses various tools for deploying applications to Kubernetes, including Helm, Ksonnet, Draft, Gitkube, Metaparticle, Skaffold, KSync, and Telepresence. It provides an overview of each tool, including their motivations, workflows, and how they compare to each other. Many of the tools aim to simplify deployments by automating builds, pushes to registries, and deployments to clusters. Ksonnet stands out as a tool that uses Jsonnet to define reusable application components and deploy them across multiple environments and clusters.

![Ksonnet problems

51

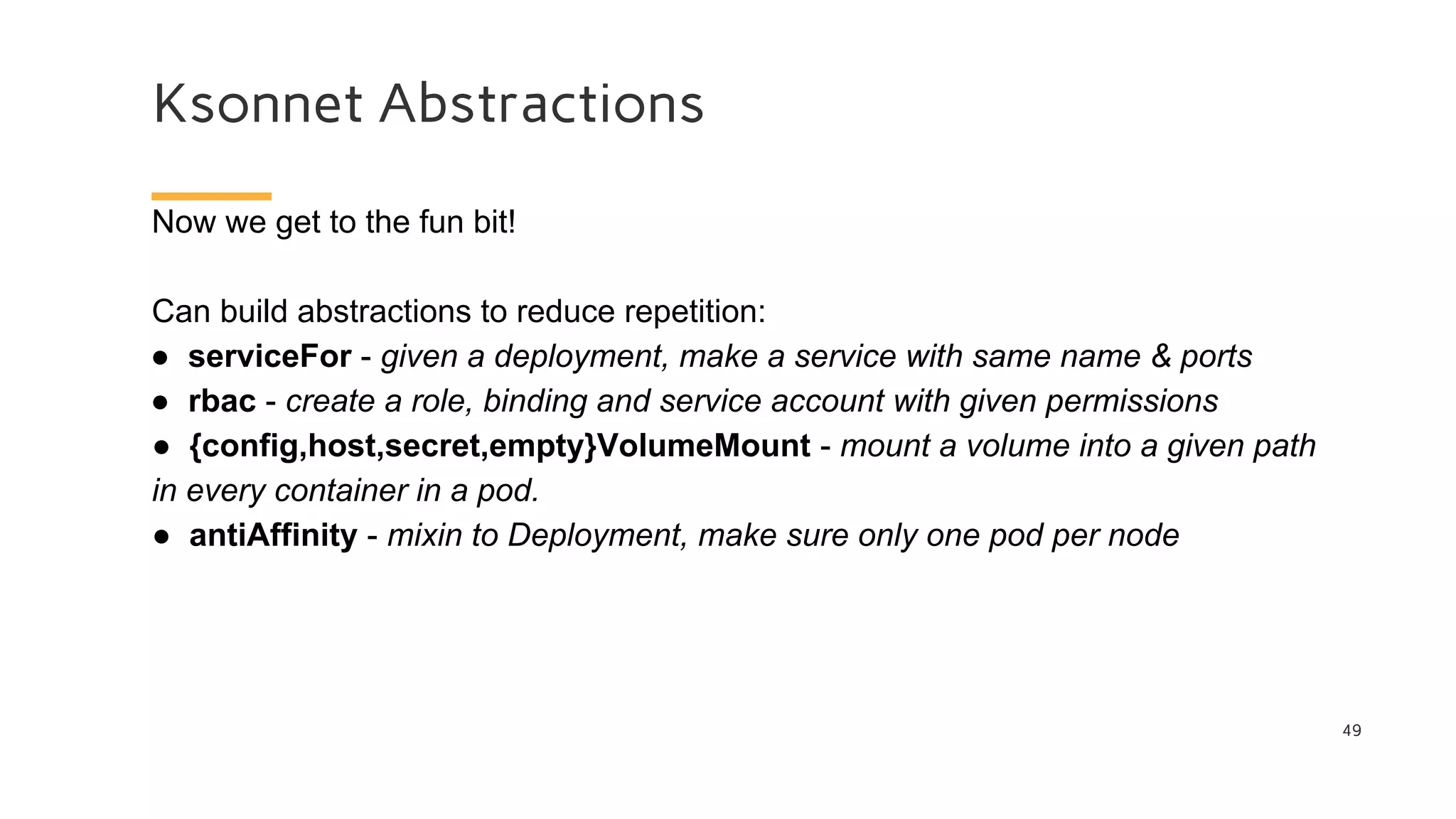

apiVersion: autoscaling/v2beta1

kind: HorizontalPodAutoscaler

metadata:

maxReplicas: 10

metrics:

- pods:

metricName: test_redisqlen

targetAverageValue: 3

type: Pods

minReplicas: 3

scaleTargetRef:

apiVersion: extensions/v1beta1

kind: Deployment

name: test-deploy

To add the highlighted red options, you must add the following to the

ksonnet code:

{spec+: {metrics+:

[{"pods":{"metricName":"test_redisqlen","targetAverageValue":3},"t

ype":"Pods"}]}}

{spec+: {scaleTargetRef+: {"apiVersion":"extensions/v1beta1"}}}](https://image.slidesharecdn.com/cdinkubernetesusinghelmandksonnet-180720134251/75/CD-in-kubernetes-using-helm-and-ksonnet-Stas-Kolenkin-51-2048.jpg)
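The two ksonnet mixins rely on Jsonnet's `+:` field syntax, which merges into an inherited field instead of replacing it. The sketch below models that behaviour in Python on plain dicts, so the effect on the generated HorizontalPodAutoscaler can be checked without a Jsonnet toolchain. It is only an approximation of Jsonnet's object-merge rule (`field+: e` behaves like `field: super.field + e` when the field is inherited, and like `field: e` otherwise), not ksonnet's implementation. The `Plus` marker and the `merge`/`resolve` helpers are hypothetical, and `base` is an assumed stand-in for whatever the ksonnet prototype actually generated.

```python
from typing import Any


class Plus:
    """Hypothetical marker for a field written as `field+: value` in Jsonnet."""

    def __init__(self, value: Any) -> None:
        self.value = value


def resolve(value: Any) -> Any:
    """Turn a mixin value into plain data (a `+:` with nothing inherited acts like `:`)."""
    if isinstance(value, dict):
        return merge({}, value)
    if isinstance(value, list):
        return [resolve(item) for item in value]
    return value


def merge(base: dict, mixin: dict) -> dict:
    """Approximate Jsonnet's `base + mixin` object merge on plain dicts."""
    result = dict(base)  # shallow copy; nested values are replaced, never mutated
    for key, value in mixin.items():
        if not isinstance(value, Plus):
            # `field: value` replaces whatever the base had for this field
            result[key] = resolve(value)
        elif key not in base:
            # `field+: value` with no inherited field behaves like `field: value`
            result[key] = resolve(value.value)
        elif isinstance(base[key], dict) and isinstance(value.value, dict):
            # `field+: {...}` merges recursively into the inherited object
            result[key] = merge(base[key], value.value)
        else:
            # `field+: [...]` concatenates arrays (strings concatenate, numbers add)
            result[key] = base[key] + resolve(value.value)
    return result


# Assumed object generated by the prototype before the mixins are applied.
base = {
    "apiVersion": "autoscaling/v2beta1",
    "kind": "HorizontalPodAutoscaler",
    "spec": {
        "maxReplicas": 10,
        "minReplicas": 3,
        "scaleTargetRef": {"kind": "Deployment", "name": "test-deploy"},
    },
}

# {spec+: {metrics+: [...]}}
add_metrics = {"spec": Plus({"metrics": Plus([
    {"pods": {"metricName": "test_redisqlen", "targetAverageValue": 3}, "type": "Pods"},
])})}
# {spec+: {scaleTargetRef+: {"apiVersion": "extensions/v1beta1"}}}
add_api_version = {"spec": Plus({"scaleTargetRef": Plus({"apiVersion": "extensions/v1beta1"})})}

hpa = merge(merge(base, add_metrics), add_api_version)
print(hpa["spec"]["scaleTargetRef"])
# {'kind': 'Deployment', 'name': 'test-deploy', 'apiVersion': 'extensions/v1beta1'}

# Without `+:`, a mixin replaces the whole `spec` object.
clobbered = merge(base, {"spec": {"minReplicas": 1}})
print(clobbered["spec"])  # {'minReplicas': 1}
```

The last case illustrates why both snippets need `+:` at every level of nesting: a plain `spec:` field in a mixin would discard everything the prototype generated for `spec`, including `maxReplicas` and `scaleTargetRef`.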