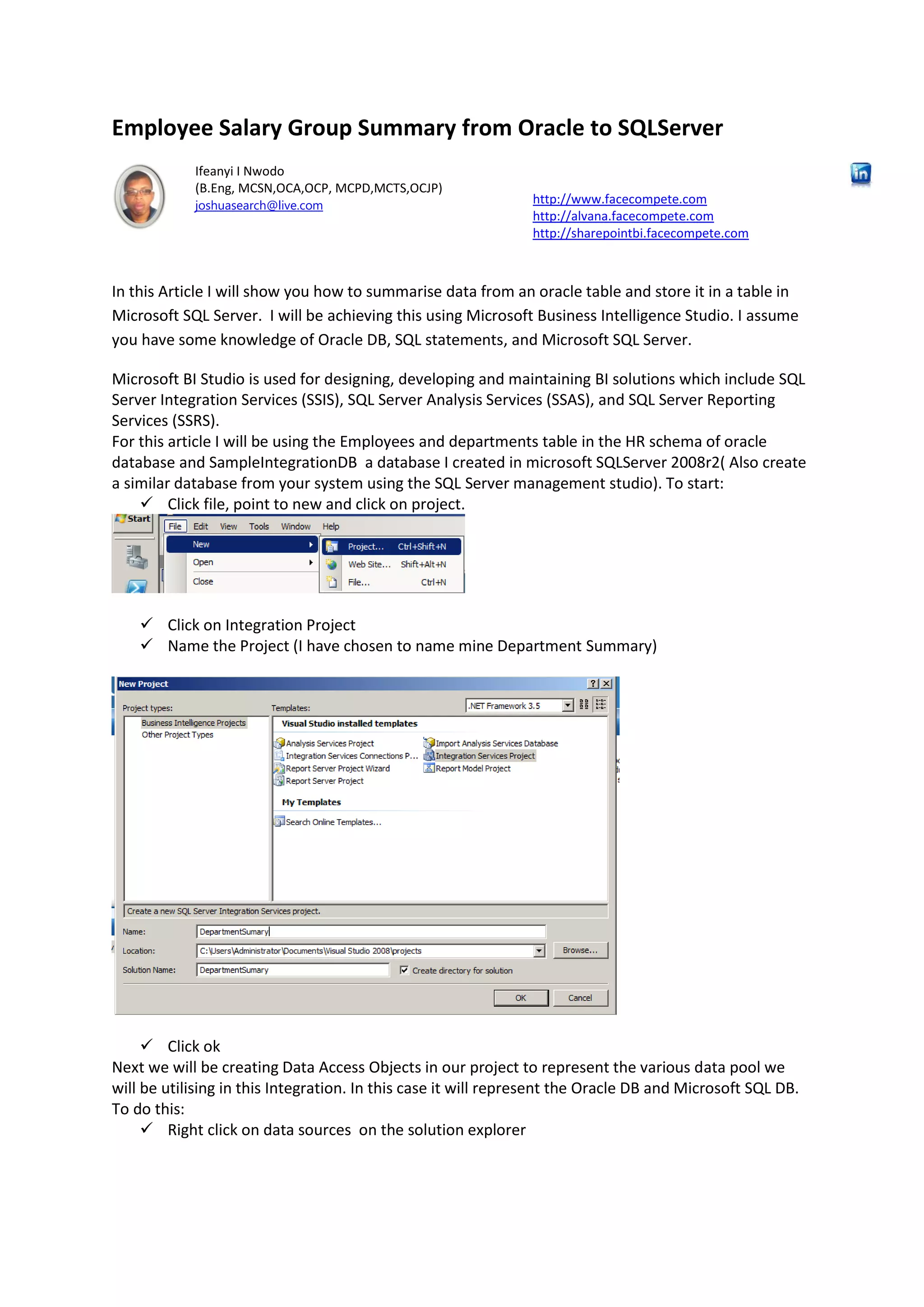

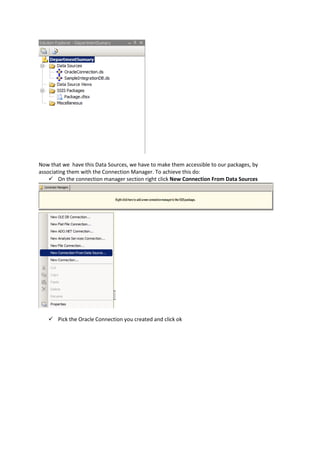

This document provides a step-by-step guide to transferring employee salary data from an Oracle database to a Microsoft SQL Server database using Microsoft Business Intelligence Studio. It covers project setup, data connection configuration, and the creation of a data flow that summarises salaries by department. The tutorial assumes familiarity with Oracle Database and SQL Server, and offers troubleshooting tips for runtime issues.

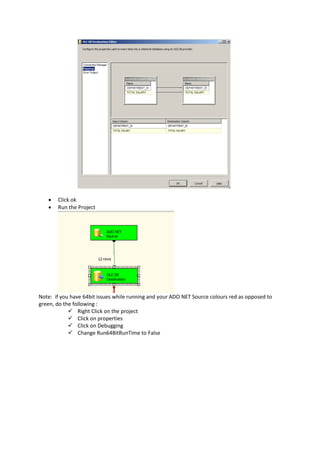

- Modify the query statement to create a table with a better name:

      CREATE TABLE EmpTotalSalary (
          [DEPARTMENT_ID] numeric(4,0),
          [TOTAL SALARY] nvarchar(40)
      )

- Click OK.
- Click Mappings to map the destination and source columns appropriately.

Yours should look like this:

![BI Tutorial: Copying Data from Oracle to Microsoft SQLServer, slide 9](https://image.slidesharecdn.com/bitutorialemployee-140502031024-phpapp02/85/BI-Tutorial-Copying-Data-from-Oracle-to-Microsoft-SQLServer-9-320.jpg)
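
Once the package has run, a quick query on the SQL Server side can confirm that the summarised rows arrived in the new table. The following is a minimal sketch, not part of the original tutorial: it assumes the table was created in the `dbo` schema, and the second query assumes every `[TOTAL SALARY]` value is a numeric string that fits in `numeric(18,2)`.

```sql
-- Assumption: EmpTotalSalary lives in the dbo schema; adjust if yours differs.
-- List one row per department as loaded by the data flow.
SELECT [DEPARTMENT_ID], [TOTAL SALARY]
FROM dbo.EmpTotalSalary
ORDER BY [DEPARTMENT_ID];

-- [TOTAL SALARY] is declared as nvarchar(40), so it sorts as text by default.
-- Assumption: the stored values are numeric strings; the cast fails otherwise.
SELECT [DEPARTMENT_ID],
       CAST([TOTAL SALARY] AS numeric(18,2)) AS TotalSalaryNumeric
FROM dbo.EmpTotalSalary
ORDER BY TotalSalaryNumeric DESC;
```

If the first query returns no rows, the column mappings are a reasonable first place to look.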