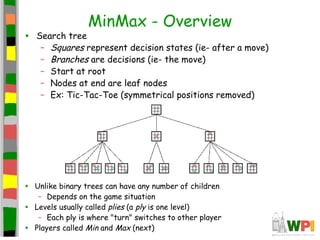

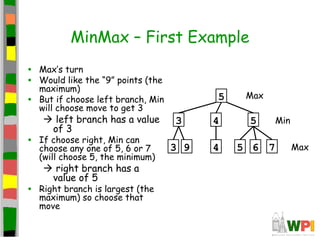

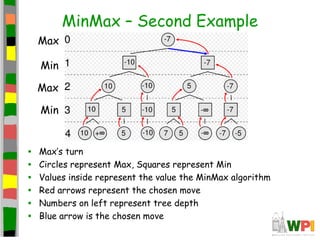

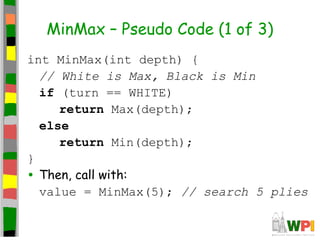

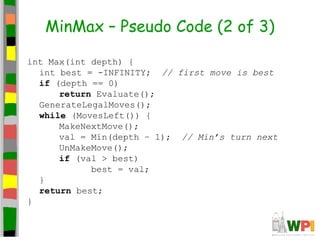

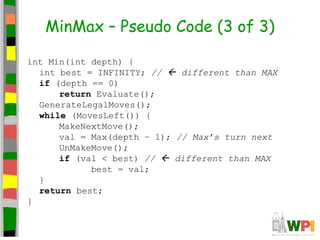

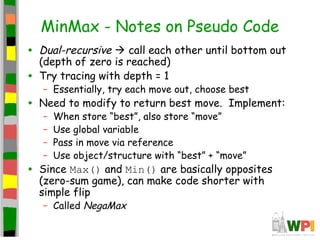

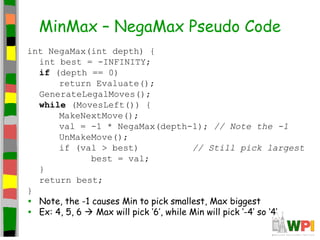

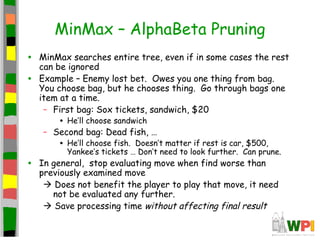

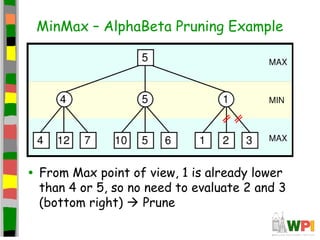

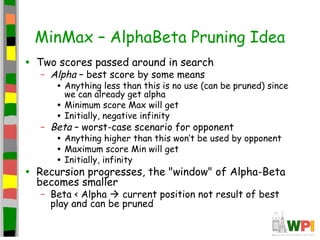

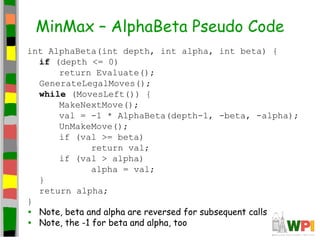

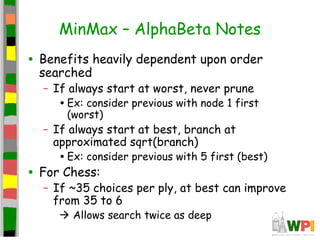

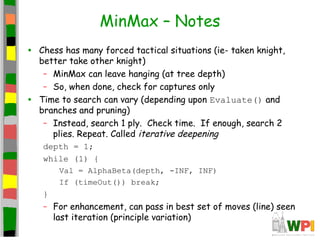

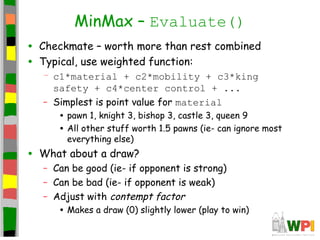

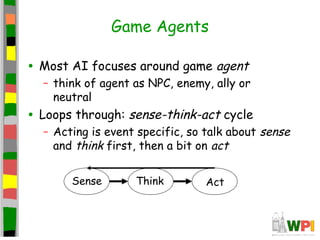

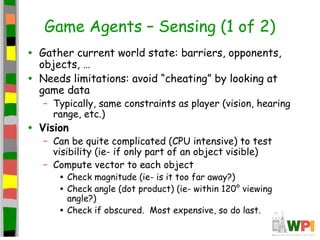

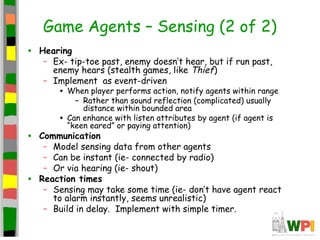

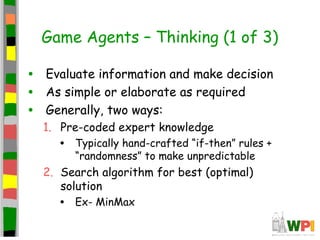

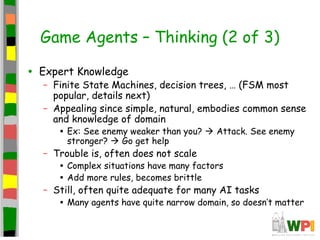

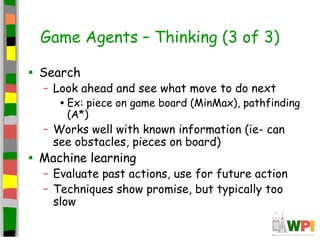

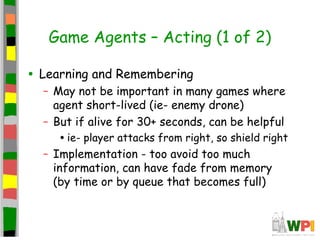

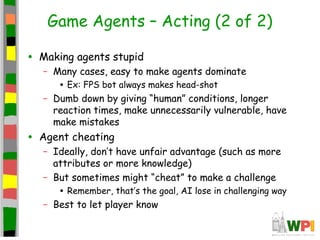

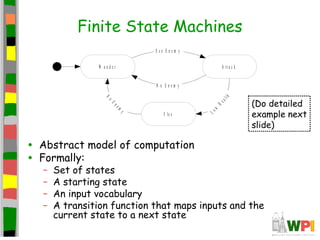

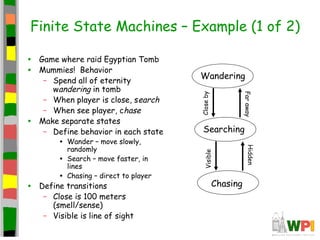

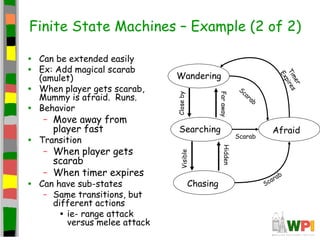

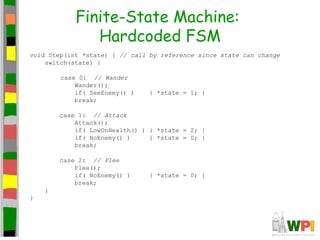

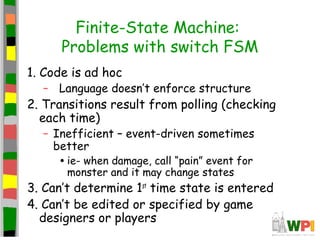

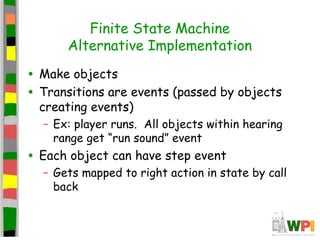

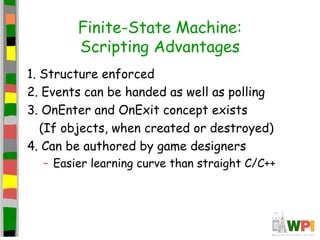

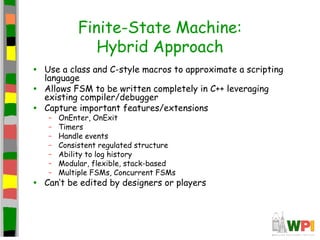

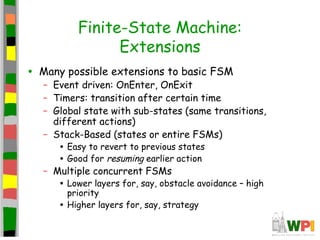

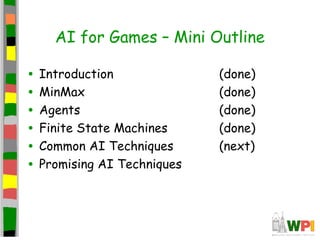

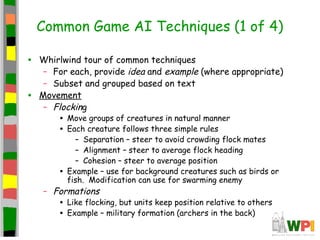

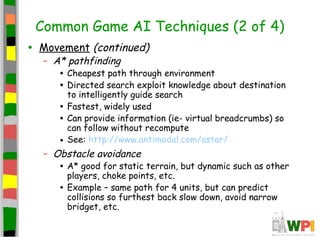

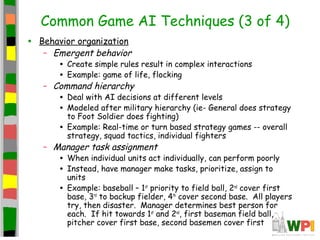

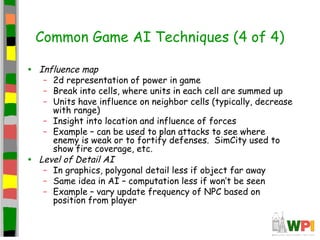

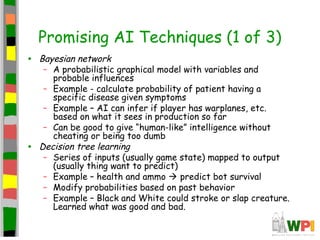

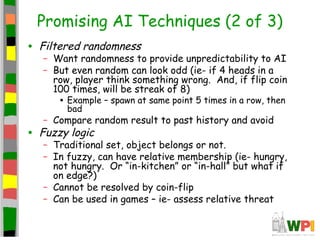

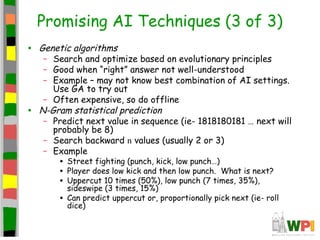

This document introduces the use of artificial intelligence in games. It discusses how AI can create challenging opponents or helpful allies that act autonomously according to their programming. It notes that although human-level general intelligence is difficult to achieve, AI can perform well in narrow contexts such as chess. In games, the AI must be intentionally flawed so that it remains a fun challenge, yet it cannot have obvious weaknesses. It must also perform its calculations and make decisions in real time to interact with the game. The document then outlines some common AI techniques used in games, including MinMax search trees and finite state machines for controlling agent behavior. It provides pseudocode examples of the MinMax algorithm and discusses enhancements such as Alpha-Beta pruning.
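
The slides' own pseudocode is not reproduced in this summary, so the following is only a minimal sketch of the MinMax idea and of Alpha-Beta pruning, not the deck's version. It assumes a toy game tree written as nested Python lists, where each leaf is a score from the maximizing player's point of view. The names `minimax`, `alphabeta`, `EXAMPLE_TREE`, and the `counter` argument are hypothetical and chosen for illustration.

```python
import math

# Assumption: a game tree is a nested list. A leaf is a number (the score
# for the maximizing player); an inner node is a list of child subtrees.
EXAMPLE_TREE = [[3, 5], [2, 9], [0, 1]]


def minimax(node, maximizing=True):
    """Plain MinMax: evaluate every leaf and back the values up the tree."""
    if not isinstance(node, list):
        return node  # leaf: return its score
    values = [minimax(child, not maximizing) for child in node]
    return max(values) if maximizing else min(values)


def alphabeta(node, maximizing=True, alpha=-math.inf, beta=math.inf, counter=None):
    """MinMax with Alpha-Beta pruning.

    alpha is the best score the maximizer can already guarantee,
    beta the best score the minimizer can already guarantee.
    When alpha >= beta, the remaining children cannot change the result.
    counter (optional one-element list) counts the leaves actually visited.
    """
    if not isinstance(node, list):
        if counter is not None:
            counter[0] += 1
        return node
    if maximizing:
        best = -math.inf
        for child in node:
            best = max(best, alphabeta(child, False, alpha, beta, counter))
            alpha = max(alpha, best)
            if alpha >= beta:
                break  # the minimizer above will never allow this branch
        return best
    best = math.inf
    for child in node:
        best = min(best, alphabeta(child, True, alpha, beta, counter))
        beta = min(beta, best)
        if alpha >= beta:
            break  # the maximizer above already has a better option
    return best


if __name__ == "__main__":
    visited = [0]
    print(minimax(EXAMPLE_TREE))                     # full search
    print(alphabeta(EXAMPLE_TREE, counter=visited))  # same value, fewer leaves
    print(visited[0])                                # leaves visited with pruning
```

On this toy tree both functions return the same value, but the pruned search skips leaves that cannot affect the result. In a real game the leaves would come from a depth-limited search with a heuristic evaluation function, which this sketch leaves out.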