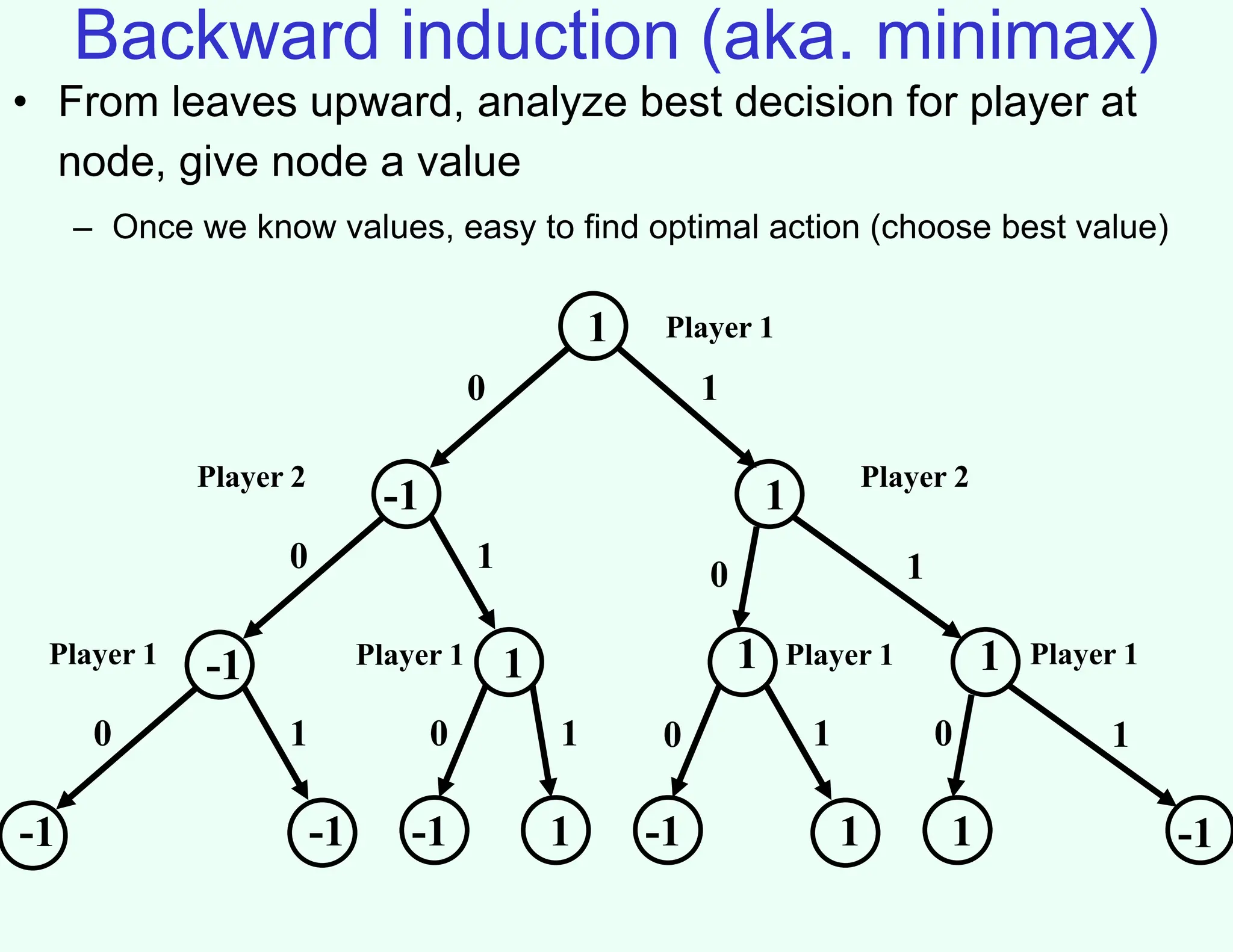

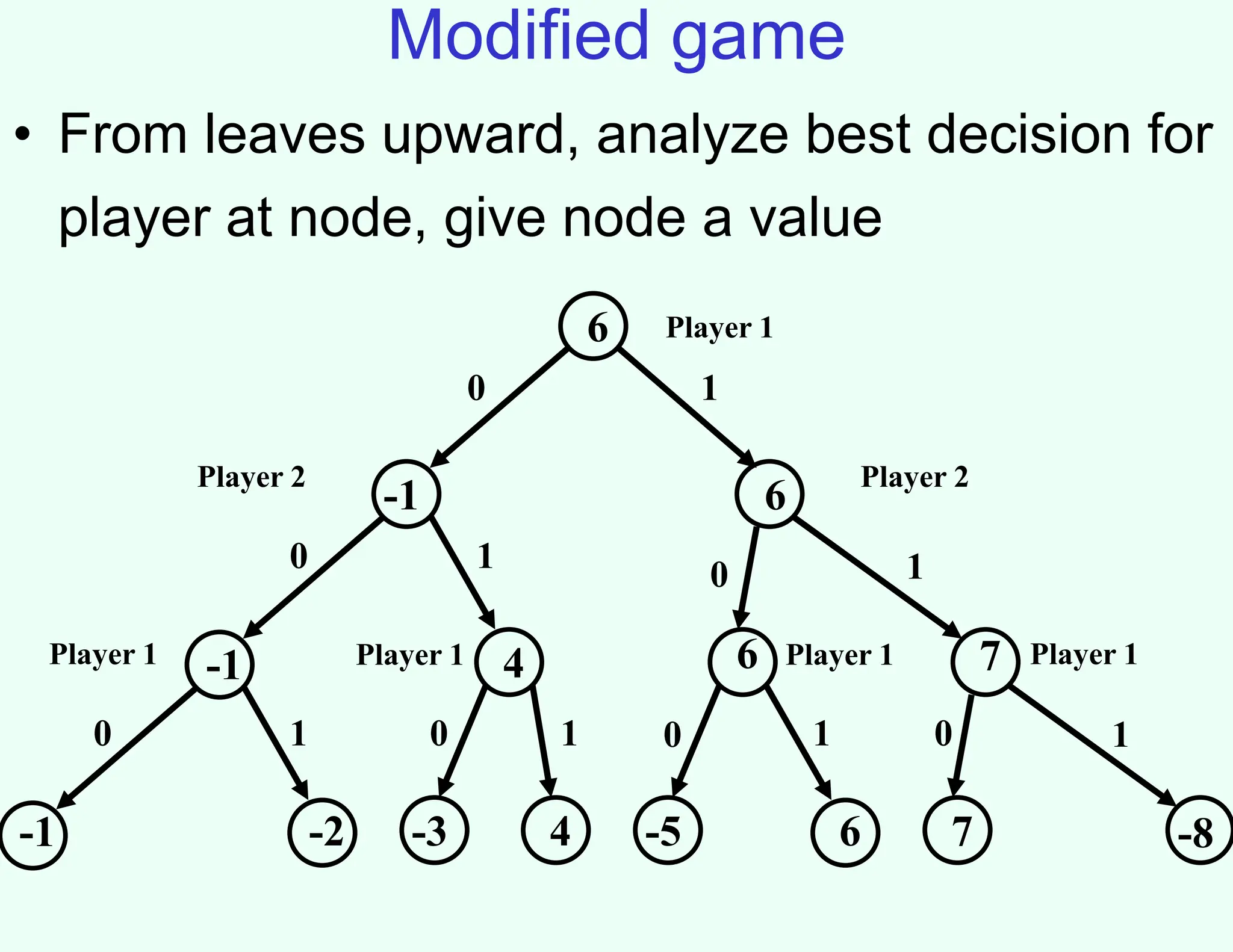

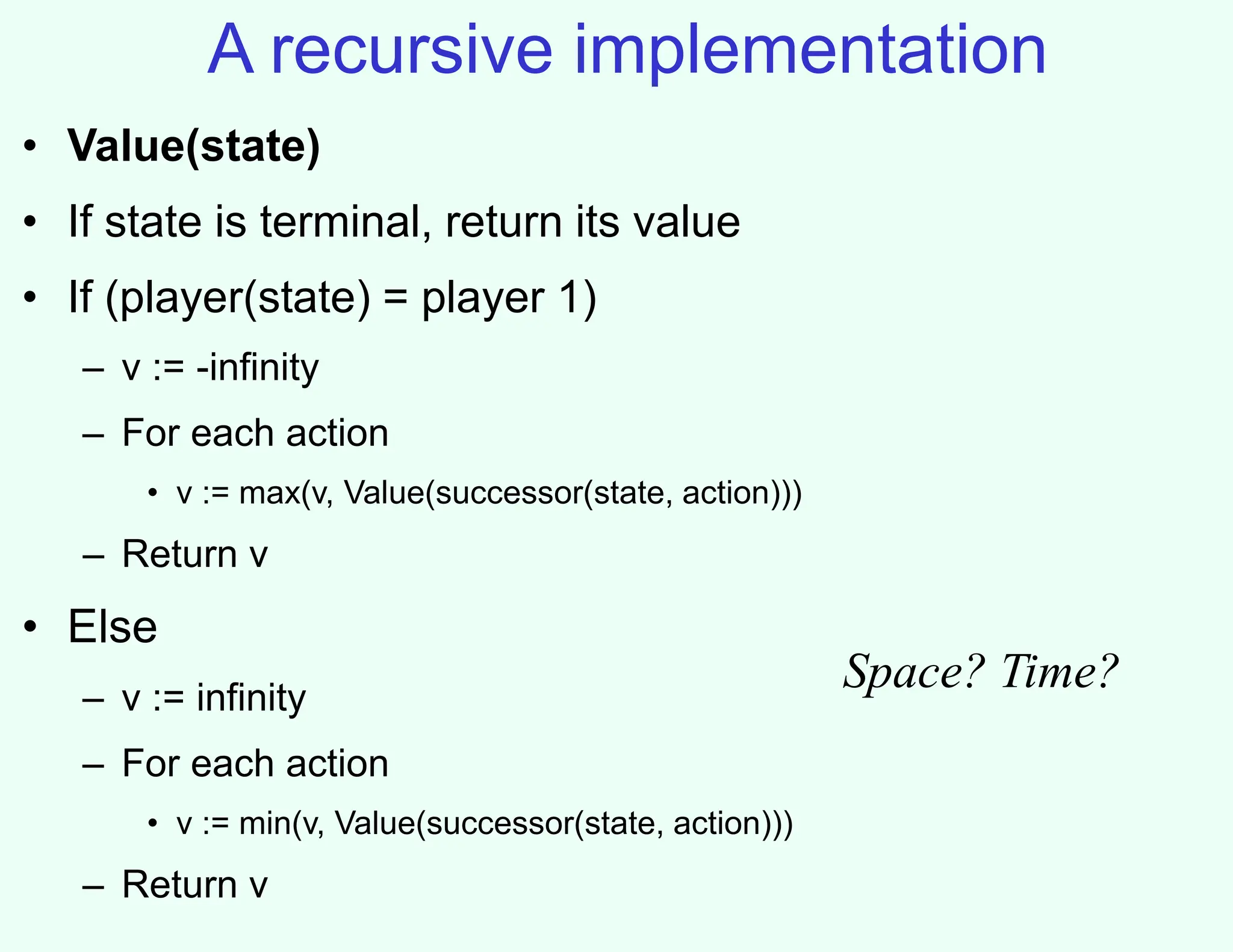

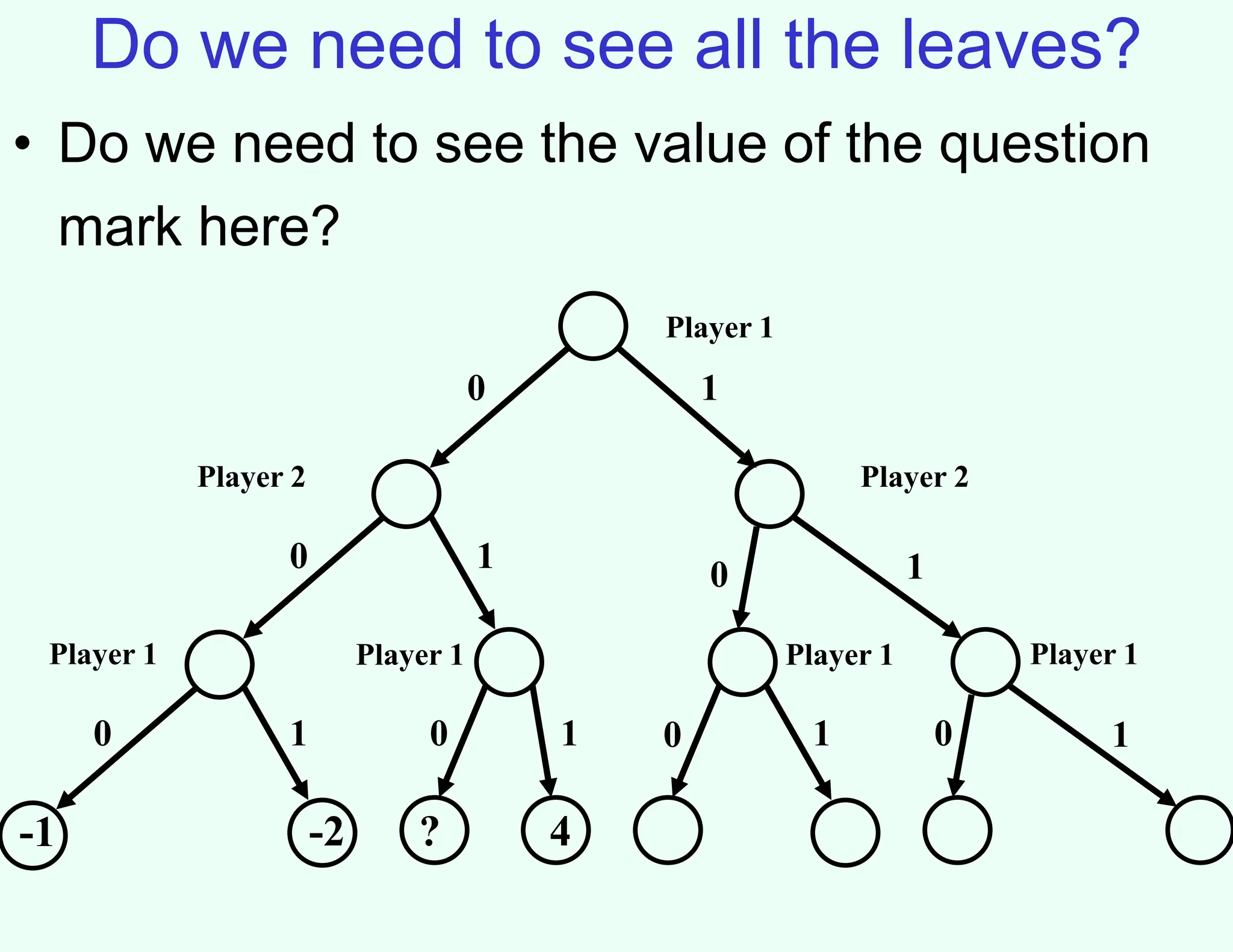

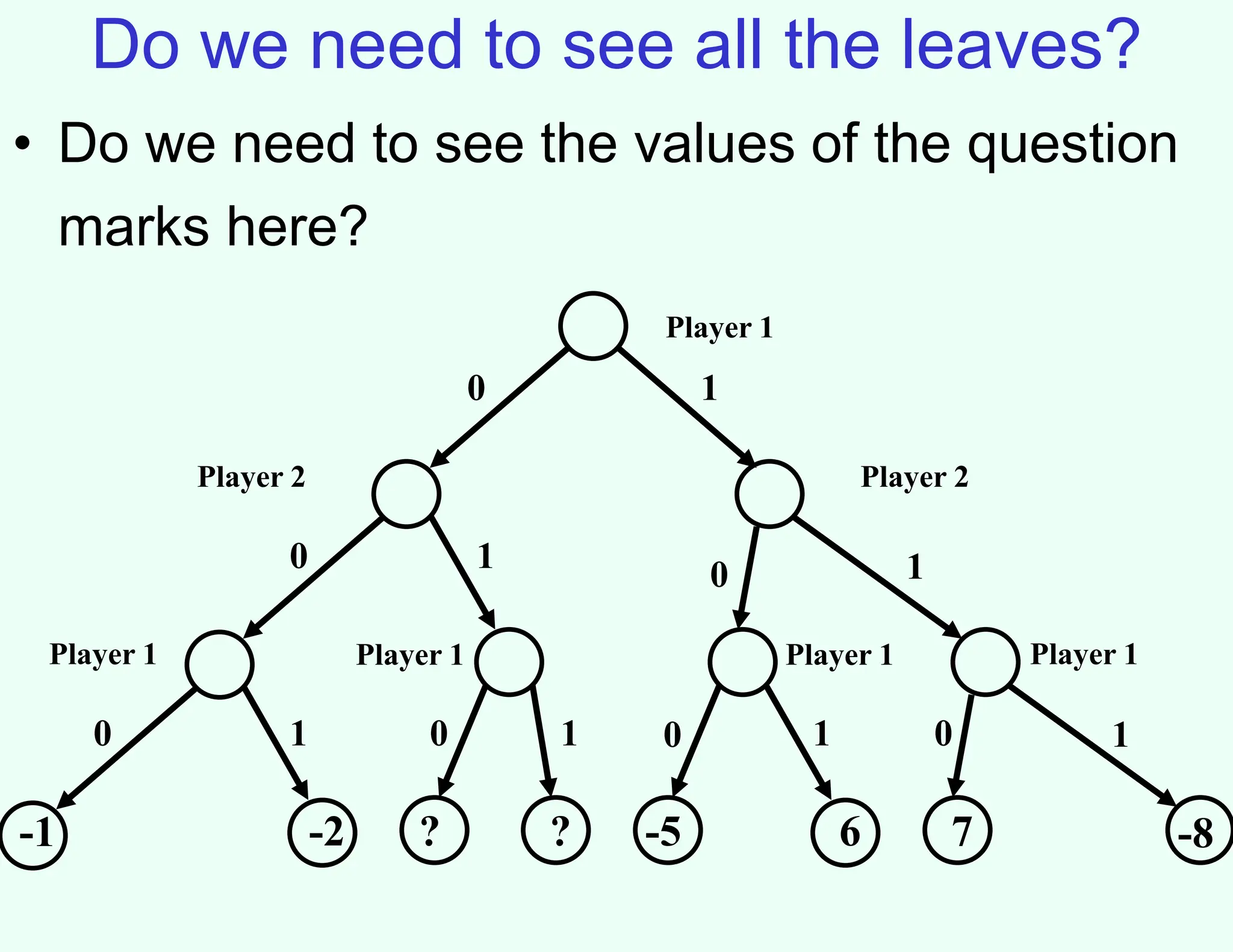

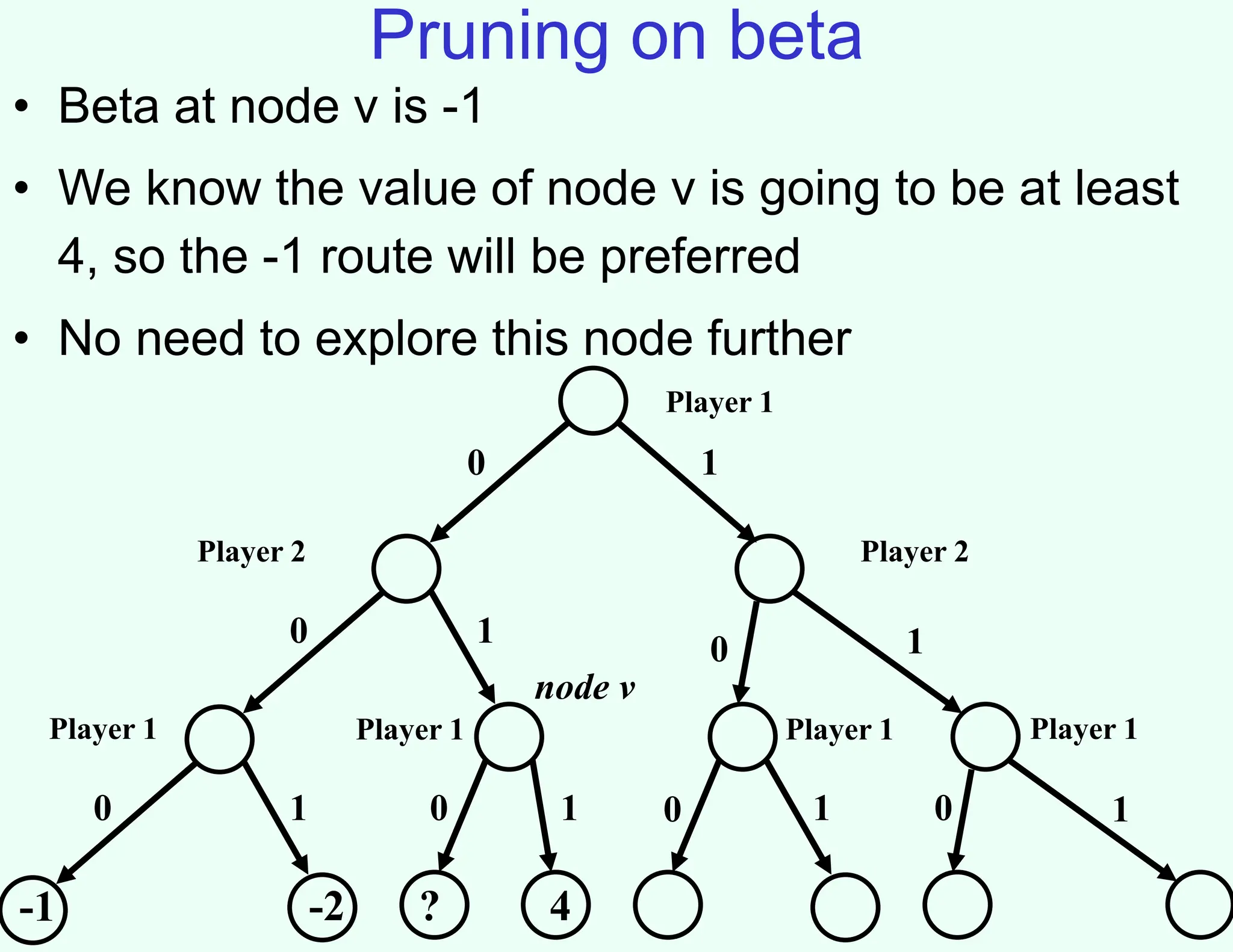

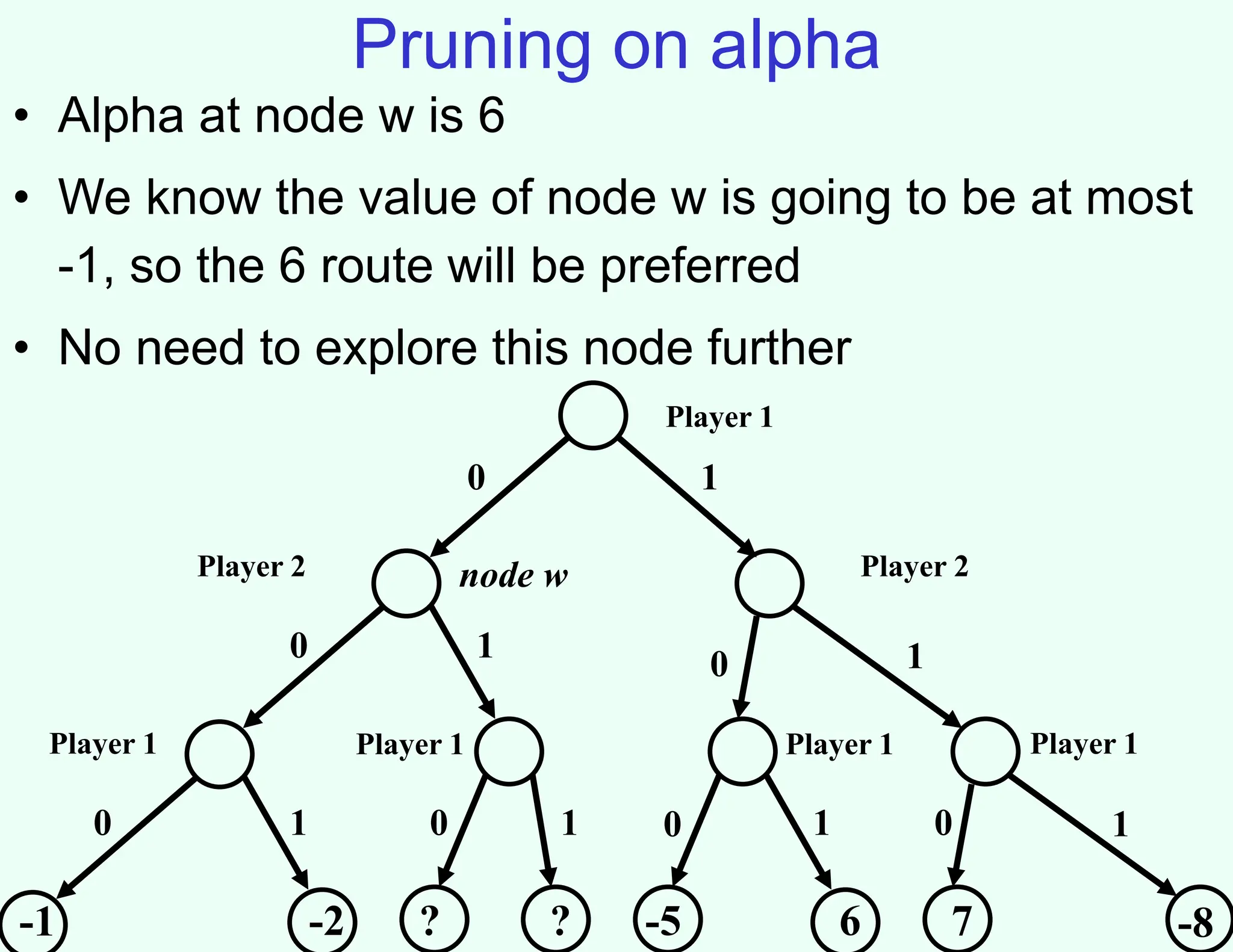

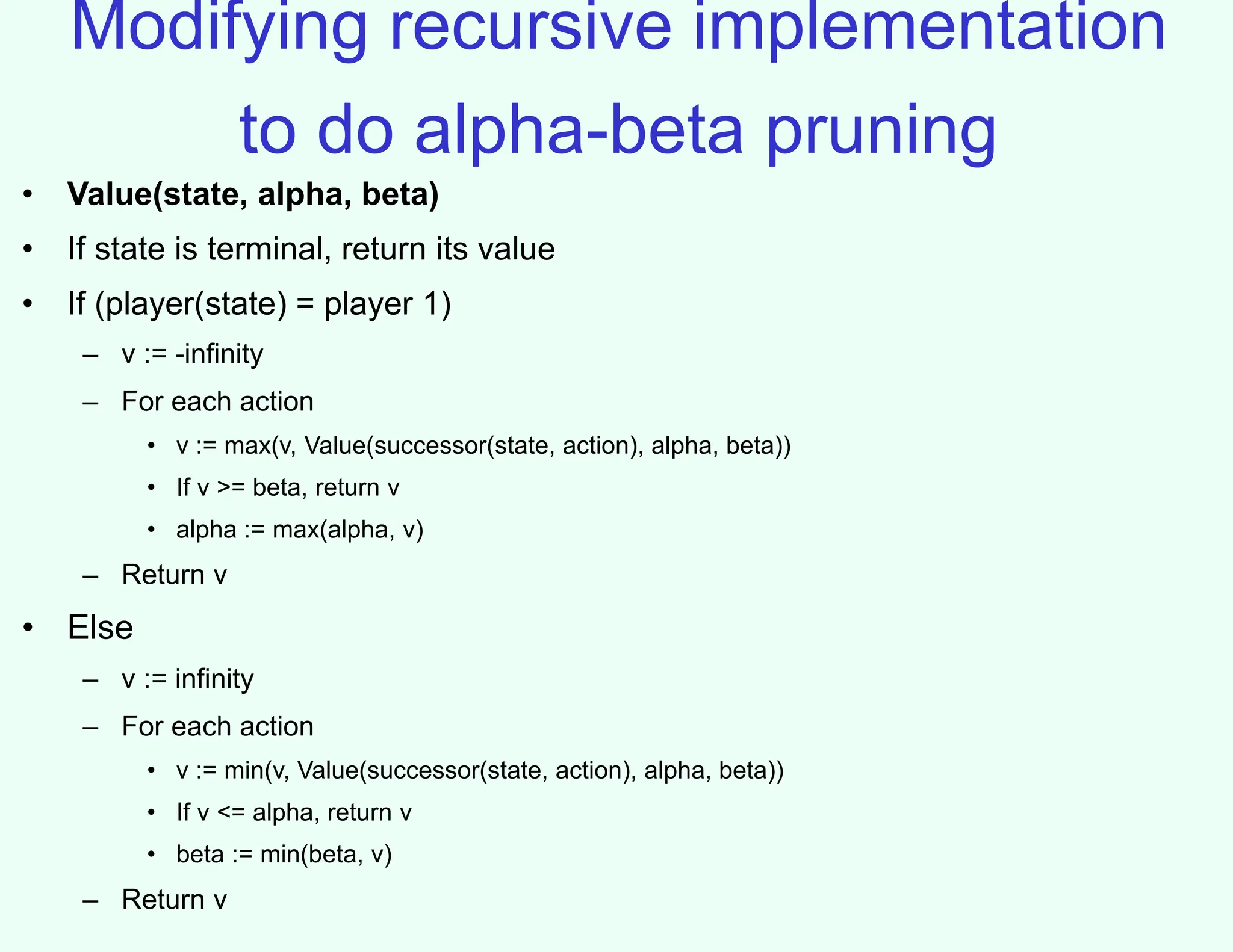

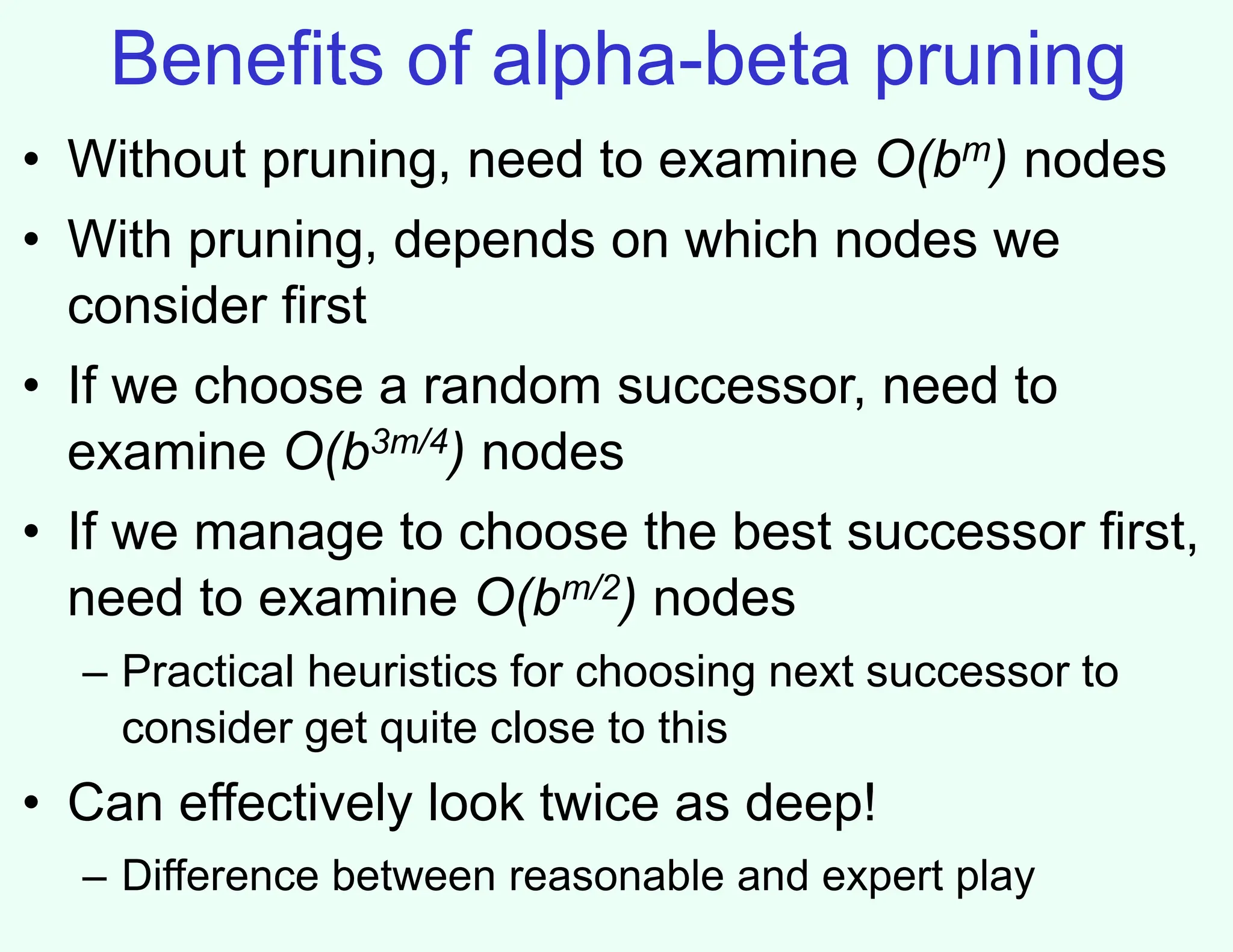

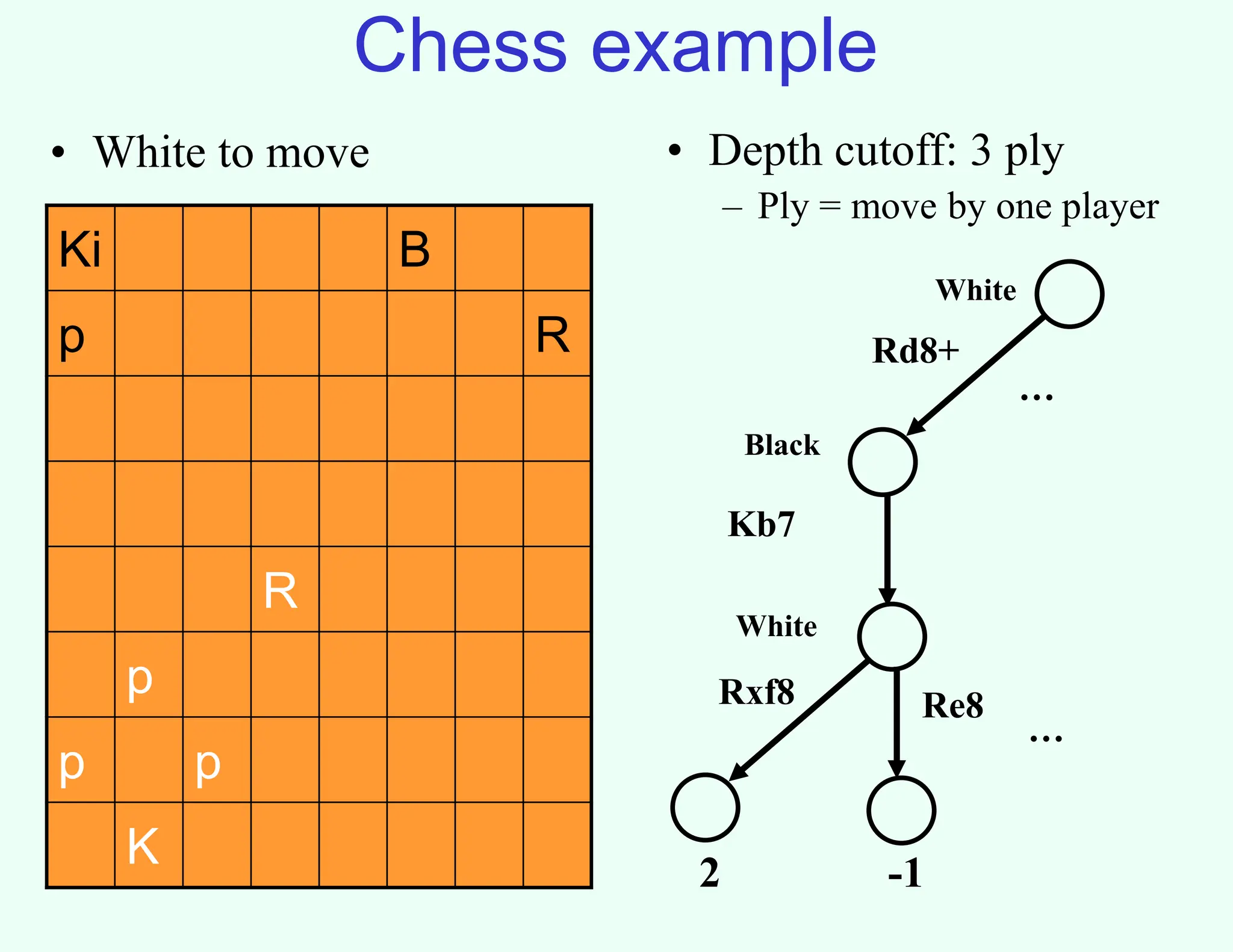

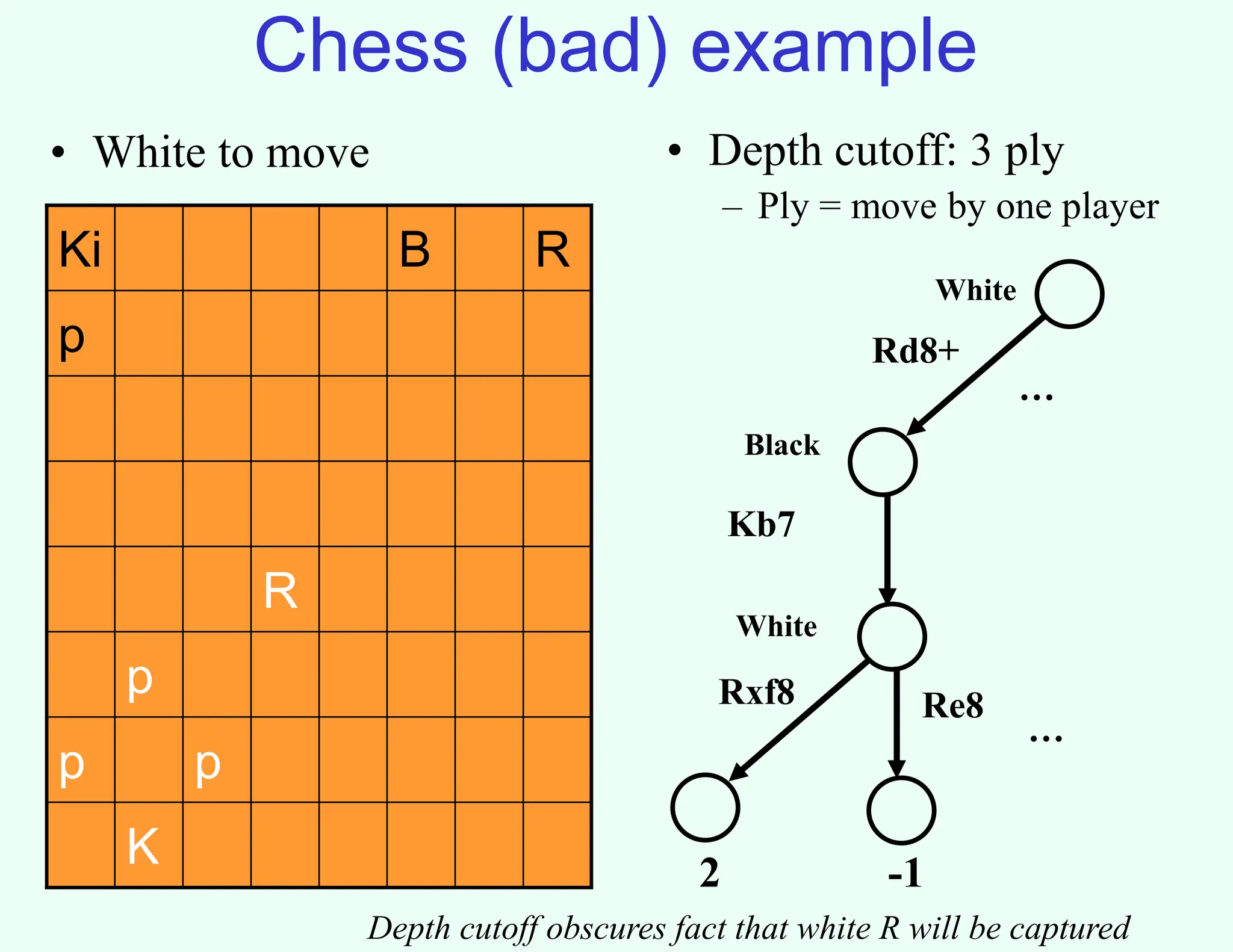

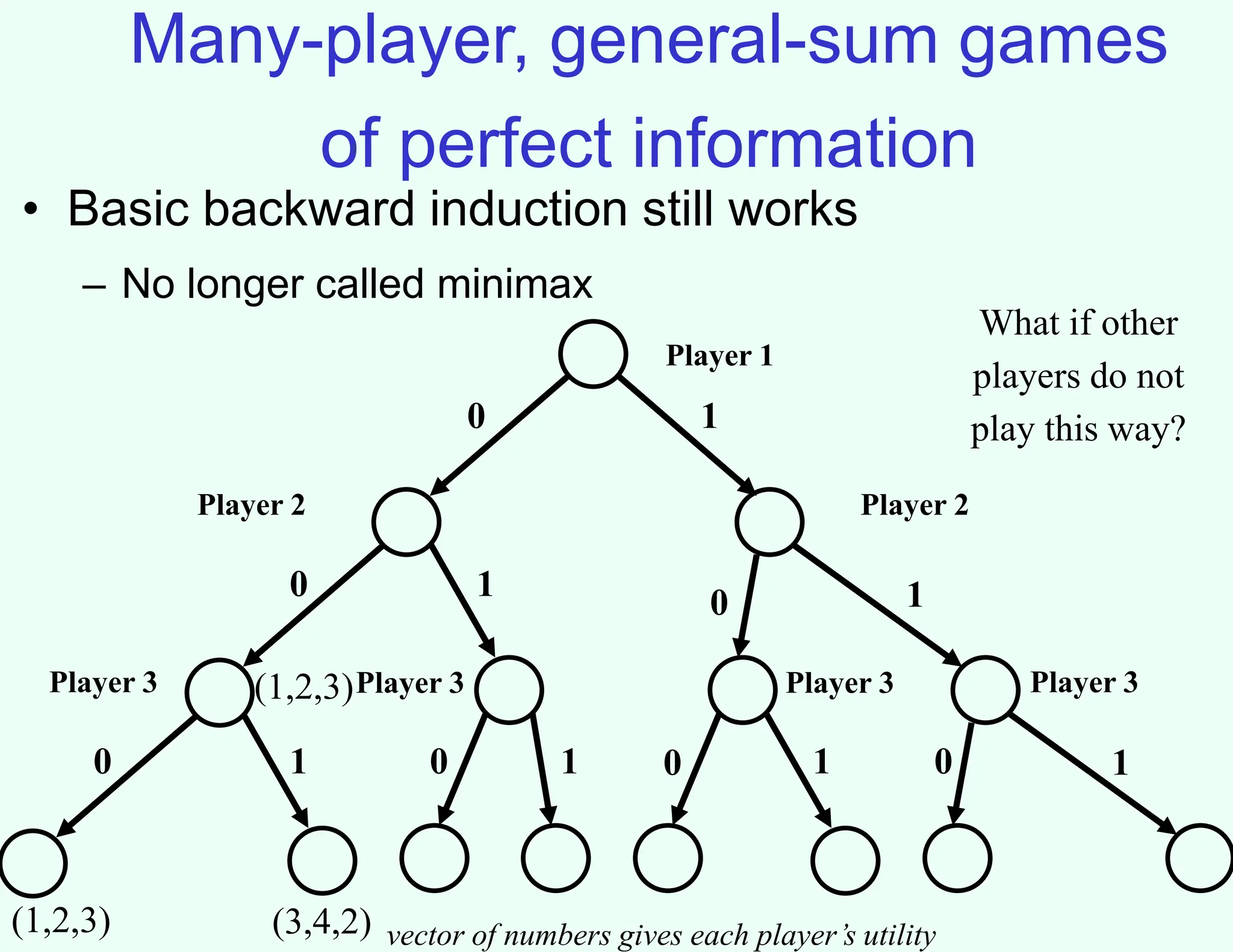

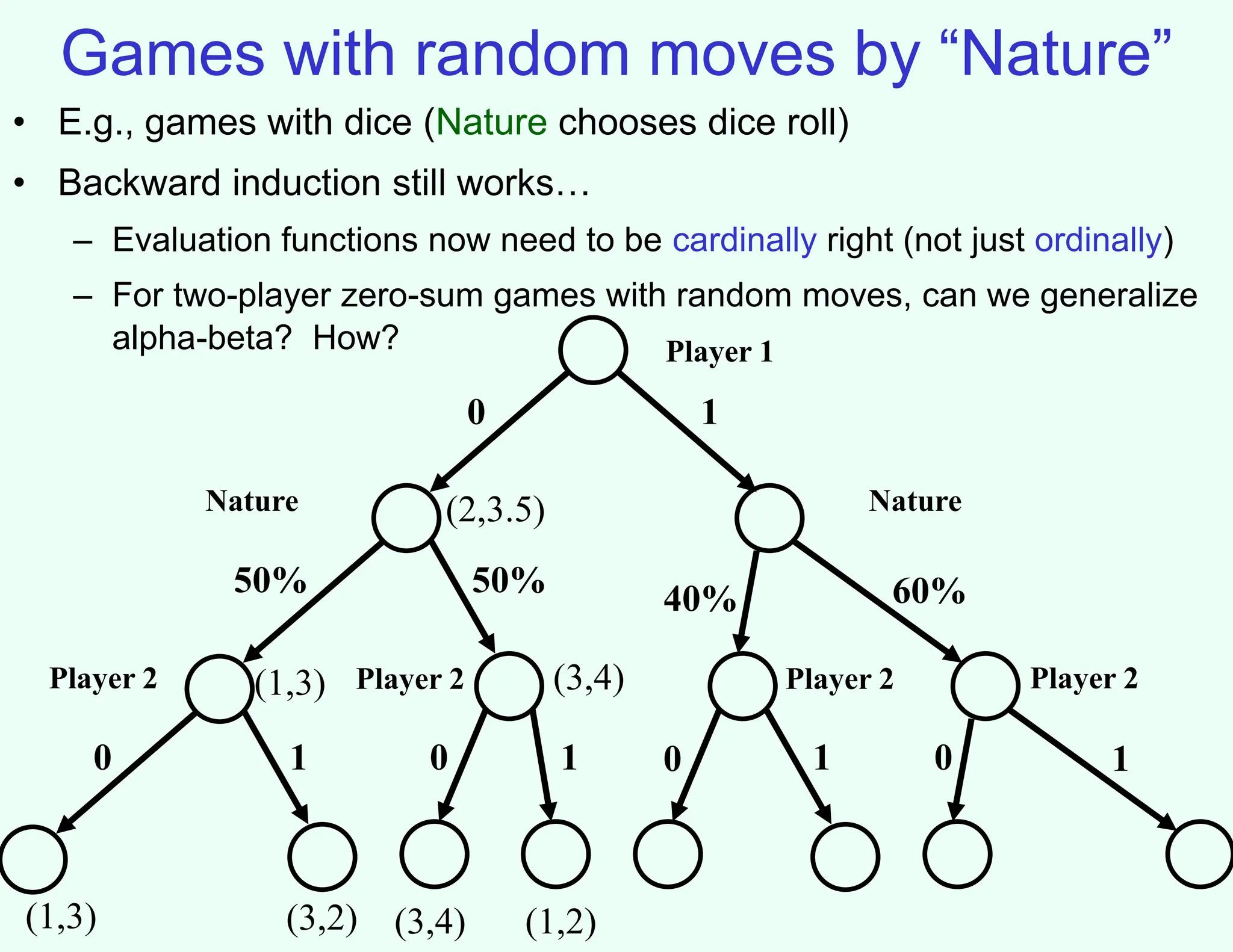

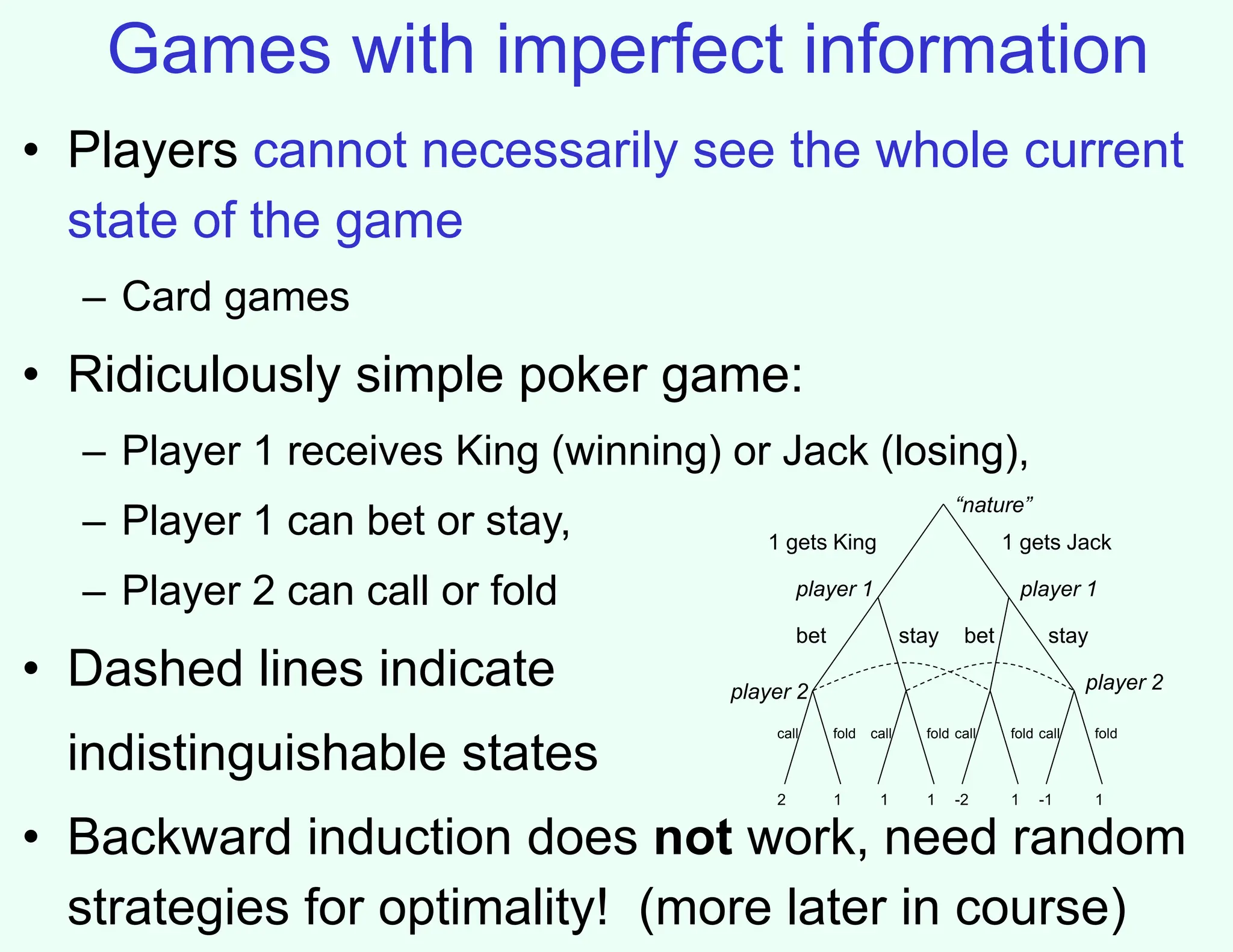

The document covers two-player, zero-sum, perfect-information games in artificial intelligence, focusing on game-playing strategies such as backward induction (minimax) and alpha-beta pruning. It highlights the importance of evaluating game states with heuristics when the game tree is too large to search to the end and the search must stop at a depth cutoff, as in chess, and it notes the role of randomized strategies in games such as poker. It also points out that techniques developed for game playing apply to real-world adversarial settings, such as security scheduling.
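To make the combination of backward induction, alpha-beta pruning, and a depth cutoff concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not the method from the slides: the nested-list tree encoding, the function name `alphabeta`, the `visited` bookkeeping list, and the placeholder `zero_heuristic` are all hypothetical choices made for this example.

```python
import math

def alphabeta(node, depth, alpha, beta, maximizing, evaluate, visited=None):
    """Return the backed-up value of `node` from the maximizing player's view.

    Assumed encoding: a leaf is a number (payoff to the maximizer),
    an internal node is a list of child subtrees.
    """
    if not isinstance(node, list):       # terminal state: exact payoff
        if visited is not None:
            visited.append(node)         # record which leaves were examined
        return node
    if depth == 0:                       # depth cutoff: use the heuristic instead
        return evaluate(node)
    if maximizing:
        value = -math.inf
        for child in node:
            value = max(value, alphabeta(child, depth - 1, alpha, beta,
                                         False, evaluate, visited))
            alpha = max(alpha, value)
            if alpha >= beta:            # the minimizer above would never allow this
                break
        return value
    value = math.inf
    for child in node:
        value = min(value, alphabeta(child, depth - 1, alpha, beta,
                                     True, evaluate, visited))
        beta = min(beta, value)
        if alpha >= beta:                # the maximizer above already has a better option
            break
    return value

# Hypothetical two-ply tree: the maximizer moves first, then the minimizer.
TREE = [[3, 12, 8], [2, 4, 6], [14, 5, 2]]

def zero_heuristic(node):
    # Placeholder evaluation for cut-off positions; a real one would score the state.
    return 0

visited = []
best = alphabeta(TREE, 2, -math.inf, math.inf, True, zero_heuristic, visited)
print(best)     # 3
print(visited)  # [3, 12, 8, 2, 14, 5, 2]
```

In this tree the leaves 4 and 6 are never examined: once the second minimizer node sees the payoff 2, it cannot beat the value 3 that the maximizer already secured from the first branch. Calling the function with `depth=1` stops at the minimizer nodes and returns whatever the heuristic reports for them, which is why the quality of the evaluation function matters when full search is infeasible.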