The document discusses analyzing and improving kernel security. It describes how kernels work and why kernel security is important. It presents methods for analyzing kernel security, such as DIGGER, which can uncover kernel runtime objects without prior knowledge of the kernel's data layout in memory, using KDD to resolve pointer relations (including generic pointers). The document also discusses approaches for improving kernel security, such as protecting generic pointers and using Sentry, which controls access to security-critical kernel data structures through object partitioning. Future work areas include automatically detecting all kernel data structures and expanding Sentry's protections.

![What is a Kernel?

• A computer program that manages input/output requests from software and translates them into data processing instructions for the central processing unit and other electronic components of a computer. [Wikipedia]

• The kernel is a fundamental part of a modern computer's operating system.

• The kernel runs in the innermost, most privileged ring (ring 0), and applications run in an outer ring (ring 3).

Fig: Privilege rings for the x86 available in protected mode

[Source: Wikipedia]

Milan Rajpara

October 8, 2013

3](https://image.slidesharecdn.com/analyzing-20kernel-20security-20and-20approaches-20for-20improving-20it-140121054415-phpapp01/85/Analyzing-Kernel-Security-and-Approaches-for-Improving-it-3-320.jpg)
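The ring model can be made concrete with a small C sketch (not from the slides). On x86, the current privilege level (CPL) is the low two bits of the CS segment selector, and in protected mode a data segment selector may be loaded only if the segment's descriptor privilege level (DPL) is numerically at least max(CPL, RPL). The function names `cpl_of` and `data_access_allowed` are hypothetical, and the check deliberately ignores conforming segments and paging-level protection.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* On x86 the current privilege level (CPL) is held in the low two bits of
   the CS segment selector: 0 is the innermost ring (kernel), 3 the
   outermost (applications). */
static unsigned cpl_of(uint16_t cs_selector)
{
    return cs_selector & 0x3u;
}

/* Protected-mode check when loading a data segment selector: the segment's
   DPL must be numerically >= max(CPL, RPL), so code running in an outer
   ring cannot reach data belonging to an inner ring. */
static bool data_access_allowed(unsigned cpl, unsigned rpl, unsigned dpl)
{
    assert(cpl <= 3 && rpl <= 3 && dpl <= 3);
    unsigned effective = cpl > rpl ? cpl : rpl;   /* the weaker of the two */
    return dpl >= effective;
}
```

For example, a selector such as 0x33 ends in binary 11, so `cpl_of` reports ring 3, and `data_access_allowed(3, 3, 0)` refuses that code access to a ring 0 data segment.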

![Scope of This Talk

• Kernels for general-purpose operating systems

• Some Linux distributions offer a server-optimized kernel

• For example, Ubuntu releases older than 12.04 offered this option. Since 12.04, linux-image-server has been merged into linux-image-generic, so there is no difference between the generic and server kernels. [4]

• For Windows, such details are not disclosed.

• Kernels written in the C language

• Almost all kernels are written in C

• Improvements for monolithic kernels

• All work is performed in a virtual environment

• Xen and VMware are used

](https://image.slidesharecdn.com/analyzing-20kernel-20security-20and-20approaches-20for-20improving-20it-140121054415-phpapp01/85/Analyzing-Kernel-Security-and-Approaches-for-Improving-it-5-320.jpg)

![To Find Critical Objects

3. DIGGER [1]

• Uncovers all system runtime objects without any prior knowledge of the OS kernel's data layout in memory.

• First, it works offline to construct a type-graph, which enables systematic traversal of object details in memory.

• Then, it uses the 4-byte pool memory tagging scheme to uncover kernel runtime objects in the kernel address space.

• Advantages (+):

  • Accurate results

  • Low performance overhead

  • Fast and nearly complete coverage

](https://image.slidesharecdn.com/analyzing-20kernel-20security-20and-20approaches-20for-20improving-20it-140121054415-phpapp01/85/Analyzing-Kernel-Security-and-Approaches-for-Improving-it-12-320.jpg)
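DIGGER's second step can be pictured as a scan of kernel memory for pool headers carrying a given 4-byte tag. The C sketch below is a simplified illustration, not DIGGER's implementation: it assumes a hypothetical header layout (8-byte aligned headers with the tag at offset 4, loosely modelled on 32-bit Windows pool headers; real layouts vary by version and architecture), and the tags "Proc" and "Thre" are used only as examples. `scan_pool_tags` is a hypothetical name.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical simplified pool header: 8 bytes, 8-byte aligned, with the
   4-byte tag stored at offset 4. */
#define HDR_ALIGN   8u
#define TAG_OFFSET  4u
#define TAG_LEN     4u

/* Count aligned headers in `mem` whose tag equals `tag`, recording the
   offset of each match in `hits` (at most `max_hits` are stored). */
static size_t scan_pool_tags(const uint8_t *mem, size_t len, const char tag[TAG_LEN],
                             size_t *hits, size_t max_hits)
{
    size_t found = 0;
    assert(mem != NULL || len == 0);
    for (size_t off = 0; off + TAG_OFFSET + TAG_LEN <= len; off += HDR_ALIGN) {
        if (memcmp(mem + off + TAG_OFFSET, tag, TAG_LEN) == 0) {
            if (found < max_hits)
                hits[found] = off;   /* header start, not the tag position */
            found++;
        }
    }
    return found;
}
```

Only aligned positions are checked, so a tag-like byte sequence that appears at an arbitrary offset (for example inside object data) is not mistaken for a header; this mirrors, in a crude way, why a fixed header layout makes tag scanning practical.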

![DIGGER & KDD

• DIGGER uses KDD (Kernel Data Disambiguator) to precisely model the direct and indirect relations between data structures.

• KDD is a static analysis tool that operates offline on an OS kernel’s source code.

• It generates a type-graph of the kernel data that models the data structures and the direct and indirect relations between them. [2]

• KDD disambiguates pointer-based relations (including generic pointers) by performing static points-to analysis on the kernel’s source code.

• Points-to analysis is the problem of statically determining the set of locations to which a given pointer variable may point at runtime.

](https://image.slidesharecdn.com/analyzing-20kernel-20security-20and-20approaches-20for-20improving-20it-140121054415-phpapp01/85/Analyzing-Kernel-Security-and-Approaches-for-Improving-it-13-320.jpg)
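To make points-to analysis concrete, here is a minimal inclusion-based (Andersen-style) analysis in C. The slides do not say which algorithm KDD implements, so this textbook formulation is a stand-in; the four statement forms, the 32-variable limit, and all names are assumptions for illustration.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Variables are numbered 0..MAX_VARS-1; each points-to set is a bitmask
   where bit j means "may point to variable j". */
#define MAX_VARS 32

enum stmt_kind { ADDR, COPY, LOAD, STORE };   /* p = &q, p = q, p = *q, *p = q */

struct stmt { enum stmt_kind kind; unsigned p, q; };

static void points_to(const struct stmt *s, size_t n, uint32_t pts[MAX_VARS])
{
    for (unsigned v = 0; v < MAX_VARS; v++)
        pts[v] = 0;

    bool changed = true;
    while (changed) {                 /* iterate until a fixed point is reached */
        changed = false;
        for (size_t i = 0; i < n; i++) {
            unsigned p = s[i].p, q = s[i].q;
            assert(p < MAX_VARS && q < MAX_VARS);
            uint32_t before, add;
            switch (s[i].kind) {
            case ADDR:                /* p = &q: q is in pts(p) */
                before = pts[p]; pts[p] |= 1u << q; changed |= pts[p] != before;
                break;
            case COPY:                /* p = q: pts(q) is a subset of pts(p) */
                before = pts[p]; pts[p] |= pts[q]; changed |= pts[p] != before;
                break;
            case LOAD:                /* p = *q: pts(t) flows into pts(p) for each t in pts(q) */
                add = 0;
                for (unsigned t = 0; t < MAX_VARS; t++)
                    if (pts[q] & (1u << t)) add |= pts[t];
                before = pts[p]; pts[p] |= add; changed |= pts[p] != before;
                break;
            case STORE:               /* *p = q: pts(q) flows into pts(t) for each t in pts(p) */
                for (unsigned t = 0; t < MAX_VARS; t++)
                    if (pts[p] & (1u << t)) {
                        before = pts[t]; pts[t] |= pts[q]; changed |= pts[t] != before;
                    }
                break;
            }
        }
    }
}
```

For the program `p = &a; q = &b; r = p; *r = q; x = *p;` (numbering p, q, a, b, r, x as 0 to 5), the solver infers that `p` and `r` point to `a`, and that `a` and `x` point to `b`. Because the sets only ever grow, the result over-approximates the runtime targets, which is the property the soundness definition on the next slide relies on.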

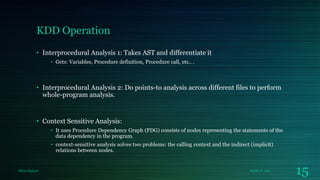

![KDD Operation

Source: Ref [2]

AST: Abstract Syntax Tree (a high-level intermediate representation of the source code)

](https://image.slidesharecdn.com/analyzing-20kernel-20security-20and-20approaches-20for-20improving-20it-140121054415-phpapp01/85/Analyzing-Kernel-Security-and-Approaches-for-Improving-it-14-320.jpg)
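The type-graph is what lets DIGGER traverse memory systematically: once the layout of each structure is known, every pointer field can be followed to the next object. The following C sketch of such a traversal assumes a hypothetical type-graph format (struct types with pointer fields at byte offsets), a simulated memory buffer in which pointers are 32-bit offsets with 0 meaning NULL, and a known root object; it is an illustration, not DIGGER's code.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical type-graph: each node is a struct type; each edge is a
   pointer field at a byte offset that points to another type. */
#define MAX_FIELDS 4
#define MAX_OBJS   16

struct ptr_field { size_t offset; int target_type; };
struct type_node { const char *name; size_t nfields; struct ptr_field fields[MAX_FIELDS]; };
struct found_obj { uint32_t addr; int type; };

/* Breadth-first traversal from a known root, guided only by the type-graph.
   Returns the number of distinct objects discovered (at most MAX_OBJS). */
static size_t traverse(const uint8_t *mem, size_t mem_len, const struct type_node *types,
                       uint32_t root, int root_type, struct found_obj out[MAX_OBJS])
{
    size_t head = 0, n = 0;
    out[n++] = (struct found_obj){ root, root_type };
    while (head < n) {
        struct found_obj cur = out[head++];
        const struct type_node *t = &types[cur.type];
        for (size_t f = 0; f < t->nfields; f++) {
            uint32_t next;
            size_t at = cur.addr + t->fields[f].offset;
            assert(at + sizeof next <= mem_len);
            memcpy(&next, mem + at, sizeof next);   /* read the pointer value */
            if (next == 0)
                continue;                            /* NULL: nothing to follow */
            int seen = 0;
            for (size_t i = 0; i < n; i++)
                if (out[i].addr == next) { seen = 1; break; }
            if (!seen && n < MAX_OBJS)
                out[n++] = (struct found_obj){ next, t->fields[f].target_type };
        }
    }
    return n;
}
```

Skipping addresses that were already recorded keeps the walk finite on cyclic structures (such as circular linked lists) and reports shared targets only once.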

![Soundness and Precision of KDD

• A points-to analysis algorithm is sound if the points-to set for each variable contains all of its actual runtime targets, and imprecise if the inferred set is larger than necessary.

• KDD was evaluated on C programs from the SPEC2000 and SPEC2006 benchmark suites.

• It achieved a high level of precision and 100% soundness.

• It also achieved 96% precision on the Windows (WRK*, Vista) and Linux (v3.0.22) kernels. [2]

*WRK: Windows Research Kernel, the only Windows kernel source code available to researchers [6]

](https://image.slidesharecdn.com/analyzing-20kernel-20security-20and-20approaches-20for-20improving-20it-140121054415-phpapp01/85/Analyzing-Kernel-Security-and-Approaches-for-Improving-it-16-320.jpg)
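The two definitions can be checked mechanically by comparing inferred points-to sets with targets observed at runtime. In this C sketch, sets are bitmasks; `is_sound` follows the soundness definition on the slide, while `precision` is a hypothetical metric (the share of inferred targets that are real) and is not necessarily how the 96% figure in [2] was computed.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Points-to sets as bitmasks (bit j = "may point to location j").
   inferred[v] comes from the static analysis, actual[v] from runtime observation. */

/* Sound: every actual runtime target of every variable is in its inferred set. */
static bool is_sound(const uint32_t *inferred, const uint32_t *actual, size_t nvars)
{
    for (size_t v = 0; v < nvars; v++)
        if ((actual[v] & ~inferred[v]) != 0)
            return false;
    return true;
}

static unsigned popcount32(uint32_t x)
{
    unsigned c = 0;
    for (; x; x &= x - 1)   /* clear the lowest set bit each iteration */
        c++;
    return c;
}

/* Hypothetical precision metric: the fraction of inferred targets that are
   real. 1.0 means no spurious targets; lower values mean less precision. */
static double precision(const uint32_t *inferred, const uint32_t *actual, size_t nvars)
{
    unsigned inferred_total = 0, correct = 0;
    for (size_t v = 0; v < nvars; v++) {
        inferred_total += popcount32(inferred[v]);
        correct += popcount32(inferred[v] & actual[v]);
    }
    assert(inferred_total > 0);
    return (double)correct / inferred_total;
}
```

For example, inferring {0, 1} for a variable whose only runtime target is 0 is sound but imprecise; together with a second, exact set {2}, `precision` returns 2/3.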

![DIGGER Approach

Source: Ref [1]

](https://image.slidesharecdn.com/analyzing-20kernel-20security-20and-20approaches-20for-20improving-20it-140121054415-phpapp01/85/Analyzing-Kernel-Security-and-Approaches-for-Improving-it-17-320.jpg)

![Protection of the Kernel

• Protect the generic pointers.

• Microsoft added a feature, PatchGuard, that blocks kernel-mode drivers from altering sensitive parts of the Windows kernel.

• However, the TDL rootkit manages to circumvent this protection by altering a machine's MBR (Master Boot Record) so that it can intercept Windows startup routines. [7]

• One approach is to use “object partitioning” to protect kernel data structures. [3]

• This approach uses Sentry, which creates access control protections for security-critical kernel data.

](https://image.slidesharecdn.com/analyzing-20kernel-20security-20and-20approaches-20for-20improving-20it-140121054415-phpapp01/85/Analyzing-Kernel-Security-and-Approaches-for-Improving-it-20-320.jpg)
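One way to picture object partitioning (a simplification assumed here) is to move the security-critical members of a structure onto a separate page that stays read-only, while ordinary members remain in normal memory, so only a sanctioned code path can change them. In this user-space C sketch `mprotect` merely stands in for Sentry's own enforcement mechanism; it assumes a POSIX system (Linux or macOS), and the `task`/`task_critical` layout and the function names are hypothetical.

```c
#define _DEFAULT_SOURCE   /* exposes MAP_ANONYMOUS on glibc */
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <sys/mman.h>
#include <unistd.h>

/* Hypothetical partitioned object: the security-critical members live on
   their own page, which is kept read-only; ordinary members stay inline. */
struct task_critical { unsigned uid; unsigned euid; };

struct task {
    int priority;                  /* non-critical: freely writable       */
    struct task_critical *crit;    /* critical: on a write-protected page */
};

static size_t page_size(void) { return (size_t)sysconf(_SC_PAGESIZE); }

static bool task_init(struct task *t, unsigned uid)
{
    void *p = mmap(NULL, page_size(), PROT_READ | PROT_WRITE,
                   MAP_PRIVATE | MAP_ANONYMOUS, -1, 0);
    if (p == MAP_FAILED)
        return false;
    t->priority = 0;
    t->crit = p;
    t->crit->uid = t->crit->euid = uid;
    return mprotect(p, page_size(), PROT_READ) == 0;   /* lock the page */
}

/* The sanctioned write path: unprotect, update, re-protect. A stray direct
   store such as t->crit->euid = 0 elsewhere would fault (SIGSEGV on Linux). */
static bool task_set_euid(struct task *t, unsigned euid)
{
    assert(t->crit != NULL);
    if (mprotect(t->crit, page_size(), PROT_READ | PROT_WRITE) != 0)
        return false;
    t->crit->euid = euid;
    return mprotect(t->crit, page_size(), PROT_READ) == 0;
}
```

Writes to `priority` cost nothing extra, and only updates to the partitioned members pay for a protection change. This is the intuition behind partitioning objects rather than protecting whole structures.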

![Sentry Architecture

• Sentry protects critical data and enforces data access restrictions based on the origin of the access within the code of the kernel and its modules or drivers. [3]

• The data integrity model is straightforward and matches that of the Biba ring policy. [9]

• For example, malicious code that modifies privileges by writing directly to memory resides in a loaded module, not in the core kernel code, so Sentry prevents the write.

](https://image.slidesharecdn.com/analyzing-20kernel-20security-20and-20approaches-20for-20improving-20it-140121054415-phpapp01/85/Analyzing-Kernel-Security-and-Approaches-for-Improving-it-21-320.jpg)
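The access decision can be sketched as a small C function. The origin of a write (the address of the writing instruction) determines the subject's integrity level, and the Biba ring policy allows reads from anywhere but forbids writing up to more trusted data. The core-kernel address range, the two-level integrity scale, and the function names are assumptions for illustration; how Sentry actually obtains the origin of an access is not modelled.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Integrity levels: a higher value means more trusted. */
enum integrity { LOW = 0, HIGH = 1 };

/* Hypothetical address range of the core kernel's code, [start, end).
   Code outside it (loaded modules and drivers) is treated as low integrity. */
struct code_region { uintptr_t start, end; };

static enum integrity origin_level(uintptr_t ip, struct code_region core)
{
    assert(core.start < core.end);
    return (ip >= core.start && ip < core.end) ? HIGH : LOW;
}

/* Biba ring policy: a subject may read any object, but may write only
   objects whose integrity level does not exceed its own (no write up). */
static bool access_allowed(uintptr_t ip, struct code_region core,
                           enum integrity object_level, bool is_write)
{
    if (!is_write)
        return true;
    return origin_level(ip, core) >= object_level;
}
```

A write to HIGH-integrity data from inside the core kernel range is allowed, while the same write from a module address is denied; reads succeed in both cases. This matches the privilege-modifying module example on the slide.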

![References

[1] Amani S. Ibrahim, James Hamlyn-Harris, John Grundy, Mohamed Almorsy, "Identifying OS Kernel Objects for Run-Time Security Analysis", DOI: 10.1007/978-3-642-34601-9_6

[2] Amani S. Ibrahim, John Grundy, James Hamlyn-Harris, Mohamed Almorsy, "Operating System Kernel Data Disambiguation to Support Security Analysis", DOI: 10.1007/978-3-642-34601-9_20

[3] Abhinav Srivastava, Jonathon Giffin, "Efficient Protection of Kernel Data Structures via Object Partitioning", DOI: 10.1145/2420950.2421012

[4] "RFC: Linux kernel merging", Ubuntu kernel-team mailing list: https://lists.ubuntu.com/archives/kernel-team/2011-October/017471.html

[5] Symantec, rootkit details: http://www.symantec.com/avcenter/reference/windows.rootkit.overview.pdf

[6] Windows Research Kernel: https://www.facultyresourcecenter.com/curriculum/pfv.aspx?ID=7366&c1=enus&c2=0

[7] TDL Rootkit: http://www.theregister.co.uk/2010/11/16/tdl_rootkit_does_64_bit_windows

[8] Windows hooks: http://msdn.microsoft.com/en-us/library/ms644959(v=vs.85).aspx

[9] K. J. Biba. Integrity considerations for secure computer systems. Technical Report MTR-3153, Mitre, Apr. 1977

](https://image.slidesharecdn.com/analyzing-20kernel-20security-20and-20approaches-20for-20improving-20it-140121054415-phpapp01/85/Analyzing-Kernel-Security-and-Approaches-for-Improving-it-26-320.jpg)