This document provides a comprehensive overview of Java build tools, focusing on Maven, Gradle, and SBT. It details the features, advantages, and usage of each tool, including project setup, dependency management, and plugin application. The content includes examples and commands for creating and managing Java projects effectively using these tools.

![Goals and Plugins

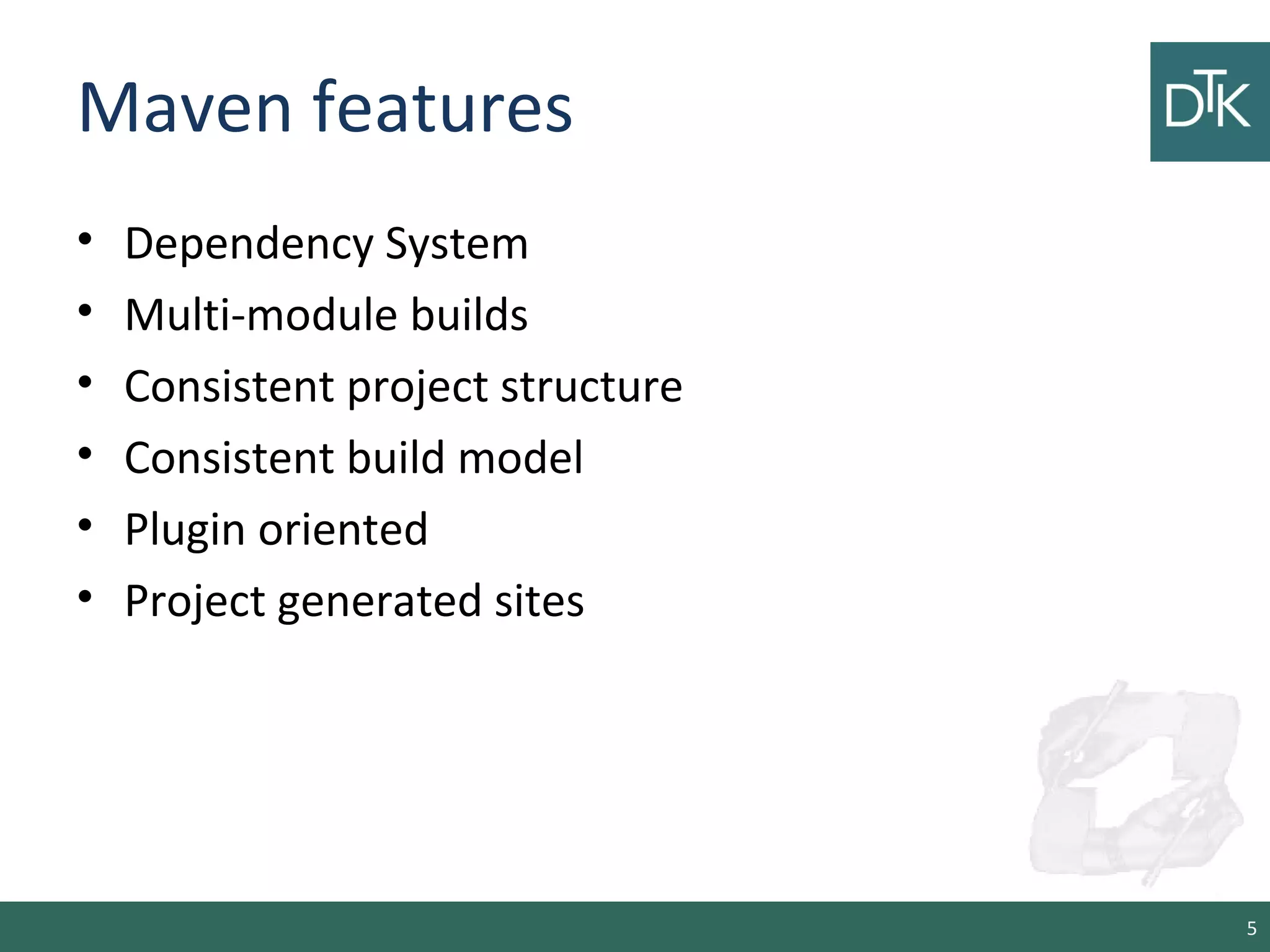

• Are an extension of the standard maven lifecycles

steps.

– Clean, compile, test, package, install and deploy

• There are plugin to do other steps such as

12

<plugins>

<plugin>

<artifactId>maven-assembly-plugin</artifactId>

<version>2.5.3</version>

<configuration>

<descriptorRefs>

<descriptorRef>jar-with-dependencies</descriptorRef>

</descriptorRefs>

</configuration>

[...]

</plugin> </plugins>](https://image.slidesharecdn.com/3-150108024444-conversion-gate01/75/An-introduction-to-maven-gradle-and-sbt-12-2048.jpg)

![Referencing files & file

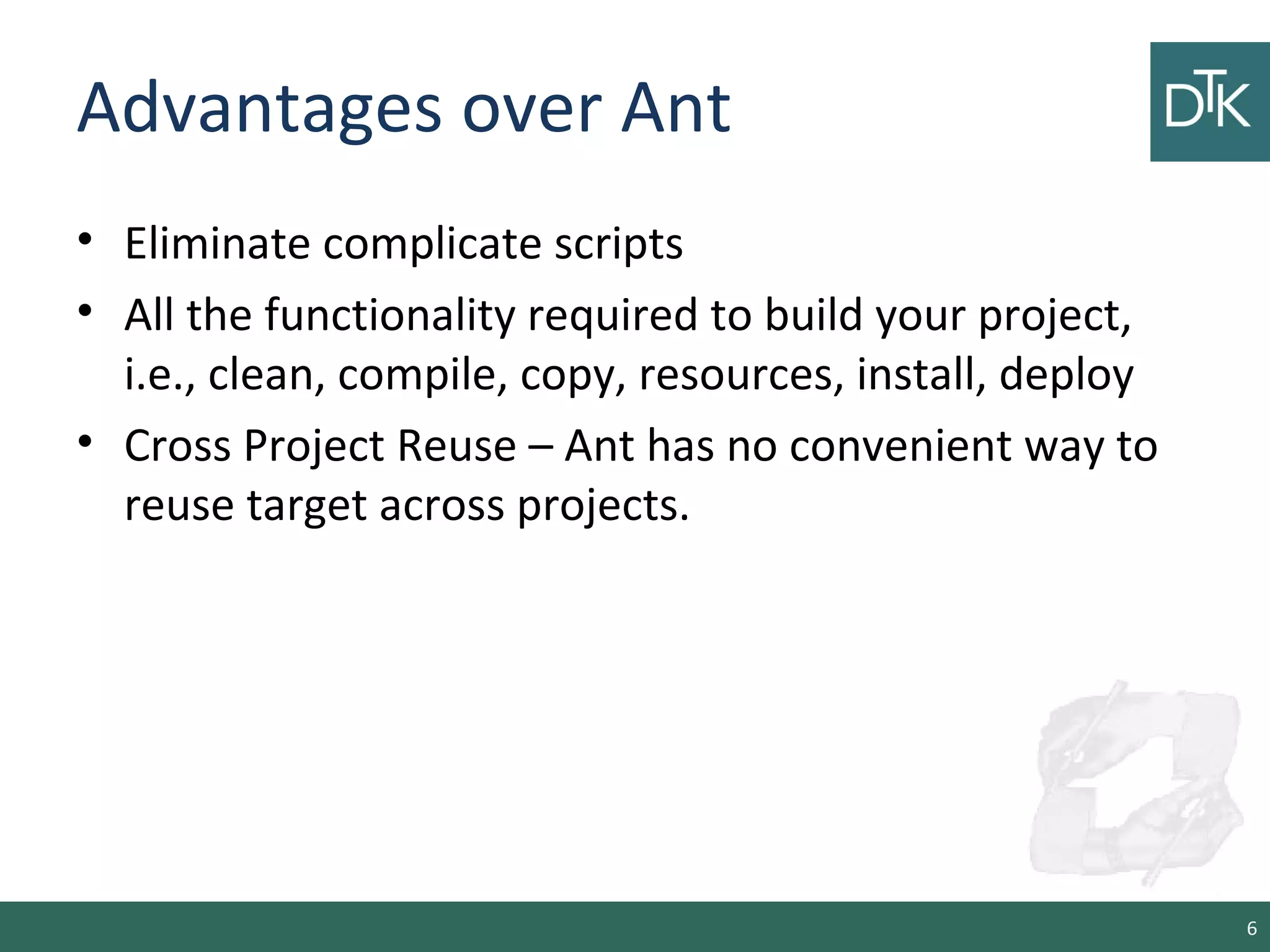

collections• Groovy-like syntax:

File configFile = file('src/config.xml')

• Create a file collection from a bunch of files:

FileCollection collection = files( 'src/file1.txt',

new File('src/file2.txt'), ['src/file3.txt', 'src/file4.txt'])

• Create a files collection by referencing project

properties:

collection = files { srcDir.listFiles() }

• Operation on collections

def union = collection + files('src/file4.txt')

def different = collection - files('src/file3.txt')}

21](https://image.slidesharecdn.com/3-150108024444-conversion-gate01/75/An-introduction-to-maven-gradle-and-sbt-21-2048.jpg)

![Repository configuration

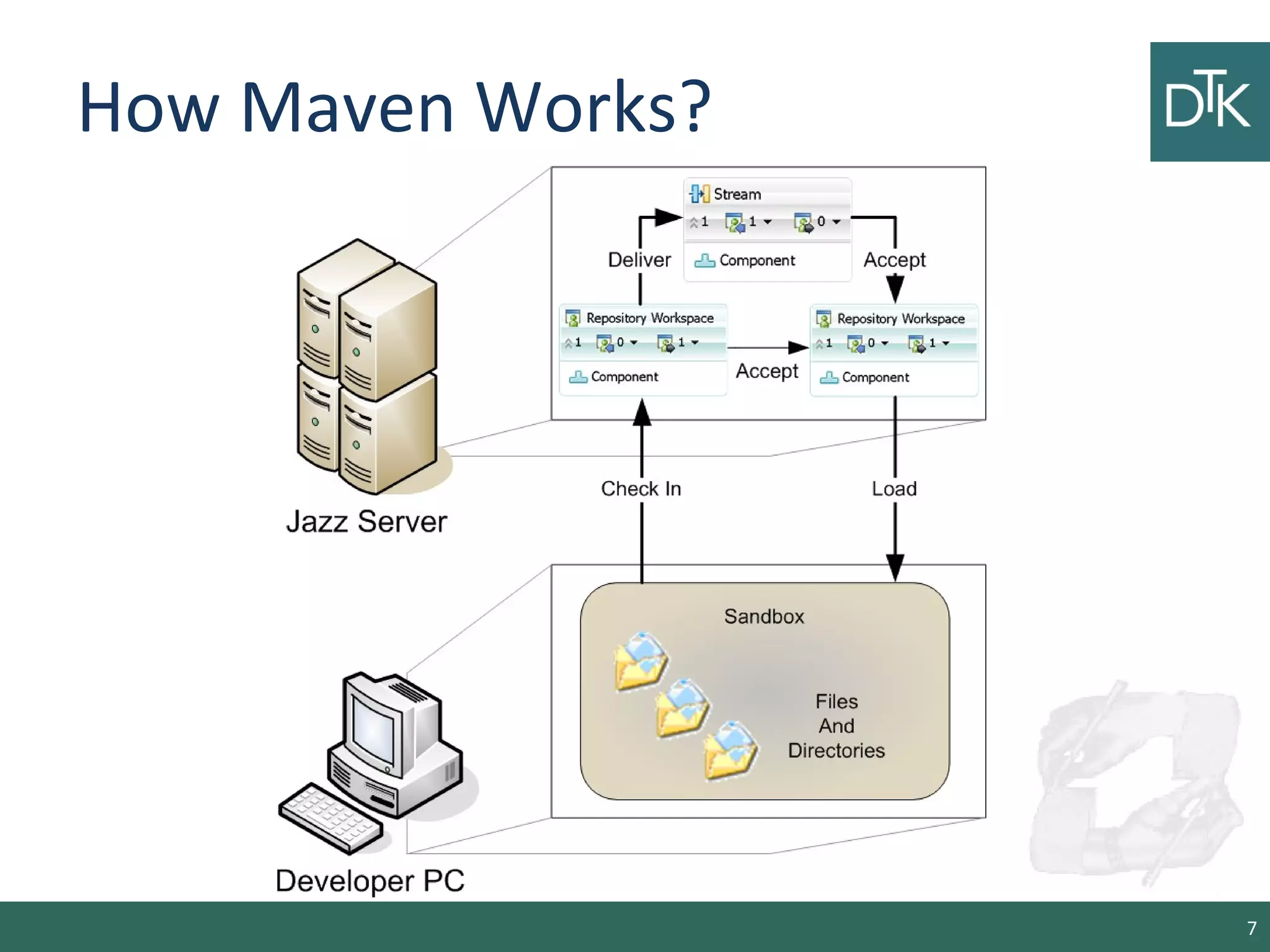

• Remote Repos

repositories {

mavenCentral()

mavenCentral name: 'multi-jar-repos', urls:

["http://repo.mycompany.com/jars1",

"http://repo.mycompany.com/jars1"]

}

• Local Repos

repositories {

flatDir name: 'localRepository',

dirs: 'lib' flatDir dirs: ['lib1', 'lib2']

} 25](https://image.slidesharecdn.com/3-150108024444-conversion-gate01/75/An-introduction-to-maven-gradle-and-sbt-25-2048.jpg)

![Referencing dependencies

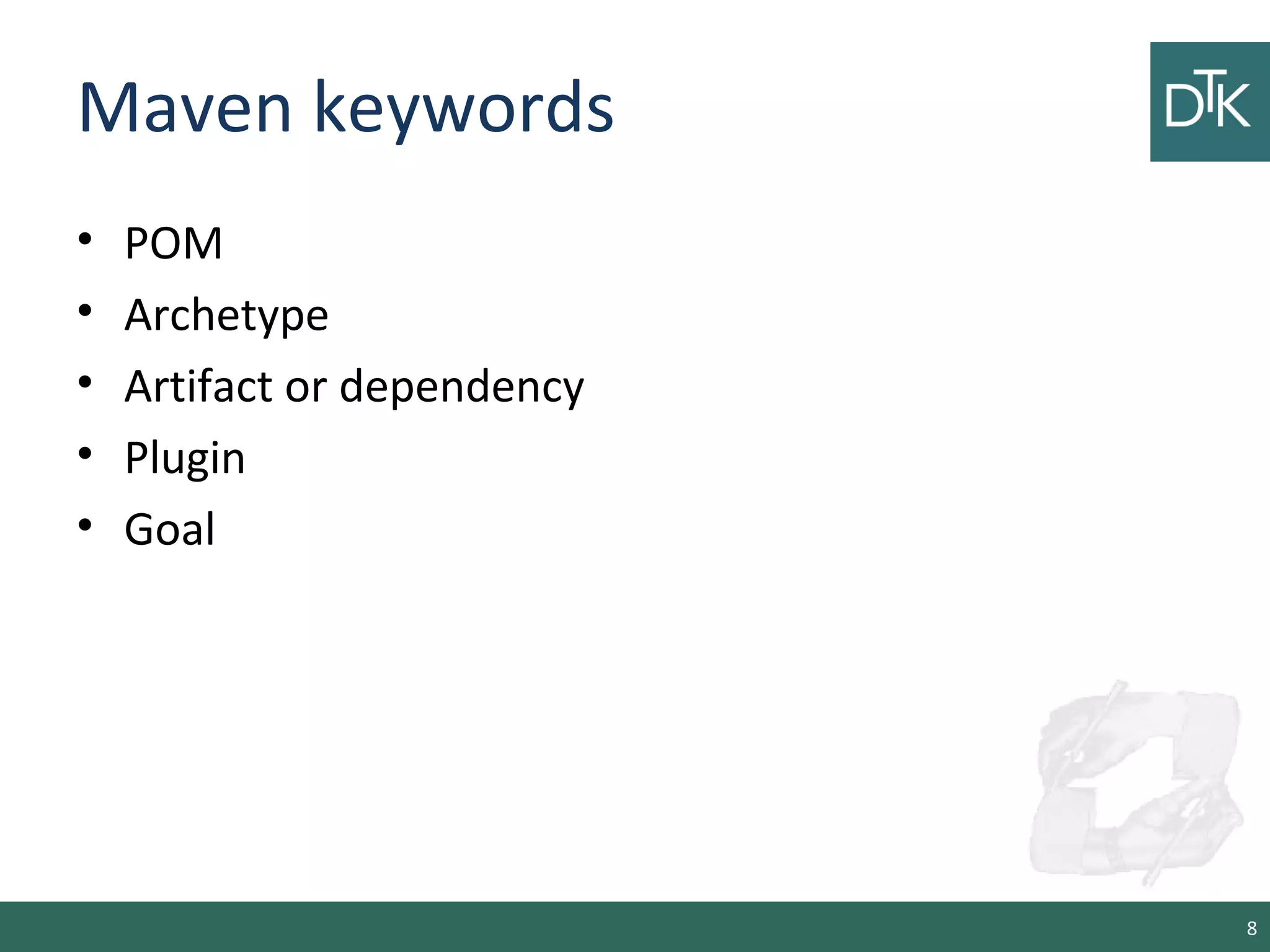

dependencies {

runtime files('libs/a.jar', 'libs/b.jar’)

runtime fileTree(dir: 'libs', includes: ['*.jar'])

}

dependencies {

compile 'org.springframework:spring-webmvc:3.0.0.RELEASE'

testCompile 'org.springframework:spring-test:3.0.0.RELEASE'

testCompile 'junit:junit:4.7'

}

26](https://image.slidesharecdn.com/3-150108024444-conversion-gate01/75/An-introduction-to-maven-gradle-and-sbt-26-2048.jpg)

![Referencing dependencies

List groovy = ["org.codehaus.groovy:groovy-all:1.5.4@jar",

"commons-cli:commons-cli:1.0@jar",

"org.apache.ant:ant:1.7.0@jar"]

List hibernate = ['org.hibernate:hibernate:3.0.5@jar',

'somegroup:someorg:1.0@jar']

dependencies {

runtime groovy, hibernate

}

27](https://image.slidesharecdn.com/3-150108024444-conversion-gate01/75/An-introduction-to-maven-gradle-and-sbt-27-2048.jpg)

![SettingKey, TaskKey and InputKey

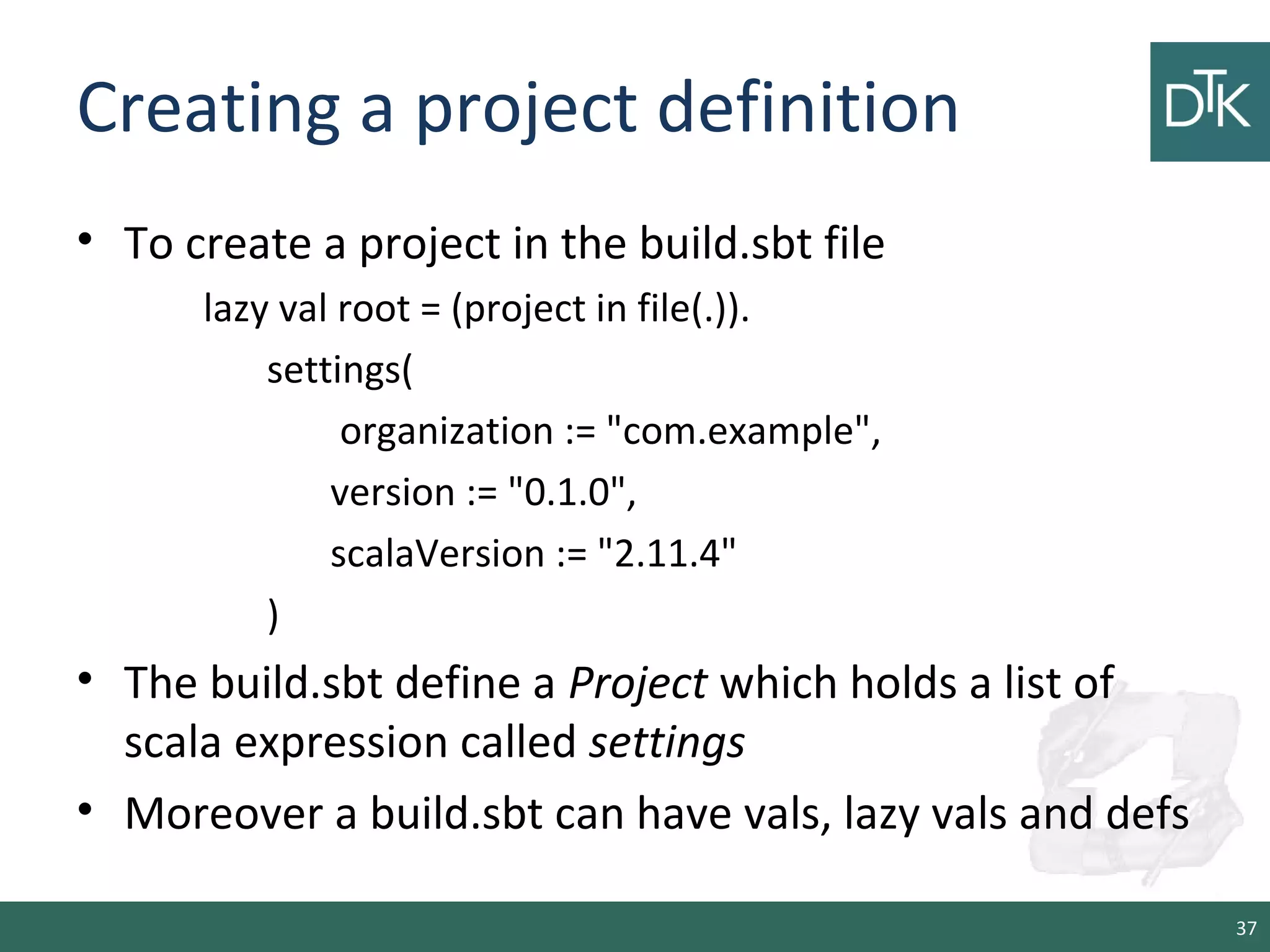

lazy val root = (project in file(".")).

settings(

name.:=("hello")

)

•.:= is a method that takes a parameter and return s

Setting[String]

lazy val root = (project in file(".")).

settings(

name := 42 // will not compile

)

•Does it run?

38](https://image.slidesharecdn.com/3-150108024444-conversion-gate01/75/An-introduction-to-maven-gradle-and-sbt-38-2048.jpg)

![Types of key

• SettingKey[T]: a key for a value computed once (the

value is computed when loading the project, and

kept around).

• TaskKey[T]: a key for a value, called a task, that has

to be recomputed each time, potentially with side

effects.

• InputKey[T]: a key for a task that has command line

arguments as input. Check out Input Tasks for more

details.

• The built-in key are field of the object Keys

(http://www.scala-

sbt.org/0.13/sxr/sbt/Keys.scala.html) 39](https://image.slidesharecdn.com/3-150108024444-conversion-gate01/75/An-introduction-to-maven-gradle-and-sbt-39-2048.jpg)

• A TaskKey[T] can be used to define task such as

compile or package.

40](https://image.slidesharecdn.com/3-150108024444-conversion-gate01/75/An-introduction-to-maven-gradle-and-sbt-40-2048.jpg)

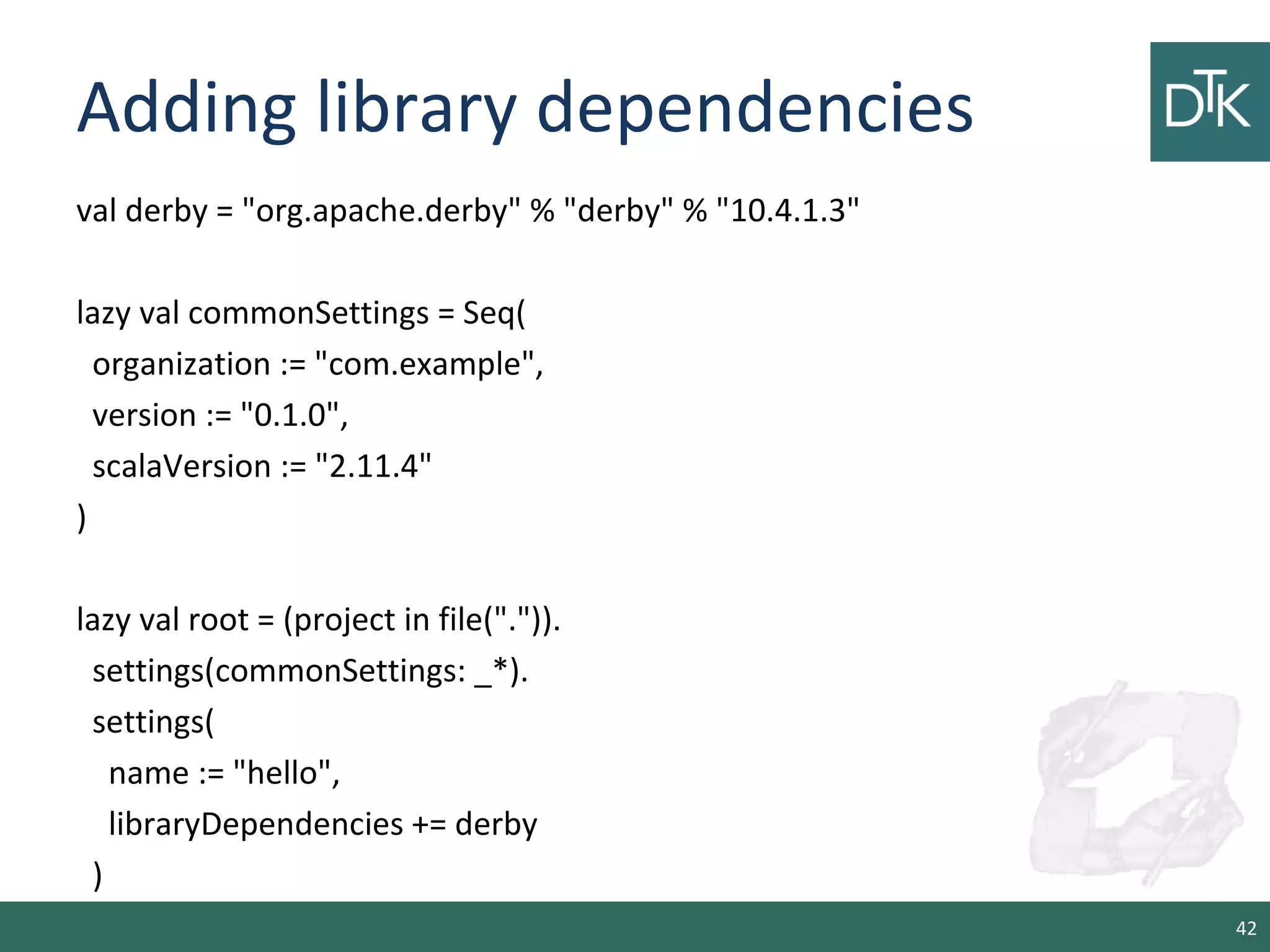

lazy val root = (project in file(".")).

settings(

hello := { println("Hello!") }

)

• Imports in build.sbt

import sbt._

import Process._

import Keys._

41](https://image.slidesharecdn.com/3-150108024444-conversion-gate01/75/An-introduction-to-maven-gradle-and-sbt-41-2048.jpg)