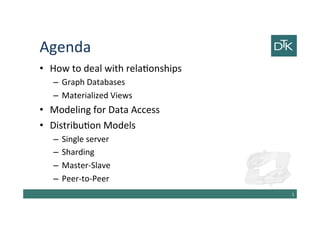

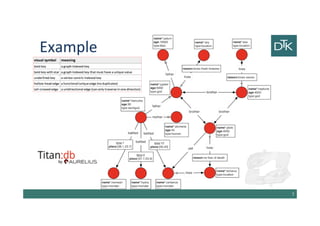

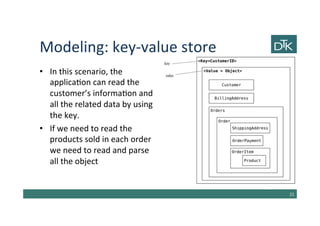

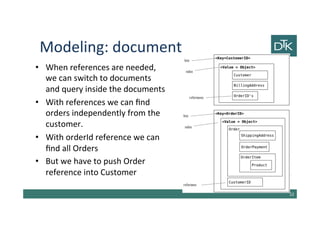

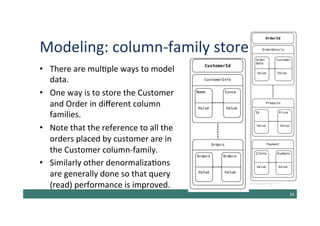

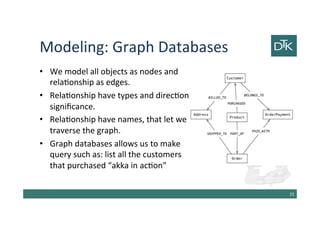

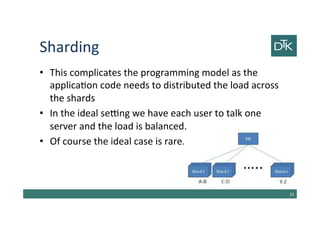

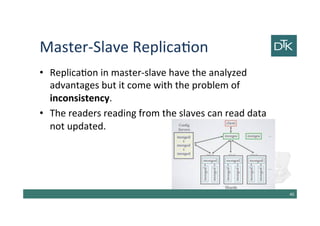

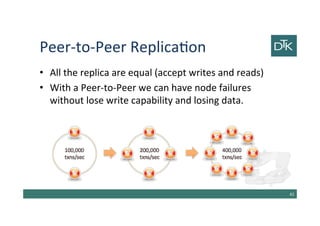

The document discusses data modeling methods, focusing on how database systems handle relationships and data distribution. It covers graph databases, materialized views, and distribution models such as sharding and master-slave replication, along with the benefits and challenges of each. Throughout, it stresses optimizing data access, efficient querying, and resilience in distributed systems.
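To make the two distribution models named above concrete, here is a minimal in-memory sketch that combines them: keys are spread across shards by a stable hash, and each shard has one master that takes writes and slaves that serve reads. All names here (`shard_for`, `ReplicatedShard`, `ShardedStore`) are hypothetical and not taken from the document. The hash-modulo placement and the synchronous copy to every slave are simplifying assumptions, not a description of any particular database.

```python
import hashlib
from itertools import cycle


def shard_for(key: str, num_shards: int) -> int:
    """Map a key to a shard index with a stable hash (assumed placement scheme)."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


class ReplicatedShard:
    """One shard: a master that accepts writes and slaves that serve reads."""

    def __init__(self, num_slaves: int = 2) -> None:
        if num_slaves < 1:
            raise ValueError("need at least one slave to serve reads")
        self.master = {}
        self.slaves = [{} for _ in range(num_slaves)]
        # Rotate reads across slaves (simple round-robin).
        self._next_slave = cycle(range(num_slaves))

    def write(self, key: str, value: str) -> None:
        # All writes go to the master first.
        self.master[key] = value
        # Assumption: replication is synchronous here; real systems often
        # replicate asynchronously, so slaves can briefly serve stale data.
        for slave in self.slaves:
            slave[key] = value

    def read(self, key: str):
        # Reads are served by a slave, never by the master.
        return self.slaves[next(self._next_slave)].get(key)


class ShardedStore:
    """Routes each key to exactly one replicated shard."""

    def __init__(self, num_shards: int = 4, num_slaves: int = 2) -> None:
        self.shards = [ReplicatedShard(num_slaves) for _ in range(num_shards)]

    def _shard(self, key: str) -> ReplicatedShard:
        return self.shards[shard_for(key, len(self.shards))]

    def put(self, key: str, value: str) -> None:
        self._shard(key).write(key, value)

    def get(self, key: str):
        return self._shard(key).read(key)


if __name__ == "__main__":
    store = ShardedStore(num_shards=4, num_slaves=2)
    store.put("user:1", "Ada")
    print(store.get("user:1"))
```

In this sketch sharding spreads both data and load across nodes, while replication adds read capacity and a copy to fall back on within each shard. A plain hash-modulo scheme remaps most keys when the shard count changes, which is one reason real deployments often use other placement strategies.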