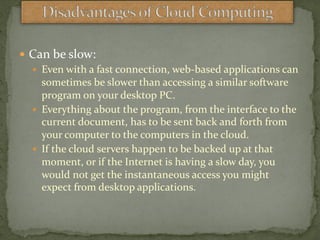

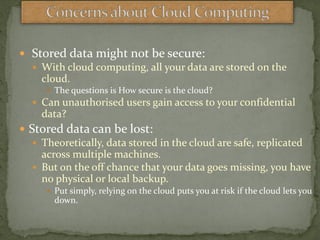

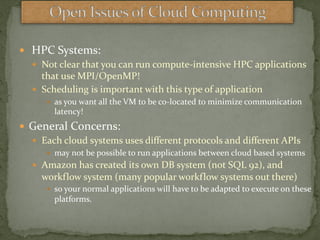

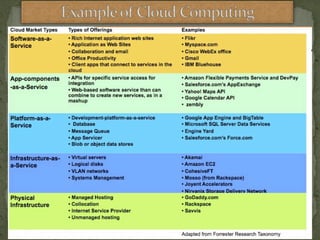

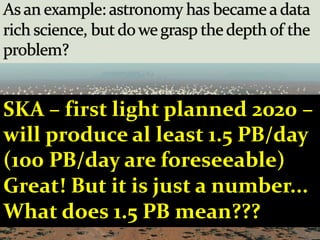

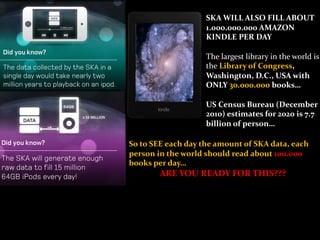

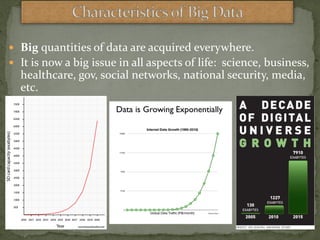

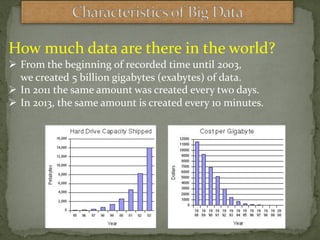

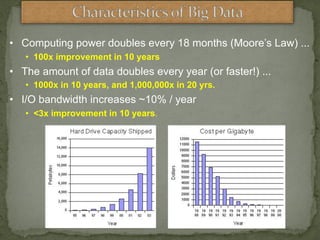

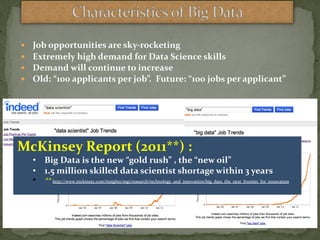

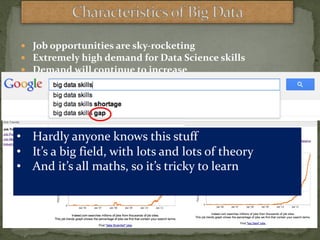

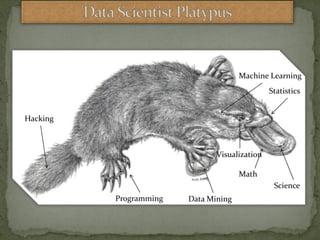

The document discusses cloud computing, defining it as internet-based computing that increases efficiency and reduces costs for businesses. It highlights the benefits of cloud services, such as improved collaboration and data reliability, while also addressing concerns like security risks and dependence on providers. Additionally, it explores the growing significance of big data, emphasizing the rapid increase in data generation and the demand for skilled data scientists.