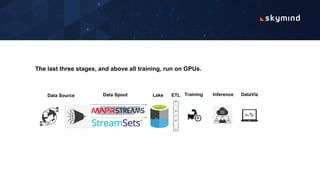

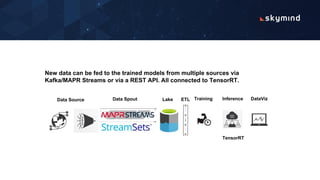

Network intrusion detection uses deep learning to analyze network traffic logs and detect anomalous activity that could indicate an attack. The logs are preprocessed and fed into a neural network, which analyzes them in batches on a GPU cluster. Once trained, the model can detect intrusions in new log data arriving from multiple sources in real time, helping network administrators find malicious traffic on the network.
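
The preprocess-then-batch flow described above can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: the log fields (`protocol`, `bytes`, `duration`), the helper names, and the threshold are all hypothetical, and `toy_score_batch` is a stand-in for the trained neural network, not a real model.

```python
import math
from typing import Callable, Dict, Iterator, List

# Hypothetical log schema: these field names and protocols are assumptions.
PROTOCOLS = ["tcp", "udp", "icmp"]


def encode_record(record: Dict[str, str]) -> List[float]:
    """Turn one parsed log record into a fixed-length numeric vector."""
    proto = record.get("protocol", "").lower()
    one_hot = [1.0 if proto == p else 0.0 for p in PROTOCOLS]
    bytes_sent = float(record.get("bytes", 0))
    duration = float(record.get("duration", 0.0))
    # log1p compresses heavy-tailed byte counts into a smaller range.
    return one_hot + [math.log1p(bytes_sent), duration]


def batches(items: List, batch_size: int) -> Iterator[List]:
    """Yield consecutive slices of at most batch_size items."""
    for start in range(0, len(items), batch_size):
        yield items[start:start + batch_size]


def flag_anomalies(
    records: List[Dict[str, str]],
    score_batch: Callable[[List[List[float]]], List[float]],
    threshold: float,
    batch_size: int = 2,
) -> List[int]:
    """Return indices of records whose anomaly score exceeds threshold."""
    vectors = [encode_record(r) for r in records]
    flagged = []
    index = 0
    for batch in batches(vectors, batch_size):
        for score in score_batch(batch):
            if score > threshold:
                flagged.append(index)
            index += 1
    return flagged


def toy_score_batch(batch: List[List[float]]) -> List[float]:
    """Stand-in for a trained model: scores each record by its log byte count."""
    return [vec[3] for vec in batch]


records = [
    {"protocol": "tcp", "bytes": "512", "duration": "0.2"},
    {"protocol": "udp", "bytes": "128", "duration": "0.1"},
    {"protocol": "tcp", "bytes": "90000000", "duration": "45.0"},
]
flagged = flag_anomalies(records, toy_score_batch, threshold=10.0)
print(flagged)
```

In a real deployment, `score_batch` would wrap a trained network's inference call, and the batch size would be chosen to suit the available GPU memory.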