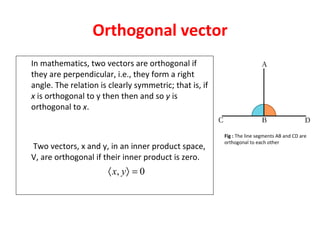

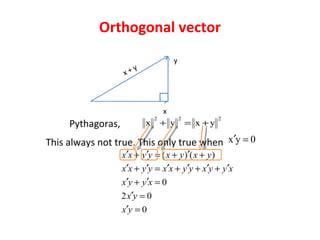

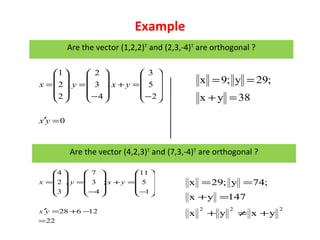

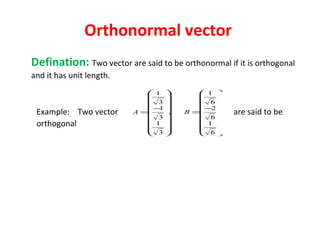

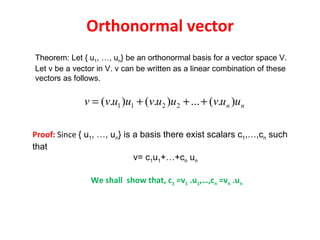

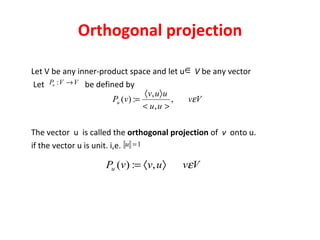

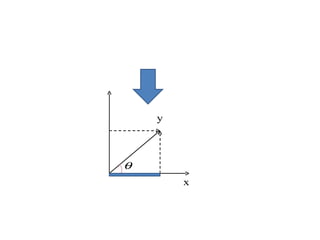

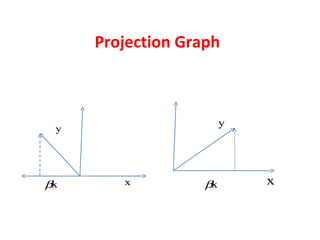

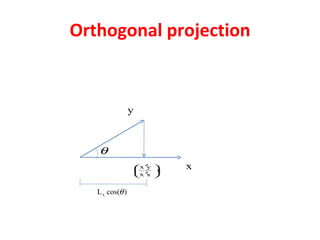

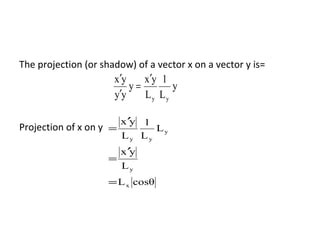

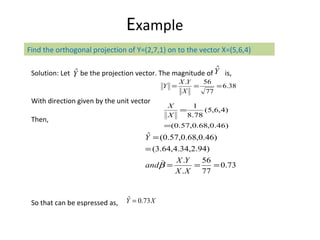

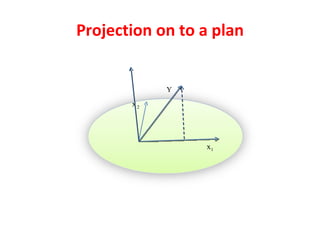

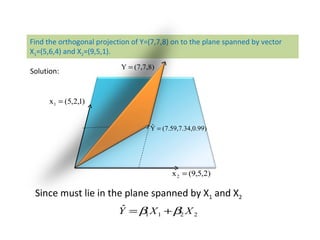

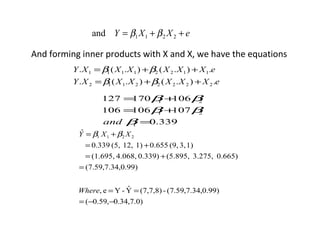

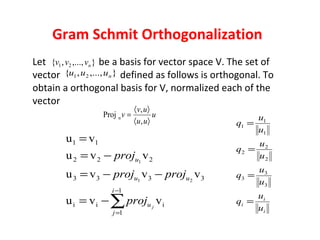

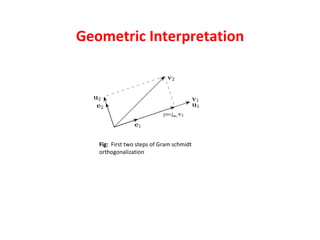

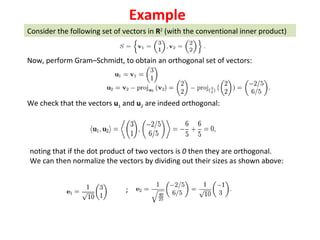

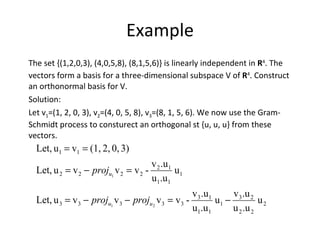

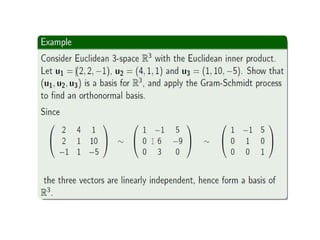

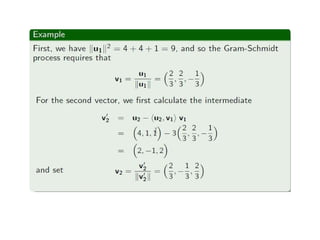

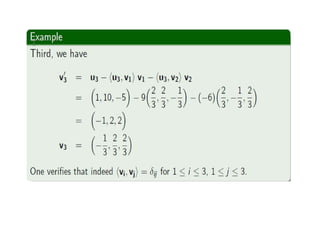

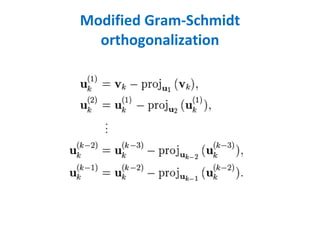

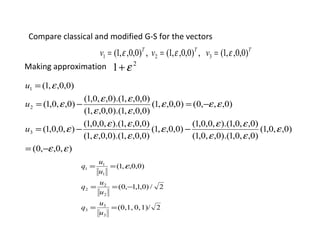

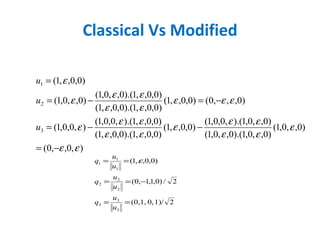

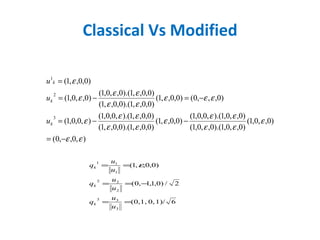

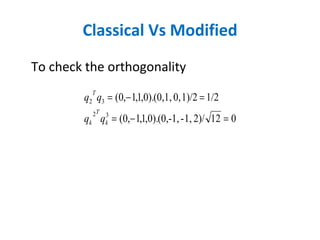

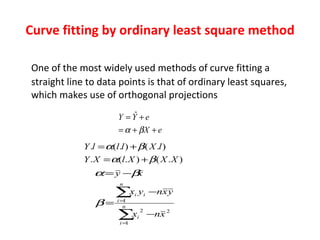

The document covers linear algebra concepts related to vectors and projections. It defines orthogonal vectors as vectors that are perpendicular to each other: they meet at a right angle, so their inner product is zero. It defines orthonormal vectors as orthogonal vectors that each have unit length. It then explains how to compute the orthogonal projection of one vector onto another using an inner product formula, and closes with applications of projections, including Gram-Schmidt orthogonalization and least squares curve fitting.
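The projection and Gram-Schmidt ideas summarized above can be sketched in a few lines of plain Python. This is a minimal illustration, not the slides' own code: it assumes the standard Euclidean inner product, the usual formula proj_u(v) = (⟨v, u⟩ / ⟨u, u⟩) u, and the "modified" Gram-Schmidt variant, which subtracts each projection from the running remainder. The function names `dot`, `project`, and `gram_schmidt` and the tolerance `tol` are hypothetical choices made for this example.

```python
from math import sqrt


def dot(u, v):
    """Euclidean inner product of two equal-length vectors (assumed here)."""
    return sum(a * b for a, b in zip(u, v))


def project(v, u):
    """Orthogonal projection of v onto the line spanned by a nonzero u."""
    uu = dot(u, u)
    if uu == 0:
        raise ValueError("cannot project onto the zero vector")
    scale = dot(v, u) / uu
    return [scale * a for a in u]


def gram_schmidt(vectors, tol=1e-12):
    """Return an orthonormal list spanning the same space as `vectors`."""
    basis = []
    for v in vectors:
        w = list(v)
        for q in basis:
            # q already has unit length, so the projection of w onto q
            # is simply <w, q> q; subtract it from the remainder.
            coeff = dot(w, q)
            w = [wi - coeff * qi for wi, qi in zip(w, q)]
        norm = sqrt(dot(w, w))
        if norm > tol:  # skip vectors that are (numerically) dependent
            basis.append([wi / norm for wi in w])
    return basis
```

For example, `project([3, 4], [1, 0])` keeps only the component of `[3, 4]` along the x-axis, and the leftover part `[0, 4]` is orthogonal to `[1, 0]`. Calling `gram_schmidt([[1, 1, 0], [1, 0, 1]])` returns two unit-length vectors whose inner product is zero up to rounding.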