The document discusses several topics related to distributed machine learning and distributed systems including:

- Reasons for using distributed machine learning: either the data volume demands it, or there is a hope that it will be faster

- Failure rates of hardware and network links in large data centers

- Examples of database inconsistencies and data loss caused by network partitions in different distributed databases

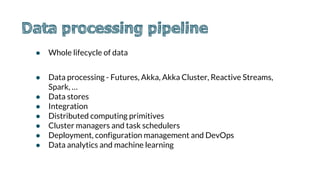

- Key aspects of distributed data processing including data storage, integration, computing primitives, and analytics

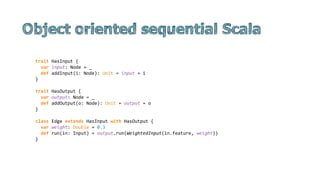

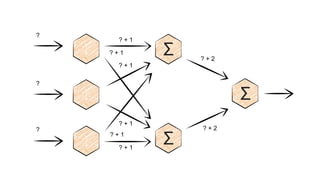

![● Shared memory, disk, shared nothing, threads, mutexes, transactional memory, message passing, CSP, actors, futures, coroutines, evented, dataflow, ...

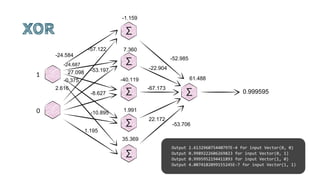

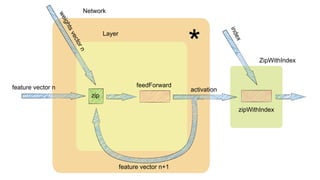

We can think of two reasons for using distributed machine learning: because you have to (so much data), or because you want to (hoping it will be faster). Only the first reason is good.

Zygmunt Z

Elapsed times for 20 PageRank iterations

[1, 2]](https://image.slidesharecdn.com/zapletalmartinlargevolumedataanalytics-150611204218-lva1-app6891/85/Large-volume-data-analysis-on-the-Typesafe-Reactive-Platform-3-320.jpg)

![● Microsoft's data centers: average failure rate of 5.2 devices per day and 40.8 links per day, with a median time to repair of approximately five minutes (and a maximum of one week).

● Google, a new cluster over one year: five rack issues (40-80 machines seeing 50 percent packet loss), eight network maintenance events (four of which might cause ~30-minute random connectivity losses), and three router failures (resulting in the need to pull traffic immediately for an hour).

● CENIC: 500 isolating network partitions, with median durations of 2.7 and 32 minutes and 95th percentiles of 19.9 minutes and 3.7 days for software and hardware problems, respectively [3]](https://image.slidesharecdn.com/zapletalmartinlargevolumedataanalytics-150611204218-lva1-app6891/85/Large-volume-data-analysis-on-the-Typesafe-Reactive-Platform-4-320.jpg)

![● MongoDB separated primary from its 2 secondaries. 2 hours later the old primary rejoined and rolled back everything on the new primary

● A network partition isolated the Redis primary from all secondaries. Every API call caused the billing system to recharge customer credit cards automatically, resulting in 1.1 percent of customers being overbilled over a period of 40 minutes.

● The partition caused inconsistency in the MySQL database. Because foreign key relationships were not consistent, GitHub showed private repositories to the wrong users' dashboards and incorrectly routed some newly created repositories.

● For several seconds, Elasticsearch is happy to believe two nodes in the same cluster are both primaries, will accept writes on both of those nodes, and later discard the writes to one side.

● RabbitMQ lost ~35% of acknowledged writes under those conditions.

● Redis threw away 56% of the writes it told us succeeded.

● In Riak, last-write-wins resulted in dropping 30-70% of writes, even with the strongest consistency settings

● MongoDB “strictly consistent” reads see stale versions of documents, but they can also return garbage data from writes that never should have occurred. [4]](https://image.slidesharecdn.com/zapletalmartinlargevolumedataanalytics-150611204218-lva1-app6891/85/Large-volume-data-analysis-on-the-Typesafe-Reactive-Platform-5-320.jpg)
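
The last-write-wins behaviour mentioned above can be illustrated with a minimal sketch. It assumes each write carries a wall-clock timestamp from the node that accepted it; `Write` and `merge` are hypothetical names for illustration, not Riak's API.

```scala
object LastWriteWinsSketch {
  // A write tagged with the wall-clock timestamp of the node that accepted it (assumption).
  final case class Write(value: String, timestampMillis: Long)

  // Last-write-wins merge: keep the write with the larger timestamp, drop the other.
  def merge(a: Write, b: Write): Write =
    if (b.timestampMillis > a.timestampMillis) b else a

  def main(args: Array[String]): Unit = {
    // Two clients write on different sides of a partition; both writes are acknowledged.
    val sideA = Write("cart=[book]", timestampMillis = 1000L)
    val sideB = Write("cart=[pen]", timestampMillis = 1001L)
    // When the partition heals, only one of the acknowledged writes survives the merge.
    println(merge(sideA, sideB))
  }
}
```

The merge never reports a conflict, so the client whose write was dropped is not told about it.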

![Diagram comparing data platform architectures: CQRS (command and query sides with separate DBs and client views), Kappa architecture (Kafka, stream processor, denormalise/precompute), batch pipeline (Flume, Sqoop, Hive, Impala, Oozie, HDFS, serving DB), and Lambda architecture (batch layer, serving layer, fast stream layer, query), with Spark, SQL, and NoSQL stores. [5]](https://image.slidesharecdn.com/zapletalmartinlargevolumedataanalytics-150611204218-lva1-app6891/85/Large-volume-data-analysis-on-the-Typesafe-Reactive-Platform-9-320.jpg)

![[6]](https://image.slidesharecdn.com/zapletalmartinlargevolumedataanalytics-150611204218-lva1-app6891/85/Large-volume-data-analysis-on-the-Typesafe-Reactive-Platform-10-320.jpg)

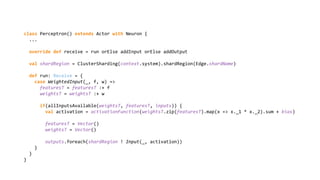

![class Perceptron extends Neuron {

override var activationFunction: Double => Double = Neuron.sigmoid

override var bias: Double = 0.2

var inputs: Seq[Edge] = Seq()

var outputs: Seq[Edge] = Seq()

var weightsT: Seq[Double] = Vector()

var featuresT: Seq[Double] = Vector()

private def allInputsAvailable(w: Seq[Double], f: Seq[Double], in: Seq[Edge]) =

w.length == in.length && f.length == in.length

override def run(in: WeightedInput): Unit = {

featuresT = featuresT :+ in.feature

weightsT = weightsT :+ in.weight

if(allInputsAvailable(weightsT, featuresT, inputs)) {

val activation = activationFunction(weightsT.zip(featuresT).map(x => x._1 * x._2).sum + bias)

featuresT = Vector()

weightsT = Vector()

outputs.foreach(_.run(Input(activation)))

}

}

}](https://image.slidesharecdn.com/zapletalmartinlargevolumedataanalytics-150611204218-lva1-app6891/85/Large-volume-data-analysis-on-the-Typesafe-Reactive-Platform-16-320.jpg)

![object Perceptron {

def activation(w: Vector[Double], f: Vector[Double], bias: Double, activationFunction: Double => Double) =

activationFunction(w.zip(f).map(x => x._1 * x._2).sum + bias)

}

object Network {

def feedForward(features: Vector[Double], network: Seq[Vector[Vector[Double] => Double]]): Vector[Double] =

network.foldLeft(features)((b, a) => a.map(_(b)))

}

val network = Seq[Vector[Vector[Double] => Double]](

Vector(

Perceptron.activation(Vector(0.3, 0.3, 0.3), _, 0.2, Neuron.sigmoid),

Perceptron.activation(Vector(0.3, 0.3, 0.3), _, 0.2, Neuron.sigmoid)),

Vector(Perceptron.activation(Vector(0.3, 0.3, 0.3), _, 0.2, Neuron.sigmoid))

)

Network.feedForward(Vector(splits(0).toDouble, splits(1).toDouble, splits(2).toDouble), network)](https://image.slidesharecdn.com/zapletalmartinlargevolumedataanalytics-150611204218-lva1-app6891/85/Large-volume-data-analysis-on-the-Typesafe-Reactive-Platform-20-320.jpg)
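
The functional network above depends on `Neuron.sigmoid` and `splits`, which the slide does not show. A self-contained sketch of the same idea follows, assuming a logistic sigmoid and a fixed input vector; `neuron` and `Layer` are names introduced here for illustration. The output neuron is given two weights to match the two hidden neurons (with three weights, `zip` would silently ignore the third).

```scala
object FeedForwardSketch {
  // Logistic sigmoid; assumed to be what Neuron.sigmoid computes on the slides.
  val sigmoid: Double => Double = z => 1.0 / (1.0 + math.exp(-z))

  // A neuron is a function from the previous layer's outputs to one activation.
  def neuron(w: Vector[Double], bias: Double, f: Double => Double): Vector[Double] => Double =
    x => f(w.zip(x).map { case (wi, xi) => wi * xi }.sum + bias)

  type Layer = Vector[Vector[Double] => Double]

  // Fold over the layers: each layer maps the previous outputs to its own outputs.
  def feedForward(features: Vector[Double], network: Seq[Layer]): Vector[Double] =
    network.foldLeft(features)((in, layer) => layer.map(n => n(in)))

  // Two hidden neurons with three inputs each, one output neuron with two inputs.
  val network: Seq[Layer] = Seq(
    Vector(neuron(Vector(0.3, 0.3, 0.3), 0.2, sigmoid), neuron(Vector(0.3, 0.3, 0.3), 0.2, sigmoid)),
    Vector(neuron(Vector(0.3, 0.3), 0.2, sigmoid))
  )

  def main(args: Array[String]): Unit =
    println(feedForward(Vector(1.0, 1.0, 1.0), network))
}
```

Because every neuron is a pure function, the whole forward pass is a single `foldLeft` with no shared mutable state.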

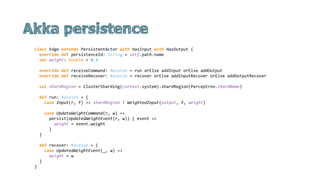

![def props() = Props(behaviour)

def behaviour = addInput(addOutput(feedForward(_, _, 0.2, sigmoid, Vector(), Vector()), _))

private def allInputsAvailable(w: Vector[Double], f: Vector[Double], in: Seq[ActorRef[Nothing]]) =

w.length == in.length && f.length == in.length

def feedForward(

inputs: Seq[ActorRef[Nothing]],

outputs: Seq[ActorRef[Input]],

bias: Double,

activationFunction: Double => Double,

weightsT: Vector[Double],

featuresT: Vector[Double]): Behavior[NodeMessage] = Partial[NodeMessage] {

case WeightedInput(f, w) =>

val featuresTplusOne = featuresT :+ f

val weightsTplusOne = weightsT :+ w

if (allInputsAvailable(featuresTplusOne, weightsTplusOne, inputs)) {

val activation = activationFunction(weightsTplusOne.zip(featuresTplusOne).map(x => x._1 * x._2).sum +

bias)

outputs.foreach(_ ! Input(activation))

feedForward(inputs, outputs, bias, activationFunction, Vector(), Vector())

} else {

feedForward(inputs, outputs, bias, activationFunction, weightsTplusOne, featuresTplusOne)

}

}](https://image.slidesharecdn.com/zapletalmartinlargevolumedataanalytics-150611204218-lva1-app6891/85/Large-volume-data-analysis-on-the-Typesafe-Reactive-Platform-22-320.jpg)
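
The actor above keeps its state in the arguments of the next behaviour instead of in mutable fields. The same accumulate-then-reset logic can be sketched without Akka as a pure state transition; `State`, `step`, and `fanIn` are hypothetical names, and the logistic sigmoid is an assumption.

```scala
object NeuronStateSketch {
  // Inputs collected so far for the current forward pass.
  final case class State(weights: Vector[Double], features: Vector[Double])
  final case class WeightedInput(feature: Double, weight: Double)

  val empty: State = State(Vector(), Vector())

  def sigmoid(z: Double): Double = 1.0 / (1.0 + math.exp(-z))

  // Returns the next state and, once `fanIn` inputs have arrived, the activation to emit.
  def step(state: State, msg: WeightedInput, fanIn: Int, bias: Double): (State, Option[Double]) = {
    val w = state.weights :+ msg.weight
    val f = state.features :+ msg.feature
    if (w.length == fanIn) {
      val z = w.zip(f).map { case (wi, fi) => wi * fi }.sum + bias
      (empty, Some(sigmoid(z))) // all inputs seen: emit and reset
    } else {
      (State(w, f), None)       // keep accumulating
    }
  }
}
```

Each call returns the state to use for the next message, which mirrors how the behaviour on the slide returns `feedForward(...)` with updated vectors.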

![● At-most-once. Messages may be lost.

● At-least-once. Messages may be duplicated but not lost.

● Exactly-once.

Ack [8]](https://image.slidesharecdn.com/zapletalmartinlargevolumedataanalytics-150611204218-lva1-app6891/85/Large-volume-data-analysis-on-the-Typesafe-Reactive-Platform-25-320.jpg)
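
A common way to approximate exactly-once processing is at-least-once delivery combined with an idempotent receiver. The sketch below assumes every message carries a unique id; `Message` and `Receiver` are illustrative names, not Akka types.

```scala
object DeliverySketch {
  final case class Message(id: Long, payload: String)

  // Receiver state: ids already processed and the payloads applied so far.
  final case class Receiver(seen: Set[Long], applied: Vector[String]) {
    // A redelivered id is ignored, so retries do not apply the payload twice.
    def receive(m: Message): Receiver =
      if (seen.contains(m.id)) this
      else Receiver(seen + m.id, applied :+ m.payload)
  }

  def main(args: Array[String]): Unit = {
    // The sender never saw the ack for message 1, so it sends it again (at-least-once).
    val deliveries = Seq(Message(1L, "debit 10"), Message(1L, "debit 10"), Message(2L, "credit 5"))
    val end = deliveries.foldLeft(Receiver(Set.empty, Vector.empty))((r, m) => r.receive(m))
    println(end.applied)
  }
}
```

The sender cannot distinguish a lost message from a lost ack, so it has to retry; deduplication on the receiving side is what keeps the retry harmless.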

![Output 76 with result 0.6298492571946717 in 2015-05-21 17:26:56.504

Output 77 with result 0.6298357692712147 in 2015-05-21 17:26:56.504

Output 78 with result 0.6298679316729997 in 2015-05-21 17:26:56.504

Output 79 with result 0.6298674125610421 in 2015-05-21 17:26:56.504

Output 80 with result 0.6298866035455875 in 2015-05-21 17:26:56.504

Output 81 with result 0.6298959406028078 in 2015-05-21 17:26:56.504

Output 82 with result 0.6299052760580531 in 2015-05-21 17:26:56.504

Output 83 with result 0.6299057948796922 in 2015-05-21 17:26:56.505

Output 84 with result 0.6299094252583786 in 2015-05-21 17:26:56.505

Output 85 with result 0.6299332807659122 in 2015-05-21 17:26:56.505

Output 86 with result 0.6299426148804811 in 2015-05-21 17:26:56.505

Output 87 with result 0.6299462447313531 in 2015-05-21 17:26:56.505

Output 88 with result 0.6299612820238325 in 2015-05-21 17:26:56.505

[INFO] [05/21/2015 17:26:56.504] [akka-akka.actor.default-dispatcher-13] [akka://akka/user/hiddenLayer1] Message

[Node$WeightedInput] from Actor[akka://akka/deadLetters] to Actor[akka://akka/user/hiddenLayer1#162015581] was not

delivered. [1] dead letters encountered. This logging can be turned off or adjusted with configuration settings

'akka.log-dead-letters' and 'akka.log-dead-letters-during-shutdown'.

Output 89 with result 0.6299706150403437 in 2015-05-21 17:26:56.505

Output 90 with result 0.6299799476901787 in 2015-05-21 17:26:56.506

[INFO] [05/21/2015 17:26:56.504] [akka-akka.actor.default-dispatcher-13] [akka://akka/user/hiddenLayer1] Message

[Node$WeightedInput] from Actor[akka://akka/deadLetters] to Actor[akka://akka/user/hiddenLayer1#162015581] was not

delivered. [2] dead letters encountered. This logging can be turned off or adjusted with configuration settings

'akka.log-dead-letters' and 'akka.log-dead-letters-during-shutdown'.

Output 91 with result 0.6299809846419518 in 2015-05-21 17:26:56.506

Output 92 with result 0.6299986118617977 in 2015-05-21 17:26:56.506](https://image.slidesharecdn.com/zapletalmartinlargevolumedataanalytics-150611204218-lva1-app6891/85/Large-volume-data-analysis-on-the-Typesafe-Reactive-Platform-28-320.jpg)

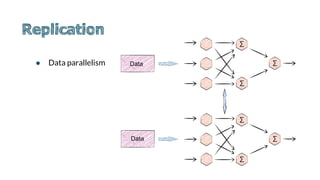

![● Model parallelism

● Actor creation manual or Cluster Sharding

val idExtractor: ShardRegion.IdExtractor = {

case i: AddInputs =>

}

val shardResolver: ShardRegion.ShardResolver = {

case i: AddInputs =>

}

Diagram: model partitioned across Machine1, Machine2, Machine3, and Machine4

[9]](https://image.slidesharecdn.com/zapletalmartinlargevolumedataanalytics-150611204218-lva1-app6891/85/Large-volume-data-analysis-on-the-Typesafe-Reactive-Platform-29-320.jpg)
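
The bodies of `idExtractor` and `shardResolver` are not shown on the slide. As a purely hypothetical illustration of what such functions decide, the sketch below maps a message to an entity id and to a shard id by hashing; the `nodeId` field and the shard count are assumptions, and the code does not use Akka.

```scala
object ShardingSketch {
  // Hypothetical message: the fields of the slide's AddInputs are not shown, so nodeId is assumed.
  final case class AddInputs(nodeId: String)

  val numberOfShards = 4

  // Entity id: which actor instance the message is addressed to.
  def entityId(msg: AddInputs): String = msg.nodeId

  // Shard id: a stable hash of the entity id, so a given node always lands in the same shard.
  def shardId(msg: AddInputs): String =
    math.abs(msg.nodeId.hashCode % numberOfShards).toString
}
```

With a stable mapping like this, the cluster can move whole shards between machines without changing how messages are addressed.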

![Output 18853 with result 0.6445355972059068 in 17:33:12.248

Output 18854 with result 0.6392081778097862 in 17:33:12.248

Output 18855 with result 0.6476549338361918 in 17:33:12.248

Output 18856 with result 0.6413832367161323 in 17:33:12.248

[17:33:12.353] [ClusterSystem-akka.actor.default-dispatcher-21] [Cluster(akka://ClusterSystem)] Cluster Node

[akka.tcp://ClusterSystem@127.0.0.1:2551] - Leader is removing unreachable node [akka.tcp:

//ClusterSystem@127.0.0.1:54495]

[17:33:12.388] [ClusterSystem-akka.actor.default-dispatcher-22] [akka.tcp://ClusterSystem@127.0.0.1:

2551/user/sharding/PerceptronCoordinator] Member removed [akka.tcp://ClusterSystem@127.0.0.1:54495]

[17:33:12.388] [ClusterSystem-akka.actor.default-dispatcher-35] [akka.tcp://ClusterSystem@127.0.0.1:

2551/user/sharding/EdgeCoordinator] Member removed [akka.tcp://ClusterSystem@127.0.0.1:54495]

[17:33:12.415] [ClusterSystem-akka.actor.default-dispatcher-18] [akka://ClusterSystem/user/sharding/Edge/e-2-

1-3-1] null java.lang.NullPointerException

[17:33:12.436] [ClusterSystem-akka.actor.default-dispatcher-2] [akka://ClusterSystem/user/sharding/Edge/e-2-

1-3-1] null java.lang.NullPointerException

[17:33:12.436] [ClusterSystem-akka.actor.default-dispatcher-2] [akka://ClusterSystem/user/sharding/Edge/e-2-

1-3-1] null java.lang.NullPointerException](https://image.slidesharecdn.com/zapletalmartinlargevolumedataanalytics-150611204218-lva1-app6891/85/Large-volume-data-analysis-on-the-Typesafe-Reactive-Platform-31-320.jpg)

![Elasticsearch gives up on partition tolerance: if enough nodes fail, the cluster state turns red and ES does not continue to operate on that index.

ES is not giving up on availability. Every request receives a response, either true (with result) or false (error).

● Synchronous and asynchronous replication

● Availability and consistency during partition

[4]](https://image.slidesharecdn.com/zapletalmartinlargevolumedataanalytics-150611204218-lva1-app6891/85/Large-volume-data-analysis-on-the-Typesafe-Reactive-Platform-34-320.jpg)

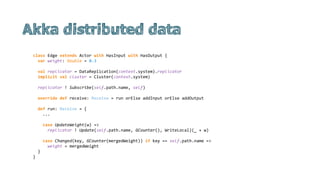

![class Edge(

override val aggregateId: Option[String],

override val replicaId: String,

override val eventLog: ActorRef) extends EventsourcedActor with HasInput with HasOutput {

var weight: Double = 0.3

override def onCommand: Receive = run orElse addInput orElse addOutput

private var versionedState: ConcurrentVersions[Double, Double] = ConcurrentVersions(0.3, (s, a) => a)

...

override def onEvent: Receive = {

case UpdatedWeightEvent(w) =>

versionedState = versionedState.update(w, lastVectorTimestamp, lastEmitterReplicaId)

if (versionedState.conflict) {

val conflictingVersions = versionedState.all

val avg = conflictingVersions.map(_.value).sum / conflictingVersions.size

val newTimestamp = conflictingVersions.map(_.updateTimestamp).foldLeft(VectorTime())(_.merge(_))

versionedState.update(avg, newTimestamp, replicaId)

versionedState = versionedState.resolve(newTimestamp)

weight = versionedState.all.head.value

} else {

weight = versionedState.all.head.value

}

}

}](https://image.slidesharecdn.com/zapletalmartinlargevolumedataanalytics-150611204218-lva1-app6891/85/Large-volume-data-analysis-on-the-Typesafe-Reactive-Platform-39-320.jpg)
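
The conflict handling above relies on vector timestamps to detect concurrent weight updates and then averages them. A simplified sketch of that idea follows; `VTime` is a plain map standing in for the library's vector time class, and all names here are illustrative.

```scala
object VectorTimeSketch {
  // Simplified vector timestamp: replica id -> logical counter (not the library's class).
  type VTime = Map[String, Long]

  // Pointwise maximum of two vector timestamps.
  def merge(a: VTime, b: VTime): VTime =
    (a.keySet ++ b.keySet).map(k => k -> math.max(a.getOrElse(k, 0L), b.getOrElse(k, 0L))).toMap

  // a is before or equal to b if every counter in a is <= the matching counter in b.
  def lessOrEqual(a: VTime, b: VTime): Boolean =
    a.forall { case (k, v) => v <= b.getOrElse(k, 0L) }

  // Two updates conflict when neither causally precedes the other.
  def concurrent(a: VTime, b: VTime): Boolean =
    !lessOrEqual(a, b) && !lessOrEqual(b, a)

  final case class Versioned(value: Double, time: VTime)

  // Resolve by averaging the values and merging the timestamps, as the slide does for weights.
  def resolve(versions: Seq[Versioned]): Versioned =
    Versioned(
      versions.map(_.value).sum / versions.size,
      versions.map(_.time).foldLeft(Map.empty[String, Long])(merge))
}
```

Averaging is an application-specific choice: it is acceptable for a weight that is being trained, but would not be for something like an account balance.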

![● Publisher and subscriber

● Source[Circle].map(_.toSquare).filter(_.color == blue)

● Lazy topology definition

Diagram: Publisher → toSquare → color == blue → Subscriber, with backpressure](https://image.slidesharecdn.com/zapletalmartinlargevolumedataanalytics-150611204218-lva1-app6891/85/Large-volume-data-analysis-on-the-Typesafe-Reactive-Platform-43-320.jpg)
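
The lazy topology idea can be illustrated with a plain Scala `Iterator` as an analogy; this is not Akka Streams. `Circle`, `Square`, and the `transformed` counter are illustrative names, and colours are assumed to be strings.

```scala
object LazyPipelineSketch {
  final case class Square(color: String)
  final case class Circle(color: String) { def toSquare: Square = Square(color) }

  // Counts how many circles were actually transformed, to make laziness observable.
  var transformed = 0

  // Building the pipeline does no work: Iterator.map and Iterator.filter are lazy.
  def pipeline(source: Iterator[Circle]): Iterator[Square] =
    source.map { c => transformed += 1; c.toSquare }.filter(_.color == "blue")

  def main(args: Array[String]): Unit = {
    val squares = pipeline(Iterator(Circle("red"), Circle("blue"), Circle("blue")))
    println(transformed)    // nothing has been pulled through yet
    println(squares.next()) // the consumer's demand drives the upstream stages
  }
}
```

Elements only move when the consumer asks for them, which is the intuition behind demand-driven backpressure: a slow subscriber slows down the publisher instead of being flooded.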

![input ~> network(topology, weights) ~> zipWithIndex ~> formatPrintSink

def buildLayer(

layer: Int,

input: Outlet[DenseMatrix[Double]],

topology: Array[Int],

weights: DenseMatrix[Double]): Outlet[DenseMatrix[Double]] = {

val currentLayer = builder.add(hiddenLayer(layer))

input ~> currentLayer.in0

hiddenLayerWeights(topology, layer, weights) ~> currentLayer.in1

if (layer < topology.length - 1) buildLayer(layer + 1, currentLayer.out, topology, weights)

else currentLayer.out

}](https://image.slidesharecdn.com/zapletalmartinlargevolumedataanalytics-150611204218-lva1-app6891/85/Large-volume-data-analysis-on-the-Typesafe-Reactive-Platform-44-320.jpg)

![def hiddenLayer(layer: Int) = {

def feedForward(features: DenseMatrix[Double], weightMatrices: DenseMatrix[Double]) = {

val bias = 0.2

val activation: DenseMatrix[Double] = weightMatrices * features

activation(::, *) :+= bias

sigmoid.inPlace(activation)

activation

}

FlowGraph.partial() { implicit builder: FlowGraph.Builder[Unit] =>

import akka.stream.scaladsl.FlowGraph.Implicits._

val zipInputAndWeights = builder.add(Zip[DenseMatrix[Double], DenseMatrix[Double]]())

val feedForwardFlow = builder.add(Flow[(DenseMatrix[Double], DenseMatrix[Double])]

.map(x => feedForward(x._1, x._2)))

zipInputAndWeights.out ~> feedForwardFlow

new FanInShape2(zipInputAndWeights.in0, zipInputAndWeights.in1, feedForwardFlow.outlet)

}

}](https://image.slidesharecdn.com/zapletalmartinlargevolumedataanalytics-150611204218-lva1-app6891/85/Large-volume-data-analysis-on-the-Typesafe-Reactive-Platform-45-320.jpg)

![[10]](https://image.slidesharecdn.com/zapletalmartinlargevolumedataanalytics-150611204218-lva1-app6891/85/Large-volume-data-analysis-on-the-Typesafe-Reactive-Platform-47-320.jpg)

![Pipeline: textFile → map → map → reduceByKey → collect

sc.textFile("counts")

.map(line => line.split("\t"))

.map(word => (word(0), word(1).toInt))

.reduceByKey(_ + _)

.collect()

[11]](https://image.slidesharecdn.com/zapletalmartinlargevolumedataanalytics-150611204218-lva1-app6891/85/Large-volume-data-analysis-on-the-Typesafe-Reactive-Platform-49-320.jpg)
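
The pipeline above can be mimicked on local collections to see what each stage produces. The sketch below assumes tab-separated `word<TAB>count` lines; `reduceByKey` here is a local stand-in written for illustration, not Spark's method.

```scala
object ReduceByKeySketch {
  // Local stand-in for reduceByKey(_ + _): group pairs by key, then sum each group's values.
  def reduceByKey(pairs: Seq[(String, Int)]): Map[String, Int] =
    pairs.groupBy(_._1).map { case (k, kvs) => k -> kvs.map(_._2).sum }

  // Same shape as the slide's pipeline, applied to in-memory lines instead of an RDD.
  def countsFrom(lines: Seq[String]): Map[String, Int] =
    reduceByKey(lines.map(_.split("\t")).map(word => (word(0), word(1).toInt)))

  def main(args: Array[String]): Unit =
    println(countsFrom(Seq("spark\t1", "akka\t2", "spark\t3")))
}
```

In Spark the two `map` stages run without moving data between machines, while `reduceByKey` is the step that requires a shuffle so that equal keys meet on the same partition.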

![● Accumulators

○ Processes can only add

○ Associative, commutative operation

○ Only driver program can read the value

○ Exactly once semantics only guaranteed for actions

object DoubleAccumulatorParam extends AccumulatorParam[Double] {

def zero(initialValue: Double): Double = 0

def addInPlace(d1: Double, d2: Double): Double = d1 + d2

}](https://image.slidesharecdn.com/zapletalmartinlargevolumedataanalytics-150611204218-lva1-app6891/85/Large-volume-data-analysis-on-the-Typesafe-Reactive-Platform-50-320.jpg)
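
The requirement that accumulator operations be associative and commutative can be seen in a small local sketch. `Param` is a hypothetical mini-interface that mirrors the `zero`/`addInPlace` pair above; it is not Spark's API, and partitions are modelled as plain sequences.

```scala
object AccumulatorSketch {
  // Hypothetical mini-interface mirroring zero/addInPlace; not Spark's AccumulatorParam.
  trait Param[T] {
    def zero: T
    def addInPlace(a: T, b: T): T
  }

  object LongParam extends Param[Long] {
    def zero: Long = 0L
    def addInPlace(a: Long, b: Long): Long = a + b
  }

  // Each partition folds locally from zero; the driver then merges the partial results.
  def accumulate[T](partitions: Seq[Seq[T]], p: Param[T]): T =
    partitions.map(_.foldLeft(p.zero)(p.addInPlace)).foldLeft(p.zero)(p.addInPlace)
}
```

Because addition is associative and commutative, the result does not depend on how the data is partitioned or on the order in which partial results reach the driver.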

![def forwardRun(

topology: Array[Int],

data: DenseMatrix[Double],

weightMatrices: Array[DenseMatrix[Double]]): DenseMatrix[Double] = {

val bias = 0.2

val outArray = new Array[DenseMatrix[Double]](topology.size)

val blas = BLAS.getInstance()

outArray(0) = data

for(i <- 1 until topology.size) {

val weights = hiddenLayerWeights(topology, i, weightMatrices)

val outputCurrent = new DenseMatrix[Double](weights.rows, data.cols)

val outputPrevious = outArray(i - 1)

blas.dgemm("N", "N", outputCurrent.rows, outputCurrent.cols,

weights.cols, 1.0, weights.data, weights.offset, weights.majorStride,

outputPrevious.data, outputPrevious.offset, outputPrevious.majorStride,

1.0, outputCurrent.data, outputCurrent.offset, outputCurrent.rows)

outArray(i) = outputCurrent

outArray(i)(::, *) :+= bias

sigmoid.inPlace(outArray(i))

}

outArray(topology.size - 1)

}](https://image.slidesharecdn.com/zapletalmartinlargevolumedataanalytics-150611204218-lva1-app6891/85/Large-volume-data-analysis-on-the-Typesafe-Reactive-Platform-51-320.jpg)
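
Read as a formula, the loop in `forwardRun` appears to compute the following recurrence, where $X$ is `data`, $W^{(i)}$ is the weight matrix returned by `hiddenLayerWeights` for layer $i$, $b = 0.2$ is added to every element, $\sigma$ is the element-wise sigmoid, and $L$ is `topology.size`:

$$
A^{(0)} = X, \qquad A^{(i)} = \sigma\!\left(W^{(i)} A^{(i-1)} + b\right), \quad i = 1, \dots, L - 1
$$

The function returns $A^{(L-1)}$. The `dgemm` call is given both scaling factors as 1.0 and writes into a freshly allocated, zero-filled matrix, so it contributes only the product $W^{(i)} A^{(i-1)}$.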

![● Multiple phases

● Catalyst

[12]](https://image.slidesharecdn.com/zapletalmartinlargevolumedataanalytics-150611204218-lva1-app6891/85/Large-volume-data-analysis-on-the-Typesafe-Reactive-Platform-55-320.jpg)

![object PushPredicateThroughProject extends Rule[LogicalPlan] {

def apply(plan: LogicalPlan): LogicalPlan = plan transform {

case filter @ Filter(condition, project @ Project(fields, grandChild)) =>

val sourceAliases = fields.collect { case a @ Alias(c, _) =>

(a.toAttribute: Attribute) -> c

}.toMap

project.copy(child = filter.copy(

replaceAlias(condition, sourceAliases),

grandChild))

}

}

case Divide(e1, e2) =>

val eval1 = expressionEvaluator(e1)

val eval2 = expressionEvaluator(e2)

eval1.code ++ eval2.code ++

q"""

var $nullTerm = false

var $primitiveTerm: ${termForType(e1.dataType)} = 0

if (${eval1.nullTerm} || ${eval2.nullTerm} ) {

$nullTerm = true

} else if (${eval2.primitiveTerm} == 0)

$nullTerm = true

else {

$primitiveTerm = ${eval1.primitiveTerm} / ${eval2.primitiveTerm}

}

""".children](https://image.slidesharecdn.com/zapletalmartinlargevolumedataanalytics-150611204218-lva1-app6891/85/Large-volume-data-analysis-on-the-Typesafe-Reactive-Platform-56-320.jpg)

![=== Result of Batch Resolution ===

=== Result of Batch Remove SubQueries ===

=== Result of Batch ConstantFolding ===

=== Result of Batch Filter Pushdown ===

== Parsed Logical Plan ==

'Project [('result + String) AS c0#2]

'Filter ('r.result >= String)

'Subquery r

'Project ['result]

'UnresolvedRelation [results], None

== Analyzed Logical Plan ==

Project [(CAST(result#1, DoubleType) + CAST(String, DoubleType)) AS c0#2]

Filter (CAST(result#1, DoubleType) >= CAST(String, DoubleType))

Subquery r

Project [result#1]

Subquery results

Project [_1#0 AS result#1]

LogicalRDD [_1#0], MapPartitionsRDD[5] at map at SQLContext.scala:394

== Optimized Logical Plan ==

LocalRelation [c0#2], []

== Physical Plan ==

LocalTableScan [c0#2], []](https://image.slidesharecdn.com/zapletalmartinlargevolumedataanalytics-150611204218-lva1-app6891/85/Large-volume-data-analysis-on-the-Typesafe-Reactive-Platform-57-320.jpg)
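
The idea behind a filter-pushdown rule such as `PushPredicateThroughProject` can be sketched on a toy plan tree. The case classes below are illustrative and are not Catalyst's; conditions are plain strings, and the alias substitution that the real rule performs is deliberately left out.

```scala
object PushdownSketch {
  // Toy logical plan; these case classes are illustrative, not Catalyst's.
  sealed trait Plan
  final case class Relation(name: String) extends Plan
  final case class Project(fields: Seq[String], child: Plan) extends Plan
  final case class Filter(condition: String, child: Plan) extends Plan

  // Move a Filter below the Project it sits on, then keep rewriting further down the tree.
  def pushFilterThroughProject(plan: Plan): Plan = plan match {
    case Filter(cond, Project(fields, grandChild)) =>
      Project(fields, pushFilterThroughProject(Filter(cond, grandChild)))
    case Filter(cond, child)    => Filter(cond, pushFilterThroughProject(child))
    case Project(fields, child) => Project(fields, pushFilterThroughProject(child))
    case r: Relation            => r
  }
}
```

Filtering before projecting means fewer rows reach the projection, which is why optimizers apply rules of this kind in batches until the plan stops changing.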

![[1] http://www.csie.ntu.edu.tw/~cjlin/talks/twdatasci_cjlin.pdf

[2] http://blog.acolyer.org/2015/06/05/scalability-but-at-what-cost/

[3] https://queue.acm.org/detail.cfm?id=2655736

[4] https://aphyr.com/

[5] http://www.benstopford.com/2015/04/28/elements-of-scale-composing-and-scaling-data-platforms/

[6] http://malteschwarzkopf.de/research/assets/google-stack.pdf

[7] http://malteschwarzkopf.de/research/assets/facebook-stack.pdf

[8] http://en.wikipedia.org/wiki/Two_Generals%27_Problem

[9] http://static.googleusercontent.com/media/research.google.com/en/us/archive/large_deep_networks_nips2012.pdf

[10] http://www.smartjava.org/content/visualizing-back-pressure-and-reactive-streams-akka-streams-statsd-grafana-and-influxdb

[11] http://www.slideshare.net/LisaHua/spark-overview-37479609

[12] https://ogirardot.wordpress.com/2015/05/29/rdds-are-the-new-bytecode-of-apache-spark/](https://image.slidesharecdn.com/zapletalmartinlargevolumedataanalytics-150611204218-lva1-app6891/85/Large-volume-data-analysis-on-the-Typesafe-Reactive-Platform-62-320.jpg)