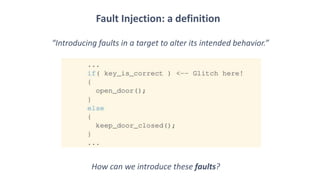

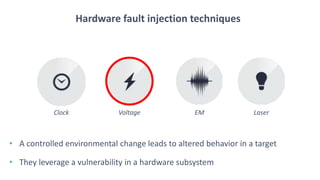

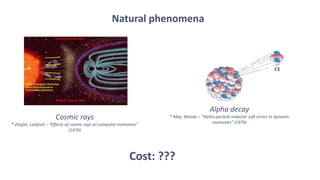

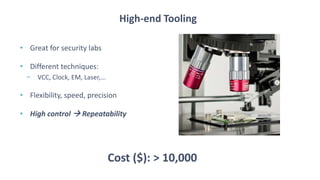

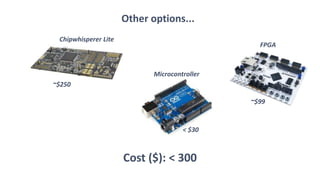

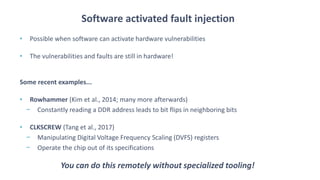

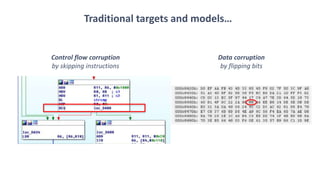

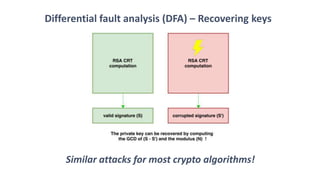

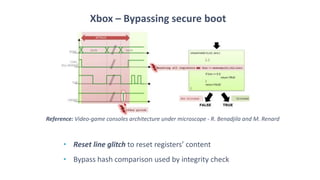

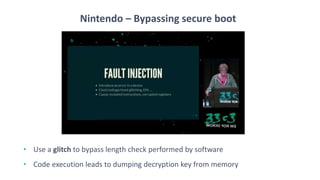

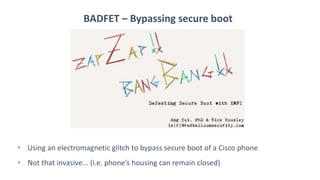

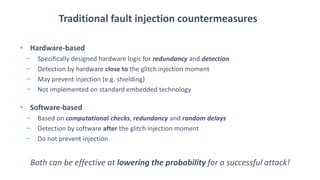

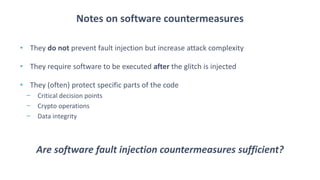

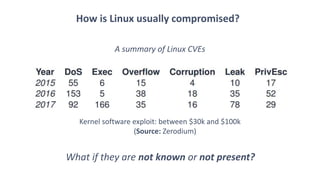

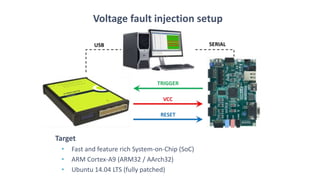

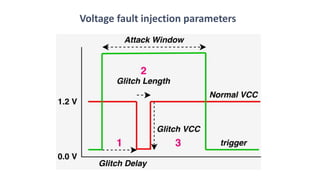

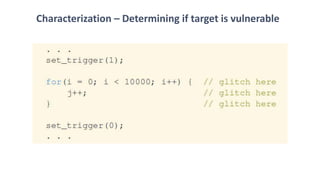

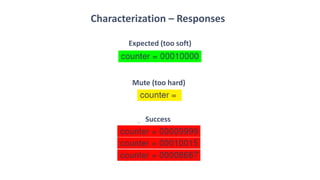

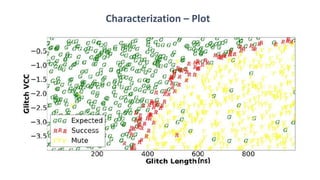

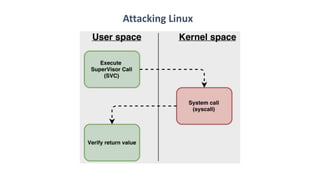

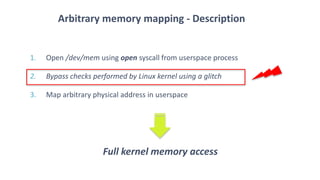

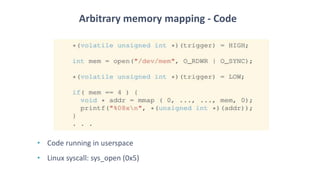

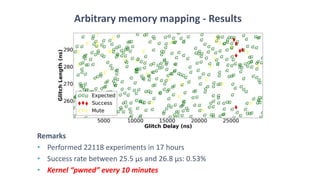

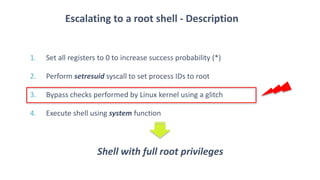

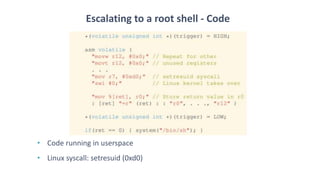

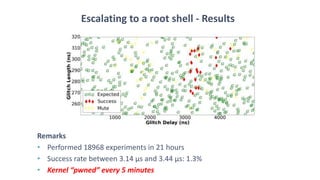

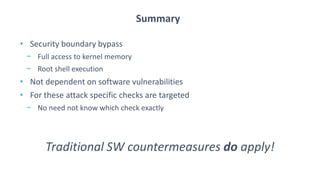

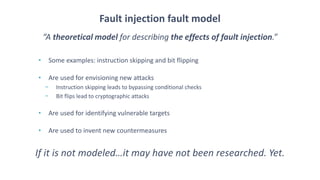

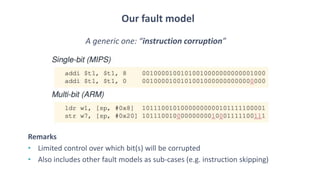

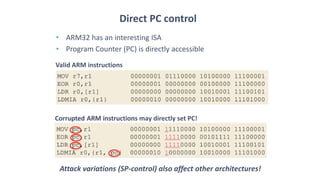

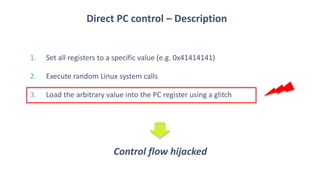

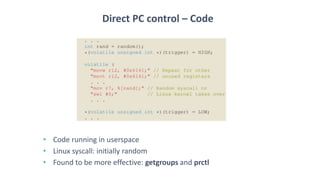

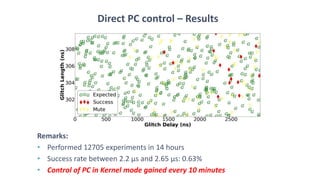

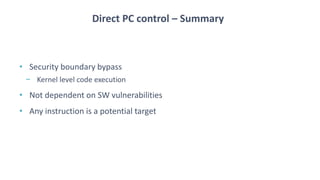

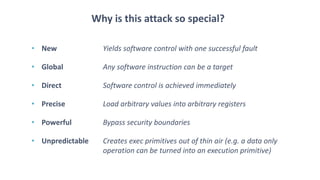

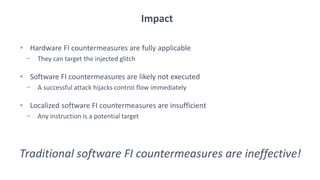

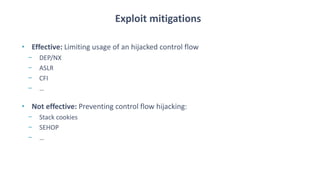

The document discusses fault injection techniques: how an attacker can exploit hardware vulnerabilities by manipulating the supply voltage or glitching the clock to alter a system's operation. It highlights real-world examples, such as bypassing secure boot on gaming consoles, and outlines both software and hardware countermeasures that can mitigate such attacks. Because fault injection tools and techniques are becoming more accessible, they pose a significant threat to conventional software security models, which calls for updated threat assessments and protective measures.
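
One widely used software countermeasure is to avoid encoding security decisions as a plain 0/1 flag, since a single flipped bit can turn "not verified" into "verified". The sketch below is a simplified simulation, not code from the slides. It models only single-bit flips in a stored 16-bit value (not instruction skips or multi-bit faults), and the constants `HARDENED_TRUE` and `HARDENED_FALSE` and all function names are hypothetical, chosen for illustration.

```python
WIDTH = 16  # assumed width of the stored flag, for illustration only

# Hypothetical hardened constants: bitwise complements of each other,
# so all 16 bits must flip to turn one into the other.
HARDENED_TRUE = 0xA5C3
HARDENED_FALSE = 0x5A3C


def flip_bit(value: int, bit: int) -> int:
    """Model a single-bit fault by toggling one bit of a stored value."""
    return value ^ (1 << bit)


def naive_check(flag: int) -> bool:
    """C-style truthiness: any non-zero value is treated as 'verified'."""
    return flag != 0


def hardened_check(flag: int) -> bool:
    """Accept only the exact hardened 'true' constant."""
    return flag == HARDENED_TRUE


def count_bypasses(check, false_value: int) -> int:
    """Count the single-bit faults that turn a 'false' flag into an accepted one."""
    return sum(
        1 for bit in range(WIDTH) if check(flip_bit(false_value, bit))
    )


if __name__ == "__main__":
    print("naive flag bypasses:   ", count_bypasses(naive_check, 0))
    print("hardened flag bypasses:", count_bypasses(hardened_check, HARDENED_FALSE))
```

In this model every one of the 16 possible single-bit faults defeats the naive flag, while none defeats the hardened one. Real firmware would typically combine such constants with other measures (for example redundant checks and random delays), and a sufficiently precise glitch can still skip the comparison itself, so this reduces rather than eliminates the risk.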