Meaning Representations for Natural Languages: Design, Models and Applications

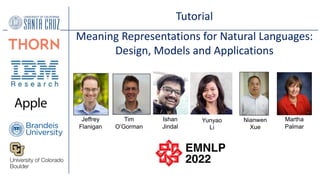

- 1. Tutorial Meaning Representations for Natural Languages: Design, Models and Applications Jeffrey Flanigan, Tim O’Gorman, Ishan Jindal, Yunyao Li, Nianwen Xue, Martha Palmer

- 2. Meaning Representations for Natural Languages Tutorial Part 1 Introduction Jeffrey Flanigan, Tim O’Gorman, Ishan Jindal, Yunyao Li, Martha Palmer, Nianwen Xue

- 3. What should be in a Meaning Representation?

- 4. Motivation: From Sentences to Propositions Who did what to whom, when, where and how? Powell met Zhu Rongji Proposition: meet(Powell, Zhu Rongji) Powell met with Zhu Rongji Powell and Zhu Rongji met Powell and Zhu Rongji had a meeting . . . When Powell met Zhu Rongji on Thursday they discussed the return of the spy plane. meet(Powell, Zhu) discuss([Powell, Zhu], return(X, plane)) debate consult join wrestle battle meet(Somebody1, Somebody2)

- 5. Capturing semantic roles SUBJ SUBJ SUBJ • Tim broke [ the laser pointer.] • [ The windows] were broken by the hurricane. • [ The vase] broke into pieces when it toppled over.

- 6. Capturing semantic roles • Tim broke [ the laser pointer.] • [ The windows] were broken by the hurricane. • [ The vase] broke into pieces when it toppled over. Breaker Thing broken Thing broken

- 7. A proposition as a tree Zhu and Powell discussed the return of the spy plane discuss([Powell, Zhu], return(X, plane)) Zhu and Powell of the spy plane discuss return

- 8. discuss.01 - talk about Aliases: discussion (n.), discuss (v.), have_discussion (l.) • Roles: ARG0: discussant ARG1: topic ARG2: conversation partner, if explicit Valency Lexicon PropBank Frame File - 11,436 framesets Kingsbury & Palmer, LREC 2002 – Pradhan et al., *SEM 2022

- 10. discuss.01 ARG0: Zhu and Powell ARG1: return.01 Arg1: of the spy plane Zhu and Powell discussed the return of the spy plane

- 11. discuss.01 ARG0: Zhu and Powell ARG1: return.01 Arg1: of the spy plane discuss([Powell, Zhu], return(X, plane)) Zhu and Powell discussed the return of the spy plane

- 12. A proposition as a tree Zhu and Powell discussed the return of the spy plane discuss([Powell, Zhu], return(X, plane)) Zhu and Powell of the spy plane discuss.01 return.02 Arg0 Arg1 Arg1

- 13. A proposition as a tree Zhu and Powell discussed the return of the spy plane discuss([Powell, Zhu], return(X, plane)) Zhu and Powell of the spy plane discuss.01 return.02 Arg0 Arg1 Arg1 Arg0

- 14. A proposition as a tree Zhu and Powell discussed the return of the spy plane discuss([Powell, Zhu], return(X, plane)) Zhu and Powell of the spy plane discuss.01 return.02 Arg0 Arg1 Arg1 Arg0 ?? (Zhu)

- 15. A proposition as a tree Zhu and Powell discussed the return of the spy plane discuss([Powell, Zhu], return(X, plane)) Zhu and Powell of the spy plane discuss.01 return.02 Arg0 Arg1 Arg1

- 16. Proposition Bank • Hand annotated predicate argument structures for Penn Treebank • Standoff XML, points directly to syntactic parse tree nodes, 1M words • Doubly annotated and adjudicated • (Kingsbury & Palmer, 2002, Palmer, Gildea, Xue, 2004, …). • Based on PropBank Frame Files • English valency lexicon: ~4K verb entries (2004) → ~11K v,n, adj, prep (2022) • Core arguments – Arg0-Arg5 • ArgM’s for modifiers and adjuncts • Mappings to VerbNet and FrameNet • Annotated PropBank Corpora • English 2M+, Chinese 1M+, Arabic .5M, Hindi/Urdu .6K, Korean, …

- 17. An Abstract Meaning Representation as a graph Zhu and Powell discussed the return of the spy plane discuss([Powell, Zhu], return(X, plane)) Zhu and Powell of the spy plane discuss.01 return.02 Arg0 Arg1 Arg1

- 18. An Abstract Meaning Representation as a graph Zhu and Powell discussed the return of the spy plane discuss([Zhu, Powell], return(X, plane)) and spy plane discuss.01 return.02 Arg0 Arg1 Arg1 AMR drops: Determiners Function words adds: NE tags. Wiki links

- 19. An Abstract Meaning Representation as a graph Zhu and Powell discussed the return of the spy plane discuss([Zhu, Powell], return(X, plane)) and plane discuss.01 return.02 Arg0 Arg1 Arg1 AMR drops: Determiners Function words adds: NE tags. Wiki links Noun Phrase Structure spy.01 Arg0-of

- 20. An Abstract Meaning Representation as a graph Zhu and Powell discussed the return of the spy plane discuss([Powell, Zhu], return(X, plane)) and plane discuss.01 return.02 Arg0 Arg1 Arg1 Arg0 ?? (Zhu) AMR drops: Determiners Function words adds: NE tags. Wiki links Noun Phrase Structure Implicit Arguments Coreference Links spy.01 Arg0-of

- 21. An Abstract Meaning Representation as a graph Zhu and Powell discussed the return of the spy plane discuss([Powell, Zhu], return(X, plane)) and of the spy plane discuss.01 return.02 Arg0 Arg1 Arg1 Arg0 ?? (Zhu) AMR drops: Determiners Function words adds: NE tags. Wiki links Noun Phrase Structure Implicit Arguments Coreference Links spy.01 Arg0-of

- 22. • Stay tuned AMRs – Tim O’Gorman

- 23. Motivation: From Sentences to Propositions Who did what to whom, when, where and how? Powell met Zhu Rongji Proposition: meet(Powell, Zhu Rongji) Powell met with Zhu Rongji Powell and Zhu Rongji met Powell and Zhu Rongji had a meeting . . . When Powell met Zhu Rongji on Thursday they discussed the return of the spy plane. meet(Powell, Zhu) discuss([Powell, Zhu], return(X, plane)) debate consult join wrestle battle meet(Somebody1, Somebody2)

- 24. Motivation: From Sentences to Propositions Who did what to whom, when, where and how? Powell met Zhu Rongji Proposition: meet(Powell, Zhu Rongji) Powell met with Zhu Rongji Powell and Zhu Rongji met Powell and Zhu Rongji had a meeting . . . When Powell met Zhu Rongji on Thursday they discussed the return of the spy plane. meet(Powell, Zhu) discuss([Powell, Zhu], return(X, plane)) debate consult join wrestle battle meet(Somebody1, Somebody2) ENGLISH!

- 25. Motivation: From Sentences to Propositions Who did what to whom, when, where and how? Powell reunió Zhu Rongji Proposition: reunir(Powell, Zhu Rongji) Powell reunió con Zhu Rongji Powell y Zhu Rongji reunió Powell y Zhu Rongji tuvo una reunión . . . Powell se reunió con Zhu Rongji el jueves y hablaron sobre el regreso del avión espía. reunir(Powell, Zhu) hablar([Powell, Zhu], regresar(X, avión)) зустрів التقى 遇见 मुलाकात की พบ meet(Somebody1, Somebody2) Thai Hindi Chinese Ukrainian Arabic Other Languages? Spanish

- 26. • Several languages already have valency lexicons • Chinese, Arabic, Hindi/Urdu, Korean PropBanks, …. • Czech Tectogrammatical SynSemClass , https://ufal.mff.cuni.cz/synsemclass • VerbNets, FrameNets: Spanish, Basque, Catalan, Portuguese, Japanese, … • Linguistic valency lexicons: Arapaho, Lakota, Turkish, Farsi, Japanese, … • For those without, follow EuroWordNet approach: project from English? • Universal Proposition Banks for Multilingual Semantic Role Labeling • See Ishan Jindal in Part 2 • Can AMR be applied universally to build language specific AMRs? • Uniform Meaning Representation • See Nianwen Xue after the AM break How do we cover thousands of languages?

- 27. • Universal PropBank was developed by IBM, primarily with translation • Practical and efficient, produces consistent representations for all languages • Projects English frames to parallel sentences in 23 languages • BUT - May obscure language-specific semantic nuances • Not optimal for target language applications: IE, QA, … • Uniform Meaning Representation • Richer than PropBank alone • Captures language-specific characteristics while preserving consistency • BUT - Producing sufficient hand-annotated data is SLOW! • Comparisons of UP/UMR will teach us a lot about differences between languages UP vs UMR

- 28. • Morning Session, Part 1 • Introduction - Martha Palmer • Background and Resources – Martha Palmer • Abstract Meaning Representations - Tim O’Gorman • Break • Morning Session, Part 2 • Relations to other Meaning Formalisms: AMR, UCCA, Tectogrammatical, DRS (Parallel Meaning Bank), Minimal Recursion Semantics and Semantic Parsing – Tim O’Gorman • Uniform Meaning Representations – Nianwen Xue Tutorial Outline

- 29. • Afternoon Session, Part 1 • Modeling Meaning Representation: SRL - Ishan Jindal • Modeling Meaning Representation: AMR – Jeff Flanigan • Break • Afternoon Session, Part 2 • Applying Meaning Representations – Yunyao Li, Jeff Flanigan • Open Questions and Future Work – Tim O’Gorman Tutorial Outline

- 30. Meaning Representations for Natural Languages Tutorial Part 2 Common Meaning Representations Jeffrey Flanigan, Tim O’Gorman, Ishan Jindal, Yunyao Li, Martha Palmer, Nianwen Xue

- 31. • AMR as a format is older (Kasper 1989, Langkilde & Knight 1998), but with no PropBank, no training data. • PropBank showed that large-scale training sets could be annotated for SRL • Modern AMR (Banarescu et al. 2013) main innovation: making large-scale sembanking possible: • AMR 3.0 more than 60k sentences in English • CAMR more than 20k sentences in Chinese “AMR” annotation

- 32. • Shift from SRL to AMR – from spans to graphs • In SRL we separately represent each predicate’s arguments with spans • AMR instead uses graphs with one node per concept AMR Basics – SRL to AMR

- 33. • “PENMAN” is the text-based format used to represent these graphs AMR Basics – PENMAN (l / like-01 :ARG0 (c / cat :mod (l2 / little)) :ARG1 (e / eat-01 :ARG0 c :ARG1 (c2 / cheese)))

- 34. • Edges are represented by indentation and colons (:EDGE) • Individual variables identify each node AMR Basics – PENMAN (l / like-01 :ARG0 (c / cat :mod (l2 / little)) :ARG1 (e / eat-01 :ARG0 c :ARG1 (c2 / cheese)))

- 35. • If a node has more than one edge, it can be referred to again using that variable. • Terminology: We call that a re-entrancy • This is used for all references to the same entity/thing in a sentence! • This is what allows us to encode graphs in this tree-like format AMR Basics – PENMAN (l / like-01 :ARG0 (c / cat :mod (l2 / little)) :ARG1 (e / eat-01 :ARG0 c :ARG1 (c2 / cheese)))
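
The PENMAN notation described above can be read mechanically into a list of triples. The following is a minimal sketch, not the official PENMAN tooling: `parse_penman` is a hypothetical helper name, it handles only the simple subset of the notation used on these slides, and the modifier node is written with the variable `l2` so that every variable names exactly one node.

```python
import re

# Tokens: parentheses, the "/" separator, :roles, quoted strings, bare atoms.
TOKEN = re.compile(r'\(|\)|/|:[^\s()]+|"[^"]*"|[^\s()/:]+')


def parse_penman(text):
    """Parse a simple PENMAN string into (top_variable, triples).

    Hypothetical helper for illustration; assumes well-formed input.
    """
    tokens = TOKEN.findall(text)
    pos = 0
    triples = []

    def parse_node():
        nonlocal pos
        # A node looks like: ( variable / concept :role target ... )
        assert tokens[pos] == "(" and tokens[pos + 2] == "/"
        var, concept = tokens[pos + 1], tokens[pos + 3]
        pos += 4
        triples.append((var, ":instance", concept))
        while tokens[pos] != ")":
            role = tokens[pos]
            pos += 1
            if tokens[pos] == "(":
                target = parse_node()   # nested node
            else:
                target = tokens[pos]    # reused variable or constant
                pos += 1
            triples.append((var, role, target))
        pos += 1  # consume ")"
        return var

    top = parse_node()
    return top, triples


amr = ('(l / like-01 :ARG0 (c / cat :mod (l2 / little)) '
       ':ARG1 (e / eat-01 :ARG0 c :ARG1 (c2 / cheese)))')
top, triples = parse_penman(amr)
for triple in triples:
    print(triple)
```

In the output, the variable `c` appears as the target of both `(l, :ARG0, c)` and `(e, :ARG0, c)`. That repeated mention is the re-entrancy that turns the bracketed tree into a graph.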

- 36. • Inverse roles allow us to encode things like relative clauses • Any relation of the form “:X-of” is an inverse. • Interchangeable! • (entity, ARG0-of, predicate) generally equal to (predicate, ARG0, entity) AMR Basics – PENMAN (l / like-01 :ARG0 (h / he) :ARG1 (c / cat :ARG0-of (e / eat-01 :ARG1 (c2 / cheese))))

- 37. • Are the graphs the same for “cats that eat cheese” and “cats eat cheese”? • No! Every graph gets a “Top” edge defining the semantic head/root AMR Basics – PENMAN (c / cat :ARG0-of (e / eat-01 :ARG1 (c2 / cheese))) (e / eat-01 :ARG0 (c / cat) :ARG1 (c2 / cheese))
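
The two points above (inverse roles are interchangeable, but the top differs) can be checked with a small sketch. The triples below are written out by hand from the two AMRs on the slide. The `normalize` function and the `NOT_INVERSE` exception list are illustrative assumptions: a few AMR roles such as `:consist-of` end in `-of` without being inverses.

```python
# Roles that end in "-of" but are not inverse roles (assumed exception list).
NOT_INVERSE = {":consist-of", ":prep-on-behalf-of", ":prep-out-of"}


def normalize(triples):
    """Rewrite every (src, ':X-of', tgt) as (tgt, ':X', src)."""
    out = set()
    for src, role, tgt in triples:
        if role.endswith("-of") and role not in NOT_INVERSE:
            out.add((tgt, role[:-3], src))
        else:
            out.add((src, role, tgt))
    return out


# "cats that eat cheese": top is the cat
np_top = "c"
np_triples = [
    ("c", ":instance", "cat"),
    ("c", ":ARG0-of", "e"),
    ("e", ":instance", "eat-01"),
    ("e", ":ARG1", "c2"),
    ("c2", ":instance", "cheese"),
]

# "cats eat cheese": top is the eating event
s_top = "e"
s_triples = [
    ("e", ":instance", "eat-01"),
    ("e", ":ARG0", "c"),
    ("c", ":instance", "cat"),
    ("e", ":ARG1", "c2"),
    ("c2", ":instance", "cheese"),
]

same_relations = normalize(np_triples) == normalize(s_triples)
same_top = np_top == s_top
print(same_relations, same_top)  # True False
```

After normalization the two graphs contain the same relations, so the only thing distinguishing the noun phrase from the sentence is which node is marked as the top.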

- 38. • Named entities are typed and then linked to a “name” node with features for each name token. • 70+ categories like person, government-organization, newspaper, city, food-dish, conference • Note that name strings (and some other things like numbers) are constants: they aren’t assigned variables. • Entity linking: connect to wikipedia entry for each NE (when available) AMR Basics – PENMAN

- 39. • That’s AMR notation! Let’s review before discussing how we annotate AMRs. (e / eat-01 :ARG0 (d / dog) :ARG1 (b / bone :quant 4 :ARG1-of (f / find-01 :ARG0 d))) Labeled parts: variable (e.g. e), concept (e.g. eat-01), constant (4), inverse relation (:ARG1-of), reentrancy (d) AMR Basics – PENMAN

- 40. • AMR does limited normalization aimed at reducing arbitrary syntactic variation (“syntactic sugar”) and maximizing cross-linguistic robustness • Mapping all predicative things (verbs, adjectives, many nouns) to PropBank predicates. Some morphological decomposition • Limited speculation: mostly represent direct contents of sentence (add pragmatic content only when it can be done consistently) • Canonicalize the rest: removal of semantically light predicates and some features like definiteness (controversial) AMR Basics 2 – Annotation Philosophy

- 41. AMR Basics 2 – Annotation Philosophy • We generalize across parts of speech and etymologically related words: “My fear of snakes”, “I am fearful of snakes”, “I fear snakes” and “I’m afraid of snakes” all map to fear-01 • But we don’t generalize over synonyms (hard to do consistently): “My fear of snakes” → fear-01; “I’m terrified of snakes” → terrify-01; “Snakes creep me out” → creep_out-03

- 42. AMR Basics 2 – Annotation Philosophy • Predicates use the PropBank inventory. • Each frame presents annotators with a list of senses. • Each sense has its own definitions for its numbered (core) arguments

- 43. AMR Basics 2 – Annotation Philosophy • If a semantic role is not in the core roles for a roleset, AMR provides an inventory of non-core roles • These express things like :time, :manner, :part, :location, :frequency • Inventory on handout, or in editor (the [roles] button)

- 44. AMR Basics 2 – Annotation Philosophy • Ideally one semantic concept = one node • Multi-word predicates modeled as a single node • Complex words can be decomposed • Only limited, replicable decomposition (e.g. kill does not become “cause to die”) • “The thief was lining his pockets with their investments”: (l / line-pocket-02 :ARG0 (p / person :ARG0-of (t / thieve-01)) :ARG1 (t2 / thing :ARG2-of (i2 / invest-01 :ARG0 (t3 / they))))

- 45. AMR Basics 2 – Annotation Philosophy • All concepts drop plurality, aspect, definiteness, and tense. • Non-predicative terms simply represented in singular, nominative form • A cat / The cat / cats / the cats → (c / cat) • eating / eats / ate / will eat → (e / eat-01) • They / Their / Them → (t / they)

- 46. AMR Basics 2 – Annotation Philosophy • The following sentences all receive the same AMR: The man described the mission as a disaster. The man’s description of the mission: disaster. As the man described it, the mission was a disaster. The man described the mission as disastrous. (d / describe-01 :ARG0 (m / man) :ARG1 (m2 / mission) :ARG2 (d2 / disaster))

- 47. Meaning Representations for Natural Languages Tutorial Part 2 Common Meaning Representations • Format & Basics • Some Details & Design Decisions • Practice - Walking through a few AMRs • Multi-sentence AMRs • Relation to Other Formalisms • UMRs • Open Questions in Representation Representation Roadmap

- 48. Details - Specialized Normalizations • We also have special entity types we use for normalizable entities. • “Tuesday the 19th”: (d / date-entity :weekday (t / tuesday) :day 19) • “five bucks”: (m / monetary-quantity :unit dollar :quant 5)

- 49. Details - Specialized Normalizations • We also have special entity types we use for normalizable entities. • “$3 / gallon”: (r / rate-entity-91 :ARG1 (m / monetary-quantity :unit dollar :quant 3) :ARG2 (v / volume-quantity :unit gallon :quant 1))

- 50. Details - Specialized Predicates • Common constructions for kinship and organizational relations are given general predicates like have-org-role-91 • “The US president”: (p / person :ARG0-of (h / have-org-role-91 :ARG1 (c / country :name (n / name :op1 "US") :wiki "United_States") :ARG2 (p2 / president))) • have-org-role-91: ARG0: office holder; ARG1: organization; ARG2: title of office held; ARG3: description of responsibility

- 51. Details - Specialized Predicates • Common constructions for kinship and organizational relations are given general predicates like have-org-role-91 and have-rel-role-91 • “Her father”: (p / person :ARG0-of (h / have-rel-role-91 :ARG1 (s / she) :ARG2 (f / father))) • have-rel-role-91: ARG0: entity A; ARG1: entity B; ARG2: role of entity A; ARG3: role of entity B; ARG4: relationship basis

- 52. Coreference and Control • Within sentences, all references to the same “referent” are merged into the same variable. • This applies even with pronouns or even descriptions • “Pat saw a moose and she ran”: (a / and :op1 (s / see-01 :ARG0 (p / person :name (n / name :op1 "Pat")) :ARG1 (m / moose)) :op2 (r / run-02 :ARG0 p))

- 53. Reduction of Semantically Light Matrix Verbs • Specific predicates (specifically the English copula) are NOT used in AMR. • Copular predicates which *many languages would omit* are good candidates for removal • Replace with relative SEMANTIC assertions (e.g. :domain is “is an attribute of”) • UMR will discuss alternatives to just omitting these. • “the pizza is free”: (f / free-01 :ARG1 (p / pizza)) • “The house is a pit”: (p / pit :domain (h / house))

- 54. Discourse Connectives and Coordination • For two-place discourse connectives, we define frames • “Although it rained, we walked home”: have-concession-91, with ARG1: main clause and ARG2: “although” clause • For list-like things (including coordination) we use “:op#” to define places in the list: • “Apples and bananas”: (a / and :op1 (a2 / apple) :op2 (b / banana))

- 56. Practice - Let’s Try some Sentences • Feel free to annotate by hand (or ponder how you’d want to represent them) • Edmund Pope tasted freedom today for the first time in more than eight months. • Pope is the American businessman who was convicted last week on spying charges and sentenced to 20 years in a Russian prison. • Useful frames: Taste-01 (Arg0: taster, Arg1: food); Convict-01 (Arg0: judge, Arg1: person convicted, Arg2: convicted of what); Spy-01 (Arg0: secret agent, Arg1: entity spied/seen); Charge-01, asking price (Arg0: seller, Arg1: asking price, Arg2: buyer, Arg3: commodity); Charge-05, assign a role, including criminal charges (Arg0: assigner, Arg1: assignee, Arg2: role or crime); Sentence-01 (Arg0: judge/jury, Arg1: criminal, Arg2: punishment) • Useful normalized forms: rate-entity, ordinal-entity, date-entity, temporal-quantity • Useful NER types: person, country

- 57. Practice - Let’s Try some Sentences Edmund Pope tasted freedom today for the first time in more than eight months. (t2 / taste-01 :ARG0 (p / person :wiki "Edmond_Pope" :name (n2 / name :op1 "Edmund" :op2 "Pope")) :ARG1 (f / free-04 :ARG1 p) :time (t3 / today) :ord (o3 / ordinal-entity :value 1 :range (m / more-than :op1 (t / temporal-quantity :quant 8 :unit (m2 / month)))))

- 58. Practice - Let’s Try some Sentences Pope is the American businessman who was convicted last week on spying charges and sentenced to 20 years in a Russian prison. (b2 / businessman :mod (c5 / country :wiki "United_States" :name (n6 / name :op1 "America")) :domain (p / person :wiki "Edmond_Pope" :name (n5 / name :op1 "Pope")) :ARG1-of (c4 / convict-01 :ARG2 (c / charge-05 :ARG1 b2 :ARG2 (s2 / spy-01 :ARG0 p)) :time (w / week :mod (l / last))) :ARG1-of (s / sentence-01 :ARG2 (p2 / prison :mod (c3 / country :wiki "Russia" :name (n4 / name :op1 "Russia")) :duration (t3 / temporal-quantity :quant 20 :unit (y2 / year))) :ARG3 s2))

- 60. A final component in AMR: Multi-sentence! • AMR 3.0 release contains Multi-sentence AMR annotations • Document-level coreference: • Connecting mentions that co-refer • Connecting some partial coreference • Making cross-sentence implicit semantic roles • John took his car to the store. • He bought milk (from the store). • He put it in the trunk.

- 61. A final component in AMR: Multi-sentence! • AMR 3.0 release contains Multi-sentence AMR annotations • Annotation was done between AMR variables, not raw text: nodes are coreferent • (t / take-01 :ARG0 (p / person :name (n / name :op1 "John")) :ARG1 (c / car :poss p) :ARG3 (s / store)) • (b / buy-01 :ARG0 (h / he) :ARG1 (m / milk))
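
Because the annotation links AMR variables rather than text spans, a document-level coreference chain can be modeled as a cluster of (sentence index, variable) pairs. The sketch below groups pairwise links with a small union-find. The function name `coref_clusters`, the link format and the variables for the third sentence ("He put it in the trunk") are assumptions made for illustration; the released MS-AMR data uses its own format.

```python
def coref_clusters(links):
    """Group pairwise coreference links into chains (illustrative helper).

    Each link joins two nodes, where a node is (sentence_index, variable).
    """
    parent = {}

    def find(x):
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path compression
            x = parent[x]
        return x

    for a, b in links:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    clusters = {}
    for node in list(parent):
        clusters.setdefault(find(node), set()).add(node)
    return sorted(sorted(c) for c in clusters.values())


# Sentence 1: John (p) took his car to the store.
# Sentence 2: He (h) bought milk (m).
# Sentence 3: He (h) put it (i) in the trunk.  [variables assumed]
links = [
    ((1, "p"), (2, "h")),  # John = he
    ((2, "h"), (3, "h")),  # he = he
    ((2, "m"), (3, "i")),  # milk = it
]
for chain in coref_clusters(links):
    print(chain)
```

The first chain printed contains the three mentions of John and the second contains milk and "it". A variable such as `h` is only meaningful together with its sentence index, because each sentence-level AMR reuses short variable names.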

- 62. A final component in AMR: Multi-sentence! • AMR 3.0 release contains Multi-sentence AMR annotations • "implicit role" annotation was done by showing the remaining roles to annotators and allowing them to be added to coreference chains. • (t / take-01 :ARG0 (p / person :name (n / name :op1 "John")) :ARG1 (c / car :poss p) :ARG2 (x / implicit :op1 "taken from, source…") :ARG3 (s / store)) • (b / buy-01 :ARG0 (h / he) :ARG1 (m / milk) :ARG2 (x / implicit :op1 "seller"))

- 63. A final component in AMR: Multi-sentence! • AMR 3.0 release contains Multi-sentence AMR annotations • Implicit roles are worth considering for meaning representation, especially for languages other than English • Null subject (and sometimes null object) constructions are very cross-linguistically common, can carry lots of information • Arguments of nominalizations can carry a lot of assumed information in scientific domains

- 64. A final component in AMR: Multi-sentence! • Multi-sentence AMR data: training and evaluation data for creating a graph for a whole document • Was not impossible before multi-sentence AMR: could bootstrap with span-based coreference data • Also extended to spatial AMRs (human-robot interactions; Bonn et al. 2022) • MS-AMR work was done on top of existing gold AMR annotations, as a separate process.

- 66. Comparison to Other Frameworks • Meaning representations vary along many dimensions! • How meaning is connected to text • Relationship to logical and/or executable form • Mapping to Lexicons/Ontologies/Tasks • Relationship to discourse • We’ll overview these followed by some side-by-side comparisons

- 67. Alignment to Text / Compositionality • Historical approach to meaning representations: represent context-free semantics, as defined by a particular grammar model • AMR at other extreme: AMR graph annotated for a single sentence, but no individual mapping from tokens to nodes

- 68. Alignment to Text / Compositionality Oepen & Kuhlmann (2016) “flavors” of meaning representations: • Type 0 (Bilexical): nodes each correspond to one token (dependency parsing); e.g. Universal Dependencies, DM (MRS-connected), Prague Semantic Dependencies • Type 1 (Anchored): nodes are aligned to text (can be subtoken or multi-token); e.g. UCCA, EDS (MRS-connected), DRS-based frameworks (PMB / GMB), Prague tectogrammatical • Type 2 (Unanchored): no mapping from graph to surface form; e.g. AMR, some executable/task-specific semantic parsing frameworks
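
The three flavors can be told apart by looking only at how graph nodes are anchored to tokens. The function below is a simplified sketch of that distinction, not Oepen & Kuhlmann's formal definition. `graph_flavor` and the `anchors` mapping (node to a set of token indices) are hypothetical names, and subtoken anchoring is ignored.

```python
def graph_flavor(nodes, anchors):
    """Return 0 (bilexical), 1 (anchored) or 2 (unanchored).

    nodes:   iterable of node identifiers
    anchors: dict mapping a node to the set of token indices it aligns to
             (illustrative format; missing or empty means "no anchor")
    """
    anchored = {n: set(anchors.get(n, ())) for n in nodes}
    if not any(anchored.values()):
        return 2  # no node is tied to the surface string
    used = [tok for toks in anchored.values() for tok in toks]
    one_token_each = all(len(toks) == 1 for toks in anchored.values())
    if one_token_each and len(used) == len(set(used)):
        return 0  # every node is exactly one distinct token
    return 1      # some anchoring, but not one node per token


# Dependency-style graph over "cats eat cheese"
print(graph_flavor(["cats", "eat", "cheese"],
                   {"cats": {0}, "eat": {1}, "cheese": {2}}))   # 0

# A node spanning two tokens, plus an unanchored abstract node
print(graph_flavor(["n1", "n2"], {"n1": {0, 1}}))               # 1

# AMR-style graph with no alignment information at all
print(graph_flavor(["e", "c", "c2"], {}))                       # 2
```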

- 69. Alignment to Text / Compositionality • Less thoroughly defined: adherence to grammar/compositionality (cf. Bender et al. 2015) • Some frameworks (MRS/DRS below) have particular assertions about how a given meaning representation was derived (tied to a particular grammar) • AMR encodes many useful things that are often *not* considered compositional: named entity typing, cross-sentence coreference, word senses, etc. • Spectrum from “sentence meaning” to extragrammatical inference: only encode “compositional” meanings predicted by a particular theory of grammar → some useful pragmatic inference (e.g. sense distinctions, named entity types) → any wild inferences needed for task

- 70. Alignment to Text / Compositionality - UCCA • Universal Conceptual Cognitive Annotation: based on a typological theory (Dixon’s BLT) of how to do coarse-grained semantics across languages • Similar to a cross between dependency and constituency parses (labeled edges), sometimes very syntactic • Coarse-grained roles, e.g.: • A: participant • S: State • C: Center • D: Adverbial • E: elaborator • “Anchored” graphs, in the Oepen & Kuhlmann taxonomy (somewhat compositional, but no formal rules for how a given node is derived)

- 71. Alignment to Text / Compositionality - Prague • Very similar to AMR with more general semantic roles (predicates use Vallex predicates (valency lexicon) and a shared set of semantic roles similar to VerbNet) • Semantic graph is aligned to syntactic graph layers (“type 1”) • “Prague Czech-English Dependency Treebank” • “PSD” reduced form fully bilexical (“Type 0”) for dependency parsing. • Full PCEDT also has rich semantics like implicit roles (e.g. null subjects) – “anchored” (“Type 1”) For the Czech version of “An earthquake struck Northern California, killing more than 50 people.” (Čmejrek et al. 2004)

- 72. Logical & Executable Forms • Lots of logical desiderata: • Modeling whether events happen and/or are believed (and other modality questions): Sam believes that Bill didn’t eat the plums. • Understanding quantifications: whether “every child has a favorite song” refers to one song or many • Technically our default assumption for AMR is Neo-Davidsonian: bag of triples like “instance-of(b, believe-01)”, “instance-of(h, he)”, “ARG0(b, h)” • One cannot modify more than one node in the graph • PENMAN is a bracketed tree that can be treated like a logical form (with certain assumptions or the addition of certain new annotations) • Artzi et al. (2015), Bos (2016), Stabler (2017), Pustejovsky et al. (2019), etc. • Competing frameworks like DRS and MRS are more specialized for this.
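
The Neo-Davidsonian reading mentioned above treats an AMR as an existentially quantified conjunction over its triples. A minimal sketch of that rendering follows, using the three triples quoted on the slide. The function name `to_logic` and the output notation are assumptions for illustration, and the conjunction deliberately carries no scope information, which is the limitation the slide points out.

```python
def to_logic(triples):
    """Render AMR triples as a flat existential conjunction (illustrative)."""
    # Every node with an :instance triple becomes a quantified variable.
    variables = sorted({src for src, role, _ in triples if role == ":instance"})
    conjuncts = []
    for src, role, tgt in triples:
        if role == ":instance":
            conjuncts.append(f"instance-of({src}, {tgt})")
        else:
            conjuncts.append(f"{role[1:]}({src}, {tgt})")
    return "∃ " + ", ".join(variables) + " : " + " ∧ ".join(conjuncts)


triples = [
    ("b", ":instance", "believe-01"),
    ("h", ":instance", "he"),
    ("b", ":ARG0", "h"),
]
print(to_logic(triples))
# ∃ b, h : instance-of(b, believe-01) ∧ instance-of(h, he) ∧ ARG0(b, h)
```

Every conjunct mentions at most two nodes, so an attribute such as negative polarity can only attach to a single node. Expressing what a negation or a quantifier takes scope over requires extra assumptions or annotations.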

- 73. Logical & Executable Forms • Lots of logical desiderata: • Modeling whether events happen and/or are believed (and other modality questions): Sam believes that Bill didn’t eat the plums. • Understanding quantifications: whether “every child has a favorite song” refers to one song or many • Technically our default assumption for AMR just means that something like “:polarity -” is a feature of a single node; no semantics for quantifiers like “every” • With certain assumptions or the addition of certain new annotations, PENMAN is a bracketed tree that can be treated like a logical form • Artzi et al. (2015), Bos (2016), Stabler (2017), Pustejovsky et al. (2019), etc.; proposals for “UMR” treatments as well. • Competing frameworks like DRS and MRS are more specialized for this.

- 74. Logical & Executable Forms - DRS • Discourse Representation Structures (annotations in Groningen Meaning Bank and Parallel Meaning Bank) • DRS frameworks do scoped meaning representation • Outputs originally modified from CCG parser LF outputs -> DRS • DRS uses “boxes” which can be negated, asserted, believed in. • This is not natively a graph representation! “Box variables” (bottom) are one way of thinking about these • a triple like “agent(e1, x1)” is part of b3 • Box b3 is modified (e.g. b2 POS b3)

- 75. Logical & Executable Forms - DRS • Grounded in long theoretical DRS tradition (Heim & Kamp) for handling discourse referents, presuppositions, discourse connectives, temporal relations across sentences, etc. • DRS for “everyone was killed” (Liu et al. 2021)

- 76. Logical & Executable Forms - MRS Minimal Recursion Semantics (and related frameworks) • Copestake (1997) model proposed for semantics of HPSG - this is connected to other underspecification solutions (Glue semantics / hole semantics / etc.) • Define set of constraints over which variables outscope other variables • HPSG grammars like the English Resource Grammar produce ERS (English resource semantics) outputs (which are roughly MRS) and have been modified into a simplified DM format (“type 0” bilexical dependency)

- 77. Logical & Executable Forms - MRS • Underspecification in practice: • MRS can be thought of as many fragments with constraints on how they scope together • Those define a set of MANY possible combinations into a fully scoped output, e.g.: Every dog barks and chases a cat (as interpreted in Manshadi et al. 2017)
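
The idea that a few constraints license many fully scoped readings can be illustrated with a toy enumeration. This is not how MRS is actually resolved: real MRS uses handle constraints over tree-shaped fragments. Here each reading is simplified to a linear order of scope-bearing operators, and `scope_readings` and the operator labels are hypothetical.

```python
from itertools import permutations


def scope_readings(operators, outscopes=()):
    """List orderings (widest scope first) that satisfy every constraint.

    outscopes: pairs (a, b) meaning "a must take scope over b".
    Toy model only: a reading is a linear order of operators.
    """
    readings = []
    for order in permutations(operators):
        rank = {op: i for i, op in enumerate(order)}
        if all(rank[a] < rank[b] for a, b in outscopes):
            readings.append(order)
    return readings


ops = ["every_dog", "a_cat"]

# Underspecified: both scope orders remain available.
print(scope_readings(ops))
# [('every_dog', 'a_cat'), ('a_cat', 'every_dog')]

# One added constraint removes a reading.
print(scope_readings(ops, outscopes=[("every_dog", "a_cat")]))
# [('every_dog', 'a_cat')]
```

With no constraints, n operators yield n! candidate orders in this toy model, which is why an underspecified representation stores the constraints rather than listing every reading.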

- 78. Logical & Executable Forms - MRS • Variables starting with h are “handle” variables used to define constraints on scope. • h19 = things under scope of negation • h21 = leave_v_1 head • h19 =q h21: equality modulo quantifiers (neg outscopes leave) • “forest” of possible readings • Takeaway: Constraints on which variables “outscope” others can add flexible amounts of scope info

- 79. Lexicon/Ontology Differences • Predicates can use different ontologies – e.g. more grounded in grammar/valency, or more tied to taxonomies like WordNet • Semantic Roles can be encoded differently, e.g. with non-lexicalized semantic roles (discussed for UMR later) • Some additional proposals: “BabelNet Meaning Representation” proposes using VerbAtlas (clusters over WordNet senses with VerbNet semantic role templates)

  | | DRS (GMB/PMB) | MRS | Prague (PCEDT) | AMR | UCCA |
  |---|---|---|---|---|---|
  | Semantic roles | VerbNet (general roles) | General roles | General roles + valency lexicon | Lexicalized numbered arguments | Fixed general roles |
  | Predicates | WordNet | Grammatical entries | Vallex valency lexicon (PropBank-like) | PropBank predicates | A few types (State vs process …) |
  | Non-predicates | WordNet | Lemmas | Lemmas | Named entity types | Lemmas |

- 80. Task-specific Representations • Many use “Semantic Parsing” to refer to task-specific, executable representations • Text-to-SQL • interaction with robots, text to code/commands • interaction with deterministic systems like calendars/travel planners • Similar distinctions to a general-purpose meaning representation, BUT • May need to map into specific task taxonomies and ignore content not relevant to task • Can require more detail or inference than what’s assumed for “context-free” representations • Often can be thought of as first-order logic forms: simple predicates + scope

- 81. Task-specific Representations • Classic datasets (Table from Dong & Lapata 2016) regard household commands or querying KBs • Recent tasks for text-to-SQL

- 82. Task-specific Representations - Spatial AMR • An additional example of task-specific semantic parsing is human-robot interaction • Non-trivial to simply pull those interactions from AMR: normal human language is not normally sufficiently informative about spatial positioning, frames of reference, etc. • Spatial AMR project (Bonn et al. 2020) is a good example of a project attempting to add all the “additional detail” needed to handle structure-building dialogues (giving instructions for building Minecraft structures) • Released with a dataset of building actions, successes/failures, and views of the event from different angles.

- 83. Discourse-Level Annotation • Do you do multi-sentence coreference? • Partial coreference (set-subset, implicit roles, etc.)? • Discourse connectives? • Treatment of multi-sentence tense, modality, etc.? • Prague Tectogrammatical annotations & AMR are the only general-purpose representations with extensive multi-sentence annotations

- 84. Overviewing Frameworks vs. AMR

  | Framework | Alignment | Logical Scoping & Interpretation | Ontologies and Task-Specific | Discourse-Level |
  |---|---|---|---|---|
  | DRS (Groningen / Parallel) | Compositional / Anchored | Scoped representation (boxes) | Rich predicates (WordNet), general roles | Can handle referents, connectives |
  | MRS | Compositional / Anchored | Underspecified scoped representation | Simple predicates, general roles | N/a |
  | UCCA | Anchored | Not really scoped | Simple predicates, general roles | Some implicit roles |
  | Prague Tecto | Anchored | Not really scoped | Rich predicates, semi-lexicalized roles | Rich multi-sentence coreference |
  | AMR | Unanchored (English); Anchored (Chinese) | Not really scoped yet | Rich predicates, lexicalized roles | Rich multi-sentence coreference |

- 85. End of Meaning Representation Comparison • What’s next: UMR, a proposal among AMR-connected scholars on next steps for AMR. • Questions about how AMR is annotated? • Questions about how it relates to other meaning representation formalisms?

- 86. Meaning Representations for Natural Languages Tutorial Part 2 Common Meaning Representations Jeffrey Flanigan, Tim O’Gorman, Ishan Jindal, Yunyao Li, Martha Palmer, Nianwen Xue

- 87. Outline ► Background ► Do we need a new meaning representation? What’s wrong with existing meaning representations? ► Aspects of Uniform Meaning Representation (UMR) ► UMR starts with AMR but makes a number of enrichments ► UMR is a document-level meaning representation that represents temporal dependencies, modal dependencies, and coreference ► UMR is a cross-lingual meaning representation that separates aspects of meaning that are shared across languages (language-independent) from those that are idiosyncratic to individual languages (language-specific) ► UMR-Writer -- a tool for annotating UMRs

- 88. Why aren’t existing meaning representations sufficient? ► Existing meaning representations vary a great deal in their focus and perspective ► Formal semantic representations aimed at supporting logical inference focus on the proper representation of quantification, negation, tense, and modality (e.g., Minimal Recursion Semantics (MRS) and Discourse Representation Theory (DRT)). ► Lexical semantic representations focus on the proper representation of core predicate-argument structures, word sense, named entities and relations between them, and coreference (e.g., Tectogrammatical Representation (TR), AMR). ► The semantic ontologies they use also differ a great deal. For example, MRS doesn’t have a classification of named entities at all, while AMR has over 100 types of named entities

- 89. UMR uses AMR as a starting point ► Our starting point is AMR, which has a number of attractive properties: ► easy to read, ► scalable (can be directly annotated without relying on syntactic structures), ► has information that is important to downstream applications (e.g., semantic roles, named entities and coreference), ► represented in a well-defined mathematical structure (a single-rooted, directed, acyclic graph) ► Our general strategy is to augment AMR with meaning components that are missing and adapt it to cross-lingual settings

- 90. Participants of the UMR project ► UMR stands for Uniform Meaning Representation, and it is an NSF-funded collaborative project between Brandeis University, University of Colorado, and University of New Mexico, with a number of partners outside these institutions

- 91. From AMR to UMR Van Gysel et al. (2021) ► At the sentence level, UMR adds: ► An aspect attribute to eventive concepts ► Person and number attributes for pronouns and other nominal expressions ► Quantification scope between quantified expressions ► At the document level UMR adds: ► Temporal dependencies in lieu of tense ► Modal dependencies in lieu of modality ► Coreference relations beyond sentence boundaries ► To make UMR cross-linguistically applicable, UMR ► defines a set of language-independent abstract concepts and participant roles, ► uses lattices to accommodate linguistic variability ► designs specifications for complicated mappings between words and UMR concepts.

- 92. UMR sentence-level additions ► An aspect attribute to event concepts ► Aspect refers to the internal constituency of events: their temporal and qualitative boundedness ► Person and number attributes for pronouns and other nominal expressions ► A set of concepts and relations for discourse relations between clauses ► Quantification scope between quantified expressions to facilitate translation of UMR to logical expressions

- 93. UMR attribute: aspect [Figure: the aspect lattice. Node labels: Habitual, Imperfective, Process, State, Atelic Process, Perfective, Activity, Endeavor, Performance, Reversible State, Irreversible State, Inherent State, Point State, Undirected Activity, Directed Activity, Semelfactive, Undirected Endeavor, Directed Endeavor, Incremental Accomplishment, Nonincremental Accomplishment, Directed Achievement (Reversible, Irreversible)]

- 94. UMR attribute: coarse-grained aspect ► State: unspecified type of state ► Habitual: an event that occurs regularly in the past or present, including generic statements ► Activity: an event that has not necessarily ended and may be ongoing at Document Creation Time (DCT). ► Endeavor: a process that ends without reaching completion (i.e., termination) ► Performance: a process that reaches a completed result state

- 95. Coarse-grained Aspect as a UMR attribute ► He wants to travel to Albuquerque. (w / want :aspect State) ► She rides her bike to work. (r / ride :aspect Habitual) ► He was writing his paper yesterday. (w / write :aspect Activity) ► Mary mowed the lawn for thirty minutes. (m / mow :aspect Endeavor)

- 96. Fine-grained Aspect as a UMR attribute ► My cat is hungry. (h / have-mod-91 :aspect Reversible state) ► The wine glass is shattered. (h / have-mod-91 :aspect Irreversible state) ► My cat is black and white. (h / have-mod-91 :aspect Inherent state) ► It is 2:30pm. (h / have-mod-91 :aspect Point state)

- 97. AMR vs UMR on how pronouns are represented ► In AMR, pronouns are treated as unanalyzable concepts ► However, pronouns differ from language to language, so UMR decomposes them into person and number attributes ► These attributes can be applied to nominal expressions too AMR: (s / see-01 :ARG0 (h/ he) :ARG1 (b/ bird :mod (r/ rare))) UMR: (s / see-01 :ARG0 (p / person :ref-person 3rd :ref-number Sing.) :ARG1 (b / bird :mod (r/ rare) :ref-number Plural)) “He saw rare birds today.”
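
The decomposition on slide 97 can be sketched as a small lookup. This is only an illustration: the table `PRONOUN_FEATURES` and the function `pronoun_to_umr` are hypothetical names, the table covers a handful of English pronouns, and it ignores cases such as singular "they".

```python
# Hypothetical lookup table (not part of UMR): English pronoun -> (ref-person, ref-number).
PRONOUN_FEATURES = {
    "i": ("1st", "Singular"),
    "we": ("1st", "Plural"),
    "you": ("2nd", None),  # English "you" does not mark number, so none is emitted
    "he": ("3rd", "Singular"),
    "she": ("3rd", "Singular"),
    "they": ("3rd", "Plural"),
}


def pronoun_to_umr(variable, pronoun):
    """Render a pronoun as a UMR-style `person` node with person/number attributes."""
    person, number = PRONOUN_FEATURES[pronoun.lower()]
    parts = [f"({variable} / person", f":ref-person {person}"]
    if number is not None:
        parts.append(f":ref-number {number}")
    return " ".join(parts) + ")"


print(pronoun_to_umr("p", "he"))
```

A language that does not distinguish, say, 2nd from 3rd person would need a coarser value in the first slot, which is what the lattices discussed later in the deck are for.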

- 100. Discourse relations in UMR ► In AMR, there is a minimal system for indicating relationships between clauses - specifically coordination: ► and concept and :opX relations for addition ► or/either/neither concepts and :opX relations for disjunction ► contrast-01 and its participant roles for contrast ► Many subordinated relationships are represented through participant roles, e.g.: ► :manner ► :purpose ► :condition ► UMR makes explicit the semantic relations between (more general) “coordination” semantics and (more specific) “subordination” semantics

- 101. Discourse relations in UMR [Figure: lattice of discourse relations. Node labels: inclusive-disj, or, and + but, exclusive-disj, and + unexpected, and + contrast, but-91, and, consecutive, additive, unexpected-co-occurrence-91, contrast-91, :apprehensive, :condition, :cause, :purpose, :temporal, :manner, :pure-addition, :substitute, :concession, :concessive-condition, :subtraction]

- 102. Disambiguation of quantification scope in UMR “Someone didn’t answer all the questions” (a / answer-01 :ARG0 (p / person) :ARG1 (q / question :quant All :polarity -) :pred-of (s / scope :ARG0 p :ARG1 q)) ∃p(person(p) ∧ ¬∀q(question(q) → ∃a(answer-01(a) ∧ ARG1(a, q) ∧ ARG0(a, p))))
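
To see why the scope annotation matters, the logical form on slide 102 can be evaluated over a tiny hand-made domain. The sketch below is an illustration under stated assumptions: the people, questions, and answering events are invented, each answering event is collapsed to a `(person, question)` pair, and the second function encodes an alternative reading (someone answered none of the questions) that is not on the slide and is included only for contrast.

```python
def not_all_reading(people, questions, answers):
    """Slide 102 reading: some person p such that NOT every question q was answered by p."""
    return any(
        not all((p, q) in answers for q in questions)
        for p in people
    )


def none_reading(people, questions, answers):
    """Contrast reading (assumed, not from the slide): some person answered no question."""
    return any(
        all((p, q) not in answers for q in questions)
        for p in people
    )


# Invented toy domain: Ann answered everything, Bob skipped q2.
people = {"ann", "bob"}
questions = {"q1", "q2"}
answers = {("ann", "q1"), ("ann", "q2"), ("bob", "q1")}

print(not_all_reading(people, questions, answers))  # Bob missed q2
print(none_reading(people, questions, answers))     # nobody answered nothing
```

The two readings come apart on this domain, which is the kind of difference a `scope` node is meant to pin down.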

- 103. Quantification scope annotation ► Scope will not be annotated for summation readings, nor is it annotated where a distributive or collective reading can be predictably derived from the lexical semantics. ► The linguistics students ran 5 kilometers to raise money for charity. ► The linguistics students carried a piano into the theater. ► Ten hurricanes hit six states over the weekend. ► The scope annotation only comes into play when some overt linguistic element forces an interpretation that diverges from the lexical default ► The linguistics students together ran 200 kilometers to raise money for charity. ► The bodybuilders each carried a piano into the theater. ► Ten hurricanes each hit six states over the weekend.

- 104. From AMR to UMR Van Gysel et al. (2021) ► At the sentence level, UMR adds: ► An aspect attribute to eventive concepts ► Person and number attributes for pronouns and other nominal expressions ► Quantification scope between quantified expressions ► At the document level UMR adds: ► Temporal dependencies in lieu of tense ► Modal dependencies in lieu of modality ► Coreference relations beyond sentence boundaries ► To make UMR cross-linguistically applicable, UMR ► defines a set of language-independent abstract concepts and participant roles, ► uses lattices to accommodate linguistic variability ► designs specifications for complicated mappings between words and UMR concepts.

- 105. UMR is a document-level representation ► Temporal relations are added to UMR graphs as temporal dependencies ► Modal relations are also added to UMR graphs as modal dependencies ► Coreference is added to UMR graphs as identity or subset relations between named entities or events

- 106. No representation of tense in AMR (t / talk-01 :ARG0 (s / she) :ARG2 (h / he) :medium (l / language :name (n / name :op1 "French"))) ► “She talked to him in French.” ► “She is talking to him in French.” ► “She will talk to him in French.”

- 107. Adding tense seems straightforward... Adding tense to AMR involves defining a temporal relation between event-time and the Document Creation Time (DCT) or speech time (Donatelli et al 2019). (t / talk-01 :time (b / before :op1 (n / now)) :ARG0 (s / she) :ARG2 (h / he) :medium (l / language :name (n2 / name :op1 "French"))) “She talked to him in French.”

- 108. ... but it isn’t ► For some events, the temporal relation to the DCT or speech time is undefined. “John said he would go to the florist shop”. ► Is “going to the florist shop” before or after the DCT? ► Its temporal relation is more naturally defined with respect to “said”. ► In quoted speech, the speech time has shifted. “I visited my aunt on the weekend,” Tom said. ► The reference time for “visited” has shifted to the time when Tom said this. We only know indirectly that the “visiting” event happened before the DCT. ► Tense is not universally grammaticalized, e.g., in Chinese

- 109. Limitations of simply adding tense ► Even in cases where tense, i.e., the temporal relation between an event and the DCT, is clear, tense may not give us the most precise temporal location of the event. ► John went into the florist shop. ► He had promised Mary some flowers. ► He picked out three red roses, two white ones and one pale pink. ► Example from (Webber 1988) ► All three events happened before the DCT, but we also know that the “going” event happened after the “promising” event, but before the “picking out” event.

- 110. UMR represents temporal relations in a document as temporal dependency structures (TDS) ► The temporal dependency structure annotation involves identifying the most specific reference time for each event ► Time expressions and other events are normally the most specific reference times ► In some cases, an event may require two reference times in order to make its temporal location as specific as possible Zhang and Xue (2018); Yao et al. (2020)

- 111. TDS Annotation ► If an event is not clearly linked temporally to either a time expression or another event, then it can be linked to the DCT or tense metanodes ► Tense metanodes capture vague stretches of time that correspond to grammatical tense ► Past_Ref, Present_Ref, Future_Ref ► DCT is a more specific reference time than a tense metanode

- 112. Temporal Dependency Structure (TDS) ► If we identify a reference time for every event and time expression in a document, the result will be a Temporal Dependency Graph. “700 people descended on the state Capitol today, according to Michigan State Police. State Police made one arrest, where one protester had assaulted another, Lt. Brian Oleksyk said.” [Figure: temporal dependency graph for this passage. Nodes: ROOT, DCT (4/30/2020), today, descended, arrested, assaulted. Edge labels: Temporal, Depends-on, Contained (three edges), After, Before]

- 113. Genre in TDS Annotation ► Temporal relations function differently depending on the genre of the text (e.g., Smith 2003) ► Certain genres proceed in temporal sequence from one clause to the next ► While other genres involve generally non-sequenced events ► News stories are a special type ► many events are temporally sequenced ► temporal sequence does not match with sequencing in the text

- 114. TDS Annotation ► Annotators may also consider the modal annotation when annotating temporal relations ► Events in the same modal “world” can be temporally linked to each other ► Events in non-real mental spaces rarely make good reference times for events in the “real world” ► Joe got to the restaurant, but his friends had not arrived. So, he sat down and ordered a drink. ► Exceptions to this are deontic complement-taking predicates ► Events in the complement are temporally linked to the complement-taking predicate ► E.g. I want to travel to France: After (want, travel)

- 115. Modality in AMR ► Modality characterizes the reality status of events, without which the meaning representation of a text is incomplete ► AMR has six concepts that represent modality: ► possible-01, e.g., “The boy can go.” ► obligate-01, e.g., “The boy must go.” ► permit-01, e.g., “The boy may go.” ► recommend-01, e.g., “The boy should go.” ► likely-01, e.g., “The boy is likely to go.” ► prefer-01, e.g., “The boy would rather go.” ► Modality in AMR is represented as senses of an English verb or adjective. ► However, the same exact concepts for modality may not apply to other languages

- 116. Modal dependency structure ► Modality is represented as a dependency structure in UMR ► Similar to the temporal relations ► Events and conceivers (sources) are nodes in the dependency structure ► Modal strength and polarity values characterize the edges ► Mary might be walking the dog. [Figure: AUTH → walk, edge labeled Neutral]

- 117. Modal dependency structure ► A dependency structure: ► Allows for the nesting of modal operators (scope) ► Allows for the annotation of scope relations between modality and negation ► Allows for the import of theoretical insights from Mental Space Theory (Fauconnier 1994, 1997)

- 118. Modal dependency structure ► There are two types of nodes in the modal dependency structure: events and conceivers ► Conceivers ► Mental-level entities whose perspective is modelled in the text ► Each text has an author node (or nodes) ► All other conceivers are children of the AUTH node ► Conceivers may be nested under other conceivers ► Mary said that Henry wants... [Figure: conceiver chain AUTH → MARY → HENRY]
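
A modal dependency structure of this kind can be held as a child-to-parent map with a label on each edge. The sketch below is a minimal illustration, not the annotation format itself: the dictionary `MODAL_EDGES` and both helper functions are hypothetical names, the `walk` edge comes from the "Mary might be walking the dog" example, and the `FullAff` labels on the MARY and HENRY edges are assumptions, since the slide does not show their strength values.

```python
# child -> (parent, edge label). Labels on MARY and HENRY are assumed for illustration.
MODAL_EDGES = {
    "AUTH": ("ROOT", "MODAL"),
    "walk": ("AUTH", "Neutral"),   # "Mary might be walking the dog."
    "MARY": ("AUTH", "FullAff"),   # assumed label
    "HENRY": ("MARY", "FullAff"),  # assumed label
}


def path_from_root(node, edges=MODAL_EDGES):
    """Return the chain of nodes from ROOT down to `node`."""
    path = [node]
    while node in edges:
        node = edges[node][0]
        path.append(node)
    return list(reversed(path))


def edge_label(node, edges=MODAL_EDGES):
    """Return the strength/polarity label on the edge leading into `node`."""
    return edges[node][1]


print(path_from_root("HENRY"))
print(edge_label("walk"))
```

Reading the path top-down gives the nesting of perspectives: whatever Henry wants is reported by Mary, whose saying is in turn reported by the author.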

- 119. Epistemic strength lattice [Figure: lattice with nodes Epistemic Strength, Non-neutral, Non-full, Full, Partial, Neutral, Strong partial, Weak partial, Strong neutral, Weak neutral] ► Full: The dog barked. ► Partial: The dog probably barked. ► Neutral: The dog might have barked.

- 120. Modal dependency structure (MDS) (Vigus et al., 2019; Yao et al., 2021) “700 people descended on the state Capitol today, according to Michigan State Police. State Police made one arrest, where one protester had assaulted another, Lt. Brian Oleksyk said.” [Figure: modal dependency graph for this passage. Nodes: ROOT, AUTH (CNN), Michigan State Police, Lt. Brian Oleksyk, descended, arrested, assaulted. Edge labels: MODAL, FULLAFF (five edges)]

- 121. Entity Coreference in UMR ► same-entity: 1. Edmund Pope tasted freedom today for the first time in more than eight months. 2. He denied any wrongdoing. ► subset: 1. He is very possessive and controlling but he has no right to be as we are not together.

- 122. Event coreference in UMR ► same-event 1. El-Shater and Malek’s property was confiscated and is believed to be worth millions of dollars. 2. Abdel-Maksoud stated the confiscation will affect the Brotherhood’s financial bases. ► same-event 1. The Three Gorges project on the Yangtze River has recently introduced the first foreign capital. 2. The loan , a sum of 12.5 million US dollars , is an export credit provided to the Three Gorges project by the Canadian government , which will be used mainly for the management system of the Three Gorges project . ► subset: 1. 1 arrest took place in the Netherlands and another in Germany. 2. The arrests were ordered by anti-terrorism judge fragnoli.

- 123. A UMR example with coreference He is controlling but he has no right to be as we are not together. (s4c / but-91 :ARG1 (s4c3 / control-01 :ARG0 (s4p2 / person :ref-person 3rd :ref-number Singular)) :ARG2 (s4r / right-05 :ARG1 s4p2 :ARG1-of (s4c2 / cause-01 :ARG0 (s4h / have-mod-91 :ARG0 (s4p3 / person :ref-person 1st :ref-number Plural) :ARG1 (s4t / together) :aspect State :modstr FullNeg)) :modstr FullNeg)) (s / sentence :coref ((s4p2 :subset-of s4p3)))

- 124. Implicit arguments ► Like MS-AMRs, UMR also annotates implicit arguments when they can be inferred from context and can be annotated for coreference like overt (pronominal) expressions (s3d / deny-01 :Aspect Performance :ARG0 (s3p / person :ref-number Singular :ref-person 3rd) :ARG1 (s3t / thing :ARG1-of (s3d2 / do-02 :ARG0 s3p :ARG1-of (s3w / wrong-02) :aspect Process :modpred s3d)) :modstr FullAff) “He denied any wrongdoing”

- 125. The challenge: Integration of different meaning components into one graph ► How do we represent all this information in a unified structure that is still easy to read and scalable? ► UMR pairs a sentence-level representation (a modified form of AMR) with a document-level representation. ► We assume that a text will still have to be processed sentence by sentence, so each sentence will have a fragment of the document-level super-structure.

- 126. Integrated UMR representation 1. Edmund Pope tasted freedom today for the first time in more than eight months. 2. Pope is the American businessman who was convicted last week on spying charges and sentenced to 20 years in a Russian prison. 3. He denied any wrongdoing.

- 127. Sentence-level representation vs document-level representation. Edmund Pope tasted freedom today for the first time in more than eight months. (s1t2 / taste-01 :aspect Performance :ARG0 (s1p / person :name (s1n2 / name :op1 "Edmund" :op2 "Pope")) :ARG1 (s1f / free-04 :ARG1 s1p) :time (s1t3 / today) :ord (s1o3 / ordinal-entity :value 1 :range (s1m / more-than :op1 (s1t / temporal-quantity :quant 8 :unit (s1m2 / month))))) (s1 / sentence :temporal ((DCT :before s1t2) (s1t3 :contained s1t2) (DCT :depends-on s1t3)) :modal ((ROOT :MODAL AUTH) (AUTH :FullAff s1t2)))
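
The `:temporal` annotation on slide 127 is a list of triples, and grouping them by their last element shows which reference times each node has. The sketch below is a minimal illustration: it assumes each triple reads as (reference time, relation, dependent), and the names `TEMPORAL` and `reference_times` are hypothetical.

```python
from collections import defaultdict

# Triples copied from the :temporal list on slide 127,
# read here as (reference time, relation, dependent). That reading is an assumption.
TEMPORAL = [
    ("DCT", "before", "s1t2"),      # taste-01 relative to document creation time
    ("s1t3", "contained", "s1t2"),  # taste-01 relative to "today"
    ("DCT", "depends-on", "s1t3"),  # "today" is resolved against the DCT
]


def reference_times(triples):
    """Map each dependent node to its list of (reference time, relation) pairs."""
    refs = defaultdict(list)
    for reference, relation, dependent in triples:
        refs[dependent].append((reference, relation))
    return dict(refs)


for node, refs in reference_times(TEMPORAL).items():
    print(node, refs)
```

Here `s1t2` ends up with two reference times, which matches the earlier remark that an event may need two reference times to be located as specifically as possible.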

- 128. Sentence-level representation vs document-level representation. Pope is the American businessman who was convicted last week on spying charges and sentenced to 20 years in a Russian prison. (s2i / identity-91 :ARG0 (p / person :wiki "Edmond_Pope" :name (n / name :op1 "Pope")) :ARG1 (b / businessman :mod (n2 / nationality :wiki "United_States" :name (n3 / name :op1 "America"))) :ARG1-of (c / convict-01 :ARG2 (c2 / charge-05 :ARG1 b :ARG2 (s / spy-02 :ARG0 b :modpred c2)) :temporal (w / week :mod (l / last)) :aspect Performance :modstr FullAff) :ARG1-of (s2 / sentence-01 :ARG2 (p2 / prison :mod (c3 / country :wiki "Russia" :name (n4 / name :op1 "Russia")) :duration (t / temporal-quantity :quant 20 :unit (y / year))) :ARG3 s :aspect Performance :modstr FullAff) :aspect State :modstr FullAff) (s2 / sentence :temporal ((s2c4 :before s1t2) (DCT :depends-on s2w) (s2w :contained s2c) (s2w :contained s2s2) (s2c :after s2s) (s2s :after s2c4)) :modal ((AUTH :FullAff s2i) (AUTH :FullAff s2c) (AUTH :FullAff Null Charger) (Null Charger :FullAff s2c2) (s2c2 :Unsp s2s) (AUTH :FullAff s2s2)) :coref ((s1p :same-entity s2p)))

- 129. Sentence-level representation vs document-level representation. He denied any wrongdoing. (s3d / deny-01 :aspect Performance :ARG0 (s3p / person :ref-number Singular :ref-person 3rd) :ARG1 (s3t / thing :ARG1-of (s3d2 / do-02 :ARG0 s3p :ARG1-of (s3w / wrong-02) :aspect Performance :modpred s3d)) :modstr FullAff) (s3 / sentence :temporal ((s2c :before s3d)) :modal ((AUTH :FullAff s3p) (s3p :FullAff s3d) (s3d :Unsp s3d2)) :coref ((s2p :same-entity s3p)))
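
Because each sentence carries only its own fragment of the document-level structure, cross-sentence coreference chains have to be assembled from the per-sentence `:coref` triples. A minimal sketch with a union-find follows. The function name `coreference_chains` is hypothetical, and treating only `same-entity` and `same-event` as merging relations (while leaving `subset-of` pairs in separate chains) is an assumption made for this illustration.

```python
# :coref triples as they appear on slides 128, 129 and 123.
COREF = [
    ("s1p", "same-entity", "s2p"),
    ("s2p", "same-entity", "s3p"),
    ("s4p2", "subset-of", "s4p3"),
]

IDENTITY_RELATIONS = {"same-entity", "same-event"}


def coreference_chains(triples):
    """Group variables into chains connected by identity relations."""
    parent = {}

    def find(x):
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving
            x = parent[x]
        return x

    for left, relation, right in triples:
        root_left, root_right = find(left), find(right)
        if relation in IDENTITY_RELATIONS:
            parent[root_left] = root_right  # merge the two chains

    chains = {}
    for node in list(parent):
        chains.setdefault(find(node), set()).add(node)
    return sorted(sorted(chain) for chain in chains.values())


print(coreference_chains(COREF))
```

The three mentions of Pope end up in one chain, while the `subset-of` pair stays apart, since "he" is only part of the group denoted by "we".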

- 130. UMR graph

- 131. From AMR to UMR Van Gysel et al. (2021) ► At the sentence level, UMR adds: ► An aspect attribute to eventive concepts ► Person and number attributes for pronouns and other nominal expressions ► Quantification scope between quantified expressions ► At the document level UMR adds: ► Temporal dependencies in lieu of tense ► Modal dependencies in lieu of modality ► Coreference relations beyond sentence boundaries ► To make UMR cross-linguistically applicable, UMR ► defines a set of language-independent abstract concepts and participant roles, ► uses lattices to accommodate linguistic variability ► designs specifications for complicated mappings between words and UMR concepts.

- 132. Elements of AMR are already cross-linguistically applicable ► Abstract concepts (e.g., person, thing, have-org-role-91): ► Abstract concepts are concepts that do not have explicit lexical support but can be inferred from context ► Some semantic relations (e.g., :manner, :purpose, :time) are also cross-linguistically applicable

- 133. Language-independent vs language-specific aspects of AMR “61 岁的 Pierre Vinken 将于 11 月 29 日加入董事会,担任非执行董事。” [Figure: AMR graph for this Chinese sentence, rooted at 加入-01. Concept nodes: person, 董事会, date-entity, name, temporal-quantity, have-org-role-91, 董事, 执行. Values: "文肯", "皮埃尔", 61, 岁, 11, 29, polarity -. Edge labels: Arg0, Arg1, Arg2, Arg1-of, time, name, op1, op2, age, quant, unit, month, day, mod]

- 134. Language-independent vs language-specific aspects of AMR “Pierre Vinken, 61 years old, will join the board as a nonexecutive director Nov. 29.” [Figure: AMR graph for this English sentence, rooted at join-01. Concept nodes: person, board, date-entity, name, temporal-quantity, have-org-role-91, director, executive. Values: "Vinken", "Pierre", 61, year, 11, 29, polarity -. Edge labels: Arg0, Arg1, Arg2, Arg1-of, time, name, op1, op2, age, quant, unit, month, day, mod]

- 135. Abstract concepts in UMR ► Abstract concepts inherited from AMR: ► Standardization of quantities, dates etc.: have-name-91, have-frequency-91, have-quant-91, temporal-quantity, date-entity... ► New concepts for abstract events: “non-verbal” predication. ► New concepts for abstract entities: entity types are annotated for named entities and implicit arguments. ► Scope: scope concept to disambiguate scope ambiguity to facilitate translation of UMR to logical expressions (see sentence-level structure). ► Discourse relations: concepts to capture sentence-internal discourse relations (see sentence-level structure).

- 136. Sample abstract events

  | Clause Type | UMR Predicate | Arg0 | Arg1 | Arg2 |
  |---|---|---|---|---|
  | Thetic/presentational possession | have-91 | possessor | possessum | |
  | Predicative possession | belong-91 | possessum | possessor | |
  | Thetic/presentational location | exist-91 | location | theme | |
  | Predicative location | have-location-91 | theme | location | |
  | Property predication | have-mod-91 | theme | property | |
  | Object predication | have-role-91 | theme | Ref point | Object category |
  | Equational | identity-91 | theme | equated referent | |

- 137. How do we find abstract eventive concepts? ► Languages use different strategies to express these meanings: ► Predicativized possessum: Yukaghir pulun-die jowje-n'-i old.man-DIM net-PROP 3SG.INTR “The old man has a net”, lit. “The old man net-has.” ► UMR trains annotators to recognize the semantics of these constructions and select the appropriate abstract predicate and its participant roles

- 138. Language-independent vs language-specific participant roles ► Core participant roles are defined in a set of frame files (valency lexicon, see Palmer et al. 2005). The semantic roles for each sense of a predicate are defined: ► E.g. boil-01: apply heat to water ARG0-PAG: applier of heat ARG1-PPT: water ► Most languages do not have frame files ► But see e.g. Hindi (Bhat et al. 2014), Chinese (Xue 2006) ► UMR defines language-independent participant roles ► Based on ValPaL data on co-expression patterns of different micro-roles (Hartmann et al., 2013)

- 139. Language-independent roles: an incomplete list

  | UMR Annotation | Definition |
  |---|---|
  | Actor | animate entity that initiates the action |
  | Undergoer | entity (animate or inanimate) that is affected by the action |
  | Theme | entity (animate or inanimate) that moves from one entity to another entity, either spatially or metaphorically |
  | Recipient | animate entity that gains possession (or at least temporary control) of another entity |
  | Force | inanimate entity that initiates the action |
  | Causer | animate entity that acts on another animate entity to initiate the action |
  | Experiencer | animate entity that cognitively or sensorily experiences a stimulus |
  | Stimulus | entity (animate or inanimate) that is experienced by an experiencer |

- 140. Road Map for annotating UMRs for under-resourced languages ► Participant Roles: ► Stage 0: General participant roles ► Stage 1: Language-specific frame files ► UMR-Writer allows for the creation of a lexicon with argument structure information during annotation ► Morphosemantic Tests: ► Stage 0: Identify one concept per word ► Stage 1: Apply more fine-grained tests to identify concepts ► Annotation Categories with Lattices: ► Stage 0: Use grammatically encoded categories (more general if necessary) ► Stage 1: Use (overtly expressed) fine-grained categories ► Modal Dependencies: ► Stage 0: Use simplified modal annotation ► Stage 1: Fill in lexically based modal strength values

- 141. How UMR accommodates cross-linguistic variability ► Not all languages grammaticalize/overtly express the same meaning contrasts: ► English: I (1SG) vs. you (2SG) vs. she/he (3SG) ► Sanapaná: as- (1SG) vs. an-/ap- (2/3SG) ► However, there are typological patterns in how semantic domains get subdivided: ► A 1/3SG person category would be much more surprising than a 2/3SG one ► UMR uses lattices for abstract concepts, attribute values, and relations to accommodate variability across languages. ► Languages with overt grammatical distinctions can choose to use more fine-grained categories

- 142. Lattices ► Semantic categories are organized in “lattices” to achieve cross-lingual compatibility while accommodating variability. ► We have lattices for abstract concepts, relations, as well as attributes [Figure: the person lattice. Node labels: person, Non-3rd, Non-1st, 1st, 2nd, 3rd, Excl., Incl.]
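
One way to picture how a lattice lets annotators back off to a coarser category is to encode the person lattice as a parent-to-children map and look up the most specific node that covers a set of values. This is a sketch under assumptions: the edges in `PERSON_LATTICE` are reconstructed from the node labels on the slide rather than taken from a specification, and the function names are hypothetical.

```python
# Assumed edges, reconstructed from the node labels of the person lattice figure.
PERSON_LATTICE = {
    "person": ["Non-3rd", "Non-1st"],
    "Non-3rd": ["1st", "2nd"],
    "Non-1st": ["2nd", "3rd"],
    "1st": ["Excl.", "Incl."],
}
ALL_VALUES = ["person", "Non-3rd", "Non-1st", "1st", "2nd", "3rd", "Excl.", "Incl."]


def descendants(value, lattice=PERSON_LATTICE):
    """All values strictly below `value` in the lattice."""
    seen, stack = set(), [value]
    while stack:
        for child in lattice.get(stack.pop(), []):
            if child not in seen:
                seen.add(child)
                stack.append(child)
    return seen


def covers(general, specific):
    """True if `general` is `specific` itself or lies above it."""
    return general == specific or specific in descendants(general)


def most_specific_cover(values):
    """Smallest lattice node that covers every value in `values`."""
    candidates = [c for c in ALL_VALUES if all(covers(c, v) for v in values)]
    return min(candidates, key=lambda c: len(descendants(c)))


print(most_specific_cover(["2nd", "3rd"]))  # a Sanapaná-style 2/3 category
print(most_specific_cover(["1st", "3rd"]))  # no dedicated node for a 1/3 category
```

Under these assumed edges, a merged 2nd/3rd person category lands on `Non-1st`, whereas a merged 1st/3rd category can only fall back to the top node, which mirrors the typological point made on slide 141.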

- 143. Wordhood vs concepthood across languages ► The mapping between words and concepts in languages is not one-to-one: UMR designs specifications for complicated mappings between words and concepts. ► Multiple words can map to one concept (e.g., multi-word expressions) ► One word can map to multiple concepts (morphological complexity)

- 144. Multiple words can map to a single (discontinuous) concept ► 地理学帮了我很大的忙。 “Geography has helped me a lot” (x0 / 帮忙-01 :aspect Performance :arg0 (x1 / 地理学) :affectee (x2 / 我) :degree (x3 / 大)) ► “Why would he want to give that up?” (w / want-01 :ARG0 (p / person :ref-person 3rd :ref-number Singular) :ARG1 (g / give-up-07 :ARG0 p :ARG1 (t / that) :aspect Performance :modpred w) :ARG1-of (c / cause-01 :ARG0 (a / umr-unknown)) :aspect State)

- 145. One word maps to multiple UMR concepts ► One word containing predicate and arguments Sanapaná: yavhan anmen m-e-l-yen-ek honey alcohol NEG-2/3M-DSTR-drink-POT "They did not drink alcohol from honey." (e / elyama :actor (p / person :ref-person 3rd :ref-number Plural) :undergoer (a / anmen :material (y/ yavhan)) :modstr FullNeg :aspect Habitual) ► Argument Indexation: Identify both predicate concept and argument concept, don’t morphologically decompose word

- 146. One word maps to multiple UMR concepts ► One word containing predicate and arguments Arapaho: he'ih'iixooxookbixoh'oekoohuutoono' he'ih'ii-xoo-xook-bixoh'oekoohuutoo-no' NARR.PST.IPFV-REDUP-through-make.hand.appear.quickly-PL “They were sticking their hands right through them [the ghosts] to the other side.” (b / bixoh'oekoohuutoo “stick hands through” :actor (p / person :ref-person 3rd :ref-number Plural) :theme (h / hands) :undergoer (g / [ghosts]) :aspect Endeavor :modstr FullAff) ► Noun Incorporation (less grammaticalized): identify predicate and argument concept

- 147. UMR-Writer ► The annotation interface we use for UMR annotation is called UMR-Writer ► UMR-Writer includes interfaces for project management, sentence-level and document-level annotation, as well as lexicon (frame file) creation. ► UMR-Writer has both keyboard-based and click-based interfaces to accommodate the annotation habits of different annotators. ► UMR-Writer is web-based and supports UMR annotation for a variety of languages and formats. So far it supports Arabic, Arapaho, Chinese, English, Kukama, Navajo, and Sanapaná. It can easily be extended to more languages.

- 148. UMR writer: Project management

- 149. UMR writer: Project management

- 150. UMR writer: Sentence-level interface

- 151. UMR writer: Lexicon interface

- 152. UMR Writer: Document-level interface

- 153. UMR summary ► UMR is a rooted, directed, node-labeled and edge-labeled document-level graph. ► UMR is a document-level meaning representation that builds on sentence-level meaning representations ► UMR aims to achieve semantic stability across syntactic variations and support logical inference ► UMR is a cross-lingual meaning representation that separates language-general aspects of meaning from those that are language-specific ► UMR annotation is under way for English, Chinese, Arabic, Arapaho, Kukama, Sanapaná, Navajo, and Quechua

- 154. Use cases of UMR ► Temporal reasoning ► UMR can be used to extract temporal dependencies, which can then be used to perform temporal reasoning ► Knowledge extraction ► UMR annotates aspect, and this can be used to extract habitual events or states, which are typical knowledge forms ► Factuality determination ► UMR annotates modal dependencies, and this can be used to verify the factuality of events or claims ► As an intermediate representation for dialogue systems where more control is needed ► UMR annotates entities and coreference, which helps with tracking dialogue states

- 155. Planned UMR activities • The DMR international workshops • UMR summer schools, tentatively in 2024 and 2025. • UMR shared tasks once we have sufficient amount of UMR-annotated data as well as evaluation metrics and baseline parsing models

- 156. References
  - Banarescu, L., Bonial, C., Cai, S., Georgescu, M., Griffitt, K., Hermjakob, U., Knight, K., Koehn, P., Palmer, M., and Schneider, N. (2013). Abstract meaning representation for sembanking. In Proceedings of the 7th Linguistic Annotation Workshop and Interoperability with Discourse, pages 178–186.
  - Hartmann, I., Haspelmath, M., and Taylor, B., editors (2013). The Valency Patterns Leipzig online database. Max Planck Institute for Evolutionary Anthropology, Leipzig.
  - Van Gysel, J. E. L., Vigus, M., Chun, J., Lai, K., Moeller, S., Yao, J., O’Gorman, T. J., Cowell, A., Croft, W. B., Huang, C. R., Hajic, J., Martin, J. H., Oepen, S., Palmer, M., Pustejovsky, J., Vallejos, R., and Xue, N. (2021). Designing a uniform meaning representation for natural language processing. Künstliche Intelligenz, pages 1–18.
  - Vigus, M., Van Gysel, J. E., and Croft, W. (2019). A dependency structure annotation for modality. In Proceedings of the First International Workshop on Designing Meaning Representations, pages 182–198.
  - Yao, J., Qiu, H., Min, B., and Xue, N. (2020). Annotating temporal dependency graphs via crowdsourcing. In Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing (EMNLP), pages 5368–5380.
  - Yao, J., Qiu, H., Zhao, J., Min, B., and Xue, N. (2021). Factuality assessment as modal dependency parsing. In Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing (Volume 1: Long Papers), pages 1540–1550.
  - Zhang, Y. and Xue, N. (2018). Structured interpretation of temporal relations. In Proceedings of LREC 2018.

- 157. Acknowledgements We would like to acknowledge the support of the National Science Foundation: • NSF IIS (2018): “Building a Uniform Meaning Representation for Natural Language Processing”, awarded to Brandeis (Xue, Pustejovsky), Colorado (M. Palmer, Martin, and Cowell) and UNM (Croft). • NSF CCRI (2022): “Building a Broad Infrastructure for Uniform Meaning Representations”, awarded to Brandeis (Xue, Pustejovsky) and Colorado (A. Palmer, M. Palmer, Cowell, Martin), with Croft as consultant. All views expressed in this paper are those of the authors and do not necessarily represent the view of the National Science Foundation.

- 159. Meaning Representations for Natural Languages Tutorial Part 3a Modeling Meaning Representation: SRL Jeffrey Flanigan, Tim O’Gorman, Ishan Jindal, Yunyao Li, Martha Palmer, Nianwen Xue

- 160. Semantic Role Labeling (SRL) Who did what to whom, when, where and how? (Gildea and Jurafsky, 2000; Màrquez et al., 2008)

- 161. Semantic Role Labeling (SRL) Derik broke the window with a hammer to escape. ► Step 1, Predicate Identification: identify all predicates in the sentence (broke, escape)

- 162. Semantic Role Labeling (SRL) Derik broke the window with a hammer to escape. ► Step 1, Predicate Identification: identify all predicates in the sentence ► Step 2, Sense Disambiguation: classify the sense of each predicate (broke → break.01) ► English PropBank, break.01 (break): A0: breaker, A1: thing broken, A2: instrument, A3: pieces, A4: arg1 broken away from what? ► FrameNet, frame Breaking_apart: Pieces, Whole, Criterion, Manner, Means, Place… ► VerbNet, class Break-45.1: Agent, Patient, Instrument, Result

- 163. Semantic Role Labeling (SRL) Derik broke the window with a hammer to escape. (broke → break.01) ► Step 1, Predicate Identification: identify all predicates in the sentence ► Step 2, Sense Disambiguation: classify the sense of each predicate ► Step 3, Argument Identification: find all roles of each predicate ► Argument identification can be either identification of the span (span SRL) or identification of the head (dependency SRL)

- 164. Semantic Role Labeling (SRL)
  - Step 1, Predicate Identification: identify all predicates in the sentence.
  - Step 2, Sense Disambiguation: classify the sense of each predicate.
  - Step 3, Argument Identification: find all roles of each predicate.
  - Step 4, Argument Classification: assign a semantic label to each role.
  - Example: Derik (Breaker) broke (break.01) the window (thing broken) with a hammer (instrument) to escape (Purpose).

- 165. Semantic Role Labeling (SRL)
  - Step 1, Predicate Identification: identify all predicates in the sentence.
  - Step 2, Sense Disambiguation: classify the sense of each predicate.
  - Step 3, Argument Identification: find all roles of each predicate.
  - Step 4, Argument Classification: assign a semantic label to each role.
  - Example, if using PropBank: Derik (A0: Breaker) broke (break.01) the window (A1: thing broken) with a hammer (A2: instrument) to escape (AM-PRP: Purpose).

- 166. Semantic Role Labeling (SRL)
  - Step 1, Predicate Identification: identify all predicates in the sentence.
  - Step 2, Sense Disambiguation: classify the sense of each predicate.
  - Step 3, Argument Identification: find all roles of each predicate.
  - Step 4, Argument Classification: assign a semantic label to each role.
  - Step 5, Global Optimization: apply global constraints (predicates and arguments).
  - Example: Derik (A0: Breaker) broke (break.01) the window (A1: thing broken) with a hammer (A2: instrument) to escape (AM-PRP: Purpose).
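
The steps above yield, for each predicate, a sense plus a set of labeled arguments. A minimal sketch of that output as a data structure, assuming span-style arguments with token offsets. The class and field names (`Argument`, `PredicateFrame`, `render`) are hypothetical and not taken from any SRL toolkit, and the analysis is written by hand to mirror the slide rather than produced by a system.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Argument:
    role: str    # PropBank-style label, e.g. "A0" or "AM-PRP"
    start: int   # index of the first token in the span (inclusive)
    end: int     # index of the last token in the span (inclusive)


@dataclass
class PredicateFrame:
    index: int   # token position of the predicate
    sense: str   # disambiguated sense, e.g. "break.01"
    arguments: List[Argument] = field(default_factory=list)


tokens = "Derik broke the window with a hammer to escape".split()

# Hand-written analysis mirroring the slide, not system output.
frame = PredicateFrame(
    index=1,
    sense="break.01",
    arguments=[
        Argument("A0", 0, 0),      # Derik
        Argument("A1", 2, 3),      # the window
        Argument("A2", 4, 6),      # with a hammer
        Argument("AM-PRP", 7, 8),  # to escape
    ],
)


def render(frame, tokens):
    """Return (role, text) pairs for every argument of the frame."""
    return [(a.role, " ".join(tokens[a.start:a.end + 1])) for a in frame.arguments]


for role, text in render(frame, tokens):
    print(f"{role}: {text}")
```

A dependency-style system would store a single head token per argument instead of a `start`/`end` pair.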

- 167. Outline
  - Early SRL approaches [< 2017]
  - Typical neural SRL model components
    - Performance analysis
  - Syntax-aware neural SRL models
    - What, When and Where?
    - Performance analysis
    - How to incorporate Syntax?
  - Syntax-agnostic neural SRL models
    - Performance analysis
    - Do we really need syntax for SRL?
    - Are high quality contextual embeddings enough for the SRL task?
  - Practical SRL systems
    - Should we rely on this pipelined approach?
    - End-to-end SRL systems
    - Can we jointly predict dependency and span?
  - More recent approaches
    - Handling low-frequency exceptions
    - Incorporate semantic role label definitions
    - SRL as MRC task
  - Practical SRL system evaluations
    - Are we evaluating SRL systems correctly?
  - Conclusion

- 169. Early SRL Approaches
  - 2 to 3 steps to obtain the complete predicate-argument structure
    - Predicate Identification: generally not treated as a task, since all existing SRL datasets provide the gold predicate location.
    - Predicate sense disambiguation: Logistic Regression [Roth and Lapata, 2016]
    - Argument Identification: binary classifier [Pradhan et al., 2005; Toutanova et al., 2008]
    - Role Labeling: labeling is performed using a classifier (SVM, logistic regression). An argmax over roles results in a local assignment.
  - Requires feature engineering
    - Mostly syntactic [Gildea and Jurafsky, 2002]
  - Re-ranking
    - Enforce linguistic and structural constraints (e.g., no overlaps, discontinuous arguments, reference arguments, ...)
    - Viterbi decoding (k-best list with constraints) [Täckström et al., 2015]
    - Dynamic programming [Täckström et al., 2015; Toutanova et al., 2008]
    - Integer linear programming [Punyakanok et al., 2008]
    - Re-ranking [Toutanova et al., 2008; Björkelund et al., 2009]
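
The contrast between a local argmax assignment and a constrained global assignment can be sketched as follows. This is a toy illustration under stated assumptions: the scores are invented, the only constraint is that a core role (here A0 or A1) may be used at most once, and the search is brute force rather than the Viterbi, dynamic programming, or integer linear programming methods cited on the slide.

```python
from itertools import product

ROLES = ["A0", "A1", "O"]   # "O" means "not an argument"
CORE = {"A0", "A1"}         # core roles may be used at most once

# Invented classifier scores for three candidate arguments.
scores = [
    {"A0": 0.6, "A1": 0.3, "O": 0.1},
    {"A0": 0.5, "A1": 0.4, "O": 0.1},
    {"A0": 0.1, "A1": 0.2, "O": 0.7},
]


def local_argmax(scores):
    """Pick the best role for each candidate independently."""
    return [max(s, key=s.get) for s in scores]


def satisfies_constraints(assignment):
    """True if no core role is assigned to more than one candidate."""
    core = [r for r in assignment if r in CORE]
    return len(core) == len(set(core))


def constrained_best(scores):
    """Exhaustively search for the highest-scoring valid assignment."""
    best, best_score = None, float("-inf")
    for assignment in product(ROLES, repeat=len(scores)):
        if not satisfies_constraints(assignment):
            continue
        total = 1.0
        for s, role in zip(scores, assignment):
            total *= s[role]
        if total > best_score:
            best, best_score = list(assignment), total
    return best


print(local_argmax(scores))      # assigns A0 twice, violating the constraint
print(constrained_best(scores))  # best assignment that uses each core role once
```

Exhaustive search is only workable for a handful of candidates, which is why the cited systems rely on structured decoding instead.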

- 171. Typical Neural SRL Components. A typical neural SRL model contains three components, applied in order to the input sentence:
  - Embedder: represents each input token as a continuous vector. Word embeddings: FastText, GloVe, ELMo, BERT.
  - Encoder: encodes context information into each token's representation. Types of encoder: LSTMs, Attention, MLP.
  - Classifier: assigns a semantic role label to each token in the input sentence. [Local + Global]

- 172. Neural SRL Components – Embedder
  - Embedder: represents each input token as a continuous vector. Word embeddings: FastText, GloVe, ELMo, BERT.
  - Could be static or dynamic embeddings.
  - Could include syntax information.
  - Usually includes a binary flag: 0 → the token is not a predicate; 1 → the token is a predicate. End-to-end systems do not include this flag.
  - Example input: He had dared to defy nature

- 173. Neural SRL Components – Embedder. Embedder: represents each input token as a continuous vector.
  - Static embeddings
    - GloVe: He et al., 2017; Strubell et al., 2018
    - SENNA: Ouchi et al., 2018
  - Dynamic embeddings (Merchant et al., 2020)
    - ELMo: Marcheggiani et al., 2017; Ouchi et al., 2018; Li et al., 2019; Lyu et al., 2019; Jindal et al., 2020; Li et al., 2020
    - BERT: Shi et al., 2019; Jindal et al., 2020; Li et al., 2020
    - BERT: Shi et al., 2019; Conia et al., 2020; Zhang et al., 2021; Tian et al., 2022
    - RoBERTa: Conia et al., 2020; Blloshmi et al., 2021; Fei et al., 2021; Wang et al., 2022; Zhang et al., 2022
    - XLNet: Zhou et al., 2020; Tian et al., 2022

- 174. Performance Analysis: best performing model for each word embedding type. Dataset: CoNLL09 EN. (Two bar charts; the static-embedding bars are marked Static.)
  - WSJ F1: Random 85.28; GloVe (Cai et al., 2018) 89.6; ELMo (Li et al., 2019) 91.4; BERT (Conia et al., 2020) 91.5; BERT (Conia et al., 2020) 92.6; RoBERTa (Wang et al., 2022) 93.3
  - Brown F1: Random 75.09; GloVe (He et al., 2018) 79.3; ELMo (Li et al., 2019) 83.28; BERT (Conia et al., 2020) 84.67; BERT (Conia et al., 2020) 85.9; RoBERTa (Wang et al., 2022) 87.2

- 175. Neural SRL Components – Encoder
  - Encoder: encodes context information into each token's representation, with a left pass and a right pass over the embedded sentence (example: He had dared to defy nature).
  - Types of encoder: BiLSTMs, Attention.
  - The encoder could be stacked BiLSTMs or some variant of LSTMs, or an attention network, and it could include syntax information.
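
The left pass and right pass can be sketched with a deliberately simplified recurrence. This is an assumption-heavy toy: it uses a scalar tanh recurrence as a stand-in for an LSTM cell, the weights and the one-number "embeddings" are invented, and the function names are hypothetical. It only shows how each token ends up with one state from each direction.

```python
import math


def rnn_pass(inputs, weight_in=0.5, weight_rec=0.5):
    """Run a one-dimensional tanh recurrence over a sequence of scalars."""
    state, states = 0.0, []
    for x in inputs:
        state = math.tanh(weight_in * x + weight_rec * state)
        states.append(state)
    return states


def bidirectional_encode(inputs):
    """Pair each token's left-to-right state with its right-to-left state."""
    left = rnn_pass(inputs)                # left pass
    right = rnn_pass(inputs[::-1])[::-1]   # right pass, re-aligned to token order
    return list(zip(left, right))


# One invented scalar "embedding" per token of "He had dared to defy nature".
embeddings = [0.2, -0.1, 0.9, 0.0, 0.7, 0.4]
encoded = bidirectional_encode(embeddings)
for i, (left_state, right_state) in enumerate(encoded):
    print(i, round(left_state, 3), round(right_state, 3))
```

Stacking layers, as the slide mentions, would mean feeding `encoded` (suitably combined) back in as the input to another pair of passes.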

- 176. Neural SRL Components – Classifier
  - Classifier: assigns a semantic role label to each token in the input sentence.
  - Usually a feed-forward (FF) layer followed by a softmax (MLP classifier).
  - Example: He had dared to defy nature → B-A0 O O B-A2 I-A2 I-A2
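
Per-token tags like those in the example are usually converted back into argument spans. A minimal sketch of that conversion, assuming the standard BIO scheme and reading the slide's "0" as the outside tag "O". The function name `bio_to_spans` is hypothetical.

```python
def bio_to_spans(tags):
    """Convert BIO tags into (role, start, end) spans with inclusive ends."""
    spans, role, start = [], None, None
    for i, tag in enumerate(tags):
        # An I- tag continues the open span only if the role matches.
        if tag.startswith("I-") and role == tag[2:]:
            continue
        # Anything else closes the span that is currently open.
        if role is not None:
            spans.append((role, start, i - 1))
            role, start = None, None
        if tag.startswith("B-") or tag.startswith("I-"):
            # A stray I- tag is treated as the start of a new span.
            role, start = tag[2:], i
    if role is not None:
        spans.append((role, start, len(tags) - 1))
    return spans


tokens = "He had dared to defy nature".split()
tags = ["B-A0", "O", "O", "B-A2", "I-A2", "I-A2"]
for role, start, end in bio_to_spans(tags):
    print(role, " ".join(tokens[start:end + 1]))
```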

- 178. What and Where Syntax? What Syntax for SRL?
  - Everything or anything that explains the syntactic structure of the sentence.
  - Surface form: [Derick] broke the [window] with a [hammer] to [escape] .
  - Lemma form: Derick break the window with a hammer to escape .
  - U{X}POS: PROPN VERB DET NOUN ADP DET NOUN PART VERB PUNCT
  - Dependency relations (arc labels as shown on the slide): nsubj, det, obj, mark, det, obl, mark, obl, ROOT
  - Parsed with UDPipe Parser: http://lindat.mff.cuni.cz/services/udpipe/

- 179. Where is syntax being used? Syntax at the embedder (EMB)
  - Concatenate {POS, dependency relation, dependency head and other syntactic information} with the word embeddings (FastText, GloVe, ELMo, BERT).
  - Models: Marcheggiani et al., 2017b; Li et al., 2018; He et al., 2018; Wang et al., 2019; Kasai et al., 2019; He et al., 2019; Li et al., 2020; Zhou et al., 2020

- 180. Where is syntax being used? Syntax at the encoder (ENC)
  - Dependency tree: Graphs; Tree LSTMs. Types of encoder: BiLSTMs, Attention.
  - Models: Marcheggiani et al., 2017; Zhou et al., 2020; Marcheggiani et al., 2020; Zhang et al., 2021; Tian et al., 2022

- 181. At what level is syntax used? Joint learning
  - Multi-task learning: Strubell et al., 2018; Shi et al., 2020
  - Components shown: word embeddings (FastText, GloVe, ELMo, BERT); types of encoder (BiLSTMs, Attention, MLP).

- 182. Performance Analysis: comparing syntax-aware models. Dataset: CoNLL09 EN. (Bar chart ordered by year, 2017 to 2021 →.)
  - WSJ F1: Marcheggiani et al., 2017b: 87.7; Marcheggiani et al., 2017: 88; He et al., 2018: 89.5; Li et al., 2018: 89.8; Kasai et al., 2019: 90.2; He et al., 2019: 90.86; Lyu et al., 2019: 90.99; Zhou et al., 2020: 91.27; Li et al., 2020: 91.7; Fei et al., 2021: 92.83
  - Each bar is tagged with where syntax enters the model (Emb, Enc, or Enc + Emb). The chart also marks a BERT/Fine-tune regime and carries the annotations +2.0 and -2.9.

- 183. Comparing syntax-aware models: observations. Dataset: CoNLL09 EN.
  - Syntax at the encoder level provides the best performance.
    - Most likely, the encoder is best suited for incorporating dependency or constituent relations.
  - BERT models raised the bar,
    - with the largest improvement on the out-of-domain dataset.
  - However, the improvement since 2019 is marginal.

- 184. Marcheggiani et al., 2017: A Simple and Accurate Syntax-Agnostic Neural Model for Dependency-based Semantic Role Labeling (syntax at embedder level)
  - Predict semantic dependency edges between predicates and arguments.
  - Use predicate-specific roles (such as make-A0 instead of A0), as opposed to a generic sequence labeling task.
  - Diego Marcheggiani, Anton Frolov, and Ivan Titov. 2017. A Simple and Accurate Syntax-Agnostic Neural Model for Dependency-based Semantic Role Labeling. In Proceedings of the 21st Conference on Computational Natural Language Learning (CoNLL 2017), pages 411–420, Vancouver, Canada. Association for Computational Linguistics.

- 185. Marcheggiani et al., 2017: Embedder, or input word representation (syntax at embedder level)
  - Wp → randomly initialized word embeddings
  - Wr → pre-trained word embeddings
  - PO → randomly initialized POS embeddings
  - Le → randomly initialized lemma embeddings
  - Predicate-specific feature [binary]
  - Each token of the input (He had dared to defy nature) is represented by its Wp, Wr, PO and Le vectors together with the predicate flag.
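
The input representation listed above can be sketched as a per-token concatenation. This is an illustration only: the dimensions, random vectors, POS tags and lemmas are invented for the example, `Wr` is filled with random numbers as a stand-in for real pre-trained vectors, and the helper names (`make_table`, `embed`) are hypothetical. It follows the component list on the slide, not the exact configuration of the paper.

```python
import random


def make_table(vocab, dim, seed):
    """Build a toy lookup table of random vectors, one per vocabulary item."""
    rng = random.Random(seed)
    return {item: [rng.uniform(-1, 1) for _ in range(dim)] for item in sorted(vocab)}


words = "He had dared to defy nature".split()
lemmas = ["he", "have", "dare", "to", "defy", "nature"]     # assumed lemmas
pos_tags = ["PRON", "AUX", "VERB", "PART", "VERB", "NOUN"]  # assumed tags
predicate_index = 2                                         # "dared"

Wp = make_table(set(words), dim=4, seed=0)     # randomly initialized word embeddings
Wr = make_table(set(words), dim=4, seed=1)     # stand-in for pre-trained embeddings
PO = make_table(set(pos_tags), dim=2, seed=2)  # POS embeddings
Le = make_table(set(lemmas), dim=3, seed=3)    # lemma embeddings


def embed(i):
    """Concatenate all per-token features plus the binary predicate flag."""
    flag = [1.0 if i == predicate_index else 0.0]
    return Wp[words[i]] + Wr[words[i]] + PO[pos_tags[i]] + Le[lemmas[i]] + flag


inputs = [embed(i) for i in range(len(words))]
print(len(inputs), len(inputs[0]))  # number of tokens, size of each input vector
```

The same sentence is embedded once per predicate, with only the flag position changing.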

- 186. Marcheggiani et al., 2017: Encoder (syntax at embedder level)
  - Several BiLSTM layers over the embedder output (Wp, Wr, PO, Le for each token of "He had dared to defy nature").
  - Captures both the left and the right context.
  - Each BiLSTM layer takes the lower layer as input.

- 187. Marcheggiani et al., 2017: Preparation for the classifier (syntax at embedder level)
  - Provide the predicate hidden state as another input to the classifier, along with the hidden state of each token.
  - Reported gain: + ~6% F1 on CoNLL09 EN.
  - The two ways of encoding predicate information, using a predicate-specific flag at the embedder level and incorporating the predicate state in the classifier, turn out to be complementary.
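
Feeding the predicate hidden state to the classifier can be sketched as a concatenation followed by a linear layer and a softmax. All numbers below are invented, the role inventory is cut down to three labels, and the single linear layer is an assumption made for brevity; the slide does not specify the classifier at this level of detail.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def classify(hidden, predicate_index, weights):
    """Score roles for each token from [token state ; predicate state]."""
    pred_state = hidden[predicate_index]
    outputs = []
    for state in hidden:
        features = state + pred_state  # list concatenation
        scores = [sum(w * f for w, f in zip(row, features)) for row in weights]
        outputs.append(softmax(scores))
    return outputs


# Invented 2-dimensional encoder states for "He had dared to defy nature".
hidden = [[0.9, 0.1], [0.0, 0.2], [0.3, 0.8], [0.1, 0.1], [0.2, 0.6], [0.4, 0.5]]
roles = ["A0", "A2", "O"]
# One invented weight row per role, over the 4 concatenated features.
weights = [[1.0, -1.0, 0.2, 0.1], [-0.5, 1.0, 0.1, 0.3], [0.0, 0.0, 0.0, 0.0]]

probs = classify(hidden, predicate_index=2, weights=weights)
print([roles[p.index(max(p))] for p in probs])
```

Because the predicate state is appended to every token, the same token can receive different role scores for different predicates in the sentence.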

- 188. Marcheggiani et al., 2017: results (syntax at embedder level). Dataset: CoNLL09 EN.
  - WSJ: Björkelund et al. (2010) 86.9; Täckström et al. (2015) 87.3; FitzGerald et al. (2015) 87.3; Roth and Lapata (2016) 87.7; Marcheggiani et al. (2017) 87.7
  - Brown: Björkelund et al. (2010) 75.6; Täckström et al. (2015) 75.7; FitzGerald et al. (2015) 75.2; Roth and Lapata (2016) 76.1; Marcheggiani et al. (2017) 77.7