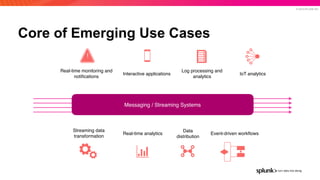

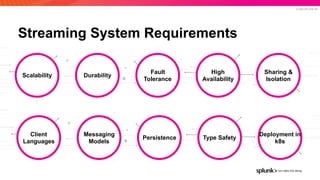

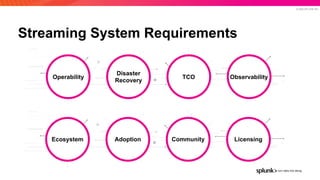

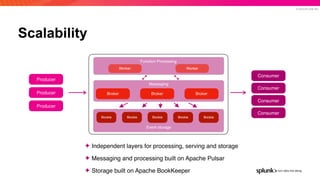

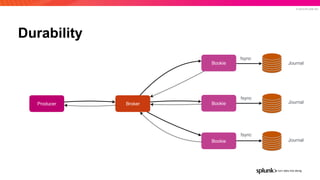

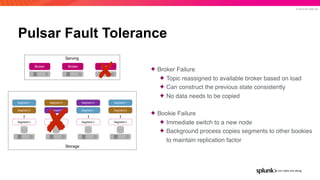

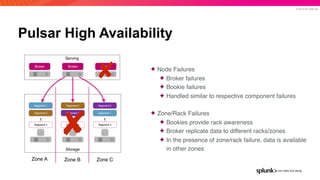

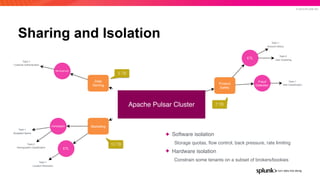

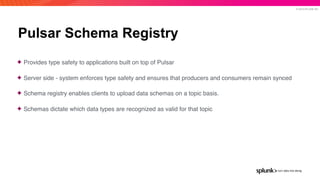

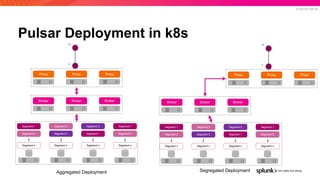

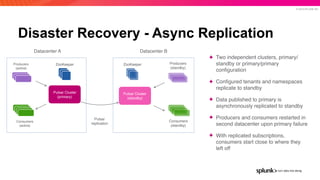

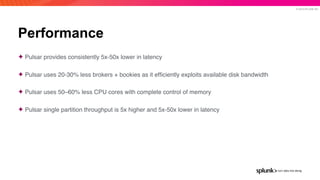

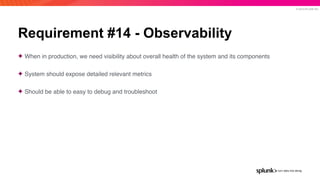

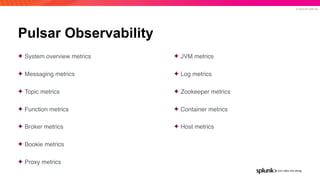

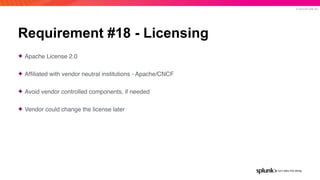

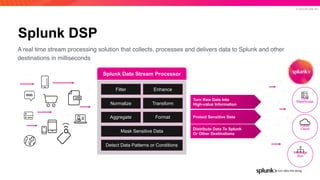

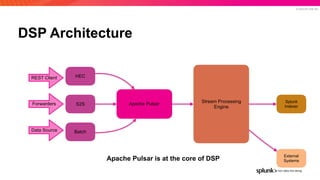

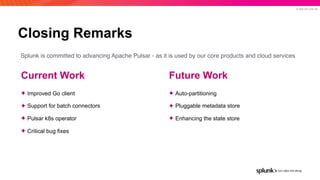

The document presents an overview of why Splunk chose Apache Pulsar as its streaming system, detailing Splunk's requirements for such a system: scalability, durability, fault tolerance, and high availability. It highlights Pulsar's ability to process real-time data efficiently, with lower latency and cost than other systems. It also discusses Splunk's successful integration of Pulsar into its data stream processing solutions, aimed at enhancing data-driven decision-making.