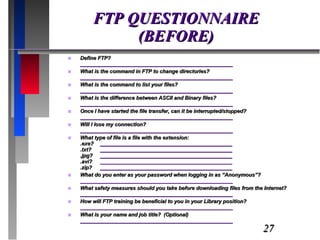

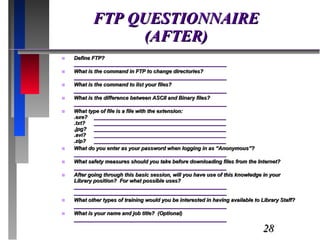

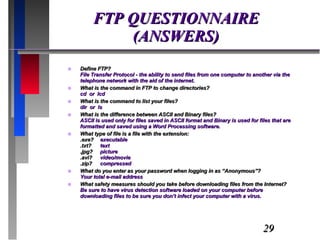

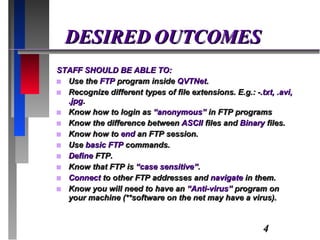

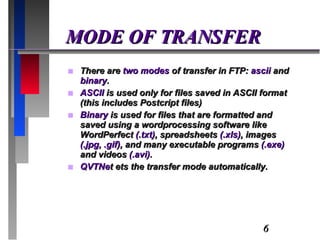

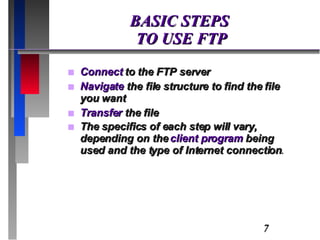

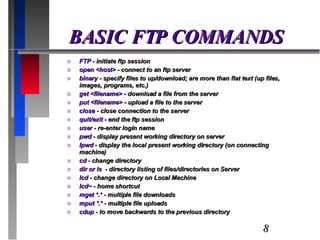

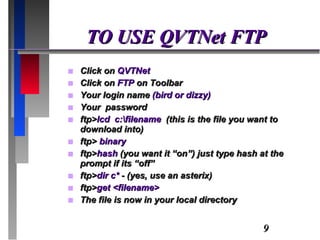

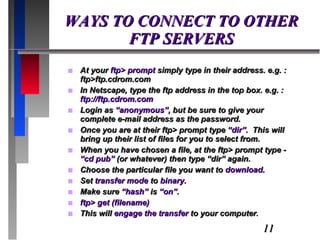

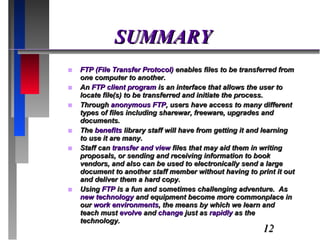

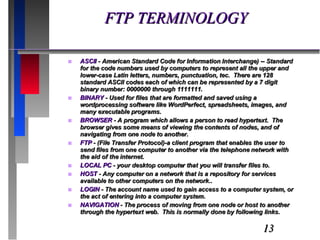

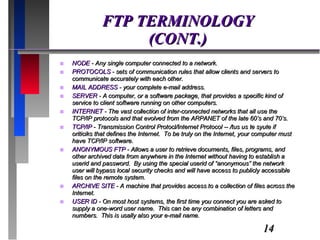

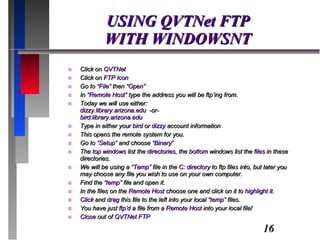

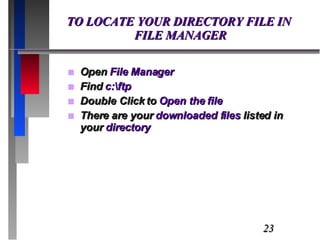

The document provides an overview of FTP (File Transfer Protocol), detailing its purpose in file sharing, modes of transfer (ASCII and binary), and basic commands for using FTP. It outlines the objectives of FTP training, expected outcomes for participants, and describes the steps to connect to FTP servers, navigate directories, and transfer files. Additional terminology, safety measures, and the importance of virus protection while using FTP are also discussed.

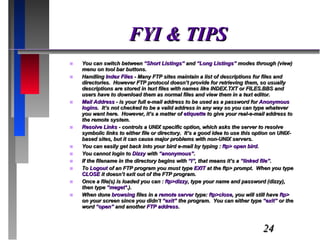

![FYI & TIPS (CONT.)](https://image.slidesharecdn.com/113-1218449656797578-9/85/slide-25-320.jpg)

FYI & TIPS (CONT.)

- When retrieving non-text files, you must use binary mode; otherwise the files arrive corrupted.
- Files reside on disks, denoted by `NAME:` (e.g., `NETDISK:`). A file on that disk could be denoted by `NETDISK:[FAQ.INTERNET]FTP.FAQ`.
  - You can change to that directory by typing `cd netdisk:[faq.internet]`
  - Or you can type `cd faq` and then `cd internet`
- To read a file on screen while you are connected (UNIX systems), retrieve it to the screen itself: `get filename.idx -`
- FTP client programs for MS-Windows are available at:
  - ftp://ftp.cica.indiana.edu/pc/win3/winsock/
  - http://www.tucows.com/
- Case counts: FTP is case sensitive.
- Be aware that software files on the net may have a virus.
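The binary-versus-text rule from the tips can be sketched with Python's standard `ftplib` module. This is a minimal illustration, not part of the original slides: the `fetch` and `is_text_file` helpers and the `TEXT_EXTENSIONS` set are hypothetical names, and the list of extensions treated as text is an assumption you would adjust for your own files.

```python
import os
from ftplib import FTP  # standard-library FTP client

# Assumption: extensions we treat as plain text. Everything else is binary.
TEXT_EXTENSIONS = {".txt", ".faq", ".idx"}


def is_text_file(filename):
    """Guess from the extension whether a file is plain text."""
    extension = os.path.splitext(filename)[1].lower()
    return extension in TEXT_EXTENSIONS


def fetch(ftp, remote_name, local_path):
    """Download remote_name to local_path and return the mode used.

    The remote name is sent unchanged because FTP is case sensitive.
    """
    if is_text_file(remote_name):
        # ASCII mode: retrlines() hands over one line at a time,
        # with the line ending already stripped.
        with open(local_path, "w", encoding="utf-8", newline="\n") as out:
            ftp.retrlines("RETR " + remote_name,
                          lambda line: out.write(line + "\n"))
        return "ascii"
    # Binary mode: retrbinary() hands over raw byte blocks, so
    # archives and programs are written byte for byte.
    with open(local_path, "wb") as out:
        ftp.retrbinary("RETR " + remote_name, out.write)
    return "binary"
```

Against a real server, you would create the connection with `FTP(host)`, call `login()`, move to the directory with `cwd("faq")` and `cwd("internet")`, and then call `fetch(ftp, "FTP.FAQ", "FTP.FAQ")`. The host name is yours to supply; none is assumed here.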