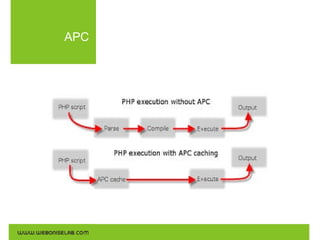

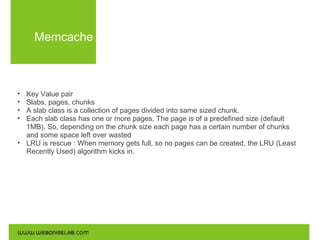

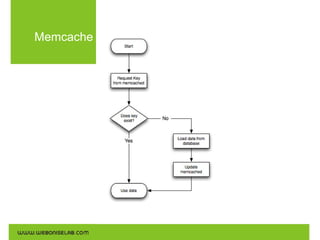

Web application caching stores dynamically generated data so that it can be reused instead of regenerated, which improves response times and reduces the number of requests the server and its backends have to handle. Caching mechanisms include opcode caches (APC and eAccelerator, both for PHP), query result caches (Memcache, Redis), and content delivery networks (CDNs) for static content. Implementing caching can yield significant performance gains and lower hardware costs, because the same hardware can serve more traffic.
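To make the query result caching idea concrete, here is a minimal sketch of the "check the cache first, compute on a miss" pattern. It uses a plain in-process dictionary as a stand-in for a store such as Memcache or Redis. The names `TTLCache`, `get_or_compute`, `slow_query`, and the key `users:all` are hypothetical and chosen only for illustration; a real deployment would talk to an external cache through its client library.

```python
import time
from typing import Any, Callable, Dict, Tuple


class TTLCache:
    """Tiny in-process stand-in for a key-value cache (illustrative only)."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested without sleeping
        self._store: Dict[str, Tuple[float, Any]] = {}

    def get_or_compute(self, key: str, compute: Callable[[], Any]) -> Any:
        now = self._clock()
        entry = self._store.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]  # cache hit: reuse the stored result
        value = compute()  # cache miss or expired entry: do the expensive work
        self._store[key] = (now + self._ttl, value)
        return value


calls = {"count": 0}


def slow_query() -> list:
    # Hypothetical expensive database query; counts how often it really runs.
    calls["count"] += 1
    return ["alice", "bob"]


cache = TTLCache(ttl_seconds=60)
first = cache.get_or_compute("users:all", slow_query)   # runs the query
second = cache.get_or_compute("users:all", slow_query)  # served from the cache
print(first == second, calls["count"])
```

The second call returns the stored result without running `slow_query` again, which is where the reduced backend load comes from. The time-to-live bounds how stale a cached result can get; choosing it is a trade-off between freshness and load that depends on the application.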